Low Latency for Cloud Data Management

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Beyond Relational Databases

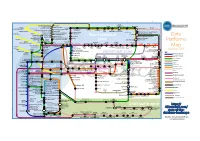

EXPERT ANALYSIS BY MARCOS ALBE, SUPPORT ENGINEER, PERCONA Beyond Relational Databases: A Focus on Redis, MongoDB, and ClickHouse Many of us use and love relational databases… until we try and use them for purposes which aren’t their strong point. Queues, caches, catalogs, unstructured data, counters, and many other use cases, can be solved with relational databases, but are better served by alternative options. In this expert analysis, we examine the goals, pros and cons, and the good and bad use cases of the most popular alternatives on the market, and look into some modern open source implementations. Beyond Relational Databases Developers frequently choose the backend store for the applications they produce. Amidst dozens of options, buzzwords, industry preferences, and vendor offers, it’s not always easy to make the right choice… Even with a map! !# O# d# "# a# `# @R*7-# @94FA6)6 =F(*I-76#A4+)74/*2(:# ( JA$:+49>)# &-)6+16F-# (M#@E61>-#W6e6# &6EH#;)7-6<+# &6EH# J(7)(:X(78+# !"#$%&'( S-76I6)6#'4+)-:-7# A((E-N# ##@E61>-#;E678# ;)762(# .01.%2%+'.('.$%,3( @E61>-#;(F7# D((9F-#=F(*I## =(:c*-:)U@E61>-#W6e6# @F2+16F-# G*/(F-# @Q;# $%&## @R*7-## A6)6S(77-:)U@E61>-#@E-N# K4E-F4:-A%# A6)6E7(1# %49$:+49>)+# @E61>-#'*1-:-# @E61>-#;6<R6# L&H# A6)6#'68-# $%&#@:6F521+#M(7#@E61>-#;E678# .761F-#;)7-6<#LNEF(7-7# S-76I6)6#=F(*I# A6)6/7418+# @ !"#$%&'( ;H=JO# ;(\X67-#@D# M(7#J6I((E# .761F-#%49#A6)6#=F(*I# @ )*&+',"-.%/( S$%=.#;)7-6<%6+-# =F(*I-76# LF6+21+-671># ;G';)7-6<# LF6+21#[(*:I# @E61>-#;"# @E61>-#;)(7<# H618+E61-# *&'+,"#$%&'$#( .761F-#%49#A6)6#@EEF46:1-# -

Download File

Annex 2: List of tested and analyzed data sharing tools (non-exhaustive) Below are the specifications of the tools surveyed, as to February 2015, with some updates from April 2016. The tools selected in the context of EU BON are available in the main text of the publication and are described in more details. This list is also available on the EU BON Helpdesk website, where it will be regularly updated as needed. Additional lists are available through the GBIF resources page, the DataONE software tools catalogue, the BioVel BiodiversityCatalogue and the BDTracker A.1 GBIF Integrated Publishing Toolkit (IPT) Main usage, purpose, selected examples The Integrated Publishing Toolkit is a free open source software tool written in Java which is used to publish and share biodiversity data sets and metadata through the GBIF network. Designed for interoperability, it enables the publishing of content in databases or text files using open standards, namely, the Darwin Core and the Ecological Metadata Language. It also provides a 'one-click' service to convert data set metadata into a draft data paper manuscript for submission to a peer-reviewed journal. Currently, the IPT supports three core types of data: checklists, occurrence datasets and sample based data (plus datasets at metadata level only). The IPT is a community-driven tool. Core development happens at the GBIF Secretariat but the coding, documentation, and internationalization are a community effort. New versions incorporate the feedback from the people who actually use the IPT. In this way, users can help get the features they want by becoming involved. The user interface of the IPT has so far been translated into six languages: English, French, Spanish, Traditional Chinese, Brazilian Portuguese, Japanese (Robertson et al, 2014). -

System and Organization Controls (SOC) 3 Report Over the Google Cloud Platform System Relevant to Security, Availability, and Confidentiality

System and Organization Controls (SOC) 3 Report over the Google Cloud Platform System Relevant to Security, Availability, and Confidentiality For the Period 1 May 2020 to 30 April 2021 Google LLC 1600 Amphitheatre Parkway Mountain View, CA, 94043 650 253-0000 main Google.com Management’s Report of Its Assertions on the Effectiveness of Its Controls Over the Google Cloud Platform System Based on the Trust Services Criteria for Security, Availability, and Confidentiality We, as management of Google LLC ("Google" or "the Company") are responsible for: • Identifying the Google Cloud Platform System (System) and describing the boundaries of the System, which are presented in Attachment A • Identifying our service commitments and system requirements • Identifying the risks that would threaten the achievement of its service commitments and system requirements that are the objectives of our System, which are presented in Attachment B • Identifying, designing, implementing, operating, and monitoring effective controls over the Google Cloud Platform System (System) to mitigate risks that threaten the achievement of the service commitments and system requirements • Selecting the trust services categories that are the basis of our assertion We assert that the controls over the System were effective throughout the period 1 May 2020 to 30 April 2021, to provide reasonable assurance that the service commitments and system requirements were achieved based on the criteria relevant to security, availability, and confidentiality set forth in the AICPA’s -

A Program Optimization for Automatic Database Result Caching

rtifact A Program Optimization for Comp le * A t * te n * te A is W s E * e n l l C o L D C o P * * c u e m s Automatic Database Result Caching O E u e e P n R t v e o d t * y * s E a a l d u e a t Ziv Scully Adam Chlipala Carnegie Mellon University, Pittsburgh, PA, USA Massachusetts Institute of Technology CSAIL, [email protected] Cambridge, MA, USA [email protected] Abstract formance bottleneck, even with the maturity of today’s database Most popular Web applications rely on persistent databases based systems. Several pathologies are common. Database queries may on languages like SQL for declarative specification of data mod- incur significant latency by themselves, on top of the cost of interpro- els and the operations that read and modify them. As applications cess communication and network round trips, exacerbated by high scale up in user base, they often face challenges responding quickly load. Applications may also perform complicated postprocessing of enough to the high volume of requests. A common aid is caching of query results. database results in the application’s memory space, taking advantage One principled mitigation is caching of query results. Today’s of program-specific knowledge of which caching schemes are sound most-used Web applications often include handwritten code to main- and useful, embodied in handwritten modifications that make the tain data structures that cache computations on query results, keyed off of the inputs to those queries. Many frameworks are used widely program less maintainable. -

Data Platforms Map from 451 Research

1 2 3 4 5 6 Azure AgilData Cloudera Distribu2on HDInsight Metascale of Apache Kaa MapR Streams MapR Hortonworks Towards Teradata Listener Doopex Apache Spark Strao enterprise search Apache Solr Google Cloud Confluent/Apache Kaa Al2scale Qubole AWS IBM Azure DataTorrent/Apache Apex PipelineDB Dataproc BigInsights Apache Lucene Apache Samza EMR Data Lake IBM Analy2cs for Apache Spark Oracle Stream Explorer Teradata Cloud Databricks A Towards SRCH2 So\ware AG for Hadoop Oracle Big Data Cloud A E-discovery TIBCO StreamBase Cloudera Elas2csearch SQLStream Data Elas2c Found Apache S4 Apache Storm Rackspace Non-relaonal Oracle Big Data Appliance ObjectRocket for IBM InfoSphere Streams xPlenty Apache Hadoop HP IDOL Elas2csearch Google Azure Stream Analy2cs Data Ar2sans Apache Flink Azure Cloud EsgnDB/ zone Platforms Oracle Dataflow Endeca Server Search AWS Apache Apache IBM Ac2an Treasure Avio Kinesis LeanXcale Trafodion Splice Machine MammothDB Drill Presto Big SQL Vortex Data SciDB HPCC AsterixDB IBM InfoSphere Towards LucidWorks Starcounter SQLite Apache Teradata Map Data Explorer Firebird Apache Apache JethroData Pivotal HD/ Apache Cazena CitusDB SIEM Big Data Tajo Hive Impala Apache HAWQ Kudu Aster Loggly Ac2an Ingres Sumo Cloudera SAP Sybase ASE IBM PureData January 2016 Logic Search for Analy2cs/dashDB Logentries SAP Sybase SQL Anywhere Key: B TIBCO Splunk Maana Rela%onal zone B LogLogic EnterpriseDB SQream General purpose Postgres-XL Microso\ Ry\ X15 So\ware Oracle IBM SAP SQL Server Oracle Teradata Specialist analy2c PostgreSQL Exadata -

ACID Compliant Distributed Key-Value Store

ACID Compliant Distributed Key-Value Store #1 #2 #3 Lakshmi Narasimhan Seshan , Rajesh Jalisatgi , Vijaeendra Simha G A # 1l [email protected] # 2r [email protected] #3v [email protected] Abstract thought that would be easier to implement. All Built a fault-tolerant and strongly-consistent key/value storage components run http and grpc endpoints. Http endpoints service using the existing RAFT implementation. Add ACID are for debugging/configuration. Grpc Endpoints are transaction capability to the service using a 2PC variant. Build used for communication between components. on the raftexample[1] (available as part of etcd) to add atomic Replica Manager (RM): RM is the service discovery transactions. This supports sharding of keys and concurrent transactions on sharded KV Store. By Implementing a part of the system. RM is initiated with each of the transactional distributed KV store, we gain working knowledge Replica Servers available in the system. Users have to of different protocols and complexities to make it work together. instantiate the RM with the number of shards that the user wants the key to be split into. Currently we cannot Keywords— ACID, Key-Value, 2PC, sharding, 2PL Transaction dynamically change while RM is running. New Replica Servers cannot be added or removed from once RM is I. INTRODUCTION up and running. However Replica Servers can go down Distributed KV stores have become a norm with the and come back and RM can detect this. Each Shard advent of microservices architecture and the NoSql Leader updates the information to RM, while the DTM revolution. Initially KV Store discarded ACID properties leader updates its information to the RM. -

2.2 Adaptive Routing Algorithms and Router Design 20

https://theses.gla.ac.uk/ Theses Digitisation: https://www.gla.ac.uk/myglasgow/research/enlighten/theses/digitisation/ This is a digitised version of the original print thesis. Copyright and moral rights for this work are retained by the author A copy can be downloaded for personal non-commercial research or study, without prior permission or charge This work cannot be reproduced or quoted extensively from without first obtaining permission in writing from the author The content must not be changed in any way or sold commercially in any format or medium without the formal permission of the author When referring to this work, full bibliographic details including the author, title, awarding institution and date of the thesis must be given Enlighten: Theses https://theses.gla.ac.uk/ [email protected] Performance Evaluation of Distributed Crossbar Switch Hypermesh Sarnia Loucif Dissertation Submitted for the Degree of Doctor of Philosophy to the Faculty of Science, Glasgow University. ©Sarnia Loucif, May 1999. ProQuest Number: 10391444 All rights reserved INFORMATION TO ALL USERS The quality of this reproduction is dependent upon the quality of the copy submitted. In the unlikely event that the author did not send a com plete manuscript and there are missing pages, these will be noted. Also, if material had to be removed, a note will indicate the deletion. uest ProQuest 10391444 Published by ProQuest LLO (2017). Copyright of the Dissertation is held by the Author. All rights reserved. This work is protected against unauthorized copying under Title 17, United States C ode Microform Edition © ProQuest LLO. ProQuest LLO. -

Arangodb Is Hailed As a Native Multi-Model Database by Its Developers

ArangoDB i ArangoDB About the Tutorial Apparently, the world is becoming more and more connected. And at some point in the very near future, your kitchen bar may well be able to recommend your favorite brands of whiskey! This recommended information may come from retailers, or equally likely it can be suggested from friends on Social Networks; whatever it is, you will be able to see the benefits of using graph databases, if you like the recommendations. This tutorial explains the various aspects of ArangoDB which is a major contender in the landscape of graph databases. Starting with the basics of ArangoDB which focuses on the installation and basic concepts of ArangoDB, it gradually moves on to advanced topics such as CRUD operations and AQL. The last few chapters in this tutorial will help you understand how to deploy ArangoDB as a single instance and/or using Docker. Audience This tutorial is meant for those who are interested in learning ArangoDB as a Multimodel Database. Graph databases are spreading like wildfire across the industry and are making an impact on the development of new generation applications. So anyone who is interested in learning different aspects of ArangoDB, should go through this tutorial. Prerequisites Before proceeding with this tutorial, you should have the basic knowledge of Database, SQL, Graph Theory, and JavaScript. Copyright & Disclaimer Copyright 2018 by Tutorials Point (I) Pvt. Ltd. All the content and graphics published in this e-book are the property of Tutorials Point (I) Pvt. Ltd. The user of this e-book is prohibited to reuse, retain, copy, distribute or republish any contents or a part of contents of this e-book in any manner without written consent of the publisher. -

Adaptive Parallelism for Coupled, Multithreaded Message-Passing Programs Samuel K

University of New Mexico UNM Digital Repository Computer Science ETDs Engineering ETDs Fall 12-1-2018 Adaptive Parallelism for Coupled, Multithreaded Message-Passing Programs Samuel K. Gutiérrez Follow this and additional works at: https://digitalrepository.unm.edu/cs_etds Part of the Numerical Analysis and Scientific omputC ing Commons, OS and Networks Commons, Software Engineering Commons, and the Systems Architecture Commons Recommended Citation Gutiérrez, Samuel K.. "Adaptive Parallelism for Coupled, Multithreaded Message-Passing Programs." (2018). https://digitalrepository.unm.edu/cs_etds/95 This Dissertation is brought to you for free and open access by the Engineering ETDs at UNM Digital Repository. It has been accepted for inclusion in Computer Science ETDs by an authorized administrator of UNM Digital Repository. For more information, please contact [email protected]. Samuel Keith Guti´errez Candidate Computer Science Department This dissertation is approved, and it is acceptable in quality and form for publication: Approved by the Dissertation Committee: Professor Dorian C. Arnold, Chair Professor Patrick G. Bridges Professor Darko Stefanovic Professor Alexander S. Aiken Patrick S. McCormick Adaptive Parallelism for Coupled, Multithreaded Message-Passing Programs by Samuel Keith Guti´errez B.S., Computer Science, New Mexico Highlands University, 2006 M.S., Computer Science, University of New Mexico, 2009 DISSERTATION Submitted in Partial Fulfillment of the Requirements for the Degree of Doctor of Philosophy Computer Science The University of New Mexico Albuquerque, New Mexico December 2018 ii ©2018, Samuel Keith Guti´errez iii Dedication To my beloved family iv \A Dios rogando y con el martillo dando." Unknown v Acknowledgments Words cannot adequately express my feelings of gratitude for the people that made this possible. -

Vimal Daga Chief Technical Officer (CTO) – Linuxworld Informatics Pvt Ltd Professional Experience & Certifications

Vimal Daga Chief Technical Officer (CTO) – LinuxWorld Informatics Pvt Ltd Professional Experience & Certifications: I Professional Experience During this period, has been engaged with various corporate clients on different domains and has been involved in imparting corporate Training programs and Consultancy for various technologies that covers the following: A. Sr. Machine Learning / Deep Learning / Data Scientist / NLP Consultant and Researcher Expertise in the field of Artificial Intelligence, Deep Learning, and Computer Vision and having ability to solve problems such as Face Detection, Face Recognition and Object Detection using Deep Neural Network (CNN, DNN, RNN, Convolution Networks etc.) and Optical Character Detection and Recognition (OCD & OCR) Worked in tools such as Tensorflow, Caffe/Caffe2, Keras, Theano, PyTorch etc. Build prototypes related to deep learning problems in the field of computer vision. Publications at top international conferences/ journals in fields related to computer vision/deep learning/machine learning / AI Experience on tools, frameworks like Microsoft Azure ML, Chat Bot Framework/LUIS . IBM Watson / ConversationService, Google TensorFlow / Python for Machine Learning (e.g. scikit-learn),Open source ML libraries and tools like Apache Spark Highly Worked on Data Science, Big Data,datastructures, statistics , algorithms like Regression, Classification etc. Working knowlegde of Supervised / Unsuperivsed learning (Decision Trees, Logistic Regression, SVMs,GBM, etc) Expertise in Sentiment Analysis, Entity Extraction, Natural Language Understanding (NLU), Intent recognition Strong understanding of text pre-processing and normalization techniques, such as tokenization, POS tagging, and parsing, and how they work at a basic level and NLP toolkits as NLTK, Gensim,, Apac SpaCyhe UIMA etc. I have Hands on experience related to Datasets such as or including text, images and other logs or clickstreams. -

Managing Cache Consistency to Scale Dynamic Web Systems

Managing Cache Consistency to Scale Dynamic Web Systems by Chris Wasik A thesis presented to the University of Waterloo in fulfilment of the thesis requirement for the degree of Master of Applied Science in Electrical and Computer Engineering Waterloo, Ontario, Canada, 2007 c Chris Wasik 2007 AUTHORS DECLARATION FOR ELECTRONIC SUBMISSION OF A THESIS I hereby declare that I am the sole author of this thesis. This is a true copy of the thesis, including any required final revisions, as accepted by my examiners. I understand that my thesis may be made electronically available to the public. ii Abstract Data caching is a technique that can be used by web servers to speed up the response time of client requests. Dynamic websites are becoming more popular, but they pose a problem - it is difficult to cache dynamic content, as each user may receive a different version of a webpage. Caching fragments of content in a distributed way solves this problem, but poses a maintainability challenge: cached fragments may depend on other cached fragments, or on underlying information in a database. When the underlying information is updated, care must be taken to ensure cached information is also invalidated. If new code is added that updates the database, the cache can very easily become inconsistent with the underlying data. The deploy-time dependency analysis method solves this maintainability problem by analyzing web application source code at deploy-time, and statically writing cache dependency information into the deployed application. This allows for the significant performance gains distributed object caching can allow, without any of the maintainability problems that such caching creates. -

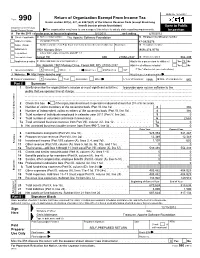

Return of Organization Exempt from Income

OMB No. 1545-0047 Return of Organization Exempt From Income Tax Form 990 Under section 501(c), 527, or 4947(a)(1) of the Internal Revenue Code (except black lung benefit trust or private foundation) Open to Public Department of the Treasury Internal Revenue Service The organization may have to use a copy of this return to satisfy state reporting requirements. Inspection A For the 2011 calendar year, or tax year beginning 5/1/2011 , and ending 4/30/2012 B Check if applicable: C Name of organization The Apache Software Foundation D Employer identification number Address change Doing Business As 47-0825376 Name change Number and street (or P.O. box if mail is not delivered to street address) Room/suite E Telephone number Initial return 1901 Munsey Drive (909) 374-9776 Terminated City or town, state or country, and ZIP + 4 Amended return Forest Hill MD 21050-2747 G Gross receipts $ 554,439 Application pending F Name and address of principal officer: H(a) Is this a group return for affiliates? Yes X No Jim Jagielski 1901 Munsey Drive, Forest Hill, MD 21050-2747 H(b) Are all affiliates included? Yes No I Tax-exempt status: X 501(c)(3) 501(c) ( ) (insert no.) 4947(a)(1) or 527 If "No," attach a list. (see instructions) J Website: http://www.apache.org/ H(c) Group exemption number K Form of organization: X Corporation Trust Association Other L Year of formation: 1999 M State of legal domicile: MD Part I Summary 1 Briefly describe the organization's mission or most significant activities: to provide open source software to the public that we sponsor free of charge 2 Check this box if the organization discontinued its operations or disposed of more than 25% of its net assets.