Santana Vagnerfigueredode M.Pdf

Total Pages: 16

File Type: PDF, Size: 1020 KB

Recommended publications

World Wide Web: Introduzione E Componenti

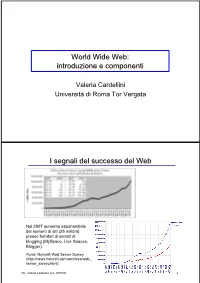

World Wide Web: introduzione e componenti (World Wide Web: Introduction and Components). Valeria Cardellini, Università di Roma Tor Vergata. IW - Valeria Cardellini, A.A. 2007/08.

Signs of the Web's success

In 2007, exponential growth in the number of sites (25 million) hosted by blogging service providers (MySpace, Live Spaces, Blogger). Source: Netcraft Web Server Survey (http://news.netcraft.com/archives/web_server_survey.html)

Signs of the Web's success (2)

• Until the introduction of P2P systems, the Web was the killer application of the Internet (75% of Internet traffic in 1998)

| Event | Period | Peak day | Peak minute |
|---|---|---|---|
| NCSA server (Oct. 1995) | - | 2 Million | - |
| Olympic Summer Games (Aug. 1996) | 192 Million (17 days) | 8 Million | - |
| Nasa Pathfinder (July 1997) | 942 Million (14 days) | 40 Million | - |
| Olympic Winter Games (Feb. 1998) | 634.7 Million (16 days) | 55 Million | 110,000 |
| Wimbledon (July 1998) | - | - | 145,000 |
| FIFA World Cup (July 1998) | 1,350 Million (84 days) | 73 Million | 209,000 |
| Wimbledon (July 1999) | - | 125 Million | 430,000 |
| Wimbledon (July 2000) | - | 282 Million | 964,000 |
| Olympic Summer Games (Sept. 2000) | - | 875 Million | 1,200,000 |

[Load measured in hits]

• In addition: google.com, msn.com, yahoo.com (> 200 million hits per day)

The reasons behind the Web's success

• Digitization of information: any information can be represented in binary as a sequence of 0s and 1s
• Spread of the Internet (since the 1970s): transport of information anywhere, very quickly and at very low cost
• Spread of PCs (since the 1980s): access to, storage of, and processing of information by anyone at very low cost
• Ease of use and transparency of resource allocation: ease of use through graphical interfaces

MHCC Accessibility Conformance Report

Mt. Hood Community College Website Accessibility Conformance Report, WCAG Edition. VPAT® Version 2.3 (Revised), April 2019.

• Name of Product/Version: www.mhcc.edu
• Product Description: MHCC Main Website
• Report Date: August 2, 2019
• Contact Information: Tristan Price – [email protected]
• Notes:
• Evaluation Methods Used: IAAP WAS Testing Methodology, SiteImprove, Manual Testing

“Voluntary Product Accessibility Template” and “VPAT” are registered service marks of the Information Technology Industry Council (ITI).

Applicable Standards/Guidelines

This report covers the degree of conformance for the following accessibility standard/guidelines:

| Standard/Guideline | Included In Report |
|---|---|
| Web Content Accessibility Guidelines 2.0 | Level A (Yes / No), Level AA (Yes / No), Level AAA (Yes / No) |
| Web Content Accessibility Guidelines 2.1 | Level A (Yes / No), Level AA (Yes / No), Level AAA (Yes / No) |

Terms

The terms used in the Conformance Level information are defined as follows:

• Supports: The functionality of the product has at least one method that meets the criterion without known defects or meets with equivalent facilitation.
• Partially Supports: Some functionality of the product does not meet the criterion.
• Does Not Support: The majority of product functionality does not meet the criterion.
• Not Applicable: The criterion is not relevant to the product.
• Not Evaluated: The product has not been evaluated against the criterion. This can be used only in WCAG 2.0 Level AAA.

WCAG 2.x Report

Note: When reporting on conformance with the WCAG 2.x Success Criteria, they are scoped for full pages, complete processes, and accessibility-supported ways of using technology as documented in the WCAG 2.0 Conformance Requirements.

THE FUTURE of SCREENS from James Stanton a Little Bit About Me

THE FUTURE OF SCREENS. From James Stanton.

A little bit about me. Hi, I am James (Mckenzie) Stanton: Thinker / Designer / Engineer / Director / Executive / Artist / Human / Practitioner / Gardener / Builder / and much more... Born in Essex, United Kingdom, I survived a few hair-raising moments and learnt digital from the ground up. OK, enough of the pleasantries. I have been working in the design field since 1999, from the Falmouth School of Art and onwards to the RCA, and many companies. OK, less about me and more about what I have seen…

Today we are going to cover:

- SCREENS CONCEPTS
- DIGITAL TRANSFORMATION
- WHY ASSETS LIBRARIES
- CODE LIBRARIES
- COST EFFECTIVE SOLUTION FOR IMPLEMENTATION

I know, I know, I know. That's all well and good, but what does this all mean to a company like mine? We are about to see a massive change in consumer behavior, so let's get ready.

DIGITAL TRANSFORMATION AS A USP. Getting this correct will change your company forever.

DIGITAL TRANSFORMATION USP-01. Digital transformation (DT), the use of technology to radically improve performance or reach of enterprises, is becoming a hot topic for companies across the globe. [Scale: VERY DIGITAL / CHANGING / NOT VERY DIGITAL]

DIGITAL TRANSFORMATION USP-02. Companies face common pressures from customers, employees and competitors to begin or speed up their digital transformation. However, they are transforming at different paces with different results.

DIGITAL TRANSFORMATION USP-03. Successful digital transformation comes not from implementing new technologies but from transforming your organisation to take advantage of the possibilities that new technologies provide.

Using the Dojo Toolkit in a Webworks Application Michelle Mendoza RIM Developer Relations Dylan Schiemann Co-Founder of Dojo Toolkit & CEO of Sitepen Inc

Using the Dojo Toolkit in a WebWorks application. Michelle Mendoza, RIM Developer Relations. Dylan Schiemann, Co-founder of Dojo Toolkit & CEO of SitePen Inc.

Agenda

• What is Dojo?
• Using the Dojo Mobile package
• Dojo Mobile Application Demos
• Basic Code Walkthrough
• Q&A

Dojo Toolkit

• Build sophisticated Web apps (http://dojotoolkit.org/)
• Supports multiple browsers
• Enables rapid development
• Philosophy: push the limits (SVG, WebGL, HTML5); define & adopt de facto standards; developer productivity & tools; open, free & liberally licensed

Toolkit Components

• Dojo: also known as “core”; AJAX, DOM manipulation, class-like programming, events
• Dijit: extensive set of UI components known as widgets
• DojoX: collection of packages and modules built upon Dojo core and Dijit (dojox/mobile, dojox/gfx, dojox/charting and many more)
• Util: utility scripts; create optimized builds; DOH: Dojo Objective Harness

Dojo 1.8 Improvements

• Performance optimizations
• New widgets added
• 175 sub-packages and 1400 modules
• Much improved documentation

AMD - Asynchronous Module Definition

• Mechanism allows modules and dependencies to be loaded asynchronously
• Asynchronous format reduces app loading time
• Better performance, easier code handling
• Only load the modules you require
• Dojo has a lightweight AMD loader of < 4kb
• Reduce page load time by up to 10x

Interactive Experience

• Grid displays
• Dynamic charts
• Various Form Controls and Form Validators
• Cross Platform graphics
• Mapping using OpenLayers
• Animated effects
• Gauges

HTTP Cookie - Wikipedia, the Free Encyclopedia 14/05/2014

HTTP cookie - Wikipedia, the free encyclopedia 14/05/2014

A cookie, also known as an HTTP cookie, web cookie, or browser cookie, is a small piece of data sent from a website and stored in a user's web browser while the user is browsing that website. Every time the user loads the website, the browser sends the cookie back to the server to notify the website of the user's previous activity.[1] Cookies were designed to be a reliable mechanism for websites to remember stateful information (such as items in a shopping cart) or to record the user's browsing activity (including clicking particular buttons, logging in, or recording which pages were visited by the user as far back as months or years ago).

Although cookies cannot carry viruses, and cannot install malware on the host computer,[2] tracking cookies and especially third-party tracking cookies are commonly used as ways to compile long-term records of individuals' browsing histories, a potential privacy concern that prompted European[3] and U.S.

Paweł Rajba [email protected]

Paweł Rajba [email protected] http://itcourses.eu/

Contents: Introduction; Advantages; Disadvantages; XMLHttpRequest; AJAX in practice; AJAX + jQuery; SOP, CORS and JSONP; References

What does it consist of?

• JavaScript + DOM
• The XMLHttpRequest object
• Any server-side technology

AJAX is nothing new (in a sense)

• The technologies date from 1998
• Jesse James Garrett's article appeared in 2005

How does it work?

• Header X-Requested-With: XMLHttpRequest

Applications are more interactive

• Faster updating of page content
• Lower bandwidth usage

It makes it possible to build richer user interfaces. It uses existing technologies.

By default the application is visible under only one address:

• The Back button does not work, which according to Jakob Nielsen's report is the second most useful navigation feature
• Application state is not represented by the URL
  ▪ so it cannot be saved, e.g. to bookmarks
  ▪ this rules out word-of-mouth promotion as well as simply sending links to friends
• This can be worked around, but it takes extra work

Search engines may have trouble indexing pages correctly. Harder to debug. Harder to test.

Methods of the XMLHttpRequest object

• abort(): aborts the request
• getResponseHeader(key): returns the value of an HTTP header field
• open(method, Uri, [async, [username, [password]]]): sets the request parameters:
  ▪ method: GET or POST
  ▪ Uri: the request address
  ▪ async: whether the request is asynchronous (default true)
  ▪ username, password: can be supplied if access to the resource requires authentication

Tecnologias Da Informação E Comunicação Vs Exclusão Social

Instituto Superior de Engenharia do Porto, Departamento de Engenharia Informática, Computers and Systems branch. 5th-Year Project: Tecnologias da Informação e Comunicação vs Exclusão Social (Information and Communication Technologies vs Social Exclusion). Author/Number: Sérgio Francisco dos Santos Morais / 990348. Supervisor: Dr. Constantino Martins. Porto, September 2004.

Acknowledgements

“There is no exaggeration in the world more beautiful than gratitude.” La Bruyère (French writer, 1645-1695)

I take this opportunity to thank everyone who directly or indirectly contributed to and helped me in preparing this project, for their support and encouragement, with special thanks to:

• My supervisor, Dr. Constantino Martins, for the interest, help and support he gave me, and for his availability to clarify the doubts that arose and to review the successive versions of the project;
• Eng. Paulo Ferreira, for his help in the final part of the project;
• Eng. Carlos Vaz de Carvalho, for authorising the distribution of the questionnaires to the students, for reviewing the questionnaire, and for his suggestions;
• Eng. Bertil Marques and Eng. António Costa, for handing out the questionnaires to their students and collecting them;
• The students who filled in the questionnaires;
• My family and friends, for the motivation and encouragement they gave me and for their patience in putting up with days and nights without my attention.

To all of them, my sincere thanks.

Abstract

We live in an increasingly technological society, in which Information and Communication Technologies (ICT) play an important and decisive role. ICT are an integral part of our daily lives, to the point that it is almost impossible to live without them.

The Effect of Ajax on Performance and Usability in Web Environments

The effect of Ajax on performance and usability in web environments. Y.D.C.N. op ’t Roodt, BICT.

• Date of acceptance: August 31st, 2006
• One Year Master Course Software Engineering
• Thesis Supervisor: Dr. Jurgen Vinju
• Internship Supervisor: Ir. Koen Kam
• Company or Institute: Hyves (Startphone Limited)
• Availability: public domain
• Universiteit van Amsterdam, Hogeschool van Amsterdam, Vrije Universiteit

Table of contents

1 Foreword
2 Motivation
2.1 Tasks and sources
2.2 Research question
3 Research method
3.1 On implementation
4 Background and context of Ajax
4.1 Background
4.2 Rich Internet Applications
4.3 JavaScript
4.4 The XMLHttpRequest object

Download Ebook ^ Javascript: Ajax, Cross-Site Scripting, Couchdb

JavaScript: Ajax, Cross-Site Scripting, CouchDB, WebKit, JQuery, Dojo Toolkit, Bookmarklet, ActionScript, V8, SpiderMonkey, Qooxdoo, Ext JS. Filesize: 7.09 MB.

Reviews: It becomes an amazing book which i actually have at any time study. It is actually loaded with wisdom and knowledge You wont sense monotony at at any time of your respective time (that's what catalogues are for regarding should you request me). (Rosina Schowalter V)

Books LLC, Wiki Series, 2011. Condition: New. This item is printed on demand for shipment within 3 working days.

See Also

A Smarter Way to Learn JavaScript: The New Approach That Uses Technology to Cut Your Effort in Half. Createspace, United States, 2014. Paperback. Book Condition: New. 251 x 178 mm. Language: English. Brand New Book. Print on Demand. The ultimate learn-by-doing approach. Written for beginners, useful for experienced developers who want to...

Why We Hate Us: American Discontent in the New Millennium. Random House USA Inc, United States, 2009.

Learning Technology Supplement Support

BITs Learning Technology Supplement. Number Eight: June 2002.

Welcome to this special issue of the Learning Technology supplement. This issue highlights new legislation coming into force in September which has major implications for any staff who publish information on the Web. Staff from many departments and teams have contributed to this issue and thanks are extended to all of them for their contributions, comments, advice and support.

Why do you want a website anyway?

New legislation (see page 2) has major implications for any staff involved in producing Web pages. All staff involved in Web publishing should review their pages in the light of this legislation. Information seminars (see back page) are to be held on 12th June.

Although this supplement focuses on making your website as accessible as possible, there is no point having a fully accessible, attractive website if it has no real purpose and if your students or target users don’t ever visit it. Before you go to the bother and effort of building a website, stop for a moment and ask yourself a couple of questions: “Who is this website for?” and “What is the user supposed to gain from using this site?”

Websites can have many purposes, from attracting potential students to your course to publicising your excellent research activities. They can be used to provide up-to-date information or to act as a resource base or to give the students a self study centre, or… Whatever your reason, it has to be clear and appropriate for your users.

The Influence of Assistive Technology on Students with Disability: a Review

SRJIS/BIMONTHLY / DR. B. S. PARIMAL (375-393)

THE INFLUENCE OF ASSISTIVE TECHNOLOGY ON STUDENTS WITH DISABILITY: A REVIEW. B. S. Parimal, Ph. D., Assistant Professor, Department of Psychology, the Maharaja Sayajirao University of Baroda.

Abstract

The 21st century is known as the century of technology, and the aim of technology is to improve the lives of human beings. Assistive Technology can be defined as equipment and software that are used to maintain or improve the functional capabilities of a person with a disability. Technological innovation has dramatically altered the landscape of both preventive and therapeutic approaches to students with disability. Assistive Technology interventions measurably enhance the immediate and long-term academic performance of students with learning disabilities. Assistive Technology plays a significant role in the prevention and treatment of disability in students. Technical innovation has had a dramatic impact on a central arena of primary disability prevention: the reduction of serious, disabling injuries in children. In general, children with disabilities rely more heavily than other children on technical interventions, including medications, specialized medical and educational services, and a variety of assistive devices. Childhood disability cannot be fully understood without a clear appreciation for the power and machinery of technical innovation in the modern world. Technical progress in both preventive and therapeutic interventions is constantly reshaping the character and prevalence of disability among students, and therefore its essential challenge to both the health and education communities. Computer technology has the potential to act as an equalizer by freeing many students from their disabilities in a way that allows them to achieve their true potential. The results reflected an improvement in student achievement as well as an improvement in students' attitudes toward the use of the technology.

Empresas Podem Fazer Pela Inclusão Digital

O Que as Empresas Podem Fazer pela Inclusão Digital (What Companies Can Do for Digital Inclusion), by Renato Cruz, is a publication of Instituto Ethos, distributed free of charge to its members.

• Produced by: Instituto Ethos de Empresas e Responsabilidade Social, Rua Francisco Leitão, 469 - 14º andar, conj. 1407, Pinheiros - 05414-020, SP. Tel. (11) 3897-2400. www.ethos.org.br
• Sponsors: Sadia, ALSTOM
• Author: Renato Cruz
• Instituto Ethos contributors: Benjamin Gonçalves, Carlos Adriano Silva, Carmen Weingrill, Fernando Pachi (coordination), Leno F. Silva, Mateus Cozer, Paulo Itacarambi
• Contributors: Cristina De Luca, Daniel Marinho and Ethevaldo Siqueira
• Writing: Renato Cruz
• Proofreading: Ana Maria Barbosa
• Graphic design and production: Planeta Terra Design (Waldemar Zaidler and William Haruo)

International Cataloguing-in-Publication Data (CIP) (Câmara Brasileira do Livro, SP, Brasil): Cruz, Renato. O que as empresas podem fazer pela inclusão digital / Renato Cruz. São Paulo: Instituto Ethos, 2004. ISBN 85-88046-15-6. Various contributors. Bibliography. 1. Companies - Social responsibility 2. Information technology I. Title. 04-1504 CDD-303.4833. Indexes for the systematic catalogue: 1. Digital inclusion: Information and communication technology: Social aspects 303.4833

Print run: 7,000 copies. São Paulo, March 2004.

Reproduction of this publication is permitted, provided the source is cited and with prior authorisation from Instituto Ethos.

Important clarifications about the activities of Instituto Ethos:

1. Our guidance work for companies is voluntary, with no charge or remuneration.
2. We do not provide consulting, and we do not accredit or authorise professionals to offer any kind of service on our behalf.
3. We are not a social responsibility certification body, nor do we provide a “seal” for that purpose.