Virtualizing GPUs in VMware vSphere Using NVIDIA Virtual Compute Server on Dell EMC Infrastructure

Total Pages: 16

File Type: PDF, Size: 1020 KB


Recommended publications

-

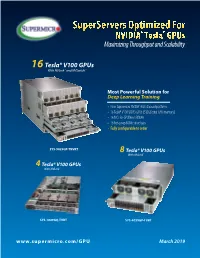

Supermicro GPU Solutions Optimized for NVIDIA NVLink

SuperServers Optimized for NVIDIA® Tesla® GPUs: Maximizing Throughput and Scalability

16 Tesla® V100 GPUs with NVLink™ and NVSwitch™: Most Powerful Solution for Deep Learning Training (SYS-9029GP-TNVRT)
• New Supermicro NVIDIA® HGX-2 based platform
• 16 Tesla® V100 SXM3 GPUs (512GB total GPU memory)
• 16 NICs for GPUDirect RDMA
• 16 hot-swap NVMe drive bays
• Fully configurable to order

8 Tesla® V100 GPUs with NVLink™: SYS-4029GP-TVRT
4 Tesla® V100 GPUs with NVLink™: SYS-1029GQ-TVRT

www.supermicro.com/GPU, March 2019

Maximum Acceleration for AI/DL Training Workloads
• PERFORMANCE: Highest parallel peak performance with NVIDIA Tesla V100 GPUs
• THROUGHPUT: Best-in-class GPU-to-GPU bandwidth with a maximum speed of 300GB/s
• SCALABILITY: Designed for direct interconnections between multiple GPU nodes
• FLEXIBILITY: PCI-E 3.0 x16 for low-latency I/O expansion capacity and GPUDirect RDMA support
• DESIGN: Optimized GPU cooling for highest sustained parallel computing performance
• EFFICIENCY: Redundant Titanium Level power supplies and intelligent cooling control

| Model | SYS-1029GQ-TVRT | SYS-4029GP-TVRT |
| --- | --- | --- |
| CPU Support | Dual Intel® Xeon® Scalable processors with 3 UPI up to 10.4GT/s; supports up to 205W TDP CPU | Dual Intel® Xeon® Scalable processors with 3 UPI up to 10.4GT/s; supports up to 205W TDP CPU |
| GPU Support | 4 NVIDIA Tesla V100 GPUs; NVIDIA® NVLink™ GPU interconnect up to 300GB/s; optimized for GPUDirect RDMA | 8 NVIDIA® Tesla® V100 GPUs; NVIDIA® NVLink™ GPU interconnect up to 300GB/s; optimized for GPUDirect RDMA |

• Independent CPU and GPU thermal zones

GPU Virtualization on VMware's Hosted I/O Architecture

GPU Virtualization on VMware’s Hosted I/O Architecture

Micah Dowty, Jeremy Sugerman
VMware, Inc., 3401 Hillview Ave, Palo Alto, CA 94304
[email protected], [email protected]

Abstract

Modern graphics co-processors (GPUs) can produce high fidelity images several orders of magnitude faster than general purpose CPUs, and this performance expectation is rapidly becoming ubiquitous in personal computers. Despite this, GPU virtualization is a nascent field of research. This paper introduces a taxonomy of strategies for GPU virtualization and describes in detail the specific GPU virtualization architecture developed for VMware’s hosted products (VMware Workstation and VMware Fusion).

We analyze the performance of our GPU virtualization with a combination of applications and microbenchmarks. We also compare against software rendering, the GPU virtualization in Parallels Desktop 3.0, and the native GPU. We find that taking advantage of hardware acceleration significantly closes the gap between pure …

… more computational performance than CPUs. At the same time, GPU acceleration has extended beyond entertainment (e.g., games and video) into the basic windowing systems of recent operating systems and is starting to be applied to non-graphical high-performance applications including protein folding, financial modeling, and medical image processing. The rise in applications that exploit, or even assume, GPU acceleration makes it increasingly important to expose the physical graphics hardware in virtualized environments. Additionally, virtual desktop infrastructure (VDI) initiatives have led many enterprises to try to simplify their desktop management by delivering VMs to their users. Graphics virtualization is extremely important to a user whose primary desktop runs inside a VM.

GPUs pose a unique challenge in the field of virtualization.

High Performance Computing and AI Solutions Portfolio

Brochure: High Performance Computing and AI Solutions Portfolio

Technology and expertise to help you accelerate discovery and innovation

Go ahead. Dream big.

Discovery and innovation have always started with great minds dreaming big. As artificial intelligence (AI), high performance computing (HPC) and data analytics continue to converge and evolve, they are fueling the next industrial revolution and the next quantum leap in human progress. And with the help of increasingly powerful technology, you can dream even bigger. Dell Technologies will be there every step of the way with the technology you need to power tomorrow’s discoveries and the expertise to bring it all together, today.

The convergence of HPC and AI is driven by data. The data‑driven age is dramatically reshaping industries and reinventing the future. As vast amounts of data pour in from increasingly diverse sources, leveraging that data is both critical and transformational. Whether you’re working to save lives, understand the universe, build better machines, neutralize financial risks or anticipate customer sentiment, data informs and drives decisions that impact the success of your organization, and shapes the future of our world.

AI, HPC and data analytics are technologies designed to unlock the value of your data. While they have long been treated as separate, the three technologies are converging as it becomes clear that analytics and AI are both big‑data problems that require the power, scalable compute, networking and storage provided by HPC.

463 exabytes of data will be created each day by 2025¹
44X ROI: average return on investment (ROI) for HPC²
83% of CIOs say they are investing in AI

BRKIOT-2394.pdf

Unlocking the Mystery of Machine Learning and Big Data Analytics

Robert Barton, Distinguished Architect, @MrRobbarto, CCIE #6660, CCDE #2013::6
Jerome Henry, Principal Engineer, Office of the CTAO, @wirelessccie, CCIE #24750, CWNE #45

BRKIOT-2394

Cisco Webex Teams: Questions? Use Cisco Webex Teams to chat with the speaker after the session.

How:
1. Find this session in the Cisco Events Mobile App
2. Click “Join the Discussion”
3. Install Webex Teams or go directly to the team space
4. Enter messages/questions in the team space

© 2020 Cisco and/or its affiliates. All rights reserved. Cisco Public

[Slide: Cisco Live IoT track session schedule, Monday Jan. 27th through Thursday; #CLEMEA, www.ciscolive.com/emea/learn/technology-tracks.html]

gScale: Scaling up GPU Virtualization with Dynamic Sharing of Graphics

gScale: Scaling up GPU Virtualization with Dynamic Sharing of Graphics Memory Space

Mochi Xue, Shanghai Jiao Tong University and Intel Corporation; Kun Tian, Intel Corporation; Yaozu Dong, Shanghai Jiao Tong University and Intel Corporation; Jiacheng Ma, Jiajun Wang, and Zhengwei Qi, Shanghai Jiao Tong University; Bingsheng He, National University of Singapore; Haibing Guan, Shanghai Jiao Tong University

{xuemochi, mjc0608, jiajunwang, qizhenwei, hbguan}@sjtu.edu.cn, {kevin.tian, eddie.dong}@intel.com, [email protected]

https://www.usenix.org/conference/atc16/technical-sessions/presentation/xue

This paper is included in the Proceedings of the 2016 USENIX Annual Technical Conference (USENIX ATC ’16), June 22–24, 2016, Denver, CO, USA. 978-1-931971-30-0. Open access to the Proceedings of the 2016 USENIX Annual Technical Conference (USENIX ATC ’16) is sponsored by USENIX.

Abstract

With increasing GPU-intensive workloads deployed on cloud, the cloud service providers are seeking for practical and efficient GPU virtualization solutions. However, the cutting-edge GPU virtualization techniques such as gVirt still suffer from the restriction of scalability, which constrains the number of guest virtual GPU instances.

As one of the key enabling technologies of GPU cloud, GPU virtualization is intended to provide flexible and scalable GPU resources for multiple instances with high performance. To achieve such a challenging goal, several GPU virtualization solutions were introduced, i.e., GPUvm [28] and gVirt [30]. gVirt, also known as GVT-g, is a full virtualization solution with mediated pass-through …

Crane: Fast and Migratable GPU Passthrough for OpenCL Applications

Crane: Fast and Migratable GPU Passthrough for OpenCL Applications

James Gleeson, Daniel Kats, Charlie Mei, Eyal de Lara
University of Toronto, Toronto, Canada
{jgleeson,dbkats,cmei,delara}@cs.toronto.edu

ABSTRACT

General purpose GPU (GPGPU) computing in virtualized environments leverages PCI passthrough to achieve GPU performance comparable to bare-metal execution. However, GPU passthrough prevents service administrators from performing virtual machine migration between physical hosts. Crane is a new technique for virtualizing OpenCL-based GPGPU computing that achieves within 5.25% of passthrough GPU performance while supporting VM migration. Crane interposes a virtualization-aware OpenCL library that makes it possible to reclaim and subsequently reassign physical GPUs to a VM without terminating the guest or its applications. Crane also enables continued GPU operation while the VM is undergoing live migration by transparently switching between GPU passthrough operation and API remoting.

… (IaaS) providers now have virtualization offerings with dedicated GPUs that customers can leverage in their guest virtual machines (VMs). For example, Amazon Web Services allows VMs to take advantage of NVIDIA’s high-end GPUs in EC2 [6]. Microsoft and Google recently introduced similar offerings on Azure and GCP platforms respectively [34, 21].

Virtualized GPGPU computing leverages modern IOMMU features to give the VM direct use of the GPU through DMA and interrupt remapping, a technique which is usually called PCI passthrough [17, 50]. By running the GPU driver in the context of the guest operating system, PCI passthrough bypasses the hypervisor and achieves performance that is comparable to bare-metal OS execution [47].
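The switching behavior described in the Crane abstract can be pictured as a two-mode state machine. The following Python sketch is only an illustration under stated assumptions, not Crane's actual implementation: the `Mode` enum, the `GuestGpuSession` class, and all method names are hypothetical, and the code only models which path a guest's OpenCL calls would take at each stage.

```python
from enum import Enum


class Mode(Enum):
    """The two execution paths named in the abstract."""
    PASSTHROUGH = "GPU passthrough"
    API_REMOTING = "API remoting"


class GuestGpuSession:
    """Hypothetical model of how a guest's OpenCL calls are routed."""

    def __init__(self) -> None:
        # Assumption: a guest starts with a physical GPU assigned.
        self.mode = Mode.PASSTHROUGH
        self.log = []

    def begin_migration(self) -> None:
        # The physical GPU is reclaimed; calls fall back to API remoting
        # so the guest and its applications keep running.
        self.mode = Mode.API_REMOTING

    def end_migration(self) -> None:
        # A physical GPU is reassigned once migration has finished.
        self.mode = Mode.PASSTHROUGH

    def submit(self, kernel_name: str) -> str:
        """Record which path a (pretend) kernel launch would take."""
        route = f"{kernel_name} via {self.mode.value}"
        self.log.append(route)
        return route
```

In this model the application keeps calling `submit` throughout; only the route changes, which is the sense in which the abstract calls the switch transparent.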

A Full GPU Virtualization Solution with Mediated Pass-Through

A Full GPU Virtualization Solution with Mediated Pass-Through

Kun Tian, Yaozu Dong, David Cowperthwaite
Intel Corporation

Abstract

Graphics Processing Unit (GPU) virtualization is an enabling technology in emerging virtualization scenarios. Unfortunately, existing GPU virtualization approaches are still suboptimal in performance and full feature support.

This paper introduces gVirt, a product level GPU virtualization implementation with: 1) full GPU virtualization running native graphics driver in guest, and 2) mediated pass-through that achieves both good performance and scalability, and also secure isolation among guests. gVirt presents a virtual full-fledged GPU to each VM. VMs can directly access performance-critical resources, without intervention from the hypervisor in most cases, while privileged operations from guest are trap-and-emulated at minimal cost. Experiments demonstrate that gVirt can achieve …

… shows the spectrum of GPU virtualization solutions (with hardware acceleration increasing from left to right). Device emulation [7] has great complexity and extremely low performance, so it does not meet today’s needs. API forwarding [3][9][22][31] employs a frontend driver, to forward the high level API calls inside a VM, to the host for acceleration. However, API forwarding faces the challenge of supporting full features, due to the complexity of intrusive modification in the guest graphics software stack, and incompatibility between the guest and host graphics software stacks. Direct pass-through [5][37] dedicates the GPU to a single VM, providing full features and the best performance, but at the cost of device sharing capability among VMs. Mediated pass-through [19], passes through performance-critical resources, while mediating privileged operations on the device, with good performance, full features, and sharing capability.
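The trade-offs among the four approaches named in this excerpt can be summarized as a small lookup table. The Python sketch below is illustrative only: the `Strategy` class, its field names, and the `candidates` helper are hypothetical, the values are read off the excerpt rather than measured, and a property is left as `None` wherever the excerpt does not state it.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Strategy:
    """One GPU virtualization approach and the properties the excerpt states.

    None means the excerpt does not say either way.
    """
    name: str
    good_performance: Optional[bool]
    full_features: Optional[bool]
    sharing: Optional[bool]


# Ordered as in the excerpt (hardware acceleration increasing).
STRATEGIES = [
    Strategy("device emulation", False, None, None),
    Strategy("API forwarding", None, False, None),
    Strategy("direct pass-through", True, True, False),
    Strategy("mediated pass-through", True, True, True),
]


def candidates(*required: str) -> List[str]:
    """Names of strategies the excerpt explicitly credits with every required property."""
    return [
        s.name
        for s in STRATEGIES
        if all(getattr(s, prop) is True for prop in required)
    ]
```

For example, `candidates("full_features", "sharing")` returns only `"mediated pass-through"`, matching the excerpt's claim that it combines full features with sharing capability.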

GPU Virtualization on VMware's Hosted I/O Architecture

GPU Virtualization on VMware's Hosted I/O Architecture

Micah Dowty, VMware, Inc., 3401 Hillview Ave, Palo Alto, CA 94304, [email protected]
Jeremy Sugerman, VMware, Inc., 3401 Hillview Ave, Palo Alto, CA 94304, [email protected]

ABSTRACT

Modern graphics co-processors (GPUs) can produce high fidelity images several orders of magnitude faster than general purpose CPUs, and this performance expectation is rapidly becoming ubiquitous in personal computers. Despite this, GPU virtualization is a nascent field of research. This paper introduces a taxonomy of strategies for GPU virtualization and describes in detail the specific GPU virtualization architecture developed for VMware’s hosted products (VMware Workstation and VMware Fusion).

We analyze the performance of our GPU virtualization with a combination of applications and microbenchmarks. We also compare against software rendering, the GPU virtualization in Parallels Desktop 3.0, and the native GPU. We find that taking advantage of hardware acceleration significantly …

1. INTRODUCTION

Over the past decade, virtual machines (VMs) have become increasingly popular as a technology for multiplexing both desktop and server commodity x86 computers. Over that time, several critical challenges in CPU virtualization have been overcome, and there are now both software and hardware techniques for virtualizing CPUs with very low overheads [2]. I/O virtualization, however, is still very much an open problem and a wide variety of strategies are used. Graphics co-processors (GPUs) in particular present a challenging mixture of broad complexity, high performance, rapid change, and limited documentation.

Modern high-end GPUs have more transistors, draw more power, and offer at least an order of magnitude more computational performance than CPUs.

NVIDIA GPUDirect RDMA (GDR): Pipeline Through Host for Large Msg

Efficient and Scalable Communication Middleware for Emerging Dense-GPU Clusters

Ching-Hsiang Chu
Advisor: Dhabaleswar K. Panda
Network Based Computing Lab, Department of Computer Science and Engineering, The Ohio State University, Columbus, OH

Outline
• Introduction
• Problem Statement
• Detailed Description and Results
• Broader Impact on the HPC Community
• Expected Contributions

Network Based Computing Laboratory, SC 19 Doctoral Showcase

Trends in Modern HPC Architecture: Heterogeneous
• Multi/Many-core Processors
• High Performance Interconnects: InfiniBand, Omni-Path, EFA; <1usec latency, 100Gbps+ bandwidth
• Accelerators / Coprocessors: high compute density, high performance/watt
• SSD, NVMe-SSD, NVRAM: node local storage

• Multi-core/many-core technologies
• High Performance Storage and Compute devices
• High Performance Interconnects
• Variety of programming models (MPI, PGAS, MPI+X)

#1 Summit (27,648 GPUs), #2 Sierra (17,280 GPUs), #8 ABCI (4,352 GPUs), #10 Lassen (2,664 GPUs), #22 DGX SuperPOD (1,536 GPUs)

Trends in Modern Large-scale Dense-GPU Systems
• Scale-up (up to 150 GB/s)
  – PCIe, NVLink/NVSwitch
  – Infinity Fabric, Gen-Z, CXL
• Scale-out (up to 25 GB/s)
  – InfiniBand, Omni-path, Ethernet
  – Cray Slingshot

GPU-enabled HPC Applications
• Lattice Quantum Chromodynamics
• Weather Simulation
• Wave propagation simulation

Fuhrer O, Osuna C, Lapillonne X, Gysi T, Bianco M, Schulthess T. Towards GPU-accelerated operational weather

Dell EMC PowerEdge C4140 Technical Guide

Dell EMC PowerEdge C4140 Technical Guide

Regulatory Model: E53S Series
Regulatory Type: E53S001

Notes, cautions, and warnings

NOTE: A NOTE indicates important information that helps you make better use of your product.
CAUTION: A CAUTION indicates either potential damage to hardware or loss of data and tells you how to avoid the problem.
WARNING: A WARNING indicates a potential for property damage, personal injury, or death.

© 2017 - 2019 Dell Inc. or its subsidiaries. All rights reserved. Dell, EMC, and other trademarks are trademarks of Dell Inc. or its subsidiaries. Other trademarks may be trademarks of their respective owners.

2019 - 09 Rev. A00

Contents

1 System overview
   Introduction
   New technologies
2 System features
   Specifications
   Product comparison

HPE Apollo 6500 Gen10 System Overview

QuickSpecs: HPE Apollo 6500 Gen10 System

Overview

The ability of computers to autonomously learn, predict, and adapt using massive datasets is driving innovation and competitive advantage across many industries and applications. The HPE Apollo 6500 Gen10 System is an ideal HPC and Deep Learning platform providing unprecedented performance with industry leading GPUs, fast GPU interconnect, high bandwidth fabric and a configurable GPU topology to match your workloads. The system with rock-solid RAS features (reliable, available, secure) includes up to eight high power GPUs per server tray (node), NVLink 2.0 for fast GPU-to-GPU communication, Intel® Xeon® Scalable Processors support, choice of up to four high-speed / low latency fabric adapters, and the ability to optimize your configurations to match your workload and choice of GPU. And while the HPE Apollo 6500 Gen10 System is ideal for deep learning workloads, the system is suitable for complex high performance computing workloads such as simulation and modeling.

Eight GPUs per server for faster and more economical deep learning system training compared to more servers with fewer GPUs each. Keep your researchers productive as they iterate on a model more rapidly for a better solution, in less time. Now available with NVLink 2.0 to connect GPUs at up to 300 GB/s for the world’s most powerful computing servers. HPC and AI models that would consume days or weeks can now be trained in a few hours or minutes.

Front View, with two HPE DL38X Gen10 Premium 6 SFF SAS/SATA + 2 NVMe drive cages shown

Enabling VDI for Engineers and Designers Positioning Information

Enabling VDI for Engineers and Designers Positioning Information The ability to work remotely has been a critical business need for many years. But with the recent pandemic, the focus has shifted from supporting the occasional business trip or conference attendee, to supporting all non-essential employees. Virtual Desktop Infrastructure (VDI) provides a robust solution for businesses, allowing their employees to access business data on any mobile device – be it a laptop, tablet, or smartphone – without risk of losing that business data if the device is lost or stolen. With VDI the data resides in the data center, behind company firewalls. VDI allows companies to implement flexible work schedules, where employees have the freedom to pick up the kids from school and log on later in the evening to finish up. VDI also provides for centralized management of security fixes and application upgrades, ensuring all workers are using the latest version of applications. Although a great solution for your typical office workers, the benefits of VDI have largely been unavailable to another set of employees. Power users – engineers and designers using 3D or other graphics visualization applications – have generally been left out of these flexible arrangements. The graphics visualization applications they use are processor intensive, and the files they create and modify can be very large. The workstations that run the applications and the high-resolution monitors that display the designs are heavy and thus difficult to move. In order to have decent response times, power users have needed to be connected to a high-speed local area network. Work has been underway for several years to expand the benefits of VDI to the power user, and today solutions exist to support the majority of visualization applications.