Interpolation.Pdf

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

10 7 General Vector Spaces Proposition & Definition 7.14

7 General vector spaces

Proposition & Definition 7.14 (Monomial basis of $\mathcal{P}_n(\mathbb{R})$). Let $n \in \mathbb{N}_0$. The particular polynomials $m_0, m_1, \ldots, m_n \in \mathcal{P}_n(\mathbb{R})$ defined by

$$m_0(x) = 1,\quad m_1(x) = x,\quad \ldots,\quad m_{n-1}(x) = x^{n-1},\quad m_n(x) = x^n \qquad \text{for all } x \in \mathbb{R}$$

are called monomials. The family $\mathcal{B} = (m_0, m_1, \ldots, m_n)$ forms a basis of $\mathcal{P}_n(\mathbb{R})$ and is called the monomial basis. Hence $\dim(\mathcal{P}_n(\mathbb{R})) = n + 1$.

Corollary 7.15 (The method of equating the coefficients). Let $p$ and $q$ be two real polynomials with degree $n \in \mathbb{N}$, which means

$$p(x) = a_n x^n + \ldots + a_1 x + a_0 \quad\text{and}\quad q(x) = b_n x^n + \ldots + b_1 x + b_0$$

for some coefficients $a_n, \ldots, a_1, a_0, b_n, \ldots, b_1, b_0 \in \mathbb{R}$. If we have the equality $p = q$, which means

$$a_n x^n + \ldots + a_1 x + a_0 = b_n x^n + \ldots + b_1 x + b_0 \qquad (7.3)$$

for all $x \in \mathbb{R}$, then we can conclude $a_n = b_n, \ldots, a_1 = b_1$ and $a_0 = b_0$.

Remark: Since $\dim(\mathcal{P}_n(\mathbb{R})) = n + 1$ and we have the inclusions

$$\mathcal{P}_0(\mathbb{R}) \subset \mathcal{P}_1(\mathbb{R}) \subset \mathcal{P}_2(\mathbb{R}) \subset \cdots \subset \mathcal{P}(\mathbb{R}) \subset \mathcal{F}(\mathbb{R}),$$

we conclude that $\dim(\mathcal{P}(\mathbb{R}))$ and $\dim(\mathcal{F}(\mathbb{R}))$ cannot be finite natural numbers. Symbolically, we write $\dim(\mathcal{P}(\mathbb{R})) = \infty$ in such a case.

7.4 Coordinates with respect to a basis

7.4.1 Basis implies coordinates

Again, we deal with the case $\mathbb{F} = \mathbb{R}$ and $\mathbb{F} = \mathbb{C}$ simultaneously. Therefore, let $V$ be an $\mathbb{F}$-vector space with the two operations + and . -

On Multivariate Interpolation

On Multivariate Interpolation

Peter J. Olver†, School of Mathematics, University of Minnesota, Minneapolis, MN 55455, U.S.A. [email protected] http://www.math.umn.edu/∼olver

Abstract. A new approach to interpolation theory for functions of several variables is proposed. We develop a multivariate divided difference calculus based on the theory of non-commutative quasi-determinants. In addition, intriguing explicit formulae that connect the classical finite difference interpolation coefficients for univariate curves with multivariate interpolation coefficients for higher dimensional submanifolds are established.

† Supported in part by NSF Grant DMS 11–08894. April 6, 2016

1. Introduction.

Interpolation theory for functions of a single variable has a long and distinguished history, dating back to Newton’s fundamental interpolation formula and the classical calculus of finite differences, [7, 47, 58, 64]. Standard numerical approximations to derivatives and many numerical integration methods for differential equations are based on the finite difference calculus. However, historically, no comparable calculus was developed for functions of more than one variable. If one looks up multivariate interpolation in the classical books, one is essentially restricted to rectangular, or, slightly more generally, separable grids, over which the formulae are a simple adaptation of the univariate divided difference calculus. See [19] for historical details.

Starting with G. Birkhoff, [2] (who was, coincidentally, my thesis advisor), recent years have seen a renewed level of interest in multivariate interpolation among both pure and applied researchers; see [18] for a fairly recent survey containing an extensive bibliography. De Boor and Ron, [8, 12, 13], and Sauer and Xu, [61, 10, 65], have systematically studied the polynomial case. -

Selecting a Monomial Basis for Sums of Squares Programming Over a Quotient Ring

Selecting a Monomial Basis for Sums of Squares Programming over a Quotient Ring

Frank Permenter¹ and Pablo A. Parrilo²

Abstract. In this paper we describe a method for choosing a “good” monomial basis for a sums of squares (SOS) program formulated over a quotient ring. It is known that the monomial basis need only include standard monomials with respect to a Groebner basis. We show that in many cases it is possible to use a reduced subset of standard monomials by combining Groebner basis techniques with the well-known Newton polytope reduction. This reduced subset of standard monomials yields a smaller semidefinite program for obtaining a certificate of non-negativity of a polynomial on an algebraic variety.

I. INTRODUCTION

Many practical engineering problems require demonstrating non-negativity of a multivariate polynomial over an algebraic variety, i.e. over the solution set of polynomial equations.

… for $\lambda_i(x)$ and $s(x)$, this feasibility problem is equivalent to an SDP. This follows because the sum of squares constraint is equivalent to a semidefinite constraint on the coefficients of $s(x)$, and the equality constraint is equivalent to linear equations on the coefficients of $s(x)$ and $\lambda_i(x)$.

Naturally, the complexity of this sums of squares program grows with the complexity of the underlying variety, as does the size of the corresponding SDP. It is therefore natural to explore how algebraic structure can be exploited to simplify this sums of squares program. Consider the following reformulation [10], which is feasible if and only if (2) is feasible: Find $s(x)$ -

1 Expressing Vectors in Coordinates

Math 416 - Abstract Linear Algebra, Fall 2011, section E1

Working in coordinates

In these notes, we explain the idea of working "in coordinates" or coordinate-free, and how the two are related.

1 Expressing vectors in coordinates

Let $V$ be an $n$-dimensional vector space. Recall that a choice of basis $\{v_1, \ldots, v_n\}$ of $V$ is the same data as an isomorphism $\varphi\colon V \simeq \mathbb{R}^n$, which sends the basis $\{v_1, \ldots, v_n\}$ of $V$ to the standard basis $\{e_1, \ldots, e_n\}$ of $\mathbb{R}^n$. In other words, we have

$$\varphi\colon V \xrightarrow{\ \simeq\ } \mathbb{R}^n, \qquad v_i \mapsto e_i, \qquad v = c_1 v_1 + \ldots + c_n v_n \mapsto \begin{bmatrix} c_1 \\ \vdots \\ c_n \end{bmatrix}.$$

This allows us to manipulate abstract vectors $v = c_1 v_1 + \ldots + c_n v_n$ simply as lists of numbers, the coordinate vectors $\begin{bmatrix} c_1 \\ \vdots \\ c_n \end{bmatrix} \in \mathbb{R}^n$ with respect to the basis $\{v_1, \ldots, v_n\}$. Note that the coordinates of $v \in V$ depend on the choice of basis.

Notation: Write $[v]_{\{v_i\}} := \begin{bmatrix} c_1 \\ \vdots \\ c_n \end{bmatrix} \in \mathbb{R}^n$ for the coordinates of $v \in V$ with respect to the basis $\{v_1, \ldots, v_n\}$. For shorthand notation, let us name the basis $A := \{v_1, \ldots, v_n\}$ and then write $[v]_A$ for the coordinates of $v$ with respect to the basis $A$.

Example: Using the monomial basis $\{1, x, x^2\}$ of $P_2 = \{a_0 + a_1 x + a_2 x^2 \mid a_i \in \mathbb{R}\}$, we obtain an isomorphism

$$\varphi\colon P_2 \xrightarrow{\ \simeq\ } \mathbb{R}^3, \qquad a_0 + a_1 x + a_2 x^2 \mapsto \begin{bmatrix} a_0 \\ a_1 \\ a_2 \end{bmatrix}.$$

In the notation above, we have $[a_0 + a_1 x + a_2 x^2]_{\{x^i\}} = \begin{bmatrix} a_0 \\ a_1 \\ a_2 \end{bmatrix}$. -
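The dependence of coordinates on the chosen basis can be made concrete with a small sketch. The following Python snippet is only an illustration and not part of the Math 416 notes: the function name `coordinates`, the coefficient-list representation of polynomials, and the second basis $\{1,\ 1 + x,\ 1 + x + x^2\}$ are all assumptions made for the example.

```python
from fractions import Fraction

def coordinates(basis, p):
    """Coordinates of the polynomial p with respect to `basis` (hypothetical helper).

    Polynomials are coefficient lists [a0, a1, ..., a_{n-1}] in the monomial
    basis. Solves sum_j c_j * basis[j] = p exactly by Gauss-Jordan elimination.
    Raises StopIteration if `basis` is not actually a basis.
    """
    n = len(p)
    # Augmented matrix: column j holds the monomial coefficients of basis[j].
    rows = [[Fraction(basis[j][i]) for j in range(n)] + [Fraction(p[i])]
            for i in range(n)]
    for col in range(n):
        # Find a row with a nonzero entry in this column and move it up.
        pivot = next(r for r in range(col, n) if rows[r][col] != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        # Scale the pivot row so the pivot entry becomes 1.
        rows[col] = [v / rows[col][col] for v in rows[col]]
        # Eliminate the column from every other row.
        for r in range(n):
            if r != col and rows[r][col] != 0:
                factor = rows[r][col]
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[col])]
    return [rows[i][n] for i in range(n)]

p = [2, 3, 5]                                   # 2 + 3x + 5x^2
monomial = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]    # {1, x, x^2}
other = [[1, 0, 0], [1, 1, 0], [1, 1, 1]]       # {1, 1 + x, 1 + x + x^2}

print(coordinates(monomial, p))  # coordinates equal the coefficients a0, a1, a2
print(coordinates(other, p))     # same polynomial, different coordinate vector
```

With respect to the monomial basis the coordinate vector is just the coefficient list, while the second basis gives a different vector for the same polynomial.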

Tight Monomials and the Monomial Basis Property

TIGHT MONOMIALS AND MONOMIAL BASIS PROPERTY

BANGMING DENG AND JIE DU

Abstract. We generalize a criterion for tight monomials of quantum enveloping algebras associated with symmetric generalized Cartan matrices and a monomial basis property of those associated with symmetric (classical) Cartan matrices to their respective symmetrizable case. We then link the two by establishing that a tight monomial is necessarily a monomial defined by a weakly distinguished word. As an application, we develop an algorithm to compute all tight monomials in the rank 2 Dynkin case.

The existence of Hall polynomials for Dynkin or cyclic quivers not only gives rise to a simple realization of the ±-part of the corresponding quantum enveloping algebras, but also results in interesting applications. For example, by specializing $q$ to 0, degenerate quantum enveloping algebras have been investigated in the context of generic extensions ([20], [8]), while through a certain non-triviality property of Hall polynomials, the authors [4, 5] have established a monomial basis property for quantum enveloping algebras associated with Dynkin and cyclic quivers. The monomial basis property describes a systematic construction of many monomial/integral monomial bases some of which have already been studied in the context of elementary algebraic constructions of canonical bases; see, e.g., [15, 27, 21, 5] in the simply-laced Dynkin case and [3, 18], [9, Ch. 11] in general. This property in the cyclic quiver case has also been used in [10] to obtain an elementary construction of PBW-type bases, and hence, of canonical bases for quantum affine $\mathfrak{sl}_n$. In this paper, we will complete this program by proving this property for all finite types. -

Polynomial Interpolation CPSC 303: Numerical Approximation and Discretization

Lecture Notes 2: Polynomial Interpolation
CPSC 303: Numerical Approximation and Discretization
Ian M. Mitchell [email protected] http://www.cs.ubc.ca/~mitchell
University of British Columbia, Department of Computer Science
Winter Term Two 2012–2013

Copyright 2012–2013 by Ian M. Mitchell. This work is made available under the terms of the Creative Commons Attribution 2.5 Canada license http://creativecommons.org/licenses/by/2.5/ca/

Outline
• Background
• Problem statement and motivation
• Formulation: The linear system and its conditioning
• Polynomial bases
• Monomial
• Lagrange
• Newton
• Uniqueness of polynomial interpolant
• Divided differences
• Divided difference tables and the Newton basis interpolant
• Divided difference connection to derivatives
• Osculating interpolation: interpolating derivatives
• Error analysis for polynomial interpolation
• Reducing the error using the Chebyshev points as abscissae

Interpolation Motivation

We are given a collection of data samples $\{(x_i, y_i)\}_{i=0}^{n}$
• The $\{x_i\}_{i=0}^{n}$ are called the abscissae (singular: abscissa), the $\{y_i\}_{i=0}^{n}$ are called the data values
• Want to find a function $p(x)$ which can be used to estimate $y(x)$ for $x \neq x_i$
• Why? We often get discrete data from sensors or computation, but we want information as if the function were not discretely sampled
• If possible, $p(x)$ should be inexpensive to evaluate for a given $x$

Interpolation Formulation

There are lots of ways to define a function $p(x)$ to approximate $\{(x_i, y_i)\}_{i=0}^{n}$
• Interpolation means $p(x_i) = y_i$ (and we will only evaluate $p(x)$ for $\min_i x_i \le x \le \max_i x_i$)
• Most interpolation (and even general data fitting) is done with a linear combination of (usually nonlinear) basis functions $\{\phi_j(x)\}$

$$p(x) = p_n(x) = \sum_{j=0}^{n} c_j \phi_j(x)$$

where $c_j$ are the interpolation coefficients or interpolation weights -
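As a hedged illustration of the formulation $p(x) = \sum_j c_j \phi_j(x)$ with $p(x_i) = y_i$, the sketch below uses the Lagrange basis, one of the bases named in the outline. In that basis $\phi_j(x_i)$ is 1 when $i = j$ and 0 otherwise, so the coefficients are simply $c_j = y_j$. The function names `lagrange_basis` and `interpolate` and the sample data are assumptions for this example; they do not come from the lecture notes.

```python
from fractions import Fraction

def lagrange_basis(xs, j, x):
    """Evaluate the j-th Lagrange basis polynomial for the abscissae xs at x."""
    value = Fraction(1)
    for k, xk in enumerate(xs):
        if k != j:
            # Factor (x - x_k) / (x_j - x_k); requires distinct abscissae.
            value *= Fraction(x - xk) / Fraction(xs[j] - xk)
    return value

def interpolate(xs, ys, x):
    """Evaluate p(x) = sum_j c_j * phi_j(x) with c_j = y_j (Lagrange basis)."""
    return sum(Fraction(ys[j]) * lagrange_basis(xs, j, x) for j in range(len(xs)))

# Hypothetical data sampled from x^2 + x + 1.
xs = [0, 1, 2]
ys = [1, 3, 7]

# The interpolation conditions p(x_i) = y_i hold at every abscissa.
print([interpolate(xs, ys, x) for x in xs])
# Evaluation between the abscissae, inside [min x_i, max x_i].
print(interpolate(xs, ys, Fraction(3, 2)))
```

Exact rational arithmetic is used here only so the interpolation conditions can be checked without rounding; a floating-point version would follow the same structure.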

Arxiv:1805.04488V5 [Math.NA]

GENERALIZED STANDARD TRIPLES FOR ALGEBRAIC LINEARIZATIONS OF MATRIX POLYNOMIALS∗

EUNICE Y. S. CHAN†, ROBERT M. CORLESS‡, AND LEILI RAFIEE SEVYERI§

Abstract. We define generalized standard triples $X$, $Y$, and $L(z) = zC_1 - C_0$, where $L(z)$ is a linearization of a regular matrix polynomial $P(z) \in \mathbb{C}^{n \times n}[z]$, in order to use the representation $X(zC_1 - C_0)^{-1}Y = P^{-1}(z)$ which holds except when $z$ is an eigenvalue of $P$. This representation can be used in constructing so-called algebraic linearizations for matrix polynomials of the form $H(z) = zA(z)B(z) + C \in \mathbb{C}^{n \times n}[z]$ from generalized standard triples of $A(z)$ and $B(z)$. This can be done even if $A(z)$ and $B(z)$ are expressed in differing polynomial bases. Our main theorem is that $X$ can be expressed using the coefficients of the expression $1 = \sum_{k=0}^{\ell} e_k \phi_k(z)$ in terms of the relevant polynomial basis. For convenience, we tabulate generalized standard triples for orthogonal polynomial bases, the monomial basis, and Newton interpolational bases; for the Bernstein basis; for Lagrange interpolational bases; and for Hermite interpolational bases. We account for the possibility of common similarity transformations.

Key words. Standard triple, regular matrix polynomial, polynomial bases, companion matrix, colleague matrix, comrade matrix, algebraic linearization, linearization of matrix polynomials.

AMS subject classifications. 65F15, 15A22, 65D05

1. Introduction. A matrix polynomial $P(z) \in \mathbb{F}^{m \times n}[z]$ is a polynomial in the variable $z$ with coefficients that are $m$ by $n$ matrices with entries from the field $\mathbb{F}$. We will use $\mathbb{F} = \mathbb{C}$, the field of complex numbers, in this paper. -

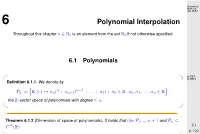

Polynomial Interpolation

Numerical Methods 401-0654

6 Polynomial Interpolation

Throughout this chapter $n \in \mathbb{N}_0$ is an element from the set $\mathbb{N}_0$ if not otherwise specified.

6.1 Polynomials

Definition 6.1.1. We denote by

$$\mathcal{P}_n := \{\, \mathbb{R} \ni t \mapsto \alpha_n t^n + \alpha_{n-1} t^{n-1} + \ldots + \alpha_1 t + \alpha_0 \in \mathbb{R} : \alpha_0, \alpha_1, \ldots, \alpha_n \in \mathbb{R} \,\}$$

the $\mathbb{R}$-vector space of polynomials with degree $\le n$.

Theorem 6.1.2 (Dimension of space of polynomials). It holds that $\dim \mathcal{P}_n = n + 1$ and $\mathcal{P}_n \subset C^{\infty}(\mathbb{R})$.

Remark 6.1.1 (Polynomials in Matlab). MATLAB: $\alpha_n t^n + \alpha_{n-1} t^{n-1} + \ldots + \alpha_0$ ➙ Vector $(\alpha_n, \alpha_{n-1}, \ldots, \alpha_0)$ (ordered!).

Remark 6.1.2 (Horner scheme). Evaluation of a polynomial in monomial representation: Horner scheme

$$p(t) = t\bigl(\cdots t\bigl(t(\alpha_n t + \alpha_{n-1}) + \alpha_{n-2}\bigr) + \cdots + \alpha_1\bigr) + \alpha_0 .$$

Code 6.1: Horner scheme, polynomial in MATLAB format

```matlab
function y = polyval(p,x)
% Horner scheme; p holds the coefficients from highest to lowest degree
y = p(1); for i=2:length(p), y = x*y+p(i); end
```

Asymptotic complexity: $O(n)$

Use: MATLAB “built-in” function polyval(p,x);

6.2 Polynomial Interpolation: Theory

Goal: (re-)construction of a polynomial (function) from pairs of values (fit).

Lagrange polynomial interpolation problem: Given the nodes $-\infty < t_0 < t_1 < \cdots < t_n < \infty$ and the values $y_0, \ldots, y_n \in \mathbb{R}$ compute $p \in \mathcal{P}_n$ such that

$$\forall j \in \{0, 1, \ldots, n\}: \quad p(t_j) = y_j .$$

6.2.1 Lagrange polynomials

Definition 6.2.1. -
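The Horner scheme of Remark 6.1.2 can also be sketched in Python. This is a minimal illustration and not part of the lecture notes; the function name `horner` is an assumption, and the coefficient ordering (highest degree first) follows the MATLAB convention of Remark 6.1.1.

```python
def horner(coeffs, t):
    """Evaluate a polynomial at t with the Horner scheme.

    coeffs lists the coefficients from highest to lowest degree,
    i.e. (alpha_n, alpha_{n-1}, ..., alpha_0); must be non-empty.
    Uses n multiplications and n additions, so the cost is O(n).
    """
    y = coeffs[0]
    for alpha in coeffs[1:]:
        # One nesting level of t * (...) + alpha_k per coefficient.
        y = t * y + alpha
    return y

# 2 t^2 - 3 t + 1 evaluated at t = 3.
print(horner([2, -3, 1], 3))
```

Each loop iteration corresponds to one level of nesting in the formula for $p(t)$, which is where the linear operation count comes from.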

An Algebraic Approach to Harmonic Polynomials on S3

AN ALGEBRAIC APPROACH TO HARMONIC POLYNOMIALS ON $S^3$

Kevin Mandira Limanta

A thesis in fulfillment of the requirements for the degree of Master of Science (by Research)

SCHOOL OF MATHEMATICS AND STATISTICS, FACULTY OF SCIENCE, UNIVERSITY OF NEW SOUTH WALES

June 2017

ORIGINALITY STATEMENT

'I hereby declare that this submission is my own work and to the best of my knowledge it contains no materials previously published or written by another person, or substantial proportions of material which have been accepted for the award of any other degree or diploma at UNSW or any other educational institution, except where due acknowledgement is made in the thesis. Any contribution made to the research by others, with whom I have worked at UNSW or elsewhere, is explicitly acknowledged in the thesis. I also declare that the intellectual content of this thesis is the product of my own work, except to the extent that assistance from others in the project's design and conception or in style, presentation and linguistic expression is acknowledged.'

Signed .... Date

Show me your ways, Lord, teach me your paths. Guide me in your truth and teach me, for you are God my Savior, and my hope is in you all day long. (Psalm 25:4-5)

This is dedicated for you, Papa.

Acknowledgement

This thesis is the result of my two years of research in the School of Mathematics and Statistics, University of New South Wales. I learned quite a number of life lessons throughout my entire study here, for which I am very grateful. -

Sparse Polynomial Interpolation and Testing

Sparse Polynomial Interpolation and Testing

by Andrew Arnold

A thesis presented to the University of Waterloo in fulfillment of the thesis requirement for the degree of Doctor of Philosophy in Computer Science

Waterloo, Ontario, Canada, 2016

© Andrew Arnold 2016

I hereby declare that I am the sole author of this thesis. This is a true copy of the thesis, including any required final revisions, as accepted by my examiners. I understand that my thesis may be made electronically available to the public.

Abstract

Interpolation is the process of learning an unknown polynomial $f$ from some set of its evaluations. We consider the interpolation of a sparse polynomial, i.e., where $f$ is comprised of a small, bounded number of terms. Sparse interpolation dates back to work in the late 18th century by the French mathematician Gaspard de Prony, and was revitalized in the 1980s due to advancements by Ben-Or and Tiwari, Blahut, and Zippel, amongst others. Sparse interpolation has applications to learning theory, signal processing, error-correcting codes, and symbolic computation. Closely related to sparse interpolation are two decision problems. Sparse polynomial identity testing is the problem of testing whether a sparse polynomial $f$ is zero from its evaluations. Sparsity testing is the problem of testing whether $f$ is in fact sparse.

We present effective probabilistic algebraic algorithms for the interpolation and testing of sparse polynomials. These algorithms assume black-box evaluation access, whereby the algorithm may specify the evaluation points. We measure algorithmic costs with respect to the number and types of queries to a black-box oracle. -

Sparse Polynomial Interpolation Codes and Their Decoding Beyond Half the Minimum Distance *

Sparse Polynomial Interpolation Codes and their Decoding Beyond Half the Minimum Distance *

Erich L. Kaltofen, Dept. of Mathematics, NCSU, Raleigh, NC 27695, USA. [email protected] www4.ncsu.edu/~kaltofen

Clément Pernet, U. J. Fourier, LIP-AriC, CNRS, Inria, UCBL, ÉNS de Lyon, 46 Allée d’Italie, 69364 Lyon Cedex 7, France. [email protected] http://membres-liglab.imag.fr/pernet/

ABSTRACT

We present algorithms performing sparse univariate polynomial interpolation with errors in the evaluations of the polynomial. Based on the initial work by Comer, Kaltofen and Pernet [Proc. ISSAC 2012], we define the sparse polynomial interpolation codes and state that their minimal distance is precisely the code-word length divided by twice the sparsity. At ISSAC 2012, we have given a decoding algorithm for as much as half the minimal distance and a list decoding algorithm up to the minimal distance.

Our new polynomial-time list decoding algorithm uses sub-sequences of the received evaluations indexed by an arithmetic progression, allowing the decoding for a larger radius, that is, more errors in the evaluations …

General Terms: Algorithms, Reliability

Keywords: sparse polynomial interpolation, Blahut's algorithm, Prony's algorithm, exact polynomial fitting with errors.

1. INTRODUCTION

Evaluation-interpolation schemes are a key ingredient in many of today's computations. Model fitting for empirical data sets is a well-known one, where additional information on the model helps improving the fit. In particular, models of natural phenomena often happen to be sparse, which has motivated a wide range of research including compressive sensing [4], and sparse interpolation of polynomials [23, 1, 15, 13, 9, 11]. -

Interpolation Polynomial Interpolation Piecewise Polynomial Interpolation Outline

Outline
1 Interpolation
2 Polynomial Interpolation
3 Piecewise Polynomial Interpolation

Michael T. Heath, Scientific Computing

Chapter 7: Interpolation
❑ Topics:
❑ Examples
❑ Polynomial Interpolation – bases, error, Chebyshev, piecewise
❑ Orthogonal Polynomials
❑ Splines – error, end conditions
❑ Parametric interpolation
❑ Multivariate interpolation: f(x,y)

Interpolation

Basic interpolation problem: for given data $(t_1, y_1), (t_2, y_2), \ldots, (t_m, y_m)$ with $t_1 < t_2 < \cdots < t_m$, determine function $f\colon \mathbb{R} \to \mathbb{R}$ such that

$$f(t_i) = y_i, \qquad i = 1, \ldots, m$$

• $f$ is interpolating function, or interpolant, for given data
• Additional data might be prescribed, such as slope of interpolant at given points
• Additional constraints might be imposed, such as smoothness, monotonicity, or convexity of interpolant
• $f$ could be function of more than one variable, but we will consider only one-dimensional case

Purposes for Interpolation

• Plotting smooth curve through discrete data points
• Reading between lines of table
• Differentiating or integrating tabular data
• Quick and easy evaluation of mathematical function
• Replacing complicated function by simple one

Interpolation vs Approximation

• By definition, interpolating function fits given data points exactly
• Interpolation is inappropriate if data points subject to significant errors
• It is usually preferable to smooth noisy data, for example by least squares approximation
• Approximation is also more appropriate for special function libraries
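The contrast between interpolation and least squares approximation can be sketched with a small Python example. This is an illustration under stated assumptions, not material from the slides: the piecewise linear interpolant stands in for "an interpolant", the straight-line fit stands in for "least squares approximation", and the function names and the noisy sample data are hypothetical.

```python
from bisect import bisect_right

def piecewise_linear(ts, ys, t):
    """Interpolant through all (ts[i], ys[i]); ts must be strictly increasing."""
    if not ts[0] <= t <= ts[-1]:
        raise ValueError("t lies outside the data range")
    # Index of the right endpoint of the interval that contains t.
    i = min(bisect_right(ts, t), len(ts) - 1)
    w = (t - ts[i - 1]) / (ts[i] - ts[i - 1])
    return (1 - w) * ys[i - 1] + w * ys[i]

def least_squares_line(ts, ys):
    """Slope and intercept of the straight line minimizing the squared residuals."""
    n = len(ts)
    t_mean = sum(ts) / n
    y_mean = sum(ys) / n
    slope = (sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, ys))
             / sum((t - t_mean) ** 2 for t in ts))
    return slope, y_mean - slope * t_mean

# Hypothetical noisy samples of a roughly linear relationship.
ts = [0.0, 1.0, 2.0, 3.0]
ys = [0.1, 0.9, 2.2, 2.8]

slope, intercept = least_squares_line(ts, ys)
for t, y in zip(ts, ys):
    # The interpolant reproduces each data value; the fitted line generally does not.
    print(t, y, piecewise_linear(ts, ys, t), slope * t + intercept)
```

The interpolant follows every wiggle in the data, including the noise, whereas the fitted line smooths it out, which is the trade-off the slide describes.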