Determinantal Beam Search

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

4 Beyond Classical Search

4 BEYOND CLASSICAL SEARCH In which we relax the simplifying assumptions of the previous chapter, thereby getting closer to the real world. Chapter 3 addressed a single category of problems: observable, deterministic, known environments where the solution is a sequence of actions. In this chapter, we look at what happens when these assumptions are relaxed. We begin with a fairly simple case: Sections 4.1 and 4.2 cover algorithms that perform purely local search in the state space, evaluating and modifying one or more current states rather than systematically exploring paths from an initial state. These algorithms are suitable for problems in which all that matters is the solution state, not the path cost to reach it. The family of local search algorithms includes methods inspired by statistical physics (simulated annealing) and evolutionary biology (genetic algorithms). Then, in Sections 4.3–4.4, we examine what happens when we relax the assumptions of determinism and observability. The key idea is that if an agent cannot predict exactly what percept it will receive, then it will need to consider what to do under each contingency that its percepts may reveal. With partial observability, the agent will also need to keep track of the states it might be in. Finally, Section 4.5 investigates online search, in which the agent is faced with a state space that is initially unknown and must be explored. 4.1 LOCAL SEARCH ALGORITHMS AND OPTIMIZATION PROBLEMS The search algorithms that we have seen so far are designed to explore search spaces systematically. This systematicity is achieved by keeping one or more paths in memory and by recording which alternatives have been explored at each point along the path.

A. Local Beam Search with K=1. A. Local Beam Search with K = 1 Is Hill-Climbing Search

4. Give the name that results from each of the following special cases:

a. Local beam search with k = 1. Local beam search with k = 1 is hill-climbing search.

b. Local beam search with one initial state and no limit on the number of states retained. Local beam search with k = ∞: strictly speaking, this doesn’t make sense. The idea is that if every successor is retained (because k is unbounded), then the search resembles breadth-first search in that it adds one complete layer of nodes before adding the next layer. Starting from one state, the algorithm would be essentially identical to breadth-first search except that each layer is generated all at once.

c. Simulated annealing with T = 0 at all times (and omitting the termination test). Ignoring the fact that the termination step would be triggered immediately, the search would be identical to first-choice hill climbing because every downward successor would be rejected with probability 1.

d. Simulated annealing with T = infinity at all times. Ignoring the fact that the termination step would never be triggered, the search would be identical to a random walk because every successor would be accepted with probability 1. Note that, in this case, a random walk is approximately equivalent to depth-first search.

e. Genetic algorithm with population size N = 1. If the population size is 1, then the two selected parents will be the same individual; crossover yields an exact copy of the individual; then there is a small chance of mutation.
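Answers c and d follow from the acceptance rule of simulated annealing. The sketch below assumes the usual textbook rule (an uphill move is always accepted; a downhill move with value change ΔE < 0 is accepted with probability e^(ΔE/T)). The function name `acceptance_probability` is hypothetical, and treating T = 0 as "reject every non-improving move" is an assumption that stands in for the limit T → 0.

```python
import math

def acceptance_probability(delta_e, temperature):
    """Probability of accepting a move whose value change is delta_e."""
    if delta_e > 0:
        # Uphill moves are always accepted.
        return 1.0
    if temperature == 0:
        # Assumed limit T -> 0: non-improving moves are never accepted,
        # which is the behaviour described in answer c.
        return 0.0
    # Downhill (or sideways) move: accept with probability e^(delta_e / T).
    # With T = infinity the exponent is 0, so the probability is 1 (answer d).
    return math.exp(delta_e / temperature)

print(acceptance_probability(-1.0, 0))         # downhill move at T = 0
print(acceptance_probability(-1.0, math.inf))  # downhill move at T = infinity
```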

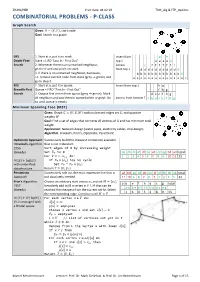

COMBINATORIAL PROBLEMS - P-CLASS Graph Search Given: G = (V, E), Start Node Goal: Search in a Graph

ZHAW/HSR Print date: 04.02.19 TSM_Alg & FTP_Optimiz COMBINATORIAL PROBLEMS - P-CLASS

Graph Search. Given: G = (V, E), start node. Goal: Search in a graph.

DFS (Depth-First Search). Stack = LIFO ("Last In - First Out"): insert from top, access from top.
1. Start at a, put it on the stack.
2. Whenever there is an unmarked neighbour, go there and put it on the stack.
3. If there is no unmarked neighbour, backtrack; i.e. remove the current node from the stack (grey ⇒ green) and go to step 2.
[Figure: stack contents after each step of the DFS]

BFS (Breadth-First Search). Queue = FIFO ("First In - First Out"): insert from top, access from bottom.
1. Start at a, put it in the queue.
2. Output the first vertex from the queue (grey ⇒ green). Mark all neighbours and put them in the queue (white ⇒ grey). Do so until the queue is empty.
[Figure: queue contents after each step of the BFS]

Minimum Spanning Tree (MST). Given: Graph G = (V, E, W) with undirected edge set E and positive weights W. Goal: Find a set of edges that connects all vertices of G and has minimum total weight. Application: Network design (water pipes, electricity cables, chip design). Algorithm: Kruskal's, Prim's, Optimistic, Pessimistic. Optimistic approach (= Kruskal's algorithm): successively build the cheapest connection available that is not redundant.
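The DFS and BFS steps in this excerpt can be sketched in Python as follows. This is an illustration only: the four-node `graph` and the function names `dfs_order` and `bfs_order` are made up here and do not come from the original handout.

```python
from collections import deque

def dfs_order(graph, start):
    """Depth-first search with an explicit LIFO stack."""
    marked = {start}
    order = [start]
    stack = [start]                      # step 1: put the start node on the stack
    while stack:
        node = stack[-1]                 # access from the top
        unmarked = next((n for n in graph[node] if n not in marked), None)
        if unmarked is None:
            stack.pop()                  # step 3: backtrack
        else:
            marked.add(unmarked)         # step 2: go to an unmarked neighbour
            order.append(unmarked)
            stack.append(unmarked)
    return order

def bfs_order(graph, start):
    """Breadth-first search with a FIFO queue."""
    marked = {start}
    order = []
    queue = deque([start])               # step 1: put the start node in the queue
    while queue:
        node = queue.popleft()           # step 2: output the first vertex
        order.append(node)
        for n in graph[node]:
            if n not in marked:          # mark unmarked neighbours and enqueue them
                marked.add(n)
                queue.append(n)
    return order

# Hypothetical undirected graph given as adjacency lists.
graph = {"a": ["b", "c"], "b": ["a", "d"], "c": ["a", "d"], "d": ["b", "c"]}
print(dfs_order(graph, "a"))  # ['a', 'b', 'd', 'c']
print(bfs_order(graph, "a"))  # ['a', 'b', 'c', 'd']
```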

Beam Search for Integer Multi-Objective Optimization

Beam Search for integer multi-objective optimization Thibaut Barthelemy Sophie N. Parragh Fabien Tricoire Richard F. Hartl University of Vienna, Department of Business Administration, Austria {thibaut.barthelemy,sophie.parragh,fabien.tricoire,richard.hartl}@univie.ac.at Abstract Beam search is a tree search procedure where, at each level of the tree, at most w nodes are kept. This results in a metaheuristic whose solving time is polynomial in w. Popular for single-objective problems, beam search has only received little attention in the context of multi-objective optimization. By introducing the concepts of oracle and filter, we define a paradigm to understand multi-objective beam search algorithms. Its theoretical analysis engenders practical guidelines for the design of these algorithms. The guidelines, suitable for any problem whose variables are integers, are applied to address a bi-objective 0-1 knapsack problem. The solver obtained outperforms the existing non-exact methods from the literature. 1 Introduction Everyday life decisions are often a matter of multi-objective optimization. Indeed, life is full of tradeoffs, as reflected for instance by discussions in parliaments or boards of directors. As a result, almost all cost-oriented problems of the classical literature give also rise to quality, durability, social or ecological concerns. Such goals are often conflicting. For instance, lower costs may lead to lower quality and vice versa. A generic approach to multi-objective optimization consists in computing a set of several good compromise solutions that decision makers discuss in order to choose one. Numerous methods have been developed to find the set of compromise solutions to multi-objective combinatorial problems.
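To make "at most w nodes are kept at each level" concrete, here is a minimal single-objective sketch on a 0-1 knapsack. It is not the paper's multi-objective oracle/filter method. The function name `beam_search_knapsack`, the ranking by accumulated value, and the three-item instance are all assumptions chosen for illustration.

```python
def beam_search_knapsack(values, weights, capacity, w):
    """Beam search over include/exclude decisions, keeping at most w nodes per level."""
    # A node is (total value, total weight, decisions made so far).
    beam = [(0, 0, ())]
    for v, wt in zip(values, weights):
        children = []
        for value, weight, picks in beam:
            children.append((value, weight, picks + (0,)))            # skip the item
            if weight + wt <= capacity:                                # take it if it fits
                children.append((value + v, weight + wt, picks + (1,)))
        # Rank children by accumulated value (an assumed, simple criterion)
        # and keep only the best w of them for the next level.
        children.sort(key=lambda node: node[0], reverse=True)
        beam = children[:w]
    return beam[0]

values, weights, capacity = [6, 10, 12], [1, 2, 3], 5
print(beam_search_knapsack(values, weights, capacity, w=1))  # (16, 3, (1, 1, 0))
print(beam_search_knapsack(values, weights, capacity, w=4))  # (22, 5, (0, 1, 1))
```

With w = 1 the search degenerates into a greedy pass and misses the optimum of 22; a wider beam recovers it at the cost of evaluating more nodes per level.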

IJIR Paper Template

International Journal of Research e-ISSN: 2348-6848 p-ISSN: 2348-795X Available at https://journals.pen2print.org/index.php/ijr/ Volume 06 Issue 10 September 2019 Study of Informed Searching Algorithm for Finding the Shortest Path Wint Aye Khaing1, Kyi Zar Nyunt2, Thida Win2, Ei Ei Moe3 1Faculty of Information Science, University of Computer Studies (Taungoo), Myanmar 2 Faculty of Information Science, University of Computer Studies (Taungoo), Myanmar 3 Faculty of Computer Science, University of Computer Studies (Taungoo), Myanmar [email protected], [email protected], [email protected], [email protected], [email protected], [email protected]

Abstract: While technological revolution has active role to the increase of computer information, growing computational capabilities of devices, and raise the level of knowledge abilities, and skills. Increase developments in science and technology. In city area traffic the shortest path finding is very difficult in a road network. Shortest path searching is very important in some special case as medical emergency, spying, theft catching, fire brigade etc. In this paper used the shortest path algorithms for solving the shortest path

frequently deal with this question when planning trips with their cars. There are also many applications like logistic planning or traffic simulation that need to solve a huge number of such route queries. Shortest path can be either inconvenient for the client if he has to wait for the response or expensive for the service provider if he has to make a lot of computing power available. While algorithm is a procedure or formula for solve problem. Algorithm usually means a small procedure that solves a recurrent problem. 2.

Job Shop Scheduling with Beam Search

View metadata, citation and similar papers at core.ac.uk brought to you by CORE provided by Bilkent University Institutional Repository European Journal of Operational Research 118 (1999) 390–412 www.elsevier.com/locate/orms Theory and Methodology Job shop scheduling with beam search I. Sabuncuoglu *, M. Bayiz Department of Industrial Engineering, Bilkent University, 06533 Ankara, Turkey Received 1 July 1997; accepted 1 August 1998 Abstract Beam Search is a heuristic method for solving optimization problems. It is an adaptation of the branch and bound method in which only some nodes are evaluated in the search tree. At any level, only the promising nodes are kept for further branching and remaining nodes are pruned off permanently. In this paper, we develop a beam search based scheduling algorithm for the job shop problem. Both the makespan and mean tardiness are used as the performance measures. The proposed algorithm is also compared with other well known search methods and dispatching rules for a wide variety of problems. The results indicate that the beam search technique is a very competitive and promising tool which deserves further research in the scheduling literature. © 1999 Elsevier Science B.V. All rights reserved. Keywords: Scheduling; Beam search; Job shop

1. Introduction Beam search is a heuristic method for solving optimization problems. It is an adaptation of the branch and bound method in which only some nodes are evaluated.

(Lowerre, 1976). There have been a number of applications reported in the literature since then. Fox (1983) used beam search for solving complex scheduling problems by a system called ISIS. Later, Ow and Morton (1988) studied the effects of

Improved Optimal and Approximate Power Graph Compression for Clearer Visualisation of Dense Graphs

Improved Optimal and Approximate Power Graph Compression for Clearer Visualisation of Dense Graphs Tim Dwyer∗ Christopher Mears† Kerri Morgan‡ Todd Niven§ Kim Marriott¶ Mark Wallace‖ Monash University, Australia

Figure 1: Three renderings of a network of dependencies between methods, properties and fields in a software system. (a) Flat graph with 30 nodes and 65 links. (b) Power Graph rendering computed using the heuristic of Royer et al. [12]: 7 modules, 36 links. (c) Power Graph computed using Beam Search (see 5.1) finds 15 modules to reduce the link count to 25. In the Power Graph renderings an edge between a node and a module implies the node is connected to every member of the module. An edge between two modules implies a bipartite clique. In this way the Power Graph shows the precise connectivity of the directed graph but with much less clutter.

ABSTRACT Drawings of highly connected (dense) graphs can be very difficult to read. Power Graph Analysis offers an alternate way to draw a graph in which sets of nodes with common neighbours are shown grouped into modules. An edge connected to the module then implies a connection to each member of the module. Thus, the entire graph may be represented with much less clutter and without loss

visualise in a way that individual links can still be followed. Such graphs occur frequently in nature as power-law or small-world networks. In practice, very dense graphs are often visualised in a way that focuses less on high-fidelity readability of edges and more on highlighting highly-connected nodes or clusters of nodes through techniques such as force-directed layout or abstraction determined by community detection [8].

Pruning State Spaces with Extended Beam Search

Pruning State Spaces with Extended Beam Search M. Torabi Dashti and A. J. Wijs CWI, Amsterdam {dashti, wijs}@cwi.nl Abstract. This paper focuses on using beam search, a heuristic search algorithm, for pruning state spaces while generating. The original beam search is adapted to the state space generation setting and two new search variants are devised. The resulting framework encompasses some known algorithms, such as A∗. We also report on two case studies based on an implementation of beam search in µCRL. 1 Introduction State space explosion is still a major problem in the area of model checking. Over the years a number of techniques have emerged to prune, while generating, parts of the state space that are not relevant given the task at hand. Some of these techniques, such as partial order reduction algorithms (e.g. see [8]), guarantee that no essential information is lost after pruning. Alternatively, this paper focuses mainly on heuristic pruning methods which heavily reduce the generation time and memory consumption but generate only an approximate (partial) state space. The idea is that a user-supplied heuristic function guides the generation such that ideally only relevant parts of the state space are actually explored. This is, in fact, at odds with the core idea of model checking when studying qualitative properties of systems, i.e. to exhaustively search the complete state space to find any corner case bug. However, heuristic pruning techniques can very well target performance analysis problems as approximate answers are usually sufficient. In this paper, we investigate how beam search can be integrated into the state space generation setting.

1 Adversarial Search (Minimax+Expectimax Pruning)

15-281: AI: Representation and Problem Solving Spring 2020 Recitation 5 (Midterm Review) February 14 1 Adversarial Search (Minimax+Expectimax Pruning) 1. Consider the following generic tree, where the triangle pointing down is a minimizer, the triangles pointing up are maximizers, and the square leaf nodes are terminal states with some value that has not been assigned yet: For each leaf node, is it possible for that leaf node to be the leftmost leaf node pruned? If yes, give an example of terminal values that would cause that leaf to be pruned. If not, explain. How might your answers change if the total range of the values were fixed and known? Solution (from left to right): The first node can never be pruned with no other information. Using alpha-beta reasoning, alpha and beta are still -inf and +inf, meaning there's no chance of pruning. The second node also can never be pruned; no value of the first node can ever be greater than the infinity in the beta node to cause pruning of this node. The third node cannot be the first pruned. Intuitively, if the third node were the first pruned, that would mean that the entire right tree would be unexplored. The fourth node can be pruned. If the values of the first three leaf nodes from left to right were 1, 1, 9, we know that minimizer will not choose the right path, so this leaf is pruned. Maybe trace through why this is! If the range were fixed, we would find that the first node still can never be pruned, the second node might be (if the first node had value = max), the third node can be (if both the first two nodes had value = min), and the fourth node can be for the same reasoning as above.
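The 1, 1, 9 case can be traced with a small alpha-beta sketch. The tree shape is an assumption, since the figure is not reproduced here: a minimizer root with two maximizer children, each having two leaves. The fourth leaf value (5) and the function name `alphabeta` are made up for illustration.

```python
import math

def alphabeta(node, maximizing, alpha=-math.inf, beta=math.inf, visited=None):
    """Return (minimax value, list of leaf values actually evaluated)."""
    if visited is None:
        visited = []
    if not isinstance(node, list):       # a leaf holds its terminal value
        visited.append(node)
        return node, visited
    best = -math.inf if maximizing else math.inf
    for child in node:
        value, _ = alphabeta(child, not maximizing, alpha, beta, visited)
        if maximizing:
            best = max(best, value)
            alpha = max(alpha, best)
        else:
            best = min(best, value)
            beta = min(beta, best)
        if alpha >= beta:                # remaining children cannot change the result
            break
    return best, visited

# Assumed shape: minimizer root -> two maximizers -> two leaves each.
tree = [[1, 1], [9, 5]]
value, visited = alphabeta(tree, maximizing=False)
print(value, visited)  # 1 [1, 1, 9]  (the fourth leaf is never evaluated)
```

After the left maximizer returns 1, the root's beta is 1; the right maximizer sees 9, which is already at least beta, so its second leaf is skipped.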

Diverse Beam Search: Decoding Diverse Solutions from Neural

DIVERSE BEAM SEARCH: DECODING DIVERSE SOLUTIONS FROM NEURAL SEQUENCE MODELS Ashwin K Vijayakumar1, Michael Cogswell1, Ramprasath R. Selvaraju1, Qing Sun1, Stefan Lee1, David Crandall2 & Dhruv Batra1 {ashwinkv,cogswell,ram21,sunqing,steflee}@vt.edu [email protected], [email protected] 1 Department of Electrical and Computer Engineering, Virginia Tech, Blacksburg, VA, USA 2 School of Informatics and Computing Indiana University, Bloomington, IN, USA ABSTRACT Neural sequence models are widely used to model time-series data. Equally ubiquitous is the usage of beam search (BS) as an approximate inference algorithm to decode output sequences from these models. BS explores the search space in a greedy left-right fashion retaining only the top-B candidates – resulting in sequences that differ only slightly from each other. Producing lists of nearly identical sequences is not only computationally wasteful but also typically fails to capture the inherent ambiguity of complex AI tasks. To overcome this problem, we propose Diverse Beam Search (DBS), an alternative to BS that decodes a list of diverse outputs by optimizing for a diversity-augmented objective. We observe that our method finds better top-1 solutions by controlling for the exploration and exploitation of the search space – implying that DBS is a better search algorithm. Moreover, these gains are achieved with minimal computational or memory overhead as compared to beam search. To demonstrate the broad applicability of our method, we present results on image captioning, machine translation and visual question generation using both standard quantitative metrics and qualitative human studies. Further, we study the role of diversity for image-grounded language generation tasks as the complexity of the image changes.

CS347 SP2014 Exam 3 Key

CS347 SP2014 Exam 3 Key This is a closed-book, closed-notes exam. The only items you are permitted to use are writing implements. Mark each sheet of paper you use with your name and the string "cs347sp2014 exam3". If you are caught cheating, you will receive a zero grade for this exam. The max number of points per question is indicated in square brackets after each question. The sum of the max points is 45. You have 75 minutes to complete this exam. Good luck!

Multiple Choice Questions - write the letter of your choice on your answer paper

1. The complexity of expectiminimax where b, d, and m have their usual AI meanings and n is the number of distinct stochastic outcomes (e.g., dice rolls) is: [2]
(a) O(b^m n^m)
(b) O(b^d n^d) [1½]
(c) O(b^d n^m) [1]
(d) O(b^n d^m) [0]
(e) none of the above [0]

2. Given the following local search algorithms: Steepest Ascent Hill Climbing, Stochastic Hill Climbing, First-Choice Hill Climbing, Random-Restart Hill Climbing, Local Beam Search, Stochastic Beam Search, Simulated Annealing. Which of these algorithms are suitable for optimizing real-valued problems? [2]
(a) Local Beam Search and Stochastic Beam Search [0]
(b) Stochastic Hill Climbing, Stochastic Beam Search, and Simulated Annealing [1]
(c) Only Random-Restart Hill Climbing [0]
(d) Any of the hill-climbing algorithms [½]
(e) Any of the non-hill-climbing algorithms [1]
(f) None of the above (The correct answer is First-Choice Hill Climbing and Simulated Annealing, because those are the only ones that limit their branching factors, which prevents a real-valued search space from causing an infinite branching factor.)

Regular Questions 3.

CS 4700: Foundations of Artificial Intelligence

CS 4700: Foundations of Artificial Intelligence Fall 2019 Prof. Haym Hirsh Lecture 5 September 23, 2019

Properties of A* Search
• If
  • search space is a finite graph and
  • all operator costs are positive
• Then
  • A* is guaranteed to terminate and
  • if there is a solution, A* will find a solution (not necessarily an optimal one)

Properties of A* Search
• If
  • search space is an infinite graph (but branching factor is finite) and
  • all operator costs are positive and are never less than some number Ɛ (in other words, they cannot get arbitrarily close to 0)
• Then
  • if there is a solution, A* will terminate with a solution (not necessarily an optimal one) (no guarantee of termination if there is no solution)

Properties of A* Search
• If, in addition,
  • h(s) is admissible (for all states s, 0 ≤ h(s) ≤ h*(s))
• Then
  • If A* terminates with a solution it will be optimal

Properties of A* Search
• If, in addition,
  • h(s) is consistent (for all states s, h(s) ≤ h(apply(a,s)) + cost(apply(a,s)))
• Then
  • h(s) is admissible,
  • the first path found to any state is guaranteed to have the lowest cost (do not need to check for this in the algorithm), and
  • no other algorithm using the same h(s) and the same tie-breaking rules will expand fewer nodes than A*

Properties of A* Search
• If
  • the search space is a tree,
  • there is a single goal state, and
  • for all states s, |h*(s) – h(s)| = O(log(h*(s))) (the error of h(s) is never more than a logarithmic factor of h*(s))
• Then
  • A* runs in time polynomial in b (branching factor)

A* Variants Weighted A*:
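The excerpt breaks off at Weighted A*. As a hedged illustration rather than the lecture's own code, the sketch below implements A* with an optional `weight` on the heuristic, using the common formulation f(s) = g(s) + weight · h(s), so that `weight=1` is plain A*. The graph, the heuristic table `h`, and the function name `a_star` are made up for this example.

```python
import heapq
import math

def a_star(graph, h, start, goal, weight=1.0):
    """A* on a graph of {state: [(successor, cost), ...]}; returns (cost, path) or None."""
    # Frontier entries are (f, g, state, path), ordered by f = g + weight * h.
    frontier = [(weight * h[start], 0, start, [start])]
    best_g = {start: 0}
    while frontier:
        f, g, state, path = heapq.heappop(frontier)
        if state == goal:
            return g, path
        if g > best_g.get(state, math.inf):
            continue                     # stale entry: a cheaper path was found later
        for successor, cost in graph[state]:
            new_g = g + cost
            if new_g < best_g.get(successor, math.inf):
                best_g[successor] = new_g
                new_f = new_g + weight * h[successor]
                heapq.heappush(frontier, (new_f, new_g, successor, path + [successor]))
    return None

# Hypothetical graph with positive costs; h is admissible and consistent here.
graph = {"S": [("A", 1), ("B", 4)], "A": [("B", 2), ("G", 6)], "B": [("G", 2)], "G": []}
h = {"S": 4, "A": 3, "B": 2, "G": 0}
print(a_star(graph, h, "S", "G"))              # (5, ['S', 'A', 'B', 'G'])
print(a_star(graph, h, "S", "G", weight=3.0))  # (7, ['S', 'A', 'G'])
```

With `weight=1` and an admissible heuristic the returned path is optimal (cost 5). Inflating the heuristic makes the search greedier, and on this instance it returns a cost-7 path.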