Big Data · Real Time: The Open Big Data Serving Engine; Store, Search, Rank and Organize Big Data at User Serving Time

Total Pages: 16

File Type: pdf, Size: 1020Kb


Recommended publications


Recommending Best Answer in a Collaborative Question Answering System

Recommending Best Answer in a Collaborative Question Answering System. Mrs Lin Chen. Submitted in fulfilment of the requirements for the degree of Master of Information Technology (Research), School of Information Technology, Faculty of Science & Technology, Queensland University of Technology, 2009. © 2009 Lin Chen.

Keywords: Authority, Collaborative Social Network, Content Analysis, Link Analysis, Natural Language Processing, Non-Content Analysis, Online Question Answering Portal, Prestige, Question Answering System, Recommending Best Answer, Social Network Analysis, Yahoo! Answers.

Abstract: The World Wide Web has become a medium for people to share information. People use Web-based collaborative tools such as question answering (QA) portals, blogs/forums, email and instant messaging to acquire information and to form online-based communities. In an online QA portal, a user asks a question and other users can provide answers based on their knowledge, with the question usually being answered by many users. It can become overwhelming and/or time/resource consuming for a user to read all of the answers provided for a given question. Thus, there exists a need for a mechanism to rank the provided answers so users can focus on only reading good quality answers. The majority of online QA systems use user feedback to rank users’ answers and the user who asked the question can decide on the best answer. Other users who didn’t participate in answering the question can also vote to determine the best answer.

Declaration of Jason Bartlett in Support of 86 MOTION For

Apple Inc. v. Samsung Electronics Co. Ltd. et al, Doc. 88 Att. 34, Exhibit 34 (Dockets.Justia.com).

Apple iPad review -- Engadget. By Joshua Topolsky, posted April 3rd 2010 9:00AM. iPad, Apple, $499-$799, 4/3/10, 8/10.

Summary points: Best-in-class touchscreen; Plugged into Apple's ecosystems; Tremendous battery life; No multitasking; Web experience hampered by lack of Flash; Can't stand-in for dedicated laptop.

Finally, the Apple iPad review. The name iPad is a killing word -- more than a product -- it's a statement, an idea, and potentially a prime mover in the world of consumer electronics. Before iPad it was called the Apple Tablet, the Slate, Canvas, and a handful of other guesses -- but what was little more than rumor and speculation for nearly ten years is now very much a reality. Announced on January 27th to a middling response, Apple has been readying itself for what could be the most significant product launch in its history; the making (or breaking) of an entirely new class of computer for the company. The iPad is something in between its monumental iPhone and wildly successful MacBook line -- a usurper to the netbook throne, and possibly a sign of things to come for the entire personal computer market... if Apple delivers on its promises.

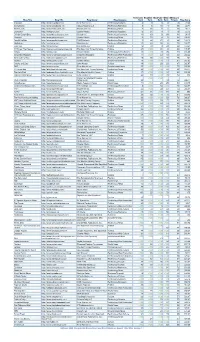

Blog Title Blog URL Blog Owner Blog Category Technorati Rank

| Blog Title | Blog URL | Blog Owner | Blog Category | Technorati Rank | Bloglines Rank | BlogPulse Rank | Wikio Rank | SEOmoz’s Trifecta | Blog Score |
|---|---|---|---|---|---|---|---|---|---|
| Engadget | http://www.engadget.com | Time Warner Inc. | Technology/Gadgets | 4 | 3 | 6 | 2 | 78 | 19.23 |
| Boing Boing | http://www.boingboing.net | Happy Mutants LLC | Technology/Marketing | 5 | 6 | 15 | 4 | 89 | 33.71 |
| TechCrunch | http://www.techcrunch.com | TechCrunch Inc. | Technology/News | 2 | 27 | 2 | 1 | 76 | 42.11 |
| Lifehacker | http://lifehacker.com | Gawker Media | Technology/Gadgets | 6 | 21 | 9 | 7 | 78 | 55.13 |
| Official Google Blog | http://googleblog.blogspot.com | Google Inc. | Technology/Corporate | 14 | 10 | 3 | 38 | 94 | 69.15 |
| Gizmodo | http://www.gizmodo.com/ | Gawker Media | Technology/News | 3 | 79 | 4 | 3 | 65 | 136.92 |
| ReadWriteWeb | http://www.readwriteweb.com | RWW Network | Technology/Marketing | 9 | 56 | 21 | 5 | 64 | 142.19 |
| Mashable | http://mashable.com | Mashable Inc. | Technology/Marketing | 10 | 65 | 36 | 6 | 73 | 160.27 |
| Daily Kos | http://dailykos.com/ | Kos Media, LLC | Politics | 12 | 59 | 8 | 24 | 63 | 163.49 |
| NYTimes: The Caucus | http://thecaucus.blogs.nytimes.com | The New York Times Company | Politics | 27 | >100 | 31 | 8 | 93 | 179.57 |
| Kotaku | http://kotaku.com | Gawker Media | Technology/Video Games | 19 | >100 | 19 | 28 | 77 | 216.88 |
| Smashing Magazine | http://www.smashingmagazine.com | Smashing Magazine | Technology/Web Production | 11 | >100 | 40 | 18 | 60 | 283.33 |
| Seth Godin's Blog | http://sethgodin.typepad.com | Seth Godin | Technology/Marketing | 15 | 68 | >100 | 29 | 75 | 284 |
| Gawker | http://www.gawker.com/ | Gawker Media | Entertainment News | 16 | >100 | >100 | 15 | 81 | 287.65 |
| Crooks and Liars | http://www.crooksandliars.com | John Amato | Politics | 49 | >100 | 33 | 22 | 67 | 305.97 |
| TMZ | http://www.tmz.com | Time Warner Inc. | | | | | | | |

Flickr: a Case Study of Web2.0, Aslib Proceedings, 60 (5), Pp

White Rose Research Online: promoting access to White Rose research papers. Universities of Leeds, Sheffield and York. http://eprints.whiterose.ac.uk/

This is an author produced version of a paper published in Aslib Proceedings. White Rose Research Online URL for this paper: http://eprints.whiterose.ac.uk/9043

Published paper: Cox, A.M. (2008) Flickr: A case study of Web2.0, Aslib Proceedings, 60 (5), pp. 493-516. http://dx.doi.org/10.1108/00012530810908210

Flickr: A case study of Web2.0. Andrew M. Cox, University of Sheffield, UK.

Abstract: The “photosharing” site Flickr is one of the most commonly cited examples used to define Web2.0. This paper explores where Flickr’s real novelty lies, examining its functionality and its place in the world of amateur photography. The paper draws on a wide range of sources including published interviews with its developers, user opinions expressed in forums, telephone interviews and content analysis of user profiles and activity. Flickr’s development path passes from an innovative social game to a relatively familiar model of a website, itself developed through intense user participation but later stabilising with the reassertion of a commercial relationship to the membership. The broader context of the impact of Flickr is examined by looking at the institutions of amateur photography and particularly the code of pictorialism promoted by the clubs and industry during the C20th. The nature of Flickr as a benign space is premised on the way the democratic potential of photography is controlled by such institutions.

Location-Based Services: Industrial and Business Analysis Group 6 Table of Contents

Location-based Services: Industrial and Business Analysis
Group 6: Huanhuan WANG, Bo WANG, Xinwei YANG, Han LIU

Table of Contents
I. Executive Summary
II. Introduction
III. Analysis
IV. Evaluation Model
V. Model Implementation
VI. Evaluation & Impact
VII. Conclusion

I. Executive Summary
The objective of the report is to analyze location-based services (LBS) from the industrial

UPDATED Activate Outlook 2021 FINAL DISTRIBUTION Dec

ACTIVATE TECHNOLOGY & MEDIA OUTLOOK 2021. www.activate.com

Activate growth. Own the future. Technology. Internet. Media. Entertainment. These are the industries we’ve shaped, but the future is where we live. Activate Consulting helps technology and media companies drive revenue growth, identify new strategic opportunities, and position their businesses for the future. As the leading management consulting firm for these industries, we know what success looks like because we’ve helped our clients achieve it in the key areas that will impact their top and bottom lines: Strategy; Go-to-market; Digital strategy; Marketing optimization; Strategic due diligence; Salesforce activation; M&A-led growth; Pricing. Together, we can help you grow faster than the market and smarter than the competition.

Get in touch: www.activate.com. Michael J. Wolf, Seref Turkmenoglu. New York, 212 316 4444.

12 Takeaways from the Activate Technology & Media Outlook 2021

Time and Attention: The entire growth curve for consumer time spent with technology and media has shifted upwards and will be sustained at a higher level than ever before, opening up new opportunities.

Video Games: Gaming is the new technology paradigm as most digital activities (e.g. search, social, shopping, live events) will increasingly take place inside of gaming. All of the major technology platforms will expand their presence in the gaming stack, leading to a new wave of mergers and technology investments.

AR/VR: Augmented reality and virtual reality are on the verge of widespread adoption as headset sales take off and use cases expand beyond gaming into other consumer digital activities and enterprise functionality.

Weekly Wireless Report September 23, 2016

Weekly Wireless Report. Week Ending: September 23, 2016

Inside This Issue:
This Week’s Stories: Yahoo Says Information On At Least 500 Million User Accounts Was Stolen; You Can Now Exchange Your Recalled Samsung Galaxy Note 7
Products & Services: Facebook Messenger Gets Polls And Now Encourages Peer-To-Peer Payments; Allo Brings Google’s ‘Assistant’ To Your Phone Today
Emerging Technology: iHeartRadio Is Finally Getting Into On-Demand

This Week’s Stories

Yahoo Says Information On At Least 500 Million User Accounts Was Stolen
September 22, 2016

Internet company says it believes the 2014 hack was done by a state-sponsored actor; potentially the biggest data breach on record.

Yahoo Inc. is blaming “state-sponsored” hackers for what may be the largest-ever theft of personal user data.

The internet company, which has agreed to sell its core business to Verizon Communications Inc., said Thursday that hackers penetrated its network in late 2014 and stole personal data on more than 500 million users. The stolen data included names, email addresses, dates of birth, telephone numbers and encrypted passwords, Yahoo said.

Yahoo said it believes that the hackers are no longer in its corporate network. The company said it didn't believe that unprotected passwords, payment-card data or bank-account information had been affected.

Computer users have grown inured to notices that a tech company, retailer or other company with which they have done business had been hacked. But the Yahoo disclosure is significant because the company said it was the work of another nation, and because it raises questions about the fate of the $4.8 billion Verizon deal, which was announced on July 25.

Sustaining Software Innovation from the Client to the Web Working Paper

Principles that Matter: Sustaining Software Innovation from the Client to the Web¹. Marco Iansiti, David Sarnoff Professor of Business Administration, Director of Research, Harvard Business School, Boston, MA 02163. June 15, 2009. Working Paper 09-142.

Copyright © 2009 by Marco Iansiti. Working papers are in draft form. This working paper is distributed for purposes of comment and discussion only. It may not be reproduced without permission of the copyright holder. Copies of working papers are available from the author.

¹ The early work for this paper was based on a project funded by Microsoft Corporation. The author has consulted for a variety of companies in the information technology sector. The author would like to acknowledge Greg Richards and Robert Bock from Keystone Strategy LLC for invaluable suggestions.

1. Overview

The information technology industry forms an ecosystem that consists of thousands of companies producing a vast array of interconnected products and services for consumers and businesses. This ecosystem was valued at more than $3 Trillion in 2008.² More so than in any other industry, unique opportunities for new technology products and services stem from the ability of IT businesses to build new offerings in combination with existing technologies. This creates an unusual degree of interdependence among information technology products and services and, as a result, unique opportunities exist to encourage competition and innovation. In the growing ecosystem of companies that provide software services delivered via the internet - or “cloud computing” - the opportunities and risks are compounded by a significant increase in interdependence between products and services.

Sign up Now Or Login with Your Facebook Account'' - Look Familiar? You Can Now Do the Same Thing with Your Pinterest Account

''Sign up now or Login with your Facebook Account'' - Look familiar? You can now do the same thing with your Pinterest account. With this, users can easily Pin collections and offer some new ways to pin images. Right now this feature is only available on two apps, but as Pinterest said, its early tests have shown that this integration increases shares to Pinterest boards by 132 percent. We can expect more apps will open up with this feature to nurture our Pinterest obsession.

Your Facebook News Feed is like a billboard of your life and for years, it has been powered by automated software that tracks your actions to show you the posts you will most likely engage with, or as Facebook's FAQ said, ''the posts you will find interesting''. But now you can say goodbye to guesswork. Facebook has created a new function called ''See First''. Simply add the Pages, friends or public figures’ profiles to your See First list and it will revamp your News Feed Preferences. Getting added to that list guarantees all their posts will appear at the top of a user’s News Feed with a blue star.

Youku Tudou, which has been described as China’s answer to YouTube and boasts 185 million unique users per month, will air its first foreign TV series approved under new rules from China’s media regulator, SAPPRFT. The Korean romantic comedy entitled "Hyde, Jekyll, and Me" will be available this weekend.

MIT tests autonomous 'Roboat' that can carry two passengers | Engadget

MIT tests autonomous 'Roboat' that can carry two passengers. The half-scale Roboat II is being tested in Amsterdam's canals. Nathan Ingraham, October 26, 2020. Image credit: MIT CSAIL. https://www.engadget.com/mit-autonomous-roboat-ii-carries-passengers-140145138.html

We’ve heard plenty about the potential of autonomous vehicles in recent years, but MIT is thinking about different forms of self-driving transportation. For the last five years, MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and the Senseable City Lab have been working on a fleet of autonomous boats to deploy in Amsterdam. Last year, we saw the autonomous “roboats” that could assemble themselves into a series of floating structures for various uses, and today CSAIL is unveiling the “Roboat II.” What makes this one particularly notable is that it’s the first that can carry passengers.

MIT can shrink 3D objects down to nanoscale versions | Engadget

MIT can shrink 3D objects down to nanoscale versions. It could be useful for tiny parts in robotics, medicine and beyond. Jon Fingas, @jonfingas, 12.17.18 in Gadgetry. Image credit: Daniel Oran/MIT. https://www.engadget.com/2018/12/17/mit-nanoscale-object-shrinking/

It's difficult to create nanoscale 3D objects. The techniques either tend to be slow (such as stacking layers of 2D etchings) or are limited to specific materials and shapes.

Social Media Identity Deception Detection: a Survey

Social Media Identity Deception Detection: A Survey. AHMED ALHARBI, HAI DONG, XUN YI, ZAHIR TARI, and IBRAHIM KHALIL, School of Computing Technologies, RMIT University, Australia.

Social media have been growing rapidly and become essential elements of many people’s lives. Meanwhile, social media have also come to be a popular source for identity deception. Many social media identity deception cases have arisen over the past few years. Recent studies have been conducted to prevent and detect identity deception. This survey analyses various identity deception attacks, which can be categorized into fake profile, identity theft and identity cloning. This survey provides a detailed review of social media identity deception detection techniques. It also identifies primary research challenges and issues in the existing detection techniques. This article is expected to benefit both researchers and social media providers.

CCS Concepts: • Security and privacy → Social engineering attacks; Social network security and privacy.

Additional Key Words and Phrases: identity deception, fake profile, Sybil, sockpuppet, social botnet, identity theft, identity cloning, detection techniques

ACM Reference Format: Ahmed Alharbi, Hai Dong, Xun Yi, Zahir Tari, and Ibrahim Khalil. 2021. Social Media Identity Deception Detection: A Survey. ACM Comput. Surv. 37, 4, Article 111 (January 2021), 35 pages. https://doi.org/10.1145/1122445.1122456

1 INTRODUCTION

Social media are web-based communication tools that facilitate people with creating customized user profiles to interact and share information with each other. Social media have been growing rapidly in the last two decades and have become an integral part of many people’s lives. Users in various social media platforms totalled almost 3.8 billion at the start of 2020, with a total growth of 321 million worldwide in the past 12 months¹.