MTH 664 Lectures 24 - 27

Total Pages: 16

File Type: PDF, Size: 1020 KB


Recommended publications


Notes on Elementary Martingale Theory 1 Conditional Expectations

Notes on Elementary Martingale Theory, by John B. Walsh. 1 Conditional Expectations. 1.1 Motivation. Probability is a measure of ignorance. When new information decreases that ignorance, it changes our probabilities. Suppose we roll a pair of dice, but don't look immediately at the outcome. The result is there for anyone to see, but if we haven't yet looked, as far as we are concerned, the probability that a two ("snake eyes") is showing is the same as it was before we rolled the dice, 1/36. Now suppose that we happen to see that one of the two dice shows a one, but we do not see the second. We reason that we have rolled a two if, and only if, the unseen die is a one. This has probability 1/6. The extra information has changed the probability that our roll is a two from 1/36 to 1/6. It has given us a new probability, which we call a conditional probability. In general, if A and B are events, we say the conditional probability that B occurs given that A occurs is the conditional probability of B given A. This is given by the well-known formula (1) $P\{B \mid A\} = \frac{P\{A \cap B\}}{P\{A\}}$, providing $P\{A\} > 0$. (Just to keep ourselves out of trouble if we need to apply this to a set of probability zero, we make the convention that $P\{B \mid A\} = 0$ if $P\{A\} = 0$.) Conditional probabilities are familiar, but that doesn't stop them from giving rise to many of the most puzzling paradoxes in probability.
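
The dice computation in this excerpt can be checked by brute-force enumeration. The following is a minimal sketch, not part of Walsh's notes: it assumes the die we happened to see is the first one, and the helper names `prob` and `cond_prob` are hypothetical. It applies formula (1) together with the zero-probability convention.

```python
from fractions import Fraction
from itertools import product

# All 36 equally likely outcomes (first die, second die).
OUTCOMES = list(product(range(1, 7), repeat=2))

def prob(event):
    """Probability of an event given as a predicate on an outcome."""
    return Fraction(sum(1 for w in OUTCOMES if event(w)), len(OUTCOMES))

def cond_prob(b, a):
    """P{B | A} = P{A and B} / P{A}, with the convention P{B | A} = 0 if P{A} = 0."""
    p_a = prob(a)
    if p_a == 0:
        return Fraction(0)
    return prob(lambda w: a(w) and b(w)) / p_a

def snake_eyes(w):
    return w == (1, 1)

def first_is_one(w):
    # Assumption: the die we saw is the first die.
    return w[0] == 1

print(prob(snake_eyes))                     # 1/36
print(cond_prob(snake_eyes, first_is_one))  # 1/6
```

Exact fractions are used so that the two probabilities come out as 1/36 and 1/6 rather than as rounded floats.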

The Theory of Filtrations of Subalgebras, Standardness and Independence

The theory of filtrations of subalgebras, standardness and independence. Anatoly M. Vershik∗, 24.01.2017. In memory of my friend Kolya K. (1933–2014). "Independence is the best quality, the best word in all languages." J. Brodsky, from a personal letter. Abstract: The survey is devoted to the combinatorial and metric theory of filtrations, i.e., decreasing sequences of σ-algebras in measure spaces or decreasing sequences of subalgebras of certain algebras. One of the key notions, that of standardness, plays the role of a generalization of the notion of the independence of a sequence of random variables. We discuss the possibility of obtaining a classification of filtrations, their invariants, and various links to problems in algebra, dynamics, and combinatorics. Bibliography: 101 titles. arXiv:1705.06619v1 [math.DS] 18 May 2017. Contents: 1 Introduction · 1.1 A simple example and a difficult question · 1.2 Three languages of measure theory · 1.3 Where do filtrations appear? · 1.4 Finite and infinite in classification problems; standardness as a generalization of independence · 1.5 A summary of the paper · 2 The definition of filtrations in measure spaces · 2.1 Measure theory: a Lebesgue space, basic facts · 2.2 Measurable partitions, filtrations · 2.3 Classification of measurable partitions · 2.4 Classification of finite filtrations · 2.5 Filtrations we consider and how one can define them. ∗St. Petersburg Department of Steklov Mathematical Institute; St. Petersburg State University; Institute for Information Transmission Problems. E-mail: [email protected]. Partially supported by the Russian Science Foundation (grant No. 14-11-00581).

An Application of Probability Theory in Finance: the Black-Scholes Formula

AN APPLICATION OF PROBABILITY THEORY IN FINANCE: THE BLACK-SCHOLES FORMULA. EFFY FANG. Abstract. In this paper, we start from the building blocks of probability theory, including σ-field and measurable functions, and then proceed to a formal introduction of probability theory. Later, we introduce stochastic processes, martingales and Wiener processes in particular. Lastly, we present an application, the Black-Scholes Formula, a model used to price options in financial markets. Contents: 1. The Building Blocks · 2. Probability · 3. Martingales · 4. Wiener Processes · 5. The Black-Scholes Formula · References. 1. The Building Blocks. Consider the set $\Omega$ of all possible outcomes of a random process. When the set is small, for example, the outcomes of tossing a coin, we can analyze case by case. However, when the set gets larger, we would need to study the collections of subsets of $\Omega$ that may be taken as a suitable set of events. We define such a collection as a σ-field. Definition 1.1. A σ-field on a set $\Omega$ is a collection $\mathcal{F}$ of subsets of $\Omega$ which obeys the following rules or axioms: (a) $\Omega \in \mathcal{F}$; (b) if $A \in \mathcal{F}$, then $A^c \in \mathcal{F}$; (c) if $\{A_n\}_{n=1}^{\infty}$ is a sequence in $\mathcal{F}$, then $\bigcup_{n=1}^{\infty} A_n \in \mathcal{F}$. The pair $(\Omega, \mathcal{F})$ is called a measurable space. Proposition 1.2. Let $(\Omega, \mathcal{F})$ be a measurable space. Then (1) $\emptyset \in \mathcal{F}$; (2) if $\{A_n\}_{n=1}^{k}$ is a finite sequence of $\mathcal{F}$-measurable sets, then $\bigcup_{n=1}^{k} A_n \in \mathcal{F}$; (3) if $\{A_n\}_{n=1}^{\infty}$ is a sequence of $\mathcal{F}$-measurable sets, then $\bigcap_{n=1}^{\infty} A_n \in \mathcal{F}$. Date: August 28, 2015.
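
On a finite set the axioms of Definition 1.1 can be tested mechanically. The sketch below is an illustration only and is not taken from the paper; the function name `is_sigma_field` and the example families are hypothetical. It relies on the fact that, for a finite family, closure under pairwise unions already gives closure under countable unions, because any sequence drawn from a finite family contains only finitely many distinct sets.

```python
from itertools import combinations

def is_sigma_field(omega, family):
    """Check axioms (a)-(c) of a sigma-field for a finite family of subsets."""
    omega = frozenset(omega)
    fam = {frozenset(a) for a in family}
    # (a) the whole space belongs to the family
    if omega not in fam:
        return False
    # (b) closed under complements
    if any(omega - a not in fam for a in fam):
        return False
    # (c) closed under unions (pairwise suffices for a finite family)
    return all(a | b in fam for a, b in combinations(fam, 2))

omega = {1, 2, 3, 4}
good = [set(), {1, 2}, {3, 4}, {1, 2, 3, 4}]
bad = [set(), {1}, {1, 2, 3, 4}]  # the complement {2, 3, 4} is missing

print(is_sigma_field(omega, good))  # True
print(is_sigma_field(omega, bad))   # False
```

The conclusions of Proposition 1.2 can be observed on `good` as well: the empty set is present, and the intersection of {1, 2} and {3, 4} is the empty set, which is again in the family.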

Mathematical Finance an Introduction in Discrete Time

Jan Kallsen, Mathematical Finance: An introduction in discrete time. CAU zu Kiel, WS 13/14, February 10, 2014. Contents: 0 Some notions from stochastics · 0.1 Basics · 0.1.1 Probability spaces · 0.1.2 Random variables · 0.1.3 Conditional probabilities and expectations · 0.2 Absolute continuity and equivalence · 0.3 Conditional expectation · 0.3.1 σ-fields · 0.3.2 Conditional expectation relative to a σ-field · 1 Discrete stochastic calculus · 1.1 Stochastic processes · 1.2 Martingales · 1.3 Stochastic integral · 1.4 Intuitive summary of some notions from this chapter · 2 Modelling financial markets · 2.1 Assets and trading strategies · 2.2 Arbitrage · 2.3 Dividend payments · 2.4 Concrete models · 3 Derivative pricing and hedging · 3.1 Contingent claims · 3.2 Liquidly traded derivatives · 3.3 Individual perspective · 3.4 Examples · 3.4.1 Forward contract · 3.4.2 Futures contract · 3.4.3 European call and put options in the binomial model · 3.4.4 European call and put options in the standard model · 3.5 American options · 4 Incomplete markets · 4.1 Martingale modelling · 4.2 Variance-optimal hedging · 5 Portfolio optimization · 5.1 Maximizing expected utility of terminal wealth · 6 Elements of continuous-time finance · 6.1 Continuous-time theory · 6.2 Black-Scholes model. Chapter 0: Some notions from stochastics. 0.1 Basics. 0.1.1 Probability spaces. This course requires a decent background in stochastics which cannot be provided here.

4 Martingales in Discrete-Time

4 Martingales in Discrete-Time. Suppose that $(\Omega, \mathcal{F}, P)$ is a probability space. Definition 4.1. A sequence $\mathbb{F} = \{\mathcal{F}_n, n = 0, 1, \ldots\}$ is called a filtration if each $\mathcal{F}_n$ is a sub-σ-algebra of $\mathcal{F}$, and $\mathcal{F}_n \subseteq \mathcal{F}_{n+1}$ for $n = 0, 1, 2, \ldots$. In other words, a filtration is an increasing sequence of sub-σ-algebras of $\mathcal{F}$. For notational convenience, we define $\mathcal{F}_\infty = \sigma\left(\bigcup_{n=1}^{\infty} \mathcal{F}_n\right)$ and note that $\mathcal{F}_\infty \subseteq \mathcal{F}$. Definition 4.2. If $\mathbb{F} = \{\mathcal{F}_n, n = 0, 1, \ldots\}$ is a filtration, and $X = \{X_n, n = 0, 1, \ldots\}$ is a discrete-time stochastic process, then $X$ is said to be adapted to $\mathbb{F}$ if $X_n$ is $\mathcal{F}_n$-measurable for each $n$. Note that by definition every process $\{X_n, n = 0, 1, \ldots\}$ is adapted to its natural filtration $\{\mathcal{F}_n, n = 0, 1, \ldots\}$ where $\mathcal{F}_n = \sigma(X_0, \ldots, X_n)$. The intuition behind the idea of a filtration is as follows. At time $n$, all of the information about $\omega \in \Omega$ that is known to us at time $n$ is contained in $\mathcal{F}_n$. As $n$ increases, our knowledge of $\omega$ also increases (or, if you prefer, does not decrease) and this is captured in the fact that the filtration consists of an increasing sequence of sub-σ-algebras. Definition 4.3. A discrete-time stochastic process $X = \{X_n, n = 0, 1, \ldots\}$ is called a martingale (with respect to the filtration $\mathbb{F} = \{\mathcal{F}_n, n = 0, 1, \ldots\}$) if for each $n = 0, 1, 2, \ldots$, (a) $X$ is adapted to $\mathbb{F}$; that is, $X_n$ is $\mathcal{F}_n$-measurable, (b) $X_n$ is in $L^1$; that is, $E|X_n| < \infty$, and (c) $E(X_{n+1} \mid \mathcal{F}_n) = X_n$ a.s.
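
Condition (c) of Definition 4.3 can be checked by hand for a small example. The sketch below is an illustration that is not part of the excerpt: it assumes a simple random walk $X_n$ (the sum of $n$ independent ±1 steps, $X_0 = 0$) with its natural filtration, and the function names are hypothetical. Conditions (a) and (b) hold automatically here because $X_n$ is a bounded function of the first $n$ steps, so only (c) is tested, on every atom of $\mathcal{F}_n$.

```python
from fractions import Fraction
from itertools import product

def conditional_expectation_next(prefix, p_up):
    """E(X_{n+1} | F_n) on the atom of F_n where the first n steps equal `prefix`."""
    x_n = sum(prefix)
    return p_up * (x_n + 1) + (1 - p_up) * (x_n - 1)

def is_martingale(horizon, p_up=Fraction(1, 2)):
    """Check E(X_{n+1} | F_n) = X_n for n = 0, ..., horizon - 1 on every atom."""
    for n in range(horizon):
        for prefix in product((-1, 1), repeat=n):
            if conditional_expectation_next(prefix, p_up) != sum(prefix):
                return False
    return True

print(is_martingale(5))                  # True: the symmetric walk is a martingale
print(is_martingale(5, Fraction(2, 3)))  # False: an upward-biased walk is not
```

With an upward bias the conditional expectation exceeds $X_n$ on every atom, so the check already fails at $n = 0$.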

An Enlargement of Filtration Formula with Applications to Multiple Non

Finance and Stochastics manuscript No. (will be inserted by the editor). An enlargement of filtration formula with applications to multiple non-ordered default times. Monique Jeanblanc · Libo Li · Shiqi Song. Received: date / Accepted: date. Abstract: In this work, for a reference filtration F, we develop a method for computing the semimartingale decomposition of F-martingales in a specific type of enlargement of F. As an application, we study the progressive enlargement of F with a sequence of non-ordered default times and we show how to deduce results concerning the first-to-default, k-th-to-default, k-out-of-n-to-default or the all-to-default events. In particular, using this method, we compute explicitly the semimartingale decomposition of F-martingales under the absolute continuity condition of Jacod. Keywords: Enlargement of filtration · Non-ordered default times · Reduced form credit risk modeling · Semimartingale decomposition. JEL classification: G14. Mathematics Subject Classification (2010): 60G07 · 91G40. This research benefited from the support of the Chaire Risque de Crédit (Fédération Bancaire Française) and Labex ANR 11-LABX-0019. Monique Jeanblanc: Univ Evry, LaMME, UMR CNRS 8071, Université Paris Saclay, 23 boulevard de France, 91037 Evry. E-mail: [email protected]. Libo Li: School of Mathematics and Statistics, University of New South Wales, NSW, Australia. E-mail: [email protected]. Shiqi Song: Univ Evry, LaMME, UMR CNRS 8071, Université Paris Saclay, 23 boulevard de France, 91037 Evry. E-mail: [email protected]. 1 Introduction. This paper is motivated by the needs to model multiple non-ordered default times in credit risk and to perform explicit computations in the pricing of credit instruments.

The Comparison Isomorphisms Ccris

The Comparison Isomorphisms $C_{\mathrm{cris}}$. Fabrizio Andreatta, July 9, 2009. Contents: 1 Introduction · 2 The crystalline conjecture with coefficients · 3 The complex case. The strategy in the p-adic setting · 4 The p-adic case; Faltings's topology · 5 Sheaves of periods · 6 The computation of $R^i v_* B_{\mathrm{cris}}$ · 7 The proof of the comparison isomorphism. 1 Introduction. Let $p > 0$ denote a prime integer and let $k$ be a perfect field of characteristic $p$. Put $\mathcal{O}_K := W(k)$ and let $K$ be its fraction field. Denote by $\overline{K}$ a fixed algebraic closure of $K$ and set $G_K := \mathrm{Gal}(\overline{K}/K)$. We write $B_{\mathrm{cris}}$ for the crystalline period ring defined by J.-M. Fontaine in [Fo]. Recall that $B_{\mathrm{cris}}$ is a topological ring, endowed with a continuous action of $G_K$, an exhaustive decreasing filtration $\mathrm{Fil}^{\bullet} B_{\mathrm{cris}}$ and a Frobenius operator $\varphi$. Following the paper [AI2], these notes aim at presenting a new proof of the so-called crystalline conjecture formulated by J.-M. Fontaine in [Fo]. Let $X$ be a smooth proper scheme, geometrically irreducible, of relative dimension $d$ over $\mathcal{O}_K$. Conjecture 1.1 ([Fo]). For every $i \ge 0$ there is a canonical and functorial isomorphism commuting with all the additional structures (namely, filtrations, $G_K$-actions and Frobenii) $H^i(X_{\overline{K}}^{\mathrm{et}}, \mathbb{Q}_p) \otimes_{\mathbb{Q}_p} B_{\mathrm{cris}} \cong H^i_{\mathrm{cris}}(X_k/\mathcal{O}_K) \otimes_{\mathcal{O}_K} B_{\mathrm{cris}}$. It follows from a classical theorem for crystalline cohomology, see [BO], that $H^i_{\mathrm{cris}}(X_k/\mathcal{O}_K) \cong H^i_{\mathrm{dR}}(X/\mathcal{O}_K)$. The latter denotes the hypercohomology of the de Rham complex $0 \to \mathcal{O}_X \to \Omega^1_{X/\mathcal{O}_K} \to \Omega^2_{X/\mathcal{O}_K} \to \cdots \to \Omega^d_{X/\mathcal{O}_K} \to 0$.

E1-Degeneration of the Irregular Hodge Filtration (With an Appendix by Morihiko Saito)

E1-DEGENERATION OF THE IRREGULAR HODGE FILTRATION (WITH AN APPENDIX BY MORIHIKO SAITO). HÉLÈNE ESNAULT, CLAUDE SABBAH, AND JENG-DAW YU. Abstract. For a regular function f on a smooth complex quasi-projective variety, J.-D. Yu introduced in [Yu14] a filtration (the irregular Hodge filtration) on the de Rham complex with twisted differential d + df, extending a definition of Deligne in the case of curves. In this article, we show the degeneration at E1 of the spectral sequence attached to the irregular Hodge filtration, by using the method of [Sab10]. We also make explicit the relation with a complex introduced by M. Kontsevich and give details on his proof of the corresponding E1-degeneration, by reduction to characteristic p, when the pole divisor of the function is reduced with normal crossings. In Appendix E, M. Saito gives a different proof of the latter statement with a possibly non-reduced normal crossing pole divisor. Contents: Introduction · 1. The irregular Hodge filtration in the normal crossing case · 1.1. Setup and notation · 1.2. The irregular Hodge filtration · 1.3. The Kontsevich complex · 1.4. Comparison of the filtered twisted meromorphic de Rham complex and the filtered Kontsevich complex · 1.5. Relation between Theorem 1.2.2 for α = 0 and Theorem 1.3.2 · 1.6. Deligne's filtration: the D-module approach · 1.7. Comparison of Yu's filtration and Deligne's filtration on the twisted de Rham complex · 2. Preliminaries to a generalization of Deligne's filtration · 2.1.

LECTURES 3 and 4: MARTINGALES 1. Introduction in an Arbitrage–Free

LECTURES 3 AND 4: MARTINGALES. 1. Introduction. In an arbitrage-free market, the share price of any traded asset at time t = 0 is the expected value, under an equilibrium measure, of its discounted price at market termination t = T. We shall see that the discounted share price at any time t ≤ T may also be computed by an expectation; however, this expectation is a conditional expectation, given the information about the market scenario that is revealed up to time t. Thus, the discounted share price process, as a (random) function of time, has the property that its value at any time t is the conditional expectation of the value at a future time given information available at t. A stochastic process with this property is called a martingale. The theory of martingales (initiated by Joseph Doob, following earlier work of Paul Lévy) is one of the central themes of modern probability. In discrete-time finance, the importance of martingales stems largely from the Optional Stopping Formula, which (a) places fundamental constraints on (discounted) share price processes, and (b) provides a computational tool of considerable utility. 2. Filtrations of Probability Spaces. Filtrations. In a multiperiod market, information about the market scenario is revealed in stages. Some events may be completely determined by the end of the first trading period, others by the end of the second, and others not until the termination of all trading. This suggests the following classification of events: for each t ≤ T, (1) $\mathcal{F}_t$ = {all events determined in the first t trading periods}. The finite sequence $(\mathcal{F}_t)_{0 \le t \le T}$ is a filtration of the space Ω of market scenarios.

Homomorphisms of Filtered Modules

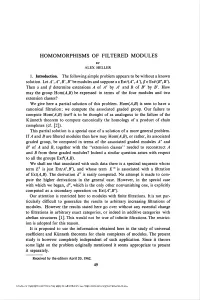

HOMOMORPHISMS OF FILTERED MODULES, BY ALEX HELLER. 1. Introduction. The following simple problem appears to be without a known solution. Let $A'$, $A''$, $B'$, $B''$ be modules and suppose $\alpha \in \mathrm{Ext}^1(A'', A')$, $\beta \in \mathrm{Ext}^1(B'', B')$. Then $\alpha$ and $\beta$ determine extensions $A$ of $A''$ by $A'$ and $B$ of $B''$ by $B'$. How may the group $\mathrm{Hom}(A, B)$ be expressed in terms of the four modules and two extension classes? We give here a partial solution of this problem. $\mathrm{Hom}(A, B)$ is seen to have a canonical filtration; we compute the associated graded group. Our failure to compute $\mathrm{Hom}(A, B)$ itself is to be thought of as analogous to the failure of the Künneth theorem to compute canonically the homology of a product of chain complexes (cf. [2]). This partial solution is a special case of a solution of a more general problem. If A and B are filtered modules then how may Hom(A,B), or rather, its associated graded group, be computed in terms of the associated graded modules A" and B" of A and B, together with the "extension classes" needed to reconstruct A and B from these graded modules? Indeed a similar question arises with respect to all the groups $\mathrm{Ext}^q(A, B)$. We shall see that associated with such data there is a spectral sequence whose term E1 is just Ext(A",B"), and whose term $E^{\infty}$ is associated with a filtration of Ext(A,B). The derivation d1 is easily computed. No attempt is made to compute the higher derivations in the general case.

Supports and Filtrations in Algebraic Geometry and Modular Representation Theory

SUPPORTS AND FILTRATIONS IN ALGEBRAIC GEOMETRY AND MODULAR REPRESENTATION THEORY. PAUL BALMER. Abstract. Working with tensor triangulated categories, we prove two theorems relative to supports and then discuss their incarnations in algebraic geometry and in modular representation theory. First, we show that an indecomposable object has connected support. Then we consider the filtrations by dimension or codimension of the support and prove that the associated subquotients decompose as sums of local terms. Introduction. We proved in [1] that schemes $X$ in algebraic geometry and projective support varieties $V_G(k)$ in modular representation theory both appear as the spectrum of prime ideals, $\mathrm{Spc}(K)$, for suitable tensor triangulated categories $K$, namely and respectively for $K = D^{\mathrm{perf}}(X)$, the derived category of perfect complexes over $X$, and for $K = \mathrm{stab}(kG)$, the stable category of finitely generated $kG$-modules modulo projectives. (This is recalled in Theorem 1.4 below.) In this spectrum $\mathrm{Spc}(K)$, one can construct for every object $a \in K$ a closed subset $\mathrm{supp}(a) \subset \mathrm{Spc}(K)$ called the support of $a$. This support provides a unified approach to the homological support $\mathrm{supph}(E) \subset X$ of a perfect complex $E \in D^{\mathrm{perf}}(X)$ and to the projective support variety $V_G(M) \subset V_G(k)$ of a $kG$-module $M \in \mathrm{stab}(kG)$. Since tensor triangulated categories also appear in topology, motivic theory, KK-theory, and other areas, general results about spectrum and support have a wide potential of application. This level of generality, that we dub tensor triangular geometry, is the one of our main results. The first one is Theorem 2.11, which says: Theorem.

Stochastic) Process {Xt, T ∈ T } Is a Collection of Random Variables on the Same Probability Space (Ω, F,P

Random Processes. Florian Herzog, 2013 (Stochastic Systems, 2013). Random Process. Definition 1 (Random process). A random (stochastic) process $\{X_t, t \in T\}$ is a collection of random variables on the same probability space $(\Omega, \mathcal{F}, P)$. The index set $T$ usually represents time and can be either an interval $[t_1, t_2]$ or a discrete set. Therefore, the stochastic process $X$ can be written as a function $X : \mathbb{R} \times \Omega \to \mathbb{R}$, $(t, \omega) \mapsto X(t, \omega)$. Filtration. Observations: • The amount of information increases with time. • We use the concept of sigma algebras. • We assume that information is not lost with increasing time. • Therefore the corresponding σ-algebras will increase over time when there is more information available. ⇒ This concept is called filtration. Definition 2 (Filtration/adapted process). A collection $\{\mathcal{F}_t\}_{t \ge 0}$ of sub-σ-algebras is called a filtration if, for every $s \le t$, we have $\mathcal{F}_s \subseteq \mathcal{F}_t$. The random variables $\{X_t : 0 \le t \le \infty\}$ are called adapted to the filtration $\mathcal{F}_t$ if, for every $t$, $X_t$ is measurable with respect to $\mathcal{F}_t$. Filtration, Example 1. Suppose we have a sample space of four elements: $\Omega = \{\omega_1, \omega_2, \omega_3, \omega_4\}$. At time zero, we don't have any information about which $\omega$ has been chosen. At time $T/2$ we know whether we will have $\{\omega_1, \omega_2\}$ or $\{\omega_3, \omega_4\}$. [Figure 1: Example of a filtration. A tree over the time axis t = 0, T/2, T with nodes A, B, C and leaves D = {ω1}, E = {ω2}, F = {ω3}, G = {ω4}.] Difference between random process and smooth functions. Let x(·) be a real, continuously differentiable function defined on the interval [0, T].
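
The four-point example can be made concrete by generating each σ-algebra from the partition of Ω that is known at that time. This is a minimal sketch, not taken from the slides: it assumes that at time T the outcome is fully known (as the leaves of the figure suggest), the labels `w1` to `w4` stand in for ω1 to ω4, and the helper names are hypothetical. It uses the fact that, on a finite set, a random variable is measurable with respect to the σ-algebra generated by a partition exactly when it is constant on each block.

```python
from itertools import combinations

def sigma_algebra(partition):
    """All unions of blocks of a finite partition: the sigma-algebra it generates."""
    blocks = [frozenset(b) for b in partition]
    sets = set()
    for r in range(len(blocks) + 1):
        for chosen in combinations(blocks, r):
            sets.add(frozenset().union(*chosen))
    return sets

def is_measurable(x, partition):
    """A random variable (dict outcome -> value) is measurable iff constant on each block."""
    return all(len({x[w] for w in block}) == 1 for block in partition)

P_0 = [{"w1", "w2", "w3", "w4"}]      # time 0: no information
P_HALF = [{"w1", "w2"}, {"w3", "w4"}]  # time T/2: which pair
P_T = [{"w1"}, {"w2"}, {"w3"}, {"w4"}]  # time T: assumed full information

F_0, F_HALF, F_T = (sigma_algebra(p) for p in (P_0, P_HALF, P_T))

print(len(F_0), len(F_HALF), len(F_T))  # 2 4 16
print(F_0 <= F_HALF <= F_T)             # True: the sigma-algebras are nested

x_half = {"w1": 1, "w2": 1, "w3": -1, "w4": -1}
print(is_measurable(x_half, P_HALF))    # True: known at time T/2
print(is_measurable(x_half, P_0))       # False: not known at time 0
```

The nesting check is exactly the condition $\mathcal{F}_s \subseteq \mathcal{F}_t$ for $s \le t$ from Definition 2, and the last two lines illustrate what it means for a random variable to be adapted at a given time.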