Data Collector User Guide

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

A Highly Configurable High-Level Synthesis Functional Pattern Library

electronics Article A Highly Configurable High-Level Synthesis Functional Pattern Library Lan Huang 1,2,‡, Teng Gao 1,‡, Dalin Li 1,†, Zihao Wang 1 and Kangping Wang 1,2,* 1 College of Computer Science and Technology, Jilin University, Changchun 130012, China; [email protected] (L.H.); [email protected] (T.G.); [email protected] (D.L.); [email protected] (Z.W.) 2 Key Laboratory of Symbolic Computation and Knowledge Engineering, Jilin University, Changchun 130012, China * Correspondence: [email protected] † Current address: Zhuhai Laboratory of Key Laboratory of Symbol Computation and Knowledge Engineering of Ministry of Education, Department of Computer Science and Technology, Zhuhai College of Jilin University, Zhuhai 519041, China. ‡ These authors contributed equally to this work. Citation: Huang, L.; Gao, T.; Li, D.; Wang, Z.; Wang, K. Abstract: FPGA has recently played an increasingly important role in heterogeneous computing, but Register Transfer Level design flows are not only inefficient in design, but also require designers to be familiar with the circuit architecture. High-level synthesis (HLS) allows developers to design FPGA circuits more efficiently with a more familiar programming language, a higher level of abstraction, and automatic adaptation of timing constraints. When using HLS tools, such as Xilinx Vivado HLS, specific design patterns and techniques are required in order to create high-performance circuits. Moreover, designing efficient concurrency and data flow structures requires a deep understanding of the hardware, imposing more learning costs on programmers. In this paper, we propose a set of functional patterns libraries based on the MapReduce model, implemented by C++ templates, which can quickly implement high-performance parallel pipelined computing models on FPGA with -

Bash Guide for Beginners

Bash Guide for Beginners Machtelt Garrels Garrels BVBA <tille wants no spam _at_ garrels dot be> Version 1.11 Last updated 20081227 Edition Table of Contents Introduction: 1. Why this guide? 2. Who should read this book? 3. New versions, translations and availability 4. Revision History 5. Contributions 6. Feedback 7. Copyright information 8. What do you need? 9. Conventions used in this -

PJM Command Line Interface

PJM Command Line Interface PJM Interconnection LLC Version 1.5.1 11-18-2020 Table of Contents: Purpose; System Requirements; Release History; Usage; Standalone Application; Example Standalone Execution; Parameter Details; Password Encryption -

TASSEL 3.0 / 4.0 Pipeline Command Line Interface: Guide to Using Tassel Pipeline

TASSEL 3.0 / 4.0 Pipeline Command Line Interface: Guide to using Tassel Pipeline Terry Casstevens ([email protected]) Institute for Genomic Diversity, Cornell University, Ithaca, NY 14853-2703 March 28, 2014 Prerequisites; Source Code; Install; Execute; Increasing Heap Size; Examples; Examples (XML Configuration Files); Usage -

Chapter 5. Writing Your Own Shell

Chapter 5. Writing Your Own Shell You really understand something until you program it. GRR Introduction The last chapter covered how to use a shell program with UNIX commands. The shell is a program that interacts with the user through a terminal, or takes its input from a file, and executes a sequence of commands that are passed to the operating system. In this chapter you are going to learn how to write your own shell program. Shell Programs A shell program is an application that allows the user to interact with the computer. In a shell the user can run programs and also redirect the input to come from a file and the output to go to a file. Shells also provide programming constructs such as if, for, while, functions, variables, etc. Additionally, shell programs offer features such as line editing, history, file completion, wildcards, and environment variable expansion. Here is a list of the most popular shell programs in UNIX: sh Shell Program. The original shell program in UNIX. csh C Shell. An improved version of sh. tcsh A version of csh that has line editing. ksh Korn Shell. The father of all advanced shells. bash The GNU shell. Takes the best of all shell programs. It is currently the most common shell program. In addition to command-line shells, there are also graphical shells such as the Windows Desktop, macOS Finder, or Linux GNOME and KDE that simplify the use of computers for most users. However, these graphical shells are not a substitute for command-line shells for power users who want to execute complex sequences of commands repeatedly or with parameters not available in the friendly but limited graphical dialogs and controls. -

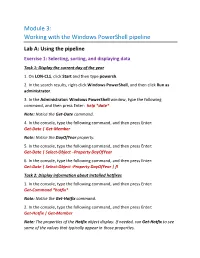

Working with the Windows Powershell Pipeline

Module 3: Working with the Windows PowerShell pipeline Lab A: Using the pipeline Exercise 1: Selecting, sorting, and displaying data Task 1: Display the current day of the year 1. On LON-CL1, click Start and then type powersh. 2. In the search results, right-click Windows PowerShell, and then click Run as administrator. 3. In the Administrator: Windows PowerShell window, type the following command, and then press Enter: help *date* Note: Notice the Get-Date command. 4. In the console, type the following command, and then press Enter: Get-Date | Get-Member Note: Notice the DayOfYear property. 5. In the console, type the following command, and then press Enter: Get-Date | Select-Object -Property DayOfYear 6. In the console, type the following command, and then press Enter: Get-Date | Select-Object -Property DayOfYear | fl Task 2: Display information about installed hotfixes 1. In the console, type the following command, and then press Enter: Get-Command *hotfix* Note: Notice the Get-Hotfix command. 2. In the console, type the following command, and then press Enter: Get-Hotfix | Get-Member Note: The properties of the Hotfix object display. If needed, run Get-Hotfix to see some of the values that typically appear in those properties. 3. In the console, type the following command, and then press Enter: Get-Hotfix | Select-Object -Property HotFixID,InstalledOn,InstalledBy 4. In the console, type the following command, and then press Enter: Get-Hotfix | Select-Object -Property HotFixID,@{n='HotFixAge';e={(New-TimeSpan -Start $PSItem.InstalledOn).Days}},InstalledBy Task 3: Display a list of available scopes from the DHCP server 1. -

Introduction to Linux/Unix

Introduction to Linux/Unix Xiaoge Wang, ICER [email protected] Feb. 4, 2016 How does this class work • We are going to cover some basics with hands on examples. • Exercises are denoted by the following icon: • Bold means commands which I expect you type on your terminal in most cases. Green and red sticky Use the sticky notes provided to help me help you. – No sticky = I am working – Green = I am done and ready to move on (yea!) – Red = I am stuck and need more time and/or some help Agenda • Introduction • Linux – Commands • Navigation • Get or create files • Organizing files • Closer look into files • Search files • Distribute files • File permission • Learn new commands – Scripts • Pipeline • Make your own command • Environment of a shell • Summary Introduction • Get ready for adventure? – Ticket (account)? • Big map – Linux/Unix – Shell • Overview of the trail – Commands – Simple Shell script – Get ready for HPC. Exercise 0: Get ready • Connect to HPCC (gateway) – $ ssh [email protected] • Windows users MobaXterm • Mac users Terminal • Linux users? • Read important message – $ mod • Go to a development node – $ ssh dev-nodename Message of the day (mod) • Mac Show screen Big picture Shell Shell Big picture • Shell – CLI ✔ – GUI OS shell example Overview of the trail • Commands • Simple Shell script • Get ready for HPC Linux shell tour Ready? GO! Example 1: Navigation • Task: Wander around on a node. -

Unix Tools As Visual Programming Components in a GUI-Builder

Unix Tools as Visual Programming Components in a GUI-builder Environment Diomidis Spinellis Department of Management Science and Technology Athens University of Economics and Business Patision 76, GR-104 34 Athens, Greece email: [email protected] September, 2001 Abstract Development environments based on ActiveX controls and JavaBeans are marketed as “visual programming” platforms; in practice their visual dimension is limited to the design and implementation of an application’s graphical user interface (GUI). The availability of sophisticated GUI development environments and visual component development frameworks is now providing viable platforms for implementing visual programming within general-purpose platforms, i.e. for the specification of non-GUI program functionality using visual representations. We describe how specially-designed reflective components can be used in an industry-standard visual programming environment to graphically specify sophisticated data transformation pipelines that interact with GUI elements. The components are based on Unix-style filters repackaged as ActiveX controls. Introduction A number of environments support the visual composition of graphical user interfaces (GUIs) using components with a predefined set of interfaces. In addition, technologies such as ActiveX and JavaBeans allow the development of visual components (typically GUI elements) that can be seamlessly incorporated into an integrated development environment (IDE) and subsequently used in application development. In this article we present how visual IDEs and components can be extended beyond GUI development to support visual programming for a particular domain. A visual programming language can be informally defined as a programming language with a syntax that includes visual expressions such as diagrams, free-hand sketches, icons, or graphical manipulations [1]. Visual programming approaches aim towards easing the programming learning curve or enhancing programming productiv- -

Shell Scripting and System Variables HORT 59000 Lecture 5 Instructor: Kranthi Varala Text Editors

Shell scripting and system variables HORT 59000 Lecture 5 Instructor: Kranthi Varala Text editors • Programs built to assist creation and manipulation of text files, typically scripts. • nano : easy-to-learn, supports syntax highlighting, lacks GUI. • Emacs : provides basic editing functions but also extendible to add functionality. Supports GUI, extensions provide a wide range of functions. • vi/vim : extensive editing functions and relatively limited extensibility, command and insert modes distinct, steep learning curve, but very rewarding experience. Text manipulations • Tabular data files can be manipulated at a column- level. 1. Cut: Divide file & extract columns. 2. Paste: Combine multiple columns into a single table/file. • Sort: Sort lines in a file based on contents of one or more columns. • Regular expressions : defining patterns in text. Special characters and quantifiers allow search and replacement of simple-to-complex matches. • grep and awk use the power of regular expressions to make text processing very easy. Command-line operations • All commands so far are run one at a time. • Redirection and pipes allow combining a few commands together into a single pipeline. • Lacks logical complexity, such as ability to make decisions based on input / values in file. • Certain repetitive tasks are tedious to user. • All commands are being sent to and interpreted by the ‘shell’ Client/Server architecture User1 User2 Server (UNIX/ Web/ Database etc..) User3 User4 Terminology • Terminal: Device or Program used to establish a connection to the UNIX server • Shell: Program that runs on the server and interprets the commands from the terminal. • Command line: The text-interface you use to interact with the shell. -

Linux Command Line Basics III: Piping Commands for Text Processing Yanbin Yin

Linux command line basics III: piping commands for text processing Yanbin Yin http://korflab.ucdavis.edu/Unix_and_Perl/unix_and_perl_v3.1.1.pdf The beauty of Unix for bioinformatics sort, cut, uniq, join, paste, sed, grep, awk, wc, diff, comm, cat All types of bioinformatics sequence analyses are essentially text processing. Unix Shell has the above commands that are very useful for processing texts and also allows the output from one command to be passed to another command as input using pipes (“|”). This makes the processing of files using Shell very convenient and very powerful: you do not need to write output to intermediate files or load all data into the memory. For example, combining different Unix commands for text processing is like passing an item through a manufacturing pipeline when you only care about the final product | Hold shift and press 4 cut: extract columns from a file less file | cut -f1 # cut the first column (default delimiter tabular key) less file | cut -f1 -d ' ' # specify delimiter to be regular space less file | cut -f1-3 # cut 1 to 3 col less file | cut -f1,7,10 > file.1-7-10 # cut 1, 7, 10 col and save as a new file sort: sort rows in a file, default on first col in alphabetical order (0-9 then a-z, 10 comes before 9) less file | sort -k 2 # sort on 2 col less file | sort -k 2,2n # sort in numeric order less file | sort -k 2,2nr # sort in reverse numeric order uniq: report file without repeated occurrences less file | cut -f2 | sort | uniq # unique text less file | cut -f2 | sort | uniq -c # count number -

The AWK Manual Edition 1.0 December 1995

The AWK Manual Edition 1.0 December 1995 Diane Barlow Close Arnold D. Robbins Paul H. Rubin Richard Stallman Piet van Oostrum Copyright © 1989, 1991, 1992, 1993 Free Software Foundation, Inc. This is Edition 1.0 of The AWK Manual, for the new implementation of AWK (sometimes called nawk). Notice: This work is derived from the original gawk manual. Adaptions for NAWK made by Piet van Oostrum, Dec. 1995, July 1998. Permission is granted to make and distribute verbatim copies of this manual provided the copyright notice and this permission notice are preserved on all copies. Permission is granted to copy and distribute modified versions of this manual under the conditions for verbatim copying, provided that the entire resulting derived work is distributed under the terms of a permission notice identical to this one. Permission is granted to copy and distribute translations of this manual into another language, under the above conditions for modified versions, except that this permission notice may be stated in a translation approved by the Foundation. Preface If you are like many computer users, you would frequently like to make changes in various text files wherever certain patterns appear, or extract data from parts of certain lines while discarding the rest. To write a program to do this in a language such as C or Pascal is a time-consuming inconvenience that may take many lines of code. The job may be easier with awk. The awk utility interprets a special-purpose programming language that makes it possible to handle simple data-reformatting jobs easily with just a few lines of code. -

An Order-Aware Dataflow Model for Parallel Unix Pipelines

An Order-Aware Dataflow Model for Parallel Unix Pipelines SHIVAM HANDA∗, CSAIL, MIT, USA KONSTANTINOS KALLAS∗, University of Pennsylvania, USA NIKOS VASILAKIS∗, CSAIL, MIT, USA MARTIN C. RINARD, CSAIL, MIT, USA We present a dataflow model for modelling parallel Unix shell pipelines. To accurately capture the semantics of complex Unix pipelines, the dataflow model is order-aware, i.e., the order in which a node in the dataflow graph consumes inputs from different edges plays a central role in the semantics of the computation and therefore in the resulting parallelization. We use this model to capture the semantics of transformations that exploit data parallelism available in Unix shell computations and prove their correctness. We additionally formalize the translations from the Unix shell to the dataflow model and from the dataflow model back to a parallel shell script. We implement our model and transformations as the compiler and optimization passes of a system parallelizing shell pipelines, and use it to evaluate the speedup achieved on 47 pipelines. CCS Concepts: • Software and its engineering → Compilers; Massively parallel systems; Scripting languages. Additional Key Words and Phrases: Unix, POSIX, Shell, Parallelism, Dataflow, Order-awareness ACM Reference Format: Shivam Handa, Konstantinos Kallas, Nikos Vasilakis, and Martin C. Rinard. 2021. An Order-Aware Dataflow Model for Parallel Unix Pipelines. Proc. ACM Program. Lang. 5, ICFP, Article 65 (August 2021), 28 pages. https://doi.org/10.1145/3473570 1 Introduction Unix pipelines