Increasing the Performance and Predictability of the Code Execution

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Java (Programming Langua a (Programming Language)

Java (programming language) From Wikipedia, the free encyclopedialopedia "Java language" redirects here. For the natural language from the Indonesian island of Java, see Javanese language. Not to be confused with JavaScript. Java multi-paradigm: object-oriented, structured, imperative, Paradigm(s) functional, generic, reflective, concurrent James Gosling and Designed by Sun Microsystems Developer Oracle Corporation Appeared in 1995[1] Java Standard Edition 8 Update Stable release 5 (1.8.0_5) / April 15, 2014; 2 months ago Static, strong, safe, nominative, Typing discipline manifest Major OpenJDK, many others implementations Dialects Generic Java, Pizza Ada 83, C++, C#,[2] Eiffel,[3] Generic Java, Mesa,[4] Modula- Influenced by 3,[5] Oberon,[6] Objective-C,[7] UCSD Pascal,[8][9] Smalltalk Ada 2005, BeanShell, C#, Clojure, D, ECMAScript, Influenced Groovy, J#, JavaScript, Kotlin, PHP, Python, Scala, Seed7, Vala Implementation C and C++ language OS Cross-platform (multi-platform) GNU General Public License, License Java CommuniCommunity Process Filename .java , .class, .jar extension(s) Website For Java Developers Java Programming at Wikibooks Java is a computer programming language that is concurrent, class-based, object-oriented, and specifically designed to have as few impimplementation dependencies as possible.ble. It is intended to let application developers "write once, run ananywhere" (WORA), meaning that code that runs on one platform does not need to be recompiled to rurun on another. Java applications ns are typically compiled to bytecode (class file) that can run on anany Java virtual machine (JVM)) regardless of computer architecture. Java is, as of 2014, one of tthe most popular programming ng languages in use, particularly for client-server web applications, witwith a reported 9 million developers.[10][11] Java was originallyy developed by James Gosling at Sun Microsystems (which has since merged into Oracle Corporation) and released in 1995 as a core component of Sun Microsystems'Micros Java platform. -

Garbage Collection for Java Distributed Objects

GARBAGE COLLECTION FOR JAVA DISTRIBUTED OBJECTS by Andrei A. Dãncus A Thesis Submitted to the Faculty of the WORCESTER POLYTECHNIC INSTITUTE in partial fulfillment of the requirements of the Degree of Master of Science in Computer Science by ____________________________ Andrei A. Dãncus Date: May 2nd, 2001 Approved: ___________________________________ Dr. David Finkel, Advisor ___________________________________ Dr. Mark L. Claypool, Reader ___________________________________ Dr. Micha Hofri, Head of Department Abstract We present a distributed garbage collection algorithm for Java distributed objects using the object model provided by the Java Support for Distributed Objects (JSDA) object model and using weak references in Java. The algorithm can also be used for any other Java based distributed object models that use the stub-skeleton paradigm. Furthermore, the solution could also be applied to any language that supports weak references as a mean of interaction with the local garbage collector. We also give a formal definition and a proof of correctness for the proposed algorithm. i Acknowledgements I would like to express my gratitude to my advisor Dr. David Finkel, for his encouragement and guidance over the last two years. I also want to thank Dr. Mark Claypool for being the reader of this thesis. Thanks to Radu Teodorescu, co-author of the initial JSDA project, for reviewing portions of the JSDA Parser. ii Table of Contents 1. Introduction……………………………………………………………………………1 2. Background and Related Work………………………………………………………3 2.1 Distributed -

Real-Time Java for Embedded Devices: the Javamen Project*

REAL-TIME JAVA FOR EMBEDDED DEVICES: THE JAVAMEN PROJECT* A. Borg, N. Audsley, A. Wellings The University of York, UK ABSTRACT: Hardware Java-specific processors have been shown to provide the performance benefits over their software counterparts that make Java a feasible environment for executing even the most computationally expensive systems. In most cases, the core of these processors is a simple stack machine on which stack operations and logic and arithmetic operations are carried out. More complex bytecodes are implemented either in microcode through a sequence of stack and memory operations or in Java and therefore through a set of bytecodes. This paper investigates the Figure 1: Three alternatives for executing Java code (take from (6)) state-of-the-art in Java processors and identifies two areas of improvement for specialising these processors timeliness as a key issue. The language therefore fails to for real-time applications. This is achieved through a allow more advanced temporal requirements of threads combination of the implementation of real-time Java to be expressed and virtual machine implementations components in hardware and by using application- may behave unpredictably in this context. For example, specific characteristics expressed at the Java level to whereas a basic thread priority can be specified, it is not drive a co-design strategy. An implementation of these required to be observed by a virtual machine and there propositions will provide a flexible Ravenscar- is no guarantee that the highest priority thread will compliant virtual machine that provides better preempt lower priority threads. In order to address this performance while still guaranteeing real-time shortcoming, two competing specifications have been requirements. -

WEP12 Writing TINE Servers in Java

Proceedings of PCaPAC2005, Hayama, Japan WRITING TINE SERVERS IN JAVA Philip Duval and Josef Wilgen Deutsches Elektronen Synchrotron /MST Abstract that the TINE protocol does not deal so much with ‘puts’ The TINE Control System [1] is used to some degree in and ‘gets’ as with data ‘links’. all accelerator facilities at DESY (Hamburg and Zeuthen) One’s first inclination when offering Java in the and plays a major role in HERA. It supports a wide Control System’s portfolio is to say that we don’t need to variety of platforms, which enables engineers and worry about a Java Server API since front-end servers machine physicists as well as professional programmers will always have to access their hardware and that is best to develop and integrate front-end server software into the left to code written in C. Furthermore, if there are real- control system using the operating system and platform of time requirements, Java would not be an acceptable their choice. User applications have largely been written platform owing to Java’s garbage collection kicking in at for Windows platforms (often in Visual Basic). In the next indeterminate intervals. generation of accelerators at DESY (PETRA III and Nevertheless, Java is a powerful language and offers VUV-FEL), it is planned to write the TINE user- numerous features and a wonderful framework for applications primarily in Java. Java control applications avoiding and catching nagging program errors. Thus have indeed enjoyed widespread acceptance within the there does in fact exist a strong desire to develop control controls community. The next step is then to offer Java as system servers using Java. -

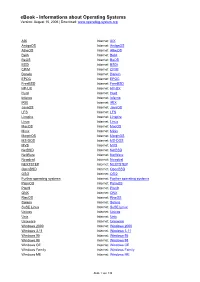

Ebook - Informations About Operating Systems Version: August 15, 2006 | Download

eBook - Informations about Operating Systems Version: August 15, 2006 | Download: www.operating-system.org AIX Internet: AIX AmigaOS Internet: AmigaOS AtheOS Internet: AtheOS BeIA Internet: BeIA BeOS Internet: BeOS BSDi Internet: BSDi CP/M Internet: CP/M Darwin Internet: Darwin EPOC Internet: EPOC FreeBSD Internet: FreeBSD HP-UX Internet: HP-UX Hurd Internet: Hurd Inferno Internet: Inferno IRIX Internet: IRIX JavaOS Internet: JavaOS LFS Internet: LFS Linspire Internet: Linspire Linux Internet: Linux MacOS Internet: MacOS Minix Internet: Minix MorphOS Internet: MorphOS MS-DOS Internet: MS-DOS MVS Internet: MVS NetBSD Internet: NetBSD NetWare Internet: NetWare Newdeal Internet: Newdeal NEXTSTEP Internet: NEXTSTEP OpenBSD Internet: OpenBSD OS/2 Internet: OS/2 Further operating systems Internet: Further operating systems PalmOS Internet: PalmOS Plan9 Internet: Plan9 QNX Internet: QNX RiscOS Internet: RiscOS Solaris Internet: Solaris SuSE Linux Internet: SuSE Linux Unicos Internet: Unicos Unix Internet: Unix Unixware Internet: Unixware Windows 2000 Internet: Windows 2000 Windows 3.11 Internet: Windows 3.11 Windows 95 Internet: Windows 95 Windows 98 Internet: Windows 98 Windows CE Internet: Windows CE Windows Family Internet: Windows Family Windows ME Internet: Windows ME Seite 1 von 138 eBook - Informations about Operating Systems Version: August 15, 2006 | Download: www.operating-system.org Windows NT 3.1 Internet: Windows NT 3.1 Windows NT 4.0 Internet: Windows NT 4.0 Windows Server 2003 Internet: Windows Server 2003 Windows Vista Internet: Windows Vista Windows XP Internet: Windows XP Apple - Company Internet: Apple - Company AT&T - Company Internet: AT&T - Company Be Inc. - Company Internet: Be Inc. - Company BSD Family Internet: BSD Family Cray Inc. -

Bypassing Portability Pitfalls of High-Level Low-Level Programming

Bypassing Portability Pitfalls of High-level Low-level Programming Yi Lin, Stephen M. Blackburn Australian National University [email protected], [email protected] Abstract memory-safety, encapsulation, and strong abstraction over hard- Program portability is an important software engineering consider- ware [12], which are desirable goals for system programming as ation. However, when high-level languages are extended to effec- well. Thus, high-level languages are potential candidates for sys- tively implement system projects for software engineering gain and tem programming. safety, portability is compromised—high-level code for low-level Prior research has focused on the feasibility and performance of programming cannot execute on a stock runtime, and, conversely, applying high-level languages to system programming [1, 7, 10, a runtime with special support implemented will not be portable 15, 16, 21, 22, 26–28]. The results showed that, with proper ex- across different platforms. tension and restriction, high-level languages are able to undertake We explore the portability pitfall of high-level low-level pro- the task of low-level programming, while preserving type-safety, gramming in the context of virtual machine implementation tasks. memory-safety, encapsulation and abstraction. Notwithstanding the Our approach is designing a restricted high-level language called cost for dynamic compilation and garbage collection, the perfor- RJava, with a flexible restriction model and effective low-level ex- mance of high-level languages when used to implement a virtual tensions, which is suitable for different scopes of virtual machine machine is still competitive with using a low-level language [2]. implementation, and also suitable for a low-level language bypass Using high-level languages to architect large systems is bene- for improved portability. -

Anpassung Eines Netzwerkprozessor-Referenzsystems Im Rahmen Des MAMS-Forschungsprojektes

Anpassung eines Netzwerkprozessor-Referenzsystems im Rahmen des MAMS-Forschungsprojektes Diplomarbeit an der Fachhochschule Hof Fachbereich Informatik / Technik Studiengang: Angewandte Informatik Vorgelegt bei von Prof. Dr. Dieter Bauer Stefan Weber Fachhochschule Hof Pacellistr. 23 Alfons-Goppel-Platz 1 95119 Naila 95028 Hof Matrikelnr. 00041503 Abgabgetermin: Freitag, den 28. September 2007 Hof, den 27. September 2007 Diplomarbeit - ii - Inhaltsverzeichnis Titelseite i Inhaltsverzeichnis ii Abbildungsverzeichnis v Tabellenverzeichnis vii Quellcodeverzeichnis viii Funktionsverzeichnis x Abkurzungen¨ xi Definitionen / Worterkl¨arungen xiii 1 Vorwort 1 2 Einleitung 3 2.1 Abstract ......................................... 3 2.2 Zielsetzung der Arbeit ................................. 3 2.3 Aufbau der Arbeit .................................... 4 2.4 MAMS .......................................... 4 2.4.1 Anwendungsszenario .............................. 4 2.4.2 Einführung ................................... 5 2.4.3 NGN - Next Generation Networks ....................... 5 2.4.4 Abstrakte Darstellung und Begriffe ...................... 7 3 Hauptteil 8 3.1 BorderGateway Hardware ............................... 8 3.1.1 Control-Blade - Control-PC .......................... 9 3.1.2 ConverGate-D Evaluation Board: Modell easy4271 ............. 10 3.1.3 ConverGate-D Netzwerkprozessor ....................... 12 3.1.4 Motorola POWER Chip (MPC) Modul ..................... 12 3.1.5 Test- und Arbeitsumgebung .......................... 13 3.2 Vorhandene -

Here I Led Subcommittee Reports Related to Data-Intensive Science and Post-Moore Computing) and in CRA’S Board of Directors Since 2015

Vivek Sarkar Curriculum Vitae Contents 1 Summary 2 2 Education 3 3 Professional Experience 3 3.1 2017-present: College of Computing, Georgia Institute of Technology . 3 3.2 2007-present: Department of Computer Science, Rice University . 5 3.3 1987-2007: International Business Machines Corporation . 7 4 Professional Awards 11 5 Research Awards 12 6 Industry Gifts 15 7 Graduate Student and Other Mentoring 17 8 Professional Service 19 8.1 Conference Committees . 20 8.2 Advisory/Award/Review/Steering Committees . 25 9 Keynote Talks, Invited Talks, Panels (Selected) 27 10 Teaching 33 11 Publications 37 11.1 Refereed Conference and Journal Publications . 37 11.2 Refereed Workshop Publications . 51 11.3 Books, Book Chapters, and Edited Volumes . 58 12 Patents 58 13 Software Artifacts (Selected) 59 14 Personal Information 60 Page 1 of 60 01/06/2020 1 Summary Over thirty years of sustained contributions to programming models, compilers and runtime systems for high performance computing, which include: 1) Leading the development of ASTI during 1991{1996, IBM's first product compiler component for optimizing locality, parallelism, and the (then) new FORTRAN 90 high-productivity array language (ASTI has continued to ship as part of IBM's XL Fortran product compilers since 1996, and was also used as the foundation for IBM's High Performance Fortran compiler product); 2) Leading the research and development of the open source Jikes Research Virtual Machine at IBM during 1998{2001, a first-of-a-kind Java Virtual Machine (JVM) and dynamic compiler implemented -

Embedded Java with GCJ

Embedded Java with GCJ http://0-delivery.acm.org.innopac.lib.ryerson.ca/10.1145/1140000/11341... Embedded Java with GCJ Gene Sally Abstract You don't always need a Java Virtual Machine to run Java in an embedded system. This article discusses how to use GCJ, part of the GCC compiler suite, in an embedded Linux project. Like all tools, GCJ has benefits, namely the ability to code in a high-level language like Java, and its share of drawbacks as well. The notion of getting GCJ running on an embedded target may be daunting at first, but you'll see that doing so requires less effort than you may think. After reading the article, you should be inspired to try this out on a target to see whether GCJ can fit into your next project. The Java language has all sorts of nifty features, like automatic garbage collection, an extensive, robust run-time library and expressive object-oriented constructs that help you quickly develop reliable code. Why Use GCJ in the First Place? The native code compiler for Java does what is says: compiles your Java source down to the machine code for the target. This means you won't have to get a JVM (Java Virtual Machine) on your target. When you run the program's code, it won't start a VM, it will simply load and run like any other program. This doesn't necessarily mean your code will run faster. Sometimes you get better performance numbers for byte code running on a hot-spot VM versus GCJ-compiled code. -

Research Purpose Operating Systems – a Wide Survey

GESJ: Computer Science and Telecommunications 2010|No.3(26) ISSN 1512-1232 RESEARCH PURPOSE OPERATING SYSTEMS – A WIDE SURVEY Pinaki Chakraborty School of Computer and Systems Sciences, Jawaharlal Nehru University, New Delhi – 110067, India. E-mail: [email protected] Abstract Operating systems constitute a class of vital software. A plethora of operating systems, of different types and developed by different manufacturers over the years, are available now. This paper concentrates on research purpose operating systems because many of them have high technological significance and they have been vividly documented in the research literature. Thirty-four academic and research purpose operating systems have been briefly reviewed in this paper. It was observed that the microkernel based architecture is being used widely to design research purpose operating systems. It was also noticed that object oriented operating systems are emerging as a promising option. Hence, the paper concludes by suggesting a study of the scope of microkernel based object oriented operating systems. Keywords: Operating system, research purpose operating system, object oriented operating system, microkernel 1. Introduction An operating system is a software that manages all the resources of a computer, both hardware and software, and provides an environment in which a user can execute programs in a convenient and efficient manner [1]. However, the principles and concepts used in the operating systems were not standardized in a day. In fact, operating systems have been evolving through the years [2]. There were no operating systems in the early computers. In those systems, every program required full hardware specification to execute correctly and perform each trivial task, and its own drivers for peripheral devices like card readers and line printers. -

A Post-Apocalyptic Sun.Misc.Unsafe World

A Post-Apocalyptic sun.misc.Unsafe World http://www.superbwallpapers.com/fantasy/post-apocalyptic-tower-bridge-london-26546/ Chris Engelbert Twitter: @noctarius2k Jatumba! 2014, 2015, 2016, … Disclaimer This talk is not going to be negative! Disclaimer But certain things are highly speculative and APIs or ideas might change by tomorrow! sun.misc.Scissors http://www.underwhelmedcomic.com/wp-content/uploads/2012/03/runningdude.jpg sun.misc.Unsafe - What you (don’t) know sun.misc.Unsafe - What you (don’t) know • Internal class (sun.misc Package) sun.misc.Unsafe - What you (don’t) know • Internal class (sun.misc Package) sun.misc.Unsafe - What you (don’t) know • Internal class (sun.misc Package) • Used inside the JVM / JRE sun.misc.Unsafe - What you (don’t) know • Internal class (sun.misc Package) • Used inside the JVM / JRE // Unsafe mechanics private static final sun.misc.Unsafe U; private static final long QBASE; private static final long QLOCK; private static final int ABASE; private static final int ASHIFT; static { try { U = sun.misc.Unsafe.getUnsafe(); Class<?> k = WorkQueue.class; Class<?> ak = ForkJoinTask[].class; example: QBASE = U.objectFieldOffset (k.getDeclaredField("base")); java.util.concurrent.ForkJoinPool QLOCK = U.objectFieldOffset (k.getDeclaredField("qlock")); ABASE = U.arrayBaseOffset(ak); int scale = U.arrayIndexScale(ak); if ((scale & (scale - 1)) != 0) throw new Error("data type scale not a power of two"); ASHIFT = 31 - Integer.numberOfLeadingZeros(scale); } catch (Exception e) { throw new Error(e); } } } sun.misc.Unsafe -

Free Java Developer Room

Room: AW1.121 Free Java Developer Room Saturday 2008-02-23 14:00-15:00 Welcome to the Free Java Meeting Welcome and introduction to the projects, people and themes that make Rich Sands – Mark Reinhold – Mark up the Free Java Meeting at Fosdem. ~ GNU Classpath ~ OpenJDK Wielaard – Tom Marble 15:00-16:00 Mobile Java Take your freedom to the max! Make your Free Java mobile. Christian Thalinger - Guillaume ~ CACAO Embedded ~ PhoneME ~ Midpath Legris - Ray Gans 16:00-16:40 Women in Java Technology Female programmers are rare. Female Java programmers are even more Clara Ko - Linda van der Pal rare. ~ Duchess, Ladies in Java Break 17:00-17:30 Hacking OpenJDK & Friends Hear about directions in hacking Free Java from the front lines. Roman Kennke - Andrew Hughes ~ OpenJDK ~ BrandWeg ~ IcePick 17:30-19:00 VM Rumble, Porting and Architectures Dalibor Topic - Robert Lougher - There are lots of runtimes able to execute your java byte code. But which Peter Kessler - Ian Rogers - one is the best, coolest, smartest, easiest portable or just simply the most fun? ~ Kaffe ~ JamVM ~ HotSpot ~ JikesRVM ~ CACAO ~ ikvm ~ Zero- Christian Thalinger - Jeroen Frijters assembler Port ~ Mika - Gary Benson - Chris Gray Sunday 2008-02-24 9:00-10:00 Distro Rumble So which GNU/Linux distribution integrates java packages best? Find out Petteri Raty - Tom Fitzsimmons - during this distro shootout! ~ Gentoo ~ Fedora ~ Debian ~ Ubuntu Matthias Klose 10:00-11:30 The Free Java Factory OpenJDK and IcedTea, how are they made and how do you test them? David Herron - Lillian Angel - Tom ~ OpenJDK ~ IcedTea Fitzsimmons 11:30-13:00 JIT Session: Discussion Topics Dynamically Loaded Want to hear about -- or talk about -- something the Free Java world and don't see a topic on the agenda? This time is reserved for late binding Tom Marble discussion.