Scalable Long Read Self-Correction and Assembly Polishing with Multiple Sequence Alignment

Total pages: 16

File type: PDF, size: 1020 KB

Recommended publications

Backtrack Parsing: Context-Free Grammar

Context-free Grammar and Backtrack Parsing. Martin Kay, Stanford University and University of the Saarland.

Slide 2, Problems with Regular Language: Is English a regular language? Bad question! We do not even know what English is!
• Two eggs and bacon make(s) a big breakfast
• Can you slide me the salt?
• He didn't ought to do that
But no!
• I put the wine you brought in the fridge
• I put the wine you brought for Sandy in the fridge
• Should we bring the wine you put in the fridge out now?
• You said you thought nobody had the right to claim that they were above the law

Slide 3, Problems with Regular Language: You said you thought nobody had the right to claim that they were above the law

Slide 4, Problems with Regular Language: [You said you thought [nobody had the right [to claim that [they were above the law]]]]

Slide 5, Problems with Regular Language: Is English morphology a regular language? Bad question! We do not even know what English morphology is!
• They sell collectables of all sorts
• This concerns unredecontaminatability
• This really is an untiable knot.
But probably! (Not sure about Swahili, though)

Slide 6, Context-free Grammar:
• Nonterminal symbols ~ grammatical categories
• Terminal symbols ~ words
• Productions ~ (unordered) (rewriting) rules
• Distinguished symbol
Not all that important:
• Terminals and nonterminals are disjoint
• Distinguished symbol

Adaptive LL(*) Parsing: the Power of Dynamic Analysis

Adaptive LL(*) Parsing: The Power of Dynamic Analysis. Terence Parr (University of San Francisco), Sam Harwell (University of Texas at Austin), Kathleen Fisher (Tufts University).

Abstract: Despite the advances made by modern parsing strategies such as PEG, LL(*), GLR, and GLL, parsing is not a solved problem. Existing approaches suffer from a number of weaknesses, including difficulties supporting side-effecting embedded actions, slow and/or unpredictable performance, and counter-intuitive matching strategies. This paper introduces the ALL(*) parsing strategy that combines the simplicity, efficiency, and predictability of conventional top-down LL(k) parsers with the power of a GLR-like mechanism to make parsing decisions. The critical innovation is to move grammar analysis to parse-time, which lets ALL(*) handle any non-left-recursive context-free grammar. ALL(*) is O(n^4) in theory but consistently performs linearly on grammars used in practice, outperforming [...]

[...] PEGs are unambiguous by definition but have a quirk where rule A → a | ab (meaning "A matches either a or ab") can never match ab since PEGs choose the first alternative that matches a prefix of the remaining input. Nested backtracking makes debugging PEGs difficult. Second, side-effecting programmer-supplied actions (mutators) like print statements should be avoided in any strategy that continuously speculates (PEG) or supports multiple interpretations of the input (GLL and GLR) because such actions may never really take place [17]. (Though DParser [24] supports "final" actions when the programmer is certain a reduction is part of an unambiguous final parse.) Without side effects, actions must buffer data for all interpretations in immutable data structures or provide undo actions.
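The PEG quirk mentioned in this excerpt can be illustrated with a few lines of Python. This is only a sketch of ordered choice, not code from the paper: the helper names `ordered_choice` and `parse_whole_input` are made up for the illustration, and alternatives are restricted to literal strings.

```python
def ordered_choice(alternatives, text, pos):
    """PEG-style choice: commit to the first alternative that matches at pos."""
    for alt in alternatives:
        if text.startswith(alt, pos):
            return pos + len(alt)  # commit; later alternatives are never tried
    return None  # no alternative matched


def parse_whole_input(alternatives, text):
    """Match rule A once, then require end of input (no backtracking into A)."""
    end = ordered_choice(alternatives, text, 0)
    return end is not None and end == len(text)


# Rule A <- a / ab: the first alternative wins, so the input "ab" is rejected.
print(parse_whole_input(["a", "ab"], "ab"))
# Reordering the alternatives (A <- ab / a) lets the same input succeed.
print(parse_whole_input(["ab", "a"], "ab"))
```

With the alternatives in the order a, ab, the choice consumes only the leading a and the trailing b is left unmatched, which is the behaviour the excerpt describes.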

Sequence Alignment/Map Format Specification

Sequence Alignment/Map Format Specification. The SAM/BAM Format Specification Working Group, 3 Jun 2021. The master version of this document can be found at https://github.com/samtools/hts-specs. This printing is version 53752fa from that repository, last modified on the date shown above.

1 The SAM Format Specification. SAM stands for Sequence Alignment/Map format. It is a TAB-delimited text format consisting of a header section, which is optional, and an alignment section. If present, the header must be prior to the alignments. Header lines start with '@', while alignment lines do not. Each alignment line has 11 mandatory fields for essential alignment information such as mapping position, and a variable number of optional fields for flexible or aligner-specific information. This specification is for version 1.6 of the SAM and BAM formats. Each SAM and BAM file may optionally specify the version being used via the @HD VN tag. For full version history see Appendix B. Unless explicitly specified elsewhere, all fields are encoded using 7-bit US-ASCII (charset ANSI X3.4-1968 as defined in RFC1345) in the POSIX / C locale. Regular expressions listed use the POSIX / IEEE Std 1003.1 extended syntax.

1.1 An example. Suppose we have the following alignment with bases in lowercase clipped from the alignment. Read r001/1 and r001/2 constitute a read pair; r003 is a chimeric read; r004 represents a split alignment.

    Coor     12345678901234  5678901234567890123456789012345
    ref      AGCATGTTAGATAA**GATAGCTGTGCTAGTAGGCAGTCAGCGCCAT
    +r001/1        TTAGATAAAGGATA*CTG
    +r002         aaaAGATAA*GGATA
    +r003       gcctaAGCTAA
    +r004                     ATAGCT..............TCAGC
    -r003                            ttagctTAGGC
    -r001/2                                        CAGCGGCAT

The corresponding SAM format is:
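To illustrate the line layout described in this excerpt (TAB-delimited, 11 mandatory fields, then any number of optional fields), here is a minimal Python sketch. The field names follow the SAM specification but do not appear in the excerpt itself; `SamRecord`, `parse_sam_line` and the example record are hypothetical, and the flag and mapping-quality values in the example are assumptions chosen for illustration.

```python
from typing import List, NamedTuple


class SamRecord(NamedTuple):
    """The 11 mandatory SAM fields plus any optional fields, kept as raw strings."""
    qname: str
    flag: int
    rname: str
    pos: int
    mapq: int
    cigar: str
    rnext: str
    pnext: int
    tlen: int
    seq: str
    qual: str
    optional: List[str]


def parse_sam_line(line: str) -> SamRecord:
    """Split one alignment line on TABs; header lines (starting with '@') are rejected."""
    if line.startswith("@"):
        raise ValueError("header line, not an alignment line")
    cols = line.rstrip("\n").split("\t")
    if len(cols) < 11:
        raise ValueError(f"expected at least 11 fields, got {len(cols)}")
    return SamRecord(
        cols[0], int(cols[1]), cols[2], int(cols[3]), int(cols[4]), cols[5],
        cols[6], int(cols[7]), int(cols[8]), cols[9], cols[10], cols[11:],
    )


# Illustrative record for read r001/1 (values are assumptions, not quoted from the excerpt).
example = "r001\t99\tref\t7\t30\t8M2I4M1D3M\t=\t37\t39\tTTAGATAAAGGATACTG\t*"
record = parse_sam_line(example)
print(record.qname, record.pos, record.cigar)
```

This sketch does no validation beyond the field count; a real reader would also check each field against the patterns the specification defines.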

Lexing and Parsing with ANTLR4

Lab 2: Lexing and Parsing with ANTLR4. Aurore Alcolei, Laure Gonnord, Valentin Lorentz. ENS de Lyon, Département Informatique, M1 CAP, Lab #2, Autumn 2017.

Objective
• Understand the software architecture of ANTLR4.
• Be able to write simple grammars and correct grammar issues in ANTLR4.

EXERCISE #1: Lab preparation. In the cap-labs directory, git pull will provide you with all the necessary files for this lab in TP02. You also have to install ANTLR4.

2.1 User install for ANTLR4 and ANTLR4 Python runtime

User installation steps:

    mkdir ~/lib
    cd ~/lib
    wget http://www.antlr.org/download/antlr-4.7-complete.jar
    pip3 install antlr4-python3-runtime --user

Then in your .bashrc:

    export CLASSPATH=".:$HOME/lib/antlr-4.7-complete.jar:$CLASSPATH"
    export ANTLR4="java -jar $HOME/lib/antlr-4.7-complete.jar"
    alias antlr4="java -jar $HOME/lib/antlr-4.7-complete.jar"
    alias grun='java org.antlr.v4.gui.TestRig'

Then source your .bashrc:

    source ~/.bashrc

2.2 Structure of a .g4 file and compilation

Links to a bit of ANTLR4 syntax:
• Lexical rules (extended regular expressions): https://github.com/antlr/antlr4/blob/4.7/doc/lexer-rules.md
• Parser rules (grammars): https://github.com/antlr/antlr4/blob/4.7/doc/parser-rules.md

The compilation of a given .g4 (for the Python back-end) is done by the following command line:

    java -jar ~/lib/antlr-4.7-complete.jar -Dlanguage=Python3 filename.g4

or, if you modified your .bashrc properly:

    antlr4 -Dlanguage=Python3 filename.g4

2.3 Simple examples with ANTLR4

EXERCISE #2: Demo files. Work your way through the five examples in the directory demo_files: ex1 with ANTLR4 + Java: a very simple lexical analysis for simple arithmetic expressions of the form x+3.

Compiler Design

COMPILER DESIGN - PARSER. http://www.tutorialspoint.com/compiler_design/compiler_design_parser.htm Copyright © tutorialspoint.com

In the previous chapter, we understood the basic concepts involved in parsing. In this chapter, we will learn the various types of parser construction methods available. Parsing can be defined as top-down or bottom-up based on how the parse-tree is constructed.

Top-Down Parsing. We have learnt in the last chapter that the top-down parsing technique parses the input, and starts constructing a parse tree from the root node gradually moving down to the leaf nodes. The types of top-down parsing are depicted below:

Recursive Descent Parsing. Recursive descent is a top-down parsing technique that constructs the parse tree from the top and the input is read from left to right. It uses procedures for every terminal and non-terminal entity. This parsing technique recursively parses the input to make a parse tree, which may or may not require back-tracking. But the grammar associated with it (if not left factored) cannot avoid back-tracking. A form of recursive-descent parsing that does not require any back-tracking is known as predictive parsing. This parsing technique is regarded as recursive because it uses a context-free grammar, which is recursive in nature.

Back-tracking. Top-down parsers start from the root node (start symbol) and match the input string against the production rules to replace them (if matched). To understand this, take the following example of CFG:

    S → rXd | rZd
    X → oa | ea
    Z → ai

For the input string read, a top-down parser will behave like this: it will start with S from the production rules and will match its yield to the left-most letter of the input, i.e.
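The back-tracking behaviour on this example grammar can be sketched in Python. This is a minimal illustration, not the tutorial's own code: the `GRAMMAR` table encodes the three productions from the excerpt, while `parse`, `parse_sequence` and `accepts` are hypothetical helper names. Generators are used so that, when one production fails further along, the parser falls back to the next alternative.

```python
# Productions from the excerpt: S -> rXd | rZd, X -> oa | ea, Z -> ai
GRAMMAR = {
    "S": [["r", "X", "d"], ["r", "Z", "d"]],
    "X": [["o", "a"], ["e", "a"]],
    "Z": [["a", "i"]],
}


def parse(symbol, text, pos):
    """Yield every end position at which `symbol` can match starting at pos."""
    if symbol not in GRAMMAR:  # terminal: compare against the input directly
        if text.startswith(symbol, pos):
            yield pos + len(symbol)
        return
    for production in GRAMMAR[symbol]:  # try alternatives in order (back-tracking)
        yield from parse_sequence(production, text, pos)


def parse_sequence(symbols, text, pos):
    """Yield every end position reachable by matching `symbols` in sequence."""
    if not symbols:
        yield pos
        return
    for mid in parse(symbols[0], text, pos):
        yield from parse_sequence(symbols[1:], text, mid)


def accepts(text):
    """True if the start symbol S derives the whole input."""
    return any(end == len(text) for end in parse("S", text, 0))


for word in ["read", "road", "raid", "rad"]:
    print(word, accepts(word))
```

For the input read, the first alternative for X (oa) fails on the second letter, so the parser backs up and tries ea, which then lets the final d match.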

7.2 Finite State Machines: Finite State Machines Are Another Way to Specify the Syntax of a Sentence in a Language

Chapter 7: Language Translation Principles (page 346).

Context Sensitivity of C++. It appears from Figure 7.8 that the C++ language is context-free. Every production rule has only a single nonterminal on the left side. This is in contrast to a context-sensitive grammar, which can have more than a single nonterminal on the left, as in Figure 7.3. Appearances are deceiving. Even though the grammar for this subset of C++, as well as the full standard C++ language, is context-free, the language itself has some context-sensitive aspects. Consider the grammar in Figure 7.3. How do its rules of production guarantee that the number of c's at the end of a string must equal the number of a's at the beginning of the string? Rules 1 and 2 guarantee that for each a generated, exactly one C will be generated. Rule 3 lets the C commute to the right of B. Finally, Rule 5 lets you substitute c for C in the context of having a b to the left of C. The language could not be specified by a context-free grammar because it needs Rules 3 and 5 to get the C's to the end of the string. There are context-sensitive aspects of the C++ language that Figure 7.8 does not specify. For example, the definition of <parameter-list> allows any number of formal parameters, and the definition of <argument-expression-list> allows any number of actual parameters.

2D Trie for Fast Parsing

2D Trie for Fast Parsing. Xian Qian, Qi Zhang, Xuanjing Huang, Lide Wu. Institute of Media Computing, School of Computer Science, Fudan University. {xianqian, qz, xjhuang, ldwu}@fudan.edu.cn

Abstract: In practical applications, decoding speed is very important. Modern structured learning technique adopts template based method to extract millions of features. Complicated templates bring about abundant features which lead to higher accuracy but more feature extraction time. We propose Two Dimensional Trie (2D Trie), a novel efficient feature indexing structure which takes advantage of relationship between templates: feature strings generated by a template are prefixes of the features from its extended templates. We apply our technique to Maximum Spanning Tree dependency parsing. Experimental results on Chinese Tree Bank corpus show that our 2D Trie is about 5 times faster than traditional Trie structure, making parsing speed 4.3 times faster.

[Figure 1: Flow chart of dependency parsing. Stages: feature generation (template p0.word+p0.pos, feature lucky/ADJ), feature retrieval (index 3228~3233), then build lattice, inference etc., producing the parse tree. p0.word, p0.pos denotes the word and POS tag of parent node respectively. Indexes correspond to the features conjoined with dependency types, e.g., lucky/ADJ/OBJ, lucky/ADJ/NMOD, etc.]

1 Introduction. In practical applications, decoding speed is very [...] feature extraction beside of a fast parsing algorithm is important for the parsing and training speed. He takes 3 measures for a 40X speedup, despite the same inference algorithm. One important measure is to store the feature vectors in file to skip feature extraction, otherwise it will be the bottleneck. Now we quickly review the feature extraction stage of structured learning.

The String Edit Distance Matching Problem with Moves

The String Edit Distance Matching Problem with Moves. Graham Cormode (AT&T Labs–Research) and S. Muthukrishnan (Rutgers University).

The edit distance between two strings S and R is defined to be the minimum number of character inserts, deletes and changes needed to convert R to S. Given a text string t of length n, and a pattern string p of length m, informally, the string edit distance matching problem is to compute the smallest edit distance between p and substrings of t. We relax the problem so that (a) we allow an additional operation, namely, substring moves, and (b) we allow approximation of this string edit distance. Our result is a near linear time deterministic algorithm to produce a factor of O(log n log* n) approximation to the string edit distance with moves. This is the first known significantly subquadratic algorithm for a string edit distance problem in which the distance involves nontrivial alignments. Our results are obtained by embedding strings into L1 vector space using a simplified parsing technique we call Edit Sensitive Parsing (ESP).

Categories and Subject Descriptors: F.2.0 [Analysis of Algorithms and Problem Complexity]: General. General Terms: Algorithms, Theory. Additional Key Words and Phrases: approximate pattern matching, data streams, edit distance, embedding, similarity search, string matching.

1. INTRODUCTION. String matching has a long history in computer science, dating back to the first compilers in the sixties and before. Text comparison now appears in all areas of the discipline, from compression and pattern matching to computational biology [...]

Author's address: G.
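The two definitions in this abstract (edit distance between two strings, and the smallest edit distance between a pattern and any substring of a text) can be made concrete with the classical quadratic-time dynamic program. The sketch below is that textbook method, not the paper's near-linear approximation and without the substring-move operation; the function names are hypothetical.

```python
def edit_distance(r, s):
    """Minimum number of character inserts, deletes and changes to convert r to s."""
    prev = list(range(len(s) + 1))  # distance from the empty prefix of r
    for i, rc in enumerate(r, start=1):
        curr = [i]
        for j, sc in enumerate(s, start=1):
            cost = 0 if rc == sc else 1
            curr.append(min(prev[j] + 1,          # delete rc
                            curr[j - 1] + 1,      # insert sc
                            prev[j - 1] + cost))  # change (or keep) the character
        prev = curr
    return prev[-1]


def best_substring_distance(p, t):
    """Smallest edit distance between pattern p and any substring of text t."""
    prev = [0] * (len(t) + 1)  # row of zeros: a match may start anywhere in t
    for i, pc in enumerate(p, start=1):
        curr = [i]
        for j, tc in enumerate(t, start=1):
            cost = 0 if pc == tc else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return min(prev)  # a match may also end anywhere in t


print(edit_distance("kitten", "sitting"))
print(best_substring_distance("abd", "xxabcxx"))
```

Both functions take time proportional to the product of the two string lengths, which is the quadratic cost the paper's result improves on for the relaxed problem.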

Chapter 3: Lexing and Parsing

Chapter 3: Lexing and Parsing. Aarne Ranta. Slides for the book "Implementing Programming Languages. An Introduction to Compilers and Interpreters", College Publications, 2012.

Lexing and Parsing*
Deeper understanding of the previous chapter.
Regular expressions and finite automata:
• the compilation procedure
• why automata may explode in size
• why parentheses cannot be matched by finite automata
Context-free grammars and parsing algorithms:
• LL and LR parsing
• why context-free grammars cannot alone specify languages
• why conflicts arise

The standard tools
The code generated by BNFC is processed by other tools:
• Lex (Alex for Haskell, JLex for Java, Flex for C)
• Yacc (Happy for Haskell, Cup for Java, Bison for C)
Lex and YACC are the original tools from the early 1970's. They are based on the theory of formal languages:
• Lex code is regular expressions, converted to finite automata.
• Yacc code is context-free grammars, converted to LALR(1) parsers.

The theory of formal languages
A formal language is, mathematically, just any set of sequences of symbols. Symbols are just elements from any finite set, such as the 128 7-bit ASCII characters. Programming languages are examples of formal languages. In the theory, usually simpler languages are studied. But the complexity of real languages is mostly due to repetitions of simple well-known patterns.

Regular languages
A regular language is, like any formal language, a set of strings, i.e. sequences of symbols, from a finite set of symbols called the alphabet. All regular languages can be defined by regular expressions in the following set:

    expression    language
    'a'           {a}
    A B           {ab | a ∈ [[A]], b ∈ [[B]]}
    A | B         [[A]] ∪ [[B]]
    A*            {a1 a2 ... an | ai ∈ [[A]], n ≥ 0}
    eps           {ε} (empty string)

[[A]] is the set corresponding to the expression A.

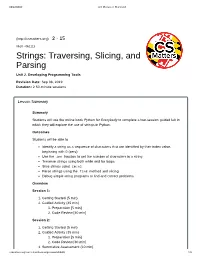

Strings: Traversing, Slicing, and Parsing Unit 2

CS Matters in Maryland (http://csmatters.org), lesson 2 - 15 (0b10 - 0b1111). Strings: Traversing, Slicing, and Parsing. Unit 2. Developing Programming Tools. Revision Date: Sep 08, 2019. Duration: 2 50-minute sessions.

Lesson Summary
Summary: Students will use the online book Python for Everybody to complete a two-session guided lab in which they will explore the use of strings in Python.
Outcomes: Students will be able to:
• Identify a string as a sequence of characters that are identified by their index value, beginning with 0 (zero)
• Use the len function to get the number of characters in a string
• Traverse strings using both while and for loops
• Slice strings using [m:n]
• Parse strings using the find method and slicing
• Debug simple string programs to find and correct problems

Overview
Session 1:
1. Getting Started (5 min)
2. Guided Activity (45 min): Preparation [5 min], Code Review [40 min]
Session 2:
1. Getting Started (5 min)
2. Guided Activity (35 min): Preparation [5 min], Code Review [30 min]
3. Summative Assessment (10 min)

Learning Objectives
CSP Objectives:
• EU AAP-1 - To find specific solutions to generalizable problems, programmers represent and organize data in multiple ways.
• LO AAP-1.C - Represent a list or string using a variable.
• EU AAP-2 - The way statements are sequenced and combined in a program determines the computed result. Programs incorporate iteration and selection constructs to represent repetition and make decisions to handle varied input values.

Tagging and Parsing a Large Corpus Research Report Svavar Kjarrval Lúthersson

Tagging and parsing a large corpus: Research report. Svavar Kjarrval Lúthersson, 2010. B.Sc. in Computer Science. Author: Svavar Kjarrval Lúthersson. National ID: 071183-2119. Instructor: Hrafn Loftsson. Moderator: Magnús Már Halldórsson. Tölvunarfræðideild, School of Computer Science.

0. Abstract

0.1 Abstract in English. This report is a product of a research where we tried to use existing language processing tools on a larger collection of Icelandic sentences than they had faced before. We hit many barriers on the way due to software errors, limitations in the software and due to the corpus we worked with. Unfortunately we had to resort to sidestep some of the problems with hacks but it resulted in a large collection of tagged and parsed sentences. We also managed to produce information regarding the frequency of words which could enhance the precision of current language processing tools.

0.2 Abstract in Icelandic (translated to English). This report is the product of research in which existing language technology tools were applied to a larger collection of Icelandic sentences than had been attempted before. We ran into various obstacles along the way because of software bugs, limitations in the software, and the corpus we worked with. Unfortunately we had to sidestep the problems with various workarounds, but as a result a large collection of tagged and parsed sentences is now available. We also collected information on word frequencies that could improve the accuracy of language technology tools.

Table of Contents: 0. Abstract; 0.1 Abstract in English; 0.2 Abstract in Icelandic; 1.

Technical Correspondence Techniques for Automatic Memoization with Applications to Context-Free Parsing

Technical Correspondence: Techniques for Automatic Memoization with Applications to Context-Free Parsing. Peter Norvig, University of California.

It is shown that a process similar to Earley's algorithm can be generated by a simple top-down backtracking parser, when augmented by automatic memoization. The memoized parser has the same complexity as Earley's algorithm, but parses constituents in a different order. Techniques for deriving memo functions are described, with a complete implementation in Common Lisp, and an outline of a macro-based approach for other languages.

1. Memoization. The term memoization was coined by Donald Michie (1968) to refer to the process by which a function is made to automatically remember the results of previous computations. The idea has become more popular in recent years with the rise of functional languages; Field and Harrison (1988) devote a whole chapter to it. The basic idea is just to keep a table of previously computed input/result pairs. In Common Lisp one could write:

    (defun memo (fn)
      "Return a memo-function of fn."
      (let ((table (make-hash-table)))
        #'(lambda (x)
            (multiple-value-bind (val found)
                (gethash x table)
              (if found
                  val
                  (setf (gethash x table) (funcall fn x)))))))

(For those familiar with Lisp but not Common Lisp, gethash returns two values, the stored entry in the table, and a boolean flag indicating if there is in fact an entry. The special form multiple-value-bind binds these two values to the symbols val and found. The special form setf is used here to update the table entry for x.) In this simple implementation fn is required to take one argument and return one value, and arguments that are eql produce the same value.
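For readers more at home in Python, the same table-of-results idea can be sketched as follows. This is a rough port for illustration, not part of the quoted paper: `slow_square`, `fast_square` and `calls` are hypothetical names, and a Python dict compares keys by hash and equality rather than by Lisp's eql.

```python
def memo(fn):
    """Return a memo-function of fn (fn takes one hashable argument)."""
    table = {}  # previously computed input/result pairs

    def memoized(x):
        if x not in table:  # plays the role of the `found` flag from gethash
            table[x] = fn(x)
        return table[x]

    return memoized


calls = []  # records every time the underlying function really runs


def slow_square(x):
    calls.append(x)
    return x * x


fast_square = memo(slow_square)
print(fast_square(4), fast_square(4), calls)
```

The second call with the same argument is answered from the table, so `slow_square` runs only once per distinct input.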