LOW COMPLEXITY H.264 to VC-1 TRANSCODER by VIDHYA

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Fast Computational Structures for an Efficient Implementation of the Complete TDAC Analysis/Synthesis MDCT/MDST Filter Banks

Signal Processing (article in press), journal homepage: www.elsevier.com/locate/sigpro. Fast computational structures for an efficient implementation of the complete TDAC analysis/synthesis MDCT/MDST filter banks. Vladimir Britanak (Institute of Informatics, Slovak Academy of Sciences, Dubravska cesta 9, 845 07 Bratislava, Slovak Republic), Huibert J. Lincklaen Arriëns (Delft University of Technology, Department of Electrical Engineering, Mathematics and Computer Science, Mekelweg 4, 2628 CD Delft, The Netherlands). Article history: Received 27 May 2008; received in revised form 8 January 2009; accepted 10 January 2009. Keywords: Modified discrete cosine transform; Modified discrete sine transform; Modulated. Abstract: A new fast computational structure identical both for the forward and backward modified discrete cosine/sine transform (MDCT/MDST) computation is described. It is the result of a systematic construction of a fast algorithm for an efficient implementation of the complete time domain aliasing cancelation (TDAC) analysis/synthesis MDCT/MDST filter banks. It is shown that the same computational structure can be used both for the encoder and the decoder, thus significantly reducing design time and resources. The corresponding generalized signal flow graph is regular and defines new sparse matrix factorizations of the discrete cosine transform of type IV (DCT-IV) and MDCT/MDST matrices. The identical fast MDCT computational structure provides an efficient -

Video Coding Standards

Module 8: Video Coding Standards. Lesson 23: MPEG-1 standards. Version 2 ECE IIT, Kharagpur. Lesson objectives: At the end of this lesson, the students should be able to: 1. List the major video coding standards. 2. State the basic objectives of the MPEG-1 standard. 3. List the set of constrained parameters in MPEG-1. 4. Define the I-, P- and B-pictures. 5. Present the hierarchical data structure of MPEG-1. 6. Define the macroblock modes supported by MPEG-1. 23.0 Introduction: In lesson 21 and lesson 22, we studied how to perform motion estimation and thereby temporally predict the video frames to exploit the significant temporal redundancies present in the video sequence. The error in temporal prediction is encoded by standard transform domain techniques like the DCT, followed by quantization and entropy coding to exploit the spatial and statistical redundancies and achieve significant video compression. The video codecs therefore follow a hybrid coding structure in which DPCM is adopted in the temporal domain and the DCT or other transform domain techniques in the spatial domain. Efforts to standardize video data exchange via storage media or via communication networks have been actively in progress since the early 1980s. A number of international video and audio standardization activities started within the International Telegraph and Telephone Consultative Committee (CCITT), followed by the International Radio Consultative Committee (CCIR), and the International Organization for Standardization / International Electrotechnical Commission (ISO/IEC). An experts group, known as the Moving Picture Experts Group (MPEG), was established in 1988 in the framework of the Joint ISO/IEC Technical Committee with the objective of developing standards for the coded representation of moving pictures, associated audio, and their combination for storage and retrieval of digital media. -

Perceptual Audio Coding

Perceptual Audio Coding. Henrique Malvar, Managing Director, Redmond Lab. UW Lecture, December 6, 2007.

Contents • Motivation • “Source coding”: good for speech • “Sink coding”: Auditory Masking • Block & Lapped Transforms • Audio compression • Examples

Many applications need digital audio • Communication: Digital TV, Telephony (VoIP) & teleconferencing; voice mail, voice annotations on e-mail, voice recording • Business: Internet call centers; multimedia presentations • Entertainment: 150 songs on standard CD; thousands of songs on portable music players; Internet / Satellite radio, HD Radio; games, DVD movies

Linear Predictive Coding (LPC): $x(n) = e(n) + \sum_{r=1}^{N} a_r\, x(n-r)$ [Diagram labels: periodic excitation, pitch period, noise excitation, gains, Combine, e(n), LPC coefficients, Synthesis Filter, x(n), synthesized speech]

LPC basics, analysis/synthesis: $e(n) = x(n) - \sum_{r=1}^{N} a_r\, x(n-r)$ [Diagram labels: original speech, Analysis algorithm, synthesis parameters, residual waveform, Synthesis Filter, synthesized speech]

LPC variant, CELP: [Diagram labels: original speech, Encoder, selection index, gain, LPC coefficients, excitation codebook, Synthesis Filter, Decoder, synthesized speech]

LPC variant, multipulse: LPC coefficients, excitation, Synthesis -
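The LPC analysis/synthesis pair on these slides can be illustrated with a minimal sketch. It assumes fixed, already-known predictor coefficients and zero signal history before the first sample; the function names `lpc_residual` and `lpc_synthesize` are hypothetical and do not come from the lecture. The analysis step subtracts a weighted sum of the previous N samples from each sample to get the residual e(n), and the synthesis filter adds the same prediction back to recover x(n).

```python
def lpc_residual(x, a):
    """Analysis: e(n) = x(n) - sum over r = 1..N of a[r-1] * x(n-r).

    Samples before the start of the signal are treated as zero (assumption).
    """
    e = []
    for n in range(len(x)):
        pred = sum(a[r - 1] * x[n - r]
                   for r in range(1, len(a) + 1) if n - r >= 0)
        e.append(x[n] - pred)
    return e


def lpc_synthesize(e, a):
    """Synthesis: x(n) = e(n) + sum over r = 1..N of a[r-1] * x(n-r).

    The prediction uses already reconstructed samples, so the filter is recursive.
    """
    x = []
    for n in range(len(e)):
        pred = sum(a[r - 1] * x[n - r]
                   for r in range(1, len(a) + 1) if n - r >= 0)
        x.append(e[n] + pred)
    return x


# Illustrative values only: a short signal and two made-up coefficients.
signal = [1.0, 0.5, -0.25, 0.75, 0.0]
coeffs = [0.5, -0.25]
residual = lpc_residual(signal, coeffs)
rebuilt = lpc_synthesize(residual, coeffs)
```

With an unquantized residual the synthesis filter reproduces the input up to floating-point rounding; a real coder would estimate the coefficients per frame and transmit a compact description of the excitation instead of the full residual.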

Using Daala Intra Frames for Still Picture Coding

Using Daala Intra Frames for Still Picture Coding. Nathan E. Egge, Jean-Marc Valin, Timothy B. Terriberry, Thomas Daede, Christopher Montgomery. Mozilla, Mountain View, CA, USA. Abstract: Recent advances in video codec technology have been successfully applied to the field of still picture coding. Daala is a new royalty-free video codec under active development that contains several novel coding technologies. We consider using Daala intra frames for still picture coding and show that it is competitive with other video-derived image formats. A finished version of Daala could be used to create an excellent, royalty-free image format. I. INTRODUCTION: The Daala video codec is a joint research effort between Xiph.Org and Mozilla to develop a next-generation video codec that is both (1) competitive performance with the state-of-the-art and (2) distributable on a royalty free basis. In working to produce a royalty free video codec, Daala introduces new coding techniques to replace those used in the traditional block-based video codec pipeline. These techniques, while not completely finished, already demonstrate that it is possible to deliver a video codec that meets these goals. All video codecs have, in some form, the ability to code a still frame without prior information. [...] techniques. Unsignaled horizontal and vertical prediction (see Section II-D), and low complexity prediction of chroma coefficients from their spatially coincident luma coefficients (see Section II-E) are two examples. Fig. 1. State of blocks within the decode pipeline of a codec using lapped transforms. Immediate neighbors of the target block (bold lines) cannot be used for spatial prediction as they still require post-filtering (dotted lines). -

AVC to the Max: How to Configure Encoder

Contents: Company overview; Introduction; What is AVC; Making sense of profiles, levels, and bitrate; Group of pictures and its structure; Macroblocks: partitioning and prediction modes; Eliminating spatial redundancy; Eliminating temporal redundancy; Adaptive quantization; Deblocking filtering; Entropy encoding; Conclusion; Contact details. Company overview: Elecard company, founded in 1988, is a leading provider of software products for encoding, decoding, processing, monitoring and analysis of video and audio data in various formats. Elecard is a vendor of professional software products and software development kits (SDKs); products for in-depth high-quality analysis and monitoring of the media content; solutions for IPTV and OTT projects, digital TV broadcasting and video streaming; transcoding servers. Elecard is based in the United States, Russia, and China with headquarters located in Tomsk, Russia. Elecard products are highly appreciated and widely used by the leaders of IT industry such as Intel, Cisco, Netflix, Huawei, Blackmagic Design, etc. (Infographic figures: 9700 companies, 150 countries, 20M users.) For more information, please visit www.elecard.com. Introduction: Video compression is the key step in video processing. Compression allows broadcasters and premium TV providers to deliver their content to their audience. Many video compression standards currently exist in TV broadcasting. Each standard has different properties, some of which are better suited to traditional live TV while others are more suited to video on demand (VoD).
Two basic standards can be identified in the history of video compression: • MPEG-2, a legacy codec used for SD video and early digital broadcasting. -

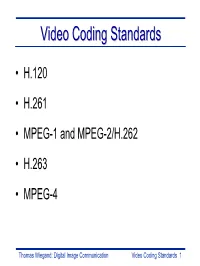

Video Coding Standards

Video Coding Standards (Thomas Wiegand: Digital Image Communication)

Video Coding Standards • H.120 • H.261 • MPEG-1 and MPEG-2/H.262 • H.263 • MPEG-4

[Figure: Video Coding Standards. Labels: MPEG-2, digital TV, 2-6 Mbps; ITU-R 601, 166 Mbit/s; H.261, ISDN, 64 kbps, picture phone; H.263, PSTN, < 28.8 kbps, picture phone]

H.120: The First Digital Video Coding Standard • ITU-T (ex-CCITT) Rec. H.120: The first digital video coding standard (1984) • v1 (1984) had conditional replenishment, DPCM, scalar quantization, variable-length coding, switch for quincunx sampling • v2 (1988) added motion compensation and background prediction • Operated at 1544 (NTSC) and 2048 (PAL) kbps • Few units made, essentially not in use today

H.261: The Basis of Modern Video Compression • ITU-T (ex-CCITT) Rec. H.261: The first widespread practical success • First design (late ’80s) embodying typical structure that dominates today: 16x16 macroblock motion compensation, 8x8 DCT, scalar quantization, and variable-length coding • Other key aspects: loop filter, integer-pel motion compensation accuracy, 2-D VLC for coefficients • Operated at 64-2048 kbps • Still in use, although mostly as a backward-compatibility feature, overtaken by H.263

H.261&3 Macroblock -

Lapped Transforms in Perceptual Coding of Wideband Audio

Lapped Transforms in Perceptual Coding of Wideband Audio. Sien Ruan, Department of Electrical & Computer Engineering, McGill University, Montreal, Canada. December 2004. A thesis submitted to McGill University in partial fulfillment of the requirements for the degree of Master of Engineering. © 2004 Sien Ruan. To my beloved parents. Abstract: Audio coding paradigms depend on time-frequency transformations to remove statistical redundancy in audio signals and reduce data bit rate, while maintaining high fidelity of the reconstructed signal. Sophisticated perceptual audio coding further exploits perceptual redundancy in audio signals by incorporating perceptual masking phenomena. This thesis focuses on the investigation of different coding transformations that can be used to compute perceptual distortion measures effectively; among them is the lapped transform, which is the most widely used in today's audio coders. Moreover, an innovative lapped transform is developed that can vary its overlap percentage to arbitrary degrees. The new lapped transform is applicable to transient audio because it captures the time-varying characteristics of the signal. Sommaire (translated from the French): Audio coding paradigms depend on time-frequency transformations to remove statistical redundancy in audio signals and to reduce the data transmission rate, while maintaining high fidelity of the reconstructed signal. Sophisticated perceptual audio coding further exploits the perceptual redundancy in audio signals by incorporating perceptual masking phenomena. This thesis concentrates on the investigation of the different coding transformations that can be employed to compute perceptual distortion measures efficiently, among them the lapped transform, which is the most widely used in today's audio coders. -

Motion Compensation on DCT Domain

EURASIP Journal on Applied Signal Processing 2001:3, 147–162 © 2001 Hindawi Publishing Corporation. Motion Compensation on DCT Domain. Ut-Va Koc, Lucent Technologies Bell Labs, 600 Mountain Avenue, Murray Hill, NJ 07974, USA. Email: [email protected] K. J. Ray Liu, Department of Electrical and Computer Engineering and Institute for Systems Research, University of Maryland, College Park, MD 20742, USA. Email: [email protected] Received 21 May 2001 and in revised form 21 September 2001. Alternative fully DCT-based video codec architectures have been proposed in the past to address the shortcomings of the conventional hybrid motion compensated DCT video codec structures traditionally chosen as the basis of implementation of standard-compliant codecs. However, no prior effort has been made to ensure interoperability of these two drastically different architectures so that fully DCT-based video codecs are fully compatible with the existing video coding standards. In this paper, we establish the criteria for matching conventional codecs with fully DCT-based codecs. We find that the key to this interoperability lies in the heart of the implementation of motion compensation modules performed in the spatial and transform domains at both the encoder and the decoder. Specifically, if the spatial-domain motion compensation is compatible with the transform-domain motion compensation, then the states in both the coder and the decoder will keep track of each other even after a long series of P-frames. Otherwise, the states will diverge in proportion to the number of P-frames between two I-frames. This sets an important criterion for the development of any DCT-based motion compensation schemes. -

Daala: a Perceptually-Driven Still Picture Codec

DAALA: A PERCEPTUALLY-DRIVEN STILL PICTURE CODEC. Jean-Marc Valin, Nathan E. Egge, Thomas Daede, Timothy B. Terriberry, Christopher Montgomery. Mozilla, Mountain View, CA, USA; Xiph.Org Foundation. ABSTRACT: Daala is a new royalty-free video codec based on perceptually-driven coding techniques. We explore using its keyframe format for still picture coding and show how it has improved over the past year. We believe the technology used in Daala could be the basis of an excellent, royalty-free image format. 1. INTRODUCTION: Daala is a royalty-free video codec designed to avoid traditional patent-encumbered techniques used in most current video codecs. In this paper, we propose to use Daala’s keyframe format for still picture coding. In June 2015, Daala was compared to other still picture codecs at the 2015 Picture Coding Symposium (PCS) [1,2]. Since then many improvements were made to the bitstream to improve its quality. These include reduced overlap in the lapped transform, finer [...] and vertical directions [1]. Also, DC coefficients are combined recursively using a Haar transform, up to the level of 64x64 superblocks. Multi-Symbol Entropy Coder: Most recent video codecs encode information using binary arithmetic coding, meaning that each symbol can only take two values. The Daala range coder supports up to 16 values per symbol, making it possible to encode fewer symbols [6]. This is equivalent to coding up to four binary values in parallel and reduces serial dependencies. Perceptual Vector Quantization: Rather than use scalar quantization like the vast majority of picture and video codecs, Daala is based on perceptual vector quantization (PVQ) [7]. -
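The remark that a 16-value symbol is equivalent to four binary values can be made concrete with a small counting sketch. This is not Daala's range coder and performs no entropy coding; it only shows, under that simplification, that four binary decisions map one-to-one onto a single symbol in 0..15, so a coder with a 16-ary alphabet handles a quarter as many symbols. The names `pack_bits` and `unpack_symbols` are hypothetical.

```python
def pack_bits(bits):
    """Group binary decisions four at a time into symbols in the range 0..15."""
    if len(bits) % 4 != 0:
        raise ValueError("number of bits must be a multiple of 4")
    symbols = []
    for i in range(0, len(bits), 4):
        b3, b2, b1, b0 = bits[i:i + 4]
        # The first bit of each group becomes the most significant bit.
        symbols.append((b3 << 3) | (b2 << 2) | (b1 << 1) | b0)
    return symbols


def unpack_symbols(symbols):
    """Invert pack_bits: expand each 16-ary symbol back into four bits."""
    bits = []
    for s in symbols:
        bits.extend([(s >> 3) & 1, (s >> 2) & 1, (s >> 1) & 1, s & 1])
    return bits


decisions = [1, 0, 1, 1, 0, 0, 0, 1]
packed = pack_bits(decisions)      # eight binary decisions become two symbols
restored = unpack_symbols(packed)
```

Fewer symbols means fewer sequential coder updates, which is the serial-dependency reduction the excerpt refers to.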

White Paper Version of July 2015

White paper Digital Video File Recommendation Version of July 2015 edited by

1 DIGITAL VIDEO FILE RECOMMENDATIONS
1.1 Preamble
1.2 Scope
1.3 File Formats
1.4 Codecs
1.4.1 Browsing
1.4.2 Acquisition
1.4.3 Programme Contribution
1.4.4 Postproduction
1.4.5 Broadcast
1.4.6 News & Magazines & Sports
1.4.7 High Definition
1.5 General Requirements -

Wiener Filter-Based Error Resilient Time Domain Lapped Transform

IEEE TRANSACTIONS ON IMAGE PROCESSING, SUBMITTED JAN. 2006. Wiener Filter-based Error Resilient Time Domain Lapped Transform. Jie Liang, Member, IEEE, Chengjie Tu, Member, IEEE, Lu Gan, Member, IEEE, Trac D. Tran, Member, IEEE, and Kai-Kuang Ma, Senior Member, IEEE. Abstract: In this paper, the design of the error resilient time-domain lapped transform is formulated as a linear minimal mean squared error (LMMSE) problem. The optimal Wiener solution and several simplifications with different tradeoffs between complexity and performance are developed. We also prove the persymmetric structure of these Wiener filters. The existing mean reconstruction method is proven to be a special case of the proposed framework. Our method also includes as a special case the linear interpolation method used in DCT-based systems when there is no pre/postfiltering and when the quantization noise is ignored. The design criteria in our previous results are scrutinized and improved solutions are obtained. Various design examples and multiple description image coding experiments are reported to demonstrate the performance of the proposed [...] DCT and reducing the blocking artifact associated with DCT-based schemes. On the other hand, the postfilter can also be designed to spread out the information of a block to its neighboring blocks. This is helpful when we need to recover a lost block during image transmission. In [10], various techniques were proposed to estimate the lost data when the lapped orthogonal transform (LOT) was used. A mean reconstruction method and a nonlinear sharpening method were found to be quite effective, under the assumption that DC coefficients were intact. In particular, in the mean reconstruction method, each lost block was estimated by averaging its available neighboring blocks. -
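The mean reconstruction idea mentioned at the end of this excerpt (estimate a lost block by averaging its available neighbors) can be sketched as follows. The details are assumptions made for illustration and are not taken from the paper or from [10]: blocks are square lists of sample values, only the four directly adjacent blocks are used, lost blocks are marked with `None`, and the function name `estimate_lost_block` is hypothetical.

```python
def estimate_lost_block(grid, r, c):
    """Estimate the block at grid[r][c] as the element-wise mean of its
    available up/down/left/right neighbors (blocks that are not None)."""
    neighbors = []
    for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        rr, cc = r + dr, c + dc
        inside = 0 <= rr < len(grid) and 0 <= cc < len(grid[0])
        if inside and grid[rr][cc] is not None:
            neighbors.append(grid[rr][cc])
    if not neighbors:
        raise ValueError("no available neighboring blocks")
    size = len(neighbors[0])  # blocks are assumed square and equally sized
    return [[sum(b[i][j] for b in neighbors) / len(neighbors)
             for j in range(size)]
            for i in range(size)]


def flat_block(value, size=2):
    """Helper for the example: a size x size block filled with one value."""
    return [[value] * size for _ in range(size)]


# A 2x2 grid of blocks in which the top-right block was lost in transmission.
blocks = [[flat_block(10.0), None],
          [flat_block(50.0), flat_block(30.0)]]
estimate = estimate_lost_block(blocks, 0, 1)
```

Here the lost block has two available neighbors (left and below), so the estimate is their average; the diagonal block is ignored under the four-neighbor assumption.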

Nonlinearly-Adapted Lapped Transforms for Intra-Frame Coding

NONLINEARLY-ADAPTED LAPPED TRANSFORMS FOR INTRA-FRAME CODING. Dan Lelescu, DoCoMo Communications Labs USA, 181 Metro Drive, San Jose, CA 95110. Email: [email protected] ABSTRACT: The use of block transforms for coding intra-frames in video coding may preclude higher coding performance due to residual correlation across block boundaries and insufficient energy compaction, which translates into unrealized rate-distortion gains. Subjectively, the occurrence of blocking artifacts is common. Post-filters and lapped transforms offer good solutions to these problems. Lapped transforms offer a more general framework which can incorporate coordinated pre- and post-filtering operations. Most common are fixed lapped transforms (such as lapped orthogonal transforms), and also transforms with adaptive basis function length. In contrast, in this paper we determine a lapped transform that non-linearly adapts its [...] Lapped transforms can be implemented (e.g., [6]) as block boundary pre- and post-filtering outside a block transform codec. From an implementation point of view, the lapped transform can be added to a block transform codec without changing the existing block transform of the codec. The gains that a lapped transform brings compared to a block transform are diminished by the use of other complementary methods such as intra-prediction and adaptive post-filters. For increased performance, it is useful to design a signal-adapted rather than a fixed lapped transform, and evaluate the transform when incorporated in a state-of-art codec that uses techniques such as intra-prediction and post-filtering. We present in this paper the determination and use of an adaptive lapped transform for coding intra-frames in a video codec.