Introduction

Recommended publications

Digital Dialectics: The Paradox of Cinema in a Studio Without Walls

Scott McQuire, ‘Digital dialectics: the paradox of cinema in a studio without walls’, Historical Journal of Film, Radio and Television, vol. 19, no. 3 (1999), pp. 379–397.

This is an electronic, pre-publication version of an article published in Historical Journal of Film, Radio and Television. Historical Journal of Film, Radio and Television is available online at http://www.informaworld.com/smpp/title~content=g713423963~db=all.

Digital dialectics: the paradox of cinema in a studio without walls
Scott McQuire

There’s a scene in Forrest Gump (Robert Zemeckis, Paramount Pictures; USA, 1994) which encapsulates the novel potential of the digital threshold. The scene itself is nothing spectacular. It involves neither exploding spaceships, marauding dinosaurs, nor even the apocalyptic destruction of a postmodern cityscape. Rather, it depends entirely on what has been made invisible within the image. The scene, in which actor Gary Sinise is shown in hospital after having his legs blown off in battle, is noteworthy partly because of the way that director Robert Zemeckis handles it. Sinise has been clearly established as a full-bodied character in earlier scenes. When we first see him in hospital, he is seated on a bed with the stumps of his legs resting at its edge. The assumption made by most spectators, whether consciously or unconsciously, is that the shot is tricked up; that Sinise’s legs are hidden beneath the bed, concealed by a hole cut through the mattress. This would follow a long line of film practice in faking amputations, inaugurated by the famous stop-motion beheading in the Edison Company’s Death of Mary Queen of Scots (aka The Execution of Mary Stuart, Thomas A. -

Runaway Film Production: A Critical History of Hollywood’s Outsourcing Discourse

RUNAWAY FILM PRODUCTION: A CRITICAL HISTORY OF HOLLYWOOD’S OUTSOURCING DISCOURSE
BY CAMILLE K. YALE

DISSERTATION
Submitted in partial fulfillment of the requirements for the degree of Doctor of Philosophy in Communications in the Graduate College of the University of Illinois at Urbana-Champaign, 2010
Urbana, Illinois

Doctoral Committee:
Professor John C. Nerone, Chair and Director of Research
Professor James W. Hay
Professor Steven G. Jones, University of Illinois at Chicago
Professor Cameron R. McCarthy

ABSTRACT
Runaway production is a phrase commonly used by Hollywood film and television production labor to describe the outsourcing of production work to foreign locations. It is an issue that has been credited with siphoning tens of millions of dollars and thousands of jobs from the U.S. economy. Despite broad interest in runaway production by journalists, politicians, academics, and media labor interests, and despite its potential impact on hundreds of thousands, and perhaps millions, of workers in the U.S., there has been very little critical analysis of its historical development and function as a political and economic discourse. Through extensive archival research, this dissertation critically examines the history of runaway production, from its introduction in postwar Hollywood to its present use in describing the development of highly competitive television and film production industries in Canada. From a political economic perspective, I argue that the history of runaway production demonstrates how Hollywood’s multinational media corporations have leveraged production work to cultivate goodwill and industry-friendly trade policies across global media markets. -

Looking Back at the Creative Process

IATSE LOCAL 839 MAGAZINE, SPRING 2020, ISSUE NO. 9
THE ANIMATION GUILD QUARTERLY
SCOOBY-DOO / TESTING PRACTICES / LOOKING BACK AT THE CREATIVE PROCESS

ISSUE 09 CONTENTS
FROM THE PRESIDENT
EDITOR’S NOTE
ART & CRAFT: Tiffany Ford’s color blocks
FRAME X FRAME: Kickstarting a personal project
AFTER HOURS: Introducing The Blanketeers
THE LOCAL: MPI primer, Staff spotlight
TRIBUTE: Honoring those who have passed
CALENDAR
FINAL NOTE: Remembering Disney, the man

FEATURES
EXPANDING THE FIBER UNIVERSE: In Trolls World Tour, Poppy and her crew leave their felted homes to meet troll tribes from different regions of the kingdom in an effort to thwart Queen Barb and King Thrash from destroying all the other styles of music. Hitting the road gave the filmmakers an opportunity to invent worlds from the perspective of new fabrics and fibers.
HIRING HUMANELY: Supervisors and directors in the LA animation industry discuss hiring practices, testing, and the realities of trying to staff a show ethically.
ZOINKS! SCOOBY-DOO TURNS 50: The original series has been followed by more than a dozen rebooted series and movies, and through it all, artists and animators made sure that “those meddling kids” and a cowardly canine continued to unmask villains. -

Shaun the Sheep's Creator

PRODUCTION NOTES

STUDIOCANAL RELEASE DATES: UK – OCTOBER 18th 2019

SOCIAL MEDIA:
Facebook - https://www.facebook.com/shaunthesheep
Twitter - https://twitter.com/shaunthesheep
Instagram - https://www.instagram.com/shaunthesheep/
YouTube - https://www.youtube.com/user/aardmanshaunthesheep

For further information please contact STUDIOCANAL: UK ENQUIRIES [email protected] [email protected]

Synopsis
Strange lights over the quiet town of Mossingham herald the arrival of a mystery visitor from far across the galaxy… For Shaun the Sheep’s second feature-length movie, the follow-up to 2015’s smash hit SHAUN THE SHEEP MOVIE, A SHAUN THE SHEEP MOVIE: FARMAGEDDON takes the world’s favourite woolly hero and plunges him into an hilarious intergalactic adventure he will need to use all of his cheekiness and heart to work his way out of. When a visitor from beyond the stars – an impish and adorable alien called LU-LA – crash-lands near Mossy Bottom Farm, Shaun soon sees an opportunity for alien-powered fun and adventure, and sets off on a mission to shepherd LU-LA back to her home. Her magical alien powers, irrepressible mischief and galactic-sized burps soon have the flock enchanted and Shaun takes his new extra-terrestrial friend on a road-trip to Mossingham Forest to find her lost spaceship. Little do the pair know, though, that they are being pursued at every turn by a mysterious alien-hunting government agency, spearheaded by the formidable Agent Red and her bunch of hapless, hazmat-suited goons. With Agent Red driven by a deep-seated drive to prove the existence of aliens and Bitzer unwittingly dragged into the haphazard chase, can Shaun and the flock avert Farmageddon on Mossy Bottom Farm before it’s too late?

Star Power
The creative team behind the world’s favourite woolly wonder explain how, in Farmageddon, they’ve boldly gone where no sheep has gone before. -

Computer Animation CPSC

Computer Animation INF2050
Institutt for informatikk, INF2050, January 29, 2008, Alma Leora Culén

About the course
What this course is not:
– a math course
– an art course
It is:
– an introduction to Max 3D
– an attempt to explain the principles of computer animation and what lies behind them

Introduction
Animate = “to give life to”
Specify, directly or indirectly, how a ‘thing’ moves in time and space
Computer animation includes all aspects of moving images:
• Programming • Modeling • Animation • Lighting • Rendering • Post-processing • Scripting • Storyboarding • Hardware • Software • Sound

Introduction
We will only be able to cover part of this. Perhaps only:
• Programming • Modeling • Animation • Lighting • Rendering

Introduction
History (a little more comprehensive than what we covered at the animation evening)
Applications
Methods

Methods and Techniques
2D Animation Basics – Double buffering – Interactive programs – Geometry review
Interpolation – Linear interpolation – Temporal interpolation

Introduction
3D Animation Basics – Coordinates and cameras – Transformations – Projections

Introduction
Surfaces and Lighting – Polygonal models – Shading – Texture mapping – Roto-scoping
Procedural -

ELLEN M. SOMERS Producer/Visual Effects Producer

ELLEN M. SOMERS
Producer/Visual Effects Producer

FEATURES
Project | Director | Studio/Production | Role
“SINBAD AND THE SORCERER’S BRIDE” | RANDALL COOK | TBD | (Pitch) Producer
“ETHYREA: CODE OF THE BRETHREN” | NA | BADALATO MEDIA GROUP | (In Development) Visual Effects Producer
“ROBOCOP” | JOSÉ PADILHA | MGM | Additional VFX Producer
“THE HOST” | ANDREW NICCOL | CHOCKSTONE PICTURES, OPEN ROAD FILMS | Visual Effects Producer
“IN TIME” | ANDREW NICCOL | NEW REGENCY, TWENTIETH CENTURY FOX | Visual Effects Producer/Supervisor
“GULLIVER’S TRAVELS” | ROB LETTERMAN | TWENTIETH CENTURY FOX | Visual Effects Producer/Supervisor
“GREEN LANTERN” | MARTIN CAMPBELL | WARNER BROTHERS | VFX Producer (Pre-Production) UC
“NIGHT AT THE MUSEUM: BATTLE OF THE SMITHSONIAN” | SHAWN LEVY | TWENTIETH CENTURY FOX | Associate Producer, Visual Effects Producer
“JUMPER” | DOUG LIMAN | TWENTIETH CENTURY FOX | Visual Effects Producer
“FANTASTIC 4: RISE OF THE SILVER SURFER” | TIM STORY | TWENTIETH CENTURY FOX | Visual Effects Producer
“NIGHT AT THE MUSEUM” | SHAWN LEVY | TWENTIETH CENTURY FOX | Associate Producer, Visual Effects Producer
“AEON FLUX” | KARYN KUSAMA | PARAMOUNT PICTURES | Visual Effects Producer
“PETER PAN” | P.J. HOGAN | UNIVERSAL PICTURES | Associate Producer, Visual Effects Producer
“THE LORD OF THE RINGS: THE FELLOWSHIP OF THE RING” | PETER JACKSON | NEW LINE CINEMA | Associate Producer, Visual Effects Producer (Academy Award win for VFX team)
“WHAT DREAMS MAY COME” | VINCENT WARD | POLYGRAM FILMED ENTERTAINMENT | Visual Effects Producer/Supervisor (Academy Award win for VFX team)
“BATMAN & ROBIN” | JOEL SCHUMACHER | WARNER BROTHERS -

THRILL RIDE - THE SCIENCE OF FUN, a SONY PICTURES CLASSICS Release. Running Time: 40 Minutes

THRILL RIDE - THE SCIENCE OF FUN
a SONY PICTURES CLASSICS release
Running time: 40 minutes

Synopsis
Sony Pictures Classics release of THRILL RIDE-THE SCIENCE OF FUN is a white-knuckle adventure that takes full advantage of the power of large format films. Filmed in the 70mm, 15-perforation format developed by the IMAX Corporation, and projected on a screen more than six stories tall, the film puts every member of the audience in the front seat of some of the wildest rides ever created. The ultimate ride film, "THRILL RIDE" not only traces the history of rides, past and present, but also details how the development of the motion simulator ride has become one of the most exciting innovations in recent film history.

Directed by Ben Stassen and produced by Charlotte Huggins in conjunction with New Wave International, "THRILL RIDE" takes the audience on rides that some viewers would never dare to attempt, including trips on Big Shot at the Stratosphere, Las Vegas and the rollercoasters Kumba and Montu, located at Busch Gardens, Tampa, Florida. New Wave International was founded by Stassen, who is also a renowned expert in the field of computer graphics imagery (CGI). The film shows that the possibilities for thrill making are endless and only limited by the imagination or the capabilities of a computer workstation.

"THRILL RIDE-THE SCIENCE OF FUN" shows how ride film animators use CGI by first "constructing" a wire frame or skeleton version of the ride on a computer screen. Higher resolution textures and colors are added to the environment along with lighting and other atmospheric effects to heighten the illusion of reality. -

The Hollywood Cinema Industry's Coming of Digital Age: The

The Hollywood Cinema Industry’s Coming of Digital Age: the Digitisation of Visual Effects, 1977-1999
Volume I
Rama Venkatasawmy BA (Hons) Murdoch

This thesis is presented for the degree of Doctor of Philosophy of Murdoch University 2010

I declare that this thesis is my own account of my research and contains as its main content work which has not previously been submitted for a degree at any tertiary education institution.
Rama Venkatasawmy

Abstract
By 1902, Georges Méliès’s Le Voyage Dans La Lune had already articulated a pivotal function for visual effects or VFX in the cinema. It enabled the visual realisation of concepts and ideas that would otherwise have been, in practical and logistical terms, too risky, expensive or plain impossible to capture, re-present and reproduce on film according to so-called “conventional” motion-picture recording techniques and devices. Since then, VFX – in conjunction with their respective techno-visual means of re-production – have gradually become utterly indispensable to the array of practices, techniques and tools commonly used in filmmaking as such. For the Hollywood cinema industry, comprehensive VFX applications have not only motivated the expansion of commercial filmmaking praxis. They have also influenced the evolution of viewing pleasures and spectatorship experiences. Following the digitisation of their associated technologies, VFX have been responsible for multiplying the strategies of re-presentation and story-telling as well as extending the range of stories that can potentially be told on screen. By the same token, the visual standards of the Hollywood film’s production and exhibition have been growing in sophistication. -

SPECIES, Now out on the MUPPET SHOW a Hit

VOLUME 27 NUMBER 7, MARCH 1996
The magazine with a "Sense of Wonder."

This magazine has followed the career of H. R. Giger closely since 1979, when his Oscar-winning design work for ALIEN literally burst on the scene and changed the look of science fiction. This, our third cover story on the Swiss surrealist artist and film designer, takes a detailed look at his extraterrestrial concepts for last summer's hit movie SPECIES, now out on video. L. A. correspondent Les Paul Robley interviewed Giger at his home and studio in Zurich, surrounded by the artist's work, which makes the building a kind of shrine or museum with exhibits that showcase Giger's unique biomechanoid vision, including a working, ridable train that snakes through its grounds.

DEMOLITIONIST
BAYWATCH babe Nicole Eggert stars as the future cop turned super crime-fighter in a comic book-inspired low-budgeter that marks the directing debut of KNB Efx's Rob Kurtzman. / Preview by Michael Beeler

MUPPET TREASURE ISLAND
Brian Henson on following in his late father's footsteps, returning the Muppet players to the kind of hip, irreverent comedy that made TV's THE MUPPET SHOW a hit. / Interview by Alan Jones

GIGER'S ALIEN: THE RIDE
Filming the motion simulator theme park attraction that puts you into the nightmare world of Giger, based on the movie ALIENS, directed by genre master Stuart Gordon. / Article by Les Paul Robley

H. R. GIGER - ORIGIN OF "SPECIES"
An interview with the Oscar-winning Swiss surrealist at his Zurich studio, -

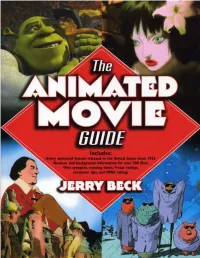

The Animated Movie Guide

THE ANIMATED MOVIE GUIDE
Jerry Beck
Contributing Writers: Martin Goodman, Andrew Leal, W. R. Miller, Fred Patten
An A Cappella Book

Library of Congress Cataloging-in-Publication Data
Beck, Jerry. The animated movie guide / Jerry Beck.— 1st ed. p. cm. “An A Cappella book.” Includes index. ISBN 1-55652-591-5 1. Animated films—Catalogs. I. Title. NC1765.B367 2005 016.79143’75—dc22 2005008629

Front cover design: Leslie Cabarga
Interior design: Rattray Design
All images courtesy of Cartoon Research Inc.
Front cover images (clockwise from top left): Photograph from the motion picture Shrek ™ & © 2001 DreamWorks L.L.C. and PDI, reprinted with permission by DreamWorks Animation; Photograph from the motion picture Ghost in the Shell 2 ™ & © 2004 DreamWorks L.L.C. and PDI, reprinted with permission by DreamWorks Animation; Mutant Aliens © Bill Plympton; Gulliver’s Travels.
Back cover images (left to right): Johnny the Giant Killer, Gulliver’s Travels, The Snow Queen

© 2005 by Jerry Beck
All rights reserved
First edition
Published by A Cappella Books, An Imprint of Chicago Review Press, Incorporated
814 North Franklin Street, Chicago, Illinois 60610
ISBN 1-55652-591-5
Printed in the United States of America
5 4 3 2 1

For Marea

Contents
Acknowledgments
Introduction
About the Author and Contributors’ Biographies
Chronological List of Animated Features
Alphabetical Entries
Appendix 1: Limited Release Animated Features
Appendix 2: Top 60 Animated Features Never Theatrically Released in the United States
Appendix 3: Top 20 Live-Action Films Featuring Great Animation
Index

Acknowledgments
This book would not be as complete, as accurate, or as fun without the help of my dedicated friends and enthusiastic colleagues. -

“I Grew up Loving Cars and the Southern California Car Culture. My Dad Was a Parts Manager at a Chevrolet Dealership, So V

“I grew up loving cars and the Southern California car culture. My dad was a parts manager at a Chevrolet dealership, so ‘Cars’ was very personal to me — the characters, the small town, their love and support for each other and their way of life. I couldn’t stop thinking about them. I wanted to take another road trip to new places around the world, and I thought a way into that world could be another passion of mine, the spy movie genre. I just couldn’t shake that idea of marrying the two distinctly different worlds of Radiator Springs and international intrigue. And here we are.” — John Lasseter, Director ABOUT THE PRODUCTION Pixar Animation Studios and Walt Disney Studios are off to the races in “Cars 2” as star racecar Lightning McQueen (voice of Owen Wilson) and his best friend, the incomparable tow truck Mater (voice of Larry the Cable Guy), jump-start a new adventure to exotic new lands stretching across the globe. The duo are joined by a hometown pit crew from Radiator Springs when they head overseas to support Lightning as he competes in the first-ever World Grand Prix, a race created to determine the world’s fastest car. But the road to the finish line is filled with plenty of potholes, detours and bombshells when Mater is mistakenly ensnared in an intriguing escapade of his own: international espionage. Mater finds himself torn between assisting Lightning McQueen in the high-profile race and “towing” the line in a top-secret mission orchestrated by master British spy Finn McMissile (voice of Michael Caine) and the stunning rookie field spy Holley Shiftwell (voice of Emily Mortimer). -

Independent Filmmakers and Commercials

Vol. 3 Issue 7 October 1998
Independent Filmmakers and Commercials
Balancing Commercials & Personal Work William Kentridge Italy’s Indy Scene U.K. Opps for Independents Max and His Special Problem
Plus: The Budweiser Frogs & Lizards, Barry Purves and Glenn Vilppu

Table of Contents
October 1998 Vol. 3, No. 7

Editor’s Notebook
The inventiveness of independents...

Letters: [email protected]

Dig This!
Animation World Magazine takes a jaunt into the innovative and remarkable: this month we look at fashion designer Rebecca Moses’ animated film, The Discovery of India.

INDEPENDENT FILMMAKERS

William Kentridge: Quite the Opposite of Cartoons
The amazing animation films of South African William Kentridge are discussed in depth by Philippe Moins. Available in English and French.

Italian Independent Animators
Andrea Martignoni relates the current situation of independent animation in Italy and profiles three current independents: Ursula Ferrara, Alberto D’Amico, and Saul Saguatti. Available in English and Italian.

Eating and Animating: Balancing the Basics for U.K. Independents 1998
Marie Beardmore relays the main paths that U.K. animators, seeking to make their own works, use in order to obtain funding to animate...and eat!

Animation in Bosnia And Herzegovina: A Start and an Abrupt Stop
In the shadow of Zagreb, animation in Bosnia and Herzegovina never truly developed until soon before the war...only to be abruptly halted. Rada Sesic explains.

COMMERCIALS

Bud-Weis-Er: Computer-Generated Frogs and Lizards Give Bud a Boost
As Karen Raugust explains, sometimes companies get lucky and their commercials become their own licensing phenomena.