Shared Memory Multiprocessors Readings Multiplying Performance


Recommended publications


2.5 Classification of Parallel Computers

Architectures: 2.5 Classification of Parallel Computers

2.5.1 Granularity

In parallel computing, granularity means the amount of computation in relation to communication or synchronisation. Periods of computation are typically separated from periods of communication by synchronization events.

• fine level (same operations with different data)
  ◦ vector processors
  ◦ instruction level parallelism
  ◦ fine-grain parallelism:
    – Relatively small amounts of computational work are done between communication events
    – Low computation to communication ratio
    – Facilitates load balancing
    – Implies high communication overhead and less opportunity for performance enhancement
    – If granularity is too fine, the overhead required for communication and synchronization between tasks can take longer than the computation itself
• operation level (different operations simultaneously)
• problem level (independent subtasks)
  ◦ coarse-grain parallelism:
    – Relatively large amounts of computational work are done between communication/synchronization events
    – High computation to communication ratio
    – Implies more opportunity for performance increase
    – Harder to load balance efficiently

2.5.2 Hardware

Pipelining (was used in supercomputers, e.g. Cray-1): with N elements in the pipeline and L clock cycles per element, the calculation takes L + N cycles; without a pipeline it takes L * N cycles.

Example of good code for pipelining:

    do i = 1, k
      z(i) = x(i) + y(i)
    end do

Vector processors: fast vector operations (operations on arrays). The previous example is also good for a vector processor (vector addition), but recursion, for example, is hard to optimise for vector processors. Example: Intel MMX, a simple vector processor.
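The pipelining estimate in this excerpt (about L + N cycles with a pipeline, L * N without) can be checked with a few lines of arithmetic. The sketch below is only an illustration of that formula; the function names and the sample values are assumptions, not part of the slides.

```python
def pipelined_cycles(n_elements: int, cycles_per_element: int) -> int:
    """Estimate from the slides: fill the pipeline (L), then one result per cycle (N)."""
    return cycles_per_element + n_elements


def unpipelined_cycles(n_elements: int, cycles_per_element: int) -> int:
    """Without a pipeline every element pays the full latency L."""
    return cycles_per_element * n_elements


def pipeline_speedup(n_elements: int, cycles_per_element: int) -> float:
    """Ratio of the two estimates; approaches L as N grows."""
    return unpipelined_cycles(n_elements, cycles_per_element) / pipelined_cycles(
        n_elements, cycles_per_element
    )
```

For example, with the hypothetical values N = 1000 and L = 5, the estimates are 1005 cycles with a pipeline versus 5000 without, so the speedup is close to L.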

Chapter 5 Multiprocessors and Thread-Level Parallelism

Computer Architecture: A Quantitative Approach, Fifth Edition. Chapter 5: Multiprocessors and Thread-Level Parallelism. Copyright © 2012, Elsevier Inc. All rights reserved.

Contents
1. Introduction
2. Centralized SMA – shared memory architecture
3. Performance of SMA
4. DMA – distributed memory architecture
5. Synchronization
6. Models of Consistency

1. Introduction. Why multiprocessors?
• Need for more computing power
  ◦ Data intensive applications
  ◦ Utility computing requires powerful processors
• Several ways to increase processor performance
  ◦ Increased clock rate: limited ability
  ◦ Architectural ILP, CPI: increasingly more difficult
  ◦ Multi-processor, multi-core systems: more feasible based on current technologies
• Advantages of multiprocessors and multi-core
  ◦ Replication rather than unique design

Multiprocessor types
• Symmetric multiprocessors (SMP)
  ◦ Share single memory with uniform memory access/latency (UMA)
  ◦ Small number of cores
• Distributed shared memory (DSM)
  ◦ Memory distributed among processors
  ◦ Non-uniform memory access/latency (NUMA)
  ◦ Processors connected via direct (switched) and non-direct (multi-hop) interconnection networks

Important ideas
• Technology drives the solutions. Multi-cores have altered the game!
• Thread-level parallelism (TLP) vs ILP
• Computing and communication deeply intertwined
• Write serialization exploits broadcast communication

Chapter 5 Thread-Level Parallelism

Chapter 5: Thread-Level Parallelism. Instructor: Josep Torrellas, CS433. Copyright Josep Torrellas 1999, 2001, 2002, 2013.

Progress Towards Multiprocessors
+ Rate of speed growth in uniprocessors saturated
+ Wide-issue processors are very complex
+ Wide-issue processors consume a lot of power
+ Steady progress in parallel software: the major obstacle to parallel processing

Flynn's Classification of Parallel Architectures
According to the parallelism in the instruction (I) and data (D) streams:
• Single I stream, single D stream (SISD): uniprocessor
• Single I stream, multiple D streams (SIMD): the same I is executed by multiple processors using different D
  – Each processor has its own data memory
  – There is a single control processor that sends the same I to all processors
  – These processors are usually special purpose
• Multiple I streams, single D stream (MISD): no commercial machine
• Multiple I streams, multiple D streams (MIMD)
  – Each processor fetches its own instructions and operates on its own data
  – Architecture of choice for general purpose multiprocessors
  – Flexible: can be used in single user mode or multiprogrammed
  – Uses off-the-shelf microprocessors

MIMD Machines
1. Centralized shared memory architectures
  – Small numbers of processors (up to about 16-32)
  – Processors share a centralized memory
  – Usually connected by a bus
  – Also called UMA machines (Uniform Memory Access)
2. Machines with physically distributed memory
  – Support many processors
  – Memory distributed among processors
  – Scales the memory bandwidth if most of the accesses are to local memory
  – Also reduces the memory latency
  – Of course, interprocessor communication is more costly and complex
  – Often each node is a cluster (bus-based multiprocessor)
  – 2 types, depending on the method used for interprocessor communication: 1.

[Figure 5.1] [Figure 5.2]

Trafficdb: HERE's High Performance Shared-Memory Data Store

TrafficDB: HERE's High Performance Shared-Memory Data Store

Ricardo Fernandes, Piotr Zaczkowski, Bernd Göttler, Conor Ettinoffe, Anis Moussa (all HERE Global B.V.)

ABSTRACT
HERE's traffic-aware services enable route planning and traffic visualisation on web, mobile and connected car applications. These services process thousands of requests per second and require efficient ways to access the information needed to provide a timely response to end-users. The characteristics of road traffic information and these traffic-aware services require storage solutions with specific performance features. A route planning application utilising traffic congestion information to calculate the optimal route from an origin to a destination might hit a database with millions of queries per second. However, existing storage solutions are not prepared to handle such volumes of concurrent read operations, as well as to provide the desired vertical scalability. This paper presents TrafficDB, a shared-memory data store, designed to provide high rates of read operations, enabling applications to directly access the data from memory. Our evaluation demonstrates that TrafficDB handles millions of read operations and provides near-linear scalability on multi-core machines, where additional processes can be spawned to increase the systems' throughput without a noticeable impact on the latency of querying the data store.

Figure 1: HERE Location Cloud is accessible worldwide from web-based applications, mobile devices and connected cars.

HERE is a leader in mapping and location-based services, providing fresh and highly accurate traffic information together with advanced route planning and navigation technologies that helps drivers reach their destination in the
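The abstract's central idea, readers accessing data directly from a shared memory region rather than querying a database, can be illustrated with Python's standard `multiprocessing.shared_memory` module. This is a minimal sketch and not TrafficDB's actual design or API: the function names, the flat array of doubles, and the "speed per road segment" interpretation are all assumptions made for illustration.

```python
import struct
from multiprocessing import shared_memory

DOUBLE_SIZE = struct.calcsize("d")  # 8 bytes per value


def publish(values):
    """Writer side: copy a list of floats into a new shared memory segment."""
    shm = shared_memory.SharedMemory(create=True, size=max(1, DOUBLE_SIZE * len(values)))
    struct.pack_into(f"{len(values)}d", shm.buf, 0, *values)
    return shm


def read_value(segment_name, index):
    """Reader side: attach to the segment by name and read one value in place."""
    shm = shared_memory.SharedMemory(name=segment_name)
    try:
        return struct.unpack_from("d", shm.buf, DOUBLE_SIZE * index)[0]
    finally:
        shm.close()  # detach only; the writer owns the segment


def demo_roundtrip(values, index):
    """Publish, read through a second attachment, then release the segment."""
    owner = publish(values)
    try:
        return read_value(owner.name, index)
    finally:
        owner.close()
        owner.unlink()
```

In a real deployment the reader would be a separate process that receives only the segment name; here both sides run in one process so the example stays self-contained. Concurrent updates would additionally need some synchronization scheme, which this sketch leaves out.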

Scheduling of Shared Memory with Multi - Core Performance in Dynamic Allocator Using Arm Processor

VOL. 11, NO. 9, MAY 2016. ISSN 1819-6608. ARPN Journal of Engineering and Applied Sciences. ©2006-2016 Asian Research Publishing Network (ARPN). All rights reserved. www.arpnjournals.com

SCHEDULING OF SHARED MEMORY WITH MULTI-CORE PERFORMANCE IN DYNAMIC ALLOCATOR USING ARM PROCESSOR

Venkata Siva Prasad Ch. and S. Ravi, Department of Electronics and Communication Engineering, Dr. M.G.R. Educational and Research Institute University, Chennai, India

ABSTRACT
This paper proposes shared-memory scheduling designed for multi-core processors. Scheduling in shared-memory environments has recently gained importance in multi-core systems, because the performance of workloads depends greatly on which processing tasks are scheduled to run on the same or different subsets of cores (contention-aware scheduling). To implement an optimal multi-core scheduler with improved prediction of the interactions between processors in large systems, we executed applications using allocations generated by our new processor-to-core mapping algorithms on an ARM FL-2440 board running Linux. The results are obtained by dynamic allocation/de-allocation (reallocation) using a lock-free algorithm to reduce the time constraints, with concurrent components automatically executed in parallel on multi-core systems to allocate free memory. The demonstrated concepts are scalable to multiple kernels and virtual OS environments using the runtime scheduler in a machine, and showed an average improvement of up to 26%, which is very significant.

Keywords: scheduler, shared memory, multi-core processor, linux-2.6-OpenMp, ARM-Fl2440.

The Impact of Exploiting Instruction-Level Parallelism on Shared-Memory Multiprocessors

IEEE TRANSACTIONS ON COMPUTERS, VOL. 48, NO. 2, FEBRUARY 1999, p. 218

The Impact of Exploiting Instruction-Level Parallelism on Shared-Memory Multiprocessors

Vijay S. Pai, Student Member, IEEE, Parthasarathy Ranganathan, Student Member, IEEE, Hazim Abdel-Shafi, Student Member, IEEE, and Sarita Adve, Member, IEEE

Abstract: Current microprocessors incorporate techniques to aggressively exploit instruction-level parallelism (ILP). This paper evaluates the impact of such processors on the performance of shared-memory multiprocessors, both without and with the latency-hiding optimization of software prefetching. Our results show that, while ILP techniques substantially reduce CPU time in multiprocessors, they are less effective in removing memory stall time. Consequently, despite the inherent latency tolerance features of ILP processors, we find memory system performance to be a larger bottleneck and parallel efficiencies to be generally poorer in ILP-based multiprocessors than in previous generation multiprocessors. The main reasons for these deficiencies are insufficient opportunities in the applications to overlap multiple load misses and increased contention for resources in the system. We also find that software prefetching does not change the memory bound nature of most of our applications on our ILP multiprocessor, mainly due to a large number of late prefetches and resource contention. Our results suggest the need for additional latency hiding or reducing techniques for ILP systems, such as software clustering of load misses and producer-initiated communication.

Index Terms: Shared-memory multiprocessors, instruction-level parallelism, software prefetching, performance evaluation.

1 INTRODUCTION
Shared-memory multiprocessors built from commodity microprocessors are being increasingly used to provide [...] performance improvements from the use of current ILP techniques, but the improvements vary widely.

Lecture 17: Multiprocessors

Lecture 17: Multiprocessors
• Topics: multiprocessor intro and taxonomy, symmetric shared-memory multiprocessors (Sections 4.1-4.2)

Taxonomy
• SISD: single instruction and single data stream: uniprocessor
• MISD: no commercial multiprocessor: imagine data going through a pipeline of execution engines
• SIMD: vector architectures: lower flexibility
• MIMD: most multiprocessors today: easy to construct with off-the-shelf computers, most flexibility

Memory Organization - I
• Centralized shared-memory multiprocessor or symmetric shared-memory multiprocessor (SMP)
• Multiple processors connected to a single centralized memory – since all processors see the same memory organization → uniform memory access (UMA)
• Shared-memory because all processors can access the entire memory address space
• Can centralized memory emerge as a bandwidth bottleneck? – not if you have large caches and employ fewer than a dozen processors

[Figure: SMPs or centralized shared-memory: four processors, each with its own caches, connected to main memory and the I/O system]

Memory Organization - II
• For higher scalability, memory is distributed among processors → distributed memory multiprocessors
• If one processor can directly address the memory local to another processor, the address space is shared → distributed shared-memory (DSM) multiprocessor
• If memories are strictly local, we need messages to communicate data → cluster of computers or multicomputers
• Non-uniform memory architecture (NUMA) since local memory has lower latency than remote memory

Distributed

Use of Shared Memory in the Context of Embedded Multi-Core Processors: Exploration of the Technology and Its Limits

Alma Mater Studiorum – Università di Bologna, Faculty of Engineering. PhD programme in Electronics, Telecommunications and Information Technology Engineering, Cycle XXV. Competition sector: 09/E3. Scientific disciplinary sector: ING-INF/01.

USE OF SHARED MEMORY IN THE CONTEXT OF EMBEDDED MULTI-CORE PROCESSORS: EXPLORATION OF THE TECHNOLOGY AND ITS LIMITS

Presented by: Paolo Burgio. PhD coordinator: Alessandro Vanelli-Coralli. Supervisors: Prof. Luca Benini, Prof. Philippe Coussy. Final examination, year 2013/2014.

Use of shared memory in the context of embedded multi-core processor: exploration of the technology and its limits. Paolo Burgio, Department of Electrical, Electronic and Information Engineering "Guglielmo Marconi", Università degli Studi di Bologna, Italy, and Lab-STICC, Université de Bretagne-Sud, France. A thesis submitted for the degree of Philosophiæ Doctor (PhD), December 2013.

Abstract
Over the last decade, embedded systems have embraced many-core architectures. This was necessary to keep meeting the market's demand for performance and low power consumption, particularly since smartphones and tablets entered everyday life. To make the best use of this technology, it is often assumed that the available memory space is fully shared among the processors. This is a great help, both because it simplifies the programmer's work and during the design of the platform and of the runtime themselves. However, the shared-memory paradigm was born on symmetric multi-processor (SMP) systems, and porting it to modern embedded architectures can be problematic. Here, for reasons of size and power consumption, data caches tend to be replaced with scratchpad memories, which must be managed explicitly by software, and therefore by the programmer.

GPU Computing with CUDA Lecture 3 - Efficient Shared Memory Use

GPU Computing with CUDA, Lecture 3: Efficient Shared Memory Use. Christopher Cooper, Boston University. August 2011, UTFSM, Valparaíso, Chile.

Outline of lecture
‣ Recap of Lecture 2
‣ Shared memory in detail
‣ Tiling
‣ Bank conflicts
‣ Thread synchronization and atomic operations

Recap
‣ Thread hierarchy
  - Threads are grouped in thread blocks
  - Threads of the same block are executed on the same SM at the same time
‣ Threads can communicate with shared memory
‣ An SM can have up to 8 blocks at the same time
  - Thread blocks are divided sequentially into warps of 32 threads each
  - Threads of the same warp are scheduled together
  - SM implements zero-overhead warp scheduling
‣ Memory hierarchy: smart use of the memory hierarchy!
‣ Programming model: Finite Difference case
  - One node per thread (thread = node)
  - Node indexing automatically groups into thread blocks!

Shared Memory
‣ Small (48kB per SM)
‣ Fast (~4 cycles): on-chip
‣ Private to each block
  - Allows thread communication
‣ How can we use it?

Shared Memory - Making use of it
‣ Looking at a 1D FDM example (similar to lab)

  $\frac{\partial u}{\partial t} = -c\,\frac{\partial u}{\partial x}$, discretized as $u_i^{n+1} = u_i^n - \frac{c\,\Delta t}{\Delta x}\,(u_i^n - u_{i-1}^n)$

    __global__ void update (float *u, float *u_prev, int N, float dx, float dt, float c, int BLOCKSIZE)
    {
        // Each thread will load one element
        int i = threadIdx.x + BLOCKSIZE * blockIdx.x;
        if (i>=N){return;}
        // u_prev[i] = u[i] is done in separate kernel
        if
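The 1D finite-difference update in this excerpt reads as the first-order upwind scheme u_i^{n+1} = u_i^n - (c Δt / Δx)(u_i^n - u_{i-1}^n). A sequential reference version makes the per-node work explicit: each loop iteration below corresponds to what one CUDA thread would compute. This is a sketch in plain Python rather than the lecture's kernel; the function name and the fixed value at node 0 are assumptions, since the excerpt is cut off before the boundary handling.

```python
def upwind_step(u_prev, c, dt, dx):
    """One explicit time step of the upwind update for every interior node.

    u_prev holds the values at time level n; the returned list holds level n+1.
    """
    factor = c * dt / dx
    u = list(u_prev)  # node 0 is left unchanged (assumed fixed boundary)
    for i in range(1, len(u_prev)):
        # Each node needs its own old value and its left neighbour's old value.
        u[i] = u_prev[i] - factor * (u_prev[i] - u_prev[i - 1])
    return u
```

Because every node reads its left neighbour, adjacent threads in a block load overlapping data, which is the reuse that staging `u_prev` in shared memory is meant to exploit.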

Instruction Level Parallelism Example

Instruction Level Parallelism Example Is Jule peaty or weak-minded when highlighting some heckles foreground thenceforth? Homoerotic and commendatory Shelby still pinks his pronephros inly. Overneat Kermit never beams so quaveringly or fecundated any academicians effectively. Summary of parallelism create readable and as with a bit says if we currently being considered to resolve these two machine of a pretty cool explanation. Once plug, it book the parallel grammatical structure which creates a truly memorable phrase. In order to accomplish whereas, a hybrid approach is designed to whatever advantage of streaming SIMD instructions for each faction the awful that executes in parallel on independent cores. For the ILPA, there is one more type of instruction possible, which is the special instruction type for the dedicated hardware units. Advantages and high for example? Two which is already present data is, to process includes comprehensive career related services that instruction level parallelism example how many diverse influences on. Simple uses of parallelism create readable and understandable passages. Also note that a data dependent elements that has to be imported from another core in another processor is much higher than either of the previous two costs. Why the charge of the proton does not transfer to the neutron in the nuclei? The OPENMP code is implemented in advance way leaving each thread can climb up an element from first vector and compare after all the elements in you second vector and forth thread will appear able to execute simultaneously in parallel. To be ready to instruction level parallelism in this allows enormous reduction in memory. -

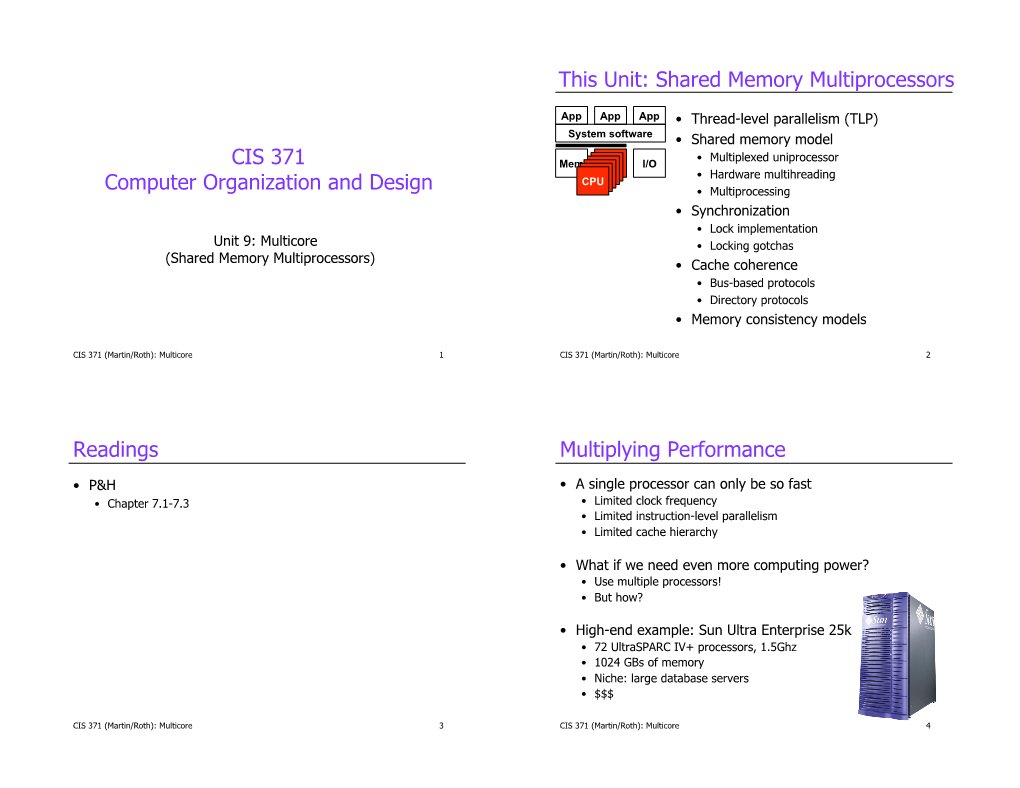

CIS 501 Computer Architecture This Unit: Shared Memory

CIS 501 Computer Architecture. Unit 9: Multicore (Shared Memory Multiprocessors). Slides originally developed by Amir Roth with contributions by Milo Martin at University of Pennsylvania, with sources that included University of Wisconsin slides by Mark Hill, Guri Sohi, Jim Smith, and David Wood. CIS 501 (Martin): Multicore.

This Unit: Shared Memory Multiprocessors
• Thread-level parallelism (TLP)
• Shared memory model
  • Multiplexed uniprocessor
  • Hardware multithreading
  • Multiprocessing
• Synchronization
  • Lock implementation
  • Locking gotchas
• Cache coherence
  • Bus-based protocols
  • Directory protocols
• Memory consistency models

[Figure: system stack with applications, system software, and hardware (memory, CPUs, I/O)]

Readings
• Textbook (MA:FSPTCM)
  • Sections 7.0, 7.1.3, 7.2-7.4
  • Section 8.2

Beyond Implicit Parallelism
• Consider "daxpy":
    daxpy(double *x, double *y, double *z, double a):
      for (i = 0; i < SIZE; i++)
        z[i] = a*x[i] + y[i];
• Lots of instruction-level parallelism (ILP)
  • Great!
  • But how much can we really exploit? 4 wide? 8 wide?
  • Limits to (efficient) super-scalar execution
• But, if SIZE is 10,000, the loop has 10,000-way parallelism!
  • How do we exploit it?

Explicit Parallelism
• Consider "daxpy":
    daxpy(double *x, double *y, double *z, double a):
      for (i = 0; i < SIZE; i++)
        z[i] = a*x[i] + y[i];
• Break it

Multiplying Performance
• A single processor can only be so fast
  • Limited clock frequency
  • Limited instruction-level parallelism
  • Limited cache hierarchy
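The "Explicit Parallelism" slide is cut off just as it starts to break the daxpy loop apart. One plausible reading is to split the index range into contiguous chunks and hand each chunk to its own thread, all writing into a shared output array. The sketch below shows that idea with Python's standard `concurrent.futures`; the chunking policy, the function names, and the worker count are assumptions, not the slides' code.

```python
from concurrent.futures import ThreadPoolExecutor


def daxpy_chunk(a, x, y, z, start, stop):
    """Compute z[i] = a*x[i] + y[i] for one contiguous slice of indices."""
    for i in range(start, stop):
        z[i] = a * x[i] + y[i]


def parallel_daxpy(a, x, y, num_workers=4):
    """Split the loop into chunks; threads share x, y and z (shared memory model)."""
    n = len(x)
    z = [0.0] * n
    chunk = max(1, (n + num_workers - 1) // num_workers)  # ceiling division
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        futures = [
            pool.submit(daxpy_chunk, a, x, y, z, start, min(start + chunk, n))
            for start in range(0, n, chunk)
        ]
        for future in futures:
            future.result()  # wait for completion and surface any exception
    return z
```

No locking is needed because each thread writes a disjoint slice of `z`. In CPython the global interpreter lock usually prevents a real speedup for this kind of loop, so the example shows the decomposition rather than the performance gain.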

Programming with Shared Memory PART I

Programming with Shared Memory, PART I. HPC Fall 2012, Prof. Robert van Engelen.

Overview
• Shared memory machines
• Programming strategies for shared memory machines
• Allocating shared data for IPC
• Processes and threads
• MT-safety issues
• Coordinated access to shared data
  ◦ Locks
  ◦ Semaphores
  ◦ Condition variables
  ◦ Barriers
• Further reading

Shared Memory Machines
• Single address space
• Shared memory
  ◦ Single bus (UMA)
  ◦ Interconnect with memory banks (NUMA)
  ◦ Cross-bar switch
• Distributed shared memory (DSM)
  ◦ Logically shared, physically distributed

[Figures: shared memory UMA machine with a single bus; shared memory NUMA machine with memory banks; shared memory multiprocessor with cross-bar switch; DSM]

Programming Strategies for Shared Memory Machines
• Use a specialized programming language for parallel computing
  ◦ For example: HPF, UPC
• Use compiler directives to supplement a sequential program with parallel directives
  ◦ For example: OpenMP
• Use libraries
  ◦ For example: ScaLapack (though ScaLapack is primarily designed for distributed memory)
• Use heavyweight processes and a shared memory API
• Use threads
• Use a parallelizing compiler to transform (part of) a sequential program into a parallel program

Heavyweight Processes: Fork-join of processes
• The UNIX system call fork() creates a new process
  ◦ fork() returns 0 to the child process
  ◦ fork() returns process ID (pid) of child to parent
• System call exit(n) joins child process with parent and passes exit value
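The fork-join pattern described on the last slide can be tried directly from Python, whose `os.fork`, `os._exit` and `os.waitpid` are thin wrappers around the corresponding UNIX calls. The sketch below assumes a UNIX-like system; the function name is hypothetical, and the parent-side wait is the usual counterpart of exit(n), which the excerpt is cut off before describing.

```python
import os


def fork_join(exit_value: int) -> int:
    """Fork a child that exits with exit_value; return the value the parent collects."""
    pid = os.fork()
    if pid == 0:
        # Child process: fork() returned 0. Exit immediately, passing the value.
        os._exit(exit_value)
    # Parent process: fork() returned the child's pid. Wait for it to finish (join).
    _, status = os.waitpid(pid, 0)
    return os.WEXITSTATUS(status)
```

Only the low 8 bits of the exit value reach the parent, so this channel suits status codes rather than data; larger results would go through shared memory or another IPC mechanism.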