Performance Characterization and Optimization of Atomic Operations on AMD GPUs



Recommended publications


Benchmarking the Intel FPGA SDK for OpenCL Memory Interface

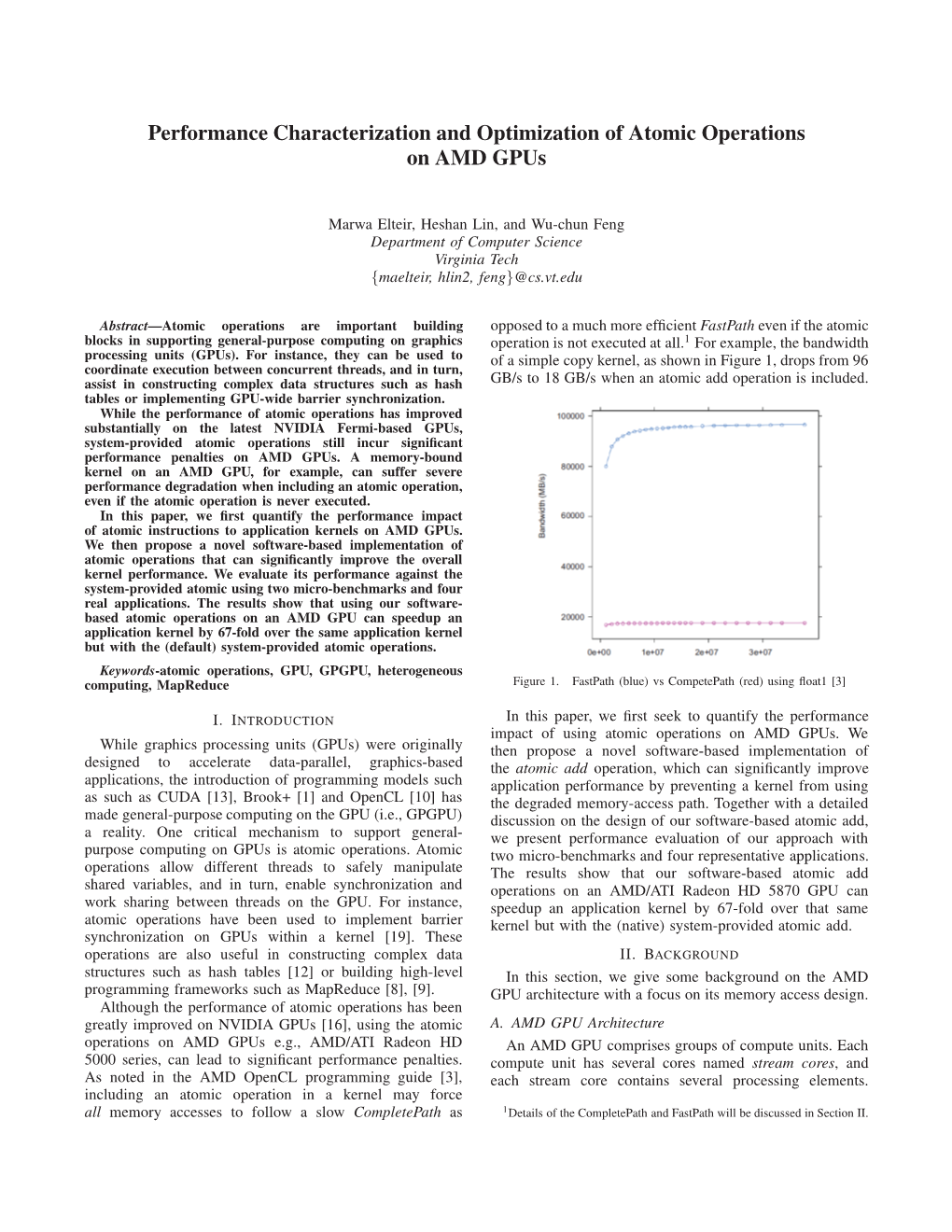

The Memory Controller Wall: Benchmarking the Intel FPGA SDK for OpenCL Memory Interface

Hamid Reza Zohouri*†1, Satoshi Matsuoka*‡
*Tokyo Institute of Technology, †Edgecortix Inc. Japan, ‡RIKEN Center for Computational Science (R-CCS)
{zohour.h.aa@m, matsu@is}.titech.ac.jp

Abstract—Supported by their high power efficiency and recent advancements in High Level Synthesis (HLS), FPGAs are quickly finding their way into HPC and cloud systems. Large amounts of work have been done so far on loop and area optimizations for different applications on FPGAs using HLS. However, a comprehensive analysis of the behavior and efficiency of the memory controller of FPGAs is missing in literature, which becomes even more crucial when the limited memory bandwidth of modern FPGAs compared to their GPU counterparts is taken into account. In this work, we will analyze the memory interface generated by Intel FPGA SDK for OpenCL with different configurations for input/output arrays, vector size, interleaving, kernel programming model, on-chip channels, operating frequency, padding, and multiple types of overlapped blocking. Our results point to multiple shortcomings in the memory controller of Intel FPGAs, especially with respect to memory access alignment, that can hinder the programmer’s […]

[…] efficiency on Intel FPGAs with different configurations for input/output arrays, vector size, interleaving, kernel programming model, on-chip channels, operating frequency, padding, and multiple types of blocking.

• We outline one performance bug in Intel’s compiler, and multiple deficiencies in the memory controller, leading to significant loss of memory performance for typical applications. In some of these cases, we provide workarounds to improve the memory performance.

II. METHODOLOGY

A. Memory Benchmark Suite

For our evaluation, we develop an open-source benchmark suite called FPGAMemBench, available at https://github.com/zohourih/FPGAMemBench.

BCL: A Cross-Platform Distributed Data Structures Library

BCL: A Cross-Platform Distributed Data Structures Library

Benjamin Brock, Aydın Buluç, Katherine Yelick
University of California, Berkeley
Lawrence Berkeley National Laboratory
{brock,abuluc,yelick}@cs.berkeley.edu

ABSTRACT

One-sided communication is a useful paradigm for irregular parallel applications, but most one-sided programming environments, including MPI’s one-sided interface and PGAS programming languages, lack application-level libraries to support these applications. We present the Berkeley Container Library, a set of generic, cross-platform, high-performance data structures for irregular applications, including queues, hash tables, Bloom filters and more. BCL is written in C++ using an internal DSL called the BCL Core that provides one-sided communication primitives such as remote get and remote put operations. The BCL Core has backends for MPI, OpenSHMEM, GASNet-EX, and UPC++, allowing BCL data structures to be used natively in programs written using any of these programming environments.

[…] high-performance computing, including several using the Partitioned Global Address Space (PGAS) model: Titanium, UPC, Coarray Fortran, X10, and Chapel [9, 11, 12, 25, 29, 30]. These languages are especially well-suited to problems that require asynchronous one-sided communication, or communication that takes place without a matching receive operation or outside of a global collective. However, PGAS languages lack the kind of high level libraries that exist in other popular programming environments. For example, high-performance scientific simulations written in MPI can leverage a broad set of numerical libraries for dense or sparse matrices, or for structured, unstructured, or adaptive meshes. PGAS languages can sometimes use those numerical libraries, but are missing the data structures that are important in some of the most irregular […]

Advances, Applications and Performance of The

ADVANCES, APPLICATIONS AND PERFORMANCE OF THE GLOBAL ARRAYS SHARED MEMORY PROGRAMMING TOOLKIT

Jarek Nieplocha1, Bruce Palmer1, Vinod Tipparaju1, Manojkumar Krishnan1, Harold Trease1, Edoardo Aprà2

Abstract

This paper describes capabilities, evolution, performance, and applications of the Global Arrays (GA) toolkit. GA was created to provide application programmers with an interface that allows them to distribute data while maintaining the type of global index space and programming syntax similar to that available when programming on a single […]

1 Introduction

The two predominant classes of programming models for MIMD concurrent computing are distributed memory and shared memory. Both shared memory and distributed memory models have advantages and shortcomings. Shared memory model is much easier to use but it ignores data locality/placement. Given the hierarchical nature of the memory subsystems in modern computers this characteristic can have a negative impact on performance and scalability. Careful code restructuring to increase data reuse and replacing fine grain load/stores with block access to shared data can address the problem and yield performance for shared memory that is competitive with message-passing (Shan and Singh 2000). However, this performance comes at the cost of compromising the ease of use that the shared memory model advertises. Distributed memory models, such as message-passing or one-sided communication, offer performance and scalability but they are difficult to program.

The Global Arrays toolkit (Nieplocha, Harrison, and Littlefield 1994, 1996; Nieplocha et al. 2002a) attempts to offer the best features of both models. It implements a shared-memory programming model in which data locality is managed by the programmer.

Enabling Efficient Use of UPC and OpenSHMEM PGAS Models on GPU Clusters

Enabling Efficient Use of UPC and OpenSHMEM PGAS Models on GPU Clusters

Presented at GTC ’15
Presented by Dhabaleswar K. (DK) Panda, The Ohio State University
E-mail: [email protected]
http://www.cse.ohio-state.edu/~panda

Accelerator Era
• Accelerators are becoming common in high-end system architectures
  Top 100 – Nov 2014 (28% use accelerators); 57% use NVIDIA GPUs
• Increasing number of workloads are being ported to take advantage of NVIDIA GPUs
• As they scale to large GPU clusters with high compute density – higher the synchronization and communication overheads – higher the penalty
• Critical to minimize these overheads to achieve maximum performance

Parallel Programming Models Overview
[Figure: three models, each drawn with processes P1, P2, P3: Shared Memory Model (DSM); Distributed Memory Model (MPI, Message Passing Interface); Partitioned Global Address Space (PGAS), with logical shared memory (Global Arrays, UPC, Chapel, X10, CAF, …)]
• Programming models provide abstract machine models
• Models can be mapped on different types of systems – e.g. Distributed Shared Memory (DSM), MPI within a node, etc.
• Each model has strengths and drawbacks – suit different problems or applications

Outline
• Overview of PGAS models (UPC and OpenSHMEM)
• Limitations in PGAS models for GPU computing
• Proposed Designs and Alternatives
• Performance Evaluation
• Exploiting GPUDirect RDMA

Partitioned Global Address Space (PGAS) Models
• PGAS models, an attractive alternative to traditional message passing
  – Simple

Automatic Handling of Global Variables for Multi-Threaded MPI Programs

Automatic Handling of Global Variables for Multi-threaded MPI Programs

Gengbin Zheng, Stas Negara, Celso L. Mendes, Laxmikant V. Kalé
Department of Computer Science, University of Illinois at Urbana-Champaign, Urbana, IL 61801, USA
fgzheng,snegara2,cmendes,[email protected]

Eduardo R. Rodrigues
Institute of Informatics, Federal University of Rio Grande do Sul, Porto Alegre, Brazil
[email protected]

Abstract—Hybrid programming models, such as MPI combined with threads, are one of the most efficient ways to write parallel applications for current machines comprising multi-socket/multi-core nodes and an interconnection network. Global variables in legacy MPI applications, however, present a challenge because they may be accessed by multiple MPI threads simultaneously. Thus, transforming legacy MPI applications to be thread-safe in order to exploit multi-core architectures requires proper handling of global variables. In this paper, we present three approaches to eliminate global variables to ensure thread-safety for an MPI program. These approaches include: (a) a compiler-based refactoring technique, using a Photran-based tool as an example, which automates the source-to-source transformation for programs […]

[…] necessary to privatize global and static variables to ensure the thread-safety of an application.

In high-performance computing, one way to exploit multi-core platforms is to adopt hybrid programming models that combine MPI with threads, where different programming models are used to address issues such as multiple threads per core, decreasing amount of memory per core, load imbalance, etc. When porting legacy MPI applications to these hybrid models that involve multiple threads, thread-safety of the applications needs to be addressed again. Global variables cause no problem with traditional MPI implementations, since each process image contains a separate instance of the variable.

Overview of the Global Arrays Parallel Software Development Toolkit: Introduction to Global Address Space Programming Models

Overview of the Global Arrays Parallel Software Development Toolkit: Introduction to Global Address Space Programming Models

P. Saddayappan2, Bruce Palmer1, Manojkumar Krishnan1, Sriram Krishnamoorthy1, Abhinav Vishnu1, Daniel Chavarría1, Patrick Nichols1, Jeff Daily1
1Pacific Northwest National Laboratory
2Ohio State University

Outline of the Tutorial
• Parallel programming models
• Global Arrays (GA) programming model
• GA Operations
• Writing, compiling and running GA programs
• Basic, intermediate, and advanced calls
• With C and Fortran examples
• GA Hands-on session

Performance vs. Abstraction and Generality
[Figure: chart with axes Scalability and Generality, positioning Domain Specific Systems, GA, CAF, MPI, OpenMP, Autoparallelized C/Fortran90, and the “Holy Grail”]

Parallel Programming Models
• Single Threaded
• Data Parallel, e.g. HPF
• Multiple Processes
• Partitioned-Local Data Access
• MPI
• Uniform-Global-Shared Data Access
• OpenMP
• Partitioned-Global-Shared Data Access
• Co-Array Fortran
• Uniform-Global-Shared + Partitioned Data Access
• UPC, Global Arrays, X10

High Performance Fortran
• Single-threaded view of computation
• Data parallelism and parallel loops
• User-specified data distributions for arrays
• Compiler transforms HPF program to SPMD program
• Communication optimization critical to performance
• Programmer may not be conscious of communication implications of parallel program
[Code fragments on slide: HPF$ Independent loops, including s=s+1, DO I = 1,N, and A(1:100) = B(0:99)+B(2:101)]

Exascale Computing Project -- Software

Exascale Computing Project -- Software

Paul Messina, ECP Director
Stephen Lee, ECP Deputy Director
ASCAC Meeting, Arlington, VA, Crystal City Marriott
April 19, 2017
www.ExascaleProject.org

ECP scope and goals
• Develop applications to tackle a broad spectrum of mission critical problems of unprecedented complexity
• Partner with vendors to develop computer architectures that support exascale applications
• Support national security
• Develop a software stack that is both exascale-capable and usable on industrial & academic scale systems, in collaboration with vendors
• Train a next-generation workforce of computational scientists, engineers, and computer scientists
• Contribute to the economic competitiveness of the nation

ECP has formulated a holistic approach that uses co-design and integration to achieve capable exascale
• Application Development: science and mission applications
• Software Technology: scalable and productive software stack
• Hardware Technology: hardware technology elements
• Exascale Systems: integrated exascale supercomputers

[Figure: software stack diagram. Labels: Applications; Co-Design; Correctness; Visualization; Data Analysis; Programming models, development environment, and runtimes; Math libraries and Frameworks; Tools; System Software, resource management threading, scheduling, monitoring, and control; Workflows; Resilience; Data management I/O and file system; Memory and Burst buffer; Node OS, runtimes; Hardware interface]

ECP’s work encompasses applications, system software, hardware technologies and architectures, and workforce […]

The Global Arrays User Manual

The Global Arrays User Manual

Manojkumar Krishnan, Bruce Palmer, Abhinav Vishnu, Sriram Krishnamoorthy, Jeff Daily, Daniel Chavarria
February 8, 2012

This document is intended to be used with version 5.1 of Global Arrays (Pacific Northwest National Laboratory Technical Report Number PNNL-13130)

DISCLAIMER

This material was prepared as an account of work sponsored by an agency of the United States Government. Neither the United States Government nor the United States Department of Energy, nor Battelle, nor any of their employees, MAKES ANY WARRANTY, EXPRESS OR IMPLIED, OR ASSUMES ANY LEGAL LIABILITY OR RESPONSIBILITY FOR THE ACCURACY, COMPLETENESS, OR USEFULNESS OF ANY INFORMATION, APPARATUS, PRODUCT, SOFTWARE, OR PROCESS DISCLOSED, OR REPRESENTS THAT ITS USE WOULD NOT INFRINGE PRIVATELY OWNED RIGHTS.

ACKNOWLEDGMENT

This software and its documentation were produced with United States Government support under Contract Number DE-AC05-76RLO-1830 awarded by the United States Department of Energy. The United States Government retains a paid-up non-exclusive, irrevocable worldwide license to reproduce, prepare derivative works, perform publicly and display publicly by or for the US Government, including the right to distribute to other US Government contractors.

December, 2011

Contents

1 Introduction
1.1 Overview
1.2 Basic Functionality
1.3 Programming Model
1.4 Application Guidelines
1.4.1 When to use GA:
1.4.2 When not to use GA
2 Writing, Building and Running GA Programs
2.1 Platform and Library Dependencies
2.1.1 Supported Platforms
2.1.2 Selection of the communication network for ARMCI

Recent Activities in Programming Models and Runtime Systems at ANL

Recent Activities in Programming Models and Runtime Systems at ANL

Rajeev Thakur
Deputy Director, Mathematics and Computer Science Division
Argonne National Laboratory
Joint work with Pavan Balaji, Darius Buntinas, Jim Dinan, Dave Goodell, Bill Gropp, Rusty Lusk, Marc Snir

Programming Models and Runtime Systems Efforts at Argonne
[Figure: effort areas: MPI and Low-level Runtime Systems; Data Movement; High-level Tasking and Global Address Programming Models; Accelerators and Heterogeneous Computing Systems]

MPI at Exascale
• We believe that MPI has a role to play even at exascale, particularly in the context of an MPI+X model
• The investment in application codes and libraries written using MPI is on the order of hundreds of millions of dollars
• Until a viable alternative to MPI for exascale is available, and applications have been ported to the new model, MPI must evolve to run as efficiently as possible on future systems
• Argonne has long-term strength in all things related to MPI

MPI and Low-level Runtime Systems
• MPICH implementation of MPI
  – Scalability of MPI to very large systems
    • Scalability improvements to the MPI implementation
    • Scalability to large numbers of threads
  – MPI-3 algorithms and interfaces
    • Remote memory access
    • Fault Tolerance
  – Research on features for future MPI standards
    • Interaction with accelerators
    • Interaction with other programming models
• Data movement models on complex processor and memory architectures
  – Data movement for heterogeneous memory (accelerator memory, NVRAM)

Pavan Balaji wins DOE Early Career Award
• Pavan Balaji was recently awarded the highly competitive and prestigious DOE early career award ($500K/yr for 5 years)
• “Exploring Efficient Data Movement Strategies for Exascale Systems with Deep Memory Hierarchies”

MPICH-based Implementations of MPI

The OpenGL ES Shading Language

The OpenGL ES® Shading Language

Language Version: 3.20
Document Revision: 12 246 JuneAugust 2015
Editor: Robert J. Simpson, Qualcomm
OpenGL GLSL editor: John Kessenich, LunarG
GLSL version 1.1 Authors: John Kessenich, Dave Baldwin, Randi Rost

Copyright (c) 2013-2015 The Khronos Group Inc. All Rights Reserved. This specification is protected by copyright laws and contains material proprietary to the Khronos Group, Inc. It or any components may not be reproduced, republished, distributed, transmitted, displayed, broadcast, or otherwise exploited in any manner without the express prior written permission of Khronos Group. You may use this specification for implementing the functionality therein, without altering or removing any trademark, copyright or other notice from the specification, but the receipt or possession of this specification does not convey any rights to reproduce, disclose, or distribute its contents, or to manufacture, use, or sell anything that it may describe, in whole or in part. Khronos Group grants express permission to any current Promoter, Contributor or Adopter member of Khronos to copy and redistribute UNMODIFIED versions of this specification in any fashion, provided that NO CHARGE is made for the specification and the latest available update of the specification for any version of the API is used whenever possible. Such distributed specification may be reformatted AS LONG AS the contents of the specification are not changed in any way. The specification may be incorporated into a product that is sold as long as such product includes significant independent work developed by the seller. A link to the current version of this specification on the Khronos Group website should be included whenever possible with specification distributions.

The OpenGL ES Shading Language

The OpenGL ES® Shading Language

Language Version: 3.00
Document Revision: 6
29 January 2016
Editor: Robert J. Simpson, Qualcomm
OpenGL GLSL editor: John Kessenich, LunarG
GLSL version 1.1 Authors: John Kessenich, Dave Baldwin, Randi Rost

Copyright © 2008-2016 The Khronos Group Inc. All Rights Reserved. This specification is protected by copyright laws and contains material proprietary to the Khronos Group, Inc. It or any components may not be reproduced, republished, distributed, transmitted, displayed, broadcast, or otherwise exploited in any manner without the express prior written permission of Khronos Group. You may use this specification for implementing the functionality therein, without altering or removing any trademark, copyright or other notice from the specification, but the receipt or possession of this specification does not convey any rights to reproduce, disclose, or distribute its contents, or to manufacture, use, or sell anything that it may describe, in whole or in part. Khronos Group grants express permission to any current Promoter, Contributor or Adopter member of Khronos to copy and redistribute UNMODIFIED versions of this specification in any fashion, provided that NO CHARGE is made for the specification and the latest available update of the specification for any version of the API is used whenever possible. Such distributed specification may be reformatted AS LONG AS the contents of the specification are not changed in any way. The specification may be incorporated into a product that is sold as long as such product includes significant independent work developed by the seller. A link to the current version of this specification on the Khronos Group website should be included whenever possible with specification distributions.

Enabling Efficient Use of UPC and OpenSHMEM PGAS Models on GPU Clusters

Enabling Efficient Use of UPC and OpenSHMEM PGAS models on GPU Clusters

Presentation at GTC 2014 by Dhabaleswar K. (DK) Panda, The Ohio State University
E-mail: [email protected]
http://www.cse.ohio-state.edu/~panda

Accelerator Era
• Accelerators are becoming common in high-end system architectures
  Top 100 – Nov 2013 (25% use accelerators); 72% use GPUs
• Increasing number of workloads are being ported to take advantage of GPUs
• As they scale to large GPU clusters with high compute density – higher the synchronization and communication overheads – higher the penalty
• Critical to minimize these overheads to achieve maximum performance

Parallel Programming Models Overview
[Figure: three models, each drawn with processes P1, P2, P3: Shared Memory Model (DSM); Distributed Memory Model (MPI, Message Passing Interface); Partitioned Global Address Space (PGAS), with logical shared memory (Global Arrays, UPC, Chapel, X10, CAF, …)]
• Programming models provide abstract machine models
• Models can be mapped on different types of systems – e.g. Distributed Shared Memory (DSM), MPI within a node, etc.
• Each model has strengths and drawbacks – suit different problems or applications

Outline
• Overview of PGAS models (UPC and OpenSHMEM)
• Limitations in PGAS models for GPU computing
• Proposed Designs and Alternatives
• Performance Evaluation
• Exploiting GPUDirect RDMA

Partitioned Global Address Space (PGAS) Models
• PGAS models, an attractive alternative to traditional message passing