Design and Optimisation of Scientific Programs in a Categorical Language

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Axiom / Fricas

Axiom / FriCAS Christoph Koutschan Research Institute for Symbolic Computation Johannes Kepler Universit¨atLinz, Austria Computer Algebra Systems 15.11.2010 Master's Thesis: The ISAC project • initiative at Graz University of Technology • Institute for Software Technology • Institute for Information Systems and Computer Media • experimental software assembling open source components with as little glue code as possible • feasibility study for a novel kind of transparent single-stepping software for applied mathematics • experimenting with concepts and technologies from • computer mathematics (theorem proving, symbolic computation, model based reasoning, etc.) • e-learning (knowledge space theory, usability engineering, computer-supported collaboration, etc.) • The development employs academic expertise from several disciplines. The challenge for research is interdisciplinary cooperation. Rewriting, a basic CAS technique This technique is used in simplification, equation solving, and many other CAS functions, and it is intuitively comprehensible. This would make rewriting useful for educational systems|if one copes with the problem, that even elementary simplifications involve hundreds of rewrites. As an example see: http://www.ist.tugraz.at/projects/isac/www/content/ publications.html#DA-M02-main \Reverse rewriting" for comprehensible justification Many CAS functions can not be done by rewriting, for instance cancelling multivariate polynomials, factoring or integration. However, respective inverse problems can be done by rewriting and produce human readable derivations. As an example see: http://www.ist.tugraz.at/projects/isac/www/content/ publications.html#GGTs-von-Polynomen Equation solving made transparent Re-engineering equation solvers in \transparent single-stepping systems" leads to types of equations, arranged in a tree. ISAC's tree of equations are to be compared with what is produced by tracing facilities of Mathematica and/or Maple. -

Aldor Generics in Software Component Architectures

Aldor Generics in Software Component Architectures (Spine title: Aldor Generics in Software Component Architectures) (Thesis Format: Monograph) by Michael Llewellyn Lloyd Graduate Program in Computer Science Submitted in partial fulfillment of the requirements for the degree of Masters Faculty of Graduate Studies The University of Western Ontario London, Ontario October, 2007 c Michael Llewellyn Lloyd 2007 THE UNIVERSITY OF WESTERN ONTARIO FACULTY OF GRADUATE STUDIES CERTIFICATE OF EXAMINATION Supervisor Examiners Dr. Stephen M. Watt Dr. David Jeffrey Supervisory Committee Dr. Hanan Lutfiyya Dr. Eric Schost The thesis by Michael Llewellyn Lloyd entitled Aldor Generics in Software Component Architectures is accepted in partial fulfillment of the requirements for the degree of Masters Date Chair of the Thesis Examination Board ii Abstract With the introduction of the Generic Interface Definition Language(GIDL), Software Component Architectures may now use Parameterized types between languages at a high level. Using GIDL, generic programming to be used between programming modules written in different programming languages. By exploiting this mechanism, GIDL creates a flexible programming environment where, each module may be coded in a language appropriate to its function. Currently the GIDL architecture has been implemented for C++ and Java, both object-oriented languages which support com- pile time binding for generic types. This thesis examines an implementation of GIDL for the Aldor language, an imperative language with some aspects of functional pro- gramming, particularly suited for representing algebraic types. In particular, we ex- periment with Aldors implementation of runtime binding for generic types, and show how GIDL allows programmers to use a rich set of generic types across language barriers. -

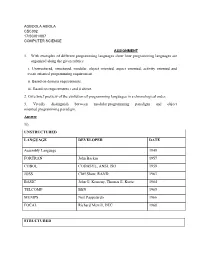

1. with Examples of Different Programming Languages Show How Programming Languages Are Organized Along the Given Rubrics: I

AGBOOLA ABIOLA CSC302 17/SCI01/007 COMPUTER SCIENCE ASSIGNMENT 1. With examples of different programming languages show how programming languages are organized along the given rubrics: i. Unstructured, structured, modular, object oriented, aspect oriented, activity oriented and event oriented programming requirement. ii. Based on domain requirements. iii. Based on requirements i and ii above. 2. Give brief preview of the evolution of programming languages in a chronological order. 3. Vividly distinguish between modular programming paradigm and object oriented programming paradigm. Answer 1i). UNSTRUCTURED LANGUAGE DEVELOPER DATE Assembly Language 1949 FORTRAN John Backus 1957 COBOL CODASYL, ANSI, ISO 1959 JOSS Cliff Shaw, RAND 1963 BASIC John G. Kemeny, Thomas E. Kurtz 1964 TELCOMP BBN 1965 MUMPS Neil Pappalardo 1966 FOCAL Richard Merrill, DEC 1968 STRUCTURED LANGUAGE DEVELOPER DATE ALGOL 58 Friedrich L. Bauer, and co. 1958 ALGOL 60 Backus, Bauer and co. 1960 ABC CWI 1980 Ada United States Department of Defence 1980 Accent R NIS 1980 Action! Optimized Systems Software 1983 Alef Phil Winterbottom 1992 DASL Sun Micro-systems Laboratories 1999-2003 MODULAR LANGUAGE DEVELOPER DATE ALGOL W Niklaus Wirth, Tony Hoare 1966 APL Larry Breed, Dick Lathwell and co. 1966 ALGOL 68 A. Van Wijngaarden and co. 1968 AMOS BASIC FranÇois Lionet anConstantin Stiropoulos 1990 Alice ML Saarland University 2000 Agda Ulf Norell;Catarina coquand(1.0) 2007 Arc Paul Graham, Robert Morris and co. 2008 Bosque Mark Marron 2019 OBJECT-ORIENTED LANGUAGE DEVELOPER DATE C* Thinking Machine 1987 Actor Charles Duff 1988 Aldor Thomas J. Watson Research Center 1990 Amiga E Wouter van Oortmerssen 1993 Action Script Macromedia 1998 BeanShell JCP 1999 AngelScript Andreas Jönsson 2003 Boo Rodrigo B. -

Emacs for Aider: an External Learning System

Emacs For Aider: An External Learning System Jeremy (Ya-Yu) Hsieh B.Sc., University Of Northern British Columbia, 2003 Thesis Submitted In Partial Fulfillment Of The Requirements For The Degree Of Master of Science in Mathematical, Computer, And Physical Sciences (Computer Science) The University Of Northern British Columbia May 2006 © Jeremy (Ya-Yu) Hsieh, 2006 Library and Bibliothèque et 1^1 Archives Canada Archives Canada Published Heritage Direction du Branch Patrimoine de l'édition 395 Wellington Street 395, rue Wellington Ottawa ON K1A 0N4 Ottawa ON K1A 0N4 Canada Canada Your file Votre référence ISBN: 978-0-494-28361-5 Our file Notre référence ISBN: 978-0-494-28361-5 NOTICE: AVIS: The author has granted a non L'auteur a accordé une licence non exclusive exclusive license allowing Library permettant à la Bibliothèque et Archives and Archives Canada to reproduce,Canada de reproduire, publier, archiver, publish, archive, preserve, conserve,sauvegarder, conserver, transmettre au public communicate to the public by par télécommunication ou par l'Internet, prêter, telecommunication or on the Internet,distribuer et vendre des thèses partout dans loan, distribute and sell theses le monde, à des fins commerciales ou autres, worldwide, for commercial or non sur support microforme, papier, électronique commercial purposes, in microform,et/ou autres formats. paper, electronic and/or any other formats. The author retains copyright L'auteur conserve la propriété du droit d'auteur ownership and moral rights in et des droits moraux qui protège cette thèse. this thesis. Neither the thesis Ni la thèse ni des extraits substantiels de nor substantial extracts from it celle-ci ne doivent être imprimés ou autrement may be printed or otherwise reproduits sans son autorisation. -

The Aldor-- Language

The Aldor-- language Simon Thompson and Leonid Timochouk Computing Laboratory, University of Kent Canterbury, CT2 7NF, UK ׺ºØÓÑÔ×ÓҸк ºØ ÑÓÓÙÙº ºÙ Abstract This paper introduces the Aldor-- language, which is a functional programming language with dependent types and a powerful, type-based, overloading mechanism. The language is built on a subset of Aldor, the ‘library compiler’ language for the Axiom computer algebra system. Aldor-- is designed with the intention of incorporating logical reasoning into computer algebra computations. The paper contains a formal account of the semantics and type system of Aldor--; a general discus- sion of overloading and how the overloading in Aldor-- fits into the general scheme; examples of logic within Aldor-- and notes on the implementation of the system. 1 Introduction Aldor-- is a dependently-typed functional programming language, which is based on a subset of Aldor [16]. Aldor is a programming language designed to support program development for symbolic mathe- matics, and was used as the library compiler for the Axiom computer algebra system [8]. Aldor-- embodies an approach to integrating computer algebra and logical reasoning which rests on the formulas-as-types principle, sometimes called the Curry-Howard isomorphism [14], under which logical formulas are represented as types in a system of dependent types. Aldor and Axiom have dependent types but, as was argued in earlier papers [11, 15], because there is no evaluation within the type-checker it is not possible to see the Aldor types as providing a faithful representation of logical assertions. A number of other implementations of dependently-typed systems already exist, including Coq [13], Alfa [1] and the Lego proof assistant [10]. -

Mathemagix User Guide Joris Van Der Hoeven, Grégoire Lecerf

Mathemagix User Guide Joris van der Hoeven, Grégoire Lecerf To cite this version: Joris van der Hoeven, Grégoire Lecerf. Mathemagix User Guide. 2013. hal-00785549 HAL Id: hal-00785549 https://hal.archives-ouvertes.fr/hal-00785549 Preprint submitted on 6 Feb 2013 HAL is a multi-disciplinary open access L’archive ouverte pluridisciplinaire HAL, est archive for the deposit and dissemination of sci- destinée au dépôt et à la diffusion de documents entific research documents, whether they are pub- scientifiques de niveau recherche, publiés ou non, lished or not. The documents may come from émanant des établissements d’enseignement et de teaching and research institutions in France or recherche français ou étrangers, des laboratoires abroad, or from public or private research centers. publics ou privés. Mathemagix User Guide Joris van der Hoeven Grégoire Lecerf Table of contents 1. Introduction to the Mathemagix language ................7 2. Simple examples of Mathemagix programs .................9 2.1. Helloworld ..................................... ....... 9 2.1.1. Compiling and running the program . ......... 9 2.1.2. Running the program in the interpreter . ...........9 2.2. Fibonaccisequences ............................. ......... 10 2.3. Mergesort ...................................... ...... 10 3. Declarations and control structures ................... 13 3.1. Declaration and scope of variables . ............ 13 3.2. Declaration of simple functions . ............ 14 3.3. Macros ......................................... ..... 14 3.4. -

2006-2007 Catalog

ff Northwest Vista College Table of Contents Introduction Welcome from the President I. Academic Calendar 2006-2007 II. Mission, Vision, and Values III. College Overview IV. Getting Started V. Paying for Your Education VI. Supporting Academic Success VII. Workforce and Community Development VIII. Earning a Degree or Certificate IX. Programs of Study X. Course Descriptions XI. Student Handbook | Campus Map XII. Administration, Faculty, and Staff Northwest Vista College Catalog 2006-2007 1 Disclaimer This catalog has been carefully prepared to ensure that all information is as accurate and complete as possible. However, changes may occur which result in deviations from the information which is given. For administrative reasons, some courses listed in the Schedule of Classes may not be offered as announced. As a result, the college reserves the right to make such changes in the schedule, teaching assignments of instructors, class locations, and offerings as are necessary administratively. The information in this catalog was prepared well in advance of its effective date of use. Course descriptions are intended, of necessity, to be brief and general. Therefore, the course descriptions in this catalog are not to be considered as binding in content. 2 Catalog 2006-2007 Northwest Vista College Welcome from the President Welcome to Northwest Vista College!! I want to personally invite you to join our community and to participate in learning experiences that foster your personal and professional growth. When you enter the college campus you will encounter an inviting environment that combines a beautiful hill country setting with attractive, modern facilities. It is our goal that, when you meet the faculty and staff members of the college, your initial positive impression will be enhanced by a friendly greeting and helpful service to get you started on your educational journey. -

The Sage Project: Unifying Free Mathematical Software to Create a Viable Alternative to Magma, Maple, Mathematica and MATLAB

The Sage Project: Unifying Free Mathematical Software to Create a Viable Alternative to Magma, Maple, Mathematica and MATLAB Bur¸cinEr¨ocal1 and William Stein2 1 Research Institute for Symbolic Computation Johannes Kepler University, Linz, Austria Supported by FWF grants P20347 and DK W1214. [email protected] 2 Department of Mathematics University of Washington [email protected] Supported by NSF grant DMS-0757627 and DMS-0555776. Abstract. Sage is a free, open source, self-contained distribution of mathematical software, including a large library that provides a unified interface to the components of this distribution. This library also builds on the components of Sage to implement novel algorithms covering a broad range of mathematical functionality from algebraic combinatorics to number theory and arithmetic geometry. Keywords: Python, Cython, Sage, Open Source, Interfaces 1 Introduction In order to use mathematical software for exploration, we often push the bound- aries of available computing resources and continuously try to improve our imple- mentations and algorithms. Most mathematical algorithms require basic build- ing blocks, such as multiprecision numbers, fast polynomial arithmetic, exact or numeric linear algebra, or more advanced algorithms such as Gr¨obnerbasis computation or integer factorization. Though implementing some of these basic foundations from scratch can be a good exercise, the resulting code may be slow and buggy. Instead, one can build on existing optimized implementations of these basic components, either by using a general computer algebra system, such as Magma, Maple, Mathematica or MATLAB, or by making use of the many high quality open source libraries that provide the desired functionality. These two approaches both have significant drawbacks. -

1 Home PDF (Letter Size) PDF (Legal Size) Computer Algebra Independent Integration Tests

1 home PDF (letter size) PDF (legal size) Computer Algebra Independent Integration Tests Nasser M. Abbasi September 17, 2021 Compiled on September 17, 2021 at 9:43am [public] Contents 1 FULL CAS integration tests 2 2 Specialized integration tests 3 2.1 Waldek Hebisch’s Yet another integration test . 3 2.2 Elementary Algebraic integrals . 3 2.3 Sam Blake’s IntegrateAlgebraic function Test . 4 2.4 LITE CAS integration tests . 4 3 Note on build system 5 3.1 My PC during running the tests . 5 4 my cheat sheet notes 6 4.1 Fricas . 6 4.2 sympy . 14 4.3 sagemath hints . 21 4.4 Trying rubi in sympy . 21 4.5 build logs . 23 These reports and the web pages themselves were written in LATEX using TeXLive distribution and compiled to HTML using TeX4ht. 1 FULL CAS integration tests These reports show the result of using full CAS test suite on different CAS’s at same time. This compares how one CAS perform against another. The test suite uses the full Rubi test files provided thanks to Albert Rich. 1. Summer 2021 build Completed. Started May 15, 2021, finished July 28, 2021. Uses Rubi 4.16.1 input data. Number of integrals [71,994]. With grading for all CAS systems except for Mupad. Eight CAS systems were tested : Rubi 4.16.1, Mathematica 12.3, Maple 2021.1, Maxima 5.44, Fricas 1.3.7, Sympy 1.8 under Python 3.7.9, Giac/XCAS 1.7 and Mupad/Matlab 2021a and Sagemath 9.3. 2 3 Maxima, Fricas and Giac were used from within Sagemath 9.3 interface. -

Axiom Bibliography 1

The 30 Year Horizon Manuel Bronstein W illiam Burge T imothy Daly James Davenport Michael Dewar Martin Dunstan Albrecht F ortenbacher P atrizia Gianni Johannes Grabmeier Jocelyn Guidry Richard Jenks Larry Lambe Michael Monagan Scott Morrison W illiam Sit Jonathan Steinbach Robert Sutor Barry T rager Stephen W att Jim W en Clifton W illiamson Volume Bibliography: Axiom Literature Citations i Portions Copyright (c) 2005 Timothy Daly The Blue Bayou image Copyright (c) 2004 Jocelyn Guidry Portions Copyright (c) 2004 Martin Dunstan Portions Copyright (c) 2007 Alfredo Portes Portions Copyright (c) 2007 Arthur Ralfs Portions Copyright (c) 2005 Timothy Daly Portions Copyright (c) 1991-2002, The Numerical ALgorithms Group Ltd. All rights reserved. This book and the Axiom software is licensed as follows: Redistribution and use in source and binary forms, with or without modification, are permitted provided that the following conditions are met: - Redistributions of source code must retain the above copyright notice, this list of conditions and the following disclaimer. - Redistributions in binary form must reproduce the above copyright notice, this list of conditions and the following disclaimer in the documentation and/or other materials provided with the distribution. - Neither the name of The Numerical ALgorithms Group Ltd. nor the names of its contributors may be used to endorse or promote products derived from this software without specific prior written permission. THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" -

SAT Solving By

SWANSEA UNIVERSITY REPORT SERIES The OKlibrary: Introducing a “holistic” research platform for (generalised) SAT solving by Oliver Kullmann Report # CSR 1-2009 The OKlibrary: Introducing a “holistic” research platform for (generalised) SAT solving Oliver Kullmann∗ Computer Science Department Swansea University Swansea, SA2 8PP, UK email: [email protected] http://cs.swan.ac.uk/~csoliver January 12, 2009 Abstract The OKlibrary is introduced, an open-source library supporting research and development in the area of generalised SAT-solving, and available at http://www.ok-sat-library.org. We discuss history, motivation and ar- chitecture of the library, and outline its current extent. The differences to ex- isting platforms are explained: We understand the approach of the OKlibrary as “holistic”, so that the OKlibrary includes for example not only program code, but also research plans and methods for processing and evaluation of experiments (as well as experimental results themselves). This leads to the understanding of the OKlibrary as an “IRE”, an “integrated research envi- ronment” (however not building a single monolithic block, but based on the Unix/Linux tradition of a “tool chest”). “Generalised satisfiability problems” are understood as a generalisation of SAT towards CSP, and we discuss the basic ideas. To conclude we state 10 research problems which we regard as fundamental for advancing core SAT solving. 1 Introduction The “SAT problem” is a key problem in theoretical computer science, and in the last 10 years also has become an important applied area. In its simplest form (“boolean CNF-SAT”), stated as a combinatorial problem, we consider clauses C as finite sets of integers not containing at the same time z and −z for some z ∈ Z (and thus 0 ∈/ C). -

Aldor User Guide

Aldor User Guide by Aldor.org Aldor User Guide c 2000 The Numerical Algorithms Group Limited. c 2002 Aldor.org. All rights reserved. No part of this Manual may be reproduced, transcribed, stored in a retrieval system, translated into any language or computer language or transmitted in any form or by any means, electronic, mechanical, photocopying, recording or otherwise, without the prior written permission of the copyright owner. The copyright owner gives no warranties and makes no representations about the con- tents of this Manual and specifically disclaims any implied warranties of merchantabil- ity or fitness for any purpose. The copyright owner reserves the right to revise this Manual and to make changes from time to time in its contents without notifying any person of such revisions or changes. Substantial portions of this manual have been published earlier in the vol- ume \Axiom Library Compiler User Guide," The Numerical Algorithms Group 1994, ISBN 1-85206-106-5. Aldor was originally developed, under the working name of A], by the Research Di- vision of International Business Machines Corporation, Yorktown Heights, New York, USA. Aldor, AXIOM and the AXIOM logo are trademarks of NAG. NAG is a registered trademark of the Numerical Algorithms Group Limited. All other trademarks are acknowledged. Acknowledgements Aldor was originally developed at IBM Yorktown Heights, in various versions from 1985 to 1994, as an extension language for the AXIOM computer algebra system. Aldor was further refined and extended at the Numerical Algorithms Group at Oxford, before the formation of Al- dor.org in 2002. The principal designer of the language was Stephen Watt, now Profes- sor of Computer Science at the University of Western Ontario.