Cgweek Young Researchers Forum 2018

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Equilateral Sets in Lp

Equilateral sets in $l_p^n$. Noga Alon, Pavel Pudlák. May 23, 2002. Abstract: We show that for every odd integer $p \ge 1$ there is an absolute positive constant $c_p$, so that the maximum cardinality of a set of vectors in $\mathbb{R}^n$ such that the $l_p$ distance between any pair is precisely 1, is at most $c_p n \log n$. We prove some upper bounds for other $l_p$ norms as well. 1 Introduction. An equilateral set (or a simplex) in a metric space is a set $A$ such that the distance between any pair of distinct members of $A$ is $b$, where $b \ne 0$ is a constant. Trivially, the maximum cardinality of such a set in $\mathbb{R}^n$ with respect to the (usual) $l_2$-norm is $n + 1$. Somewhat surprisingly, the situation is far more complicated for the other $l_p$ norms. For a finite $p > 1$, the $l_p$-distance between two points $\vec{a} = (a_1, \ldots, a_n)$ and $\vec{b} = (b_1, \ldots, b_n)$ in $\mathbb{R}^n$ is $\|\vec{a} - \vec{b}\|_p = \left(\sum_{k=1}^{n} |a_k - b_k|^p\right)^{1/p}$. The $l_\infty$-distance between $\vec{a}$ and $\vec{b}$ is $\|\vec{a} - \vec{b}\|_\infty = \max_{1 \le k \le n} |a_k - b_k|$. For all $p \in [1, \infty]$, $l_p^n$ denotes the space $\mathbb{R}^n$ with the $l_p$-distance. Let $e(l_p^n)$ denote the maximum possible cardinality of an equilateral set in $l_p^n$. Petty [6] proved that the maximum possible cardinality of an equilateral set in $l_p^n$ is at most $2^n$ and that equality holds only in $l_\infty^n$, as shown by the set of all $2^n$ vectors with 0,1-coordinates.
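The definitions in the excerpt above can be checked numerically on small cases. The following is a minimal sketch, not code from the paper: the helper names `lp_distance`, `is_equilateral`, `cube_vertices`, and `standard_basis` are illustrative assumptions. It confirms that the 0/1 vectors of the cube are pairwise at distance 1 in the maximum norm, and that the standard basis vectors are pairwise equidistant for a finite p.

```python
from itertools import combinations, product

def lp_distance(a, b, p):
    """l_p distance for finite p >= 1; pass float('inf') for the max norm."""
    diffs = [abs(x - y) for x, y in zip(a, b)]
    if p == float("inf"):
        return max(diffs)
    return sum(d ** p for d in diffs) ** (1.0 / p)

def is_equilateral(points, p, tol=1e-9):
    """True if all pairwise l_p distances between the points agree (up to tol)."""
    dists = [lp_distance(a, b, p) for a, b in combinations(points, 2)]
    return all(abs(d - dists[0]) <= tol for d in dists)

def cube_vertices(n):
    """All 2^n vectors with 0/1 coordinates (hypothetical helper)."""
    return list(product((0, 1), repeat=n))

def standard_basis(n):
    """The n standard basis vectors of R^n (hypothetical helper)."""
    return [tuple(1 if i == j else 0 for j in range(n)) for i in range(n)]

if __name__ == "__main__":
    inf = float("inf")
    # The 2^3 cube vertices are equilateral in the max norm ...
    print(len(cube_vertices(3)), is_equilateral(cube_vertices(3), inf))
    # ... but not in the Euclidean norm, where edges and diagonals differ.
    print(is_equilateral(cube_vertices(3), 2))
    # Standard basis vectors are pairwise at l_p distance 2^(1/p).
    print(is_equilateral(standard_basis(4), 3))
```

A brute-force check like this only verifies that a given set is equilateral; it says nothing about the upper bounds, which are the hard part of the problem.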

Equilateral Dimension of the Rectilinear Space

Designs, Codes and Cryptography, 21, 149-164, 2000. © 2000 Kluwer Academic Publishers, Boston. Manufactured in The Netherlands. Equilateral Dimension of the Rectilinear Space. JACK KOOLEN [email protected] CWI, Kruislaan 413, 1098 SJ Amsterdam, The Netherlands. MONIQUE LAURENT [email protected] CWI, Kruislaan 413, 1098 SJ Amsterdam, The Netherlands. ALEXANDER SCHRIJVER, CWI, Kruislaan 413, 1098 SJ Amsterdam, The Netherlands. Abstract. It is conjectured that there exist at most $2k$ equidistant points in the $k$-dimensional rectilinear space. This conjecture has been verified for $k \le 3$; we show here its validity in dimension $k = 4$. We also discuss a number of related questions. For instance, what is the maximum number of equidistant points lying in the hyperplane $\sum_{i=1}^{k} x_i = 0$? If this number would be equal to $k$, then the above conjecture would follow. We show, however, that this number is $\ge k + 1$ for $k \ge 4$. Keywords: Touching number, rectilinear space, equidistant set, cut metric, design, touching simplices. 1. Introduction. 1.1. The Equilateral Problem. Following Blumenthal [2], a subset $X$ of a metric space $M$ is said to be equilateral (or equidistant) if any two distinct points of $X$ are at the same distance; then, the equilateral dimension $e(M)$ of $M$ is defined as the maximum cardinality of an equilateral set in $M$. Equilateral sets have been extensively investigated in the literature for a number of metric spaces, including spherical, hyperbolic, elliptic spaces and real normed spaces. Their structure is well understood in the Euclidean, spherical and hyperbolic spaces (cf. [2]) and results about equiangular sets of lines are given by van Lint and Seidel [25] and Lemmens and Seidel [16].

Advances in Metric Embedding Theory

Advances in Mathematics 228 (2011) 3026–3126 www.elsevier.com/locate/aim Advances in metric embedding theory. Ittai Abraham a,1, Yair Bartal b,∗,2, Ofer Neiman c,3. a Microsoft Research, USA; b Hebrew University, Jerusalem, Israel; c Ben-Gurion University, Israel. Received 16 August 2010; accepted 11 August 2011. Available online 9 September 2011. Communicated by Gil Kalai. Abstract: Metric Embedding plays an important role in a vast range of application areas such as computer vision, computational biology, machine learning, networking, statistics, and mathematical psychology, to name a few. The mathematical theory of metric embedding is well studied in both pure and applied analysis and has more recently been a source of interest for computer scientists as well. Most of this work is focused on the development of bi-Lipschitz mappings between metric spaces. In this paper we present new concepts in metric embeddings as well as new embedding methods for metric spaces. We focus on finite metric spaces, however some of the concepts and methods are applicable in other settings as well. One of the main cornerstones in finite metric embedding theory is a celebrated theorem of Bourgain which states that every finite metric space on n points embeds in Euclidean space with O(log n) distortion. Bourgain’s result is best possible when considering the worst case distortion over all pairs of points in the metric space. Yet, it is natural to ask: can an embedding do much better in terms of the average distortion? Indeed, in most practical applications of metric embedding the main criteria for the quality of an embedding is its average distortion over all pairs.

Variations of a Combinatorial Problem on Finite Sets Bas Lemmens

Variations of a combinatorial problem on finite sets. Bas Lemmens, Mathematics Institute, University of Warwick, CV4 7AL Coventry, United Kingdom. E-mail: [email protected] "Try, try again", is popular advice. It is good advice. The insect, the mouse, and the man follow it; but if one follows it with more success than the others it is because he varies his problem more intelligently. This strategy for solving mathematical problems was recommended to us by George Pólya [10, p. 209]. In this article we will take Pólya's suggestion and discuss two variations of a combinatorial problem on finite sets. These variations were found by Koolen, Laurent, and Schrijver [9], and reveal a surprising connection with geometry. At present however, the problem is still unresolved. Perhaps you can find another variation that will lead to its solution. The problem we are interested in is a generalization of Fisher's inequality to multisets. We shall see that such a generalization is closely related to sets in $\mathbb{R}^n$ in which the taxicab (or Manhattan) distance between any two distinct points is the same. On the other hand, it will become clear to us in the third section that the problem can also be reformulated in terms of pairwise touching simplices. In fact, we shall see that our problem is equivalent to determining the maximum number of translated copies of a regular n-dimensional simplex that can be placed in $\mathbb{R}^n$ such that any two distinct ones touch but do not overlap. Figure 1 shows a possible configuration of three 2-dimensional simplices (equilateral triangles) in the plane.

FEW DISTANCE SETS in Lp SPACES and Lp PRODUCT SPACES 1

FEW DISTANCE SETS IN $\ell_p$ SPACES AND $\ell_p$ PRODUCT SPACES. RICHARD CHEN, FENG GUI, JASON TANG, AND NATHAN XIONG. Abstract. Kusner asked if $n + 1$ points is the maximum number of points in $\mathbb{R}^n$ such that the $\ell_p$ distance between any two points is 1. We present an improvement to the best known upper bound when $p$ is large in terms of $n$, as well as a generalization of the bound to $s$-distance sets. We also study equilateral sets in the $\ell_p$ sums of Euclidean spaces, deriving upper bounds on the size of an equilateral set for when $p = \infty$, $p$ is even, and for any $1 \le p < \infty$. 1. Introduction. 1.1. Background. A classic exercise in linear algebra asks for the maximum number of points in $\mathbb{R}^n$ such that the pairwise distances take only two values. One can associate a polynomial to each point in the set such that the polynomials are linearly independent. Then, one can show that the polynomials all lie in a subspace of dimension $(n + 1)(n + 4)/2$. Since the number of linearly independent vectors cannot exceed the dimension of the subspace, the cardinality of the set is at most $(n + 1)(n + 4)/2$. This beautiful argument was found by Larman, Rogers, and Seidel [LRS77] in 1977, illustrating the power of algebraic techniques in combinatorics. We can ask the much more general question: "In a metric space $X$, what is the maximum number of points such that the pairwise distances take only $s$ values?" We use $e_s(X)$, or just $e(X)$ if $s = 1$, to denote the answer to this question (by convention, we do not count 0 as a distance).
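The dimension count quoted in the excerpt above can be made explicit. The following is a sketch of one standard way to carry out the argument; the symbols $S$, $a$, $b$, and $F_x$ are notation assumed here for illustration and do not appear in the excerpt.

```latex
% Let S \subset \mathbb{R}^n be a two-distance set with distances a, b > 0.
% Associate to each x \in S the polynomial
\[
  F_x(y) = \bigl(\|y - x\|^2 - a^2\bigr)\bigl(\|y - x\|^2 - b^2\bigr),
  \qquad y \in \mathbb{R}^n .
\]
% Then F_x(x) = a^2 b^2 \neq 0, while F_x(x') = 0 for every x' \in S, x' \neq x.
% Evaluating a relation \sum_{x \in S} c_x F_x = 0 at y = x' gives
% c_{x'} a^2 b^2 = 0, so the polynomials F_x are linearly independent.
%
% Expanding \|y - x\|^2 = \|y\|^2 - 2\langle x, y\rangle + \|x\|^2 shows that
% every F_x lies in the span of
\[
  \|y\|^4, \qquad \|y\|^2 y_i \ (1 \le i \le n), \qquad
  y_i y_j \ (1 \le i \le j \le n), \qquad y_i \ (1 \le i \le n), \qquad 1 ,
\]
% a family of
\[
  1 + n + \frac{n(n+1)}{2} + n + 1 = \frac{(n+1)(n+4)}{2}
\]
% polynomials, hence |S| \le (n+1)(n+4)/2.
```

For $n = 2$ this gives the bound $9$; the bound is an upper estimate only and need not be attained.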

On Some Problems in Combinatorial Geometry

On some problems in combinatorial geometry. Nóra Frankl. A thesis submitted for the degree of Doctor of Philosophy, Department of Mathematics, London School of Economics and Political Science, May 2020. Declaration: I certify that the thesis I have presented for examination for the PhD degree of the London School of Economics and Political Science is my own work, with the following exceptions. Chapter 2 is based on the paper `Almost sharp bounds on the number of discrete chains in the plane' written with Andrey Kupavskii. Chapter 3 is based on the paper `Embedding graphs in Euclidean space' written with Andrey Kupavskii and Konrad Swanepoel. Chapter 4 is based on the paper `Almost monochromatic sets and the chromatic number of the plane' written with Tamás Hubai and Dömötör Pálvölgyi. Chapter 5 is based on the paper `Nearly k-distance sets' written with Andrey Kupavskii. I warrant that this authorization does not, to the best of my belief, infringe the rights of any third party. Abstract: Combinatorial geometry is the study of combinatorial properties of geometric objects. In this thesis we consider several problems in this area. 1. We determine the maximum number of paths with $k$ edges in a unit-distance graph in the plane almost exactly. It is only for $k \equiv 1 \pmod 3$ that the answer depends on the maximum number of unit distances in a set of $n$ points which is unknown. This can be seen as a generalisation of the Erdős unit distance problem, and was recently suggested to study by Palsson, Senger and Sheffer (2019).

Dictionary of Distances

DICTIONARY OF DISTANCES. ELENA DEZA, Moscow Pedagogical State University and Donghua University. MICHEL-MARIE DEZA, Ecole Normale Superieure, Paris, and Shanghai University. Amsterdam • Boston • Heidelberg • London • New York • Oxford • Paris • San Diego • San Francisco • Singapore • Sydney • Tokyo. ELSEVIER, Radarweg 29, PO Box 211, 1000 AE Amsterdam, The Netherlands; The Boulevard, Langford Lane, Kidlington, Oxford OX5 1GB, UK. First edition 2006. Copyright © 2006 Elsevier B.V. All rights reserved. No part of this publication may be reproduced, stored in a retrieval system or transmitted in any form or by any means electronic, mechanical, photocopying, recording or otherwise without the prior written permission of the publisher. Permissions may be sought directly from Elsevier’s Science & Technology Rights Department in Oxford, UK: phone (+44) (0) 1865 843830; fax (+44) (0) 1865 853333; Email: [email protected]. Alternatively you can submit your request online by visiting the Elsevier web site at http://elsevier.com/locate/permissions, and selecting: Obtaining permission to use Elsevier material. Notice: No responsibility is assumed by the publisher for any injury and/or damage to persons or property as a matter of products liability, negligence or otherwise, or from any use or operation of any methods, products, instructions or ideas contained in the material herein. Because of rapid advances in the medical sciences, in particular, independent verification of diagnoses and drug dosages …

Combinatorial Distance Geometry in Normed Spaces

Combinatorial distance geometry in normed spaces. Konrad J. Swanepoel. arXiv:1702.00066v2 [math.MG] 3 May 2017. Abstract: We survey problems and results from combinatorial geometry in normed spaces, concentrating on problems that involve distances. These include various properties of unit-distance graphs, minimum-distance graphs, diameter graphs, as well as minimum spanning trees and Steiner minimum trees. In particular, we discuss translative kissing (or Hadwiger) numbers, equilateral sets, and the Borsuk problem in normed spaces. We show how to use the angular measure of Peter Brass to prove various statements about Hadwiger and blocking numbers of convex bodies in the plane, including some new results. We also include some new results on thin cones and their application to distinct distances and other combinatorial problems for normed spaces. Contents: 1 Introduction (1.1 Outline; 1.2 Terminology and Notation). 2 The Hadwiger number (translative kissing number) and relatives (2.1 The Hadwiger number; 2.2 Lattice Hadwiger number; 2.3 Strict Hadwiger number; 2.4 One-sided Hadwiger number; 2.5 Blocking number). 3 Equilateral sets (3.1 Equilateral sets in $\ell_\infty$-sums and the Borsuk problem; 3.2 Small maximal equilateral sets; 3.3 Subequilateral sets). 4 Minimum-distance graphs (4.1 Maximum degree and maximum number of edges of minimum-distance graphs; 4.2 Minimum degree of minimum-distance graphs; 4.3 Chromatic number and independence number of minimum-distance graphs). 5 Unit-distance graphs and diameter graphs (5.1 Maximum number of edges of unit-distance and diameter graphs; …)

Advances in Metric Embedding Theory

Advances in Metric Embedding Theory. Ittai Abraham (Microsoft Research), Yair Bartal (Hebrew University), Ofer Neiman (Princeton University and CCI). Abstract: Metric Embedding plays an important role in a vast range of application areas such as computer vision, computational biology, machine learning, networking, statistics, and mathematical psychology, to name a few. The mathematical theory of metric embedding is well studied in both pure and applied analysis and has more recently been a source of interest for computer scientists as well. Most of this work is focused on the development of bi-Lipschitz mappings between metric spaces. In this paper we present new concepts in metric embeddings as well as new embedding methods for metric spaces. We focus on finite metric spaces, however some of the concepts and methods are applicable in other settings as well. One of the main cornerstones in finite metric embedding theory is a celebrated theorem of Bourgain which states that every finite metric space on n points embeds in Euclidean space with O(log n) distortion. Bourgain's result is best possible when considering the worst case distortion over all pairs of points in the metric space. Yet, it is natural to ask: can an embedding do much better in terms of the average distortion? Indeed, in most practical applications of metric embedding the main criteria for the quality of an embedding is its average distortion over all pairs. In this paper we provide an embedding with constant average distortion for arbitrary metric spaces, while maintaining the same worst case bound provided by Bourgain's theorem.

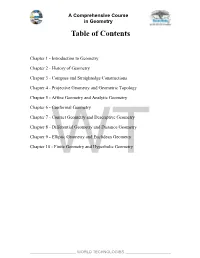

Table of Contents

WORLD TECHNOLOGIES

A Comprehensive Course in Geometry. Table of Contents: Chapter 1 - Introduction to Geometry; Chapter 2 - History of Geometry; Chapter 3 - Compass and Straightedge Constructions; Chapter 4 - Projective Geometry and Geometric Topology; Chapter 5 - Affine Geometry and Analytic Geometry; Chapter 6 - Conformal Geometry; Chapter 7 - Contact Geometry and Descriptive Geometry; Chapter 8 - Differential Geometry and Distance Geometry; Chapter 9 - Elliptic Geometry and Euclidean Geometry; Chapter 10 - Finite Geometry and Hyperbolic Geometry.

A Course in Boolean Algebra. Table of Contents: Chapter 1 - Introduction to Boolean Algebra; Chapter 2 - Boolean Algebras Formally Defined; Chapter 3 - Negation and Minimal Negation Operator; Chapter 4 - Sheffer Stroke and Zhegalkin Polynomial; Chapter 5 - Interior Algebra and Two-Element Boolean Algebra; Chapter 6 - Heyting Algebra and Boolean Prime Ideal Theorem; Chapter 7 - Canonical Form (Boolean algebra); Chapter 8 - Boolean Algebra (Logic) and Boolean Algebra (Structure).

A Course in Riemannian Geometry. Table of Contents: Chapter 1 - Introduction to Riemannian Geometry; Chapter 2 - Riemannian Manifold and Levi-Civita Connection; Chapter 3 - Geodesic and Symmetric Space; Chapter 4 - Curvature of Riemannian Manifolds and Isometry; Chapter 5 - Laplace–Beltrami Operator; Chapter 6 - Gauss's Lemma; Chapter 7 - Types of Curvature in Riemannian Geometry; Chapter 8 - Gauss–Codazzi Equations; Chapter 9 - Formulas …

Pacific Journal of Mathematics Vol 228 Issue 2, Dec 2006

PACIFIC JOURNAL OF MATHEMATICS, Volume 228, No. 2, December 2006. http://www.pjmath.org. Founded in 1951 by E. F. Beckenbach (1906–1982) and F. Wolf (1904–1989). EDITORS: V. S. Varadarajan (Managing Editor), Department of Mathematics, University of California, Los Angeles, CA 90095-1555, pacifi[email protected]; Vyjayanthi Chari, Department of Mathematics, University of California, Riverside, CA 92521-0135, [email protected]; Sorin Popa, Department of Mathematics, University of California, Los Angeles, CA 90095-1555, [email protected]; Robert Finn, Department of Mathematics, Stanford University, Stanford, CA 94305-2125, fi[email protected]; Darren Long, Department of Mathematics, University of California, Santa Barbara, CA 93106-3080, [email protected]; Jie Qing, Department of Mathematics, University of California, Santa Cruz, CA 95064, [email protected]; Kefeng Liu, Jiang-Hua Lu, Jonathan Rogawski, Department of Mathematics …

Equilateral Sets in Finite-Dimensional Normed Spaces

Equilateral sets in finite-dimensional normed spaces. Konrad J. Swanepoel∗. arXiv:math/0406264v1 [math.MG] 14 Jun 2004. Abstract: This is an expository paper on the largest size of equilateral sets in finite-dimensional normed spaces. Contents: 1 Introduction (1.1 Definitions; 1.2 Kusner’s questions for the $\ell_p$ norms). 2 General upper bounds (2.1 The theorem of Petty and Soltan; 2.2 Strictly convex norms). 3 Simple lower bounds (3.1 General Minkowski spaces; 3.2 $\ell_p^n$ with $1 < p < 2$). 4 Cayley-Menger Theory (4.1 The Cayley-Menger determinant; 4.2 Embedding an almost equilateral $n$-simplex into $\ell_2^n$). 5 The Theorem of Brass and Dekster. 6 The Linear Algebra Method (6.1 Linear independence; 6.2 Rank arguments). 7 Approximation Theory: Smyth’s approach. 8 The best known upper bound for $e(\ell_1^n)$. 9 Final remarks (9.1 Infinite dimensions; 9.2 Generalizations). ∗Department of Mathematics, Applied Mathematics and Astronomy, University of South Africa, PO Box 392, Pretoria 0003, South Africa. E-mail: [email protected]. This material is based upon work supported by the National Research Foundation under Grant number 2053752. These are lecture notes for a 4-hour course given on April 20-23, 2004, at the Department of Mathematical Analysis, University of Seville. I thank the University of Seville for their kind invitation and generous hospitality. 1 Introduction. In this paper I discuss some of the more important known results on equilateral sets in finite-dimensional normed spaces with an analytical flavour. I have omitted a discussion of some of the low-dimensional results of a discrete geometric nature.