MySQL Cluster – Evaluation and Tests, October 2, 2012



Recommended publications


MySQL Replication Tutorial

Lars Thalmann, Technical Lead, Replication, Backup, and Engine Technology
Mats Kindahl, Lead Developer, Replication Technology
MySQL Conference and Expo 2008

Concepts: MySQL Replication

Why?
1. High availability: possibility of fail-over
2. Load-balancing/scale-out: query multiple servers
3. Off-site processing: don't disturb master

How?
Snapshots (backup)
1. Client program mysqldump, with log coordinates
2. Using backup: InnoDB, NDB
Binary log
1. Replication: asynchronous pushing to slave
2. Point-in-time recovery: roll-forward

Terminology

Master MySQL Server
• Changes data
• Has binlog turned on
• Pushes binlog events to slave after slave has requested them

Slave MySQL Server
• Main control point of replication
• Asks master for replication log
• Gets binlog event from master

Binary log
• Log of everything executed
• Divided into transactional components
• Used for replication and point-in-time recovery

Synchronous replication
• A transaction is not committed until the data has been replicated (and applied)
• Safer, but slower
• This is available in MySQL Cluster

Asynchronous replication
• A transaction is replicated after it has been committed
• Faster, but you can in some cases lose transactions if master fails
• Easy to set up between MySQL servers

Configuring Replication

Required configuration – my.cnf
• Replication master: log-bin, server_id
• Replication slave: server_id

Optional items in my.cnf – What -
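
The required settings named in the excerpt above (log-bin and server_id on the master, server_id on the slave) can be sketched as generated my.cnf fragments. This is a minimal illustration in Python and not part of the tutorial: the helper name `replication_cnf` and the example server IDs 1 and 2 are assumptions, and a real deployment would likely need further options.

```python
import configparser
import io


def replication_cnf(server_id: int, is_master: bool) -> str:
    """Render a minimal [mysqld] section for a replication master or slave.

    Hypothetical helper for illustration; only the option names
    (log-bin, server_id) come from the tutorial excerpt.
    """
    # allow_no_value lets us emit the bare "log-bin" flag without "= value".
    cfg = configparser.ConfigParser(allow_no_value=True)
    cfg["mysqld"] = {"server_id": str(server_id)}
    if is_master:
        # Only the master needs the binary log turned on.
        cfg["mysqld"]["log-bin"] = None
    buf = io.StringIO()
    cfg.write(buf)
    return buf.getvalue()


# Example server IDs are assumptions; each server needs its own unique ID.
master_cnf = replication_cnf(server_id=1, is_master=True)
slave_cnf = replication_cnf(server_id=2, is_master=False)
print(master_cnf)
print(slave_cnf)
```

The sketch only writes text; copying the fragments into each server's my.cnf and restarting the servers is left to the operator.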

Beyond Relational Databases

EXPERT ANALYSIS BY MARCOS ALBE, SUPPORT ENGINEER, PERCONA

Beyond Relational Databases: A Focus on Redis, MongoDB, and ClickHouse

Many of us use and love relational databases… until we try and use them for purposes which aren’t their strong point. Queues, caches, catalogs, unstructured data, counters, and many other use cases, can be solved with relational databases, but are better served by alternative options. In this expert analysis, we examine the goals, pros and cons, and the good and bad use cases of the most popular alternatives on the market, and look into some modern open source implementations.

Beyond Relational Databases

Developers frequently choose the backend store for the applications they produce. Amidst dozens of options, buzzwords, industry preferences, and vendor offers, it’s not always easy to make the right choice… Even with a map!

[Figure: map of data platforms] -

Two Node MySQL Cluster

CONTENTS
1.0 EXECUTIVE SUMMARY
2.0 BUSINESS CHALLENGES
3.0 MYSQL CLUSTER
3.1 CLIENTS/APIS
3.2 SQL NODE
3.3 DATA NODE
3.4 NDB MANAGEMENT NODE
3.5 CHALLENGES
3.6 SOLUTION
4.0 REFERENCES

1.0 EXECUTIVE SUMMARY
This white paper describes the challenges involved in deploying a 2-node highly available MySQL Cluster and proposes a solution. For the benefit of readers, it also briefly describes the main components of MySQL Cluster that are necessary to understand the paper overall. The solution relies on the Linux HA framework (Heartbeat/Pacemaker), so the white paper is best understood with some knowledge of that framework.

2.0 BUSINESS CHALLENGES
MySQL Cluster demands at least 4 nodes to deploy a highly available MySQL database cluster. The typical configuration of an enterprise application is a 2-node solution (Active-Standby or Active-Active mode). The challenge lies in fitting the MySQL Cluster nodes onto the 2 nodes that offer the application services, and in making it work in that configuration with no single point of failure.

3.0 MYSQL CLUSTER
This section briefly introduces the important actors and their roles in the overall MySQL Cluster. For more information, the reader can refer to the MySQL reference documentation on its official site (http://dev.mysql.com/doc/index.html). MySQL Cluster is a technology that enables clustering of in-memory databases in a “shared-nothing system”. -

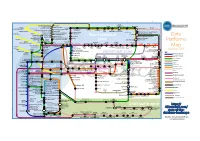

Data Platforms Map from 451 Research

[Figure: Data Platforms Map, January 2016, divided into relational and non-relational zones, with a key distinguishing general purpose from specialist analytic products] -

MariaDB Presentation

THE VALUE OF OPEN SOURCE
Michael "Monty" Widenius, Entrepreneur, MariaDB Hacker, MariaDB CTO, MariaDB Corporation AB
2019-09-25, Seoul

11 Reasons Open Source is Better than Closed Source
● Using open standards (no lock-in to proprietary standards)
● Resource friendly; OSS software tends to work on old hardware
● Lower cost; usually 1/10 of closed source software
● No cost for testing the full software
● Better documentation and more troubleshooting resources
● Better support, in many cases directly from the developers
● Better security, auditability (no trap doors and more eyeballs)
● Better quality; developed together with users
● Better customizability; you can also participate in development
● No vendor lock-in; more than one vendor can give support
● When using open source, you take charge of your own future

Note that using open source does not mean that you have to become a software producer!

OPEN SOURCE, THE GOOD AND THE BAD
● Open source is a better way to develop software
● More developers
● More spread
● Better code (in many cases)
● Works well for projects that can be freely used by a lot of companies in their production or products.
● It's very hard to create a profitable company developing an open source project.
● Not enough money to pay developers.
● Hard to get money and investors for most projects (except for infrastructure projects like libraries or daemon services).

OPEN SOURCE IS NATURAL OR WHY OPEN SOURCE WORKS
● You use open source because it's less expensive (and re-usable)
● You solve your own problems and get free help and development efforts from others while doing it. -

GIS Features in MariaDB and MySQL: What Has Happened in Recent Years?

Hartmut Holzgraefe, Principal Support Engineer at MariaDB Inc.
[email protected]
August 20, 2016

Overview
1 GIS Introduction
2 MySQL GIS History
3 Other Open Source GIS Databases
4 Performance
5 The End ...

GIS Data Types
Geospatial Information System (GIS) data types describe geometries in a (usually) two-dimensional space. There are several different geometric subtypes:
• Simple types: POINT, LINESTRING, POLYGON, GEOMETRY
• Collection types: MULTIPOINT, MULTILINESTRING, MULTIPOLYGON, GEOMETRYCOLLECTION

Spatial Properties
Spatial properties of a geometry can be:
• Coordinates
• Length
• Area
• Is Closed
• Bounding Rectangle
• ...

Spatial Relationships
The most important spatial relationships between two geometries:

Examples -
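
The spatial properties listed above (length, area, is closed, bounding rectangle) can be illustrated with a small sketch. It is plain Python over coordinate lists, not the MySQL or MariaDB implementation; the function names and the sample square are assumptions made for the example, and the area uses the standard shoelace formula for a simple closed ring.

```python
from math import hypot
from typing import List, Tuple

Point = Tuple[float, float]


def length(line: List[Point]) -> float:
    """Sum of the straight-line distances between consecutive points."""
    return sum(hypot(x2 - x1, y2 - y1)
               for (x1, y1), (x2, y2) in zip(line, line[1:]))


def is_closed(line: List[Point]) -> bool:
    """A linestring is closed when its first and last points coincide."""
    return len(line) > 1 and line[0] == line[-1]


def area(ring: List[Point]) -> float:
    """Area enclosed by a simple closed ring (shoelace formula)."""
    twice_area = sum(x1 * y2 - x2 * y1
                     for (x1, y1), (x2, y2) in zip(ring, ring[1:]))
    return abs(twice_area) / 2


def bounding_rectangle(points: List[Point]) -> Tuple[float, float, float, float]:
    """Smallest axis-aligned rectangle as (min_x, min_y, max_x, max_y)."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return (min(xs), min(ys), max(xs), max(ys))


# Hypothetical sample geometry: a 2 x 2 square given as a closed ring.
square = [(0.0, 0.0), (2.0, 0.0), (2.0, 2.0), (0.0, 2.0), (0.0, 0.0)]
print(length(square), area(square), is_closed(square), bounding_rectangle(square))
```

For the sample square this gives a length of 8, an area of 4, a closed ring, and a bounding rectangle from (0, 0) to (2, 2).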

MariaDB Subscription Services Agreement

MARIADB CORPORATION MariaDB Subscription Services

MariaDB Subscription customers have access to technical support services including Problem Resolution Support, Engineering Support, Consultative Support, Remote Login Support, and Telephone Support for the MariaDB platform (MariaDB server, MariaDB TX for transactions, MariaDB AX for analytics, MariaDB MaxScale, and related products like storage engines) via the Customer Support Portal. Each designated technical contact will receive a Customer Support Portal login (based on the associated email address) that can be used to report new support issues, monitor ongoing issues, or review historical issues. Information regarding making changes to technical contacts can be found in the "Welcome Letter" provided after signup, and is also available in the “Contact Us” section of the Customer Support Portal. If you have issues initially logging into the Customer Support Portal, you will be prompted to email [email protected] for further assistance.

If Remote DBA services are purchased, an on-boarding call is scheduled to gather the necessary information for the MariaDB Remote DBA team to remotely access supported products. Information about the architecture, operating systems, database server versions, backup schedules, etc. will also be documented during this call. Once the required information has been collected, monitoring software will be installed and set up to alert MariaDB Corporation. Certain alerts such as server availability, replication health, and others will be configured to open issues automatically in the Customer Support Portal.

All services are delivered in English. MariaDB Corporation will use reasonable efforts to provide technical support in languages other than English using MariaDB Corporation’s available personnel via voice calls and in-person meetings, but may not have such resources available at all or at the time of the support request. -

MySQL Cluster: When Do I Need It?

Mario Beck, Principal Sales Consultant
[email protected]

The presentation is intended to outline our general product direction. It is intended for information purposes only, and may not be incorporated into any contract. It is not a commitment to deliver any material, code, or functionality, and should not be relied upon in making purchasing decisions. The development, release, and timing of any features or functionality described for Oracle’s products remains at the sole discretion of Oracle.

• 2.1BN USERS
• 8X DATA GROWTH IN 5 YRS
• 750M USERS
• 70+ NEW DOMAINS EVERY 60 SECONDS
• 20M APPS PER DAY
• 40% DATA GROWTH PER YEAR
• 600 NEW VIDEOS EVERY 60 SECONDS
• $1TR BY 2014
• 100K TWEETS PER MINUTE
• $700BN IN 2011
• 5.3BN MOBILE SUBS IN 2010 (78% PENETRATION)
• 13K iPHONE APPS DOWNLOADED PER MINUTE
• 370K CALL MINUTES EVERY 60 SECONDS

Driving new Database Requirements
• EXTREME WRITE SCALABILITY
• REAL TIME USER EXPERIENCE
• ROCK SOLID RELIABILITY
• ELIMINATE BARRIERS TO ENTRY

No Trade-Offs
• Transactional Integrity
• Complex Queries
• Standards & Skillsets

No Trade-Offs: Cellular Network
HLR / HSS Location Updates AuC, Call Routing, Billing Pre & Post Paid
• Massive volumes of write traffic
• <3ms database response
• Downtime & lost transactions = lost $
Billing, AuC, VLR
MySQL Cluster in Action: http://bit.ly/oRI5tF

No Trade-Offs: eCommerce
• Integrated Service Provider platform
• eCommerce
• Payment processing
• Fulfillment
• Supports 1k+ -

InnoDB 1.1 for MySQL 5.5 User's Guide

Abstract

This is the User's Guide for the InnoDB storage engine 1.1 for MySQL 5.5. Beginning with MySQL version 5.1, it is possible to swap out one version of the InnoDB storage engine and use another (the “plugin”). This manual documents the latest InnoDB plugin, version 1.1, which works with MySQL 5.5 and features cutting-edge improvements in performance and scalability. This User's Guide documents the procedures and features that are specific to the InnoDB storage engine 1.1 for MySQL 5.5. It supplements the general InnoDB information in the MySQL Reference Manual. Because InnoDB 1.1 is integrated with MySQL 5.5, it is generally available (GA) and production-ready.

WARNING: Because the InnoDB storage engine 1.0 and above introduces a new file format, restrictions apply to the use of a database created with the InnoDB storage engine 1.0 and above, with earlier versions of InnoDB, when using mysqldump or MySQL replication and if you use the older InnoDB Hot Backup product rather than the newer MySQL Enterprise Backup product. See Section 1.4, “Compatibility Considerations for Downgrade and Backup”.

For legal information, see the Legal Notices.

Document generated on: 2014-01-30 (revision: 37565)

Table of Contents
Preface and Legal Notices
1 Introduction to InnoDB 1.1
1.1 Features of the InnoDB Storage Engine
1.2 Obtaining and Installing the InnoDB Storage Engine
1.3 Viewing the InnoDB Storage Engine Version Number -

A Closer Look at MySQL Cluster: An Architectural Overview - MariaDB White Paper

w: www.mariadb.com e: [email protected]

MySQL Cluster Internal Architecture
Max Mether, MariaDB
Keywords: MySQL Cluster, MySQL, High Availability, HA, Clustering, Architecture

1 Introduction
MySQL Cluster is tightly linked to the MySQL server, yet it is a separate product. Due to its distributed nature, MySQL Cluster has a far more complicated architecture than a standard MySQL database. This presentation and white paper will describe the internal architecture of the MySQL Cluster product and the different mechanisms and processes that exist to ensure the availability of the cluster. We will also take a close look at the data and message flow that takes place between the nodes in the cluster during different types of operations.

2 MySQL Cluster Features
MySQL Cluster can be distinguished from many other clustering products in that it offers five nines availability with a shared-nothing architecture. Most clustering products use a shared-disk architecture. Using a shared-nothing architecture allows the cluster to run on commodity hardware, thus greatly reducing costs compared to a shared-disk architecture. Having a shared-nothing architecture does, however, come with a different cost: a more complex way of ensuring high availability and synchronization of the nodes, which is mainly seen as increased network traffic. MySQL Cluster also provides ACID transactions with row-level locking using the READ-COMMITTED isolation level. MySQL Cluster is, in general, an in-memory database; however, non-indexed data can be stored on disk, and the disks are also used for checkpointing to ensure durability through system shutdown. MySQL Cluster provides unique hash indexes as well as ordered T-tree indexes. -

MySQL Backup and Recovery

Abstract

This is the MySQL Backup and Recovery extract from the MySQL 8.0 Reference Manual. For legal information, see the Legal Notices. For help with using MySQL, please visit the MySQL Forums, where you can discuss your issues with other MySQL users.

Document generated on: 2021-10-01 (revision: 70946)

Table of Contents
Preface and Legal Notices
1 Backup and Recovery
1.1 Backup and Recovery Types
1.2 Database Backup Methods
1.3 Example Backup and Recovery Strategy
1.3.1 Establishing a Backup Policy
1.3.2 Using Backups for Recovery
1.3.3 Backup Strategy Summary
1.4 Using mysqldump for Backups
1.4.1 Dumping Data in SQL Format with mysqldump -

In MySQL/MariaDB?

The OWASP Foundation, http://www.owasp.org
OWASP EU Tour Bucharest 2013

Do you “GRANT ALL PRIVILEGES” ... in MySQL/MariaDB?
Gabriel PREDA, DevOps Engineer
[email protected] @eRadical

Copyright © The OWASP Foundation. Permission is granted to copy, distribute and/or modify this document under the terms of the OWASP License.

DevOps = new BORG
DevOps Engineer ???
● Development
– Web Applications (“Certified MySQL Associate”, “Zend Certified Engineer”)
– Real Time Analytics
● Operations
– MySQL DBA (15+ instances)
– Sysadmin (<25 virtual & physical servers)

My MySQL MariaDB(s)
● Over 15 MariaDB / TokuDB instances
● Statistics in MariaDB
– < 1TB from Oct 2012
– < 12G raw data daily
– < 12,000,000 events processed daily
– < 90,000,000 rows added daily
BigData? NO!!!
● I can copy all of that to my laptop
● “Working data set” - less than 1G & less than 7,500,000 rows

MySQL History
● 1983 – first version of MySQL created by Monty Widenius
● 1994 – MySQL is released OpenSource
● 2004 Oct – MySQL 4.1 GA
● 2005 Oct – InnoDB (Innobase) is bought by Oracle – Black Friday
● 2008 Jan – MySQL AB is bought by Sun (1bn $)
● 2008 Nov – MySQL 5.1 GA
● 2009 Apr – Sun is bought by Oracle (7,4 bn $)
● 2010 Dec – MySQL 5.5 GA
● 2012 Apr – MariaDB 5.5 GA
● 2013 Feb – MySQL 5.6 – first version made by Oracle
● 2013 Feb – MySQL will be replaced by MariaDB in Fedora & OpenSuSE
* Max Mether – SkySQL “MySQL and MariaDB: Past, Present and Future”

Where are we NOW()?
● Drizzle
● MySQL (Oracle)
● TokuDB (Tokutek)
● Percona Server (Percona)
● MariaDB (Monty Program, MariaDB Foundation)
● Brighthouse (Infobright)
● InfiniDB (Calpont)
Replication:
● Asynchronous
● Semi-synchronous
● Galera Synchronous (Codership)
● Tungsten Replication (Continuent)

Elementary..