Tight Probability Bounds with Pairwise Independence

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Notes for ECE 313, Probability with Engineering

Probability with Engineering Applications: ECE 313 Course Notes. Bruce Hajek, Department of Electrical and Computer Engineering, University of Illinois at Urbana-Champaign, August 2019. © 2019 by Bruce Hajek. All rights reserved. Permission is hereby given to freely print and circulate copies of these notes so long as the notes are left intact and not reproduced for commercial purposes. Email to [email protected], pointing out errors or hard to understand passages or providing comments, is welcome.

Contents

1 Foundations
1.1 Embracing uncertainty
1.2 Axioms of probability
1.3 Calculating the size of various sets
1.4 Probability experiments with equally likely outcomes
1.5 Sample spaces with infinite cardinality
1.6 Short Answer Questions
1.7 Problems
2 Discrete-type random variables
2.1 Random variables and probability mass functions
2.2 The mean and variance of a random variable
2.3 Conditional probabilities
2.4 Independence and the binomial distribution
2.4.1 Mutually independent events
2.4.2 Independent random variables (of discrete-type)
2.4.3 Bernoulli distribution
2.4.4 Binomial distribution
2.5 Geometric distribution
2.6 Bernoulli process and the negative binomial distribution
2.7 The Poisson distribution: a limit of binomial distributions
2.8 Maximum likelihood parameter estimation
2.9 Markov and Chebychev inequalities and confidence intervals
2.10 The law of total probability, and Bayes formula
2.11 Binary hypothesis testing with discrete-type observations

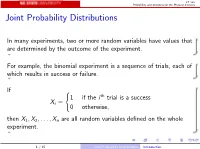

Joint Probability Distributions

ST 380 Probability and Statistics for the Physical Sciences: Joint Probability Distributions

Introduction. In many experiments, two or more random variables have values that are determined by the outcome of the experiment. For example, the binomial experiment is a sequence of trials, each of which results in success or failure. If

$$X_i = \begin{cases} 1 & \text{if the } i\text{th trial is a success} \\ 0 & \text{otherwise,} \end{cases}$$

then $X_1, X_2, \ldots, X_n$ are all random variables defined on the whole experiment.

To calculate probabilities involving two random variables $X$ and $Y$ such as $P(X > 0 \text{ and } Y \le 0)$, we need the joint distribution of $X$ and $Y$. The way we represent the joint distribution depends on whether the random variables are discrete or continuous.

Two Discrete Random Variables. If $X$ and $Y$ are discrete, with ranges $R_X$ and $R_Y$, respectively, the joint probability mass function is

$$p(x, y) = P(X = x \text{ and } Y = y), \quad x \in R_X,\ y \in R_Y.$$

Then a probability like $P(X > 0 \text{ and } Y \le 0)$ is just

$$\sum_{x \in R_X:\, x > 0} \ \sum_{y \in R_Y:\, y \le 0} p(x, y).$$

Marginal Distribution. To find the probability of an event defined only by $X$, we need the marginal pmf of $X$:

$$p_X(x) = P(X = x) = \sum_{y \in R_Y} p(x, y), \quad x \in R_X.$$

Similarly the marginal pmf of $Y$ is

$$p_Y(y) = P(Y = y) = \sum_{x \in R_X} p(x, y), \quad y \in R_Y.$$
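The joint and marginal pmf formulas in this excerpt can be sketched in a few lines of Python. The joint table `JOINT` below is a hypothetical example chosen only for illustration (it does not come from the slides), and the helper names `marginal_x`, `marginal_y`, and `prob` are likewise assumptions.

```python
from collections import defaultdict
from fractions import Fraction

# Hypothetical joint pmf p(x, y) on R_X = R_Y = {-1, 0, 1}; the values sum to 1.
JOINT = {
    (-1, -1): Fraction(1, 8), (-1, 0): Fraction(1, 8), (-1, 1): Fraction(0),
    (0, -1): Fraction(1, 8), (0, 0): Fraction(1, 4), (0, 1): Fraction(1, 8),
    (1, -1): Fraction(0), (1, 0): Fraction(1, 8), (1, 1): Fraction(1, 8),
}


def marginal_x(joint):
    """Marginal pmf of X: sum p(x, y) over all y in R_Y."""
    out = defaultdict(Fraction)
    for (x, _y), p in joint.items():
        out[x] += p
    return dict(out)


def marginal_y(joint):
    """Marginal pmf of Y: sum p(x, y) over all x in R_X."""
    out = defaultdict(Fraction)
    for (_x, y), p in joint.items():
        out[y] += p
    return dict(out)


def prob(joint, event):
    """P((X, Y) satisfies event): sum p(x, y) over the pairs where event holds."""
    return sum((p for (x, y), p in joint.items() if event(x, y)), Fraction(0))


# P(X > 0 and Y <= 0) is a double sum over the matching cells of the table.
P_EXAMPLE = prob(JOINT, lambda x, y: x > 0 and y <= 0)
```

Exact `Fraction` arithmetic is used so that sums such as the total probability compare equal to 1 without floating-point tolerance.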

A Counterexample to the Central Limit Theorem for Pairwise Independent Random Variables Having a Common Arbitrary Margin

A counterexample to the central limit theorem for pairwise independent random variables having a common arbitrary margin. Benjamin Avanzi (a), Guillaume Boglioni Beaulieu (b), Pierre Lafaye de Micheaux (c), Frédéric Ouimet (d), Bernard Wong (b). (a) Centre for Actuarial Studies, Department of Economics, University of Melbourne, VIC 3010, Australia. (b) School of Risk and Actuarial Studies, UNSW Sydney, NSW 2052, Australia. (c) School of Mathematics and Statistics, UNSW Sydney, NSW 2052, Australia. (d) PMA Department, California Institute of Technology, CA 91125, Pasadena, USA.

Abstract. The Central Limit Theorem (CLT) is one of the most fundamental results in statistics. It states that the standardized sample mean of a sequence of n mutually independent and identically distributed random variables with finite first and second moments converges in distribution to a standard Gaussian as n goes to infinity. In particular, pairwise independence of the sequence is generally not sufficient for the theorem to hold. We construct explicitly a sequence of pairwise independent random variables having a common but arbitrary marginal distribution F (satisfying very mild conditions) for which the CLT is not verified. We study the extent of this 'failure' of the CLT by obtaining, in closed form, the asymptotic distribution of the sample mean of our sequence. This is illustrated through several theoretical examples, for which we provide associated computing codes in the R language.

Keywords: central limit theorem, characteristic function, mutual independence, non-Gaussian asymptotic distribution, pairwise independence. 2020 MSC: Primary: 62E20. Secondary: 60F05, 60E10.

1. Introduction. The aim of this paper is to construct explicitly a sequence of pairwise independent and identically distributed (p.i.i.d.) random variables (r.v.s) whose common margin F can be chosen arbitrarily (under very mild conditions) and for which the (standardized) sample mean is not asymptotically Gaussian.

Distance Metrics for Measuring Joint Dependence with Application to Causal Inference (arXiv:1711.09179v2 [stat.ME], 15 Jun 2018)

Distance Metrics for Measuring Joint Dependence with Application to Causal Inference. Shubhadeep Chakraborty, Department of Statistics, Texas A&M University, and Xianyang Zhang, Department of Statistics, Texas A&M University. June 18, 2018.

Abstract. Many statistical applications require the quantification of joint dependence among more than two random vectors. In this work, we generalize the notion of distance covariance to quantify joint dependence among d ≥ 2 random vectors. We introduce the high order distance covariance to measure the so-called Lancaster interaction dependence. The joint distance covariance is then defined as a linear combination of pairwise distance covariances and their higher order counterparts which together completely characterize mutual independence. We further introduce some related concepts including the distance cumulant, distance characteristic function, and rank-based distance covariance. Empirical estimators are constructed based on certain Euclidean distances between sample elements. We study the large sample properties of the estimators and propose a bootstrap procedure to approximate their sampling distributions. The asymptotic validity of the bootstrap procedure is justified under both the null and alternative hypotheses. The new metrics are employed to perform model selection in causal inference, which is based on the joint independence testing of the residuals from the fitted structural equation models. The effectiveness of the method is illustrated via both simulated and real datasets.

Keywords: Bootstrap, Directed Acyclic Graph, Distance Covariance, Interaction Dependence, U-statistic, V-statistic.

1 Introduction. Measuring and testing dependence is of central importance in statistics, which has found applications in a wide variety of areas including independent component analysis, gene selection, graphical modeling and causal inference.

Testing Mutual Independence in High Dimension Via Distance Covariance

Testing mutual independence in high dimension via distance covariance. By Shun Yao, Xianyang Zhang, Xiaofeng Shao. arXiv:1609.09380v2 [stat.ME] 18 Sep 2017. Address correspondence to Xianyang Zhang ([email protected]), Assistant Professor, Department of Statistics, Texas A&M University.

Abstract. In this paper, we introduce an $L_2$ type test for testing mutual independence and banded dependence structure for high dimensional data. The test is constructed based on the pairwise distance covariance and it accounts for the non-linear and non-monotone dependences among the data, which cannot be fully captured by the existing tests based on either Pearson correlation or rank correlation. Our test can be conveniently implemented in practice as the limiting null distribution of the test statistic is shown to be standard normal. It exhibits excellent finite sample performance in our simulation studies even when sample size is small albeit dimension is high, and is shown to successfully identify nonlinear dependence in empirical data analysis. On the theory side, asymptotic normality of our test statistic is shown under quite mild moment assumptions and with little restriction on the growth rate of the dimension as a function of sample size. As a demonstration of good power properties for our distance covariance based test, we further show that an infeasible version of our test statistic has the rate optimality in the class of Gaussian distribution with equal correlation.

Keywords: Banded dependence, Degenerate U-statistics, Distance correlation, High dimensionality, Hoeffding decomposition.

1 Introduction. In statistical multivariate analysis and machine learning research, a fundamental problem is to explore the relationships and dependence structure among subsets of variables. An important -

Independence & Causality: Chapter 17.7 – 17.8

17.7 Independence. Suppose that we flip two fair coins simultaneously on opposite sides of a room. Intuitively, the way one coin lands does not affect the way the other coin lands. The mathematical concept that captures this intuition is called independence.

Definition 17.7.1. An event with probability 0 is defined to be independent of every event (including itself). If $\Pr[B] \neq 0$, then event $A$ is independent of event $B$ iff

$$\Pr[A \mid B] = \Pr[A]. \tag{17.4}$$

In other words, $A$ and $B$ are independent if knowing that $B$ happens does not alter the probability that $A$ happens, as is the case with flipping two coins on opposite sides of a room.

Potential Pitfall. Students sometimes get the idea that disjoint events are independent. The opposite is true: if $A \cap B = \emptyset$, then knowing that $A$ happens means you know that $B$ does not happen. Disjoint events are never independent, unless one of them has probability zero.

17.7.1 Alternative Formulation. Sometimes it is useful to express independence in an alternate form which follows immediately from Definition 17.7.1:

Theorem 17.7.2. $A$ is independent of $B$ if and only if

$$\Pr[A \cap B] = \Pr[A] \cdot \Pr[B]. \tag{17.5}$$

Notice that Theorem 17.7.2 makes apparent the symmetry between $A$ being independent of $B$ and $B$ being independent of $A$:

Corollary 17.7.3. $A$ is independent of $B$ iff $B$ is independent of $A$.
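The product rule of Theorem 17.7.2 and the pitfall about disjoint events can be checked on the two-coin experiment from the excerpt. The following is a minimal sketch assuming a uniform sample space; the names `OMEGA`, `pr`, and `independent` are illustrative and not taken from the text.

```python
from fractions import Fraction
from itertools import product

# Uniform sample space for two fair coin flips: HH, HT, TH, TT.
OMEGA = set(product("HT", repeat=2))


def pr(event):
    """Probability of an event (a subset of OMEGA) under the uniform measure."""
    return Fraction(len(event & OMEGA), len(OMEGA))


def independent(a, b):
    """Product-rule test: Pr[A and B] == Pr[A] * Pr[B]."""
    return pr(a & b) == pr(a) * pr(b)


FIRST_HEADS = {w for w in OMEGA if w[0] == "H"}
SECOND_HEADS = {w for w in OMEGA if w[1] == "H"}
BOTH_HEADS = {("H", "H")}
BOTH_TAILS = {("T", "T")}

# Flips of different coins are independent.
COINS_INDEPENDENT = independent(FIRST_HEADS, SECOND_HEADS)
# Disjoint events with positive probability are not independent.
DISJOINT_INDEPENDENT = independent(BOTH_HEADS, BOTH_TAILS)
# A probability-zero event (here the empty event) is independent of everything.
NULL_INDEPENDENT = independent(set(), FIRST_HEADS)
```

The product-rule form is used rather than the conditional form because it needs no special case when one of the events has probability zero.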

On Khintchine Type Inequalities for $k$-Wise Independent Rademacher Random Variables

ON KHINTCHINE TYPE INEQUALITIES FOR k-WISE INDEPENDENT RADEMACHER RANDOM VARIABLES. BRENDAN PASS AND SUSANNA SPEKTOR. arXiv:1708.08775v1 [math.PR] 27 Aug 2017.

Abstract. We consider Khintchine type inequalities on the p-th moments of vectors of N k-wise independent Rademacher random variables. We show that an analogue of Khintchine's inequality holds, with a constant $N^{1/2 - k/2p}$, when k is even. We then show that this result is sharp for k = 2; in particular, a version of Khintchine's inequality for sequences of pairwise Rademacher variables cannot hold with a constant independent of N. We also characterize the cases of equality and show that, although the vector achieving equality is not unique, it is unique (up to law) among the smaller class of exchangeable vectors of pairwise independent Rademacher random variables. As a fortunate consequence of our work, we obtain similar results for 3-wise independent vectors.

2010 Classification: 46B06, 60E15. Keywords: Khintchine inequality, Rademacher random variables, k-wise independent random variables.

1. Introduction. This short note concerns Khintchine's inequality, a classical theorem in probability, with many important applications in both probability and analysis (see [4, 8, 9, 10, 12] among others). It states that the $L_p$ norm of the weighted sum of independent Rademacher random variables is controlled by its $L_2$ norm; a precise statement follows. We say that $\varepsilon_0$ is a Rademacher random variable if $P(\varepsilon_0 = 1) = P(\varepsilon_0 = -1) = \frac{1}{2}$. Let $\bar{\varepsilon}_i$, $1 \le i \le N$, be independent copies of $\varepsilon_0$ and $a \in \mathbb{R}^N$. Khintchine's inequality (see, for example, Theorem 2.b.3 in [9], Theorem 12.3.1 in [4] or the original work of Khintchine [7]) states that, for any $p > 0$,

$$B(p) \left( \mathbb{E} \Big| \sum_{i=1}^{N} a_i \bar{\varepsilon}_i \Big|^2 \right)^{1/2} = B(p) \, \|a\|_2 \le \left( \mathbb{E} \Big| \sum_{i=1}^{N} a_i \bar{\varepsilon}_i \Big|^p \right)^{1/p} \le C(p) \, \|a\|_2 = C(p) \left( \mathbb{E} \Big| \sum_{i=1}^{N} a_i \bar{\varepsilon}_i \Big|^2 \right)^{1/2}. \tag{1}$$

5. Independence

5. Independence

As usual, suppose that we have a random experiment with sample space S and probability measure ℙ. In this section, we will discuss independence, one of the fundamental concepts in probability theory. Independence is frequently invoked as a modeling assumption, and moreover, probability itself is based on the idea of independent replications of the experiment.

Basic Theory. We will define independence on increasingly complex structures, from two events, to arbitrary collections of events, and then to collections of random variables. In each case, the basic idea is the same.

Independence of Two Events. Two events A and B are independent if ℙ(A ∩ B) = ℙ(A) ℙ(B). If both of the events have positive probability, then independence is equivalent to the statement that the conditional probability of one event given the other is the same as the unconditional probability of the event: ℙ(A | B) = ℙ(A) if and only if ℙ(B | A) = ℙ(B) if and only if ℙ(A ∩ B) = ℙ(A) ℙ(B). This is how you should think of independence: knowledge that one event has occurred does not change the probability assigned to the other event.

The terms independent and disjoint sound vaguely similar but they are actually very different. First, note that disjointness is purely a set-theory concept while independence is a probability (measure-theoretic) concept. Indeed, two events can be independent relative to one probability measure and dependent relative to another. But most importantly, two disjoint events can never be independent, except in the trivial case that one of the events is null.

Dependence and Dependence Structures

Open Statistics 2020; 1:1–48. Research Article. Björn Böttcher. Dependence and dependence structures: estimation and visualization using the unifying concept of distance multivariance. https://doi.org/10.1515/stat-2020-0001. Received Aug 26, 2019; accepted Oct 28, 2019.

Abstract: Distance multivariance is a multivariate dependence measure, which can detect dependencies between an arbitrary number of random vectors each of which can have a distinct dimension. Here we discuss several new aspects, present a concise overview and use it as the basis for several new results and concepts: in particular, we show that distance multivariance unifies (and extends) distance covariance and the Hilbert-Schmidt independence criterion HSIC, moreover also the classical linear dependence measures: covariance, Pearson's correlation and the RV coefficient appear as limiting cases. Based on distance multivariance several new measures are defined: a multicorrelation which satisfies a natural set of multivariate dependence measure axioms and m-multivariance which is a dependence measure yielding tests for pairwise independence and independence of higher order. These tests are computationally feasible and under very mild moment conditions they are consistent against all alternatives. Moreover, a general visualization scheme for higher order dependencies is proposed, including consistent estimators (based on distance multivariance) for the dependence structure. Many illustrative examples are provided. All functions for the use of distance multivariance in applications -

Kolmogoroff's Strong Law of Large Numbers Holds for Pairwise Uncorrelated Random Variables (arXiv:2005.03967v5 [math.PR], 12 Aug 2021)

Maximilian Janisch. Kolmogoroff's Strong Law of Large Numbers holds for pairwise uncorrelated random variables. Original version written on May 8, 2020; text last updated May 6, 2021; journal information updated on August 25, 2021. arXiv:2005.03967v6 [math.PR] 24 Aug 2021.

Abstract. Using the approach of N. Etemadi for the Strong Law of Large Numbers (SLLN) in [1] and its elaboration in [5], I give weaker conditions under which the SLLN still holds, namely for pairwise uncorrelated (and also for "quasi uncorrelated") random variables. I am focusing in particular on random variables which are not identically distributed. My approach here leads to another simple proof of the classical SLLN.

Published in (English edition): Theory of Probability and its Applications, 2021, Volume 66, Issue 2, Pages 263–275. https://epubs.siam.org/doi/10.1137/S0040585X97T990381. Doi: https://doi.org/10.1137/S0040585X97T990381. Published in (Russian edition): Teoriya Veroyatnostei i ee Primeneniya (this is the Russian edition of Theory of Probability and its Applications), 2021, Volume 66, Issue 2, Pages 327–341. http://mi.mathnet.ru/tvp5459. Doi: https://doi.org/10.4213/tvp5459.

1 Introduction and results. In publication [1] from 1981, N. Etemadi weakened the requirements of Kolmogoroff's first SLLN for identically distributed random variables and provided an elementary proof of a more general SLLN. I will first state the main Theorem of [1] after introducing some notations that I will use.

Notation. Throughout the paper, $(\Omega, \mathcal{A}, P)$ is a probability space. All random variables (usually denoted by $X$ or $X_n$) are assumed to be measurable functions from $\Omega$ to the set of real numbers $\mathbb{R}$.

Random Variables and Independence Anup Rao April 24, 2019

Lecture 11: Random Variables and Independence. Anup Rao. April 24, 2019.

We discuss the use of hashing and pairwise independence. Random variables are a very useful way to model many situations. Recall the law of total probability, that we discussed before:

Fact 1 (Law of Total Probability). If $A_1, A_2, \ldots, A_n$ are disjoint events that form a partition of the whole sample space, and $B$ is another event, then

$$p(B) = p(A_1 \cap B) + p(A_2 \cap B) + \cdots + p(A_n \cap B).$$

One consequence of the Law of Total Probability is that whenever you have a random variable $X$ and an event $E$, we have

$$p(E) = \sum_x p(X = x) \cdot p(E \mid X = x).$$

Just like with events, we say that two random variables $X, Y$ are independent if having information about one gives you no information about the other. Formally, $X, Y$ are independent if for every $x, y$

$$p(X = x, Y = y) = p(X = x) \cdot p(Y = y).$$

This means that $p(X = x \mid Y = y) = p(X = x)$, and $p(Y = y \mid X = x) = p(Y = y)$.

Example: Even Number of Heads. Suppose you toss $n$ coins. What is the probability that the number of heads is even? We already calculated this the hard way (see notes for Lecture 7 on April 15). Let us use an appropriate random variable to calculate this the easy way. Let $X$ denote the outcome of tossing the first $n - 1$ coins. Let $E$ denote the event that the number of heads is even. Then:

$$p(E) = \sum_x p(X = x) \cdot p(E \mid X = x).$$
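The conditioning argument in this example can be sketched numerically. The code below assumes fair, independent coins and compares a brute-force count with the sum over outcomes x of the first n − 1 coins; the function names are illustrative and do not come from the lecture notes.

```python
from fractions import Fraction
from itertools import product

HALF = Fraction(1, 2)


def p_even_heads_bruteforce(n):
    """Count outcomes of n fair coins (1 = heads) with an even number of heads."""
    outcomes = list(product((0, 1), repeat=n))
    even = sum(1 for w in outcomes if sum(w) % 2 == 0)
    return Fraction(even, len(outcomes))


def p_even_heads_by_conditioning(n):
    """Law of total probability: p(E) = sum over x of p(X = x) * p(E | X = x),
    where X is the outcome of the first n - 1 coins."""
    total = Fraction(0)
    for x in product((0, 1), repeat=n - 1):
        p_x = HALF ** (n - 1)
        # Given X = x, exactly one value of the last coin makes the total even.
        p_e_given_x = sum(
            (HALF for last in (0, 1) if (sum(x) + last) % 2 == 0), Fraction(0)
        )
        total += p_x * p_e_given_x
    return total
```

Because p(E | X = x) equals 1/2 for every x, the sum collapses to 1/2 regardless of n, which is the point of choosing X this way.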

Tight Probability Bounds with Pairwise Independence

Tight Probability Bounds with Pairwise Independence. Arjun Kodagehalli Ramachandra, Karthik Natarajan. March 2021.

Abstract. While some useful probability bounds for the sum of n pairwise independent Bernoulli random variables exceeding an integer k have been proposed in the literature, none of these bounds are tight in general. In this paper, we provide several results towards finding tight probability bounds for this class of problems. Firstly, when k = 1, the tightest upper bound on the probability of the union of n pairwise independent events is provided in closed form for any input marginal probability vector $p \in [0, 1]^n$. To prove the result, we show the existence of a positively correlated Bernoulli random vector with transformed bivariate probabilities, which is of independent interest. Building on this, we show that the ratio of Boole's union bound and the tight pairwise independent bound is upper bounded by 4/3 and the bound is attained. Secondly, for k ≥ 2 and any input marginal probability vector $p \in [0, 1]^n$, new upper bounds are derived exploiting ordering of probabilities. Numerical examples are provided to illustrate when the bounds provide significant improvement over existing bounds. Lastly, while the existing and new bounds are not always tight, we provide special instances when they are shown to be tight.

1 Introduction. Probability bounds for sums of Bernoulli random variables have been extensively studied by researchers in various communities including, but not limited to, probability and statistics, computer science, combinatorics and optimization. In this paper, our focus is on pairwise independent Bernoulli random variables. It is well known that while mutually independent random variables are pairwise independent, the reverse is not true.
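The closing remark, that pairwise independence does not imply mutual independence, can be illustrated with a classical construction that is not taken from the paper: two fair bits and their XOR. The sketch below also compares the exact probability of the union (at least one variable equals 1) with Boole's union bound for this one instance. All names are illustrative assumptions, and the code does not implement the paper's tight bound.

```python
from fractions import Fraction
from itertools import combinations, product

# Sample space: two fair bits (a, b) and a third bit forced to a XOR b.
OUTCOMES = [(a, b, a ^ b) for a, b in product((0, 1), repeat=2)]
WEIGHT = Fraction(1, len(OUTCOMES))


def pr(event):
    """Probability that a predicate on outcomes holds (uniform over OUTCOMES)."""
    return sum((WEIGHT for w in OUTCOMES if event(w)), Fraction(0))


def pairwise_independent():
    """Check P(Xi = u, Xj = v) == P(Xi = u) * P(Xj = v) for every pair i < j."""
    for i, j in combinations(range(3), 2):
        for u, v in product((0, 1), repeat=2):
            joint = pr(lambda w: w[i] == u and w[j] == v)
            if joint != pr(lambda w: w[i] == u) * pr(lambda w: w[j] == v):
                return False
    return True


def mutually_independent():
    """Check the full product rule for every joint assignment of the three bits."""
    for vals in product((0, 1), repeat=3):
        joint = pr(lambda w: w == vals)
        expected = Fraction(1)
        for i, v in enumerate(vals):
            expected *= pr(lambda w, i=i, v=v: w[i] == v)
        if joint != expected:
            return False
    return True


# Exact probability that at least one of the three variables equals 1.
UNION_PROB = pr(lambda w: any(w))
# Boole's union bound: min(1, sum of the marginal probabilities).
BOOLE_BOUND = min(Fraction(1), sum(pr(lambda w, i=i: w[i] == 1) for i in range(3)))
```

In this instance the three variables each have marginal probability 1/2, the union has probability 3/4, and Boole's bound is 1, so the union bound is loose here even though every pair is independent.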