On a Problem Posed by Steve Smale

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Condition Length and Complexity for the Solution of Polynomial Systems

Condition length and complexity for the solution of polynomial systems. Diego Armentano∗ (Universidad de La República, Uruguay), Carlos Beltrán† (Universidad de Cantabria, Spain), Peter Bürgisser‡ (Technische Universität Berlin, Germany), Felipe Cucker§ (City University of Hong Kong, Hong Kong), Michael Shub (City University of New York, U.S.A.). October 2, 2018. arXiv:1507.03896v1 [math.NA] 14 Jul 2015. Abstract: Smale’s 17th problem asks for an algorithm which finds an approximate zero of polynomial systems in average polynomial time (see Smale [17]). The main progress on Smale’s problem is Beltrán-Pardo [6] and Bürgisser-Cucker [9]. In this paper we will improve on both approaches and we prove an important intermediate result. Our main results are Theorem 1 on the complexity of a randomized algorithm which improves the result of [6], Theorem 2 on the average of the condition number of polynomial systems which improves the estimate found in [9], and Theorem 3 on the complexity of finding a single zero of polynomial systems. This last Theorem is the main result of [9]. ∗Partially supported by Agencia Nacional de Investigación e Innovación (ANII), Uruguay, and by CSIC group 618. †Partially supported by the research projects MTM2010-16051 and MTM2014-57590 from Spanish Ministry of Science MICINN. ‡Partially funded by DFG research grant BU 1371/2-2. §Partially funded by a GRF grant from the Research Grants Council of the Hong Kong SAR (project number CityU 100813).

Dagrep-V005-I006-Complete.Pdf

Volume 5, Issue 6, June 2015. Contents: Computational Social Choice: Theory and Applications (Dagstuhl Seminar 15241), Britta Dorn, Nicolas Maudet, and Vincent Merlin; Complexity of Symbolic and Numerical Problems (Dagstuhl Seminar 15242), Peter Bürgisser, Felipe Cucker, Marek Karpinski, and Nicolai Vorobjov; Sparse Modelling and Multi-exponential Analysis (Dagstuhl Seminar 15251), Annie Cuyt, George Labahn, Avraham Sidi, and Wen-shin Lee; Logics for Dependence and Independence (Dagstuhl Seminar 15261), Erich Grädel, Juha Kontinen, Jouko Väänänen, and Heribert Vollmer. Dagstuhl Reports, Vol. 5, Issue 6. ISSN 2192-5283. Published online and open access by Schloss Dagstuhl – Leibniz-Zentrum für Informatik GmbH, Dagstuhl Publishing, Saarbrücken/Wadern, Germany. Online available at http://www.dagstuhl.de/dagpub/2192-5283. Publication date: February, 2016. Bibliographic information published by the Deutsche Nationalbibliothek: The Deutsche Nationalbibliothek lists this publication in the Deutsche Nationalbibliografie; detailed bibliographic data are available in the Internet at http://dnb.d-nb.de. License: This work is licensed under a Creative Commons Attribution 3.0 DE license (CC BY 3.0 DE). Aims and Scope: The periodical Dagstuhl Reports documents the program and the results of Dagstuhl Seminars and Dagstuhl Perspectives Workshops. In principle, for each Dagstuhl Seminar or Dagstuhl Perspectives Workshop a report is published that contains the following: an executive summary of the seminar program and the fundamental results, an overview of the talks given during the seminar (summarized as talk abstracts), and summaries from working groups (if applicable). This basic framework can be extended by suitable contributions that are related to the program of the seminar, e.g. summaries from panel discussions or open problem sessions.

Algebraic Settings for the Problem "P ≠ NP?"

Lectures in Applied Mathematics, Volume 00, 19xx. Algebraic Settings for the Problem "P ≠ NP?" Lenore Blum, Felipe Cucker, Mike Shub, and Steve Smale. Abstract. When complexity theory is studied over an arbitrary unordered field $K$, the classical theory is recaptured with $K = \mathbb{Z}_2$. The fundamental result that the Hilbert Nullstellensatz as a decision problem is NP-complete over $K$ allows us to reformulate and investigate complexity questions within an algebraic framework and to develop transfer principles for complexity theory. Here we show that over algebraically closed fields $K$ of characteristic 0 the fundamental problem "P ≠ NP?" has a single answer that depends on the tractability of the Hilbert Nullstellensatz over the complex numbers $\mathbb{C}$. A key component of the proof is the Witness Theorem enabling the elimination of transcendental constants in polynomial time. 1. Statement of Main Theorems. We consider the Hilbert Nullstellensatz in the form $\mathrm{HN}/K$: given a finite set of polynomials in $n$ variables over a field $K$, decide if there is a common zero over $K$. At first the field is taken as the complex number field $\mathbb{C}$. Relationships with other fields and with problems in number theory will be developed here. This article is essentially Chapter 6 of our book Complexity and Real Computation to be published by Springer. Background material can be found in [Blum, Shub, and Smale 1989]. Only machines and algorithms which branch on "$h(x) = 0$?" are considered here. The symbol is not used.

On the Mathematical Foundations of Learning

BULLETIN (New Series) OF THE AMERICAN MATHEMATICAL SOCIETY, Volume 39, Number 1, Pages 1-49, S 0273-0979(01)00923-5. Article electronically published on October 5, 2001. ON THE MATHEMATICAL FOUNDATIONS OF LEARNING. FELIPE CUCKER AND STEVE SMALE. "The problem of learning is arguably at the very core of the problem of intelligence, both biological and artificial." T. Poggio and C.R. Shelton. Introduction. (1) A main theme of this report is the relationship of approximation to learning and the primary role of sampling (inductive inference). We try to emphasize relations of the theory of learning to the mainstream of mathematics. In particular, there are large roles for probability theory, for algorithms such as least squares, and for tools and ideas from linear algebra and linear analysis. An advantage of doing this is that communication is facilitated and the power of core mathematics is more easily brought to bear. We illustrate what we mean by learning theory by giving some instances. (a) The understanding of language acquisition by children or the emergence of languages in early human cultures. (b) In Manufacturing Engineering, the design of a new wave of machines is anticipated which uses sensors to sample properties of objects before, during, and after treatment. The information gathered from these samples is to be analyzed by the machine to decide how to better deal with new input objects (see [43]). (c) Pattern recognition of objects ranging from handwritten letters of the alphabet to pictures of animals, to the human voice. Understanding the laws of learning plays a large role in disciplines such as (Cognitive) Psychology, Animal Behavior, Economic Decision Making, all branches of Engineering, Computer Science, and especially the study of human thought processes (how the brain works).

Valued Constraint Satisfaction Problems Over Infinite Domains

Valued Constraint Satisfaction Problems over Infinite Domains. DISSERTATION submitted for the academic degree Doctor rerum naturalium (Dr. rer. nat.) to the Bereich Mathematik und Naturwissenschaften (School of Mathematics and Natural Sciences) of Technische Universität Dresden by M.Sc. Caterina Viola. Submitted on 18 February 2020. Defended on 17 June 2020. Reviewers: Prof. Dr. rer. nat. Manuel Bodirsky, Prof. Ph.D. Andrei Krokhin. The dissertation was written between March 2016 and February 2020 at the Institut für Algebra (Institute of Algebra). This work is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License. To view a copy of this license, visit http://creativecommons.org/licenses/by-sa/4.0/deed.en_GB. "Would you tell me, please, which way I ought to go from here?" "That depends a good deal on where you want to get to," said the Cat. "I don't much care where -" said Alice. "Then it doesn't matter which way you go," said the Cat. "- so long as I get somewhere," Alice added as an explanation. "Oh, you're sure to do that," said the Cat, "if you only walk long enough." (Lewis Carroll, Alice in Wonderland) Acknowledgements. First and foremost, I want to express my deepest and most sincere gratitude to my supervisor, Manuel Bodirsky. He offered me his knowledge, guided my research, and rescued in many ways the scientist I eventually became. I am glad and honoured to have been one of his doctoral students. I am very grateful to my collaborator and co-author Marcello Mamino, who was also a mentor during my first years in Dresden. His enthusiasm and eclectic mathematical knowledge have been crucial to the success of my doctoral studies.

2021 Students, Who Have Studied at Any of the Local Institutions, to Stay and Work in Hong Kong for a Further 12 Months After Graduation

2022 CITY UNIVERSITY OF HONG KONG. HONG KONG: A CITY OF OPPORTUNITIES. Situated in the heart of Asia, Hong Kong is a humming metropolis of immense opportunities. Home to people from all manner of backgrounds and ethnicities, the city attracts people from around the globe with its unique cultural heritage, accessibility, open economy, and bold focus on the future. Coloured by its colonial past and strong ties to mainland China, Hong Kong continues to be influenced by its diverse population of more than 7 million, and has a longstanding reputation as being one of the safest cities in the world. Not only is Hong Kong famous for its endless skyscrapers, it is also a place of great natural beauty, where people can go hiking in the magnificent countryside, relax on pristine beaches, and visit any of the charming outlying islands. Thanks to its unique location in the region, it is also the perfect place from which to explore any of the neighbouring countries in this dynamic corner of the world. A city of perpetual motion, as well as excitement, Hong Kong truly is a place where East meets West and tradition meets innovation. FUTURE CAREERS. DYNAMIC AND FORWARD THINKING, CITY UNIVERSITY OF HONG KONG IS THE UNIVERSITY FOR YOUR FUTURE. #53 IN THE WORLD IN THE QS WORLD UNIVERSITY RANKINGS 2022. Boasting one of the world’s most free and competitive economies, Hong Kong is a leading financial centre and economic hub. Its excellent infrastructure and strategic location also make it a perfect gateway to the countless opportunities in mainland China and the rest of Asia.

Jahresbericht Annual Report 2013

Mathematisches Forschungsinstitut Oberwolfach. Jahresbericht 2013 / Annual Report 2013. www.mfo.de. Published by: Mathematisches Forschungsinstitut Oberwolfach. Director: Gerhard Huisken. Shareholder: Gesellschaft für Mathematische Forschung e.V. Address: Mathematisches Forschungsinstitut Oberwolfach gGmbH, Schwarzwaldstr. 9-11, 77709 Oberwolfach, Germany. Contact: http://www.mfo.de, Tel: +49 (0)7834 979 0, Fax: +49 (0)7834 979 38. The Mathematisches Forschungsinstitut Oberwolfach is a member of the Leibniz-Gemeinschaft. © Mathematisches Forschungsinstitut Oberwolfach gGmbH (2015). Annual Report 2013, Table of Contents: Director’s Foreword. 1. Special contributions: 1.1. Change of Directorship; 1.2. Simons Visiting Professors; 1.3. IMAGINARY 2013 and Mathematics of Planet Earth; 1.4. swMATH; 1.5. MiMa - Museum for Minerals and Mathematics Oberwolfach; 1.6. Oberwolfach Lecture 2013.

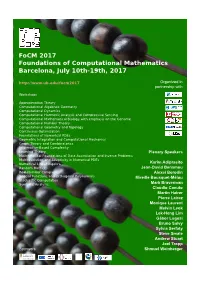

FoCM 2017 Foundations of Computational Mathematics Barcelona, July 10th-19th, 2017 Organized in Partnership With

FoCM 2017, Foundations of Computational Mathematics, Barcelona, July 10th-19th, 2017. http://www.ub.edu/focm2017. Organized in partnership with [partner names not recovered]. Workshops: Approximation Theory; Computational Algebraic Geometry; Computational Dynamics; Computational Harmonic Analysis and Compressive Sensing; Computational Mathematical Biology with emphasis on the Genome; Computational Number Theory; Computational Geometry and Topology; Continuous Optimization; Foundations of Numerical PDEs; Geometric Integration and Computational Mechanics; Graph Theory and Combinatorics; Information-Based Complexity; Learning Theory; Mathematical Foundations of Data Assimilation and Inverse Problems; Multiresolution and Adaptivity in Numerical PDEs; Numerical Linear Algebra; Random Matrices; Real-Number Complexity; Special Functions and Orthogonal Polynomials; Stochastic Computation; Symbolic Analysis. Plenary Speakers: Karim Adiprasito, Jean-David Benamou, Alexei Borodin, Mireille Bousquet-Mélou, Mark Braverman, Claudio Canuto, Martin Hairer, Pierre Lairez, Monique Laurent, Melvin Leok, Lek-Heng Lim, Gábor Lugosi, Bruno Salvy, Sylvia Serfaty, Steve Smale, Andrew Stuart, Joel Tropp, Shmuel Weinberger. Sponsors: [not recovered]. Books of abstracts. Contents: Presentation; Governance of FoCM; Local Organizing Committee; Administrative and logistic support; Technical support; Volunteers; Workshops Committee; Plenary Speakers Committee; Smale Prize Committee; Funding Committee; Plenary talks; Workshops: A1 - Approximation Theory (Organizers: Albert Cohen, Ron Devore, Peter Binev); A2 - Computational Algebraic Geometry (Organizers: Marta Casanellas, Agnes Szanto, Thorsten Theobald); A3 - Computational Number Theory (Organizers: Christophe Ritzenhaler, Enric Nart, Tanja Lange); A4 - Computational Geometry and Topology (Organizers: Joel Hass, Herbert Edelsbrunner, Gunnar Carlsson); A5 - Geometric Integration and Computational Mechanics (Organizers: Fernando Casas, Elena Celledoni, David Martin de Diego).

Condition: The Geometry of Numerical Algorithms

Grundlehren der mathematischen Wissenschaften 349, A Series of Comprehensive Studies in Mathematics. Series editors: M. Berger, P. de la Harpe, N.J. Hitchin, A. Kupiainen, G. Lebeau, F.-H. Lin, S. Mori, B.C. Ngô, M. Ratner, D. Serre, N.J.A. Sloane, A.M. Vershik, M. Waldschmidt. Editor-in-Chief: A. Chenciner, J. Coates, S.R.S. Varadhan. For further volumes: www.springer.com/series/138. Peter Bürgisser, Felipe Cucker. Condition: The Geometry of Numerical Algorithms. Peter Bürgisser, Institut für Mathematik, Technische Universität Berlin, Berlin, Germany. Felipe Cucker, Department of Mathematics, City University of Hong Kong, Hong Kong, Hong Kong SAR. ISSN 0072-7830, Grundlehren der mathematischen Wissenschaften. ISBN 978-3-642-38895-8, ISBN 978-3-642-38896-5 (eBook). DOI 10.1007/978-3-642-38896-5. Springer Heidelberg New York Dordrecht London. Library of Congress Control Number: 2013946090. Mathematics Subject Classification (2010): 15A12, 52A22, 60D05, 65-02, 65F22, 65F35, 65G50, 65H04, 65H10, 65H20, 90-02, 90C05, 90C31, 90C51, 90C60, 68Q25, 68W40, 68Q87. © Springer-Verlag Berlin Heidelberg 2013. This work is subject to copyright. All rights are reserved by the Publisher, whether the whole or part of the material is concerned, specifically the rights of translation, reprinting, reuse of illustrations, recitation, broadcasting, reproduction on microfilms or in any other physical way, and transmission or information storage and retrieval, electronic adaptation, computer software, or by similar or dissimilar methodology now known or hereafter developed. Exempted from this legal reservation are brief excerpts in connection with reviews or scholarly analysis or material supplied specifically for the purpose of being entered and executed on a computer system, for exclusive use by the purchaser of the work.

FoCM 2017 Foundations of Computational Mathematics Barcelona, July 10th-19th, 2017 Organized in Partnership With

FoCM 2017, Foundations of Computational Mathematics, Barcelona, July 10th-19th, 2017. http://www.ub.edu/focm2017. Organized in partnership with [partner names not recovered]. Workshops: Approximation Theory; Computational Algebraic Geometry; Computational Dynamics; Computational Harmonic Analysis and Compressive Sensing; Computational Mathematical Biology with emphasis on the Genome; Computational Number Theory; Computational Geometry and Topology; Continuous Optimization; Foundations of Numerical PDEs; Geometric Integration and Computational Mechanics; Graph Theory and Combinatorics; Information-Based Complexity; Learning Theory; Mathematical Foundations of Data Assimilation and Inverse Problems; Multiresolution and Adaptivity in Numerical PDEs; Numerical Linear Algebra; Random Matrices; Real-Number Complexity; Special Functions and Orthogonal Polynomials; Stochastic Computation; Symbolic Analysis. Plenary Speakers: Karim Adiprasito, Jean-David Benamou, Alexei Borodin, Mireille Bousquet-Mélou, Mark Braverman, Claudio Canuto, Martin Hairer, Pierre Lairez, Monique Laurent, Melvin Leok, Lek-Heng Lim, Gábor Lugosi, Bruno Salvy, Sylvia Serfaty, Steve Smale, Andrew Stuart, Joel Tropp, Shmuel Weinberger. Sponsors: [not recovered]. Wifi access: Network: wifi.ub.edu. Open a Web browser. At the UB authentication page put Identifier: aghnmr.tmp, Password: aqoy96. Venue: FoCM 2017 will take place in the Historical Building of the University of Barcelona, located at Gran Via de les Corts Catalanes 585. Map. The plenary lectures will be held in the Paraninf (1st day of each period) and Aula Magna (2nd and 3rd day of each period). Both auditoriums are located on the first floor. The poster sessions (2nd and 3rd day of each period) will be in the courtyard of the Faculty of Mathematics, on the ground floor. Workshops will be held in the lecture rooms B1, B2, B3, B5, B6, B7 and 111 on the ground floor, and T1 on the second floor.

Computing the Homology of Basic Semialgebraic Sets in Weak

Computing the Homology of Basic Semialgebraic Sets in Weak Exponential Time. PETER BÜRGISSER, Technische Universität Berlin, Germany; FELIPE CUCKER, City University of Hong Kong; PIERRE LAIREZ, Inria, France. We describe and analyze an algorithm for computing the homology (Betti numbers and torsion coefficients) of basic semialgebraic sets which works in weak exponential time. That is, out of a set of exponentially small measure in the space of data, the cost of the algorithm is exponential in the size of the data. All algorithms previously proposed for this problem have a complexity which is doubly exponential (and this is so for almost all data). CCS Concepts: • Theory of computation → Computational geometry; • Computing methodologies → Hybrid symbolic-numeric methods. Additional Key Words and Phrases: Semialgebraic geometry, homology, algorithm. ACM Reference Format: Peter Bürgisser, Felipe Cucker, and Pierre Lairez. 2018. Computing the Homology of Basic Semialgebraic Sets in Weak Exponential Time. J. ACM 66, 1, Article 5 (October 2018), 30 pages. https://doi.org/10.1145/3275242 1 INTRODUCTION. Semialgebraic sets (that is, subsets of Euclidean spaces defined by polynomial equations and inequalities with real coefficients) come in a wide variety of shapes and this raises the problem of describing a given specimen, from the most primitive features, such as emptiness, dimension, or number of connected components, to finer ones, such as roadmaps, Euler-Poincaré characteristic, Betti numbers, or torsion coefficients. The Cylindrical Algebraic Decomposition (CAD) introduced by Collins [23] and Wüthrich [63] in the 1970’s provided algorithms to compute these features that worked within time $(sD)^{2^{O(n)}}$ where $s$ is the number of defining equations, $D$ a bound on their degree and $n$ the dimension of the ambient space.

Alperen Ali Ergür

Alperen Ali Ergür. Address: Technische Universität Berlin, Institut für Mathematik, Sekretariat MA 3-2, Straße des 17. Juni 136, 10623 Berlin, Germany. Phone: +49 (0)30 314-29385. Research Interest: Discrete and Convex Geometry, Computational Algebraic Geometry, Probabilistic Structures and Randomized Numerical Algorithms, Real Algebraic Geometry and Continuous Optimization. Education: 2016, PhD in Mathematics, Texas A&M University, USA (Advisors: Grigoris Paouris and J. Maurice Rojas); 2011, MS in Mathematics, Tobb University, Turkey; 2009, BS in Mathematics, Bilkent University, Turkey. Employment: May 2017-present, Technical University of Berlin, Einstein Postdoctoral Fellow (Mentors: Peter Bürgisser and Felipe Cucker); Aug 2016-May 2017, North Carolina State University, USA, Postdoctoral Research Scholar (Mentor: Cynthia Vinzant); Sep 2011-Aug 2016, Texas A&M University, USA, Graduate Research/Teaching Assistant, Assistant Instructor at Research Experience for Undergraduates Program. Teaching Experience: 1. Technische Universität Berlin: Seminar on Interior Point Methods in Convex Optimization (co-teaching with T. de Wolff); Effective Algebraic Geometry Lectures (co-teaching with P. Bürgisser and J. Tonelli-Cueto). 2. NC State University: Linear Algebra for Science Majors (Instructor of the Record); Calculus for Engineers (Instructor of the Record); Precalculus (Instructor of the Record). 3. Texas A&M University: Assistant Instructor at Research Experience for Undergraduates Program; Mentored eight undergraduate research projects in four