Postface to “Model Predictive Control: Theory and Design”

Recommended publications

-

Design Binding Today

OPEN • SET 2020 Design Binding Today OPEN • SET is a competition and exhibition, a triennial event featuring finely crafted design bindings. The title reflects the two categories in which the bookbinders compete—the Open Category, wherein the binder chooses which textblock to bind, and the Set Category, in which participants bind the same textblock. The Open Category titles are books in French, German, Spanish and English, a variety that echoes the number of foreign entries in the show. The Set Category book was conceived and printed by fine press printer Russell Maret; he selected the text of a letter by William Blake entitled Happy Abstract. There are notable highlights in this show, as there are First, Second and Third Prize awards in each category, as well as twenty Highly Commendable designations. These were awarded by a jury of the well-known American binders Monique Lallier, Mark Esser and Patricia Owen. Each juror has a binding on display. And there is so much more! Take note of the variety of structures and different materials used. When a binder first sits with a textblock, they spend time reading and absorbing the meaning of the content, examining the typography, the layout and page margins, taking note of the mood and color of the illustrations. Ideas form. A binding requires that the design come from deep within, and is then executed into visual play that exemplifies the interpretation. This is with all respect to the author, illustrator, and publisher. The result is an invitation into the text, the words and their meaning. The result is unique, a work of art in the form of a book. -

How to Page a Document in Microsoft Word

1 HOW TO PAGE A DOCUMENT IN MICROSOFT WORD 1– PAGING A WHOLE DOCUMENT FROM 1 TO …Z (Including the first page) 1.1 – Arabic Numbers (a) Click the “Insert” tab. (b) Go to the “Header & Footer” Section and click on “Page Number” drop down menu (c) Choose the location on the page where you want the page to appear (i.e. top page, bottom page, etc.) (d) Once you have clicked on the “box” of your preference, the pages will be inserted automatically on each page, starting from page 1 on. 1.2 – Other Formats (Romans, letters, etc) (a) Repeat steps (a) to (c) from 1.1 above (b) At the “Header & Footer” Section, click on “Page Number” drop down menu. (C) Choose… “Format Page Numbers” (d) At the top of the box, “Number format”, click the drop down menu and choose your preference (i, ii, iii; OR a, b, c, OR A, B, C,…and etc.) an click OK. (e) You can also set it to start with any of the intermediate numbers if you want at the “Page Numbering”, “Start at” option within that box. 2 – TITLE PAGE WITHOUT A PAGE NUMBER…….. Option A – …And second page being page number 2 (a) Click the “Insert” tab. (b) Go to the “Header & Footer” Section and click on “Page Number” drop down menu (c) Choose the location on the page where you want the page to appear (i.e. top page, bottom page, etc.) (d) Once you have clicked on the “box” of your preference, the pages will be inserted automatically on each page, starting from page 1 on. -

Using a Radical-Derived Character E-Learning Platform to Increase Learner Knowledge of Chinese Characters

Language Learning & Technology February 2013, Volume 17, Number 1 http://llt.msu.edu/issues/february2013/chenetal.pdf pp. 89–106 USING A RADICAL-DERIVED CHARACTER E-LEARNING PLATFORM TO INCREASE LEARNER KNOWLEDGE OF CHINESE CHARACTERS Hsueh-Chih Chen, National Taiwan Normal University Chih-Chun Hsu, National Defense University Li-Yun Chang, University of Pittsburgh Yu-Chi Lin, National Taiwan Normal University Kuo-En Chang, National Taiwan Normal University Yao-Ting Sung, National Taiwan Normal University The present study is aimed at investigating the effect of a radical-derived Chinese character teaching strategy on enhancing Chinese as a Foreign Language (CFL) learners’ Chinese orthographic awareness. An e-learning teaching platform, based on statistical data from the Chinese Orthography Database Explorer (Chen, Chang, L.Y., Chou, Sung, & Chang, K.E., 2011), was established and used as an auxiliary teaching tool. A nonequivalent pretest-posttest quasi-experiment was conducted, with 129 Chinese- American CFL learners as participants (69 people in the experimental group and 60 people in the comparison group), to examine the effectiveness of the e-learning platform. After a three-week course—involving instruction on Chinese orthographic knowledge and at least seven phonetic/semantic radicals and their derivative characters per week—the experimental group performed significantly better than the comparison group on a phonetic radical awareness test, a semantic radical awareness test, as well as an orthography knowledge test. Keywords: Chinese as a Foreign Language (CFL), Chinese Orthographic Awareness, Radical-Derived Character Instructional Method, Phonetic/Semantic Radicals INTRODUCTION The rise of China to international prominence in recent years has made learning Chinese extremely popular, and increasing numbers of non-native Chinese students have begun to choose Chinese as their second language of study. -

On the Pictorial Structure of Chinese Characters

National Bur'SaU 01 Jiwiuuiuu Library, N.W. Bldg Reference book not to be FEB 1 1965 taken from the library. ^ecltnlcai v|ete 254 ON THE PICTORIAL STRUCTURE OF CHINESE CHARACTERS B. KIRK RANKIN, III, WALTER A. SILLARS, AND ROBERT W. HSU U. S. DEPARTMENT OF COMMERCE NATIONAL BUREAU OF STANDARDS THE NATIONAL BUREAU OF STANDARDS The National Bureau of Standards is a principal focal point in the Federal Government for assuring maximum application of the physical and engineering sciences to the advancement of technology in industry and commerce. Its responsibilities include development and maintenance of the national stand- ards of measurement, and the provisions of means for making measurements consistent with those standards; determination of physical constants and properties of materials; development of methods for testing materials, mechanisms, and structures, and making such tests as may be necessary, particu- larly for government agencies; cooperation in the establishment of standard practices for incorpora- tion in codes and specifications; advisory service to government agencies on scientific and technical problems; invention and development of devices to serve special needs of the Government; assistance to industry, business, and consumers in the development and acceptance of commercial standards and simplified trade practice recommendations; administration of programs in cooperation with United States business groups and standards organizations for the development of international standards of practice; and maintenance of a clearinghouse for the collection and dissemination of scientific, tech- nical, and engineering information. The scope of the Bureau's activities is suggested in the following listing of its four Institutes and their organizational units. Institute for Basic Standards. -

Instructions for How to Build a Table of Contents and Table of Authorities So the Page Numbers Automatically 1 Update in Microsoft® Word

INSTRUCTIONS FOR HOW TO BUILD A TABLE OF CONTENTS AND TABLE OF AUTHORITIES SO THE PAGE NUMBERS AUTOMATICALLY 1 UPDATE IN MICROSOFT® WORD Table of Contents Introduction ......................................................................................................................... 2 Step 1: Insert Page Numbers in the Brief ............................................................................ 2 Step 2: Mark the Headings for the Table of Contents ......................................................... 3 Step 3: Generate the Table of Contents ............................................................................... 3 Step 4: Mark Your Citations for the Table of Authorities ................................................ 12 Step 5: Generate the Table of Authorities ......................................................................... 12 Step 6: Update the Page Numbers in Your Table of Authorities ..................................... 13 Step 7: Regenerate the Table of Contents ........................................................................ 13 1 Microsoft, Encarta, MSN, and Windows are either registered trademarks or trademarks of Microsoft Corporation in the United States and/or other countries. 1 INTRODUCTION The Fifth DCA now requires that all document pages “be consecutively numbered beginning from the cover page of the document and using only the Arabic numbering system, as in 1, 2, 3.” (Fifth Dist., Local Rule, rule 8(b).) The cover page (including the cover page of a brief) should always show number -

Epilogue and Acknowledgments

Epilogue and Acknowledgments On the afternoon of March 10, 2004, I posted a draft of the Introduction and Chapter 1 of this book on my weblog. I asked readers to let me know, preferably by email, if they noticed any factual errors. I also asked whether I’d missed any crucial top- ics, or whether they knew of some perfect anecdote that abso- lutely had to be included. They responded. One of the first emails alerted me to an incorrect web address, which I fixed immediately. Another pointed out a mistake in a section about open source software. Others suggested I amplify certain points, or asked why I discussed a particular topic, or that I slow down the narrative. The comments section of my weblog became a discussion about the book. The ideas I’ve been discussing in We the Media became inte- gral to the reporting and writing of the book itself. When I started, I didn’t really know what to expect. But I can say now, without any fear of contradiction, that this process has worked. Thank you, all. outline and ideas My version of open source journalism got off to a rocky start. In the early spring of 2003, I posted an outline of the book and invited comments by email. My inbox overflowed. 243 we the media Then a small disaster hit. I’d moved all the suggestions into a separate folder in my mailbox, but several months later, when I looked for them, they were gone. Vanished. Disappeared. I still don’t know if this was my doing or my Internet service pro- vider’s. -

How to Build a Table of Authorities and Table of Contents in Word

How to Build A Table of Authorities in Word* By: Morgan Otway Overview: • A Table of Authorities (TOA) is a list of all of the sources cited in a legal document that notes the page numbers on which each source has been cited. • To create a TOA, you must “mark” each of your citations. Once all citations have been “marked,” Microsoft Word will generate a TOA that organizes the sources by category (e.g. cases, statutes, etc.) and alphabetically within each category. • NOTE: Once you “mark” each citation, Word will add hidden characters to that citation to designate that it has been “marked.” Don’t be alarmed by this! You can toggle this ON and OFF by checking and unchecking the ¶ symbol on the Home tab (located on the left-hand side of the toolbar). Instructions: 1. Locate the first citation in your document > highlight the full citation > go to Insert > Index and Tables > Table of Authorities. 2. Click “Mark Citation.” * Although differences should be slight, these instructions were based off of Microsoft Word for Mac 2011. 3. Once you click Mark Citation, the citation should appear in the “Selected text” box (see diagram on the next page). The way that the citation appears in the Selected text box is how it will appear in the TOA, once it has been generated. Therefore, you MUST remove the pincite from the citation in the “Selected text” box in order for the TOA to work properly. 4. From the list of categories, choose the category that describes the authority you’ve selected (e.g. -

Grade 1 Unit 3 – Nonfiction Chapter Books Writing Workshop: Nov./Dec

Grade 1 Unit 3 – Nonfiction Chapter Books Writing Workshop: Nov./Dec. Unit Overview Everyone is an expert at something. Whether it be knowing the names of all the NBA players on every team, or telling you about every lego piece, set, and creation, everyone has something they are passionate about. This unit aims to take this knowledge and allow students the opportunity to teach what they know. During this unit, students will be writing many information books about many different topics, choosing one to publish towards the end of the unit. Rather than researching new topics, help children select topics they are already knowledgeable about. This is a time for students to reveal their hobbies and passions. As you prepare for this unit, it is important to remember paper choices. You will want to have variety here, thinking of paper choices for table of contents, diagrams, how-to, etc to support the various structures students will be writing in throughout the unit. For additional information regarding the unit please see Units of Study for Teaching Writing Grade 1 Book 2 and the Writing Workshop Book in the kits. Grade 1 Unit 3 – Nonfiction Chapter Books Writing Workshop: Nov./Dec. Overarching Standards Aligning with Grade 1 Unit 3, Nonfiction Chapter Books Session Writing Standards Reading Standards Speaking & Listening Standards Language Standards 1 W.1.2, W.1.5, W.1.7 RI.1.1, RFS.1.1 SL.1.1, SL.1.4, SL.1.5, SL.1.6 L.1.1, L.1.2 2 W.1.2, W.1.5 RI.1.6, RI.1.7, RFS.1.1 SL.1.1, SL.1.4, SL.1.5, SL.1.6 L.1.1, L.1.2 3 W.1.2, W.2.2 RI.1.1, RI.1.4 -

STANDARD PAGE ORDER for a BOOK These Are Guidelines, Not Rules, but Are Useful in Making Your Book Look Professional

STANDARD PAGE ORDER FOR A BOOK These are guidelines, not rules, but are useful in making your book look professional. More extensive descriptions are available in the “Chicago Manual of Style”. (Note: CMS uses the classic terms recto for right handed and verso for left handed pages.) FRONT MATTER PRE PAGES: are usually numbered with lower case roman numerals Blank A blank page is often needed to force the first page of the book to fall on a right hand page. Half title page (contains only the title) - OPTIONAL Introduction (OPTIONAL) A blank page is often Blank page (back of title page) needed to force the first page of the book to fall on a right hand page. Title page title author, illustrator where appropriate Copyright page (back of the Title page): Usually BODY OF THE BOOK contains Copyright information, ISBN, LCCN if using, Text pages are usually numbered with normal fonts. design credits, disclaimers about fictional characters, permission granted to use information or illustrations TEXT: from another source Chapter One: In a “classic book” all chapter heads start on the right hand page. In novels where continuity Dedication is important, chapters may start on the right or left but the first chapter should always start on the right. Blank PARTS: Epigraph (quote pertinent to the book) OPTIONAL Book One or Section One: In large books it is May be used instead of, or after a Dedication. common for the book to be divided into Parts or Units. Some Section pages carry their own titles. These are Blank styled like title pages and are always on the right hand page, usually followed by a blank. -

Explorations in Book Binding Techniques

The University of Akron IdeaExchange@UAkron Williams Honors College, Honors Research The Dr. Gary B. and Pamela S. Williams Honors Projects College Spring 2020 Explorations in Book Binding Techniques Kristen Faux [email protected] Follow this and additional works at: https://ideaexchange.uakron.edu/honors_research_projects Part of the Book and Paper Commons, and the Graphic Design Commons Please take a moment to share how this work helps you through this survey. Your feedback will be important as we plan further development of our repository. Recommended Citation Faux, Kristen, "Explorations in Book Binding Techniques" (2020). Williams Honors College, Honors Research Projects. 1114. https://ideaexchange.uakron.edu/honors_research_projects/1114 This Dissertation/Thesis is brought to you for free and open access by The Dr. Gary B. and Pamela S. Williams Honors College at IdeaExchange@UAkron, the institutional repository of The University of Akron in Akron, Ohio, USA. It has been accepted for inclusion in Williams Honors College, Honors Research Projects by an authorized administrator of IdeaExchange@UAkron. For more information, please contact [email protected], [email protected]. Honors Research Project: Explorations in Book Binding Techniques Kristen Faux University of Akron, Class of 2020 Honors Research Project: Explorations in Book Binding Techniques Kristen Faux Department of Graphic Design Honors Research Project Submitted to The Williams Honors College The University of Akron ABSTRACT INITIAL RESEARCH Initial Binding Types The goal of this project was to explore book binding The first step for this project was to generate a list 1. Saddle Stitched through research and book creation. The first step was of potential types of binding that could then be narrowed to generate a base list of binding techniques. -

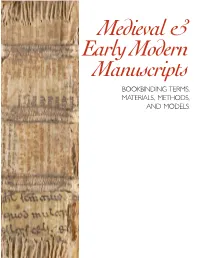

Medieval & Early Modern Manuscripts: Bookbinding Terms, Materials, Methods, and Models

Medieval & Early Modern Manuscripts BOOKBINDING TERMS, MATERIALS, METHODS, AND MODELS Medieval & Early Modern Manuscripts BOOKBINDING TERMS, MATERIALS, METHODS, AND MODELS This booklet was compiled by the Special Collections Conservation Unit of the Preservation Department of Yale University Library. If you have any comments or questions, please email Karen Jutzi at [email protected]. A NOTE ON DATES & TERMINOLOGY: The terms ‘Carolingian’, ‘Romanesque’, and ‘Gothic’ are used in this booklet to describe a method of board attachment as recognized by J.A. Szirmai in The Ar- chaeology of Medieval Bookbinding (Aldershot: Ashgate, 1999), and are often used by others to describe medi- eval binding structures. While a convenient method of categorization, using the name of the historical period to describe a style of binding can be misleading. Many other styles of binding existed concurrently; it should not be as- sumed that all bindings of a certain historical period were bound the same way. Furthermore, changes in bookbinding did not happen overnight. Methods co- existed; styles overlapped; older structures continued to be used well into the next historical period. Many thanks to Professor Nicholas Pickwoad, Uni- versity College, London, for his helpful comments, suggestions, and corrections on terminology. kaj 1/2015 Table of Contents MATERIALS OF MEDIEVAL & EARLY MODERN BOOKBINDING Wood 8 Leather 9 Alum-tawed skin 10 Parchment & vellum 11 TERMINOLOGY Parts of a medieval book 14 Outside structure 15 Inside structure 16 Bindery tools & equipment -

PART 5 Understanding and Using the “Toolkit Guidelines for Graphic Design”

TOOLKIT for Making Written Material Clear and Effective SECTION 2: Detailed guidelines for writing and design PART 5 Understanding and using the “Toolkit Guidelines for Graphic Design” Chapter 3 Guidelines for fonts (typefaces), size of print, and contrast U.S. Department of Health and Human Services Centers for Medicare & Medicaid Services TOOLKIT Part 5, Chapter 3 Guidelines for fonts (typefaces), size of print, and contrast Introduction .......................................................................................................................... 41 List of guidelines covered in this chapter ..................................................................... 42 Background on terms we use to describe fonts ............................................................ 45 Guidelines for choosing fonts (guidelines 6.1, 6.2, and 6.3) ........................................... 49 Make the print large enough for easy reading by your intended readers (guideline 6.4).... 53 Avoid using “all caps” (guideline 6.5) .......................................................................... 56 For text emphasis, use boldface or italics (with restraint) (guideline 6.6)......................... 59 Use very dark colored text on very light non-glossy background (guideline 6.7) .............. 61 Do not print text sideways or on top of shaded backgrounds, photos, or patterns (guideline 6.8) ................................................................................................. 67 Adjust the spacing between lines (guideline 6.9).........................................................