Xen and the Art of Virtualization



Recommended publications


Industrial Control Via Application Containers: Migrating from Bare-Metal to IAAS

Florian Hofer (Student Member, IEEE) and Barbara Russo, Faculty of Computer Science, Free University of Bolzano-Bozen, Bolzano, Italy; Martin A. Sehr and Ines Ugalde, Corporate Technology, Siemens Corporation, Berkeley, CA 94704, USA; Antonio Iannopollo (Member, IEEE) and Alberto Sangiovanni-Vincentelli (Fellow, IEEE), EECS Department, University of California, Berkeley, CA 94720, USA.

Abstract: We explore the challenges and opportunities of shifting industrial control software from dedicated hardware to bare-metal servers or cloud computing platforms using off the shelf technologies. In particular, we demonstrate that executing time-critical applications on cloud platforms is viable based on a series of dedicated latency tests targeting relevant real-time configurations.

Index Terms: Industrial Control Systems, Real-Time, IAAS, Containers, Determinism

I. INTRODUCTION. Emerging technologies such as the Internet of Things and [...] control design full authority over the environment in which its software will run, it is not straightforward to determine under what conditions the software can be executed on cloud computing platforms due to resource virtualization. Yet, we believe that the principles of Industry 4.0 present a unique opportunity to explore complementing traditional automation components with a novel control architecture [3]. We believe that modern virtualization techniques such as application containerization [3]–[5] are essential for adequate utilization of cloud computing resources in industrial control systems. [...]

Xen on X86, 15 Years Later

Recent development, future direction.

Topics: QEMU Deprivileging, PVShim, Panopticon, Large guests (288 vcpus), NVDIMM, PVH Guests, PVCalls, VM Introspection / Memaccess, PV IOMMU, ACPI Memory Hotplug, PVH dom0, Posted Interrupts, KConfig, Sub-page protection, Hypervisor Multiplexing.

Talk approach

- Highlight some key features
  - Recently finished
  - In progress
  - Cool Idea: Should be possible, nobody committed to working on it yet
- Highlight how these work together to create interesting theme
  - PVH (with PVH dom0)
  - KConfig
  - … to disable PV
  - PVshim
  - Windows in PVH

PVH: Finally here

- Full PVH DomU support in Xen 4.10, Linux 4.15
- First backwards-compatibility hack
- Experimental PVH Dom0 support in Xen 4.11

PVH: What is it?

- Next-generation paravirtualization mode
- Takes advantage of hardware virtualization support
- No need for emulated BIOS or emulated devices
- Lower performance overhead than PV
- Lower memory overhead than HVM
- More secure than either PV or HVM mode

KConfig

- KConfig for Xen allows…
  - Users to produce smaller / more secure binaries
  - Makes it easier to merge experimental functionality
- KConfig option to disable PV entirely

PVShim

- Some older kernels can only run in PV mode
- Expect to run in ring 1, ask a hypervisor to perform privileged actions
- “Shim”: A build of Xen designed to allow an unmodified PV guest to run in PVH mode
- [Diagram: PV-only kernel (ring 1) on top of “Shim” Hypervisor (ring 0)]

Xen to KVM Migration Guide

SUSE Linux Enterprise Server 12 SP4

As the KVM virtualization solution is becoming more and more popular among server administrators, many of them need a path to migrate their existing Xen based environments to KVM. As of now, there are no mature tools to automatically convert Xen VMs to KVM. There is, however, a technical solution that helps convert Xen virtual machines to KVM. The following information and procedures help you to perform such a migration.

Publication Date: September 24, 2021

Contents: 1 Migration to KVM Using virt-v2v; 2 Xen to KVM Manual Migration; 3 For More Information; 4 Documentation Updates; 5 Legal Notice; 6 GNU Free Documentation License

Important: Migration Procedure Not Supported. The migration procedure described in this document is not fully supported by SUSE. We provide it as guidance only.

1 Migration to KVM Using virt-v2v

This section contains information to help you import virtual machines from foreign hypervisors (such as Xen) to KVM managed by libvirt.

Tip: Microsoft Windows Guests. This section is focused on converting Linux guests. Converting Microsoft Windows guests using virt-v2v is the same as converting Linux guests, except in regards to handling the Virtual Machine Driver Pack (VMDP). Additional details on converting Windows guests with the VMDP can be found in the separate Virtual Machine Driver Pack documentation at https://www.suse.com/documentation/sle-vmdp-22/.

1.1 Introduction to virt-v2v

virt-v2v is a command line tool to convert VM Guests from a foreign hypervisor to run on KVM managed by libvirt. [...]

L4 – Virtualization and Beyond

Hermann Härtig (1, 2), Michael Roitzsch (1), Adam Lackorzynski (2), Björn Döbel (2), Alexander Böttcher (1). (1) Department of Computer Science, Technische Universität Dresden, 01062 Dresden, Germany; (2) GWT-TUD GmbH, 01187 Dresden, Germany. {haertig,mroi,adam,doebel,boettcher}@os.inf.tu-dresden.de

Abstract: After being introduced by IBM in the 1960s, virtualization has experienced a renaissance in recent years. It has become a major industry trend in the server context and is also popular on consumer desktops. In addition to the well-known benefits of server consolidation and legacy preservation, virtualization is now considered in embedded systems. In this paper, we want to look beyond the term to evaluate advantages and downsides of various virtualization approaches. We show how virtualization can be complemented or even superseded by modern operating system paradigms. Using L4 as the basis for virtualization and as an advanced microkernel provides a best-of-both-worlds combination. Microkernels can contribute proven real-time capabilities and small trusted computing [...]

[...] Mac OS X environment, using full hardware acceleration by the GPU. Virtual machines are used to test potentially malicious software without risking the host environment and they help developers in debugging crashes with back-in-time execution. In the server world, virtual machines are used to consolidate multiple services previously located on dedicated machines. Running them within virtual machines on one physical server eases management and helps saving power by increasing utilization. In server farms, migration of virtual machines between servers is used to balance load with the potential of shutting down completely unloaded servers or adding more to increase capacity. Lastly, virtual machines also isolate different customers who can purchase virtual shares of a physical server in a data center. [...]

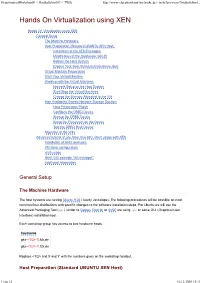

Hands on Virtualization Using XEN

VirtualizationWorkshop09 < GridkaSchool09 < TWiki http://www-ekp.physik.uni-karlsruhe.de/~twiki/bin/view/GridkaSchool...

Contents: General Setup The Machine Hardware Host Preparation (Standard UBUNTU XEN Host) Installation of the XEN Packages Modification of the Bootloader GRUB Reboot the Host System Explore Your New XEN dom0 Hardware Host Virtual Machine Preparation Start Your Virtual Machine Working with the Virtual Machines Network Setup on the Host System Start/Stop the Virtual Machines Change the Memory Allocated to the VM High Availability Shared Network Storage Solution Host Preparation Phase Configure the DRBD Device Startup the DRBD Device Setup the Filesystem on the Device Test the DRBD Raid Device Migration of the VMs Advanced tutorial (if you have time left): libvirt usage with XEN Installation of libvirt and tools VM libvirt configuration virsh usage libvirt GUI example "virt-manager" Additional Information

General Setup: The Machine Hardware

The host systems are running Ubuntu 9.04 (Jaunty Jackalope). The following procedures will be possible on most common Linux distributions with specific changes to the software installation steps. For Ubuntu we will use the Advanced Packaging Tool (apt), similar to Debian. RedHat and SuSE use rpm or a GUI (Graphical User Interface) installation tool. Each workshop group has access to two hardware hosts, with hostnames gks-<1/2>-X.fzk.de and gks-<1/2>-Y.fzk.de. Replace <1/2>, X and Y with the numbers given on the workshop handout.

Host Preparation (Standard UBUNTU XEN Host) [...]

Lars Kurth: Community Manager, Xen Project; Chairman, Xen Project Advisory Board; Director, Open Source, Citrix

Lars Kurth (lars_kurth): Community Manager, Xen Project; Chairman, Xen Project Advisory Board; Director, Open Source, Citrix.

- Was a contributor to various projects
- Worked in parallel computing, tools, mobile and now virtualization
- Long history in change projects
- Community guy at Symbian Foundation: learned how NOT to do stuff
- Community guy for the Xen Project: working for Citrix, accountable to Xen Project Advisory Board
- Chairman of Xen Project Advisory Board

[Chart, 2006 to 2014, vertical axis 0 to 250000, with projected values; annotation: "More than 1 Million Projects Today". Source: The 2013 Future of Open Source Survey Results]

- Late 90’s: Individuals & Hobbyists
- Today: Still about Individuals. But, a majority are employees. Companies have a huge stake.
- Features; How many users you have; How many vendors back you; How you are seen in the press; …
- Different Management Disciplines can help you succeed: Neutrality / Perception; Support; Infrastructure; Expertise / Mentoring; Vendor Network; …
- BUT: You still need to do all the right things

Case Study: An Open Source Hypervisor

- > 10M Users
- Powering some of the biggest Clouds in Production: Amazon Web Services, Rackspace Public Cloud, Terremark, …
- Several sub-projects: Xen Hypervisor (including Xen on ARM), XAPI management tools, Mirage OS
- Linux Foundation Collaborative Project, sponsored by Amazon Web Services, AMD, Bromium, Calxeda, CA Technologies, Cisco, Citrix, Google, Intel, NetApp, Oracle, Samsung and Verizon
- 10 years old

Four Key Issues: Symptoms; Consequences for Xen; Fixes that were applied; Effect this had (there may be others)

Design of the Next-Generation Securedrop Workstation Freedom of the Press Foundation

I. INTRODUCTION

Whistleblowers expose wrongdoing, illegality, abuse, misconduct, waste, and/or threats to public health or safety. Whistleblowing has been critical for some of the most important stories in the history of investigative journalism, e.g. the Pentagon Papers, the Panama Papers, and the Snowden disclosures. From the Government Accountability Project’s Whistleblower Guide (1): The power of whistleblowers to hold institutions and leaders accountable very often depends on the critical work of journalists, who verify whistleblowers’ disclosures and then bring them to the public. The partnership between whistleblowers and journalists is essential to a functioning democracy.

In the United States, shield laws and reporter’s privilege exist to protect the right of a journalist to not reveal the identity of a source. However, under both the Obama and Trump administrations, governments have attempted to identify journalistic sources via court orders to third parties holding journalists’ records. Under the Obama administration, the Associated Press had its telephone records acquired in order to identify a source (2). Under the Trump Administration, New York Times journalist Ali Watkins had her phone and email records acquired by court order (3). If source-journalist communications are mediated by third parties that can be subject to subpoena, source identities can be revealed without a journalist being aware, due to a gag that is often associated with such court orders.

Sources can face a range of reprisals. These could be personal reprisals such as reputational or relationship damage, or for employees that reveal wrongdoing, loss of employment and career opportunities. [...]

Paravirtualizing Linux in a Real-Time Hypervisor

Vincent Legout and Matthieu Lemerre, CEA, LIST, Embedded Real Time Systems Laboratory, Point courrier 172, F-91191 Gif-sur-Yvette, FRANCE. {vincent.legout,matthieu.lemerre}@cea.fr

ABSTRACT: This paper describes a new hypervisor built to run Linux in a virtual machine. This hypervisor is built inside Anaxagoros, a real-time microkernel designed to safely execute hard real-time and non real-time tasks. This allows the execution of hard real-time tasks in parallel with Linux virtual machines without interfering with the execution of the real-time tasks. We implemented this hypervisor and compared its performance with other virtualization techniques. Our hypervisor does not yet provide high performance but gives correct results, and we believe the design is solid enough to guarantee solid performance in its future implementation.

[...] applications written for a fully-featured operating system such as Linux. The Anaxagoros design prevents non real-time tasks from interfering with real-time tasks, thus providing the security foundation to build a hypervisor to run existing non real-time applications. This allows current applications to run on Anaxagoros systems without any porting effort, opening access to a wide range of applications. Furthermore, modern computers, even embedded processors, are powerful enough to use virtualization. Virtualization has become a trendy topic in computer science, with advantages such as scalability and security. Thus we believe that building a real-time system with guaranteed real-time performance and dense non real-time tasks is an important topic for the future of real-time systems. [...]

Paravirtualized Applications on Top of an L4/Fiasco Microkernel

TECHNOLOGICAL EDUCATIONAL INSTITUTE OF CRETE

Paravirtualized applications on top of an L4/Fiasco Microkernel, by Emmanouil Fragkiskos Ragkousis. A thesis submitted in partial fulfillment for the degree of Bachelor of Science in the Technological Educational Institute of Crete, Department of Informatics Engineering. March 31, 2019.

Abstract (Σύνοψη): In this thesis we added Zedboard support to L4/Fiasco, which allowed us to use it as a hypervisor. In this way we can achieve a better use of resources by sharing them between multiple operating systems and/or bare metal programs. It also allows us to achieve better security by controlling access to parts of the hardware.

Thesis Supervisor: Kornaros Georgios. Title: Assistant Professor at Department of Informatics Engineering, TEI of Crete.

Acknowledgements: This bachelor thesis is my first academic milestone and looking back I would like to thank all the people that are not listed below for their support and encouragement until today. [...]


Performance Exploration of Virtualization Systems (arXiv:2103.07092v1 [cs.DC], 12 Mar 2021)

Joel Mandebi Mbongue, Danielle Tchuinkou Kwadjo, Christophe Bobda, University of Florida, Gainesville, Florida.

ABSTRACT: Virtualization has gained astonishing popularity in recent decades. It is applied in several application domains, including mainframes, personal computers, data centers, and embedded systems. While the benefits of virtualization are no longer to be demonstrated, it often comes at the price of performance degradation compared to native execution. In this work, we conduct a comparative study on the performance outcome of VMWare, KVM, and Docker against compute-intensive, IO-intensive, and system benchmarks. The experiments reveal that containers are the way-to-go for the fast execution of applications. It also shows that VMWare and KVM perform similarly on most of the benchmarks.

KEYWORDS: Virtualization, Containers, KVM, VMware, Docker

Figure 1: x86 Privilege Ring and Virtualization. (a) Typical configuration in environment with no virtualization. The kernel runs at level 0 and applications run at level 3. (b) Corresponds to bare-metal virtualization stacks. There is no host operating system, the virtual machine monitor (VMM) runs at level 0 and guest applications are at level 3. (c) Deployment of hosted VMMs. The host kernel runs at level 0, the VMM at level 1, and the guests at level 3.

RT-Xen: Towards Real-Time Hypervisor Scheduling in Xen

Sisu Xi, Justin Wilson, Chenyang Lu, and Christopher Gill, Department of Computer Science and Engineering, Washington University in St. Louis. {xis, wilsonj, lu, cdgill}@cse.wustl.edu

ABSTRACT: As system integration becomes an increasingly important challenge for complex real-time systems, there has been a significant demand for supporting real-time systems in virtualized environments. This paper presents RT-Xen, the first real-time hypervisor scheduling framework for Xen, the most widely used open-source virtual machine monitor (VMM). RT-Xen bridges the gap between real-time scheduling theory and Xen, whose wide-spread adoption makes it an attractive platform for integrating a broad range of real-time and embedded systems. Moreover, RT-Xen provides an open-source platform for researchers and integrators to develop and evaluate real-time scheduling techniques, which to date have been studied predominantly via analysis and simulations. Extensive experimental results demonstrate the feasibility, efficiency, and efficacy of fixed-priority hierarchical real-time scheduling in RT-Xen. RT-Xen instantiates a suite of fixed-priority servers (Deferrable Server, Periodic Server, [...]

[...] [Software]: Operating Systems - Organization and Design - Hierarchical design

Keywords: real-time virtualization; hierarchical scheduling; sporadic server, deferrable server, periodic server, polling server

1. INTRODUCTION. Virtualization has been widely adopted in enterprise computing to integrate multiple systems on a shared platform. Virtualization breaks the one-to-one correspondence between logical systems and physical systems, while maintaining the modularity of the logical systems. Breaking this correspondence allows resource provisioning and subsystem development to be done separately, with subsystem developers stating performance demands which a system integrator must take into account. [...]

HPC Containers in Use

Jonathan Sparks, SC and Clusters R&D, Cray Inc., Bloomington, MN, USA.

Abstract: Linux containers in the commercial world are changing the landscape for application development and deployments. Container technologies are also making inroads into HPC environments, as exemplified by NERSC’s Shifter and LBL’s Singularity. While the first generation of HPC containers offers some of the same benefits as the existing open container frameworks, like CoreOS or Docker, they do not address the cloud/commercial feature sets such as virtualized networks, full isolation, and orchestration. This paper will explore the use of containers in the HPC environment and summarize our study to determine how best to use these technologies in the HPC environment at scale.

Keywords: Shifter; HPC; Cray; container; virtualization; Docker; CoreOS

I. INTRODUCTION. Containers seem to have burst onto the infrastructure scene for good reason. [...] environments. This study uses several different container technologies and applications to evaluate the performance overhead, deployment, and isolation techniques for each. Our focus is on traditional HPC use cases and applications; more general use cases have been covered by previous work, such as that by Lucas Chaufournier [17].

This paper is organized as follows: Section II provides an overview of virtualization techniques; Section III presents the different container runtime environments; Section IV describes the motivation behind our study; Section V presents the experiments performed in order to evaluate both application performance and the system overhead incurred in hosting the environment; Section VI presents related work. Conclusions and future work are presented in Section VII.

II. VIRTUALIZATION. In this section we provide some background on the two [...]