Xen to KVM Migration Guide

Recommended publications

Effective Virtual CPU Configuration with QEMU and libvirt

Effective Virtual CPU Configuration with QEMU and libvirt. Kashyap Chamarthy <[email protected]>, Open Source Summit Edinburgh, 2018.

Timeline of recent CPU flaws, 2018: Jan 03 • Spectre v1: Bounds Check Bypass; Jan 03 • Spectre v2: Branch Target Injection; Jan 03 • Meltdown: Rogue Data Cache Load; May 21 • Spectre-NG: Speculative Store Bypass; Jun 21 • TLBleed: Side-channel attack over shared TLBs; Jun 29 • NetSpectre: Side-channel attack over local network; Jul 10 • Spectre-NG: Bounds Check Bypass Store; Aug 14 • L1TF: "L1 Terminal Fault"; ... • ?

What this talk is not about. Out of scope: internals of various side-channel attacks; how to exploit Meltdown & Spectre variants; details of performance implications. Related talks are listed in the ‘References’ section.

KVM-based virtualization components (diagram labels): OpenStack, et al.; Virt Driver; libguestfs (guestfish); Custom Appliance; libvirtd; QMP; QEMU; VM1, VM2; Disk1, Disk2; ioctl(); Linux with KVM.

Industrial Control Via Application Containers: Migrating from Bare-Metal to IAAS

Industrial Control via Application Containers: Migrating from Bare-Metal to IAAS. Florian Hofer, Student Member, IEEE (Faculty of Computer Science, Free University of Bolzano-Bozen, Bolzano, Italy, fl[email protected]); Martin A. Sehr (Corporate Technology, Siemens Corporation, Berkeley, CA 94704, USA, [email protected]); Antonio Iannopollo, Member, IEEE (EECS Department, University of California, Berkeley, CA 94720, USA, [email protected]); Ines Ugalde (Corporate Technology, Siemens Corporation, Berkeley, CA 94704, USA, [email protected]); Alberto Sangiovanni-Vincentelli, Fellow, IEEE (EECS Department, University of California, Berkeley, CA 94720, USA, [email protected]); Barbara Russo (Faculty of Computer Science, Free University of Bolzano-Bozen, Bolzano, Italy, [email protected]).

Abstract: We explore the challenges and opportunities of shifting industrial control software from dedicated hardware to bare-metal servers or cloud computing platforms using off the shelf technologies. In particular, we demonstrate that executing time-critical applications on cloud platforms is viable based on a series of dedicated latency tests targeting relevant real-time configurations.

Index Terms: Industrial Control Systems, Real-Time, IAAS, Containers, Determinism

I. INTRODUCTION. Emerging technologies such as the Internet of Things and [...] control design full authority over the environment in which its software will run, it is not straightforward to determine under what conditions the software can be executed on cloud computing platforms due to resource virtualization. Yet, we believe that the principles of Industry 4.0 present a unique opportunity to explore complementing traditional automation components with a novel control architecture [3]. We believe that modern virtualization techniques such as application containerization [3]–[5] are essential for adequate utilization of cloud computing resources in industrial control systems.

KVM Based Virtualization and Remote Management

KVM Based Virtualization and Remote Management. Srinath Reddy Pasunuru, St. Cloud State University, [email protected]. theRepository at St. Cloud State, Culminating Projects in Information Assurance, Department of Information Systems, 5-2018.

Recommended Citation: Pasunuru, Srinath Reddy, "KVM Based Virtualization and Remote Management" (2018). Culminating Projects in Information Assurance. 53. https://repository.stcloudstate.edu/msia_etds/53

A Starred Paper Submitted to the Graduate Faculty of St. Cloud State University in Partial Fulfillment of the Requirements for the Degree Master of Science in Information Assurance, May, 2018. Starred Paper Committee: Susantha Herath, Chairperson; Ezzat Kirmani; Sneh Kalia.

Abstract: In the recent past, cloud computing has been one of the most significant shifts, and the Kernel-based Virtual Machine (KVM) is the most commonly deployed hypervisor in the IaaS layer of cloud computing systems. The hypervisor provides the complete virtualization environment, which is intended to virtualize as much of the hardware and systems as possible, including the CPUs, memory, network interfaces and so on. Because of virtualization technologies such as KVM and others such as ESXi, there has been a significant decrease in resource usage and in the costs involved.

Xen on x86, 15 Years Later

Xen on x86, 15 years later: Recent development, future direction.

Features shown: QEMU Deprivileging; PVShim; Panopticon; Large guests (288 vcpus); NVDIMM; PVH Guests; PVCalls; VM Introspection / Memaccess; PV IOMMU; ACPI Memory Hotplug; PVH dom0; Posted Interrupts; KConfig; Sub-page protection; Hypervisor Multiplexing.

Talk approach • Highlight some key features • Recently finished • In progress • Cool Idea: Should be possible, nobody committed to working on it yet • Highlight how these work together to create interesting theme

Outline • PVH (with PVH dom0) • KConfig • … to disable PV • PVshim • Windows in PVH

PVH: Finally here • Full PVH DomU support in Xen 4.10, Linux 4.15 • First backwards-compatibility hack • Experimental PVH Dom0 support in Xen 4.11

PVH: What is it? • Next-generation paravirtualization mode • Takes advantage of hardware virtualization support • No need for emulated BIOS or emulated devices • Lower performance overhead than PV • Lower memory overhead than HVM • More secure than either PV or HVM mode

KConfig • KConfig for Xen allows… • Users to produce smaller / more secure binaries • Makes it easier to merge experimental functionality • KConfig option to disable PV entirely

PVShim • Some older kernels can only run in PV mode • Expect to run in ring 1, ask a hypervisor to perform privileged actions • “Shim”: A build of Xen designed to allow an unmodified PV guest to run in PVH mode (diagram labels: PV-only kernel (ring 1); “Shim” Hypervisor (ring 0))

oVirt Architecture

oVirt Architecture. Itamar Heim; presented here by Dan Kenigsberg, [email protected].

Agenda ● oVirt Components ● Engine ● Clients ● Host ● Engine Agent - VDSM ● Guest ● Storage Concepts ● Data Warehouse & Reports ● User flows

Architecture From 30,000 Feet (diagram labels): Servers; Engine; Client.

The Real World (diagram labels): Web Clients; Python SDK; Python CLI; REST; Engine; DB; LDAP Server; Guest agent; Spice client; Guest; Shared Storage; VDSM; Host; Local Storage.

oVirt Engine: VM & Template Life Cycle (create, schedule, snapshot); Load Balancing; HA; Storage Configuration & Monitoring; Network Configuration & Monitoring; Host Register/Install; Host Monitoring; Host Maintenance; Host Fencing; Authentication, Authorization; Inventory; Audit.

oVirt Engine (diagram labels): Postgres DB; Active Directory; RHDS; IDM; Engine; REST.

The Clients (diagram labels): Admin Portal; User Portal; Python SDK; Python CLI; REST; Engine. Further slides: Admin Portal; User Portal; Power User Portal; REST API; SDK; CLI.

The Host (diagram labels): QEMU/KVM; Fedora; Engine; MOM; libvirt; oVirt Node; VDSM; KSM. Configuration Monitoring : Network, Storage, Host,

VNFs in a CNF Environment

VNFs in a CNF environment. Monika Antoniak, Piotr Skamruk, CodiLime.

Agenda ● Who are we? ● Business use case - use existing VNFs in a containerized set-up ● Technical solution to the problem ● Q&A

Who we are ● CodiLime has been providing networking engineering services since 2011 ● As part of our R&D efforts and based on our expertise with CNFs and VNFs, we have decided to explore this topic further ● Today we are presenting the working example

Business use case. What if… ● You love the lightness of containers and use them on a daily basis ● You value the flexibility and resilience of the overall solution ● You want a simple way to deploy things ● You enjoy using kubernetes to manage your resources ● ...and you use a business critical network function as a blackbox VM. What can you do to get all of that?

A step back: VNFs and CNFs.

VNF (Virtual Network Function): a well-known way to realize network functions in virtualized or cloud environments. Software is provided as a VM image that cannot be changed into a container image, or is based on an operating system other than Linux. VNF examples: vFW, vBNG, vEPC.

CNF (Containerized Network Function): a new way of providing required network functionality with the help of containers. Software is distributed as a container image and can be managed using container-management tools (like docker images in kubernetes env). CNF examples: vCPE/cCPE, LDAP, DHCP.

Back to business. Goal: a converged setup for running containerized and VM-based network functions ● using a single user interface

The Evolution of Linux Containers and Integration of Docker with SLES® 12

The Evolution of Linux Containers and Integration of Docker with SLES® 12. Michal Svec, Senior Product Manager, [email protected]; Flavio Castelli, Senior Software Engineer, [email protected].

Agenda • Linux Containers • Docker • Demo

Linux Containers

Traditional virtualization (diagram labels): App A, A', B, B'; Bins/Libs; Guest OS; Virtual Machine; Hypervisor (Type 2); Host OS; Server.

Linux Containers (diagram labels): App A, A', B, B'; Bins/Libs; Guest OS; System container; Application container; Kernel; Hypervisor (Type 2); Host OS; Server.

What is a Linux Container? (diagram labels): Apps; Kernel; Server.

Why Use Linux Containers? • Lightweight virtualization solution ‒ Isolated from the other processes ‒ 1 kernel to rule them all ‒ Normal I/O ‒ Dynamic changes possible without reboot ‒ Nested virtualization is not a problem ‒ No boot time or very short one • Isolate services (e.g. web server, ftp, ...) • Provide root read-only access ‒ Mount host / as read-only ‒ Add only needed resources read-write

Linux Containers Use Cases • Deploy everywhere quickly ‒ Deploy application and their dependencies together. • Enterprise Data Center ‒ Limit applications which have a tendency to grab all resources on a system: ‒ Memory (databases) ‒ CPU cycles/scheduling (compute intensive applications) • Outsourcing business ‒ Guarantee a specific amount of resources (SLAs!) to a set of applications for a specific customer without more heavy virtualization technologies

Debugging the Virtualization Layer (libvirt and QEMU) in OpenStack

Debugging the Virtualization layer (libvirt and QEMU) in OpenStack. Kashyap Chamarthy <[email protected]>, OpenStack Summit Tokyo 2015.

Part I: Problem background and overview

Problem background – Lots of moving parts: OpenStack services, Virt drivers, System components, etc – Tracking interactions between multiple components is challenging – Finding relevant log patterns in complex systems can become cumbersome. Effective root cause analysis with right tooling.

What kind of bugs? – Unexpected guest crashes – Heisenbugs! (e.g. Nova bug: #1334398) – Bugs introduced by load (e.g. OpenStack CI infra: ~800 test jobs/hr[*]) – Subtle issues in complex features (e.g. live migration), perf. degradation. [*] http://status.openstack.org/zuul/

OpenStack Nova – Compute workloads – Pluggable Virtualization drivers: [libvirt] virt_type=kvm|qemu|xen|[...] ... – nova-compute: facilitates interactions between hypervisors (libvirt/KVM) & VMs, via the virt driver interface

KVM Virtualization building blocks. KVM – Linux hardware virt (vmx|svm). QEMU – Emulator: Devices (disk, networks, display, sound, PCI, etc); CPU: $ qemu-system-x86_64 -device \? and $ qemu-system-x86_64 -cpu \? – Interactions with libvirt: QMP JSON RPC interface, command-line. libvirt – Hypervisor agnostic virtualization library. Default virtualization drivers in OpenStack.

OpenStack KVM Virtualization building blocks (diagram labels): OpenStack (`nova-compute`); libguestfs; guestfish; virt-*

Building VMNetX with qemu and libvirt

Building VMNetX with qemu and libvirt. github.com/cmusatyalab/vmnetx. Benjamin Gilbert, [email protected], June 3, 2013.

What is VMNetX? ● Virtual Machine Network Execution ● Tool for executing remote VMs locally with low startup overhead ○ Disk chunks demand-paged from server ● Designed for preservation and execution of old software ○ Collaboration with University Libraries ● GPLv2 ● Part of the Olive project, olivearchive.org

Demo

Building VMNetX ● System architecture ● libvirt & qemu in practice ● Unsolved problems

Virtual Machine Lifecycle ● Archive curator builds and uploads a VM ○ Using virt-manager for now ● Many users run it ○ We do not save their changes (diagram labels: Curator; VM; Users)

What is a VM? Domain XML; Disk Image; Memory Image.

Domain XML: <domain type="kvm"> <name>machine</name> <uuid>a7434757-631b-496d-a1ba-638014c74cc4</uuid> <memory>65536</memory> <currentMemory>65536</currentMemory> <vcpu>1</vcpu> <os> <type arch="i686" machine="pc">hvm</type> <boot dev="hd"/> </os> <features> <pae/> </features> <clock offset="utc"/> <devices> <emulator>/usr/libexec/qemu-kvm</emulator> <disk type="file" device="disk"> <driver name="qemu" type="raw"/> <source file="/disk.img"/> <target dev="hda" bus="ide"/> <address type="drive" controller="0" bus="0" unit="0"/> </disk> <controller type="ide" index="0"> <address type="pci" domain="0x0000" bus="0x00" slot="0x01" function="0x1"/> </controller> <interface type="user"> <mac address="52:54:00:03:a0:11"/> <address type="pci" domain="0x0000" bus="0x00"

Testing in QEMU and libvirt: Beyond make and make check

TESTING IN QEMU AND LIBVIRT: Beyond make and make check. YASH MANKAD, RED HAT INC., 26th October 2018, KVM Forum 2018.

AGENDA: What is the state of testing in QEMU and libvirt? Why should we change? How will it work? Where will it run?

STATE OF VIRT TESTING. QEMU: Tests included in qemu source are unit tests. CI environments build QEMU from source using 'make' and run 'make check'. Sub-system tests such as iotests, coverity, and dynamic code analysis. libvirt: test suite present in source code runs unit tests. CI environment uses the autogen.sh script to build and runs gmake; 'check' ensures XML can run qemu.

STATE OF QEMU TESTING: Runs for every patch Ubuntu 12/14.04 x86_64 Runs Debian, Ubuntu sent to qemu-devel hosts, MAC OS X amd-64 ubuntu, fedora, centos Runs make Runs make on docker No functional tests No functional tests checkpatch.pl Runs make check

STATE OF LIBVIRT TESTING: on Runs Ubuntu in docker Runs CentOS, Debian, Fedora, rawhide and make syntax-check freebsd make install make and make install make dist

WHY SHOULD WE CHANGE? Why look beyond make, make check, make install, etc? Run more functional

L4 – Virtualization and Beyond

L4 – Virtualization and Beyond. Hermann Härtig, Michael Roitzsch, Adam Lackorzynski, Björn Döbel, Alexander Böttcher. Department of Computer Science, Technische Universität Dresden, 01062 Dresden, Germany; GWT-TUD GmbH, 01187 Dresden, Germany. {haertig,mroi,adam,doebel,boettcher}@os.inf.tu-dresden.de

Abstract: After being introduced by IBM in the 1960s, virtualization has experienced a renaissance in recent years. It has become a major industry trend in the server context and is also popular on consumer desktops. In addition to the well-known benefits of server consolidation and legacy preservation, virtualization is now considered in embedded systems. In this paper, we want to look beyond the term to evaluate advantages and downsides of various virtualization approaches. We show how virtualization can be complemented or even superseded by modern operating system paradigms. Using L4 as the basis for virtualization and as an advanced microkernel provides a best-of-both-worlds combination. Microkernels can contribute proven real-time capabilities and small trusted computing

[...] Mac OS X environment, using full hardware acceleration by the GPU. Virtual machines are used to test potentially malicious software without risking the host environment and they help developers in debugging crashes with back-in-time execution. In the server world, virtual machines are used to consolidate multiple services previously located on dedicated machines. Running them within virtual machines on one physical server eases management and helps saving power by increasing utilization. In server farms, migration of virtual machines between servers is used to balance load with the potential of shutting down completely unloaded servers or adding more to increase capacity. Lastly, virtual machines also isolate different customers who can purchase virtual shares of a physical server in a data center.

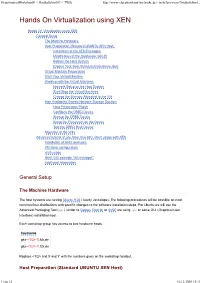

Hands on Virtualization Using XEN

VirtualizationWorkshop09 < GridkaSchool09 < TWiki http://www-ekp.physik.uni-karlsruhe.de/~twiki/bin/view/GridkaSchool...

Hands On Virtualization using XEN

Contents: General Setup; The Machine Hardware; Host Preparation (Standard UBUNTU XEN Host); Installation of the XEN Packages; Modification of the Bootloader GRUB; Reboot the Host System; Explore Your New XEN dom0 Hardware Host; Virtual Machine Preparation; Start Your Virtual Machine; Working with the Virtual Machines; Network Setup on the Host System; Start/Stop the Virtual Machines; Change the Memory Allocated to the VM; High Availability Shared Network Storage Solution; Host Preparation Phase; Configure the DRBD Device; Startup the DRBD Device; Setup the Filesystem on the Device; Test the DRBD Raid Device; Migration of the VMs; Advanced tutorial (if you have time left): libvirt usage with XEN; Installation of libvirt and tools; VM libvirt configuration; virsh usage; libvirt GUI example "virt-manager"; Additional Information.

General Setup

The Machine Hardware

The host systems are running Ubuntu 9.04 (Jaunty Jackalope). The following procedures should work on most common Linux distributions, with distribution-specific changes to the software installation steps. For Ubuntu we will use the Advanced Packaging Tool (apt), as on Debian. Red Hat and SUSE use rpm or a GUI (Graphical User Interface) installation tool. Each workshop group has access to two hardware hosts, with hostnames gks-<1/2>-X.fzk.de and gks-<1/2>-Y.fzk.de. Replace <1/2>, X and Y with the numbers given on the workshop handout.

Host Preparation (Standard UBUNTU XEN Host)