Federated Search by Milad Shokouhi and Luo Si

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Key Issue Cervone 1L

Serials – 20(1), March 2007. Key issue. Federated searching: today, tomorrow and the future (?) Frank Cervone, Assistant University Librarian for Information Technology, Northwestern University, Evanston, IL, USA. Although for many people it may seem that federated search software has been around forever, it has only been a little over three years since federated search burst on the library scene. In that time, the software has been evolving in many ways as the landscape of Internet-based searching has shifted. When many libraries decided to install federated search software, they were trying to address some of the issues that have confounded searchers since the introduction of CD ROM-based indexes in the early 1990s. For example, it has been known for some time that when patrons have multiple databases available to them, they typically prefer to use a single interface rather than having to learn different interfaces for each database. Furthermore, most people want to have a way to search those multiple resources simultaneously and then consolidate the search results into a single, ordered list. ... authorization, thereby allowing patrons the ability to use the resources from wherever they may happen to be. Standard features and evolving trends: In the current generation of federated searching products, standard features include the ability to use multiple protocols for gathering data from information products. In addition to the ubiquitous Z39.50 protocol, newer protocols such as SRU/SRW and OAI-PMH are rapidly becoming basic requirements for all federated search engines, regardless of scale. As the needs of researchers and faculty evolve, advanced features are being built into next-generation federated searching products, such as integration of the federated searching software with

Federated Search: New Option for Libraries in the Digital Era Shailendra Kumar Gareema Sanaman Namrata Rai

International CALIBER-2008. Federated Search: New Option for Libraries in the Digital Era. Shailendra Kumar, Gareema Sanaman, Namrata Rai. Abstract: The article describes the concept of federated searching and distinguishes it from metasearching, a term often used synonymously. The need for federated searching arises from the rapid growth of scholarly information. The advantages of federated search are described, along with a comparison of the old search model and the federated search model. Various technologies used for federated searching are discussed. The article explains how to select a federated search engine and how it works in libraries and other institutions. It also covers various federated search providers and closes with a list of the advantages and drawbacks of federated search systems. Keywords: Federated Search, Meta Search, Google Scholar, SCOPUS, XML, SRW. 1. Introduction: In the electronic information environment, one of the responses to the problem of bringing large amounts of information together has been for libraries to introduce portals. A portal is a gateway, or a point where users can start their search for information on the web. There are a number of different types of portals. For example, universities have been introducing “institutional portals”, which can be described as “a layer which aggregates, integrates, personalizes and presents information, transactions and applications to the user according to their role and preferences” (Dolphin, Miller & Sherratt, 2002). A second type of portal is a “subject portal”. A third type of portal is a “federated search tool”, which brings together the resources a library subscribes to and allows cross-searching of these resources.

From Federated to Aggregated Search

From federated to aggregated search. Fernando Diaz, Mounia Lalmas and Milad Shokouhi. Outline: Introduction and Terminology; Architecture; Resource Representation; Resource Selection; Result Presentation; Evaluation; Open Problems; Bibliography. Introduction: What is federated search? What is aggregated search? Motivations; Challenges; Relationships. A classical example of federated search (www.theeuropeanlibrary.org): one query; collections to be searched; merged list of results. Motivation for federated search: search a number of independent collections, with a focus on hidden web collections; collections not easily crawlable (and often should not); access to up-to-date information and data; parallel search over several collections; effective tool for enterprise and digital library environments. Challenges for federated search: How to represent collections, so as to know what documents each contains? How to select the collection(s) to be searched for relevant documents? How to merge results retrieved from several collections, to return one list of results to the users? Cooperative environment; uncooperative environment. From federated search to aggregated search: “Federated search on the web”. Peer-to-peer network connects distributed peers (usually for

Federated Search of Text Search Engines in Uncooperative Environments

Federated Search of Text Search Engines in Uncooperative Environments. Luo Si, Language Technology Institute, School of Computer Science, Carnegie Mellon University. Thesis Committee: Jamie Callan (Carnegie Mellon University, Chair), Jaime Carbonell (Carnegie Mellon University), Yiming Yang (Carnegie Mellon University), Luis Gravano (Columbia University). 2006. ACKNOWLEDGEMENTS: I would never have been able to finish my dissertation without the guidance of my committee members, help from friends, and support from my family. My first debt of gratitude must go to my advisor, Jamie Callan, for taking me on as a student and for leading me through many aspects of my academic career. Jamie is not only a wonderful advisor but also a great mentor. He told me different ways to approach research problems. He showed me how to collaborate with other researchers and share success. He guided me how to write conference and journal papers, had confidence in me when I doubted myself, and brought out the best in me. Jamie has given me tremendous help on research and life in general. This experience has been extremely valuable to me and I will treasure it for the rest of my life. I would like to express my sincere gratitude towards the other members of my committee, Yiming Yang, Jaime Carbonell and Luis Gravano, for their efforts and constructive suggestions. Yiming has been very supportive for both my work and my life since I came to Carnegie Mellon University. Her insightful comments and suggestions helped a lot to improve the quality of this dissertation. Jamie has kindly pointed out different ways to solve research problems in my dissertation, which results in many helpful discussions.

Federated Search As a Transformational Technology Enabling Knowledge Discovery: the Role of Worldwidescience.Org

Federated search as a transformational technology enabling knowledge discovery: the role of WorldWideScience.org. ABSTRACT. Purpose – To describe the work of the Office of Scientific and Technical Information (OSTI) in the U.S. Department of Energy Office of Science and OSTI’s development of the powerful search engine, WorldWideScience.org. With tools such as Science.gov and WorldWideScience.org, the patron gains access to multiple, geographically dispersed deep web databases and can search all of the constituent sources with a single query. Approach – Historical and descriptive. Findings – That WorldWideScience.org fills a unique niche in discovering scientific material in an information landscape that includes search engines such as Google and Google Scholar. Value – One of the few articles to describe in depth the important work being done by the U.S. Office of Scientific and Technical Information in the field of search and discovery. Paper type – Review. Keywords – OSTI, WorldWideScience, ScienceAccelerator.gov, Science.gov, search engines, federated search, Google, multilingual. The development of OSTI: Established in 1947, the U.S. Department of Energy (DOE) Office of Scientific and Technical Information (OSTI) (http://www.osti.gov/) fulfills the agency’s responsibilities to collect, preserve, and disseminate scientific and technical information (STI) emanating from DOE research and development (R&D) activities. OSTI was founded on the principle that science progresses only if knowledge is shared. OSTI’s mission is to advance science and sustain creativity by making R&D findings available and useful to DOE and other researchers and the public.

ASPECT Approach to Federated Search and Harvesting of Learning Object Repositories

ASPECT Approach to Federated Search and Harvesting of Learning Object Repositories. ECP 2007 EDU 417008 ASPECT. Deliverable number: D2.1. Dissemination level: Public. Delivery date: 28 February 2009. Status: Final. Author(s): KUL, EUN, VMG, RWCS, KOB. eContentplus: This project is funded under the eContentplus programme (OJ L 79, 24.3.2005, p. 1), a multiannual Community programme to make digital content in Europe more accessible, usable and exploitable. Contents: 1 Introduction; 2 Metadata standards & specifications; 3 Specifications for educational content discovery; 3.1 Search specifications; 3.1.1 SQI; 3.1.2 SRU/SRW; 3.1.3 Z39.50

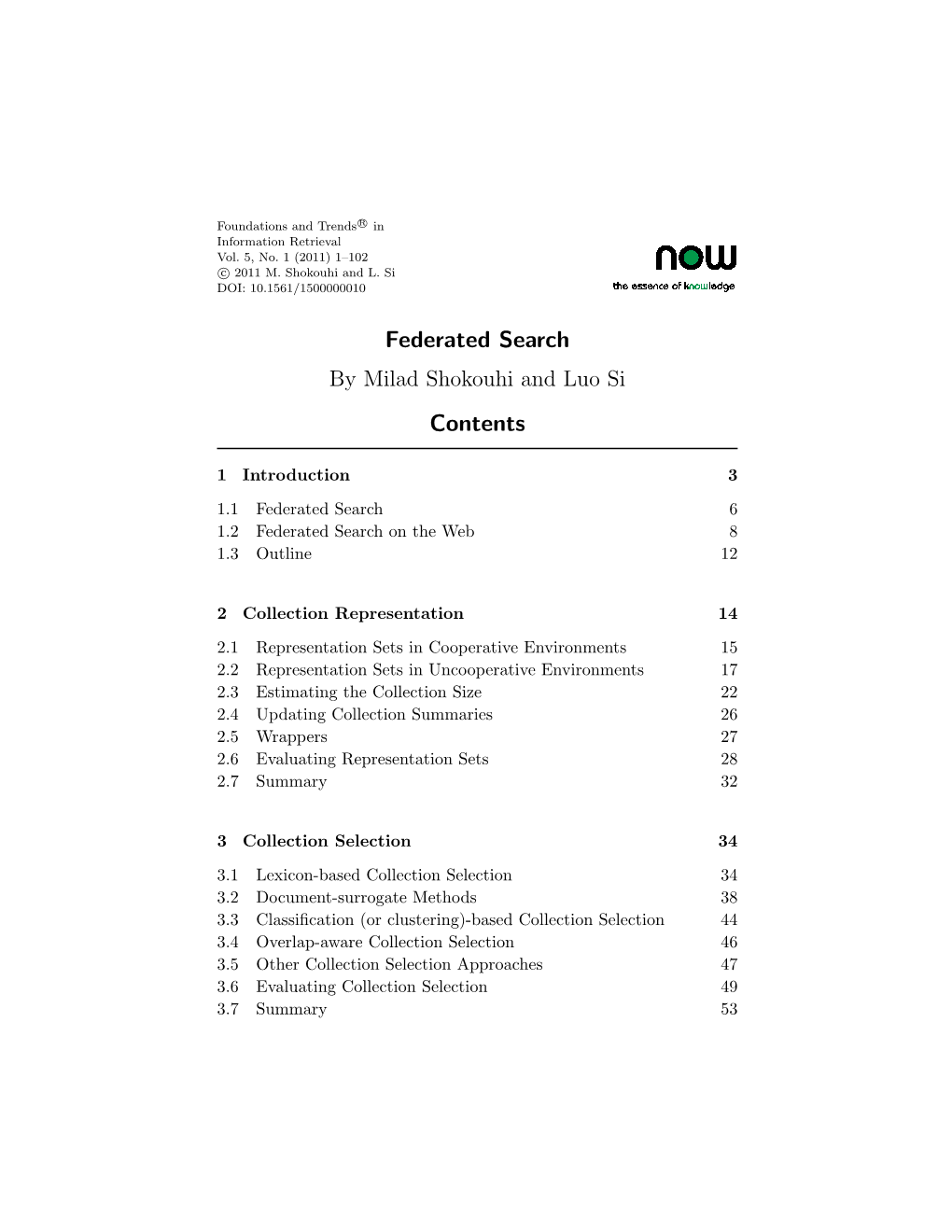

Federated Search

Foundations and Trends® in sample, Vol. xx, No xx (xxxx) 1–109, © xxxx xxxxxxxxx, DOI: xxxxxx. Federated Search. Milad Shokouhi (Microsoft Research, 7 JJ Thomson Avenue, Cambridge CB3 0FB, UK) and Luo Si (Purdue University, 250 N University Street, West Lafayette, IN 47907-2066, USA). Abstract: Federated search (federated information retrieval or distributed information retrieval) is a technique for searching multiple text collections simultaneously. Queries are submitted to a subset of collections that are most likely to return relevant answers. The results returned by selected collections are integrated and merged into a single list. Federated search is preferred over centralized search alternatives in many environments. For example, commercial search engines such as Google cannot easily index uncrawlable hidden web collections, while federated search systems can search the contents of hidden web collections without crawling. In enterprise environments, where each organization maintains an independent search engine, federated search techniques can provide parallel search over multiple collections. There are three major challenges in federated search. For each query, a subset of collections that are most likely to return relevant documents are selected. This creates the collection selection problem. To be able to select suitable collections, federated search systems need to acquire some knowledge about the contents of each collection, creating the collection representation problem. The results returned from the selected collections are merged before the final presentation to the user. This final step is the result merging problem. The goal of this work is to provide a comprehensive summary of the previous research on the federated search challenges described above.
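The three problems named in this abstract (collection representation, collection selection, result merging) can be illustrated with a small toy pipeline. The sketch below is only an illustration under stated assumptions: the function names, the term-frequency representation, the query-term-mass selection score and the min-max score normalization are all hypothetical choices made for this example, not the methods surveyed by the authors.

```python
from collections import Counter


def build_representation(sample_docs):
    """Collection representation (assumed form): term counts over sampled documents."""
    counts = Counter()
    for doc in sample_docs:
        counts.update(doc.lower().split())
    return counts


def select_collections(query, representations, k=2):
    """Collection selection (toy heuristic): rank collections by the share of
    sampled term occurrences that match the query terms, and keep the top k."""
    terms = query.lower().split()
    scores = {}
    for name, rep in representations.items():
        total = sum(rep.values()) or 1  # avoid division by zero for empty samples
        scores[name] = sum(rep[t] for t in terms) / total
    # Sort by descending score; break ties alphabetically for determinism.
    ranked = sorted(scores, key=lambda n: (-scores[n], n))
    return ranked[:k]


def merge_results(results_by_collection):
    """Result merging (toy heuristic): min-max normalize each collection's
    scores to [0, 1], then sort all documents into a single list."""
    merged = []
    for name, results in results_by_collection.items():
        if not results:
            continue
        raw = [score for _, score in results]
        low, high = min(raw), max(raw)
        span = (high - low) or 1.0  # all-equal scores normalize to 0.0
        for doc_id, score in results:
            merged.append((doc_id, name, (score - low) / span))
    merged.sort(key=lambda r: (-r[2], r[0]))
    return merged


# Hypothetical collections and sampled documents, for illustration only.
samples = {
    "medline": ["heart disease treatment", "cancer treatment trial"],
    "arxiv": ["neural network training", "graph neural network"],
    "news": ["election results", "heart of the city"],
}
representations = {name: build_representation(docs) for name, docs in samples.items()}
selected = select_collections("heart treatment", representations, k=2)
print(selected)

# Pretend each selected collection returned (doc_id, score) pairs on its own scale.
merged = merge_results({
    "medline": [("m1", 12.0), ("m2", 8.0)],
    "news": [("n1", 0.9), ("n2", 0.3)],
})
print([doc_id for doc_id, _, _ in merged])
```

Per-collection normalization is used here because scores from independent search engines are generally not comparable on their raw scales; a real system would likely need a more careful merging strategy.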

Federated Search and Recommendation

1 Federated Search and Recommendation. Chapter written by Dileepa JAYAKODY, Nirojan SELVANATHAN, Violeta DAMJANOVIC-BEHRENDT. Federated search systems emerged in the late 1990s to let library users search multiple library sources and catalogues simultaneously [ELG 12]. The first such systems were designed to collect the search criteria from the users, submit search queries to the sources, wait for a response to arrive, and finally, filter and organize the query results to be sent back to the users. With the maturity of Cloud Computing, Distributed Machine Learning (DML), big data, fog and edge computing technologies, new opportunities for exploiting computing resources on demand appeared, creating huge impacts on many sectors. However, implementing federated search systems remains a challenge, not only for technical solutions but also for the governance decisions and policies that need to be implemented and administered. 1.1. Introduction: The authors in [ORC 10] differentiate between Windows Search and Federated Search, as two types of search in Windows 7. Windows Search enables searching of the entire local system and a limited amount of external resources such as shared drives. Federated Search enables the simultaneous search of multiple sources, including document repositories, databases, remote repositories and external Web sites. To enable federated features for websites to be searched by the user, special search connectors need to be implemented and configured for each website [ORC 10]. For example, the selection-based search in MacOS Spotlight requires an index of all items and files that exist in the system to be created. One of the biggest issues with federated search relates to resource accessibility, thus requiring one large index to be created from the multiple sources that constitute the federation.

Overview of the TREC 2013 Federated Web Search Track

Overview of the TREC 2013 Federated Web Search Track. Thomas Demeester (Ghent University - iMinds, Belgium); Dolf Trieschnigg, Dong Nguyen, Djoerd Hiemstra (University of Twente, The Netherlands; {d.trieschnigg, d.nguyen, d.hiemstra}@utwente.nl). ABSTRACT: The TREC Federated Web Search track is intended to promote research related to federated search in a realistic web setting, and hereto provides a large data collection gathered from a series of online search engines. This overview paper discusses the results of the first edition of the track, FedWeb 2013. The focus was on basic challenges in federated search: (1) resource selection, and (2) results merging. After an overview of the provided data collection and the relevance judgments for the test topics, the participants' individual approaches and results on both tasks are discussed. Promising research directions and an outlook on the 2014 edition of the track are provided as well. ... track. A total of 11 groups (see Table 1) participated in the two classic distributed search tasks [9]: Task 1: Resource Selection. The goal of resource selection is to select the right resources from a large number of independent search engines given a query. Participants had to rank the 157 search engines for each test topic without access to the corresponding search results. The FedWeb 2013 collection contains search result pages for many other queries, as well as the HTML of the corresponding web pages. These data could be used by the participants to build resource descriptions. Some of the participants also used external sources such as Wikipedia, ODP, or WordNet.

HELIN Federated Search Task Force Final Report HELIN Federated Search Task Force

HELIN Consortium, HELIN Digital Commons, HELIN Task Force reports. January 2007. HELIN Federated Search Task Force Final Report. HELIN Federated Search Task Force. Follow this and additional works at: http://helindigitalcommons.org/task Recommended Citation: Federated Search Task Force, HELIN, "HELIN Federated Search Task Force Final Report" (2007). HELIN Task Force reports. Paper 2. http://helindigitalcommons.org/task/2 This Article is brought to you for free and open access by the HELIN Consortium at HELIN Digital Commons. It has been accepted for inclusion in HELIN Task Force reports by an authorized administrator of HELIN Digital Commons. For more information, please contact [email protected]. Committee Members: Susan McMullen (Chair, RWU); Dusty Haller (CCRI); Connie Cameron (PC); John Edwards (JWU); Tish Brennan (RIC); Mimi Keefe (URI); Olga Verbeek (Salve); Colleen Anderson (Bryant); TJ Sondermann (Wheaton). Background: In Spring 2006, the Federated Search Task Force was formed as a working group of the HELIN Reference Committee. Our charge was to investigate and report on Federated Search Engines. A federated search engine allows users to search multiple databases simultaneously. The databases to be searched are pre-determined by librarians to correlate with their individual library database subscriptions. It was generally agreed by consortium members that any product that required HELIN or an individual institution to configure its own server would not be feasible given staffing and budget constraints.

Federated Search and Recommendation

Federated Search and Recommendation. Dileepa Jayakody, Nirojan Selvanathan and Violeta Damjanovic-Behrendt (Salzburg Research, Jakob Haringer Strasse 5/II, 5020 Salzburg, Austria). Abstract: This paper presents an index-time merging approach to federated search designed for a European Connected Factory Platform for Agile Manufacturing (EFPF) project and its federated digital ecosystem. The presented approach supports search and recommendations for products, services as well as suitable business partners for the creation of networks within the business ecosystem. We discuss the overall architecture of the search and recommendation system, major challenges and the current state of development. Keywords: Federated search, recommendation, digital platforms, platform search. 1. Introduction: Federated search systems emerged in the late 1990s in order to support library users to simultaneously search multiple library sources and catalogues [1]. Early federated search systems were designed to collect search criteria from users, submit search queries to sources to be retrieved, wait for a response to arrive, and filter and organize the query results before sending the results back to the users. For example, Windows 7 services differentiate between two types of search, Windows Search and Federated Search [2]. Windows Search enables searching of the entire local system and a limited amount of external resources such as shared drives. Likewise, the selection-based search in MacOS Spotlight requires an index of all items and files that exist in the system to be created. By contrast, Federated Search enables the simultaneous search of multiple sources, including document repositories, databases, remote repositories and external Web sites. To enable federated features for websites to be effectively searched by the user, search connectors need to be implemented and configured for each website [2].

Search Insights 2021

Search Insights 2021. The Search Network, March 2021. Contents: Introduction; The challenge of information quality, Agnes Molnar; The art and craft of search auto-suggest, Avi Rappoport; The enterprise search user experience, Martin White; Searching fast and slow, Tony Russell-Rose; Reinventing a neglected taxonomy, Helen Lippell; Ecommerce site search is broken: how to fix it with open source software, Charlie Hull & Eric Pugh; The data-driven search team, Max Irwin; Search resources: books and blogs; Enterprise search vendors; Glossary. This work is licensed under the Creative Commons Attribution 2.0 UK: England & Wales License. To view a copy of this license, visit https://creativecommons.org/licenses/by/2.0/uk/ or send a letter to Creative Commons, PO Box 1866, Mountain View, CA 94042, USA. Editorial services provided by Val Skelton. Design & Production by Simon Flegg - Hub Graphics Ltd (www.hubgraphics.co.uk). Introduction: Successful search implementations are not just about choosing the best technology. Search is not a product or a project. It requires an on-going commitment to support changing user and business requirements and to take advantage of enhancements in technology. The Search Network is a community of expertise. It was set up in October 2017 by a group of eight search implementation specialists working in Europe and North America. There are now eleven members spanning the world from Singapore to San Francisco. We have known each other for at least a decade and share a common passion for search that delivers business value through providing employees with access to information and knowledge that enables them to make decisions that benefit the organisation and their personal career objectives.