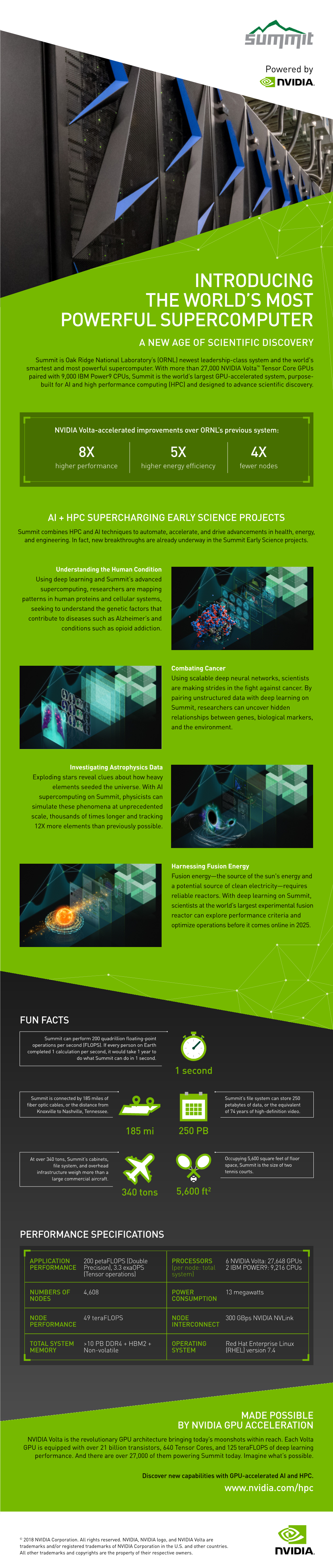

Introducing the World's Most Powerful Supercomputer: Summit

Total Pages: 16

File Type: PDF, Size: 1020 KB

Recommended publications

NVIDIA GEFORCE GT 630 Step up to Better Video, Photo, Game, and Web Performance

NVIDIA GEFORCE GT 630. Step up to better video, photo, game, and web performance. Every PC deserves dedicated graphics. The NVIDIA® GeForce® GT 630 graphics card delivers a premium multimedia experience and reliable gaming, every time. Insist on NVIDIA dedicated graphics for the faster, more immersive performance your customers want. “Sixty percent of integrated graphics owners want dedicated graphics in their next PC¹”. BEST-IN-CLASS PERFORMANCE: Deliver more performance, and fun, with amazing HD video, photo, web, and gaming. Accelerate performance by up to 5x over today’s integrated graphics solutions² and provide additional dedicated memory. Give customers the freedom to connect their PC to any 3D-enabled TV for a rich, cinematic Blu-ray 3D experience in their homes⁴. GEFORCE GT 630 TECHNICAL SPECIFICATIONS: CUDA CORES: 96; GRAPHICS CLOCK: 810 MHz; PROCESSOR CLOCK: 1620 MHz; MEMORY INTERFACE: 128 Bit; FRAME BUFFER: 512/1024 MB GDDR5 or 1024 MB DDR3; MICROSOFT DIRECTX: 11; MICROSOFT DIRECTCOMPUTE: Yes; BLU-RAY 3D⁴: Yes; TRUEHD AND DTS-HD AUDIO BITSTREAMING: Yes; NVIDIA PHYSX™-READY: Yes; MAX. ANALOG / DIGITAL RESOLUTION: 2048x1536 (analog) / 2560x1600 (digital); DISPLAY CONNECTORS: Dual Link DVI, HDMI, VGA. NVIDIA GEFORCE GT 630 | SELLSHEET | MAY 12. NVIDIA® GEFORCE® GT 630 Features and Benefits. HD VIDEOS: Get stunning picture clarity, smooth video, accurate color, and precise image scaling for movies and video with NVIDIA PureVideo® HD technology. WEB ACCELERATION: Enjoy a 2x faster web experience with the latest generation of GPU-accelerated web browsers (Internet Explorer, Google Chrome, and Firefox)⁵. PHOTO EDITING: Perfect and share your photos in half the time with popular apps like Adobe® Photoshop® and Nik Silver EFX Pro 2⁵.

NVIDIA Tesla Personal Supercomputer, Please Visit

NVIDIA TESLA PERSONAL SUPERCOMPUTER. TESLA DATASHEET. Get your own supercomputer. Experience cluster level computing performance, up to 250 times faster than standard PCs and workstations, right at your desk. The NVIDIA® Tesla™ Personal Supercomputer is based on the revolutionary NVIDIA CUDA™ parallel computing architecture and powered by up to 960 parallel processing cores. TESLA C1060 COMPUTING PROCESSORS ARE THE CORE OF THE TESLA PERSONAL SUPERCOMPUTER. YOUR OWN SUPERCOMPUTER: Get nearly 4 teraflops of compute capability and the ability to perform computations 250 times faster than a multi-CPU core PC or workstation. NVIDIA CUDA UNLOCKS THE POWER OF GPU PARALLEL COMPUTING: The CUDA™ parallel computing architecture enables developers to utilize C programming with NVIDIA GPUs to run the most complex computationally-intensive applications. CUDA is easy to learn and has become widely adopted by thousands of application developers worldwide to accelerate the most performance demanding applications. ACCESSIBLE TO EVERYONE: Available from OEMs and resellers worldwide, the Tesla Personal Supercomputer operates quietly and plugs into a standard power strip so you can take advantage of cluster level performance anytime you want, right from your desk. TESLA PERSONAL SUPERCOMPUTER | DATASHEET | MAR09 | FINAL. FEATURES AND BENEFITS. Your Own Supercomputer: Dedicated computing resource for every computational researcher and technical professional. Cluster Performance on Your Desktop: The performance of a cluster in a desktop system. Four Tesla computing processors deliver nearly 4 teraflops of performance. Designed for Office Use: Plugs into a standard office power socket and quiet enough for use at your desk. Massively Parallel Many Core GPU Architecture: 240 parallel processor cores per GPU that can execute thousands of concurrent threads.

Data Sheet: Quadro GV100

REINVENTING THE WORKSTATION WITH REAL-TIME RAY TRACING AND AI. NVIDIA QUADRO GV100. The Power To Accelerate AI-Enhanced Workflows. The NVIDIA® Quadro® GV100 reinvents the workstation to meet the demands of AI-enhanced design and visualization workflows. It’s powered by NVIDIA Volta, delivering extreme memory capacity, scalability, and performance that designers, architects, and scientists need to create, build, and solve the impossible. Supercharge Rendering with AI > Work with full fidelity, massive datasets > Enjoy fluid visual interactivity with AI-accelerated denoising. Bring Optimal Designs to Market Faster > Work with higher fidelity CAE simulation models > Explore more design options with faster solver performance. Enjoy Ultimate Immersive Experiences > Work with complex, photoreal datasets in VR > Enjoy optimal NVIDIA Holodeck experience. FEATURES > Four DisplayPort 1.4 Connectors³ > DisplayPort with Audio > 3D Stereo Support with Stereo Connector³ > NVIDIA GPUDirect™ Support > NVIDIA NVLink Support¹ > Quadro Sync II⁴ Compatibility > NVIDIA nView® Desktop Management Software > HDCP 2.2 Support > NVIDIA Mosaic⁵ > Dedicated hardware video encode and decode engines⁶. SPECIFICATIONS: GPU Memory: 32 GB HBM2; Memory Interface: 4096-bit; Memory Bandwidth: Up to 870 GB/s; ECC: Yes; NVIDIA CUDA Cores: 5,120; NVIDIA Tensor Cores: 640; Double-Precision Performance: 7.4 TFLOPS; Single-Precision Performance: 14.8 TFLOPS; Tensor Performance: 118.5 TFLOPS; NVIDIA NVLink: Connects 2 Quadro GV100 GPUs²; NVIDIA NVLink bandwidth: 200 GB/s. Realize New Opportunities with AI

Science-Driven Development of Supercomputer Fugaku

Special Contribution. Science-driven Development of Supercomputer Fugaku. Hirofumi Tomita, Flagship 2020 Project, Team Leader, RIKEN Center for Computational Science. In this article, I would like to take a historical look at activities on the application side during supercomputer Fugaku’s climb to the top of supercomputer rankings as seen from a vantage point in early July 2020. I would like to describe, in particular, the change in mindset that took place on the application side along the path toward Fugaku’s top ranking in four benchmarks in 2020 that all began with the Application Working Group and Computer Architecture/Compiler/System Software Working Group in 2011. Somewhere along this path, the application side joined forces with the architecture/system side based on the keyword “co-design.” During this journey, there were times when our opinions clashed and when efforts to solve problems came to a halt, but there were also times when we took bold steps in a unified manner to surmount difficult obstacles. I will leave the description of specific technical debates to other articles, but here, I would like to describe the flow of Fugaku development from the application side based heavily on a “science-driven” approach along with some of my personal opinions. Actually, we are only halfway along this path. We look forward to the many scientific achievements and solutions to social problems that Fugaku is expected to bring about. 1. Introduction. On June 22, 2020, the supercomputer Fugaku became the first supercomputer in history to simultaneously rank No. In this article, I would like to take a look back at the flow along the path toward Fugaku’s top ranking as seen from the application side from a vantage point

Download GTX 970 Driver

GeForce Windows 10 Driver. NVIDIA has been working closely with Microsoft on the development of Windows 10 and DirectX 12. Coinciding with the arrival of Windows 10, this Game Ready driver includes the latest tweaks, bug fixes, and optimizations to ensure you have the best possible gaming experience. Game Ready: Best gaming experience for Windows 10. GeForce GTX TITAN X, GeForce GTX TITAN, GeForce GTX TITAN Black, GeForce GTX TITAN Z. GeForce 900 Series: GeForce GTX 980 Ti, GeForce GTX 980, GeForce GTX 970, GeForce GTX 960. GeForce 700 Series: GeForce GTX 780 Ti, GeForce GTX 780, GeForce GTX 770, GeForce GTX 760, GeForce GTX 760 Ti (OEM), GeForce GTX 750 Ti, GeForce GTX 750, GeForce GTX 745, GeForce GT 740, GeForce GT 730, GeForce GT 720, GeForce GT 710, GeForce GT 705.

Datasheet Quadro K600

ACCELERATE YOUR CREATIVITY. NVIDIA® QUADRO® K620. Accelerate your creativity with NVIDIA® Quadro®, the world’s most powerful workstation graphics. The NVIDIA Quadro K620 offers impressive power-efficient 3D application performance and capability. 2 GB of DDR3 GPU memory with fast bandwidth enables you to create large, complex 3D models, and a flexible single-slot and low-profile form factor makes it compatible with even the most space and power-constrained chassis. Plus, an all-new display engine drives up to four displays with DisplayPort 1.2 support for ultra-high resolutions like 3840x2160 @ 60 Hz with 30-bit color. Quadro cards are certified with a broad range of sophisticated professional applications, tested by leading workstation manufacturers, and backed by a global team of support specialists, giving you the peace of mind to focus on doing your best work. Whether you’re developing revolutionary products or telling spectacularly vivid visual stories, Quadro gives you the performance to do it brilliantly. FEATURES > DisplayPort 1.2 Connector > DisplayPort with Audio > DVI-I Dual-Link Connector > VGA Support¹ > NVIDIA nView™ Desktop Management Software Compatibility > HDCP Support > NVIDIA Mosaic². SPECIFICATIONS: GPU Memory: 2 GB DDR3; Memory Interface: 128-bit; Memory Bandwidth: 29.0 GB/s; NVIDIA CUDA® Cores: 384; System Interface: PCI Express 2.0 x16; Max Power Consumption: 45 W; Thermal Solution: Ultra-Quiet Active Fansink; Form Factor: 2.713” H × 6.3” L, Single Slot, Low Profile; Display Connectors: DVI-I DL + DP 1.2; Max Simultaneous Displays: 2 direct, 4 DP 1.2 Multi-Stream; Max DP 1.2 Resolution: 3840 × 2160 at 60 Hz; Max DVI-I DL Resolution: 2560 × 1600 at 60 Hz; Max DVI-I SL Resolution: 1920 × 1200 at 60 Hz; Max VGA Resolution: 2048 × 1536 at 85 Hz; Graphics APIs: Shader Model 5.0, OpenGL 4.5³, DirectX 11.2⁴, Vulkan 1.0³; Compute APIs: CUDA, DirectCompute, OpenCL™. ¹ Via supplied adapter/connector/bracket | ² Windows 7, 8, 8.1 and Linux | ³ Product is based on a published Khronos Specification, and is expected to pass the Khronos Conformance Testing Process when available.

NVIDIA AI-on-5G Computing Platform Adopted by Leading Service and Network Infrastructure Providers

NVIDIA AI-on-5G Computing Platform Adopted by Leading Service and Network Infrastructure Providers. Fujitsu, Google Cloud, Mavenir, Radisys and Wind River to Deliver Solutions for Smart Hospitals, Factories, Warehouses and Stores. GTC -- NVIDIA today announced it is teaming with Fujitsu, Google Cloud, Mavenir, Radisys and Wind River to develop solutions for NVIDIA’s AI-on-5G platform, which will speed the creation of smart cities and factories, advanced hospitals and intelligent stores. Enterprises, mobile network operators and cloud service providers that deploy the platform will be able to handle both 5G and edge AI computing in a single, converged platform. The AI-on-5G platform leverages the NVIDIA Aerial™ software development kit with the NVIDIA BlueField®-2 A100, a converged card that combines GPUs and DPUs including NVIDIA’s “5T for 5G” solution. This creates high-performance 5G RAN and AI applications in an optimal platform to manage precision manufacturing robots, automated guided vehicles, drones, wireless cameras, self-checkout aisles and hundreds of other transformational projects. “In this era of continuous, accelerated computing, network operators are looking to take advantage of the security, low latency and ability to connect hundreds of devices on one node to deliver the widest range of services in ways that are cost-effective, flexible and efficient,” said Ronnie Vasishta, senior vice president of Telecom at NVIDIA. “With the support of key players in the 5G industry, we’re delivering on the promise of AI everywhere.” NVIDIA and several collaborators in this new AI-on-5G ecosystem are members of the O-RAN Alliance, which is developing standards for more intelligent, open, virtualized and fully interoperable mobile networks.

NVIDIA A100 Supercharges CFD with Altair and Google Cloud

NVIDIA A100 SUPERCHARGES CFD WITH ALTAIR AND GOOGLE CLOUD. Image courtesy of Altair. TAILORED FOR YOU > Get exactly as many GPUs as you need, for exactly as long as you need them, and pay just for that. FASTER OUT OF THE BOX > The new Ampere GPU architecture on Google Cloud A2 instances delivers unprecedented acceleration and flexibility to power the world’s highest-performing elastic data centers for AI, data analytics, and HPC applications. THINK THE UNTHINKABLE > With a total of up to 312 TFLOPS FP32 peak performance in the same node linked with NVLink technology, with an aggregate bandwidth of 9.6TB/s, new solutions to many tough challenges are within reach. Ultra-Fast CFD powered by Altair, Google Cloud, and NVIDIA: The newest A2 VM family on Google Cloud Platform was designed to meet today’s most demanding applications. With up to 16 NVIDIA® A100 Tensor Core GPUs in a node, each offering up to 20X the compute performance of the previous generation and a whopping total of up to 640GB of GPU memory, CFD simulations with Altair ultraFluidX™ were the ideal candidate to test the performance and benefits of the new normal. With ultraFluidX, highly resolved transient aerodynamics simulations can be performed on a single server. The Lattice Boltzmann Method is a perfect fit for the massively parallel architecture of NVIDIA GPUs, and sets the stage for unprecedented turnaround times. What used to be overnight runs on single nodes now becomes possible within working hours by utilizing state-of-the-art GPU-optimized algorithms, the new NVIDIA A100, and Google Cloud’s A2 VM family, while delivering the high

NVIDIA and Tech Leaders Team to Build GPU-Accelerated Arm Servers for New Era of Diverse HPC Architectures

NVIDIA and Tech Leaders Team to Build GPU-Accelerated Arm Servers for New Era of Diverse HPC Architectures. Arm, Ampere, Cray, Fujitsu, HPE, Marvell to Build NVIDIA GPU-Accelerated Servers for Hyperscale-Cloud to Edge, Simulation to AI, High-Performance Storage to Exascale Supercomputing. SC19 -- NVIDIA today introduced a reference design platform that enables companies to quickly build GPU-accelerated Arm®-based servers, driving a new era of high performance computing for a growing range of applications in science and industry. Announced by NVIDIA founder and CEO Jensen Huang at the SC19 supercomputing conference, the reference design platform -- consisting of hardware and software building blocks -- responds to growing demand in the HPC community to harness a broader range of CPU architectures. It allows supercomputing centers, hyperscale-cloud operators and enterprises to combine the advantage of NVIDIA's accelerated computing platform with the latest Arm-based server platforms. To build the reference platform, NVIDIA is teaming with Arm and its ecosystem partners -- including Ampere, Fujitsu and Marvell -- to ensure NVIDIA GPUs can work seamlessly with Arm-based processors. The reference platform also benefits from strong collaboration with Cray, a Hewlett Packard Enterprise company, and HPE, two early providers of Arm-based servers. Additionally, a wide range of HPC software companies have used NVIDIA CUDA-X™ libraries to build GPU-enabled management and monitoring tools that run on Arm-based servers. “There is a renaissance in high performance computing,” Huang said. “Breakthroughs in machine learning and AI are redefining scientific methods and enabling exciting opportunities for new architectures. Bringing NVIDIA GPUs to Arm opens the floodgates for innovators to create systems for growing new applications from hyperscale-cloud to exascale supercomputing and beyond.” Rene Haas, president of the IP Products Group at Arm, said: “Arm is working with ecosystem partners to deliver unprecedented performance and efficiency for exascale-class Arm-based SoCs.

The Sunway TaihuLight Supercomputer: System and Applications

SCIENCE CHINA Information Sciences . RESEARCH PAPER . July 2016, Vol. 59 072001:1–072001:16 doi: 10.1007/s11432-016-5588-7 The Sunway TaihuLight supercomputer: system and applications Haohuan FU1,3 , Junfeng LIAO1,2,3 , Jinzhe YANG2, Lanning WANG4 , Zhenya SONG6 , Xiaomeng HUANG1,3 , Chao YANG5, Wei XUE1,2,3 , Fangfang LIU5 , Fangli QIAO6 , Wei ZHAO6 , Xunqiang YIN6 , Chaofeng HOU7 , Chenglong ZHANG7, Wei GE7 , Jian ZHANG8, Yangang WANG8, Chunbo ZHOU8 & Guangwen YANG1,2,3* 1Ministry of Education Key Laboratory for Earth System Modeling, and Center for Earth System Science, Tsinghua University, Beijing 100084, China; 2Department of Computer Science and Technology, Tsinghua University, Beijing 100084, China; 3National Supercomputing Center in Wuxi, Wuxi 214072, China; 4College of Global Change and Earth System Science, Beijing Normal University, Beijing 100875, China; 5Institute of Software, Chinese Academy of Sciences, Beijing 100190, China; 6First Institute of Oceanography, State Oceanic Administration, Qingdao 266061, China; 7Institute of Process Engineering, Chinese Academy of Sciences, Beijing 100190, China; 8Computer Network Information Center, Chinese Academy of Sciences, Beijing 100190, China Received May 27, 2016; accepted June 11, 2016; published online June 21, 2016 Abstract The Sunway TaihuLight supercomputer is the world’s first system with a peak performance greater than 100 PFlops. In this paper, we provide a detailed introduction to the TaihuLight system. In contrast with other existing heterogeneous supercomputers, which include both CPU processors and PCIe-connected many-core accelerators (NVIDIA GPU or Intel Xeon Phi), the computing power of TaihuLight is provided by a homegrown many-core SW26010 CPU that includes both the management processing elements (MPEs) and computing processing elements (CPEs) in one chip.

Scheduling Many-Task Workloads on Supercomputers: Dealing with Trailing Tasks

Scheduling Many-Task Workloads on Supercomputers: Dealing with Trailing Tasks. Timothy G. Armstrong, Zhao Zhang (Department of Computer Science, University of Chicago); Daniel S. Katz, Michael Wilde, Ian T. Foster (Computation Institute, University of Chicago & Argonne National Laboratory). Abstract: In order for many-task applications to be attractive candidates for running on high-end supercomputers, they must be able to benefit from the additional compute, I/O, and communication performance provided by high-end HPC hardware relative to clusters, grids, or clouds. Typically this means that the application should use the HPC resource in such a way that it can reduce time to solution beyond what is possible otherwise. Furthermore, it is necessary to make efficient use of the computational resources, achieving high levels of utilization. Satisfying these twin goals is not trivial, because while the parallelism in many task computations can vary over time, on many large machines the allocation policy requires that worker CPUs be provisioned and also relinquished in large blocks rather than individually. as a worker and allocate one task per node. If tasks are single-threaded, each core or virtual thread can be treated as a worker. The second feature of many-task applications is an emphasis on high performance. The many tasks that make up the application effectively collaborate to produce some result, and in many cases it is important to get the results quickly. This feature motivates the development of techniques to efficiently run many-task applications on HPC hardware. It allows people to design and develop performance-critical applications in a many-task style and enables the scaling up of existing many-task applications to run on much larger

Providing a Robust Tools Landscape for CORAL Machines

Providing a Robust Tools Landscape for CORAL Machines. 4th Workshop on Extreme Scale Programming Tools @ SC15 in Austin, Texas, November 16, 2015. Dong H. Ahn (Lawrence Livermore National Lab), Michael Brim (Oak Ridge National Lab), Scott Parker (Argonne National Lab), Gregory Watson (IBM), Wesley Bland (Intel). CORAL: Collaboration of ORNL, ANL, LLNL. Objective - Procure 3 leadership computers to be sited at Argonne, Oak Ridge and Lawrence Livermore in 2017. Current DOE Leadership Computers: Titan (ORNL) 2012 - 2017, Sequoia (LLNL) 2012 - 2017, Mira (ANL) 2012 - 2017. Leadership Computers - RFP requests >100 PF, 2 GiB/core main memory, local NVRAM, and science performance 4x-8x Titan or Sequoia. Approach • Competitive process - one RFP (issued by LLNL) leading to 2 R&D contracts and 3 computer procurement contracts • For risk reduction and to meet a broad set of requirements, 2 architectural paths will be selected and Oak Ridge and Argonne must choose different architectures • Once selected, multi-year Lab-Awardee relationship to co-design computers • Both R&D contracts jointly managed by the 3 Labs • Each lab manages and negotiates its own computer procurement contract, and may exercise options to meet their specific needs. CORAL Innovations and Value: IBM, Mellanox, and NVIDIA Awarded $325M U.S. Department of Energy’s CORAL Contracts • Scalable system solution – scale up, scale down – to address a wide range of application domains • Modular, flexible, cost-effective, • Directly leverages OpenPOWER partnerships and IBM’s Power system roadmap • Air and water cooling • Heterogeneous