Common Probability Distributions

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

The Beta-Binomial Distribution Introduction Bayesian Derivation

In Danish: 2005-09-19 / SLB. Translated: 2008-05-28 / SLB. Bayesian Statistics, Simulation and Software. The beta-binomial distribution.

I have translated this document, written for another course in Danish, almost as is. I have kept the references to Lee, the textbook used for that course.

Introduction. In Lee: Bayesian Statistics, the beta-binomial distribution is mentioned only briefly as the predictive distribution for the binomial distribution, given the conjugate prior distribution, the beta distribution. (In Lee, see pp. 78, 214, 156.) Here we shall treat it in slightly more depth, partly because it emerges in the WinBUGS example in Lee §9.7, and partly because it may be useful for your project work.

Bayesian Derivation. We make $n$ independent Bernoulli trials (0-1 trials) with probability parameter $\pi$. It is well known that the number of successes $x$ has the binomial distribution. Considering the probability parameter $\pi$ unknown (but of course the sample-size parameter $n$ is known), we have

$$x \mid \pi \sim \mathrm{bin}(n, \pi),$$

where in generic notation (footnote 1)

$$p(x \mid \pi) = \binom{n}{x} \pi^{x} (1 - \pi)^{n - x}, \qquad x = 0, 1, \ldots, n.$$

We assume as prior distribution for $\pi$ a beta distribution, i.e. $\pi \sim \mathrm{beta}(\alpha, \beta)$, with density function

$$p(\pi) = \frac{1}{B(\alpha, \beta)} \pi^{\alpha - 1} (1 - \pi)^{\beta - 1}, \qquad 0 < \pi < 1.$$

I remind you that the beta function can be expressed by the gamma function:

$$B(\alpha, \beta) = \frac{\Gamma(\alpha)\Gamma(\beta)}{\Gamma(\alpha + \beta)}. \qquad (1)$$

In Lee, §3.1, it is shown that the posterior distribution is a beta distribution as well,

$$\pi \mid x \sim \mathrm{beta}(\alpha + x, \beta + n - x).$$

(Because of this result we say that the beta distribution is the conjugate distribution to the binomial distribution.) We shall now derive the predictive distribution, that is, find $p(x)$.

Footnote 1: By this we mean that $p$ is not a fixed function, but denotes the density function (in the discrete case also called the probability function) for the random variable whose value is the argument of $p$.
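The conjugate update and equation (1) quoted in this excerpt can be sketched in a few lines of Python. This is only an illustration, not code from the excerpted document: the function names `beta_function` and `posterior_parameters` and the numeric values are hypothetical, chosen to show the arithmetic.

```python
from math import gamma


def beta_function(a: float, b: float) -> float:
    """B(a, b) = Gamma(a) * Gamma(b) / Gamma(a + b), as in equation (1)."""
    return gamma(a) * gamma(b) / gamma(a + b)


def posterior_parameters(alpha: float, beta: float, n: int, x: int):
    """Conjugate update: a beta(alpha, beta) prior combined with x successes
    in n Bernoulli trials gives a beta(alpha + x, beta + n - x) posterior."""
    if not 0 <= x <= n:
        raise ValueError("x must satisfy 0 <= x <= n")
    return alpha + x, beta + n - x


# Hypothetical prior and data, used only for illustration.
print(beta_function(2, 3))
print(posterior_parameters(2, 3, n=10, x=4))
```

The update only adds the observed successes and failures to the prior parameters, which is what makes the beta prior convenient for binomial data.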

9. Binomial Distribution

J. K. SHAH CLASSES: Binomial Distribution

9. Binomial Distribution

THEORETICAL DISTRIBUTION (exists only in theory)

1. A theoretical distribution is a distribution where the variables are distributed according to some definite mathematical laws.
2. In other words, theoretical distributions are mathematical models, where the frequencies / probabilities are calculated by mathematical computation.
3. A theoretical distribution is also called an Expected Variance Distribution or Frequency Distribution.
4. Classification of theoretical distributions:
   - Discrete: Binomial distribution, Poisson distribution
   - Continuous: Normal (Gaussian) distribution, Student's t distribution, Chi-square distribution, Snedecor's F distribution

A. Binomial Distribution (Bernoulli Distribution)

1. This distribution is a discrete probability distribution where the variable x can assume only discrete values, i.e. x = 0, 1, 2, 3, ..., n.
2. This distribution is derived from a special type of random experiment known as a Bernoulli experiment or Bernoulli trials, which has the following characteristics:
   (i) Each trial must be associated with two mutually exclusive and exhaustive outcomes, SUCCESS and FAILURE. Usually the probability of success is denoted by p and that of failure by q, where q = 1 - p and therefore p + q = 1.
   (ii) The trials must be independent under identical conditions.
   (iii) The number of trials must be finite (countably finite).
   (iv) The probabilities of success and failure remain unchanged throughout the process.

   Note 1: A trial is an attempt to produce an outcome which is neither sure nor impossible in nature.
   Note 2: The conditions mentioned may also be treated as the conditions for the binomial distribution.
3. Characteristics or Properties of Binomial Distribution
   (i) It is a bi-parametric distribution, i.e.

Chapter 6 Continuous Random Variables and Probability

EF 507 QUANTITATIVE METHODS FOR ECONOMICS AND FINANCE, FALL 2019. Chapter 6: Continuous Random Variables and Probability Distributions

Probability Distributions
• Ch. 5, discrete probability distributions: Binomial, Hypergeometric, Poisson
• Ch. 6, continuous probability distributions: Uniform, Normal, Exponential

Continuous Probability Distributions
• A continuous random variable is a variable that can assume any value in an interval:
  • thickness of an item
  • time required to complete a task
  • temperature of a solution
  • height in inches
• These can potentially take on any value, depending only on the ability to measure accurately.

Cumulative Distribution Function
• The cumulative distribution function, $F(x)$, for a continuous random variable $X$ expresses the probability that $X$ does not exceed the value of $x$: $F(x) = P(X \le x)$.
• Let $a$ and $b$ be two possible values of $X$, with $a < b$. The probability that $X$ lies between $a$ and $b$ is $P(a < X < b) = F(b) - F(a)$.

Probability Density Function
The probability density function, $f(x)$, of random variable $X$ has the following properties:
1. $f(x) > 0$ for all values of $x$.
2. The area under the probability density function $f(x)$ over all values of the random variable $X$ is equal to 1.0.
3. The probability that $X$ lies between two values is the area under the density function graph between the two values.
4. The cumulative distribution function $F(x_0)$ is the area under the probability density function $f(x)$ from the minimum $x$ value up to $x_0$:

$$F(x_0) = \int_{x_m}^{x_0} f(x)\,dx$$

where
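The rule P(a < X < b) = F(b) - F(a) from this excerpt can be illustrated with a short Python sketch. The uniform distribution on [0, 10] and the helper names `uniform_cdf` and `interval_probability` are assumptions made for the example; they do not come from the slides.

```python
def uniform_cdf(x: float, low: float, high: float) -> float:
    """CDF of a continuous uniform distribution on [low, high]."""
    if x <= low:
        return 0.0
    if x >= high:
        return 1.0
    return (x - low) / (high - low)


def interval_probability(cdf, a: float, b: float) -> float:
    """P(a < X < b) = F(b) - F(a) for a continuous random variable."""
    if a > b:
        raise ValueError("a must not exceed b")
    return cdf(b) - cdf(a)


# Hypothetical example: X uniform on [0, 10], probability that 2 < X < 5.
prob = interval_probability(lambda x: uniform_cdf(x, 0.0, 10.0), 2.0, 5.0)
print(prob)
```

Because the variable is continuous, the endpoints carry no probability mass, so the same difference also gives P(a ≤ X ≤ b).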

A Matrix-Valued Bernoulli Distribution Gianfranco Lovison1 Dipartimento Di Scienze Statistiche E Matematiche “S

Journal of Multivariate Analysis 97 (2006) 1573–1585. www.elsevier.com/locate/jmva

A matrix-valued Bernoulli distribution. Gianfranco Lovison (1), Dipartimento di Scienze Statistiche e Matematiche “S. Vianelli”, Università di Palermo, Viale delle Scienze, 90128 Palermo, Italy. Received 17 September 2004. Available online 10 August 2005.

Abstract. Matrix-valued distributions are used in continuous multivariate analysis to model sample data matrices of continuous measurements; their use seems to be neglected for binary, or more generally categorical, data. In this paper we propose a matrix-valued Bernoulli distribution, based on the log-linear representation introduced by Cox [The analysis of multivariate binary data, Appl. Statist. 21 (1972) 113–120] for the Multivariate Bernoulli distribution with correlated components. © 2005 Elsevier Inc. All rights reserved.

AMS 1991 subject classification: 62E05; 62Hxx. Keywords: Correlated multivariate binary responses; Multivariate Bernoulli distribution; Matrix-valued distributions.

1. Introduction. Matrix-valued distributions are used in continuous multivariate analysis (see, for example, [10]) to model sample data matrices of continuous measurements, allowing for both variable-dependence and unit-dependence. Their potentials seem to have been neglected for binary, and more generally categorical, data. This is somewhat surprising, since the natural, elementary representation of datasets with categorical variables is precisely in the form of sample binary data matrices, through the 0-1 coding of categories.

E-mail address: [email protected] URL: http://dssm.unipa.it/lovison. (1) Work supported by MIUR 60% 2000, 2001 and MIUR P.R.I.N. 2002 grants. 0047-259X/$ - see front matter © 2005 Elsevier Inc. All rights reserved. doi:10.1016/j.jmva.2005.06.008

3.2.3 Binomial Distribution

The binomial distribution is based on the idea of a Bernoulli trial. A Bernoulli trial is an experiment with two, and only two, possible outcomes. A random variable $X$ has a Bernoulli($p$) distribution if

$$X = \begin{cases} 1 & \text{with probability } p \\ 0 & \text{with probability } 1 - p, \end{cases}$$

where $0 \le p \le 1$. The value $X = 1$ is often termed a “success” and $X = 0$ is termed a “failure”. The mean and variance of a Bernoulli($p$) random variable are easily seen to be

$$EX = (1)(p) + (0)(1 - p) = p$$

and

$$\operatorname{Var} X = (1 - p)^2 p + (0 - p)^2 (1 - p) = p(1 - p).$$

In a sequence of $n$ identical, independent Bernoulli trials, each with success probability $p$, define the random variables $X_1, \ldots, X_n$ by

$$X_i = \begin{cases} 1 & \text{with probability } p \\ 0 & \text{with probability } 1 - p. \end{cases}$$

The random variable

$$Y = \sum_{i=1}^{n} X_i$$

has the binomial distribution, and it is the number of successes among $n$ independent trials. The probability mass function of $Y$ is

$$P(Y = y) = \binom{n}{y} p^{y} (1 - p)^{n - y}.$$

For this distribution,

$$EY = np, \qquad \operatorname{Var}(Y) = np(1 - p), \qquad M_Y(t) = [p e^{t} + (1 - p)]^{n}.$$

Theorem 3.2.2 (Binomial theorem) For any real numbers $x$ and $y$ and integer $n \ge 0$,

$$(x + y)^n = \sum_{i=0}^{n} \binom{n}{i} x^{i} y^{n - i}.$$

If we take $x = p$ and $y = 1 - p$, we get

$$1 = (p + (1 - p))^n = \sum_{i=0}^{n} \binom{n}{i} p^{i} (1 - p)^{n - i}.$$

Example 3.2.2 (Dice probabilities) Suppose we are interested in finding the probability of obtaining at least one 6 in four rolls of a fair die.
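As a numerical check on the formulas in this excerpt, the following Python sketch evaluates the binomial probability mass function and confirms that it sums to 1 and has mean np. The helper name `binomial_pmf` is hypothetical. Treating the dice example as a binomial with n = 4 and p = 1/6, and computing "at least one 6" as 1 - P(Y = 0), is one natural reading of the truncated example rather than the excerpt's own worked solution.

```python
from math import comb


def binomial_pmf(y: int, n: int, p: float) -> float:
    """P(Y = y) = C(n, y) * p**y * (1 - p)**(n - y)."""
    return comb(n, y) * p**y * (1 - p) ** (n - y)


# Assumed setting for illustration: four rolls of a fair die, success = rolling a 6.
n, p = 4, 1 / 6
pmf = [binomial_pmf(y, n, p) for y in range(n + 1)]

total = sum(pmf)                                  # binomial theorem: sums to 1
mean = sum(y * prob for y, prob in enumerate(pmf))  # should equal n * p
at_least_one_six = 1 - pmf[0]                     # complement of "no sixes"

print(total, mean, at_least_one_six)
```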

Solutions to the Exercises

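Chapter 1

Solution 1.1

(a) Your computer may be programmed to allocate borderline cases to the next group down, or the next group up; and it may or may not manage to follow this rule consistently, depending on its handling of the numbers involved. Following a rule which says 'move borderline cases to the next group up', these are the five classifications.

(i)

| Interval | 1.0-1.2 | 1.2-1.4 | 1.4-1.6 | 1.6-1.8 | 1.8-2.0 | 2.0-2.2 | 2.2-2.4 | 2.4-2.6 | 2.6-2.8 | 2.8-3.0 | 3.0-3.2 | 3.2-3.4 | 3.4-3.6 | 3.6-3.8 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Frequency | 6 | 6 | 4 | 8 | 4 | 3 | 4 | 6 | 3 | 2 | 2 | 0 | 1 | 1 |

(ii)

| Interval | 1.0-1.3 | 1.3-1.6 | 1.6-1.9 | 1.9-2.2 | 2.2-2.5 | 2.5-2.8 | 2.8-3.1 | 3.1-3.4 | 3.4-3.7 |
|---|---|---|---|---|---|---|---|---|---|
| Frequency | 10 | 6 | 10 | 5 | 6 | 7 | 3 | 1 | 2 |

(iii)

| Interval | 0.8-1.1 | 1.1-1.4 | 1.4-1.7 | 1.7-2.0 | 2.0-2.3 | 2.3-2.6 | 2.6-2.9 | 2.9-3.2 | 3.2-3.5 | 3.5-3.8 |
|---|---|---|---|---|---|---|---|---|---|---|
| Frequency | 2 | 10 | 6 | 10 | 7 | 6 | 4 | 3 | 1 | 1 |

(iv)

| Interval | 0.85-1.15 | 1.15-1.45 | 1.45-1.75 | 1.75-2.05 | 2.05-2.35 | 2.35-2.65 | 2.65-2.95 | 2.95-3.25 | 3.25-3.55 | 3.55-3.85 |
|---|---|---|---|---|---|---|---|---|---|---|
| Frequency | 4 | 9 | 8 | 9 | 5 | 7 | 3 | 3 | 1 | 1 |

(v)

| Interval | 0.9-1.2 | 1.2-1.5 | 1.5-1.8 | 1.8-2.1 | 2.1-2.4 | 2.4-2.7 | 2.7-3.0 | 3.0-3.3 | 3.3-3.6 | 3.6-3.9 |
|---|---|---|---|---|---|---|---|---|---|---|
| Frequency | 6 | 7 | 11 | 7 | 4 | 7 | 4 | 2 | 1 | 1 |

(b) Computer graphics: the diagrams are shown in Figures 1.9 to 1.11.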

Discrete Probability Distributions Uniform Distribution Bernoulli

Uniform Distribution
- Experiment obeys: all outcomes equally probable
- Random variable: outcome
- Probability distribution: if $k$ is the number of possible outcomes, $p(x) = 1/k$ if $x$ is a possible outcome, and $p(x) = 0$ otherwise
- Example: tossing a fair die ($k = 6$)

Bernoulli Distribution
- Experiment obeys: (1) a single trial with two possible outcomes (success and failure); (2) $P(\text{trial is successful}) = p$
- Random variable: number of successful trials (zero or one)
- Probability distribution: $p(x) = p^{x} (1 - p)^{1 - x}$ for $x \in \{0, 1\}$
- Mean and variance: $\mu = p$, $\sigma^2 = p(1 - p)$
- Example: tossing a fair coin once

Binomial Distribution
- Experiment obeys: (1) $n$ repeated trials; (2) each trial has two possible outcomes (success and failure); (3) $P(i\text{th trial is successful}) = p$ for all $i$; (4) the trials are independent
- Random variable: number of successful trials
- Probability distribution: $b(x; n, p) = \binom{n}{x} p^{x} (1 - p)^{n - x}$
- Mean and variance: $\mu = np$, $\sigma^2 = np(1 - p)$
- Example: tossing a fair coin $n$ times
- Approximations: (1) $b(x; n, p) \approx p(x; \lambda = pn)$ if $p \ll 1$, $x \ll n$ (Poisson approximation); (2) $b(x; n, p) \approx n(x; \mu = pn, \sigma = \sqrt{np(1 - p)})$ if $np \gg 1$, $n(1 - p) \gg 1$ (Normal approximation)

Geometric Distribution
- Experiment obeys: (1) indeterminate number of repeated trials; (2) each trial has two possible outcomes (success and failure); (3) $P(i\text{th trial is successful}) = p$ for all $i$; (4) the trials are independent
- Random variable: trial number of first successful trial
- Probability distribution: $p(x) = p(1 - p)^{x - 1}$
- Mean and variance: $\mu = \frac{1}{p}$, $\sigma^2 = \frac{1 - p}{p^2}$
- Example: repeated attempts to start
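The Poisson approximation listed under the binomial distribution can be checked numerically. The sketch below compares the two probability functions for a small p and small x. The values n = 1000 and p = 0.002 and the function names are hypothetical, chosen only so that the conditions p ≪ 1 and x ≪ n hold.

```python
from math import comb, exp, factorial


def binomial_pmf(x: int, n: int, p: float) -> float:
    """b(x; n, p) = C(n, x) * p**x * (1 - p)**(n - x)."""
    return comb(n, x) * p**x * (1 - p) ** (n - x)


def poisson_pmf(x: int, lam: float) -> float:
    """p(x; lambda) = exp(-lambda) * lambda**x / x!."""
    return exp(-lam) * lam**x / factorial(x)


# Hypothetical parameters with p << 1; only small x (x << n) is compared.
n, p = 1000, 0.002
lam = n * p

max_gap = max(abs(binomial_pmf(x, n, p) - poisson_pmf(x, lam)) for x in range(11))
print(max_gap)
```

With these values the two probability functions agree closely over the compared range; the gap grows as p gets larger for a fixed n.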

STATS8: Introduction to Biostatistics Random Variables And

STATS8: Introduction to Biostatistics. Random Variables and Probability Distributions. Babak Shahbaba, Department of Statistics, UCI

Random variables
• In this lecture, we will discuss random variables and their probability distributions.
• Formally, a random variable $X$ assigns a numerical value to each possible outcome (and event) of a random phenomenon.
• For instance, we can define $X$ based on possible genotypes of a bi-allelic gene A as follows:

$$X = \begin{cases} 0 & \text{for genotype AA,} \\ 1 & \text{for genotype Aa,} \\ 2 & \text{for genotype aa.} \end{cases}$$

• Alternatively, we can define a random variable, $Y$, this way:

$$Y = \begin{cases} 0 & \text{for genotypes AA and aa,} \\ 1 & \text{for genotype Aa.} \end{cases}$$

• After we define a random variable, we can find the probabilities for its possible values based on the probabilities for its underlying random phenomenon.
• This way, instead of talking about the probabilities for different outcomes and events, we can talk about the probability of different values for a random variable.
• For example, suppose $P(\text{AA}) = 0.49$, $P(\text{Aa}) = 0.42$, and $P(\text{aa}) = 0.09$.
• Then, we can say that $P(X = 0) = 0.49$, i.e., $X$ is equal to 0 with probability 0.49.
• Note that the total probability for the random variable is still 1.
• The probability distribution of a random variable specifies its possible values (i.e., its range) and their corresponding probabilities.
• For the random variable $X$ defined based on genotypes, the probability distribution can be simply specified as follows:

$$P(X = x) = \begin{cases} 0.49 & \text{for } x = 0, \\ 0.42 & \text{for } x = 1, \\ 0.09 & \text{for } x = 2. \end{cases}$$

Here, $x$ denotes a specific value (i.e., 0, 1, or 2) of the random variable.
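The step from outcome probabilities to the distribution of a random variable can be sketched in Python using the genotype probabilities quoted in this excerpt. The dictionary layout and the helper name `distribution` are assumptions made for the illustration.

```python
from collections import defaultdict

# Genotype probabilities as given in the excerpt.
genotype_probs = {"AA": 0.49, "Aa": 0.42, "aa": 0.09}

# The two random variables, written as mappings from outcome to value.
x_of = {"AA": 0, "Aa": 1, "aa": 2}
y_of = {"AA": 0, "Aa": 1, "aa": 0}


def distribution(outcome_probs: dict, variable: dict) -> dict:
    """Sum the outcome probabilities that map to each value of the variable."""
    dist = defaultdict(float)
    for outcome, prob in outcome_probs.items():
        dist[variable[outcome]] += prob
    return dict(dist)


dist_x = distribution(genotype_probs, x_of)
dist_y = distribution(genotype_probs, y_of)
print(dist_x)
print(dist_y)
```

For Y, the genotypes AA and aa both map to 0, so their probabilities are added together, and the total probability is still 1.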

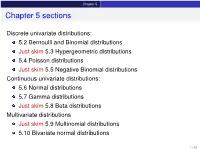

Chapter 5 Sections

Chapter 5 sections

Discrete univariate distributions:
- 5.2 Bernoulli and Binomial distributions
- 5.3 Hypergeometric distributions (just skim)
- 5.4 Poisson distributions
- 5.5 Negative Binomial distributions (just skim)

Continuous univariate distributions:
- 5.6 Normal distributions
- 5.7 Gamma distributions
- 5.8 Beta distributions (just skim)

Multivariate distributions:
- 5.9 Multinomial distributions (just skim)
- 5.10 Bivariate normal distributions

5.1 Introduction: Families of distributions

How:
- Parameter and parameter space
- pf / pdf and cdf; new notation: $f(x \mid \text{parameters})$
- Mean, variance and the m.g.f. $\psi(t)$
- Features, connections to other distributions, approximation
- Reasoning behind a distribution

Why:
- Natural justification for certain experiments
- A model for the uncertainty in an experiment

All models are wrong, but some are useful – George Box

5.2 Bernoulli and Binomial distributions: Bernoulli distributions

Def: Bernoulli distributions – Bernoulli($p$). A r.v. $X$ has the Bernoulli distribution with parameter $p$ if $P(X = 1) = p$ and $P(X = 0) = 1 - p$. The pf of $X$ is

$$f(x \mid p) = \begin{cases} p^{x} (1 - p)^{1 - x} & \text{for } x = 0, 1 \\ 0 & \text{otherwise} \end{cases}$$

Parameter space: $p \in [0, 1]$.

In an experiment with only two possible outcomes, “success” and “failure”, let $X$ = number of successes. Then $X \sim$ Bernoulli($p$), where $p$ is the probability of success.

$E(X) = p$, $\operatorname{Var}(X) = p(1 - p)$ and $\psi(t) = E(e^{tX}) = p e^{t} + (1 - p)$.

The cdf is

$$F(x \mid p) = \begin{cases} 0 & \text{for } x < 0 \\ 1 - p & \text{for } 0 \le x < 1 \\ 1 & \text{for } x \ge 1 \end{cases}$$

5.2 Bernoulli and Binomial distributions: Binomial distributions

Def: Binomial distributions – Binomial($n$, $p$). A r.v.

A Note on Skew-Normal Distribution Approximation to the Negative Binomal Distribution

WSEAS TRANSACTIONS on MATHEMATICS. Jyh-Jiuan Lin, Ching-Hui Chang, Rosemary Jou. ISSN: 1109-2769. Issue 1, Volume 9, January 2010, p. 32.

A NOTE ON SKEW-NORMAL DISTRIBUTION APPROXIMATION TO THE NEGATIVE BINOMAL DISTRIBUTION

JYH-JIUAN LIN (1), CHING-HUI CHANG (2, corresponding author) AND ROSEMARY JOU (3)

(1) Department of Statistics, Tamkang University, 151 Ying-Chuan Road, Tamsui, Taipei County 251, Taiwan, [email protected]
(2) Department of Applied Statistics and Information Science, Ming Chuan University, 5 De-Ming Road, Gui-Shan District, Taoyuan 333, Taiwan, [email protected]
(3) Graduate Institute of Management Sciences, Tamkang University, 151 Ying-Chuan Road, Tamsui, Taipei County 251, Taiwan, [email protected]

Abstract: This article revisits the problem of approximating the negative binomial distribution. Its goal is to show that the skew-normal distribution, another alternative methodology, provides a much better approximation to negative binomial probabilities than the usual normal approximation.

Key-Words: Central Limit Theorem, cumulative distribution function (cdf), skew-normal distribution, skew parameter.

1. INTRODUCTION

This article revisits the problem of the negative binomial distribution approximation. Though the most commonly used solution of approximating the negative binomial distribution is by normal distribution, Peizer and Pratt (1968) has investigated this problem

where $\phi$ and $\Phi$ denote the standard normal pdf and cdf respectively. The parameter space is: $\mu \in (-\infty, \infty)$, $\sigma > 0$ and $\lambda \in (-\infty, \infty)$. Besides, the distribution SN(0, 1, $\lambda$) with pdf $f(x \mid 0, 1, \lambda) = 2\phi(x)\Phi(\lambda x)$ is called the standard skew-normal distribution.

Lecture 6: Special Probability Distributions

Lecture 6: Special Probability Distributions. Assist. Prof. Dr. Emel YAVUZ DUMAN. MCB1007 Introduction to Probability and Statistics, İstanbul Kültür University

Outline
1. The Discrete Uniform Distribution
2. The Bernoulli Distribution
3. The Binomial Distribution
4. The Negative Binomial and Geometric Distribution

The Discrete Uniform Distribution

If a random variable can take on $k$ different values with equal probability, we say that it has a discrete uniform distribution; symbolically,

Definition 1. A random variable $X$ has a discrete uniform distribution and it is referred to as a discrete uniform random variable if and only if its probability distribution is given by

$$f(x) = \frac{1}{k} \quad \text{for } x = x_1, x_2, \ldots, x_k,$$

where $x_i \ne x_j$ when $i \ne j$.

The mean and the variance of this distribution are

Mean:

$$\mu = E[X] = \sum_{i=1}^{k} x_i f(x_i) = \sum_{i=1}^{k} x_i \cdot \frac{1}{k} = \frac{x_1 + x_2 + \cdots + x_k}{k},$$

Variance:

$$\sigma^2 = E[(X - \mu)^2] = \sum_{i=1}^{k} (x_i - \mu)^2 \cdot \frac{1}{k} = \frac{(x_1 - \mu)^2 + (x_2 - \mu)^2 + \cdots + (x_k - \mu)^2}{k}.$$

In the special case where $x_i = i$, the discrete uniform distribution becomes $f(x) = \frac{1}{k}$ for $x = 1, 2, \ldots, k$, and in this form it applies, for example, to the number of points we roll with a balanced die.
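The mean and variance formulas for the discrete uniform distribution can be evaluated exactly for the balanced-die case mentioned at the end of this excerpt. The sketch below uses Python's `fractions` module to avoid rounding; the function name `discrete_uniform_moments` is hypothetical.

```python
from fractions import Fraction


def discrete_uniform_moments(values):
    """Mean and variance of a discrete uniform distribution over distinct values.

    Each of the k values has probability 1/k, so both moments are plain averages.
    """
    values = list(values)
    k = len(values)
    if k == 0 or len(set(values)) != k:
        raise ValueError("values must be non-empty and distinct")
    mean = sum(Fraction(v) for v in values) / k
    variance = sum((Fraction(v) - mean) ** 2 for v in values) / k
    return mean, variance


# Special case x_i = i with k = 6: the points rolled with a balanced die.
mean, variance = discrete_uniform_moments(range(1, 7))
print(mean, variance)
```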

11. Parameter Estimation

Chris Piech and Mehran Sahami, May 2017

We have learned many different distributions for random variables, and all of those distributions had parameters: the numbers that you provide as input when you define a random variable. So far when we were working with random variables, we either were explicitly told the values of the parameters, or we could divine the values by understanding the process that was generating the random variables.

What if we don’t know the values of the parameters and we can’t estimate them from our own expert knowledge? What if instead of knowing the random variables, we have a lot of examples of data generated with the same underlying distribution? In this chapter we are going to learn formal ways of estimating parameters from data.

These ideas are critical for artificial intelligence. Almost all modern machine learning algorithms work like this: (1) specify a probabilistic model that has parameters; (2) learn the value of those parameters from data.

Parameters

Before we dive into parameter estimation, first let’s revisit the concept of parameters. Given a model, the parameters are the numbers that yield the actual distribution. In the case of a Bernoulli random variable, the single parameter was the value $p$. In the case of a Uniform random variable, the parameters are the $a$ and $b$ values that define the min and max value. Here is a list of random variables and the corresponding parameters. From now on, we are going to use the notation $\theta$ to be a vector of all the parameters:

| Distribution | Parameters |
|---|---|
| Bernoulli($p$) | $\theta = p$ |
| Poisson($\lambda$) | $\theta = \lambda$ |
| Uniform($a$, $b$) | $\theta = (a, b)$ |
| Normal($\mu$, $\sigma^2$) | $\theta = (\mu, \sigma^2)$ |
| $Y = mX + b$ | $\theta = (m, b)$ |

In the real world often you don’t know the “true” parameters, but you get to observe data.