Using Static and Runtime Analysis to Improve Developer Productivity And

Recommended publications

A Compiler-Level Intermediate Representation Based Binary Analysis and Rewriting System

Kapil Anand, Matthew Smithson, Khaled Elwazeer, Aparna Kotha, Jim Gruen, Nathan Giles, Rajeev Barua. University of Maryland, College Park. {kapil,msmithso,wazeer,akotha,jgruen,barua}@umd.edu

Abstract
This paper presents component techniques essential for converting executables to a high-level intermediate representation (IR) of an existing compiler. The compiler IR is then employed for three distinct applications: binary rewriting using the compiler's binary back-end, vulnerability detection using source-level symbolic execution, and source-code recovery using the compiler's C backend. Our techniques enable complex high-level transformations not possible in existing binary systems, address a major challenge of input-derived memory addresses in symbolic execution and are the first to enable recovery of a fully functional source-code. We present techniques to segment the flat address space in an executable containing undifferentiated blocks of memory.

1. Introduction
In recent years, there has been a tremendous amount of activity in executable-level research targeting varied applications such as security vulnerability analysis [13, 37], testing [17], and binary optimizations [30, 35]. In spite of a significant overlap in the overall goals of various source-code methods and executable-level techniques, several analyses and sophisticated transformations that are well-understood and implemented in source-level infrastructures have yet to become available in executable frameworks. Many of the executable-level tools suggest new techniques for performing elementary source-level tasks. For example, PLTO [35] proposes a custom alias analysis technique to implement a …

Executable Code Is Not the Proper Subject of Copyright Law: A Retrospective Criticism of Technical and Legal Naivete in the Apple v. Franklin Case

Matthew M. Swann, Clark S. Turner, Ph.D., Department of Computer Science, Cal Poly State University, November 18, 2004

Abstract: Copyright was created by government for a purpose. Its purpose was to be an incentive to produce and disseminate new and useful knowledge to society. Source code is written to express its underlying ideas and is clearly included as a copyrightable artifact. However, since Apple v. Franklin, copyright has been extended to protect an opaque software executable that does not express its underlying ideas. Common commercial practice involves keeping the source code secret, hiding any innovative ideas expressed there, while copyrighting the executable, where the underlying ideas are not exposed. By examining copyright's historical heritage we can determine whether software copyright for an opaque artifact upholds the bargain between authors and society as intended by our Founding Fathers. This paper first describes the origins of copyright, the nature of software, and the unique problems involved. It then determines whether current copyright protection for the opaque executable realizes the economic model underpinning copyright law. Having found the current legal interpretation insufficient to protect software without compromising its principles, we suggest new legislation which would respect the philosophy on which copyright in this nation was founded.

Table of Contents: INTRODUCTION; THE ORIGIN OF COPYRIGHT (The Idea is Born; A New Beginning; The Social Bargain; Copyright and the Constitution); THE BASICS OF SOFTWARE …

The LLVM Instruction Set and Compilation Strategy

Chris Lattner, Vikram Adve. University of Illinois at Urbana-Champaign. {lattner,vadve}@cs.uiuc.edu

Abstract
This document introduces the LLVM compiler infrastructure and instruction set, a simple approach that enables sophisticated code transformations at link time, runtime, and in the field. It is a pragmatic approach to compilation, interfering with programmers and tools as little as possible, while still retaining extensive high-level information from source-level compilers for later stages of an application's lifetime. We describe the LLVM instruction set, the design of the LLVM system, and some of its key components.

1 Introduction
Modern programming languages and software practices aim to support more reliable, flexible, and powerful software applications, increase programmer productivity, and provide higher level semantic information to the compiler. Unfortunately, traditional approaches to compilation either fail to extract sufficient performance from the program (by not using interprocedural analysis or profile information) or interfere with the build process substantially (by requiring build scripts to be modified for either profiling or interprocedural optimization). Furthermore, they do not support optimization either at runtime or after an application has been installed at an end-user's site, when the most relevant information about actual usage patterns would be available. The LLVM Compilation Strategy is designed to enable effective multi-stage optimization (at compile-time, link-time, runtime, and offline) and more effective profile-driven optimization, and to do so without changes to the traditional build process or programmer intervention. LLVM (Low Level Virtual Machine) is a compilation strategy that uses a low-level virtual instruction set with rich type information as a common code representation for all phases of compilation. …

Studying the Real World: Today's Topics

Today's topics:
- Free and open source software (FOSS): what it is, who uses it, history
- Making the most of other people's software: learning from, using, and contributing
- Learning about your own system: using tools to understand software without source

Free and open source software
- Access to source code
- Free = freedom to use, modify, copy
- Some potential benefits: can build for different platforms and needs; development driven by community; different perspectives and ideas; more people looking at the code for bugs/security issues
- Structure: volunteers, sponsored by companies; generally anyone can propose ideas and submit code; different structures in charge of what features/code gets in

Free and open source software
- Tons of FOSS out there: nearly everything on myth
- Desktop applications (Firefox, Chromium, LibreOffice)
- Programming tools (compilers, libraries, IDEs)
- Servers (Apache web server, MySQL)
- Many companies contribute to FOSS: Android core, Apple Darwin, Microsoft .NET

A brief history of FOSS
- 1960s: software distributed with hardware; source included, users could fix bugs
- 1970s: start of software licensing; 1974: software is copyrightable; 1975: first license for UNIX sold
- 1980s: popularity of closed-source software; software valued independent of hardware

Richard Stallman
- Started the free software movement (1983)
- The GNU project: GNU = GNU's Not Unix, an operating system with a Unix-like interface
- GNU General Public License: free software; users have access to source, can modify and redistribute; must share modifications under same …

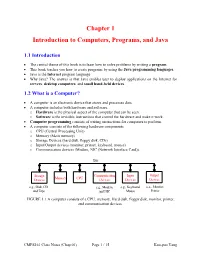

Chapter 1 Introduction to Computers, Programs, and Java

1.1 Introduction
• The central theme of this book is to learn how to solve problems by writing a program.
• This book teaches you how to create programs by using the Java programming language.
• Java is the Internet programming language.
• Why Java? The answer is that Java enables users to deploy applications on the Internet for servers, desktop computers, and small hand-held devices.

1.2 What is a Computer?
• A computer is an electronic device that stores and processes data.
• A computer includes both hardware and software.
  o Hardware is the physical aspect of the computer that can be seen.
  o Software consists of the invisible instructions that control the hardware and make it work.
• Computer programming consists of writing instructions for computers to perform.
• A computer consists of the following hardware components:
  o CPU (Central Processing Unit)
  o Memory (main memory)
  o Storage devices (hard disk, floppy disk, CDs)
  o Input/output devices (monitor, printer, keyboard, mouse)
  o Communication devices (modem, NIC (Network Interface Card))

FIGURE 1.1 A computer consists of a CPU, memory, hard disk, floppy disk, monitor, printer, and communication devices. (Diagram: CPU, memory, storage devices such as disk, CD, and tape, communication devices such as modem and NIC, input devices such as keyboard and mouse, and output devices such as monitor and printer, connected by a bus.)

CMPS161 Class Notes (Chap 01) Page 1 / 15 Kuo-pao Yang

1.2.1 Central Processing Unit (CPU)
• The central processing unit (CPU) is the brain of a computer.
• It retrieves instructions from memory and executes them. …
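The statement that the CPU retrieves instructions from memory and executes them describes a repeated fetch-decode-execute cycle. The C++ sketch below models that cycle under stated assumptions. The machine is made up: it has three hypothetical instructions (`Load`, `Add`, `Halt`) and a single accumulator, and does not correspond to any real CPU's instruction set.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// A made-up three-instruction machine, used only to illustrate the
// fetch-decode-execute cycle; it is not a real instruction set.
enum class Op : std::uint8_t { Load, Add, Halt };

struct Instr {
    Op op;
    int operand;  // ignored by Halt
};

// Fetches the instruction at the program counter from "memory", decodes
// its opcode, and executes it, until Halt or the end of memory is reached.
int run(const std::vector<Instr>& memory) {
    int accumulator = 0;
    std::size_t pc = 0;  // program counter: index of the next instruction
    while (pc < memory.size()) {
        const Instr& in = memory[pc++];  // fetch, then advance the counter
        switch (in.op) {                 // decode
            case Op::Load: accumulator = in.operand; break;  // execute
            case Op::Add:  accumulator += in.operand; break;
            case Op::Halt: return accumulator;
        }
    }
    return accumulator;
}
```

For example, running the program `Load 5, Add 7, Halt, Add 100` returns 12. The `Add 100` placed after `Halt` is never fetched.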

The Interplay of Compile-Time and Run-Time Options for Performance Prediction Luc Lesoil, Mathieu Acher, Xhevahire Tërnava, Arnaud Blouin, Jean-Marc Jézéquel

Luc Lesoil, Mathieu Acher, Xhevahire Tërnava, Arnaud Blouin, Jean-Marc Jézéquel. Univ Rennes, INSA Rennes, CNRS, Inria, IRISA, Rennes, France.

To cite this version: Luc Lesoil, Mathieu Acher, Xhevahire Tërnava, Arnaud Blouin, Jean-Marc Jézéquel. The Interplay of Compile-time and Run-time Options for Performance Prediction. SPLC 2021 - 25th ACM International Systems and Software Product Line Conference - Volume A, Sep 2021, Leicester, United Kingdom. pp. 1-12, 10.1145/3461001.3471149. HAL Id: hal-03286127, https://hal.archives-ouvertes.fr/hal-03286127, submitted on 15 Jul 2021.

ABSTRACT
Many software projects are configurable through compile-time options (e.g., using ./configure) and also through run-time options (e.g., command-line parameters, fed to the software at execution time). …

… Both compile-time and run-time options can be configured to reach specific functional and performance goals. Existing studies consider either compile-time or run-time options …
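Here is a minimal C++ sketch of the two kinds of options. It assumes a hypothetical `USE_FAST_PATH` preprocessor macro as the compile-time option and a hypothetical `--level=N` argument as the run-time option. Neither comes from the paper, and the cost figures are arbitrary placeholders.

```cpp
#include <cassert>
#include <cstdlib>
#include <string>

// Compile-time option (hypothetical): fixed when the binary is built,
// e.g. passed as -DUSE_FAST_PATH=1 by a ./configure-generated build.
#ifndef USE_FAST_PATH
#define USE_FAST_PATH 0
#endif

// Run-time option (hypothetical): read from a "--level=N" argument each
// time the program executes; defaults to 1 when the option is absent.
int parseLevel(const std::string& arg) {
    const std::string prefix = "--level=";
    if (arg.compare(0, prefix.size(), prefix) == 0)
        return std::atoi(arg.c_str() + prefix.size());
    return 1;
}

// The simulated cost depends on both kinds of options at once.
long workUnits(int level) {
#if USE_FAST_PATH
    return static_cast<long>(level) * 10;  // cheaper code path compiled in
#else
    return static_cast<long>(level) * 25;  // default code path
#endif
}
```

`USE_FAST_PATH` is fixed at build time. As a result, two binaries built with different settings can respond differently to the same `--level` value, so a cost measured for a run-time option in one build need not carry over to another.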

ClangJIT: Enhancing C++ with Just-in-Time Compilation

Hal Finkel (Lead, Compiler Technology and Programming Languages, Leadership Computing Facility, Argonne National Laboratory, Lemont, IL, USA); David Poliakoff (Lawrence Livermore National Laboratory, Livermore, CA, USA); David F. Richards (Lawrence Livermore National Laboratory, Livermore, CA, USA)

ABSTRACT
The C++ programming language is not only a keystone of the high-performance-computing ecosystem but has proven to be a successful base for portable parallel-programming frameworks. As is well known, C++ programmers use templates to specialize algorithms, thus allowing the compiler to generate highly efficient code for specific parameters, data structures, and so on. This capability has been limited to those specializations that can be identified when the application is compiled, and in many critical cases, compiling all potentially relevant specializations is not practical. ClangJIT provides a well-integrated C++ language extension allowing template-based specialization to occur during program execution.

… body of C++ code, but critically, defer the generation and optimization of template specializations until runtime using a relatively natural extension to the core C++ programming language. A significant design requirement for ClangJIT is that the runtime-compilation process not explicitly access the file system (only loading data from the running binary is permitted), which allows for deployment within environments where file-system access is either unavailable or prohibitively expensive. In addition, this requirement maintains the redistributability of the binaries using the JIT-compilation features (i.e., they can run on systems where the source code is unavailable). For example, on large HPC deployments, especially on supercomputers with distributed file systems, …
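The limitation described in the abstract can be illustrated in standard C++. This sketch does not use ClangJIT's extension. `dotFixed<N>` is specialized on a compile-time length, so the compiler can optimize each instantiation for its `N`. A length known only at run time can select only among instantiations written into the program ahead of time. The chosen sizes (3, 4, 8) and all function names here are hypothetical.

```cpp
#include <cassert>
#include <cstddef>

// Specialized on a compile-time length N: each instantiation is a separate
// function the compiler can unroll and optimize for that exact N.
template <std::size_t N>
double dotFixed(const double* a, const double* b) {
    double sum = 0.0;
    for (std::size_t i = 0; i < N; ++i) sum += a[i] * b[i];
    return sum;
}

// Generic fallback for a length known only at run time.
double dotDynamic(const double* a, const double* b, std::size_t n) {
    double sum = 0.0;
    for (std::size_t i = 0; i < n; ++i) sum += a[i] * b[i];
    return sum;
}

// Without runtime compilation, a run-time value can only choose among the
// specializations instantiated when the program was built.
double dot(const double* a, const double* b, std::size_t n) {
    switch (n) {
        case 3: return dotFixed<3>(a, b);
        case 4: return dotFixed<4>(a, b);
        case 8: return dotFixed<8>(a, b);
        default: return dotDynamic(a, b, n);  // no specialization available
    }
}
```

Any length outside the precompiled set falls back to the generic loop. A JIT-based approach like the one the abstract describes would instead generate the specialization for whatever value appears during execution.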

Design and Implementation of Generics for the .NET Common Language Runtime

Andrew Kennedy, Don Syme. Microsoft Research, Cambridge, U.K. {akenn,dsyme}@microsoft.com

Abstract
The Microsoft .NET Common Language Runtime provides a shared type system, intermediate language and dynamic execution environment for the implementation and inter-operation of multiple source languages. In this paper we extend it with direct support for parametric polymorphism (also known as generics), describing the design through examples written in an extended version of the C# programming language, and explaining aspects of implementation by reference to a prototype extension to the runtime. Our design is very expressive, supporting parameterized types, polymorphic static, instance and virtual methods, “F-bounded” type parameters, instantiation at pointer and value types, polymorphic recursion, and exact run-time types.

…cally through an interface definition language, or IDL) that is necessary for language interoperation.

This paper describes the design and implementation of support for parametric polymorphism in the CLR. In its initial release, the CLR has no support for polymorphism, an omission shared by the JVM. Of course, it is always possible to “compile away” polymorphism by translation, as has been demonstrated in a number of extensions to Java [14, 4, 6, 13, 2, 16] that require no change to the JVM, and in compilers for polymorphic languages that target the JVM or CLR (MLj [3], Haskell, Eiffel, Mercury). However, such systems inevitably suffer drawbacks of some kind, whether through source language restrictions (disallowing primitive type instantiations to enable a simple erasure-based translation, as in GJ and …

Toward IFVM Virtual Machine: a Model Driven IFML Interpretation

Sara Gotti and Samir Mbarki. MISC Laboratory, Faculty of Sciences, Ibn Tofail University, BP 133, Kenitra, Morocco

Keywords: Interaction Flow Modelling Language IFML, Model Execution, Unified Modeling Language (UML), IFML Execution, Model Driven Architecture MDA, Bytecode, Virtual Machine, Model Interpretation, Model Compilation, Platform Independent Model PIM, User Interfaces, Front End.

Abstract: UML is the first international modeling language standardized since 1997. It aims to provide a standard way to visualize the design of a system, but it cannot model the complex design of user interfaces and interactions. However, according to the MDA approach, it is necessary to apply the concept of abstract models to user interfaces too. IFML, the Interaction Flow Modeling Language adopted as an OMG standard in March 2013, is designed for abstractly expressing the content, user interaction and control behaviour of the front-end of software applications. IFML is a platform-independent language; it has been designed with executable semantics and can be mapped easily into executable applications for various platforms and devices. In this article we present an approach to executing IFML. We introduce an IFVM virtual machine, which translates IFML models into bytecode that is interpreted by the Java virtual machine.

1 INTRODUCTION
Software development has been affected by the emergence of the MDA (OMG, 2015) approach, the trend of the 21st century (BRAMBILLA et al., 2014), which has allowed developers to build their …

… a fundamental standard, fUML (OMG, 2011), which is a subset of UML that contains the most relevant part of class diagrams for modeling the data structure and activity diagrams to specify system behavior; it contains all UML elements that are helpful for the execution of the models.

Architectural Support for Scripting Languages

By Dibakar Gope

A dissertation submitted in partial fulfillment of the requirements for the degree of Doctor of Philosophy (Electrical and Computer Engineering) at the UNIVERSITY OF WISCONSIN–MADISON, 2017

Date of final oral examination: 6/7/2017

The dissertation is approved by the following members of the Final Oral Committee:
Mikko H. Lipasti, Professor, Electrical and Computer Engineering
Gurindar S. Sohi, Professor, Computer Sciences
Parameswaran Ramanathan, Professor, Electrical and Computer Engineering
Jing Li, Assistant Professor, Electrical and Computer Engineering
Aws Albarghouthi, Assistant Professor, Computer Sciences

© Copyright by Dibakar Gope 2017. All Rights Reserved.

This thesis is dedicated to my parents, Monoranjan Gope and Sati Gope.

Acknowledgments
First and foremost, I would like to thank my parents, Sri Monoranjan Gope and Smt. Sati Gope, for their unwavering support and encouragement throughout my doctoral studies, which I believe to be the single most important contribution towards achieving my goal of receiving a Ph.D. Second, I would like to express my deepest gratitude to my advisor Prof. Mikko Lipasti for his mentorship and continuous support throughout the course of my graduate studies. I am extremely grateful to him for guiding me with such dedication and consideration and never failing to pay attention to any details of my work. His insights, encouragement, and overall optimism have been instrumental in organizing my otherwise vague ideas into some meaningful contributions in this thesis. This thesis would never have been accomplished without his technical and editorial advice. I find myself fortunate to have met and had the opportunity to work with such an all-around nice person in addition to being a great professor. …

Building Useful Program Analysis Tools Using an Extensible Java Compiler

Edward Aftandilian, Raluca Sauciuc (Google, Inc., Mountain View, CA, USA); Siddharth Priya, Sundaresan Krishnan (Google, Inc., Hyderabad, India)

Abstract: Large software companies need customized tools to manage their source code. These tools are often built in an ad-hoc fashion, using brittle technologies such as regular expressions and home-grown parsers. Changes in the language cause the tools to break. More importantly, these ad-hoc tools often do not support uncommon-but-valid code patterns. We report our experiences building source-code analysis tools at Google on top of a third-party, open-source, extensible compiler. We describe three tools in use on our Java codebase. The first, Strict Java Dependencies, enforces our dependency policy in order to reduce JAR file sizes and testing load. The second, error-prone, adds new error checks to the compilation process and automates repair of those errors at a whole-codebase scale. …

… a specific task, but they fail for several reasons. First, ad-hoc program analysis tools are often brittle and break on uncommon-but-valid code patterns. Second, simple ad-hoc tools don't provide sufficient information to perform many non-trivial analyses, including refactorings. Type and symbol information is especially useful, but amounts to writing a type-checker. Finally, more sophisticated program analysis tools are expensive to create and maintain, especially as the target language evolves.

In this paper, we present our experience building special-purpose tools on top of the piece of software in our …

Overview of LLVM Architecture of LLVM

Architecture of LLVM
- Front-end: high-level programming language => LLVM IR
- Optimizer: optimize/analyze/secure the program in the IR form
- Back-end: LLVM IR => machine code

Optimizer
The optimizer's job is to analyze, optimize, and secure programs. Optimizations are implemented as passes that traverse some portion of a program to either collect information or transform the program. A pass is an operation on a unit of IR code; the pass is an important concept in LLVM.

LLVM IR
- A low-level, strongly typed, language-independent, SSA-based representation.
- Tailored for static analyses and optimization purposes.

Part 1
Part 1 has two kinds of passes:
- Analysis pass (Section 1): only analyzes code statically
- Transformation pass (Sections 2 & 3): inserts code into the program

Figure (analysis pass, Section 1): test.c, containing foo(uint32_t, uint32_t *p), is compiled by Clang to LLVM IR (test.bc), which opt runs through mypass.so, printing results to stderr.
Figure (transformation pass, Sections 2 & 3): test.cpp is compiled by Clang++ to test.bc, and opt runs mypass.so on it to produce the instrumented test-ins.bc; main.cpp (whose main() calls foo()) and lib.cpp are compiled by Clang++ to main.bc and lib.bc; together these produce the executable.

Section 1 challenges:
- How to traverse instructions in a function: http://releases.llvm.org/3.9.1/docs/ProgrammersManual.html#iterating-over-the-instruction-in-a-function
- How to print to stderr

Sections 2 & 3 challenges:
1. How to traverse basic blocks in a function and instructions in a basic block
2. How to insert function calls to the runtime library
   a. Add the function signature to the symbol table of the module
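The C++ sketch below contrasts the two kinds of passes without depending on the LLVM libraries. It uses hypothetical, greatly simplified `Function`, `BasicBlock`, and `Instruction` structs. These are not LLVM's classes, and they omit SSA values, types, and the pass manager. `countOpcodes` plays the role of an analysis pass, which only collects information. `instrumentMemoryOps` plays the role of a transformation pass, which inserts a call to an assumed runtime-library hook before every load and store.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-ins for LLVM's IR hierarchy; NOT LLVM classes.
struct Instruction {
    std::string opcode;  // e.g. "load", "store", "add", "call"
    std::string callee;  // only meaningful when opcode == "call"
};
struct BasicBlock { std::vector<Instruction> insts; };
struct Function {
    std::string name;
    std::vector<BasicBlock> blocks;
};

// Analysis-style pass: traverses blocks, then instructions, and only
// collects information (a count per opcode); the function is unchanged.
std::map<std::string, int> countOpcodes(const Function& f) {
    std::map<std::string, int> counts;
    for (const BasicBlock& bb : f.blocks)
        for (const Instruction& inst : bb.insts)
            ++counts[inst.opcode];
    return counts;
}

// Transformation-style pass: inserts a call to a (hypothetical) runtime
// hook before every load and store; returns the number of calls inserted.
int instrumentMemoryOps(Function& f, const std::string& hook) {
    int inserted = 0;
    for (BasicBlock& bb : f.blocks) {
        std::vector<Instruction> out;
        out.reserve(bb.insts.size());
        for (const Instruction& inst : bb.insts) {
            if (inst.opcode == "load" || inst.opcode == "store") {
                out.push_back({"call", hook});  // instrumentation goes first
                ++inserted;
            }
            out.push_back(inst);  // then the original instruction
        }
        bb.insts = std::move(out);
    }
    return inserted;
}
```

For a block `load, add, store`, the transformation produces `call, load, add, call, store`. In a real LLVM pass the same nested traversal (function, then basic blocks, then instructions) would use LLVM's own iterators. Inserting the call would also require declaring the hook's signature in the module, as the challenges list above notes.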