StackwalkerAPI Programmer's Guide

Recommended publications

Procedures & the Stack

Procedures & The Stack, Spring 2016

Announcements: Lab 1 graded (late days, extra credit). HW 2 out. Lab 2 prep in section tomorrow (bring laptops!). Slides. HTTP://XKCD.COM/1270/

Roadmap: Memory & data; Integers & floats; Machine code & C; x86 assembly; Procedures & stacks; Arrays & structs; Memory & caches; Processes; Virtual memory; Memory allocation; Java vs. C.
C: car *c = malloc(sizeof(car)); c->miles = 100; c->gals = 17; float mpg = get_mpg(c); free(c);
Java: Car c = new Car(); c.setMiles(100); c.setGals(17); float mpg = c.getMPG();
Assembly language: get_mpg: pushq %rbp; movq %rsp, %rbp; ...; popq %rbp; ret
Machine code: 0111010000011000 100011010000010000000010 1000100111000010 110000011111101000011111
[Figure labels: OS; Computer system]

Mechanisms required for procedures. Passing control: to the beginning of procedure code, and back to the return point. Passing data: procedure arguments and return value. Memory management: allocate during procedure execution, deallocate upon return. All are implemented with machine instructions; an x86-64 procedure uses only those mechanisms required for that procedure.
Example: P(…) { • • y = Q(x); print(y) • } int Q(int i) { int t = 3*i; int v[10]; • • return v[t]; }

Questions to answer about procedures: How do I pass arguments to a procedure? How do I get a return value from a procedure? Where do I put local variables? When a function returns, how does it know where to return? To answer some of -

MIPS Calling Convention

MIPS Calling Convention. CS 64: Computer Organization and Design Logic, Lecture #9, Fall 2018. Ziad Matni, Ph.D., Dept. of Computer Science, UCSB. 11/5/2018 Matni, CS64, Fa18

Administrative: Lab #5 this week, due on Friday. Grades will be up on GauchoSpace today by noon! If you want to review your exams, see your TAs: last names A thru Q see Bay-Yuan (Th. 12:30 – 2:30 pm); last names R thru Z see Harmeet (Th. 9:30 – 11:30 am). Mid-quarter evaluations for TAs: links are on the last slide and will be put up on Piazza too. Optional to do, but very appreciated by us all!

CS 64, Fall 18, Midterm Exam: Average = 86.9%, Median = 87%. [Figure: histogram of exam scores; bar counts 26, 22, 8, 5, 3]

Lecture Outline: MIPS Calling Convention (functions calling functions; recursive functions).

Function Calls Within Functions… Given what we’ve said so far, what about this code makes our previously discussed setup break? You would need multiple copies of $ra. You’d have to copy the value of $ra to another register (or to mem) before calling another function. Danger: you could run out of registers!

Another Example… What about this code makes this setup break? Can’t fit all variables in registers at the same time! How do I know which registers are even usable without looking at the code?

Solution??!! Store certain information in memory only at certain times. Ultimately, this is where the call stack comes from. So what (registers/memory) save what??? -

UNDERSTANDING STACK ALIGNMENT in 64-BIT CALLING CONVENTIONS for X86-64 Assembly Programmers

UNDERSTANDING STACK ALIGNMENT IN 64-BIT CALLING CONVENTIONS For x86-64 Assembly Programmers. This article is part of the CPU2.0 presentation. Soffian Abdul Rasad. Date: Oct 2nd, 2017. Updated: 2019. Note: This article is released in conjunction with CPU2.0 to enable users to use CPU2.0 correctly with regard to stack alignment. It does not present full stack programming materials. The MS 64-bit Application Binary Interface (ABI) defines an x86_64 software calling convention known as fastcall. System V introduces a similar software calling convention known as the AMD64 calling convention. These calling conventions specify the standards and requirements of the 64-bit APIs so that code can access and use them in a standardized and uniform fashion. They are the standard conventions employed by Win64 APIs and 64-bit Linux, respectively. While the two are radically different, they share a similar stack alignment policy: the stack must be aligned to a 16-byte boundary prior to calling a 64-bit API. A memory address is said to be aligned to 16 if it is evenly divisible by 16, that is, if its last digit in hexadecimal notation is 0. Please note that, to simplify the discussion, we ignore the requirement for shadow space or red zone when calling the 64-bit APIs for now; with or without the shadow space, the discussion below still applies. Also note that the CPU honors no calling convention. Align 16 Ecosystem: This requirement specifies that, for the 64-bit APIs to function correctly, they must work in an ecosystem or runtime environment in which the stack memory is always aligned to 16-byte boundaries. -
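
The divisibility test described in the excerpt above can be checked mechanically. The following is a minimal sketch, not taken from the article: the helper names `is_aligned_16` and `align_down_16` are hypothetical, and the sample addresses are made up for illustration.

```python
def is_aligned_16(address: int) -> bool:
    """Return True if the address is evenly divisible by 16.

    Equivalently, the last hexadecimal digit of the address is 0.
    """
    return address % 16 == 0


def align_down_16(address: int) -> int:
    """Round an address down to the nearest 16-byte boundary.

    Clearing the low four bits mirrors what `and rsp, -16` does
    to the stack pointer in x86-64 assembly.
    """
    return address & ~0xF


if __name__ == "__main__":
    # Hypothetical stack addresses, chosen only for illustration.
    for addr in (0x7FFE1000, 0x7FFE1008):
        print(hex(addr), is_aligned_16(addr), hex(align_down_16(addr)))
```

Rounding down rather than up fits a stack that grows toward lower addresses: moving the stack pointer down never overlaps data already pushed.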

Protecting Against Unexpected System Calls

Protecting Against Unexpected System Calls. C. M. Linn, M. Rajagopalan, S. Baker, C. Collberg, S. K. Debray, J. H. Hartman. Department of Computer Science, University of Arizona, Tucson, AZ 85721. {linnc,mohan,bakers,collberg,debray,jhh}@cs.arizona.edu

Abstract. This paper proposes a comprehensive set of techniques which limit the scope of remote code injection attacks. These techniques prevent any injected code from making system calls and thus restrict the capabilities of an attacker. In defending against the traditional ways of harming a system these techniques significantly raise the bar for compromising the host system forcing the attack code to take extraordinary steps that may be impractical in the context of a remote code injection attack. There are two main aspects to our approach. The first is to embed semantic information into executables identifying the locations of legitimate system call instructions;

... writing the return address on the stack with the address of the attack code). This then causes the various actions relating to the attack to be carried out. In order to do any real damage, e.g., create a root shell, change permissions on a file, or access proscribed data, the attack code needs to execute one or more system calls. Because of this, and the well-defined system call interface between application code and the underlying operating system kernel, many researchers have focused on the system call interface as a convenient point for detecting and disrupting such attacks (see, for example, [5, 13, 17, 19, 29, 32, 35, 38]; Section 7 gives a more extensive discussion). -

Protecting the Stack with Metadata Policies and Tagged Hardware

2018 IEEE Symposium on Security and Privacy. Protecting the Stack with Metadata Policies and Tagged Hardware. Nick Roessler, University of Pennsylvania, [email protected]. André DeHon, University of Pennsylvania, [email protected]

Abstract: The program call stack is a major source of exploitable security vulnerabilities in low-level, unsafe languages like C. In conventional runtime implementations, the underlying stack data is exposed and unprotected, allowing programming errors to turn into security violations. In this work, we design novel metadata-tag based, stack-protection security policies for a general-purpose tagged architecture. Our policies specifically exploit the natural locality of dynamic program call graphs to achieve cacheability of the metadata rules that they require. Our simple Return Address Protection policy has a performance overhead of 1.2% but just protects return addresses. The two richer policies we present, Static Authorities and Depth Isolation, provide object-level protection for all stack objects. When enforcing memory safety, our Static Authorities policy has a performance overhead of 5.7% and our Depth Isolation policy has a performance overhead of 4.5%.

... stack data, or hijack the exposed function call mechanism in a host of other malicious ways. Consequently, protecting the stack abstraction is critical for application security. Currently deployed defenses such as W⊕X and stack canaries [2] make attacks more difficult to conduct, but do not protect against more sophisticated attack techniques. Full memory safety can be retrofitted onto existing C/C++ code through added software checks, but at a high cost of 100% or more in runtime overhead [3]. These expensive solutions are unused in practice due to their unacceptably high overheads [4]. There is a long history of accelerating security policies with hardware to bring their overheads to more bearable levels -

Time-Travel Debugging for Javascript/Node.Js

Time-Travel Debugging for JavaScript/Node.js. Earl T. Barr, University College London, UK, [email protected]. Mark Marron, Microsoft Research, USA, [email protected]. Ed Maurer, Microsoft, USA, [email protected]. Dan Moseley, Microsoft, USA, [email protected]. Gaurav Seth, Microsoft, USA, [email protected]

ABSTRACT. Time-traveling in the execution history of a program during debugging enables a developer to precisely track and understand the sequence of statements and program values leading to an error. To provide this functionality to real world developers, we embarked on a two year journey to create a production quality time-traveling debugger in Microsoft’s open-source ChakraCore JavaScript engine and the popular Node.js application framework.

CCS Concepts: Software and its engineering → Software testing and debugging.

Keywords: Time-Travel Debugging, JavaScript, Node.js

Figure 1: Visual Studio Code with extra time-travel functionality (Step-Back button in top action bar) at a breakpoint.

1. INTRODUCTION. Modern integrated development environments (IDEs) provide a range of tools for setting breakpoints, examining program state, and manually logging execution. These features enable developers to quickly track down localized bugs, provided all of the relevant val-

• Options for both using time-travel during local debugging and for recording a trace in production for postmortem debugging or other analysis (Section 2.1).
• Reverse-Step Local and Dynamic operations (Section 2.2) that allow the developer to step-back in time to the previously executed statement in the current function or to step-back in time to the previously executed statement in any frame including exception throws or callee returns. -

Section 6: Implementing Coroutines

Section 6: Implementing Coroutines. In Section 5, we worked on the usage of coroutines. Today, we will be reviewing the basic concepts relating to how coroutines can be implemented.

    def f() {
      yield(1)
      yield(2)
      null
    }

    def g(unused) {
      f()
    }

    def co = coroutine(g)
    print(resume(co, null))
    print(resume(co, null))
    print(resume(co, null))

Part 1. What does the above program print?

    1
    2
    null

Part 2. Which piece of information about the coroutine co does the interpreter need to remember to continue execution when the second resume is invoked? To continue execution within f, the interpreter needs to remember:
● the coroutine call stack that contains frames for the calls to g and to f
● the next instruction to execute in each stack frame

Part 3. What happens if we invoke resume a fourth time? Think about how the interpreter should implement the intended behavior. Invoking resume for a fourth time will result in an error. The interpreter can track this by distinguishing whether the coroutine has finished its execution or not.

Part 4. Describe a data structure to maintain the state of the coroutine throughout program execution. For each coroutine, we must store:
● A coroutine state (running, suspended, or dead)
● A call stack
● For each frame in the call stack:
  ○ The program counter
  ○ The bytecode array into which the PC indexes
  ○ The environment

Part 5. Now consider the following changes to the program above.

    def f() {
      yield(1)
      print "between yields"
      yield(2)
      null
    }

    def g(unused) {
      f()
    }

    def co = coroutine(g)
    print "created coroutine"
    resume(co, null)
    print "between resumes"
    resume(co, null)
    resume(co, null)
    print "end"

Describe the state of coroutines maintained by the interpreter at each print statement. -
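
The behavior in Parts 1 to 4 above can be approximated with Python generators. This is only a sketch under stated assumptions, not the course's interpreter: the `Coroutine` class and its `resume` method are hypothetical names, and a Python generator stands in for the per-coroutine call stack and program counters that a bytecode interpreter would store explicitly.

```python
from typing import Any, Callable, Generator, Optional


class Coroutine:
    """Illustrative asymmetric coroutine wrapping a generator function."""

    def __init__(self, body: Callable[[Any], Generator[Any, Any, Any]]) -> None:
        self._body = body
        self._gen: Optional[Generator[Any, Any, Any]] = None
        self.state = "suspended"  # one of: running, suspended, dead

    def resume(self, value: Any) -> Any:
        if self.state == "dead":
            # Part 3: resuming a finished coroutine is an error.
            raise RuntimeError("cannot resume a dead coroutine")
        self.state = "running"
        try:
            if self._gen is None:
                # First resume: start the body with the argument.
                self._gen = self._body(value)
                result = next(self._gen)
            else:
                # Later resumes: continue from the saved suspension point.
                result = self._gen.send(value)
        except StopIteration as stop:
            # The body returned; its return value is the result.
            self.state = "dead"
            return stop.value
        self.state = "suspended"
        return result


def f():
    yield 1
    yield 2
    return None


def g(unused):
    # `yield from` keeps f's frame alive beneath g's between resumes.
    return (yield from f())


if __name__ == "__main__":
    co = Coroutine(g)
    print(co.resume(None))
    print(co.resume(None))
    print(co.resume(None))
```

Run as a script, the three resumes return 1, 2, and None, matching Part 1; a fourth call raises `RuntimeError` because the state is `dead`. The sketch assumes the body passed to `Coroutine` is a generator function.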

Visualizing the Call Stack for Novice Programmers

Draft of April 17, 2014, at 2:04 am. Pomona College, Department of Computer Science. StackExplorer: Visualizing the Call Stack for Novice Programmers. Kimberly Merrill. April 17, 2014. Submitted as part of the senior exercise for the degree of Bachelor of Arts in Computer Science. Professor Rett Bull.

Copyright © 2014 Kimberly Merrill. The author grants Pomona College the nonexclusive right to make this work available for noncommercial, educational purposes, provided that this copyright statement appears on the reproduced materials and notice is given that the copying is by permission of the author. To disseminate otherwise or to republish requires written permission from the author.

Abstract. Novice programmers first encounter machine-level constructs in introductory computer architecture courses. Translating their knowledge of high-level concepts to the operations performed by the machine is a cognitively heavy conceptual jump as students become accountable for their program's data on the stack and explicit CPU state. The field of program visualization has proposed many models for visualizing challenging program execution concepts but has most extensively focused on high-level introductory programming concepts and low-level full-machine simulators. StackExplorer was designed as a dynamic program visualization tool to reinforce the stack discipline as taught in existing introductory computer architecture courses. It represents the execution model as a series of stack frames and the effects the source code has on the contents of each frame, allowing users to step through C programs and examine the corresponding assembly instructions, call stack, and stack frames. -

CS164: Introduction to Programming Languages and Compilers, Spring 2012 Thibaud Hottelier UC Berkeley

Lecture 5: Implementing Coroutines (compile AST to bytecode, bytecode interpreter). Ras Bodik, Shaon Barman, Thibaud Hottelier. Hack Your Language! CS164: Introduction to Programming Languages and Compilers, Spring 2012, UC Berkeley.

What you will learn today. Implement asymmetric coroutines: why a recursive interpreter with implicit stack won’t do. Compiling AST to bytecode: this is your first compiler; it compiles AST to “flat” code. Bytecode interpreter: bytecode can be interpreted without recursion, hence no need to keep interpreter state on the call stack.

PA2. PA2 was released today, due Sunday: a bytecode interpreter of coroutines. After PA2, you will be able to implement iterators. In PA3, you will build Prolog on top of your coroutines. Extra credit: cool use of coroutines (see L4 reading).

HW3 has been assigned: cool uses of coroutines. This homework is not graded. While it is optional, you are expected to know the homework material: 1) lazy list concatenation 2) regexes. Solve at least the lazy list problem before you start on PA2.

Code 4 Cal Hackathon. Friday, February 3, 9:00pm to Saturday, February 4, 5:00pm, Soda Hall Wozniak Lounge. Grand Prize: $1,500!!! Register @ http://code4cal.eventbrite.com/ More information about the event at http://stc.berkeley.edu. The Student Technology Council (STC), an advisory council for the UC Berkeley CIO, Shel Waggener, is hosting Code 4 Cal, a hackathon for students to create innovative, sustainable, and most of all, useful applications or widgets for Cal students. Student-developed app could be adopted by the University! Cash prizes start at $1,500.

Review of L4: Why Coroutines. A loop calls an iterator function to get the next item, e.g. the next token from the input. The iterator maintains state between two such calls, e.g. a pointer to the input stream. -

EECS 373 Design of Microprocessor-Based Systems

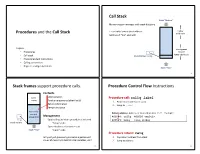

Procedures. Procedures are very important for writing reusable and maintainable code in assembly and high-level languages. How are they implemented? Topics: Application Binary Interfaces; Calling Conventions; Recursive Calls; Examples. Reference: PowerPC Embedded ABI.

General Concepts. Caller: the calling procedure. Callee: the procedure called by the caller.
    Caller: … prod = mult (a, b) …
    Callee: int mult(x, y) … return (x * y)
Caller and callee must agree on: how to pass parameters; how to return the return value(s), if any; how to maintain relevant information across calls. The PowerPC architecture does not define this “agreement”. Instead, common policies are defined by convention.

PowerPC Features. The PowerPC ISA provides the following features to support procedure/function calls: link register (p. 2-11); bl: branch and link (p. 4-41); blr: branch to link register (Table F-4).

A Very Simple Calling Convention. Passing arguments: use GPRs r3 to r10 in order; use the stack in main memory if there are more than 8 arguments. Passing return value: leave the result in r3.

Example: int func(int a, int b) { return (a + b); } main { … func(5,6); … }
Another Example: int func2(int a, int b) { return func(a , b); } main { … func2(5,6); … }

The Stack. Information for each function invocation (e.g. link register) is saved on the call stack, or simply stack. Each function invocation has its own stack frame (a.k.a. activation record). [Figure: func2 stack frame at high address, func stack frame below it, stack pointer at low address]

Using the Stack. main … Describe the stack and LR contents … right before -

Procedures and the Call Stack Call Stack Stack Frames Support

Procedures and the Call Stack. Topics: procedures; call stack; procedure/stack instructions; calling conventions; register-saving conventions.

Call Stack. Memory region managed with stack discipline. %rsp holds the lowest stack address (the address of the "top" element). The stack grows toward lower addresses. [Figure: stack “bottom” at higher addresses; stack “top” at the stack pointer, %rsp]

Stack frames support procedure calls. Contents: local variables; function arguments (after first 6); return information; temporary space. Management: space is allocated when the procedure is entered (“setup” code) and deallocated before return (“finish” code). [Figure: caller frame above the frame for the current procedure; stack pointer %rsp at the stack “top”] Why not just give every procedure a permanent chunk of memory to hold its local variables, etc?

Procedure Control Flow Instructions.
Procedure call: callq label. 1. Push return address on stack. 2. Jump to label.
Return address: address of the instruction after the call. Example:
    400544: callq 400550 <mult2>
    400549: movq %rax,(%rbx)
Procedure return: retq. 1. Pop return address from stack. 2. Jump to address.

Call Example (step 1) and Return Example (step 1), both using:
    0000000000400540 <multstore>:
      400544: callq 400550 <mult2>
      400549: mov %rax,(%rbx)
    0000000000400550 <mult2>:
      400550: mov %rdi,%rax
      400557: retq
Call Example (step 1): %rsp = 0x120, %rip = 0x400544. Return Example (step 1): %rsp = 0x118, the stack slot at 0x118 holds 0x400549, %rip = 0x400557. -
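
The two-step descriptions of `callq` and `retq` above can be traced with a toy model. This is a rough sketch, not a real emulator: the `Machine` class is hypothetical, memory is a plain dictionary, and the 5-byte instruction length is an assumption inferred from the example addresses (0x400549 minus 0x400544).

```python
class Machine:
    """Toy model of %rsp, %rip and stack memory (illustrative only)."""

    def __init__(self, rsp: int, rip: int) -> None:
        self.rsp = rsp
        self.rip = rip
        self.memory: dict[int, int] = {}

    def callq(self, target: int, instruction_length: int = 5) -> None:
        # Step 1: push the return address (the instruction after the call).
        return_address = self.rip + instruction_length
        self.rsp -= 8  # the stack grows toward lower addresses
        self.memory[self.rsp] = return_address
        # Step 2: jump to the label.
        self.rip = target

    def retq(self) -> None:
        # Step 1: pop the return address from the stack.
        return_address = self.memory[self.rsp]
        self.rsp += 8
        # Step 2: jump to that address.
        self.rip = return_address


if __name__ == "__main__":
    m = Machine(rsp=0x120, rip=0x400544)
    m.callq(0x400550)  # multstore calls mult2
    print(hex(m.rsp), hex(m.memory[m.rsp]), hex(m.rip))
    m.rip = 0x400557   # mult2 runs until it reaches retq
    m.retq()
    print(hex(m.rsp), hex(m.rip))
```

Starting from the register values in the call example, the call leaves %rsp at 0x118 with 0x400549 stored there, and the return restores %rsp to 0x120 and resumes at 0x400549.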

Eliminating the Call Stack to Save RAM

Eliminating the Call Stack to Save RAM. Xuejun Yang, University of Utah, [email protected]. Nathan Cooprider, BAE Systems AIT, [email protected]. John Regehr, University of Utah, [email protected]

Abstract. Most programming languages support a call stack in the programming model and also in the runtime system. We show that for applications targeting low-power embedded microcontrollers (MCUs), RAM usage can be significantly decreased by partially or completely eliminating the runtime callstack. We present flattening, a transformation that absorbs a function into its caller, replacing function invocations and returns with jumps. Unlike inlining, flattening does not duplicate the bodies of functions that have multiple callsites. Applied aggressively, flattening results in stack elimination. Flattening is most useful in conjunction with a lifting transformation that moves global variables into a local scope. Flattening and lifting can save RAM.

... it is likely to use some of its RAM to store one or more call stacks: chains of activation records representing the state of executing functions. From the point of view of the compiler, targeting a runtime system based on a callstack has important benefits. First, the stack is memory-efficient: variables live only as long as they are needed. Second, the stack is time-efficient, supporting allocation and deallocation in a handful of clock cycles. Third, compilers can rapidly generate high-quality code by compiling one function at a time; the small size of the typical function makes it possible to run aggressive intraprocedural optimizations while also achieving short compile times. Our thesis is that despite these well-known benefits: Partially or