Quasi-Rational Canonical Forms of a Matrix Over a Number Field

Recommended publications

Polynomial Flows in the Plane

ADVANCES IN MATHEMATICS 55, 173-208 (1985) Polynomial Flows in the Plane HYMAN BASS* Department of Mathematics, Columbia University, New York, New York 10027 AND GARY MEISTERS Department of Mathematics, University of Nebraska, Lincoln, Nebraska 68588 Contents. 1. Introduction. I. Polynomial flows are global, of bounded degree. 2. Vector fields and local flows. 3. Change of coordinates; the group GA,(K). 4. Polynomial flows; statement of the main results. 5. Continuous families of polynomials. 6. Locally polynomial flows are global, of bounded degree. II. One parameter subgroups of GA,(K). 7. Introduction. 8. Amalgamated free products. 9. GA,(K) as amalgamated free product. 10. One parameter subgroups of GA,(K). 11. One parameter subgroups of BA?(K). 12. One parameter subgroups of BA,(K). 1. Introduction. Let $f: \mathbb{R}^n \to \mathbb{R}^n$ be a $C^1$ vector field, and consider the (autonomous) system of differential equations $\dot{x} = f(x)$ (1a) with initial condition $x(0) = x_0$ (1b). The solution, $x = \varphi(t, x_0)$, depends on $t$ and $x_0$. For which $f$ as above does the flow $\varphi$ depend polynomially on the initial condition $x_0$? This question was discussed in [M2], and in [M1], Section 6. We present here a definitive solution of this problem for $n = 2$, over both $\mathbb{R}$ and $\mathbb{C}$. (See Theorems (4.1) and (4.3) below.) The main tool is the theorem of Jung [J] and van der Kulk [vdK] * This material is based upon work partially supported by the National Science Foundation under Grant NSF MCS 82-02633.

New Algorithms in the Frobenius Matrix Algebra for Polynomial Root-Finding

City University of New York (CUNY), CUNY Academic Works, Computer Science Technical Reports, 2014. TR-2014006: New Algorithms in the Frobenius Matrix Algebra for Polynomial Root-Finding. More information about this work at: https://academicworks.cuny.edu/gc_cs_tr/397 Victor Y. Pan [1,2] and Ai-Long Zheng [2]. Supported by NSF Grant CCF-1116736 and PSC CUNY Award 64512–0042. [1] Department of Mathematics and Computer Science, Lehman College of the City University of New York, Bronx, NY 10468 USA. [2] Ph.D. Programs in Mathematics and Computer Science, The Graduate Center of the City University of New York, New York, NY 10036 USA. http://comet.lehman.cuny.edu/vpan/ Abstract: In 1996 Cardinal applied fast algorithms in Frobenius matrix algebra to complex root-finding for univariate polynomials, but he resorted to some numerically unsafe techniques of symbolic manipulation with polynomials at the final stages of his algorithms. We extend his work to complete the computations by operating with matrices at the final stage as well and also to adjust them to real polynomial root-finding. Our analysis and experiments show efficiency of the resulting algorithms. 2000 Math.

On Multivariate Interpolation

Peter J. Olver†, School of Mathematics, University of Minnesota, Minneapolis, MN 55455 U.S.A. http://www.math.umn.edu/~olver Abstract. A new approach to interpolation theory for functions of several variables is proposed. We develop a multivariate divided difference calculus based on the theory of non-commutative quasi-determinants. In addition, intriguing explicit formulae that connect the classical finite difference interpolation coefficients for univariate curves with multivariate interpolation coefficients for higher dimensional submanifolds are established. † Supported in part by NSF Grant DMS 11–08894. April 6, 2016. 1. Introduction. Interpolation theory for functions of a single variable has a long and distinguished history, dating back to Newton’s fundamental interpolation formula and the classical calculus of finite differences, [7, 47, 58, 64]. Standard numerical approximations to derivatives and many numerical integration methods for differential equations are based on the finite difference calculus. However, historically, no comparable calculus was developed for functions of more than one variable. If one looks up multivariate interpolation in the classical books, one is essentially restricted to rectangular, or, slightly more generally, separable grids, over which the formulae are a simple adaptation of the univariate divided difference calculus. See [19] for historical details. Starting with G. Birkhoff, [2] (who was, coincidentally, my thesis advisor), recent years have seen a renewed level of interest in multivariate interpolation among both pure and applied researchers; see [18] for a fairly recent survey containing an extensive bibliography. De Boor and Ron, [8, 12, 13], and Sauer and Xu, [61, 10, 65], have systematically studied the polynomial case.

Stabilization, Estimation and Control of Linear Dynamical Systems with Positivity and Symmetry Constraints

A Dissertation Presented by Amirreza Oghbaee to The Department of Electrical and Computer Engineering in partial fulfillment of the requirements for the degree of Doctor of Philosophy in Electrical Engineering, Northeastern University, Boston, Massachusetts, April 2018. To my parents for their endless love and support. Contents: List of Figures; Acknowledgments; Abstract of the Dissertation; 1 Introduction; 2 Matrices with Special Structures; 2.1 Nonnegative (Positive) and Metzler Matrices; 2.1.1 Nonnegative Matrices and Eigenvalue Characterization; 2.1.2 Metzler Matrices; 2.1.3 Z-Matrices; 2.1.4 M-Matrices; 2.1.5 Totally Nonnegative (Positive) Matrices and Strictly Metzler Matrices; 2.2 Symmetric Matrices; 2.2.1 Properties of Symmetric Matrices; 2.2.2 Symmetrizer and Symmetrization; 2.2.3 Quadratic Form and Eigenvalues Characterization of Symmetric Matrices; 2.3 Nonnegative and Metzler Symmetric Matrices; 3 Positive and Symmetric Systems; 3.1 Positive Systems; 3.1.1 Externally Positive Systems; 3.1.2 Internally Positive Systems; 3.1.3 Asymptotic Stability; 3.1.4 Bounded-Input Bounded-Output (BIBO) Stability; 3.1.5 Asymptotic Stability using Lyapunov Equation; 3.1.6 Robust Stability of Perturbed Systems; 3.1.7 Stability Radius; 3.2 Symmetric Systems; 3.3 Positive Symmetric Systems; 4 Positive Stabilization of Dynamic Systems; 4.1 Metzlerian Stabilization; 4.2 Maximizing the stability radius by state feedback

Learning Fast Algorithms for Linear Transforms Using Butterfly Factorizations

Tri Dao 1, Albert Gu 1, Matthew Eichhorn 2, Atri Rudra 2, Christopher Ré 1. Abstract: Fast linear transforms are ubiquitous in machine learning, including the discrete Fourier transform, discrete cosine transform, and other structured transformations such as convolutions. All of these transforms can be represented by dense matrix-vector multiplication, yet each has a specialized and highly efficient (subquadratic) algorithm. We ask to what extent hand-crafting these algorithms and implementations is necessary, what structural priors they encode, and how much knowledge is required to automatically learn a fast algorithm for a provided structured transform. Motivated by a characterization of matrices with fast matrix-vector multiplication as factoring into products of sparse matrices, we introduce a parameterization of divide-and-conquer methods that is capable of representing a large class of transforms. [Second column of the page, beginning mid-word:] …ture generation, and kernel approximation, to image and language modeling (convolutions). To date, these transformations rely on carefully designed algorithms, such as the famous fast Fourier transform (FFT) algorithm, and on specialized implementations (e.g., FFTW and cuFFT). Moreover, each specific transform requires hand-crafted implementations for every platform (e.g., Tensorflow and PyTorch lack the fast Hadamard transform), and it can be difficult to know when they are useful. Ideally, these barriers would be addressed by automatically learning the most effective transform for a given task and dataset, along with an efficient implementation of it. Such a method should be capable of recovering a range of fast transforms with high accuracy and realistic sizes given limited prior knowledge. It is also preferably composed of differentiable primitives and basic operations common to linear algebra/machine learning libraries, that allow it to run on any platform and
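To make the divide-and-conquer idea in this abstract concrete, here is a minimal sketch, not taken from the paper: the textbook radix-2 Cooley-Tukey FFT, compared against the dense matrix-vector form of the discrete Fourier transform. The function names `dft_dense` and `fft_recursive` are illustrative assumptions, and the input length is assumed to be a power of two. Each level of the recursion combines pairs of entries, which is the kind of sparse "butterfly" stage the abstract alludes to.

```python
import cmath


def dft_dense(x):
    """Dense O(n^2) DFT: multiply x by the full n-by-n Fourier matrix."""
    n = len(x)
    return [
        sum(x[j] * cmath.exp(-2j * cmath.pi * j * k / n) for j in range(n))
        for k in range(n)
    ]


def fft_recursive(x):
    """Radix-2 Cooley-Tukey FFT; assumes len(x) is a power of two."""
    n = len(x)
    if n == 1:
        return [complex(x[0])]
    even = fft_recursive(x[0::2])  # transform of even-indexed entries
    odd = fft_recursive(x[1::2])   # transform of odd-indexed entries
    out = [0j] * n
    for k in range(n // 2):
        # One butterfly: each output pair depends on just two inputs.
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


signal = [1.0, 2.0, 3.0, 4.0, 0.0, -1.0, 2.5, 7.0]
dense = dft_dense(signal)
fast = fft_recursive(signal)
max_error = max(abs(a - b) for a, b in zip(dense, fast))
```

Both routines compute the same transform, so `max_error` should sit at floating-point rounding level; the recursive version does so in O(n log n) operations instead of O(n^2).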

Subspace-Preserving Sparsification of Matrices with Minimal Perturbation

Subspace-preserving sparsification of matrices with minimal perturbation to the near null-space. Part I: Basics. Chetan Jhurani, Tech-X Corporation, 5621 Arapahoe Ave, Boulder, Colorado 80303, U.S.A. arXiv:1304.7049v1 [math.NA] 26 Apr 2013. Abstract: This is the first of two papers to describe a matrix sparsification algorithm that takes a general real or complex matrix as input and produces a sparse output matrix of the same size. The non-zero entries in the output are chosen to minimize changes to the singular values and singular vectors corresponding to the near null-space of the input. The output matrix is constrained to preserve left and right null-spaces exactly. The sparsity pattern of the output matrix is automatically determined or can be given as input. If the input matrix belongs to a common matrix subspace, we prove that the computed sparse matrix belongs to the same subspace. This works without imposing explicit constraints pertaining to the subspace. This property holds for the subspaces of Hermitian, complex-symmetric, Hamiltonian, circulant, centrosymmetric, and persymmetric matrices, and for each of the skew counterparts. Applications of our method include computation of reusable sparse preconditioning matrices for reliable and efficient solution of high-order finite element systems. The second paper in this series [1] describes our open-source implementation, and presents further technical details. Keywords: Sparsification, Spectral equivalence, Matrix structure, Convex optimization, Moore-Penrose pseudoinverse. Preprint submitted to Computers and Mathematics with Applications, October 10, 2018. 1. Introduction. We present and analyze a matrix-valued optimization problem formulated to sparsify matrices while preserving the matrix null-spaces and certain special structural properties.

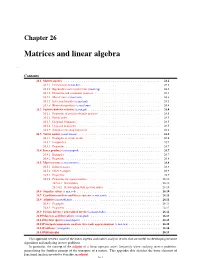

Matrices and Linear Algebra

Chapter 26 Matrices and linear algebra (ap,mat). Contents: 26.1 Matrix algebra; 26.1.1 Determinant (s,mat,det); 26.1.2 Eigenvalues and eigenvectors (s,mat,eig); 26.1.3 Hermitian and symmetric matrices; 26.1.4 Matrix trace (s,mat,trace); 26.1.5 Inversion formulas (s,mat,mil); 26.1.6 Kronecker products (s,mat,kron); 26.2 Positive-definite matrices (s,mat,pd); 26.2.1 Properties of positive-definite matrices; 26.2.2 Partial order; 26.2.3 Diagonal dominance; 26.2.4 Diagonal majorizers; 26.2.5 Simultaneous diagonalization; 26.3 Vector norms (s,mat,vnorm); 26.3.1 Examples of vector norms; 26.3.2 Inequalities; 26.3.3 Properties; 26.4 Inner products (s,mat,inprod); 26.4.1 Examples; 26.4.2 Properties; 26.5 Matrix norms (s,mat,mnorm); 26.5.1

A Logarithmic Minimization Property of the Unitary Polar Factor in The

A logarithmic minimization property of the unitary polar factor in the spectral norm and the Frobenius matrix norm. Patrizio Neff∗, Yuji Nakatsukasa†, Andreas Fischle‡. October 30, 2018. arXiv:1302.3235v4 [math.CA] 2 Jan 2014. Abstract: The unitary polar factor $Q = U_p$ in the polar decomposition of $Z = U_p H$ is the minimizer over unitary matrices $Q$ for both $\|\mathrm{Log}(Q^*Z)\|^2$ and its Hermitian part $\|\mathrm{sym}_*(\mathrm{Log}(Q^*Z))\|^2$ over both $\mathbb{R}$ and $\mathbb{C}$ for any given invertible matrix $Z \in \mathbb{C}^{n \times n}$ and any matrix logarithm Log, not necessarily the principal logarithm log. We prove this for the spectral matrix norm for any $n$ and for the Frobenius matrix norm in two and three dimensions. The result shows that the unitary polar factor is the nearest orthogonal matrix to $Z$ not only in the normwise sense, but also in a geodesic distance. The derivation is based on Bhatia’s generalization of Bernstein’s trace inequality for the matrix exponential and a new sum of squared logarithms inequality. Our result generalizes the fact for scalars that for any complex logarithm and for all $z \in \mathbb{C} \setminus \{0\}$, $\min_{\vartheta \in (-\pi,\pi]} |\mathrm{Log}_{\mathbb{C}}(e^{-i\vartheta} z)|^2 = |\log|z||^2$ and $\min_{\vartheta \in (-\pi,\pi]} |\mathrm{Re}\,\mathrm{Log}_{\mathbb{C}}(e^{-i\vartheta} z)|^2 = |\log|z||^2$. Keywords: unitary polar factor, matrix logarithm, matrix exponential, Hermitian part, minimization, unitarily invariant norm, polar decomposition, sum of squared logarithms inequality, optimality, matrix Lie-group, geodesic distance. AMS 2010 subject classification: 15A16, 15A18, 15A24, 15A44, 15A45, 26Dxx. ∗Corresponding author, Head of Lehrstuhl für Nichtlineare Analysis und Modellierung, Fakultät für Mathematik, Universität Duisburg-Essen, Campus Essen, Thea-Leymann Str.

Polynomial Parametrization for the Solutions of Diophantine Equations and Arithmetic Groups

ANNALS OF MATHEMATICS, SECOND SERIES, VOL. 171, NO. 2, March, 2010. Annals of Mathematics, 171 (2010), 979–1009. By LEONID VASERSTEIN. Abstract: A polynomial parametrization for the group of integer two-by-two matrices with determinant one is given, solving an old open problem of Skolem and Beukers. It follows that, for many Diophantine equations, the integer solutions and the primitive solutions admit polynomial parametrizations. Introduction: This paper was motivated by an open problem from [8, p. 390]: CNTA 5.15 (Frits Beukers). Prove or disprove the following statement: There exist four polynomials $A, B, C, D$ with integer coefficients (in any number of variables) such that $AD - BC = 1$ and all integer solutions of $ad - bc = 1$ can be obtained from $A, B, C, D$ by specialization of the variables to integer values. Actually, the problem goes back to Skolem [14, p. 23]. Zannier [22] showed that three variables are not sufficient to parametrize the group $SL_2\mathbb{Z}$, the set of all integer solutions to the equation $x_1x_2 - x_3x_4 = 1$. Apparently Beukers posed the question because $SL_2\mathbb{Z}$ (more precisely, a congruence subgroup of $SL_2\mathbb{Z}$) is related with the solution set $X$ of the equation $x_1^2 + x_2^2 = x_3^2 + 3$, and he (like Skolem) expected the negative answer to CNTA 5.15, as indicated by his remark [8, p. 389] on the set $X$: I have begun to believe that it is not possible to cover all solutions by a finite number of polynomials simply because I have never [Footnote:] The paper was conceived in July of 2004 while the author enjoyed the hospitality of Tata Institute for Fundamental Research, India.

The Images of Non-Commutative Polynomials Evaluated on 2 × 2 Matrices

PROCEEDINGS OF THE AMERICAN MATHEMATICAL SOCIETY, Volume 140, Number 2, February 2012, Pages 465–478, S 0002-9939(2011)10963-8. Article electronically published on June 16, 2011. THE IMAGES OF NON-COMMUTATIVE POLYNOMIALS EVALUATED ON 2 × 2 MATRICES. ALEXEY KANEL-BELOV, SERGEY MALEV, AND LOUIS ROWEN (Communicated by Birge Huisgen-Zimmermann). Abstract. Let $p$ be a multilinear polynomial in several non-commuting variables with coefficients in a quadratically closed field $K$ of any characteristic. It has been conjectured that for any $n$, the image of $p$ evaluated on the set $M_n(K)$ of $n$ by $n$ matrices is either zero, or the set of scalar matrices, or the set $\mathrm{sl}_n(K)$ of matrices of trace 0, or all of $M_n(K)$. We prove the conjecture for $n = 2$, and show that although the analogous assertion fails for completely homogeneous polynomials, one can salvage the conjecture in this case by including the set of all non-nilpotent matrices of trace zero and also permitting dense subsets of $M_n(K)$. 1. Introduction. Images of polynomials evaluated on algebras play an important role in non-commutative algebra. In particular, various important problems related to the theory of polynomial identities have been settled after the construction of central polynomials by Formanek [F1] and Razmyslov [Ra1]. The parallel topic in group theory (the images of words in groups) also has been studied extensively, particularly in recent years. Investigation of the image sets of words in pro-$p$-groups is related to the investigation of Lie polynomials and helped Zelmanov [Ze] to prove that the free pro-$p$-group cannot be embedded in the algebra of $n \times n$ matrices when $p \gg n$.

The Computation of the Inverse of a Square Polynomial Matrix ∗

Ky M. Vu, PhD. AuLac Technologies Inc. © 2008. Abstract: An approach to calculate the inverse of a square polynomial matrix is suggested. The approach consists of two similar algorithms: One calculates the determinant polynomial and the other calculates the adjoint polynomial. The algorithm to calculate the determinant polynomial gives the coefficients of the polynomial in a recursive manner from a recurrence formula. Similarly, the algorithm to calculate the adjoint polynomial also gives the coefficient matrices recursively from a similar recurrence formula. Together, they give the inverse as a ratio of the adjoint polynomial and the determinant polynomial. Keywords: Adjoint, Cayley-Hamilton theorem, determinant, Faddeev’s algorithm, inverse matrix. 1 Introduction: Modern control theory consists of a large part of matrix theory. 2 The Inverse Polynomial: The inverse of a square nonsingular matrix is a square matrix, which by premultiplying or postmultiplying with the matrix gives an identity matrix. An inverse matrix can be expressed as a ratio of the adjoint and determinant of the matrix. A singular matrix has no inverse because its determinant is zero; we cannot calculate its inverse. We, however, can always calculate its adjoint and determinant. It is, therefore, always better to calculate the inverse of a square polynomial matrix by calculating its adjoint and determinant polynomials separately and obtain the inverse as a ratio of these polynomials. In the following discussion, we will present algorithms to calculate the adjoint and determinant polynomials. 2.1 The Determinant Polynomial: To begin our discussion, we consider the following equation that is the determinant of a square matrix of dimension $n$, viz. $|\lambda I - C| = \lambda^n - p_1(C)\lambda^{n-1} + p_2(C)\lambda^{n-2} - \cdots + (-1)^n p_n(C)$;
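As an illustration of the recursive computation described in this excerpt, the following is a minimal sketch of the classical Faddeev-LeVerrier recurrence for a constant matrix $C$, which the keywords mention by name. It is not the paper's own algorithm for general polynomial matrices; the function names `matmul` and `faddeev_leverrier` are assumptions made for this example. The recurrence yields the coefficients of $|\lambda I - C|$ and, along the way, the coefficient matrices of the adjoint of $\lambda I - C$.

```python
from fractions import Fraction


def matmul(a, b):
    """Product of two square matrices stored as lists of rows."""
    n = len(a)
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
        for i in range(n)
    ]


def faddeev_leverrier(c_matrix):
    """Return (coeffs, adj_coeffs) for a square matrix C.

    coeffs[k] is the coefficient of lambda^(n-k) in det(lambda*I - C).
    adj_coeffs[k] is the matrix coefficient of lambda^(n-1-k) in
    adj(lambda*I - C).
    """
    n = len(c_matrix)
    c = [[Fraction(v) for v in row] for row in c_matrix]
    identity = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    coeffs = [Fraction(1)]
    adj_coeffs = []
    m = identity
    for k in range(1, n + 1):
        adj_coeffs.append(m)
        cm = matmul(c, m)
        # Trace recurrence: the k-th coefficient is -trace(C * M_k) / k.
        coeff = -sum(cm[i][i] for i in range(n)) / k
        coeffs.append(coeff)
        m = [
            [cm[i][j] + coeff * identity[i][j] for j in range(n)]
            for i in range(n)
        ]
    return coeffs, adj_coeffs


coeffs, adj_coeffs = faddeev_leverrier([[2, 1], [1, 3]])
```

For the sample matrix, `coeffs` corresponds to $\lambda^2 - 5\lambda + 5$, so in the excerpt's notation $p_1(C) = 5$ (the trace) and $p_2(C) = 5$ (the determinant), with `coeffs[k]` equal to $(-1)^k p_k(C)$. Exact `Fraction` arithmetic is used so the division by `k` introduces no rounding.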

Perron–Frobenius Theorem for Matrices with Some Negative Entries

Linear Algebra and its Applications 328 (2001) 57–68, www.elsevier.com/locate/laa. Perron–Frobenius theorem for matrices with some negative entries. Pablo Tarazaga a,∗,1, Marcos Raydan b,2, Ana Hurman c. a Department of Mathematics, University of Puerto Rico, P.O. Box 5000, Mayaguez Campus, Mayaguez, PR 00681-5000, USA. b Dpto. de Computación, Facultad de Ciencias, Universidad Central de Venezuela, Ap. 47002, Caracas 1041-A, Venezuela. c Facultad de Ingeniería y Facultad de Ciencias Económicas, Universidad Nacional del Cuyo, Mendoza, Argentina. Received 17 December 1998; accepted 24 August 2000. Submitted by R.A. Brualdi. Abstract: We extend the classical Perron–Frobenius theorem to matrices with some negative entries. We study the cone of matrices that has the matrix of 1’s ($ee^t$) as the central ray, and such that any matrix inside the cone possesses a Perron–Frobenius eigenpair. We also find the maximum angle of any matrix with $ee^t$ that guarantees this property. © 2001 Elsevier Science Inc. All rights reserved. Keywords: Perron–Frobenius theorem; Cones of matrices; Optimality conditions. 1. Introduction. In 1907, Perron [4] made the fundamental discovery that the dominant eigenvalue of a matrix with all positive entries is positive and also that there exists a corresponding eigenvector with all positive entries. This result was later extended to nonnegative matrices by Frobenius [2] in 1912. In that case, there exists a nonnegative dominant ∗ Corresponding author.