Image Processing - Douglas G

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

An End-To-End System for Automatic Characterization of Iba1 Immunopositive Microglia in Whole Slide Imaging

Neuroinformatics (2019) 17:373–389, https://doi.org/10.1007/s12021-018-9405-x. ORIGINAL ARTICLE. An End-to-end System for Automatic Characterization of Iba1 Immunopositive Microglia in Whole Slide Imaging. Alexander D. Kyriazis · Shahriar Noroozizadeh · Amir Refaee · Woongcheol Choi · Lap-Tak Chu · Asma Bashir · Wai Hang Cheng · Rachel Zhao · Dhananjay R. Namjoshi · Septimiu E. Salcudean · Cheryl L. Wellington · Guy Nir. Published online: 8 November 2018. © Springer Science+Business Media, LLC, part of Springer Nature 2018.
Abstract: Traumatic brain injury (TBI) is one of the leading causes of death and disability worldwide. Detailed studies of the microglial response after TBI require high throughput quantification of changes in microglial count and morphology in histological sections throughout the brain. In this paper, we present a fully automated end-to-end system that is capable of assessing microglial activation in white matter regions on whole slide images of Iba1 stained sections. Our approach involves the division of the full brain slides into smaller image patches that are subsequently automatically classified into white and grey matter sections. On the patches classified as white matter, we jointly apply functional minimization methods and deep learning classification to identify Iba1-immunopositive microglia. Detected cells are then automatically traced to preserve their complex branching structure, after which fractal analysis is applied to determine the activation states of the cells. The resulting system detects white matter regions with 84% accuracy, detects microglia with a performance level of 0.70 (F1 score, the harmonic mean of precision and sensitivity) and performs binary microglia morphology classification with a 70% accuracy.

Digital Image Processing

11/11/20. DIGITAL IMAGE PROCESSING, Lecture 4: Frequency domain. Tammy Riklin Raviv, Electrical and Computer Engineering, Ben-Gurion University of the Negev.
Fourier Domain: 2D Fourier transform and its applications
Jean Baptiste Joseph Fourier (1768-1830). A bold idea (1807): Any univariate function can be rewritten as a weighted sum of sines and cosines of different frequencies.
"...the manner in which the author arrives at these equations is not exempt of difficulties and...his analysis to integrate them still leaves something to be desired on the score of generality and even rigour."
• Don’t believe it? – Neither did Lagrange, Laplace, Poisson and other big wigs – Not translated into English until 1878!
• But it’s (mostly) true! – called Fourier Series – there are some subtle restrictions
[Portrait labels: Laplace, Legendre, Lagrange] (Slide: Hays)
A sum of sines and cosines. Our building block: A sin(wx) + B cos(wx). Add enough of them to get any signal g(x) you want! (Slide: Hays)
Slide titles that follow: Reminder: 1D Fourier Series; Fourier Series of a Square Wave; Fourier Series: Just a change of basis; Inverse FT: Just a change of basis; 1D Fourier Transform; Fourier Transform.
Example: Music • We think of music in terms of frequencies at different magnitudes (Slide: Hoiem)
Fourier Analysis of a Piano: https://www.youtube.com/watch?v=6SR81Wh2cqU
Discrete Fourier Transform Demo: http://madebyevan.com/dft/ (Evan Wallace)
2D Fourier Transform
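The idea of building a signal from sines can be tried numerically. The sketch below is not taken from the lecture: it assumes the standard odd-harmonic series for a unit square wave of period 2π, and the function name `square_wave_partial_sum` is made up for illustration.

```python
import math

def square_wave_partial_sum(x, n_terms):
    """Sum the first n_terms odd harmonics of a unit square wave (period 2*pi).

    Assumed series: (4/pi) * sum over odd n of sin(n*x)/n.
    """
    total = 0.0
    for k in range(n_terms):
        n = 2 * k + 1              # odd harmonics only: 1, 3, 5, ...
        total += math.sin(n * x) / n
    return 4.0 / math.pi * total

# More terms bring the sum closer to the square wave's plateau value.
for terms in (1, 5, 50, 500):
    print(terms, square_wave_partial_sum(math.pi / 2, terms))
```

Each term is one "building block" of the form A sin(wx) with B = 0; the cosine terms vanish because this square wave is an odd function.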

9. Biomedical Imaging Informatics Daniel L

9. Biomedical Imaging Informatics. Daniel L. Rubin, Hayit Greenspan, and James F. Brinkley.
After reading this chapter, you should know the answers to these questions:
1. What makes images a challenging type of data to be processed by computers, as opposed to non-image clinical data?
2. Why are there many different imaging modalities, and by what major two characteristics do they differ?
3. How are visual and knowledge content in images represented computationally? How are these techniques similar to representation of non-image biomedical data?
4. What sort of applications can be developed to make use of the semantic image content made accessible using the Annotation and Image Markup model?
5. Describe four different types of image processing methods. Why are such methods assembled into a pipeline when creating imaging applications?
6. Give an example of an imaging modality with high spatial resolution. Give an example of a modality that provides functional information. Why are most imaging modalities not capable of providing both?
7. What is the goal in performing segmentation in image analysis? Why is there more than one segmentation method?
8. What are two types of quantitative information in images? What are two types of semantic information in images? How might this information be used in medical applications?
9. What is the difference between image registration and image fusion? Give an example of each.
9.1. Introduction
Imaging plays a central role in the healthcare process. Imaging is crucial not only to health care, but also to medical communication and education, as well as in research.

Recognition of Electrical & Electronics Components

NATIONAL INSTITUTE OF TECHNOLOGY, ROURKELA. Submission of project report for the evaluation of the final year project titled Recognition of Electrical & Electronics Components. Under the guidance of: Prof. S. Meher, Dept. of Electronics & Communication Engineering, NIT, Rourkela. Submitted by: Amiteshwar Kumar Abhijeet (10307022), B.Tech IV, and Pradeep Kumar Chachan (10307030), B.Tech IV, Dept. of Electronics & Communication Engg., NIT, Rourkela.
CERTIFICATE: This is to certify that the thesis entitled “RECOGNITION OF ELECTRICAL & ELECTRONICS COMPONENTS” submitted by Sri AMITESHWAR KUMAR ABHIJEET and Sri PRADEEP KUMAR CHACHAN in partial fulfillment of the requirements for the award of Bachelor of Technology Degree in Electronics & Instrumentation Engg. at National Institute of Technology, Rourkela (Deemed University) is an authentic work carried out by them under my supervision and guidance. To the best of my knowledge, the matter embodied in the thesis has not been submitted to any other University / Institute for the award of any Degree or Diploma. Date: Dr. S. Meher, Dept. of Electronics & Communication Engg., NIT, Rourkela.
ACKNOWLEDGEMENT: I wish to express my deep sense of gratitude and indebtedness to Dr. S. Meher, Department of Electronics & Communication Engg.

Sampling Theory for Digital Video Acquisition: the Guide for the Perplexed User

Sampling Theory for Digital Video Acquisition: The Guide for the Perplexed User. Prof. Lenny Rudin (1,2,3), Ping Yu (1), Prof. Jean-Michel Morel (2). (1) Cognitech, Inc, Pasadena, California; (2) Ecole Normale Superieure de Cachan, France; (3) SMPTE Member (Hollywood Technical Section).
Abstract: Recently, the law enforcement community with professional interests in applications of image/video processing technology has been exposed to scientifically flawed assertions regarding the advantages and disadvantages of various hardware image acquisition devices (video digitizing cards). These assertions state a necessity of using the SMPTE CCIR-601 standard when digitizing NTSC composite video signals from surveillance videotapes. In particular, it would imply that the pixel-sampling rate of 720*486 is absolutely required to capture all the available video information encoded in the composite video signal and recorded on (S)VHS videotapes. Fortunately, these statements can be directly analyzed within the strict mathematical context of Shannon's Sampling Theory, and shown to be wrong. Here we apply the classical Shannon-Nyquist results to the process of digitizing composite analog video from videotapes to dispel the theoretically unfounded, wrong assertions.
Introduction: The field of image processing is a mature interdisciplinary science that spans the fields of electrical engineering, applied mathematics, and computer science. Over the last sixty years, this field accumulated fundamental results from the above scientific disciplines. In particular, the field of signal processing has contributed to the basic understanding of the exact mathematical relationship between the world of continuous (analog) images and the digitized versions of these images, when collected by computer video acquisition systems. This theory is known as Sampling Theory, and is credited to Prof.
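The Shannon-Nyquist result invoked above can be seen in a small numeric experiment. This is a generic sketch, not code or figures from the paper: the helper name `alias_frequency` and the frequencies 7 Hz, 3 Hz and 10 Hz are illustrative assumptions.

```python
import math

def alias_frequency(f, fs):
    """Apparent frequency (Hz) of a tone at f Hz after sampling at fs Hz."""
    return abs(f - fs * round(f / fs))

def sample_cosine(f, fs, n_samples):
    """Samples of cos(2*pi*f*t) taken at t = n / fs."""
    return [math.cos(2.0 * math.pi * f * n / fs) for n in range(n_samples)]

fs = 10.0                              # sampling rate, so the Nyquist limit is 5 Hz
above = sample_cosine(7.0, fs, 20)     # 7 Hz exceeds the Nyquist limit
below = sample_cosine(3.0, fs, 20)     # 3 Hz is within it

# The two sample sequences agree to rounding error: once sampled,
# the 7 Hz tone cannot be told apart from a 3 Hz tone.
max_difference = max(abs(a - b) for a, b in zip(above, below))
print(alias_frequency(7.0, fs), max_difference)
```

The same reasoning runs in the other direction: once the sampling rate exceeds twice the highest frequency actually present in the signal, sampling faster captures no additional information.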

An Analysis of Aliasing and Image Restoration Performance for Digital Imaging Systems

AN ANALYSIS OF ALIASING AND IMAGE RESTORATION PERFORMANCE FOR DIGITAL IMAGING SYSTEMS. Thesis submitted to The School of Engineering of the UNIVERSITY OF DAYTON in partial fulfillment of the requirements for the degree of Master of Science in Electrical Engineering. By Iman Namroud. UNIVERSITY OF DAYTON, Dayton, Ohio, May 2014.
APPROVED BY: Russell C. Hardie, Ph.D., Advisor Committee Chairman, Professor, Department of Electrical and Computer Engineering; John S. Loomis, Ph.D., Committee Member, Professor, Department of Electrical and Computer Engineering; Eric J. Balster, Ph.D., Committee Member, Assistant Professor, Department of Electrical and Computer Engineering; John G. Weber, Ph.D., Associate Dean, School of Engineering; Tony E. Saliba, Ph.D., Dean, School of Engineering & Wilke Distinguished Professor.
© Copyright by Iman Namroud. All rights reserved. 2014.
ABSTRACT (Name: Namroud, Iman. University of Dayton. Advisor: Dr. Russell C. Hardie): It is desirable to obtain a high image quality when designing an imaging system. The design depends on many factors such as the optics, the pitch, and the cost. The effort to enhance one aspect of the image may reduce the chances of enhancing another one, due to some tradeoffs. There is no imaging system capable of producing an ideal image, since the system itself introduces distortion in the image. When designing an imaging system, some tradeoffs favor aliasing, such as the desire for a wide field of view (FOV) and a high signal to noise ratio (SNR).

Digital Image Processing Lectures 5 & 6

Digital Image Processing Lectures 5 & 6. Mahmood (Mo) Azimi, Professor, Colorado State University, Fort Collins, Co 80523, email : [email protected]
1. Parseval’s Theorem and Inner Product Preservation
Another important property of the FT is that the inner product of two functions is equal to the inner product of their FTs:
$$\iint_{-\infty}^{\infty} x(u,v)\,y^{*}(u,v)\,du\,dv = \frac{1}{4\pi^{2}} \iint_{-\infty}^{\infty} X(\omega_1,\omega_2)\,Y^{*}(\omega_1,\omega_2)\,d\omega_1\,d\omega_2$$
where $*$ stands for the complex conjugate operation. When $x = y$ we obtain the well-known Parseval energy conservation formula,
$$\iint_{-\infty}^{\infty} |x(u,v)|^{2}\,du\,dv = \frac{1}{4\pi^{2}} \iint_{-\infty}^{\infty} |X(\omega_1,\omega_2)|^{2}\,d\omega_1\,d\omega_2$$
i.e. the total energy in the function is the same as in its FT.
2. Frequency Response and Eigenfunctions of 2-D LSI Systems
An eigenfunction of a system is defined as an input function that is reproduced at the output with a possible change in the amplitude. For an LSI system, eigenfunctions are given by
$$f(u,v) = \exp(j\omega_1 u + j\omega_2 v)$$
Using the 2-D convolution integral
$$g(u,v) = \iint_{-\infty}^{\infty} h(u-u', v-v')\,\exp[j\omega_1 u' + j\omega_2 v']\,du'\,dv'$$
and the change of variables $\tilde{u} = u - u'$, $\tilde{v} = v - v'$, we obtain
$$g(u,v) = H(\omega_1,\omega_2)\,\exp[j\omega_1 u + j\omega_2 v]$$
where
$$H(\omega_1,\omega_2) = \iint_{-\infty}^{\infty} h(\tilde{u},\tilde{v})\,\exp[-j\omega_1 \tilde{u} - j\omega_2 \tilde{v}]\,d\tilde{u}\,d\tilde{v} = \mathcal{F}\{h(u,v)\}$$
is the frequency response of the 2-D system.

Chapter 7 Image Compression

Chapter 7 Image Compression (Digital Image Processing, Prof. Zhengkai Liu, Dr. Rong Zhang)
• Preview • 7.1 Introduction • 7.2 Fundamental concepts and theories • 7.3 Basic coding methods • 7.4 Predictive coding • 7.5 Transform coding • 7.6 Introduction to international standards
7.1 Introduction
7.1.1 Why code data?
• To reduce storage volume
• To reduce transmission time
– One colour image: 760 by 580 pixels, 3 channels, each 8 bits, 1.3 Mbyte
– Video data: same resolution, 25 frames per second, 33 Mbyte/second
7.1.2 Classification
• Lossless compression: reversible
• Lossy compression: irreversible
7.1.3 Compression methods
• Entropy coding: Huffman coding, arithmetic coding
• Run length coding: G3, G4
• LZW coding: arj (DOS), zip (Windows), compress (UNIX)
• Predictive coding: linear prediction, non-linear prediction
• Transform coding: DCT, KLT, Walsh-T, WT (wavelet)
• Others: vector quantization (VQ), fractal coding, the second generation coding
7.2 Fundamental concepts and theories
7.2.1 Data redundancy
If $n_1$ denotes the original data number and $n_2$ denotes the compressed data number, then the data redundancy can be defined as $R_D = 1 - \frac{1}{C_R}$, where $C_R$, commonly called the compression ratio, is $C_R = \frac{n_1}{n_2}$.
For example: $n_2 = n_1$ gives $R_D = 0$; $n_2 \ll n_1$ gives $C_R \to \infty$, $R_D \to 1$.
• Other expression of compression ratio: $C_R = \frac{n_1}{n_2}$, or $L = \frac{n_2}{M \times N}$ bits/pixel and $C_R = \frac{8\ \text{bits/pixel}}{L}$
• Three basic image redundancy: coding redundancy, interpixel redundancy, psychovisual redundancy
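The storage figures and the redundancy definitions above can be checked with a few lines of arithmetic. This is only an illustrative sketch: the function names are made up, and the sample sizes passed to them (other than the 760 by 580, 3-channel, 25 frames per second figures quoted in the slides) are arbitrary.

```python
def compression_ratio(n1, n2):
    """C_R = n1 / n2, with n1 the original and n2 the compressed data size."""
    return n1 / n2

def relative_redundancy(n1, n2):
    """R_D = 1 - 1 / C_R."""
    return 1.0 - 1.0 / compression_ratio(n1, n2)

def bits_per_pixel(n2_bits, rows, cols):
    """L = n2 / (M x N): average compressed bits spent on each pixel."""
    return n2_bits / (rows * cols)

# Uncompressed sizes from the slides: 3 channels of 8 bits is 3 bytes per pixel.
image_bytes = 760 * 580 * 3
video_bytes_per_second = image_bytes * 25
print(image_bytes, video_bytes_per_second)

# An 8-bit M x N image compressed to an average of 2 bits per pixel.
rows, cols = 580, 760
n1_bits = 8 * rows * cols
n2_bits = 2 * rows * cols
print(compression_ratio(n1_bits, n2_bits), 8 / bits_per_pixel(n2_bits, rows, cols))
```

The last line prints the same ratio twice, once as n1/n2 and once as 8/L, which is why the two expressions for the compression ratio are interchangeable for an 8-bit image.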

A Survey of Medical Image Processing Tools

A Survey of Medical Image Processing Tools. Conference paper, August 2015. DOI: 10.13140/RG.2.1.3364.4241. Publication page: http://www.researchgate.net/publication/282610419. Lay-Khoon Lee and Siau-Chuin Liew, Faculty of Computer Systems and Software Engineering, Universiti Malaysia Pahang, 26300 Gambang, Pahang, Malaysia.
Abstract: A precise analysis of medical images is an important stage in the contouring phase throughout radiotherapy preparation. Medical images are mostly used as radiographic techniques in diagnosis, clinical studies and treatment planning. Medical image processing tools are similarly important. With a medical image processing tool, it is possible to speed up and enhance the operation of the analysis of the medical image. This paper describes medical image processing software tools which attempt to secure the same kind of programmability advantage for exploring applications of the pipelined processors. These tools simulate complete systems consisting of several of the proposed processing components, in a configuration described by a graphical schematic diagram. In this paper, fifteen different medical image processing tools will be compared in several aspects. The main objective of the comparison is to gather and analyse information on the tools in order to recommend to users of different operating systems what type of medical image tools to use when analysing different types of imaging.
[...] registration, segmentation, visualization, reconstruction, simulation and diffusion [...]

Application of Augmented Reality GIS in Architecture

APPLICATION OF AUGMENTED REALITY GIS IN ARCHITECTURE. Yan Guo, Qingyun Du, Yi Luo, Weiwei Zhang, Lu Xu. Resources and Environment Science School, 129 Luo Yu Road, Wuhan University, Wuhan, China, [email protected]. Commission V, WG V/2.
KEY WORDS: Augmented Reality, 3D GIS, Architecture, Urban planning, Digital photogrammetry
ABSTRACT: The paper discusses the function and meaning of ARGIS in architecture through an instance of an aerodrome construction project. In the initial stages of the construction, it applies advanced technology including 3S (RS, GIS and GPS) techniques, augmented reality, virtual reality and digital image processing techniques, and so on. A virtual image or other information created by the computer is superimposed on the surveying district that the observer is standing in and looking at. When the observer moves through the district, the virtual information changes correspondingly, just as if the virtual information really existed in the true environment. The observer can see the scene of the aerodrome if he puts on a clairvoyant HMD. If we have structural information of the construction in a database, AR can supply an X-ray view of the building, such as the pipelines, wires and framework in the walls.
1. INTRODUCTION
1.1 Definition
Augmented reality (AR) is an important branch of virtual reality, and it’s a hot spot of study in recent years. [...] Real environment (RE) and virtual environment (VE) are at two sides, mixed reality (MR) is in the middle, AR is near to the real environment side. Data created by the computer can augment real environment and enhance user’s comprehension about environment. Augmented Virtuality (AV) is a term

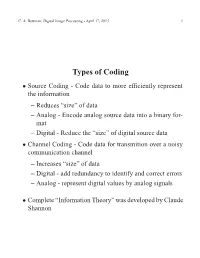

Source Coding

C. A. Bouman: Digital Image Processing - April 17, 2013
Types of Coding
• Source Coding - Code data to more efficiently represent the information
– Reduces “size” of data
– Analog - Encode analog source data into a binary format
– Digital - Reduce the “size” of digital source data
• Channel Coding - Code data for transmission over a noisy communication channel
– Increases “size” of data
– Digital - add redundancy to identify and correct errors
– Analog - represent digital values by analog signals
• Complete “Information Theory” was developed by Claude Shannon
Digital Image Coding
• Images from a 6 MPixel digital camera are 18 MBytes each
• Input and output images are digital
• Output image must be smaller (i.e. ≈ 500 kBytes)
• This is a digital source coding problem
Two Types of Source (Image) Coding
• Lossless coding (entropy coding)
– Data can be decoded to form exactly the same bits
– Used in “zip”
– Can only achieve moderate compression (e.g. 2:1 - 3:1) for natural images
– Can be important in certain applications such as medical imaging
• Lossy source coding
– Decompressed image is visually similar, but has been changed
– Used in “JPEG” and “MPEG”
– Can achieve much greater compression (e.g. 20:1 - 40:1) for natural images
– Uses entropy coding
Entropy
• Let $X$ be a random variable taking values in the set $\{0, \cdots, M-1\}$ such that $p_i = P\{X = i\}$
• Then we define the entropy of $X$ as $H(X) = -\sum_{i=0}^{M-1} p_i \log_2 p_i = -E[\log_2 p_X]$; $H(X)$ has units of bits
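The entropy definition above translates directly into code. The following is a minimal sketch rather than anything from the slides: the function name `entropy_bits` is hypothetical, and skipping zero-probability symbols relies on the usual convention that 0 · log 0 is taken as 0.

```python
import math

def entropy_bits(probabilities):
    """H(X) = -sum_i p_i * log2(p_i), in bits.

    Symbols with p_i == 0 are skipped (convention: 0 * log 0 = 0).
    """
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return -sum(p * math.log2(p) for p in probabilities if p > 0.0)

print(entropy_bits([0.5, 0.5]))          # fair coin
print(entropy_bits([0.25] * 4))          # four equally likely symbols
print(entropy_bits([0.9, 0.1]))          # skewed source
print(entropy_bits([1.0, 0.0]))          # deterministic source
```

A skewed source has lower entropy than a uniform one over the same alphabet, which is the room that lossless (entropy) coding exploits.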

From Virtual to Augmented Reality in Financial Trading: a CYBERII

From Virtual to Augmented Reality in Financial Trading: A CYBERII Application. Soha Maad, Samir Garbaya, and Saida Bouakaz.
[...] Reality (AR) in electronic financial trading. This added value is gained from augmented realism and less constrained interaction. The paper discusses the challenges and rewards of emerging digital media technologies in meeting the needs of electronic commerce applications, in particular electronic financial trading, in terms of the realism of rendering, portability, and widespread usability. It motivates further ergonomic studies involving the evaluation of utility and usability of augmented reality technologies, including the CYBERII technology, in the field of electronic commerce.
INTRODUCTION: With the growing importance of digital media technology, there is a rising need for developing new applications that harness the use of this technology while meeting various industries' needs. With this motivating aim in mind, this paper considers an application that targets the financial industry and implements novel media technologies developed
The authors: Soha Maad is a Post-doc at INRIA (Institut National de Recherche en Informatique et en Automatique) Rhône-Alpes doing research at LIRIS Lab (Laboratoire d'InfoRmatique en Images et Systèmes d'information) in Université Claude Bernard Lyon 1 in France. Samir Garbaya is a researcher at the Robotics Laboratory of Versailles, France. Saida Bouakaz is a Professor in Computer Sciences at Université Claude Bernard Lyon 1 in France.
Keywords: Augmented Reality (AR), Virtual Reality