Percona XtraDB Cluster Documentation Release 5.7.25-31.35

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


MySQL Replication Tutorial

MySQL Replication Tutorial. Lars Thalmann, Technical Lead, Replication, Backup, and Engine Technology; Mats Kindahl, Lead Developer, Replication Technology. MySQL Conference and Expo 2008. Concepts. MySQL Replication: Why? 1. High availability: possibility of fail-over. 2. Load-balancing/scale-out: query multiple servers. 3. Off-site processing: don’t disturb the master. How? Snapshots (backup): 1. Client program mysqldump, with log coordinates. 2. Using backup: InnoDB, NDB. Binary log: 1. Replication: asynchronous pushing to slave. 2. Point-in-time recovery: roll-forward. Terminology. Master MySQL Server: • Changes data • Has binlog turned on • Pushes binlog events to slave after slave has requested them. Slave MySQL Server: • Main control point of replication • Asks master for replication log • Gets binlog event from master. Binary log: • Log of everything executed • Divided into transactional components • Used for replication and point-in-time recovery. Terminology. Synchronous replication: • A transaction is not committed until the data has been replicated (and applied) • Safer, but slower • This is available in MySQL Cluster. Asynchronous replication: • A transaction is replicated after it has been committed • Faster, but you can in some cases lose transactions if master fails • Easy to set up between MySQL servers. Configuring Replication. Required configuration – my.cnf: Replication Master: log-bin, server_id. Replication Slave: server_id. Optional items in my.cnf – What -

Beyond Relational Databases

EXPERT ANALYSIS BY MARCOS ALBE, SUPPORT ENGINEER, PERCONA Beyond Relational Databases: A Focus on Redis, MongoDB, and ClickHouse Many of us use and love relational databases… until we try and use them for purposes which aren’t their strong point. Queues, caches, catalogs, unstructured data, counters, and many other use cases, can be solved with relational databases, but are better served by alternative options. In this expert analysis, we examine the goals, pros and cons, and the good and bad use cases of the most popular alternatives on the market, and look into some modern open source implementations. Beyond Relational Databases Developers frequently choose the backend store for the applications they produce. Amidst dozens of options, buzzwords, industry preferences, and vendor offers, it’s not always easy to make the right choice… Even with a map! [Figure: map graphic; labels not recoverable] -

Two Node MySQL Cluster

Two Node MySQL Cluster 1.0 EXECUTIVE SUMMARY This white paper describes the challenges involved in deploying the 2 node Highly Available MySQL Cluster with a proposed solution. For the sake of users reading this document it also describes in brief the main components of the MySQL Cluster which are necessary to understand the paper overall. The solution relies on the Linux HA framework (Heartbeat/Pacemaker) so the white paper can be best understood with the knowledge of Linux HA framework. CONTENTS 1.0 EXECUTIVE SUMMARY 2.0 BUSINESS CHALLENGES 3.0 MYSQL CLUSTER 3.1 CLIENTS/APIS 3.2 SQL NODE 3.3 DATA NODE 3.4 NDB MANAGEMENT NODE 3.5 CHALLENGES 3.6 SOLUTION 4.0 REFERENCES 2.0 BUSINESS CHALLENGES The MySQL cluster demands at least 4 nodes to be present for deploying a Highly Available MySQL database cluster. The typical configuration of any enterprise application is a 2 Node solution (Active-Standby mode or Active-Active Mode). The challenge lies in fitting the MySQL Cluster Nodes in the 2 Nodes offering the application services and to make it work in that configuration with no single point of failure. 3.0 MYSQL CLUSTER The intent of this section is to briefly mention the important actors and their roles in the overall MySQL Cluster. For more information the reader can refer to the MySQL reference documents from its official site (http://dev.mysql.com/doc/index.html). MySQL Cluster is a technology that enables clustering of in-memory databases in a “shared-nothing system”. -

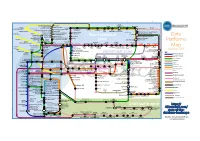

Data Platforms Map from 451 Research

1 2 3 4 5 6 Azure AgilData Cloudera Distribution HDInsight Metascale of Apache Kafka MapR Streams MapR Hortonworks Towards Teradata Listener Doopex Apache Spark Stratio enterprise search Apache Solr Google Cloud Confluent/Apache Kafka Altiscale Qubole AWS IBM Azure DataTorrent/Apache Apex PipelineDB Dataproc BigInsights Apache Lucene Apache Samza EMR Data Lake IBM Analytics for Apache Spark Oracle Stream Explorer Teradata Cloud Databricks A Towards SRCH2 Software AG for Hadoop Oracle Big Data Cloud A E-discovery TIBCO StreamBase Cloudera Elasticsearch SQLStream Data Elastic Found Apache S4 Apache Storm Rackspace Non-relational Oracle Big Data Appliance ObjectRocket for IBM InfoSphere Streams xPlenty Apache Hadoop HP IDOL Elasticsearch Google Azure Stream Analytics Data Artisans Apache Flink Azure Cloud EsgnDB/ zone Platforms Oracle Dataflow Endeca Server Search AWS Apache Apache IBM Actian Treasure Attivio Kinesis LeanXcale Trafodion Splice Machine MammothDB Drill Presto Big SQL Vortex Data SciDB HPCC AsterixDB IBM InfoSphere Towards LucidWorks Starcounter SQLite Apache Teradata Map Data Explorer Firebird Apache Apache JethroData Pivotal HD/ Apache Cazena CitusDB SIEM Big Data Tajo Hive Impala Apache HAWQ Kudu Aster Loggly Actian Ingres Sumo Cloudera SAP Sybase ASE IBM PureData January 2016 Logic Search for Analytics/dashDB Logentries SAP Sybase SQL Anywhere Key: B TIBCO Splunk Maana Relational zone B LogLogic EnterpriseDB SQream General purpose Postgres-XL Microsoft Ryft X15 Software Oracle IBM SAP SQL Server Oracle Teradata Specialist analytic PostgreSQL Exadata -

Percona XtraBackup Provides

Percona XtraBackup provides: • Fast and reliable backups • Uninterrupted transaction processing during backups • Savings on disk space and network bandwidth with better compression • Automatic backup verification • Higher uptime due to faster restore time. Bringing immediate, noticeable and long lasting benefits to meet your budget and needs. Percona XtraBackup: You’re only as good as the tools you have to use. When it comes to your business, the software tools you employ can be the difference between success and failure. Percona’s suite of MySQL and MongoDB software and toolkits are a powerhouse of performance, the backbone of the organization. As a product of the open source community, our software has been tested by fire and proven resilient. Percona XtraBackup is a free, open source, complete online backup solution for all versions of Percona Server for MySQL, MySQL® and MariaDB®. With over 1,800,000 downloads, Percona XtraBackup performs online non-blocking, tightly compressed, highly secure backups on transactional systems so that applications remain fully available during planned maintenance windows. Percona XtraBackup is the world’s only open-source, free MySQL hot backup software that performs non-blocking backups for InnoDB and XtraDB databases. With Percona XtraBackup, you can achieve the following benefits: • Create hot InnoDB backups without pausing your database • Make incremental backups of MySQL • Stream compressed MySQL backups to another server • Move tables between MySQL servers on-line • Create new MySQL replication slaves easily • Backup MySQL without adding load to the server Percona XtraBackup makes MySQL hot backups for all versions of Percona Server for MySQL, MySQL, and MariaDB. It performs streaming, compressed, and incremental MySQL backups. -

MySQL Cluster: When Do I Need It?

MySQL Cluster: When Do I Need It? Mario Beck Principal Sales Consultant [email protected] The presentation is intended to outline our general product direction. It is intended for information purposes only, and may not be incorporated into any contract. It is not a commitment to deliver any material, code, or functionality, and should not be relied upon in making purchasing decisions. The development, release, and timing of any features or functionality described for Oracle’s products remains at the sole discretion of Oracle. 2.1BN USERS 8X DATA GROWTH IN 5 YRS 750M USERS 70+ NEW DOMAINS EVERY 60 SECONDS 20M APPS PER DAY 40% DATA GROWTH PER YEAR 600 NEW VIDEOS EVERY 60 SECONDS $1TR BY 2014 100K TWEETS PER MINUTE $700BN IN 2011 5.3BN MOBILE SUBS IN 2010 (78% PENETRATION) 13K iPHONE APPS DOWNLOADED PER MINUTE 370K CALL MINUTES EVERY 60 SECONDS Driving new Database Requirements: EXTREME WRITE SCALABILITY, REAL TIME USER EXPERIENCE, ROCK SOLID RELIABILITY, ELIMINATE BARRIERS TO ENTRY. No Trade-Offs: Transactional Integrity, Complex Queries, Standards & Skillsets, together with EXTREME WRITE SCALABILITY, REAL TIME USER EXPERIENCE, ROCK SOLID RELIABILITY, ELIMINATE BARRIERS TO ENTRY. No Trade-Offs: Cellular Network HLR / HSS Location Updates AuC, Call Routing, Billing Pre & Post Paid • Massive volumes of write traffic • <3ms database response • Downtime & lost transactions = lost $ Billing, AuC, VLR MySQL Cluster in Action: http://bit.ly/oRI5tF No Trade-Offs: eCommerce • Integrated Service Provider platform • eCommerce • Payment processing • Fulfillment • Supports 1k+ -

MySQL Cluster – Evaluation and Tests

MySQL Cluster – Evaluation and Tests, October 2, 2012. Michael Raith (B.Sc.), Master-Student. Abstract: Websites or web applications, whether they represent shopping systems, on-demand services or social networks, have something in common: data must be stored somewhere and somehow. This job can be achieved by various solutions with very different performance characteristics, e.g. based on simple data files, databases or high performance RAM storage solutions. For today’s popular web applications it is important to handle database operations in a minimum amount of time, because they are struggling with a vast increase in visitors and user generated data. Therefore, a major requirement for modern database applications is to handle huge data (also called “big data”) in a short amount of time and to provide high availability for that data. A very popular database application in the open source community is MySQL, which was originally developed by a Swedish company called MySQL AB and is now maintained by Oracle. MySQL is shipped in a bundle with the Apache web server and therefore has a large distribution. This database is easily installed, maintained and administrated. By default MySQL is shipped with the MyISAM storage engine, which has good performance on read requests, but a poor one on massive parallel write requests. With appropriate tuning of various database settings, special architecture setups (replication, partitioning, etc.) or other storage engines, MySQL can be turned into a fast database application. For example Wikipedia uses MySQL for their backend data storage. In the lectures “Ultra Large Scale Systems” and “System Engineering” taught by Walter Kriha at Media University Stuttgart, the question “Can a MySQL database application handle more than 3000 database requests per second?” came up some time. -

A Closer Look at MySQL Cluster: An Architectural Overview - MariaDB White Paper

w: www.mariadb.com e: [email protected] MySQL Cluster Internal Architecture Max Mether MariaDB Keywords: MySQL Cluster, MySQL, High Availability, HA, Clustering, Architecture 1 Introduction MySQL Cluster is tightly linked to the MySQL server, yet it is a separate product. Due to its distributed nature, MySQL Cluster has a far more complicated architecture than a standard MySQL database. This presentation and white paper will describe the internal architecture of the MySQL Cluster product and the different mechanisms and processes that exist to ensure the availability of the cluster. We will also take a close look at the data and message flow that takes place between the nodes in the cluster during different types of operations. 2 MySQL Cluster Features MySQL Cluster can be distinguished from many other clustering products in that it offers five nines availability with a shared-nothing architecture. Most clustering products use a shared-disk architecture. Using a shared-nothing architecture allows the cluster to run on commodity hardware, thus greatly reducing costs compared to shared-disk architecture. Having a shared-nothing architecture does, however, come with a different cost, namely, a more complex way of ensuring high availability and synchronization of the nodes, which is mainly seen as increased network traffic. MySQL Cluster also provides ACID transactions with row level locking using the READ-COMMITTED isolation level. MySQL Cluster is, in general, an in-memory database; however, non-indexed data can be stored on disk and the disks are also used for checkpointing to ensure durability through system shutdown. MySQL Cluster provides unique hash indexes as well as ordered T-tree indexes. -

In MySQL/MariaDB?

The OWASP Foundation http://www.owasp.org OWASP EU Tour Bucharest 2013. Do you “GRANT ALL PRIVILEGES” ... in MySQL/MariaDB? Gabriel PREDA, DevOps Engineer [email protected] @eRadical Copyright © The OWASP Foundation. Permission is granted to copy, distribute and/or modify this document under the terms of the OWASP License. DevOps = new BORG DevOps Engineer ??? ● Development – Web Applications (“Certified MySQL Associate”, “Zend Certified Engineer”) – Real Time Analytics ● Operations – MySQL DBA (15+ instances) – Sysadmin (<25 virtual & physical servers) My MySQL MariaDB(s) ● Over 15 MariaDB / TokuDB instances ● Statistics in MariaDB – < 1TB from Oct 2012 – < 12G raw data daily – < 12,000,000 events processed daily – < 90,000,000 rows added daily BigData? NO!!! ● I can copy all of that to my laptop ● “Working data set” - less than 1G & less than 7,500,000 rows MySQL History ● 1983 – first version of MySQL created by Monty Widenius ● 1994 – MySQL is released OpenSource ● 2004 Oct – MySQL 4.1 GA ● 2005 Oct – InnoDB (Innobase) is bought by Oracle – Black Friday ● 2008 Jan – MySQL AB is bought by Sun (1bn $) ● 2008 Nov – MySQL 5.1 GA ● 2009 Apr – Sun is bought by Oracle (7,4 bn $) ● 2010 Dec – MySQL 5.5 GA ● 2012 Apr – MariaDB 5.5 GA ● 2013 Feb – MySQL 5.6 – first version made by Oracle ● 2013 Feb – MySQL will be replaced by MariaDB in Fedora & OpenSuSE * Max Mether – SkySQL “MySQL and MariaDB: Past, Present and Future” Where are we NOW()? Drizzle; MySQL (Oracle); TokuDB (Tokutek); Percona Server (Percona); MariaDB (Monty Program, MariaDB Foundation); Brighthouse (Infobright); InfiniDB (Calpont). Replication: ● Asynchronous ● Semi-synchronous ● Galera Synchronous (Codership) ● Tungsten Replication (Continuent) Elementary.. -

MariaDB: Viable MySQL Replacement (SCALE12x)

MariaDB: Viable MySQL replacement Colin Charles, Team MariaDB, SkySQL Ab [email protected] | [email protected] http://mariadb.org/ http://bytebot.net/blog/ | @bytebot on Twitter SCALE12x, Los Angeles, California, USA 22 February 2014 Sunday, 23 February 14 whoami • Work on MariaDB at SkySQL Ab • Merged with Monty Program Ab, makers of MariaDB • Formerly MySQL AB (exit: Sun Microsystems) • Past lives include Fedora Project (FESCO), OpenOffice.org • MHA experience • since November 2011 (MHA 0.52, 0.53) • NRE work to make it run in a Solaris 10 environment... with no Internet access! • Continued deployment advice + work for data centre use • Much thanks to SkySQL for the experience MySQL? Percona Server? MariaDB? Agenda • 4 years: major server releases (5.1, 5.2, 5.3, 5.5, 5.5+TokuDB, Galera Cluster) and 10.0 series • Delving into history of previous releases • MariaDB 10.0 • Client libraries, Galera Cluster • Roadmap What isn’t covered • MariaDB Enterprise • Galera Cluster + GUI + API • mariadb.com • SkySQL • trademarks... What is MariaDB? • Community developed branch of MySQL • Feature enhanced • Fully compatible & feature complete with MySQL Backed by MariaDB Foundation • Driver of the MariaDB project • Foundation not controlled by single entity/person; has a Board • Ensure MariaDB is compatible with MySQL, maintain mariadb.org, keep community voice • Major sponsors: SkySQL, Parallels, Booking.com, Automattic, OpenQuery, Percona, -

Comparison of Relational (SQL) and Non-Relational (NoSQL) Databases with Examples in MySQL and MongoDB

Comparison of relational (SQL) and non-relational (NoSQL) databases with examples in MySQL and MongoDB. 3 May 2019, Zagreb, Nenad Crnko. Contents: Introduction. Basic characteristics of relational (SQL) and non-relational (NoSQL) databases and an overview of their differences, using the MySQL and MongoDB databases as examples. Performing basic operations such as reading, inserting, updating, and deleting records in a database. More advanced operations such as optimization of tables, indexes, queries, and more. Usage examples in several programming languages (PHP, Python, and Visual Studio). SQL vs NoSQL: Current state of the market. SQL vs NoSQL: Criteria for ranking popularity (https://db-engines.com) • Number of queries about a given system on several of the best-known search engines (Google, Bing, and Yandex) • General interest in a system, based on data collected via Google Trends • Frequency of technical discussions about a product on https://stackoverflow.com/ and https://dba.stackexchange.com/ • Number of available IT jobs related to a system on the online job search sites https://www.indeed.com/ and https://www.simplyhired.com/ • Frequency of references to a given system on the professional social networks https://www.linkedin.com/ and https://www.upwork.com/ • Number of mentions in posts on https://twitter.com/ SQL vs NoSQL: Comparison of relational (SQL) and non-relational (NoSQL) databases (quick overview). SQL vs NoSQL: Basic characteristics of relational (SQL) and non-relational (NoSQL) databases. Relational databases: Edgar F. Codd, “A Relational Model of Data for Large Shared Data Banks” (Communications of the ACM, 1970). Building on this paper, experts at IBM’s San Jose research center developed System R. In System R, all operations were performed using a special language, Structured English Query Language (SEQUEL). -

MySQL Performance Monitoring with Zabbix an Alternative

MySQL Performance Monitoring with Zabbix: An alternative to the MySQL Enterprise Monitor? by Oli Sennhauser [email protected] http://www.fromdual.com How many of you ... ● … monitor their database servers? ● … monitor their (MySQL) databases? ● … monitor performance metrics? ● … all the others: Why not? Linux monitoring ● Question: What is your favourite Linux monitoring application? ● 1187 votes, April 2010 ● Manipulated? ● Lit: Linux Journal, [1] What to monitor? ● Devices / Software ● Server, Router, Switches, I/O systems etc. ● Operating System, Networks, Applications, etc. ● Incidents ● DB down, Replication stopped, Server not reachable, etc. ● Critical Events ● Disk more than n% full or less than m Gbyte free, Replication more than n seconds lagging, Data node down, 100% CPU utilization, etc. → Alert, immediate intervention, fire fighting What to monitor? ● Trends (includes time!) → Graphs ● How long does it take until … ● … my disk is full? ● … my Index Memory is filled up? ● When does it happen? ● Peak? Backup? ● How often does it happen? Does it happen periodically? ● Once a day? Always on Sunday night? ● How does it correlate to other information? ● I/O problems during our backup window? ● Reading the patterns! → this can help us to find the root cause of problems… How to monitor? ● Basic solutions: ● top, vmstat, iostat, mytop, innotop, SHOW GLOBAL STATUS, SHOW INNODB STATUS ● CLI!, no graphs, no long-term information, but good for ad hoc analysis! ● Graphical solutions ● Nagios, Cacti, Zabbix, … ● Typically NOT specialised in DB monitoring... Focus ● Database + Server ● Trends Not the other stuff..