A Topology Layer for Machine Learning

Total Pages: 16

File Type: PDF, Size: 1020 KB

Recommended publications

Topological Pattern Recognition for Point Cloud Data∗

Acta Numerica (2014), pp. 289–368. © Cambridge University Press, 2014. doi:10.1017/S0962492914000051. Printed in the United Kingdom. Topological pattern recognition for point cloud data∗. Gunnar Carlsson†, Department of Mathematics, Stanford University, CA 94305, USA. E-mail: [email protected] In this paper we discuss the adaptation of the methods of homology from algebraic topology to the problem of pattern recognition in point cloud data sets. The method is referred to as persistent homology, and has numerous applications to scientific problems. We discuss the definition and computation of homology in the standard setting of simplicial complexes and topological spaces, then show how one can obtain useful signatures, called barcodes, from finite metric spaces, thought of as sampled from a continuous object. We present several different cases where persistent homology is used, to illustrate the different ways in which the method can be applied. CONTENTS: 1 Introduction; 2 Topology; 3 Shape of data; 4 Structures on spaces of barcodes; 5 Organizing data sets; References. 1. Introduction. Deriving knowledge from large and complex data sets is a fundamental problem in modern science. All aspects of this problem need to be addressed by the mathematical and computational sciences. There are various different aspects to the problem, including devising methods for (a) storing massive amounts of data, (b) efficiently managing it, and (c) developing understanding of the data set. The past decade has seen a great deal of development of powerful computing infrastructure, as well as methodologies for ∗ Colour online for monochrome figures available at journals.cambridge.org/anu. -

Topology and Data

BULLETIN (New Series) OF THE AMERICAN MATHEMATICAL SOCIETY Volume 46, Number 2, April 2009, Pages 255–308 S 0273-0979(09)01249-X Article electronically published on January 29, 2009 TOPOLOGY AND DATA GUNNAR CARLSSON 1. Introduction An important feature of modern science and engineering is that data of various kinds is being produced at an unprecedented rate. This is so in part because of new experimental methods, and in part because of the increase in the availability of high powered computing technology. It is also clear that the nature of the data we are obtaining is significantly different. For example, it is now often the case that we are given data in the form of very long vectors, where all but a few of the coordinates turn out to be irrelevant to the questions of interest, and further that we don’t necessarily know which coordinates are the interesting ones. A related fact is that the data is often very high-dimensional, which severely restricts our ability to visualize it. The data obtained is also often much noisier than in the past and has more missing information (missing data). This is particularly so in the case of biological data, particularly high throughput data from microarray or other sources. Our ability to analyze this data, both in terms of quantity and the nature of the data, is clearly not keeping pace with the data being produced. In this paper, we will discuss how geometry and topology can be applied to make useful contributions to the analysis of various kinds of data. -

Lecture Notes in Mathematics

Lecture Notes in Mathematics. For information about Vols. 1-1145 please contact your bookseller or Springer-Verlag. Vol. 1146: Séminaire d'Algèbre Paul Dubreil et Marie-Paule Malliavin. Proceedings, 1983-1984. Edité par M.-P. Malliavin. IV, 420 pages. 1985. Vol. 1147: M. Wschebor, Surfaces Aléatoires. VII, 111 pages. 1985. Vol. 1148: Mark A. Kon, Probability Distributions in Quantum Statistical Mechanics. V, 121 pages. 1985. Vol. 1149: Universal Algebra and Lattice Theory. Proceedings, 1984. Edited by S. D. Comer. VI, 282 pages. 1985. Vol. 1150: B. Kawohl, Rearrangements and Convexity of Level Sets in PDE. V, 136 pages. 1985. Vol. 1151: Ordinary and Partial Differential Equations. Proceedings, 1984. Edited by B.D. Sleeman and R.J. Jarvis. XIV, 357 pages. 1985. Vol. 1152: H. Widom, Asymptotic Expansions for Pseudodifferential ... Vol. 1173: H. Delfs, M. Knebusch, Locally Semialgebraic Spaces. XVI, 329 pages. 1985. Vol. 1174: Categories in Continuum Physics, Buffalo 1982. Seminar. Edited by F.W. Lawvere and S.H. Schanuel. V, 126 pages. 1986. Vol. 1175: K. Mathiak, Valuations of Skew Fields and Projective Hjelmslev Spaces. VII, 116 pages. 1986. Vol. 1176: R.R. Bruner, J.P. May, J.E. McClure, M. Steinberger, H∞ Ring Spectra and their Applications. VII, 388 pages. 1986. Vol. 1177: Representation Theory I. Finite Dimensional Algebras. Proceedings, 1984. Edited by V. Dlab, P. Gabriel and G. Michler. XV, 340 pages. 1986. Vol. 1178: Representation Theory II. Groups and Orders. Proceedings, 1984. Edited by V. Dlab, P. Gabriel and G. Michler. XV, 370 pages. 1986. Vol. 1179: Shi J.-Y. The Kazhdan-Lusztig Cells in Certain Affine Weyl Groups. -

Three Keynote Lectures Delivered By: Prof. Gunnar Carlsson, Stanford

Three keynote lectures delivered by: Prof. Gunnar Carlsson, Stanford University, USA; Prof. Samad Hedayat, University of Illinois at Chicago, USA; Prof. Edriss Titi, Texas A&M University, USA. Gunnar Carlsson: Gunnar Carlsson is a Professor of Mathematics at Stanford University. A highly influential mathematician, he is best known for his proof of the Segal Burnside Ring conjecture and for his work on applied algebraic topology, especially topological data analysis. He was a Professor at Princeton University before joining the Stanford Mathematics Department in 1991, where he also served as Chairman. He was invited to speak at the International Congress of Mathematicians about his work in homotopy theory. More recently his work on persistent homology and topological data analysis has opened up new fields of research with a large number of followers. Whether through his numerous publications or keynote addresses, Gunnar Carlsson has had an enormous influence on present-day mathematical research and its applications to fields as far afield as cancer treatment. He has co-authored two books on mathematical education. He served as a consultant to the education boards of the State of Texas and the State of California concerning the Content Standards in Mathematics and currently is a member of Texas Instruments California Advisory Board on K-12 education. He is the recipient of many awards and grants, including the Alfred P. Sloan Fellowship, NSF grants, and DARPA and Air Force Office of Scientific Research grants. He is the editor of several internationally respected mathematics journals in topology and algebra. More information about Professor Gunnar Carlsson can be found at http://math.stanford.edu/~gunnar/ Samad Hedayat: Professor Samad Hedayat is a UIC Distinguished Professor at the Department of Mathematics, Statistics, and Computer Science, University of Illinois at Chicago (UIC). -

Gunnar Carlsson Professor, Department of Mathematics Stanford University

Colorado State University’s Information Science and Technology Center (ISTeC) presents two lectures by Gunnar Carlsson, Professor, Department of Mathematics, Stanford University. ISTeC Distinguished Lecture, in conjunction with the Mathematics Department, the Electrical and Computer Engineering Department, and the Computer Science Department: “The Shape of Data”, Monday, October 20, 2014. Reception with refreshments: 10:30 am. Lecture: 11:00 am – 12:00 noon. Location: LSC Grey Rock. Mathematics Department, Electrical and Computer Engineering Department, and Computer Science Department Special Seminar, sponsored by ISTeC: “The Algebraic Geometry of Persistence Barcodes”, Tuesday, October 21, 2014. Lecture: 1:00 – 2:00 pm. Reception with refreshments: 2:00 pm. Location: 221 TILT. ISTeC (Information Science and Technology Center) is a university-wide organization for promoting, facilitating, and enhancing CSU’s research, education, and outreach activities pertaining to the design and innovative application of computer, communication, and information systems. For more information please see ISTeC.ColoState.EDU. Abstracts. The Shape of Data: “Big data” is a term which describes many varied problems in the management of data. These relate to storage, query capability, analysis, and numerous other aspects of the general problem of how to make most effective use of the enormous amounts of data currently being gathered. We will talk about a collection of recently developed methods for the analysis of large, high dimensional, and most importantly, complex data sets. These methods use the mathematical notion of shape, as encoded in topological methods, as a new tool in data analysis. We will discuss these methods, with numerous examples. The Algebraic Geometry of Persistence Barcodes: Persistent homology associates to a finite metric space an invariant called a persistence barcode, which often allows one to infer the homology of an underlying space from which the finite sample is obtained. -
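To make the notion of a persistence barcode of a finite metric space concrete, here is a minimal sketch of the simplest case, the degree-0 barcode, which tracks how connected components merge as a distance threshold grows. It is an illustration under stated assumptions, not the method of any lecture or paper listed here: the function name h0_barcode is hypothetical, points are assumed to be coordinate tuples in Euclidean space, and an edge is taken to appear when the threshold equals the distance between its endpoints (some references use half that distance).

```python
import math
from itertools import combinations


def h0_barcode(points):
    """Degree-0 persistence bars (birth, death) for a finite point set.

    Hypothetical helper for illustration only. Every point is born at
    threshold 0; a component dies at the length of the edge that merges
    it into another component. One component never dies.
    """
    n = len(points)
    parent = list(range(n))  # union-find forest over point indices

    def find(i):
        # Follow parent links to the root, halving the path as we go.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    # All pairwise edges, sorted by Euclidean length.
    edges = sorted(
        (math.dist(points[i], points[j]), i, j)
        for i, j in combinations(range(n), 2)
    )

    bars = []
    for length, i, j in edges:
        root_i, root_j = find(i), find(j)
        if root_i != root_j:
            parent[root_i] = root_j      # two components merge here
            bars.append((0.0, length))   # so one of them dies at `length`
    bars.append((0.0, math.inf))         # the surviving component
    return bars


# Two nearby points and one far away: one short bar, one long bar,
# and one infinite bar for the component that survives.
print(h0_barcode([(0.0, 0.0), (1.0, 0.0), (10.0, 0.0)]))
```

The long finite bar reflects the gap between the two clusters, which is the kind of feature a barcode is meant to expose. Higher-degree bars (loops, voids) need a full simplicial complex and boundary-matrix reduction, which this sketch deliberately omits.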

David Donoho COMMENTARY 52 Cliff Ord J

ISSN 0002-9920 (print) ISSN 1088-9477 (online). Notices of the American Mathematical Society, January 2018, Volume 65, Number 1. JMM 2018 Lecture Sampler, page 6. Taking Mathematics to Heart, page 19. Columbus Meeting, page 90. FEATURED: JMM 2018 Lecture Sampler: Talithia Williams, Gunnar Carlsson, Jill C. Pipher, Federico Ardila, Ruth Charney, Erica Walker, Dana Randall, André Neves, and Ronald E. Mickens. Taking Mathematics to Heart: Alfio Quarteroni. Graduate Student Section: Interview with Sharon Arroyo, conducted by Melinda Lanius; WHAT IS...an Acylindrical Group Action? by Thomas Koberda; AMS Graduate Student Blog. All of us, wherever we are, can celebrate together here in this issue of Notices the San Diego Joint Mathematics Meetings. Our lecture sampler includes for the first time the AMS-MAA-SIAM Hrabowski-Gates-Tapia-McBay Lecture, this year by Talithia Williams on the new PBS series NOVA Wonders. After the sampler, other articles describe modeling the heart, Dürer's unfolding problem (which remains open), gerrymandering after the fall Supreme Court decision, a story for Congress about how geometry has advanced MRI, “My Father André Weil” (2018 is the 20th anniversary of his death), and a profile on Donald Knuth and native script by former Notices Senior Writer and Deputy Editor Allyn Jackson. -

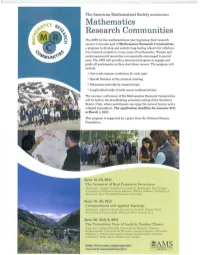

Mathematics Research Communities

The American Mathematical Society announces Mathematics Research Communities. The AMS invites mathematicians just beginning their research careers to become part of Mathematics Research Communities, a program to develop and sustain long-lasting cohorts for collaborative research projects in many areas of mathematics. Women and underrepresented minorities are especially encouraged to participate. The AMS will provide a structured program to engage and guide all participants as they start their careers. The program will include: • One-week summer conference for each topic • Special Sessions at the national meeting • Discussion networks by research topic • Longitudinal study of early career mathematicians. The summer conferences of the Mathematics Research Communities will be held in the breathtaking mountain setting of the Snowbird Resort, Utah, where participants can enjoy the natural beauty and a collegial atmosphere. The application deadline for summer 2011 is March 1, 2011. This program is supported by a grant from the National Science Foundation. June 12–18, 2011: The Geometry of Real Projective Structures. Organizers: Virginie Charette (Université de Sherbrooke), Daryl Cooper (University of California, Santa Barbara), William Goldman (University of Maryland), Anna Wienhard (Princeton University). June 19–25, 2011: Computational and Applied Topology. Organizers: Gunnar Carlsson (Stanford University), Robert Ghrist (University of Pennsylvania), Benjamin Mann (Ayasdi, Inc.). June 26–July 2, 2011: The Pretentious View of Analytic Number Theory. Organizers: Andrew Granville (Université de Montréal), Dimitris Koukoulopoulos (Université de Montréal), Youness Lamzouri (University of Illinois at Urbana-Champaign), Kannan Soundararajan (Stanford University), Frank Thorne (Stanford University). http://www.ams.org/programs/research-communities/mrc -

John Gunnar Carlsson Epstein Department of Industrial And

John Gunnar Carlsson · Curriculum Vitae · 2018. John Gunnar Carlsson, Epstein Department of Industrial and Systems Engineering, University of Southern California, 3715 McClintock Ave, GER 240, Los Angeles, CA 90089-0193. Office: OHE 310F. [email protected] Education: 2009, Institute for Computational and Mathematical Engineering (ICME), Stanford University, Ph.D. in Computational and Mathematical Engineering. Dissertation: “Map segmentation algorithms for geographic resource allocation problems”. Adviser: Yinyu Ye. 2005, Harvard College, A.B. in Mathematics and Music with honors. Positions held: 2017–, Kellner Family Associate Professor, University of Southern California. 2015–17, Assistant Professor, University of Southern California. 2009–14, Assistant Professor, University of Minnesota. Research publications and pre-prints (names in bold denote students): 2018 Carlsson, John Gunnar, Mehdi Behroozi, and Kresimir Mihic. “Wasserstein distance and the distributionally robust TSP.” Operations Research, to appear. 2018 Carlsson, John Gunnar, and Siyuan Song. “Coordinated logistics with a truck and a drone.” Management Science, to appear. 2017 Carlsson, John Gunnar, and Mehdi Behroozi. “Worst-case demand distributions in vehicle routing.” European Journal of Operational Research 256.2 (2017): 462-472. 2016 Carlsson, John Gunnar, Mehdi Behroozi, Xiangfei Meng, and Raghuveer Devulapalli. “Household-level economies of scale in transportation.” Operations Research 64.6 (2016): 1372-1387. 2016 Carlsson, John Gunnar, Erik Carlsson, and Raghuveer Devulapalli. “Shadow prices in territory division.” Networks and Spatial Economics 16.3 (2016): 1-39. 2016 Carlsson, John Gunnar, Mehdi Behroozi, and Xiang Li. “Geometric partitioning and robust ad-hoc network design.” Annals of Operations Research 238.1 (2016): 41-68. 2015 Carlsson, John Gunnar, and Fan Jia. -

CV of Yuan YAO

CV of Yuan YAO. Contact Information: Hong Kong University of Science and Technology, Department of Mathematics, 3430 Academic Building, Clear Water Bay, Kowloon, Hong Kong SAR, P.R. China. Phone: (852) 2358-7461. Fax: (852) 2358-1643. E-mail: [email protected] WWW: yao-lab.github.io. Academic Qualifications: University of California at Berkeley, USA, Ph.D. Mathematics, December 2006. • Dissertation: A dynamic theory of learning: online learning and stochastic algorithms in Reproducing Kernel Hilbert Spaces • Committee: Stephen Smale (chair), Peter Bartlett, and Steve Evans. City University of Hong Kong, Hong Kong SAR, China, M.Phil. Mathematics, June 2002. Harbin Institute of Technology, Harbin, China, M.S. Control Engineering, July 1998; B.S. Control Engineering, July 1996. Academic Positions: Hong Kong University of Science and Technology, China, Department of Mathematics, Department of Chemical and Biological Engineering (by courtesy), Department of Computer Science and Engineering, Associate Professor, Aug 2016 - present. Peking (Beijing) University, China, School of Mathematical Sciences, Department of Probability and Statistics, Associate Professor with Tenure, July 2015 - present; Professor of Statistics in the Hundred Talents Program¹, July 2009 - present. Stanford University, USA, Department of Mathematics and Computer Science, Postdoctoral Fellow, August 2006 - August 2009. Awards and Grants: Hong Kong RGC General Research Fund, award 16303817, Principal investigator, Aug 2017 - Jul 2020, Social Choice, Crowdsourced Ranking, and Hodge Theory. Tencent AI Lab, collaborative research award, Principal investigator, 2017 -, Regularizations in Deep Learning. Microsoft Research Asia, collaborative research award. ¹The Program was introduced 10 years ago by Peking University to attract overseas talents, in which I was appointed as Professor in the traditional Chinese academic ranking system. -

A Singer Construction in Motivic Homotopy Theory

A Singer construction in motivic homotopy theory. Thomas Gregersen, December 2012. © Thomas Gregersen, 2012. Series of dissertations submitted to the Faculty of Mathematics and Natural Sciences, University of Oslo, No. 1280. ISSN 1501-7710. All rights reserved. No part of this publication may be reproduced or transmitted, in any form or by any means, without permission. Cover: Inger Sandved Anfinsen. Printed in Norway: AIT Oslo AS. Produced in co-operation with Akademika publishing. The thesis is produced by Akademika publishing merely in connection with the thesis defence. Kindly direct all inquiries regarding the thesis to the copyright holder or the unit which grants the doctorate. Several people should be thanked for their support. First of all, I would like to thank professor John Rognes without whom this work would never have materialized. My parents should be thanked for giving me the chance to be who I am. Oda for all those years that were very worthwhile. Finally, I thank Eirik for the years we got to share while he was still here. I will truly miss him. Contents: 1 The basic categories; 2 Overview and the main argument; 3 The algebra (3.1 The motivic Steenrod algebra and its dual; 3.2 Finitely generated subalgebras of A; 3.3 The motivic Singer construction); 4 Inverse limits of motivic spectra (4.1 Realization in the motivic stable category; 4.1.1 Preliminaries; 4.1.2 The homotopical construction; 4.2 The motivic Adams spectral sequence); 5 Concluding comments. Introduction. The work pursued in this thesis concerns two fields related inside homotopy theory. -

The Segal Conjecture for Finite Groups: A Beginner’s Guide

UNIVERSITY OF COPENHAGEN, FACULTY OF SCIENCE. Master’s thesis. Kaif Muhammad Borhan Tan. The Segal Conjecture for Finite Groups: A Beginner’s Guide. Advisor: Jesper Grodal. Handed in: September 15, 2020. Contents: 1 Introduction; 2 Genuine equivariant stable homotopy theory; 3 Mackey functors; 4 Motivation and formulation of the conjecture; 5 Reduction to the case of p-groups; 6 Operational formulation of the conjecture for p-groups; 7 An overview of the inductive strategy; 8 S-functors and the proof of Theorem A; 9 The Ext group calculation of Adams-Gunawardena-Miller; 10 Nonequivariant theory and the proof of Theorem B; 11 The case of elementary abelians and the proof of Theorem D; Appendix A Induction theorem for the completed Burnside ring Green functor. 1 Introduction. One of the great triumphs of algebraic topology in the 1980’s was the resolution of the Segal conjecture for finite groups, the precise formulation of which will be given in §4. Its formulation and proof are the concern of the present note. The interest in this problem before and after its resolution led to a flurry of work and developments in algebraic topology in the 1970’s and 1980’s by many people, culminating in Gunnar Carlsson’s paper [Car84]. One implication of this is that even the literature for the full treatment of the core story for finite groups is quite scattered, and the inevitable amount of forward references as well as folklore logical jumps left unsaid can be quite exhausting for the uninitiated. -

Equivariant Homotopy and Cohomology

EQUIVARIANT HOMOTOPY AND COHOMOLOGY THEORY. J.P. May. The author acknowledges the support of the NSF. Mathematics Subject Classification (Revision). Author addresses: University of Chicago, Chicago, IL. Email address: may@math.uchicago.edu. Contents. Introduction. Chapter I. Equivariant Cellular and Homology Theory: Some basic definitions and adjunctions; Analogs for based G-spaces; G-CW complexes; Ordinary homology and cohomology theories; Obstruction theory; Universal coefficient spectral sequences. Chapter II. Postnikov Systems, Localization, and Completion: Eilenberg-MacLane G-spaces and Postnikov systems; Summary: localizations of spaces; Localizations of G-spaces; Summary: completions of spaces; Completions of G-spaces. Chapter III. Equivariant Rational Homotopy Theory: Summary: the theory of minimal models; Equivariant minimal models; Rational equivariant Hopf spaces. Chapter IV. Smith Theory: Smith theory via Bredon cohomology; Borel cohomology, localization, and Smith theory. Chapter V. Categorical Constructions; Equivariant Applications: Coends and geometric realization; Homotopy colimits and limits; Elmendorf's theorem on diagrams of fixed point spaces; Eilenberg-Mac Lane G-spaces and universal F-spaces. Chapter VI. The Homotopy Theory of Diagrams: Elementary homotopy theory of diagrams; Homotopy Groups; Cellular Theory; The homology and cohomology theory of diagrams; The closed model structure on U^J; Another proof