Methods in Literature-Based Drug Discovery

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Detailed Review Paper on Retinoid Pathway Signalling

1 1 Detailed Review Paper on Retinoid Pathway Signalling 2 December 2020 3 2 4 Foreword 5 1. Project 4.97 to develop a Detailed Review Paper (DRP) on the Retinoid System 6 was added to the Test Guidelines Programme work plan in 2015. The project was 7 originally proposed by Sweden and the European Commission later joined the project as 8 a co-lead. In 2019, the OECD Secretariat was added to coordinate input from expert 9 consultants. The initial objectives of the project were to: 10 draft a review of the biology of retinoid signalling pathway, 11 describe retinoid-mediated effects on various organ systems, 12 identify relevant retinoid in vitro and ex vivo assays that measure mechanistic 13 effects of chemicals for development, and 14 Identify in vivo endpoints that could be added to existing test guidelines to 15 identify chemical effects on retinoid pathway signalling. 16 2. This DRP is intended to expand the recommendations for the retinoid pathway 17 included in the OECD Detailed Review Paper on the State of the Science on Novel In 18 vitro and In vivo Screening and Testing Methods and Endpoints for Evaluating 19 Endocrine Disruptors (DRP No 178). The retinoid signalling pathway was one of seven 20 endocrine pathways considered to be susceptible to environmental endocrine disruption 21 and for which relevant endpoints could be measured in new or existing OECD Test 22 Guidelines for evaluating endocrine disruption. Due to the complexity of retinoid 23 signalling across multiple organ systems, this effort was foreseen as a multi-step process. -

Brimonidine Tartrate; Brinzolamide

Contains Nonbinding Recommendations Draft Guidance on Brimonidine Tartrate ; Brinzolamide This draft guidance, when finalized, will represent the current thinking of the Food and Drug Administration (FDA, or the Agency) on this topic. It does not establish any rights for any person and is not binding on FDA or the public. You can use an alternative approach if it satisfies the requirements of the applicable statutes and regulations. To discuss an alternative approach, contact the Office of Generic Drugs. Active Ingredient: Brimonidine tartrate; Brinzolamide Dosage Form; Route: Suspension/drops; ophthalmic Strength: 0.2%; 1% Recommended Studies: One study Type of study: Bioequivalence (BE) study with clinical endpoint Design: Randomized (1:1), double-masked, parallel, two-arm, in vivo Strength: 0.2%; 1% Subjects: Males and females with chronic open angle glaucoma or ocular hypertension in both eyes. Additional comments: Specific recommendations are provided below. ______________________________________________________________________________ Analytes to measure (in appropriate biological fluid): Not applicable Bioequivalence based on (95% CI): Clinical endpoint Additional comments regarding the BE study with clinical endpoint: 1. The Office of Generic Drugs (OGD) recommends conducting a BE study with a clinical endpoint in the treatment of open angle glaucoma and ocular hypertension comparing the test product to the reference listed drug (RLD), each applied as one drop in both eyes three times daily at approximately 8:00 a.m., 4:00 p.m., and 10:00 p.m. for 42 days (6 weeks). 2. Inclusion criteria (the sponsor may add additional criteria): a. Male or nonpregnant females aged at least 18 years with chronic open angle glaucoma or ocular hypertension in both eyes b. -

ERJ-01090-2018.Supplement

Shaheen et al Online data supplement Prescribed analgesics in pregnancy and risk of childhood asthma Seif O Shaheen, Cecilia Lundholm, Bronwyn K Brew, Catarina Almqvist. 1 Shaheen et al Figure E1: Data available for analysis Footnote: Numbers refer to adjusted analyses (complete data on covariates) 2 Shaheen et al Table E1. Three classes of analgesics included in the analyses ATC codes Generic drug name Opioids N02AA59 Codeine, combinations excluding psycholeptics N02AA79 Codeine, combinations with psycholeptics N02AA08 Dihydrocodeine N02AA58 Dihydrocodeine, combinations N02AC04 Dextropropoxyphene N02AC54 Dextropropoxyphene, combinations excluding psycholeptics N02AX02 Tramadol Anti-migraine N02CA01 Dihydroergotamine N02CA02 Ergotamine N02CA04 Methysergide N02CA07 Lisuride N02CA51 Dihydroergotamine, combinations N02CA52 Ergotamine, combinations excluding psycholeptics N02CA72 Ergotamine, combinations with psycholeptics N02CC01 Sumatriptan N02CC02 Naratriptan N02CC03 Zolmitriptan N02CC04 Rizatriptan N02CC05 Almotriptan N02CC06 Eletriptan N02CC07 Frovatriptan N02CX01 Pizotifen N02CX02 Clonidine N02CX03 Iprazochrome N02CX05 Dimetotiazine N02CX06 Oxetorone N02CB01 Flumedroxone Paracetamol N02BE01 Paracetamol N02BE51 Paracetamol, combinations excluding psycholeptics N02BE71 Paracetamol, combinations with psycholeptics 3 Shaheen et al Table E2. Frequency of analgesic classes prescribed to the mother during pregnancy Opioids Anti- Paracetamol N % migraine No No No 459,690 93.2 No No Yes 9,091 1.8 Yes No No 15,405 3.1 No Yes No 2,343 0.5 Yes No -

Classification of Medicinal Drugs and Driving: Co-Ordination and Synthesis Report

Project No. TREN-05-FP6TR-S07.61320-518404-DRUID DRUID Driving under the Influence of Drugs, Alcohol and Medicines Integrated Project 1.6. Sustainable Development, Global Change and Ecosystem 1.6.2: Sustainable Surface Transport 6th Framework Programme Deliverable 4.4.1 Classification of medicinal drugs and driving: Co-ordination and synthesis report. Due date of deliverable: 21.07.2011 Actual submission date: 21.07.2011 Revision date: 21.07.2011 Start date of project: 15.10.2006 Duration: 48 months Organisation name of lead contractor for this deliverable: UVA Revision 0.0 Project co-funded by the European Commission within the Sixth Framework Programme (2002-2006) Dissemination Level PU Public PP Restricted to other programme participants (including the Commission x Services) RE Restricted to a group specified by the consortium (including the Commission Services) CO Confidential, only for members of the consortium (including the Commission Services) DRUID 6th Framework Programme Deliverable D.4.4.1 Classification of medicinal drugs and driving: Co-ordination and synthesis report. Page 1 of 243 Classification of medicinal drugs and driving: Co-ordination and synthesis report. Authors Trinidad Gómez-Talegón, Inmaculada Fierro, M. Carmen Del Río, F. Javier Álvarez (UVa, University of Valladolid, Spain) Partners - Silvia Ravera, Susana Monteiro, Han de Gier (RUGPha, University of Groningen, the Netherlands) - Gertrude Van der Linden, Sara-Ann Legrand, Kristof Pil, Alain Verstraete (UGent, Ghent University, Belgium) - Michel Mallaret, Charles Mercier-Guyon, Isabelle Mercier-Guyon (UGren, University of Grenoble, Centre Regional de Pharmacovigilance, France) - Katerina Touliou (CERT-HIT, Centre for Research and Technology Hellas, Greece) - Michael Hei βing (BASt, Bundesanstalt für Straßenwesen, Germany). -

Liarozole Hydrochloride (BANM, USAN, Rinnm) Kinetin Hidrocloruro De Liarozol; Liarozole, Chlorhydrate De; Liarozoli 1

Isotretinoin/Liarozole 1603 Malignant neoplasms. Retinoids such as isotretinoin have 9. Matthay KK, et al. Treatment of high-risk neuroblastoma with Profile been studied in the treatment of various neoplastic or preneoplas- intensive chemotherapy, radiotherapy, autologous bone marrow Kinetin is a plant growth hormone that has been promoted in transplantation, and 13-cis-retinoic acid. N Engl J Med 1999; tic disorders. Although oral tretinoin is used for remission induc- 341: 1165–73. products for the management of photodamaged skin and hyper- tion in acute promyelocytic leukaemia (see p.1619), other retin- 10. Kohler JA, et al. A randomized trial of 13-cis retinoic acid in pigmentation but good evidence of efficacy appears to be lack- oids do not have an established role in the treatment of cancer. children with advanced neuroblastoma after high-dose therapy. ing. There may, however, be a place for the use of retinoids in the Br J Cancer 2000; 83: 1124–7. Preparations chemoprevention of some malignancies. Skin disorders. Apart from its established role in the treatment Proprietary Preparations (details are given in Part 3) There has been particular interest in the potential for retinoids to of acne (above), isotretinoin has been tried in many other skin Arg.: Kinerase†; Braz.: Kinerase; Hong Kong: Kinerase; Malaysia: Kin- prevent the formation of skin cancers (p.672) in patients at in- disorders not responding to usual therapy.1,2 Clinical responses to erase†; Mex.: Kinerase; Singapore: Kinerase; USA: Kinerase. creased risk. Maintenance immunosuppression may increase the oral isotretinoin have been reported1 in small numbers of patients incidence of pre-malignant and malignant skin lesions in solid with anogenital warts (p.1584), rosacea (p.1583), and lichen pla- organ transplant recipients; large numbers of lesions can develop nus (p.1580). -

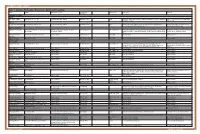

Table 1. Glaucoma Medications: Mechanisms, Dosing and Precautions Brand Generic Mechanism of Action Dosage/Avg

OPTOMETRIC STUDY CENTER Table 1. Glaucoma Medications: Mechanisms, Dosing and Precautions Brand Generic Mechanism of Action Dosage/Avg. % Product Sizes Side Effects Warnings Reduction CHOLINERGIC AGENTS Direct Pilocarpine (generic) Pilocarpine 1%, 2%, 4% Increases trabecular outflow BID-QID/15-25% 15ml Headache, blurred vision, myopia, retinal detachment, bronchiole constriction, Angle closure, shortness of breath, retinal narrowing of angle detachment Indirect Phospholine Iodide (Pfizer) Echothiophate iodide 0.125% Increases trabecular outflow QD-BID/15-25% 5ml Same as above plus cataractogenic iris cysts in children, pupillary block, Same as above, plus avoid prior to any increased paralysis with succinylcholine general anesthetic procedure ALPHA-2 AGONISTS Alphagan P (Allergan) Brimonidine tartrate 0.1%, 0.15% with Purite Decreases aqueous production, increases BID-TID/up to 26% 5ml, 10ml, 15ml Dry mouth, hypotension, bradycardia, follicular conjunctivitis, ocular irritation, Monitor for shortness of breath, dizziness, preservative uveoscleral outflow pruritus, dermatitis, conjunctival blanching, eyelid retraction, mydriasis, drug ocular redness and itching, fatigue allergy Brimonidine tartrate Brimonidine tartrate 0.15%, 0.2% Same as above Same as above 5ml, 10ml Same as above Same as above (generic) Iopidine (Novartis) Apraclonidine 0.5% Decreases aqueous production BID-TID/up to 25% 5ml, 10ml Same as above but higher drug allergy (40%) Same as above BETA-BLOCKERS Non-selective Betagan (Allergan) Levobunolol 0.25%, 0.5% Decreases -

EXPLORING NEW ASYMMETRIC REACTIONS CATALYSED by DICATIONIC Pd(II) COMPLEXES

EXPLORING NEW ASYMMETRIC REACTIONS CATALYSED BY DICATIONIC Pd(II) COMPLEXES A DISSERTATION FOR THE DEGREE OF DOCTOR OF PHILOSOPHY FROM IMPERIAL COLLEGE LONDON BY ALEXANDER SMITH MAY 2010 DEPARTMENT OF CHEMISTRY IMPERIAL COLLEGE LONDON DECLARATION I confirm that this report is my own work and where reference is made to other research this is referenced in text. ……………………………………………………………………… Copyright Notice Imperial College of Science, Technology and Medicine Department Of Chemistry Exploring new asymmetric reactions catalysed by dicationic Pd(II) complexes © 2010 Alexander Smith [email protected] This publication may be distributed freely in its entirety and in its original form without the consent of the copyright owner. Use of this material in any other published works must be appropriately referenced, and, if necessary, permission sought from the copyright owner. Published by: Alexander Smith Department of Chemistry Imperial College London South Kensington campus, London, SW7 2AZ UK www.imperial.ac.uk ACKNOWLEDGEMENTS I wish to thank my supervisor, Dr Mimi Hii, for her support throughout my PhD, without which this project could not have existed. Mimi’s passion for research and boundless optimism have been crucial, turning failures into learning curves, and ultimately leading this project to success. She has always been able to spare the time to advise me and provide fresh ideas, and I am grateful for this patience, generosity and support. In addition, Mimi’s open-minded and multi-disciplinary approach to chemistry has allowed the project to develop in new and unexpected directions, and has fundamentally changed my attitude to chemistry. I would like to express my gratitude to Dr Denis Billen from Pfizer, who also provided a much needed dose of optimism and enthusiasm in the early stages of the project, and managed to arrange my three month placement at Pfizer despite moving to the US as the department closed. -

(12) United States Patent (10) Patent No.: US 7.803,838 B2 Davis Et Al

USOO7803838B2 (12) United States Patent (10) Patent No.: US 7.803,838 B2 Davis et al. (45) Date of Patent: Sep. 28, 2010 (54) COMPOSITIONS COMPRISING NEBIVOLOL 2002fO169134 A1 11/2002 Davis 2002/0177586 A1 11/2002 Egan et al. (75) Inventors: Eric Davis, Morgantown, WV (US); 2002/0183305 A1 12/2002 Davis et al. John O'Donnell, Morgantown, WV 2002/0183317 A1 12/2002 Wagle et al. (US); Peter Bottini, Morgantown, WV 2002/0183365 A1 12/2002 Wagle et al. (US) 2002/0192203 A1 12, 2002 Cho 2003, OOO4194 A1 1, 2003 Gall (73) Assignee: Forest Laboratories Holdings Limited 2003, OO13699 A1 1/2003 Davis et al. (BM) 2003/0027820 A1 2, 2003 Gall (*) Notice: Subject to any disclaimer, the term of this 2003.0053981 A1 3/2003 Davis et al. patent is extended or adjusted under 35 2003, OO60489 A1 3/2003 Buckingham U.S.C. 154(b) by 455 days. 2003, OO69221 A1 4/2003 Kosoglou et al. 2003/0078190 A1* 4/2003 Weinberg ...................... 514f1 (21) Appl. No.: 11/141,235 2003/0078517 A1 4/2003 Kensey 2003/01 19428 A1 6/2003 Davis et al. (22) Filed: May 31, 2005 2003/01 19757 A1 6/2003 Davis 2003/01 19796 A1 6/2003 Strony (65) Prior Publication Data 2003.01.19808 A1 6/2003 LeBeaut et al. US 2005/027281.0 A1 Dec. 8, 2005 2003.01.19809 A1 6/2003 Davis 2003,0162824 A1 8, 2003 Krul Related U.S. Application Data 2003/0175344 A1 9, 2003 Waldet al. (60) Provisional application No. 60/577,423, filed on Jun. -

)&F1y3x PHARMACEUTICAL APPENDIX to THE

)&f1y3X PHARMACEUTICAL APPENDIX TO THE HARMONIZED TARIFF SCHEDULE )&f1y3X PHARMACEUTICAL APPENDIX TO THE TARIFF SCHEDULE 3 Table 1. This table enumerates products described by International Non-proprietary Names (INN) which shall be entered free of duty under general note 13 to the tariff schedule. The Chemical Abstracts Service (CAS) registry numbers also set forth in this table are included to assist in the identification of the products concerned. For purposes of the tariff schedule, any references to a product enumerated in this table includes such product by whatever name known. Product CAS No. Product CAS No. ABAMECTIN 65195-55-3 ACTODIGIN 36983-69-4 ABANOQUIL 90402-40-7 ADAFENOXATE 82168-26-1 ABCIXIMAB 143653-53-6 ADAMEXINE 54785-02-3 ABECARNIL 111841-85-1 ADAPALENE 106685-40-9 ABITESARTAN 137882-98-5 ADAPROLOL 101479-70-3 ABLUKAST 96566-25-5 ADATANSERIN 127266-56-2 ABUNIDAZOLE 91017-58-2 ADEFOVIR 106941-25-7 ACADESINE 2627-69-2 ADELMIDROL 1675-66-7 ACAMPROSATE 77337-76-9 ADEMETIONINE 17176-17-9 ACAPRAZINE 55485-20-6 ADENOSINE PHOSPHATE 61-19-8 ACARBOSE 56180-94-0 ADIBENDAN 100510-33-6 ACEBROCHOL 514-50-1 ADICILLIN 525-94-0 ACEBURIC ACID 26976-72-7 ADIMOLOL 78459-19-5 ACEBUTOLOL 37517-30-9 ADINAZOLAM 37115-32-5 ACECAINIDE 32795-44-1 ADIPHENINE 64-95-9 ACECARBROMAL 77-66-7 ADIPIODONE 606-17-7 ACECLIDINE 827-61-2 ADITEREN 56066-19-4 ACECLOFENAC 89796-99-6 ADITOPRIM 56066-63-8 ACEDAPSONE 77-46-3 ADOSOPINE 88124-26-9 ACEDIASULFONE SODIUM 127-60-6 ADOZELESIN 110314-48-2 ACEDOBEN 556-08-1 ADRAFINIL 63547-13-7 ACEFLURANOL 80595-73-9 ADRENALONE -

NINDS Custom Collection II

ACACETIN ACEBUTOLOL HYDROCHLORIDE ACECLIDINE HYDROCHLORIDE ACEMETACIN ACETAMINOPHEN ACETAMINOSALOL ACETANILIDE ACETARSOL ACETAZOLAMIDE ACETOHYDROXAMIC ACID ACETRIAZOIC ACID ACETYL TYROSINE ETHYL ESTER ACETYLCARNITINE ACETYLCHOLINE ACETYLCYSTEINE ACETYLGLUCOSAMINE ACETYLGLUTAMIC ACID ACETYL-L-LEUCINE ACETYLPHENYLALANINE ACETYLSEROTONIN ACETYLTRYPTOPHAN ACEXAMIC ACID ACIVICIN ACLACINOMYCIN A1 ACONITINE ACRIFLAVINIUM HYDROCHLORIDE ACRISORCIN ACTINONIN ACYCLOVIR ADENOSINE PHOSPHATE ADENOSINE ADRENALINE BITARTRATE AESCULIN AJMALINE AKLAVINE HYDROCHLORIDE ALANYL-dl-LEUCINE ALANYL-dl-PHENYLALANINE ALAPROCLATE ALBENDAZOLE ALBUTEROL ALEXIDINE HYDROCHLORIDE ALLANTOIN ALLOPURINOL ALMOTRIPTAN ALOIN ALPRENOLOL ALTRETAMINE ALVERINE CITRATE AMANTADINE HYDROCHLORIDE AMBROXOL HYDROCHLORIDE AMCINONIDE AMIKACIN SULFATE AMILORIDE HYDROCHLORIDE 3-AMINOBENZAMIDE gamma-AMINOBUTYRIC ACID AMINOCAPROIC ACID N- (2-AMINOETHYL)-4-CHLOROBENZAMIDE (RO-16-6491) AMINOGLUTETHIMIDE AMINOHIPPURIC ACID AMINOHYDROXYBUTYRIC ACID AMINOLEVULINIC ACID HYDROCHLORIDE AMINOPHENAZONE 3-AMINOPROPANESULPHONIC ACID AMINOPYRIDINE 9-AMINO-1,2,3,4-TETRAHYDROACRIDINE HYDROCHLORIDE AMINOTHIAZOLE AMIODARONE HYDROCHLORIDE AMIPRILOSE AMITRIPTYLINE HYDROCHLORIDE AMLODIPINE BESYLATE AMODIAQUINE DIHYDROCHLORIDE AMOXEPINE AMOXICILLIN AMPICILLIN SODIUM AMPROLIUM AMRINONE AMYGDALIN ANABASAMINE HYDROCHLORIDE ANABASINE HYDROCHLORIDE ANCITABINE HYDROCHLORIDE ANDROSTERONE SODIUM SULFATE ANIRACETAM ANISINDIONE ANISODAMINE ANISOMYCIN ANTAZOLINE PHOSPHATE ANTHRALIN ANTIMYCIN A (A1 shown) ANTIPYRINE APHYLLIC -

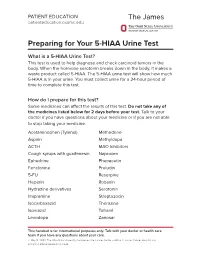

Preparing for Your 5-HIAA Urine Test

PATIENT EDUCATION patienteducation.osumc.edu Preparing for Your 5-HIAA Urine Test What is a 5-HIAA Urine Test? This test is used to help diagnose and check carcinoid tumors in the body. When the hormone serotonin breaks down in the body, it makes a waste product called 5-HIAA. The 5-HIAA urine test will show how much 5-HIAA is in your urine. You must collect urine for a 24-hour period of time to complete this test. How do I prepare for this test? Some medicines can affect the results of this test.Do not take any of the medicines listed below for 2 days before your test. Talk to your doctor if you have questions about your medicine or if you are not able to stop taking your medicine. Acetaminophen (Tylenol) Methedrine Aspirin Methyldopa ACTH MAO Inhibitors Cough syrups with guaifenesin Naproxen Ephedrine Phenacetin Fenclonine Preludin 5-FU Reserpine Heparin Robaxin Hydrazine derivatives Serotonin Imipramine Streptozocin Isocarboxazid Thorazine Isoniazid Tofranil Levodopa Zanosar This handout is for informational purposes only. Talk with your doctor or health care team if you have any questions about your care. © May 14, 2020. The Ohio State University Comprehensive Cancer Center – Arthur G. James Cancer Hospital and Richard J. Solove Research Institute. There are certain foods and drinks that you should not eat or drink before this test. Do not eat or drink any of the items below for 4 days before the test or during the test. Avocados Grapefruit Bananas Honeydew Coffee/Tea Kiwi Cantaloupe Pineapple Dates Plantains Eggplant Plums Tomatoes / tomato products Nuts (walnuts, pecans, hickory nuts, butternuts) • Do not drink alcoholic beverages for 2 days before or during the test. -

Rodgers 693..696

Copyright #ERS Journals Ltd 1999 Eur Respir J 1999; 14: 693±696 European Respiratory Journal Printed in UK ± all rights reserved ISSN 0903-1936 The effect of topical benzamil and amiloride on nasal potential difference in cystic fibrosis H.C. Rodgers, A.J. Knox The effect of topical benzamil and amiloride on nasal potential difference in cystic fibrosis. Respiratory Medicine Unit, City Hospital, H.C. Rodgers, A.J. Knox. #ERS Journals Ltd 1999. Hucknall Road, Nottingham, NG5 IPB. ABSTRACT: The electrochemical defect in the bronchial epithelium in cystic fibrosis (CF) consists of defective chloride secretion and excessive sodium reabsorption. The Correspondence: A.J. Knox Respiratory Medicine Unit sodium channel blocker, amiloride, has been shown to reversibly correct the sodium Clinical Sciences Building reabsorption in CF subjects, but long term studies of amiloride have been disap- City Hospital pointing due to its short duration of action. Benzamil, a benzyl substituted amiloride Hucknall Road analogue, has a longer duration of action than amiloride in cultured human nasal Nottingham NG5 lPB epithelium. The results of the first randomized, placebo controlled, double blind, UK crossover study are reported here comparing the effects of benzamil and amiloride on Fax: 0115 9602140 nasal potential difference (nasal PD) in CF. Ten adults with CF attended on three occasions. At each visit baseline nasal PD was Keywords: Amiloride -3 -3 benzamil recorded, the drug (amiloride 1610 M, benzamil 1.7610 M, or 0.9% sodium cystic fibrosis chloride) was administered topically via a nasal spray, and nasal PD was measured at nasal potential difference 15, 30 min, 1, 2, 4 and 8 h.