Selecting, Refining, and Evaluating Predicates for Program Analysis

Total pages: 16

File type: PDF, Size: 1020 KB


Recommended publications


Executable Code Is Not the Proper Subject of Copyright Law a Retrospective Criticism of Technical and Legal Naivete in the Apple V

Executable Code is Not the Proper Subject of Copyright Law: A retrospective criticism of technical and legal naivete in the Apple v. Franklin case. Matthew M. Swann, Clark S. Turner, Ph.D., Department of Computer Science, Cal Poly State University, November 18, 2004. Abstract: Copyright was created by government for a purpose. Its purpose was to be an incentive to produce and disseminate new and useful knowledge to society. Source code is written to express its underlying ideas and is clearly included as a copyrightable artifact. However, since Apple v. Franklin, copyright has been extended to protect an opaque software executable that does not express its underlying ideas. Common commercial practice involves keeping the source code secret, hiding any innovative ideas expressed there, while copyrighting the executable, where the underlying ideas are not exposed. By examining copyright’s historical heritage we can determine whether software copyright for an opaque artifact upholds the bargain between authors and society as intended by our Founding Fathers. This paper first describes the origins of copyright, the nature of software, and the unique problems involved. It then determines whether current copyright protection for the opaque executable realizes the economic model underpinning copyright law. Having found the current legal interpretation insufficient to protect software without compromising its principles, we suggest new legislation which would respect the philosophy on which copyright in this nation was founded. Table of Contents: Introduction; The Origin of Copyright (The Idea is Born; A New Beginning; The Social Bargain; Copyright and the Constitution); The Basics of Software -

The LLVM Instruction Set and Compilation Strategy

The LLVM Instruction Set and Compilation Strategy. Chris Lattner, Vikram Adve, University of Illinois at Urbana-Champaign, {lattner,vadve}@cs.uiuc.edu. Abstract: This document introduces the LLVM compiler infrastructure and instruction set, a simple approach that enables sophisticated code transformations at link time, runtime, and in the field. It is a pragmatic approach to compilation, interfering with programmers and tools as little as possible, while still retaining extensive high-level information from source-level compilers for later stages of an application’s lifetime. We describe the LLVM instruction set, the design of the LLVM system, and some of its key components. 1 Introduction: Modern programming languages and software practices aim to support more reliable, flexible, and powerful software applications, increase programmer productivity, and provide higher level semantic information to the compiler. Unfortunately, traditional approaches to compilation either fail to extract sufficient performance from the program (by not using interprocedural analysis or profile information) or interfere with the build process substantially (by requiring build scripts to be modified for either profiling or interprocedural optimization). Furthermore, they do not support optimization either at runtime or after an application has been installed at an end-user’s site, when the most relevant information about actual usage patterns would be available. The LLVM Compilation Strategy is designed to enable effective multi-stage optimization (at compile-time, link-time, runtime, and offline) and more effective profile-driven optimization, and to do so without changes to the traditional build process or programmer intervention. LLVM (Low Level Virtual Machine) is a compilation strategy that uses a low-level virtual instruction set with rich type information as a common code representation for all phases of compilation. -

Architectural Support for Scripting Languages

Architectural Support for Scripting Languages. By Dibakar Gope. A dissertation submitted in partial fulfillment of the requirements for the degree of Doctor of Philosophy (Electrical and Computer Engineering) at the UNIVERSITY OF WISCONSIN–MADISON, 2017. Date of final oral examination: 6/7/2017. The dissertation is approved by the following members of the Final Oral Committee: Mikko H. Lipasti, Professor, Electrical and Computer Engineering; Gurindar S. Sohi, Professor, Computer Sciences; Parameswaran Ramanathan, Professor, Electrical and Computer Engineering; Jing Li, Assistant Professor, Electrical and Computer Engineering; Aws Albarghouthi, Assistant Professor, Computer Sciences. © Copyright by Dibakar Gope 2017. All Rights Reserved. This thesis is dedicated to my parents, Monoranjan Gope and Sati Gope. Acknowledgments: First and foremost, I would like to thank my parents, Sri Monoranjan Gope, and Smt. Sati Gope for their unwavering support and encouragement throughout my doctoral studies which I believe to be the single most important contribution towards achieving my goal of receiving a Ph.D. Second, I would like to express my deepest gratitude to my advisor Prof. Mikko Lipasti for his mentorship and continuous support throughout the course of my graduate studies. I am extremely grateful to him for guiding me with such dedication and consideration and never failing to pay attention to any details of my work. His insights, encouragement, and overall optimism have been instrumental in organizing my otherwise vague ideas into some meaningful contributions in this thesis. This thesis would never have been accomplished without his technical and editorial advice. I find myself fortunate to have met and had the opportunity to work with such an all-around nice person in addition to being a great professor. -

CDC Build System Guide

CDC Build System Guide Java™ Platform, Micro Edition Connected Device Configuration, Version 1.1.2 Foundation Profile, Version 1.1.2 Optimized Implementation Sun Microsystems, Inc. www.sun.com December 2008 Copyright © 2008 Sun Microsystems, Inc., 4150 Network Circle, Santa Clara, California 95054, U.S.A. All rights reserved. Sun Microsystems, Inc. has intellectual property rights relating to technology embodied in the product that is described in this document. In particular, and without limitation, these intellectual property rights may include one or more of the U.S. patents listed at http://www.sun.com/patents and one or more additional patents or pending patent applications in the U.S. and in other countries. U.S. Government Rights - Commercial software. Government users are subject to the Sun Microsystems, Inc. standard license agreement and applicable provisions of the FAR and its supplements. This distribution may include materials developed by third parties. Parts of the product may be derived from Berkeley BSD systems, licensed from the University of California. UNIX is a registered trademark in the U.S. and in other countries, exclusively licensed through X/Open Company, Ltd. Sun, Sun Microsystems, the Sun logo, Java, Solaris and HotSpot are trademarks or registered trademarks of Sun Microsystems, Inc. or its subsidiaries in the United States and other countries. The Adobe logo is a registered trademark of Adobe Systems, Incorporated. Products covered by and information contained in this service manual are controlled by U.S. Export Control laws and may be subject to the export or import laws in other countries. Nuclear, missile, chemical biological weapons or nuclear maritime end uses or end users, whether direct or indirect, are strictly prohibited. -

Using Ld the GNU Linker

Using ld: The GNU linker. ld version 2, January 1994. Steve Chamberlain, Cygnus Support. Cygnus Support [email protected], [email protected] Using LD, the GNU linker. Edited by Jeffrey Osier (jeff[email protected]). Copyright © 1991, 92, 93, 94, 95, 96, 97, 1998 Free Software Foundation, Inc. Permission is granted to make and distribute verbatim copies of this manual provided the copyright notice and this permission notice are preserved on all copies. Permission is granted to copy and distribute modified versions of this manual under the conditions for verbatim copying, provided also that the entire resulting derived work is distributed under the terms of a permission notice identical to this one. Permission is granted to copy and distribute translations of this manual into another language, under the above conditions for modified versions. 1 Overview: ld combines a number of object and archive files, relocates their data and ties up symbol references. Usually the last step in compiling a program is to run ld. ld accepts Linker Command Language files written in a superset of AT&T’s Link Editor Command Language syntax, to provide explicit and total control over the linking process. This version of ld uses the general purpose BFD libraries to operate on object files. This allows ld to read, combine, and write object files in many different formats—for example, COFF or a.out. Different formats may be linked together to produce any available kind of object file. See Chapter 5 [BFD], page 47, for more information. Aside from its flexibility, the GNU linker is more helpful than other linkers in providing diagnostic information. -

Optimizing Function Placement for Large-Scale Data-Center Applications

Optimizing Function Placement for Large-Scale Data-Center Applications. Guilherme Ottoni, Bertrand Maher, Facebook, Inc., USA, {ottoni,bertrand}@fb.com. Abstract: Modern data-center applications often comprise a large amount of code, with substantial working sets, making them good candidates for code-layout optimizations. Although recent work has evaluated the impact of profile-guided intra-module optimizations and some cross-module optimizations, no recent study has evaluated the benefit of function placement for such large-scale applications. In this paper, we study the impact of function placement in the context of a simple tool we created that uses sample-based profiling data. By using sample-based profiling, this methodology follows the same principle behind AutoFDO, i.e. using profiling data collected from unmodified binaries running in production, which makes it applicable to large-scale binaries. Using this tool, we first evaluate the impact of the traditional Pettis-Hansen (PH) function-placement algorithm on a set of widely deployed data-center applications. [...] While the large size and performance criticality of such applications make them good candidates for profile-guided code-layout optimizations, these characteristics also impose scalability challenges to optimize these applications. Instrumentation-based profilers significantly slow down the applications, often making it impractical to gather accurate profiles from a production system. To simplify deployment, it is beneficial to have a system that can profile unmodified binaries, running in production, and use these data for feedback-directed optimization. This is possible through the use of sample-based profiling, which enables high-quality profiles to be gathered with minimal operational complexity. This is the approach taken by tools such as AutoFDO [1], and which we also follow in this work. The benefit of feedback-directed optimizations for some data-center applications has been evaluated in some pre- -

LLVM, Clang and Embedded Linux Systems

LLVM, Clang and Embedded Linux Systems. Bruno Cardoso Lopes, University of Campinas. What’s LLVM? • Compiler infrastructure. [Diagram: Frontend (clang), Optimizer, IR, Assembler, Disassembler, Backends, JIT, Tools] • Virtual instruction set (IR) • SSA • Bitcode. [Example: the C source code int add(int x, int y) { return x+y; } is translated by the Clang frontend into the IR define i32 @add(i32 %x, i32 %y) { %1 = add i32 %y, %x ret i32 %1 }] • Optimization-oriented compiler: compile time, link-time and run-time • More than 30 analysis passes and 60 transformation passes. Why LLVM? • Open Source • Active community • Easy integration and quick patch review • Code easy to read and understand • Transformations and optimizations are applied in several parts during compilation. Design • Written in C++ • Modular and composed of several libraries • Pass mechanism with pluggable interface for transformations and analysis • Several tools for each part of compilation. Tools: Front-end • Dragonegg • Gcc 4.5 plugin • llvm-gcc • GIMPLE to LLVM IR. Tools: Front-end • Clang • Library approach • No cross-compiler generation needed • Good diagnostics • Static Analyzer. Tools: Optimizer • Optimizations are applied to the IR • opt tool: $ opt -O3 add.bc -o add2.bc [Diagram: add.bc passes through optimizations such as Aggressive Dead Code Elimination, Tail Call Elimination, Combine Redundant Instructions, Dead Argument Elimination, Type-Based Alias Analysis, ... to produce add2.bc] Tools: Low Level Compiler • llc tool: invoke the static backends • Generates assembly or object code: $ llc -march=arm add.bc -

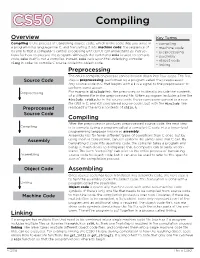

Compiling.Pdf

CS50 Compiling. Key Terms: compiling, machine code, preprocessing, assembly, object code, linking. Overview: Compiling is the process of translating source code, which is the code that you write in a programming language like C, into machine code: the sequence of 0s and 1s that a computer's central processing unit (CPU) can understand as instructions for how to execute the program. Although the command make is used to compile code, make itself is not a compiler. Instead, make calls upon the underlying compiler clang in order to compile C source code into object code. Preprocessing: The entire compilation process can be broken down into four steps. The first step is preprocessing, performed by a program called the preprocessor. Any source code in C that begins with a # is a signal to the preprocessor to perform some action. For example, #include tells the preprocessor to literally include the contents of a different file in the preprocessed file. When a program includes a line like #include <stdio.h> in the source code, the preprocessor generates a new file (still in C, and still considered source code), but with the #include line replaced by the entire contents of stdio.h. Compiling: After the preprocessor produces preprocessed source code, the next step is to compile (using a program called a compiler) C code into a lower-level programming language known as assembly. Assembly has far fewer different types of operations than C does, but by using them in conjunction, can still perform the same tasks that C can. [Diagram: Source Code, Preprocessing, Preprocessed Source Code, Compiling, Assembly] -

Code Generation

Code Generation. 22 November 2019, OSU CSE. BL Compiler Structure: string of characters (source code) → Tokenizer → string of tokens (“words”) → Parser → abstract program → Code Generator → string of integers (object code). The code generator is the last step. Executing a BL Program • There are two qualitatively different ways one might execute a BL program, given a value of type Program that has been constructed from BL source code: – Interpret the Program directly – Compile the Program into object code (“byte code”) that is executed by a virtual machine. [Slide annotations: “This is what the BL compiler will actually do; and this is how Java itself works (recall the JVM and its ‘byte codes’).” “Let’s first see how this might be done ...”] Time Lines of Execution • Directly interpreting a Program: Tokenize → Parse → Execute by interpreting the Program directly • Compiling and then executing a Program: Tokenize → Parse → Generate code → Execute by interpreting generated code on VM. [Slide annotation: “At this point, you have a Program value to use.”] -

ACUCOBOL-GT | Open Systems COBOL Compiler and Runtime System

ACUCOBOL-GT® | Open Systems COBOL Compiler and Runtime System. EXECUTIVE OVERVIEW: ACUCOBOL-GT is an advanced COBOL development system for extending and modernizing business-critical COBOL applications. It is an extremely portable COBOL, supporting single-source, compile-once deployment on hundreds of platforms including most UNIX®, Linux®, Windows®, VMS, and MPE/iX systems. ACUCOBOL-GT includes extensive facilities for interoperating with C, Java, .NET and XML. It supports connectivity to leading relational databases and ISAM data sources through both open and patented technologies. The core component of Acucorp’s family of extend® technologies, ACUCOBOL-GT supports thin client and multi-tier client/server architectures, as well as COBOL Web services and platform-independent graphical user interfaces. Flexible and scalable, ACUCOBOL-GT works anywhere in the enterprise, from back office to point-of-sale. ACUCOBOL-GT is a multi-component COBOL development system that includes a compiler, the COBOL Virtual Machine™ (runtime), the Vision indexed file system, a source-level interactive debugger and nearly a dozen support utilities. Our graphical technology allows you to create a native graphical user interface with the Screen Section and familiar COBOL verbs like DISPLAY and ACCEPT. [Sidebar: Imagine. COBOL interoperating with Java™ technology... running a .NET™ assembly… processing XML data…or providing a Web service. Imagine running COBOL from a mobile, handheld device. What would this kind of functionality mean to your business? For some, it might give a competitive edge, adding value to mission-critical applications. To others it might mean breathing new life into a languishing application. ACUCOBOL-GT offers all of these capabilities and more.] -

Looking Under the Hood: Software

Chapter 3: Looking Under The Hood: Software. In this chapter we explore how a simple C program interacts with the hardware described in the previous chapter. We begin by introducing the virtual memory map and its relationship to the physical memory map. We then use the simplePIC.c program from Chapter 1 to begin to explore the compilation process and the XC32 compiler installation. 3.1 The Virtual Memory Map: In the last chapter we learned about the PIC32 physical memory map. The physical memory map is relatively easy to understand: the CPU can access any SFR, or any location in data RAM, program flash, or boot flash, by a 32-bit address that it puts on the bus matrix. Since we don’t really have 2^32 bytes, or 4 GB, to access, many choices of the 32 bits don’t address anything. In this chapter we focus on the virtual memory map. This is because almost all software refers only to virtual memory addresses. Virtual addresses (VAs) specified in software are translated to physical addresses (PAs) by the fixed mapping translation (FMT) unit in the CPU, which is simply PA = VA & 0x1FFFFFFF. This bitwise AND operation simply clears the first three bits, the three most significant bits of the most significant hex digit. If we’re just throwing away those three bits, what’s the point of them? Well, those first three bits are used by the CPU and the prefetch module we learned about in the previous chapter. If the first three bits of the virtual address of a program instruction are 100 (so the corresponding most significant hex digit of the VA is an 8 or 9), then that instruction can be cached. -

Safety Certification of Compiler Generated Object Code

Safety Certification of Compiler Generated Object Code. coreavi.com [email protected] INTRODUCTION: This paper examines the standards and guidance related to safety certification of object code generated by a compiler toolchain for both CPU and GPU targets. It is written in the context of two markets: avionics (DO-178C/ED-12C) and automotive (ISO 26262). (ISO 26262 is derived from the general IEC 61508, which is also used and derived for other markets with safety-critical applications, such as rail and nuclear). This paper will explain the motivation behind the guidance and identify approaches that may be used to address concerns. Understanding the guidance and constraints will facilitate the selection of an appropriate approach. In the context of this paper, a compiler is a computer program that translates a high-level programming language, such as C, GLSL, or SPIR-V, to a lower-level language, such as object code, that can be used to create an executable program. See Figure 1. Figure 1: Compiler Tool Chain Relative to Development. Compilers perform many or all the following operations: preprocessing, lexical analysis, parsing, semantic analysis, code optimization, and code generation. Typically, compilers have an option to produce an intermediate assembly language output that is a human-readable representation of the executable object code (one to one relationship). Compilers are complex computer programs consisting of millions of lines of code; they are a concern for safety-critical development in that they are tools that generate code embedded in the application, and any error in the generated executable object code can introduce a failure in the safety-critical application.