CRAM: Efficient Hardware-Based Memory Compression For

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Virtual Memory

Chapter 4 Virtual Memory Linux processes execute in a virtual environment that makes it appear as if each process had the entire address space of the CPU available to itself. This virtual address space extends from address 0 all the way to the maximum address. On a 32-bit platform, such as IA-32, the maximum address is 232 − 1or0xffffffff. On a 64-bit platform, such as IA-64, this is 264 − 1or0xffffffffffffffff. While it is obviously convenient for a process to be able to access such a huge ad- dress space, there are really three distinct, but equally important, reasons for using virtual memory. 1. Resource virtualization. On a system with virtual memory, a process does not have to concern itself with the details of how much physical memory is available or which physical memory locations are already in use by some other process. In other words, virtual memory takes a limited physical resource (physical memory) and turns it into an infinite, or at least an abundant, resource (virtual memory). 2. Information isolation. Because each process runs in its own address space, it is not possible for one process to read data that belongs to another process. This improves security because it reduces the risk of one process being able to spy on another pro- cess and, e.g., steal a password. 3. Fault isolation. Processes with their own virtual address spaces cannot overwrite each other’s memory. This greatly reduces the risk of a failure in one process trig- gering a failure in another process. That is, when a process crashes, the problem is generally limited to that process alone and does not cause the entire machine to go down. -

The Case for Compressed Caching in Virtual Memory Systems

THE ADVANCED COMPUTING SYSTEMS ASSOCIATION The following paper was originally published in the Proceedings of the USENIX Annual Technical Conference Monterey, California, USA, June 6-11, 1999 The Case for Compressed Caching in Virtual Memory Systems _ Paul R. Wilson, Scott F. Kaplan, and Yannis Smaragdakis aUniversity of Texas at Austin © 1999 by The USENIX Association All Rights Reserved Rights to individual papers remain with the author or the author's employer. Permission is granted for noncommercial reproduction of the work for educational or research purposes. This copyright notice must be included in the reproduced paper. USENIX acknowledges all trademarks herein. For more information about the USENIX Association: Phone: 1 510 528 8649 FAX: 1 510 548 5738 Email: [email protected] WWW: http://www.usenix.org The Case for Compressed Caching in Virtual Memory Systems Paul R. Wilson, Scott F. Kaplan, and Yannis Smaragdakis Dept. of Computer Sciences University of Texas at Austin Austin, Texas 78751-1182 g fwilson|sfkaplan|smaragd @cs.utexas.edu http://www.cs.utexas.edu/users/oops/ Abstract In [Wil90, Wil91b] we proposed compressed caching for virtual memory—storing pages in compressed form Compressed caching uses part of the available RAM to in a main memory compression cache to reduce disk pag- hold pages in compressed form, effectively adding a new ing. Appel also promoted this idea [AL91], and it was level to the virtual memory hierarchy. This level attempts evaluated empirically by Douglis [Dou93] and by Russi- to bridge the huge performance gap between normal (un- novich and Cogswell [RC96]. Unfortunately Douglis’s compressed) RAM and disk. -

Memory Protection at Option

Memory Protection at Option Application-Tailored Memory Safety in Safety-Critical Embedded Systems – Speicherschutz nach Wahl Auf die Anwendung zugeschnittene Speichersicherheit in sicherheitskritischen eingebetteten Systemen Der Technischen Fakultät der Universität Erlangen-Nürnberg zur Erlangung des Grades Doktor-Ingenieur vorgelegt von Michael Stilkerich Erlangen — 2012 Als Dissertation genehmigt von der Technischen Fakultät Universität Erlangen-Nürnberg Tag der Einreichung: 09.07.2012 Tag der Promotion: 30.11.2012 Dekan: Prof. Dr.-Ing. Marion Merklein Berichterstatter: Prof. Dr.-Ing. Wolfgang Schröder-Preikschat Prof. Dr. Michael Philippsen Abstract With the increasing capabilities and resources available on microcontrollers, there is a trend in the embedded industry to integrate multiple software functions on a single system to save cost, size, weight, and power. The integration raises new requirements, thereunder the need for spatial isolation, which is commonly established by using a memory protection unit (MPU) that can constrain access to the physical address space to a fixed set of address regions. MPU-based protection is limited in terms of available hardware, flexibility, granularity and ease of use. Software-based memory protection can provide an alternative or complement MPU-based protection, but has found little attention in the embedded domain. In this thesis, I evaluate qualitative and quantitative advantages and limitations of MPU-based memory protection and software-based protection based on a multi-JVM. I developed a framework composed of the AUTOSAR OS-like operating system CiAO and KESO, a Java implementation for deeply embedded systems. The framework allows choosing from no memory protection, MPU-based protection, software-based protection, and a combination of the two. -

Virtual Memory

Virtual Memory Copyright ©: University of Illinois CS 241 Staff 1 Address Space Abstraction n Program address space ¡ All memory data ¡ i.e., program code, stack, data segment n Hardware interface (physical reality) ¡ Computer has one small, shared memory How can n Application interface (illusion) we close this gap? ¡ Each process wants private, large memory Copyright ©: University of Illinois CS 241 Staff 2 Address Space Illusions n Address independence ¡ Same address can be used in different address spaces yet remain logically distinct n Protection ¡ One address space cannot access data in another address space n Virtual memory ¡ Address space can be larger than the amount of physical memory on the machine Copyright ©: University of Illinois CS 241 Staff 3 Address Space Illusions: Virtual Memory Illusion Reality Giant (virtual) address space Many processes sharing Protected from others one (physical) address space (Unless you want to share it) Limited memory More whenever you want it Today: An introduction to Virtual Memory: The memory space seen by your programs! Copyright ©: University of Illinois CS 241 Staff 4 Multi-Programming n Multiple processes in memory at the same time n Goals 1. Layout processes in memory as needed 2. Protect each process’s memory from accesses by other processes 3. Minimize performance overheads 4. Maximize memory utilization Copyright ©: University of Illinois CS 241 Staff 5 OS & memory management n Main memory is normally divided into two parts: kernel memory and user memory n In a multiprogramming system, the “user” part of main memory needs to be divided to accommodate multiple processes. n The user memory is dynamically managed by the OS (known as memory management) to satisfy following requirements: ¡ Protection ¡ Relocation ¡ Sharing ¡ Memory organization (main mem. -

Extracting Compressed Pages from the Windows 10 Virtual Store WHITE PAPER | EXTRACTING COMPRESSED PAGES from the WINDOWS 10 VIRTUAL STORE 2

white paper Extracting Compressed Pages from the Windows 10 Virtual Store WHITE PAPER | EXTRACTING COMPRESSED PAGES FROM THE WINDOWS 10 VIRTUAL STORE 2 Abstract Windows 8.1 introduced memory compression in August 2013. By the end of 2013 Linux 3.11 and OS X Mavericks leveraged compressed memory as well. Disk I/O continues to be orders of magnitude slower than RAM, whereas reading and decompressing data in RAM is fast and highly parallelizable across the system’s CPU cores, yielding a significant performance increase. However, this came at the cost of increased complexity of process memory reconstruction and thus reduced the power of popular tools such as Volatility, Rekall, and Redline. In this document we introduce a method to retrieve compressed pages from the Windows 10 Memory Manager Virtual Store, thus providing forensics and auditing tools with a way to retrieve, examine, and reconstruct memory artifacts regardless of their storage location. Introduction Windows 10 moves pages between physical memory and the hard disk or the Store Manager’s virtual store when memory is constrained. Universal Windows Platform (UWP) applications leverage the Virtual Store any time they are suspended (as is the case when minimized). When a given page is no longer in the process’s working set, the corresponding Page Table Entry (PTE) is used by the OS to specify the storage location as well as additional data that allows it to start the retrieval process. In the case of a page file, the retrieval is straightforward because both the page file index and the location of the page within the page file can be directly retrieved. -

![Memory Protection File B [RWX] File D [RW] File F [R] OS GUEST LECTURE](https://docslib.b-cdn.net/cover/5095/memory-protection-file-b-rwx-file-d-rw-file-f-r-os-guest-lecture-255095.webp)

Memory Protection File B [RWX] File D [RW] File F [R] OS GUEST LECTURE

11/17/2018 Operating Systems Protection Goal: Ensure data confidentiality + data integrity + systems availability Protection domain = the set of accessible objects + access rights File A [RW] File C [R] Printer 1 [W] File E [RX] Memory Protection File B [RWX] File D [RW] File F [R] OS GUEST LECTURE XIAOWAN DONG Domain 1 Domain 2 Domain 3 11/15/2018 1 2 Private Virtual Address Space Challenges of Sharing Memory Most common memory protection Difficult to share pointer-based data Process A virtual Process B virtual Private Virtual addr space mechanism in current OS structures addr space addr space Private Page table Physical addr space ◦ Data may map to different virtual addresses Each process has a private virtual in different address spaces Physical address space Physical Access addr space ◦ Set of accessible objects: virtual pages frame no Rights Segment 3 mapped … … Segment 2 ◦ Access rights: access permissions to P1 RW each virtual page Segment 1 P2 RW Segment 1 Recorded in per-process page table P3 RW ◦ Virtual-to-physical translation + P4 RW Segment 2 access permissions … … 3 4 1 11/17/2018 Challenges of Sharing Memory Challenges for Memory Sharing Potential duplicate virtual-to-physical Potential duplicate virtual-to-physical translation information for shared Process A page Physical Process B page translation information for shared memory table addr space table memory Processor ◦ Page table is per-process at page ◦ Single copy of the physical memory, multiple granularity copies of the mapping info (even if identical) TLB L1 cache -

Paging: Smaller Tables

20 Paging: Smaller Tables We now tackle the second problem that paging introduces: page tables are too big and thus consume too much memory. Let’s start out with a linear page table. As you might recall1, linear page tables get pretty 32 12 big. Assume again a 32-bit address space (2 bytes), with 4KB (2 byte) pages and a 4-byte page-table entry. An address space thus has roughly 232 one million virtual pages in it ( 212 ); multiply by the page-table entry size and you see that our page table is 4MB in size. Recall also: we usually have one page table for every process in the system! With a hundred active processes (not uncommon on a modern system), we will be allocating hundreds of megabytes of memory just for page tables! As a result, we are in search of some techniques to reduce this heavy burden. There are a lot of them, so let’s get going. But not before our crux: CRUX: HOW TO MAKE PAGE TABLES SMALLER? Simple array-based page tables (usually called linear page tables) are too big, taking up far too much memory on typical systems. How can we make page tables smaller? What are the key ideas? What inefficiencies arise as a result of these new data structures? 20.1 Simple Solution: Bigger Pages We could reduce the size of the page table in one simple way: use bigger pages. Take our 32-bit address space again, but this time assume 16KB pages. We would thus have an 18-bit VPN plus a 14-bit offset. -

HALO: Post-Link Heap-Layout Optimisation

HALO: Post-Link Heap-Layout Optimisation Joe Savage Timothy M. Jones University of Cambridge, UK University of Cambridge, UK [email protected] [email protected] Abstract 1 Introduction Today, general-purpose memory allocators dominate the As the gap between memory and processor speeds continues landscape of dynamic memory management. While these so- to widen, efficient cache utilisation is more important than lutions can provide reasonably good behaviour across a wide ever. While compilers have long employed techniques like range of workloads, it is an unfortunate reality that their basic-block reordering, loop fission and tiling, and intelligent behaviour for any particular workload can be highly subop- register allocation to improve the cache behaviour of pro- timal. By catering primarily to average and worst-case usage grams, the layout of dynamically allocated memory remains patterns, these allocators deny programs the advantages of largely beyond the reach of static tools. domain-specific optimisations, and thus may inadvertently Today, when a C++ program calls new, or a C program place data in a manner that hinders performance, generating malloc, its request is satisfied by a general-purpose allocator unnecessary cache misses and load stalls. with no intimate knowledge of what the program does or To help alleviate these issues, we propose HALO: a post- how its data objects are used. Allocations are made through link profile-guided optimisation tool that can improve the fixed, lifeless interfaces, and fulfilled by -

Unikernel Monitors: Extending Minimalism Outside of the Box

Unikernel Monitors: Extending Minimalism Outside of the Box Dan Williams Ricardo Koller IBM T.J. Watson Research Center Abstract Recently, unikernels have emerged as an exploration of minimalist software stacks to improve the security of ap- plications in the cloud. In this paper, we propose ex- tending the notion of minimalism beyond an individual virtual machine to include the underlying monitor and the interface it exposes. We propose unikernel monitors. Each unikernel is bundled with a tiny, specialized mon- itor that only contains what the unikernel needs both in terms of interface and implementation. Unikernel mon- itors improve isolation through minimal interfaces, re- Figure 1: The unit of execution in the cloud as (a) a duce complexity, and boot unikernels quickly. Our ini- unikernel, built from only what it needs, running on a tial prototype, ukvm, is less than 5% the code size of a VM abstraction; or (b) a unikernel running on a spe- traditional monitor, and boots MirageOS unikernels in as cialized unikernel monitor implementing only what the little as 10ms (8× faster than a traditional monitor). unikernel needs. 1 Introduction the application (unikernel) and the rest of the system, as defined by the virtual hardware abstraction, minimal? Minimal software stacks are changing the way we think Can application dependencies be tracked through the in- about assembling applications for the cloud. A minimal terface and even define a minimal virtual machine mon- amount of software implies a reduced attack surface and itor (or in this case a unikernel monitor) for the applica- a better understanding of the system, leading to increased tion, thus producing a maximally isolated, minimal exe- security. -

Virtual Memory - Paging

Virtual memory - Paging Johan Montelius KTH 2020 1 / 32 The process code heap (.text) data stack kernel 0x00000000 0xC0000000 0xffffffff Memory layout for a 32-bit Linux process 2 / 32 Segments - a could be solution Processes in virtual space Address translation by MMU (base and bounds) Physical memory 3 / 32 one problem Physical memory External fragmentation: free areas of free space that is hard to utilize. Solution: allocate larger segments ... internal fragmentation. 4 / 32 another problem virtual space used code We’re reserving physical memory that is not used. physical memory not used? 5 / 32 Let’s try again It’s easier to handle fixed size memory blocks. Can we map a process virtual space to a set of equal size blocks? An address is interpreted as a virtual page number (VPN) and an offset. 6 / 32 Remember the segmented MMU MMU exception no virtual addr. offset yes // < within bounds index + physical address segment table 7 / 32 The paging MMU MMU exception virtual addr. offset // VPN available ? + physical address page table 8 / 32 the MMU exception exception virtual address within bounds page available Segmentation Paging linear address physical address 9 / 32 a note on the x86 architecture The x86-32 architecture supports both segmentation and paging. A virtual address is translated to a linear address using a segmentation table. The linear address is then translated to a physical address by paging. Linux and Windows do not use use segmentation to separate code, data nor stack. The x86-64 (the 64-bit version of the x86 architecture) has dropped many features for segmentation. -

Chapter 4 Memory Management and Virtual Memory Asst.Prof.Dr

Chapter 4 Memory management and Virtual Memory Asst.Prof.Dr. Supakit Nootyaskool IT-KMITL Object • To discuss the principle of memory management. • To understand the reason that memory partitions are importance for system organization. • To describe the concept of Paging and Segmentation. 4.1 Difference in main memory in the system • The uniprogramming system (example in DOS) allows only a program to be present in memory at a time. All resources are provided to a program at a time. Example in a memory has a program and an OS running 1) Operating system (on Kernel space) 2) A running program (on User space) • The multiprogramming system is difference from the mentioned above by allowing programs to be present in memory at a time. All resource must be organize and sharing to programs. Example by two programs are in the memory . 1) Operating system (on Kernel space) 2) Running programs (on User space + running) 3) Programs are waiting signals to execute in CPU (on User space). The multiprogramming system uses memory management to organize location to the programs 4.2 Memory management terms Frame Page Segment 4.2 Memory management terms Frame Page A fixed-lengthSegment block of main memory. 4.2 Memory management terms Frame Page A fixed-length block of data that resides in secondary memory. A page of data may temporarily beSegment copied into a frame of main memory. A variable-lengthMemory management block of data that residesterms in secondary memory. A segment may temporarily be copied into an available region of main memory or the segment may be divided into pages which can be individuallyFrame copied into mainPage memory. -

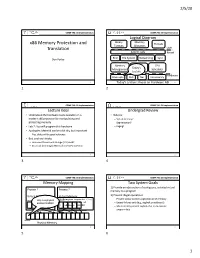

X86 Memory Protection and Translation

2/5/20 COMP 790: OS Implementation COMP 790: OS Implementation Logical Diagram Binary Memory x86 Memory Protection and Threads Formats Allocators Translation User System Calls Kernel Don Porter RCU File System Networking Sync Memory Device CPU Today’s Management Drivers Scheduler Lecture Hardware Interrupts Disk Net Consistency 1 Today’s Lecture: Focus on Hardware ABI 2 1 2 COMP 790: OS Implementation COMP 790: OS Implementation Lecture Goal Undergrad Review • Understand the hardware tools available on a • What is: modern x86 processor for manipulating and – Virtual memory? protecting memory – Segmentation? • Lab 2: You will program this hardware – Paging? • Apologies: Material can be a bit dry, but important – Plus, slides will be good reference • But, cool tech tricks: – How does thread-local storage (TLS) work? – An actual (and tough) Microsoft interview question 3 4 3 4 COMP 790: OS Implementation COMP 790: OS Implementation Memory Mapping Two System Goals 1) Provide an abstraction of contiguous, isolated virtual Process 1 Process 2 memory to a program Virtual Memory Virtual Memory 2) Prevent illegal operations // Program expects (*x) – Prevent access to other application or OS memory 0x1000 Only one physical 0x1000 address 0x1000!! // to always be at – Detect failures early (e.g., segfault on address 0) // address 0x1000 – More recently, prevent exploits that try to execute int *x = 0x1000; program data 0x1000 Physical Memory 5 6 5 6 1 2/5/20 COMP 790: OS Implementation COMP 790: OS Implementation Outline x86 Processor Modes • x86