GPU Hardware CS 380P

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Gs-35F-4677G

March 2013 NCS Technologies, Inc. Information Technology (IT) Schedule Contract Number: GS-35F-4677G FEDERAL ACQUISTIION SERVICE INFORMATION TECHNOLOGY SCHEDULE PRICELIST GENERAL PURPOSE COMMERCIAL INFORMATION TECHNOLOGY EQUIPMENT Special Item No. 132-8 Purchase of Hardware 132-8 PURCHASE OF EQUIPMENT FSC CLASS 7010 – SYSTEM CONFIGURATION 1. End User Computer / Desktop 2. Professional Workstation 3. Server 4. Laptop / Portable / Notebook FSC CLASS 7-25 – INPUT/OUTPUT AND STORAGE DEVICES 1. Display 2. Network Equipment 3. Storage Devices including Magnetic Storage, Magnetic Tape and Optical Disk NCS TECHNOLOGIES, INC. 7669 Limestone Drive Gainesville, VA 20155-4038 Tel: (703) 621-1700 Fax: (703) 621-1701 Website: www.ncst.com Contract Number: GS-35F-4677G – Option Year 3 Period Covered by Contract: May 15, 1997 through May 14, 2017 GENERAL SERVICE ADMINISTRATION FEDERAL ACQUISTIION SERVICE Products and ordering information in this Authorized FAS IT Schedule Price List is also available on the GSA Advantage! System. Agencies can browse GSA Advantage! By accessing GSA’s Home Page via Internet at www.gsa.gov. TABLE OF CONTENTS INFORMATION FOR ORDERING OFFICES ............................................................................................................................................................................................................................... TC-1 SPECIAL NOTICE TO AGENCIES – SMALL BUSINESS PARTICIPATION 1. Geographical Scope of Contract ............................................................................................................................................................................................................................. -

Data Sheet: Quadro GV100

REINVENTING THE WORKSTATION WITH REAL-TIME RAY TRACING AND AI NVIDIA QUADRO GV100 The Power To Accelerate AI- FEATURES > Four DisplayPort 1.4 Enhanced Workflows Connectors3 The NVIDIA® Quadro® GV100 reinvents the workstation > DisplayPort with Audio to meet the demands of AI-enhanced design and > 3D Stereo Support with Stereo Connector3 visualization workflows. It’s powered by NVIDIA Volta, > NVIDIA GPUDirect™ Support delivering extreme memory capacity, scalability, and > NVIDIA NVLink Support1 performance that designers, architects, and scientists > Quadro Sync II4 Compatibility need to create, build, and solve the impossible. > NVIDIA nView® Desktop SPECIFICATIONS Management Software GPU Memory 32 GB HBM2 Supercharge Rendering with AI > HDCP 2.2 Support Memory Interface 4096-bit > Work with full fidelity, massive datasets 5 > NVIDIA Mosaic Memory Bandwidth Up to 870 GB/s > Enjoy fluid visual interactivity with AI-accelerated > Dedicated hardware video denoising encode and decode engines6 ECC Yes NVIDIA CUDA Cores 5,120 Bring Optimal Designs to Market Faster > Work with higher fidelity CAE simulation models NVIDIA Tensor Cores 640 > Explore more design options with faster solver Double-Precision Performance 7.4 TFLOPS performance Single-Precision Performance 14.8 TFLOPS Enjoy Ultimate Immersive Experiences Tensor Performance 118.5 TFLOPS > Work with complex, photoreal datasets in VR NVIDIA NVLink Connects 2 Quadro GV100 GPUs2 > Enjoy optimal NVIDIA Holodeck experience NVIDIA NVLink bandwidth 200 GB/s Realize New Opportunities with AI -

NVIDIA Quadro P4000

NVIDIA Quadro P4000 GP104 1792 112 64 8192 MB GDDR5 256 bit GRAPHICS PROCESSOR CORES TMUS ROPS MEMORY SIZE MEMORY TYPE BUS WIDTH The Quadro P4000 is a professional graphics card by NVIDIA, launched in February 2017. Built on the 16 nm process, and based on the GP104 graphics processor, the card supports DirectX 12.0. The GP104 graphics processor is a large chip with a die area of 314 mm² and 7,200 million transistors. Unlike the fully unlocked GeForce GTX 1080, which uses the same GPU but has all 2560 shaders enabled, NVIDIA has disabled some shading units on the Quadro P4000 to reach the product's target shader count. It features 1792 shading units, 112 texture mapping units and 64 ROPs. NVIDIA has placed 8,192 MB GDDR5 memory on the card, which are connected using a 256‐bit memory interface. The GPU is operating at a frequency of 1227 MHz, which can be boosted up to 1480 MHz, memory is running at 1502 MHz. We recommend the NVIDIA Quadro P4000 for gaming with highest details at resolutions up to, and including, 5760x1080. Being a single‐slot card, the NVIDIA Quadro P4000 draws power from 1x 6‐pin power connectors, with power draw rated at 105 W maximum. Display outputs include: 4x DisplayPort. Quadro P4000 is connected to the rest of the system using a PCIe 3.0 x16 interface. The card measures 241 mm in length, and features a single‐slot cooling solution. Graphics Processor Graphics Card GPU Name: GP104 Released: Feb 6th, 2017 Architecture: Pascal Production Active Status: Process Size: 16 nm Bus Interface: PCIe 3.0 x16 Transistors: 7,200 -

Nvidia Tesla P40 Gpu Accelerator

NVIDIA TESLA P40 GPU ACCELERATOR HIGH-PERFORMANCE VIRTUAL GRAPHICS AND COMPUTE NVIDIA redefined visual computing by giving designers, engineers, scientists, and graphic artists the power to take on the biggest visualization challenges with immersive, interactive, photorealistic environments. NVIDIA® Quadro® Virtual Data GPU 1 NVIDIA Pascal GPU Center Workstation (Quadro vDWS) takes advantage of NVIDIA® CUDA Cores 3,840 Tesla® GPUs to deliver virtual workstations from the data center. Memory Size 24 GB GDDR5 H.264 1080p30 streams 24 Architects, engineers, and designers are now liberated from Max vGPU instances 24 (1 GB Profile) their desks and can access applications and data anywhere. vGPU Profiles 1 GB, 2 GB, 3 GB, 4 GB, 6 GB, 8 GB, 12 GB, 24 GB ® ® The NVIDIA Tesla P40 GPU accelerator works with NVIDIA Form Factor PCIe 3.0 Dual Slot Quadro vDWS software and is the first system to combine an (rack servers) Power 250 W enterprise-grade visual computing platform for simulation, Thermal Passive HPC rendering, and design with virtual applications, desktops, and workstations. This gives organizations the freedom to virtualize both complex visualization and compute (CUDA and OpenCL) workloads. The NVIDIA® Tesla® P40 taps into the industry-leading NVIDIA Pascal™ architecture to deliver up to twice the professional graphics performance of the NVIDIA® Tesla® M60 (Refer to Performance Graph). With 24 GB of framebuffer and 24 NVENC encoder sessions, it supports 24 virtual desktops (1 GB profile) or 12 virtual workstations (2 GB profile), providing the best end-user scalability per GPU. This powerful GPU also supports eight different user profiles, so virtual GPU resources can be efficiently provisioned to meet the needs of the user. -

4 Reasons Why Pny Is the Right Choice Nvidia Quadro Rtx 8000

4 REASONS WHY PNY IS THE RIGHT CHOICE GOVERNMENT AND DEFENSE PROGRAMS 1. PNY OFFERS SPECIAL GOVERNMENT PRICING PNY offers a special discount to all qualified government and educational customers on NVIDIA Quadro* professional graphics solutions. This discount is available through participating distributors only. To see if you qualify, contact your PNY account manager at [email protected]. 2. PNY OFFERS A FULL RANGE OF GPU PRODUCTS AND SOLUTIONS PNY offers a full line of professional GPU solutions to meet any project need, including the NVIDIA® Quadro® line of professional graphics solutions. NVIDIA Quadro is the world’s most advanced and trusted graphics accelerator of professional workflows. 3. PNY OFFERS PRODUCTS THAT ARE USED IN MANY GOVERNMENT AND PUBLIC SECTORS Whether it’s for CAD, Computation, Artificial Intelligence, Virtual Reality or even Scientific Visualization, our professional graphics solutions are certified on over 100+ industry leading applications and can be found supporting all levels of government and public sectors: • AVIATION • GOVERNMENT AGENCIES • MEDICAL • DEFENSE/MILITARY • INTELLIGENCE • UNIVERSITY RESEARCH 4. PNY OFFERS QUADRO RTX 8000 FOR SUPERCOMPUTING, CAE AND DEEP LEARNING (AI) NVIDIA QUADRO RTX 8000 The RTX 8000, powered by NVIDIA’s Turing GPU architecture, with RT Cores and Tensor Cores, delivers cinematic quality physically-based rendering, with AI denoising enhancements. New solutions ranging from generative design to Data Science are opened up by the RTX 8000’s amazing new capabilities. With unmatched mixed precision and Tensor compute, real-time ray tracing, and advanced AI on a single board, the RTX 8000 is the perfect upgrade to existing Quadro P6000 and GV100 use cases for demanding creative and design professionals. -

Datasheet Quadro K600

ACCELERATE YOUR CREATIVITY NVIDIA® QUADRO® K620 Accelerate your creativity with FEATURES ® ® > DisplayPort 1.2 Connector NVIDIA Quadro —the world’s most > DisplayPort with Audio > DVI-I Dual-Link Connector 1 powerful workstation graphics. > VGA Support ™ The NVIDIA Quadro K620 offers impressive > NVIDIA nView Desktop Management Software power-efficient 3D application performance and Compatibility capability. 2 GB of DDR3 GPU memory with fast > HDCP Support bandwidth enables you to create large, complex 3D > NVIDIA Mosaic2 SPECIFICATIONS models, and a flexible single-slot and low-profile GPU Memory 2 GB DDR3 form factor makes it compatible with even the most Memory Interface 128-bit space and power-constrained chassis. Plus, an all-new display engine drives up to four displays with Memory Bandwidth 29.0 GB/s DisplayPort 1.2 support for ultra-high resolutions like NVIDIA CUDA® Cores 384 3840x2160 @ 60 Hz with 30-bit color. System Interface PCI Express 2.0 x16 Quadro cards are certified with a broad range of Max Power Consumption 45 W sophisticated professional applications, tested by Thermal Solution Ultra-Quiet Active leading workstation manufacturers, and backed by Fansink a global team of support specialists, giving you the Form Factor 2.713” H × 6.3” L, Single Slot, Low Profile peace of mind to focus on doing your best work. Whether you’re developing revolutionary products or Display Connectors DVI-I DL + DP 1.2 telling spectacularly vivid visual stories, Quadro gives Max Simultaneous Displays 2 direct, 4 DP 1.2 you the performance to do it brilliantly. Multi-Stream Max DP 1.2 Resolution 3840 x 2160 at 60 Hz Max DVI-I DL Resolution 2560 × 1600 at 60 Hz Max DVI-I SL Resolution 1920 × 1200 at 60 Hz Max VGA Resolution 2048 × 1536 at 85 Hz Graphics APIs Shader Model 5.0, OpenGL 4.53, DirectX 11.24, Vulkan 1.03 Compute APIs CUDA, DirectCompute, OpenCL™ 1 Via supplied adapter/connector/bracket | 2 Windows 7, 8, 8.1 and Linux | 3 Product is based on a published Khronos Specification, and is expected to pass the Khronos Conformance Testing Process when available. -

NVIDIA Quadro P620

UNMATCHED POWER. UNMATCHED CREATIVE FREEDOM. NVIDIA® QUADRO® P620 Powerful Professional Graphics with FEATURES > Four Mini DisplayPort 1.4 Expansive 4K Visual Workspace Connectors1 > DisplayPort with Audio The NVIDIA Quadro P620 combines a 512 CUDA core > NVIDIA nView® Desktop Pascal GPU, large on-board memory and advanced Management Software display technologies to deliver amazing performance > HDCP 2.2 Support for a range of professional workflows. 2 GB of ultra- > NVIDIA Mosaic2 fast GPU memory enables the creation of complex 2D > Dedicated hardware video encode and decode engines3 and 3D models and a flexible single-slot, low-profile SPECIFICATIONS form factor makes it compatible with even the most GPU Memory 2 GB GDDR5 space and power-constrained chassis. Support for Memory Interface 128-bit up to four 4K displays (4096x2160 @ 60 Hz) with HDR Memory Bandwidth Up to 80 GB/s color gives you an expansive visual workspace to view NVIDIA CUDA® Cores 512 your creations in stunning detail. System Interface PCI Express 3.0 x16 Quadro cards are certified with a broad range of Max Power Consumption 40 W sophisticated professional applications, tested by Thermal Solution Active leading workstation manufacturers, and backed by Form Factor 2.713” H x 5.7” L, a global team of support specialists, giving you the Single Slot, Low Profile peace of mind to focus on doing your best work. Display Connectors 4x Mini DisplayPort 1.4 Whether you’re developing revolutionary products or Max Simultaneous 4 direct, 4x DisplayPort telling spectacularly vivid visual stories, Quadro gives Displays 1.4 Multi-Stream you the performance to do it brilliantly. -

Nvidia Quadro T1000

NVIDIA professional laptop GPUs power the world’s most advanced thin and light mobile workstations and unique compact devices to meet the visual computing needs of professionals across a wide range of industries. The latest generation of NVIDIA RTX professional laptop GPUs, built on the NVIDIA Ampere architecture combine the latest advancements in real-time ray tracing, advanced shading, and AI-based capabilities to tackle the most demanding design and visualization workflows on the go. With the NVIDIA PROFESSIONAL latest graphics technology, enhanced performance, and added compute power, NVIDIA professional laptop GPUs give designers, scientists, and artists the tools they need to NVIDIA MOBILE GRAPHICS SOLUTIONS work efficiently from anywhere. LINE CARD GPU SPECIFICATIONS PERFORMANCE OPTIONS 2 1 ® / TXAA™ Anti- ® ™ 3 4 * 5 NVIDIA FXAA Aliasing Manager NVIDIA RTX Desktop Support Vulkan NVIDIA Optimus NVIDIA CUDA NVIDIA RT Cores Cores Tensor GPU Memory Memory Bandwidth* Peak Memory Type Memory Interface Consumption Max Power TGP DisplayPort Open GL Shader Model DirectX PCIe Generation Floating-Point Precision Single Peak)* (TFLOPS, Performance (TFLOPS, Performance Tensor Peak) Gen MAX-Q Technology 3rd NVENC / NVDEC Processing Cores Processing Laptop GPUs 48 (2nd 192 (3rd NVIDIA RTX A5000 6,144 16 GB 448 GB/s GDDR6 256-bit 80 - 165 W* 1.4 4.6 7.0 12 Ultimate 4 21.7 174.0 Gen) Gen) 40 (2nd 160 (3rd NVIDIA RTX A4000 5,120 8 GB 384 GB/s GDDR6 256-bit 80 - 140 W* 1.4 4.6 7.0 12 Ultimate 4 17.8 142.5 Gen) Gen) 32 (2nd 128 (3rd NVIDIA RTX A3000 -

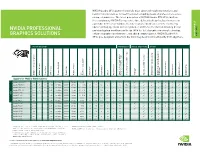

NVIDIA Professional Graphics Solutions | Line Card

You need to do great things. Create and collaborate from anywhere, on any device, without distractions like slow performance, poor stability, or application incompatibility. NVIDIA® Quadro® is the technology that lets you unleash your vision and enjoy the ultimate creative freedom. NVIDIA Quadro provides a wide range of solutions that bring the power of NVIDIA RTX™ to millions of professionals, on the go, on desktop, or in the data center. Leverage the latest advancements in AI, virtual reality (VR), and interactive, photorealistic rendering so you can develop revolutionary NVIDIA PROFESSIONAL products, tell vivid visual stories, and design groundbreaking architecture like never before. GRAPHICS SOLUTIONS Support for advanced features, frameworks, and SDKs across all of our products gives you the power to tackle the most challenging visual computing tasks, no matter the scale. Quadro in Mobile Workstations Quadro in Desktop Workstations Quadro in Servers Professionals today are increasingly working on complex workflows Drive the most challenging workloads with Quadro RTX powered Demand for visualization, rendering, data science, and simulation like VR, 8K video editing, and photorealistic rendering on the go. desktop workstations designed and built specifically for artists, continues to grow as businesses tackle larger, more complex Quadro RTX™ mobile GPUs deliver desktop-level performance in designers, and engineers. Connect multiple Quadro RTX GPUs to workloads than ever before. Scale up your visual compute a portable form factor. With up to 24GB of massive GPU memory, scale up to 96 gigabytes (GB) of GPU memory and performance infrastructure and tackle graphics intensive workloads, complex Quadro RTX mobile GPUs combine the latest advancements in real- to tackle the largest workloads and speed up your workflow. -

NVIDIA Professional Graphics Solutions | Line Card

NVIDIA Quadro GPUs power the world’s most advanced mobile workstations and new form-factor devices to meet the visual computing needs of professionals across a range of industries. The latest generation of NVIDIA Quadro RTX GPUs, built on the revolutionary NVIDIA Turing architecture, deliver desktop-level performance in a portable form factor. Combine the latest advancements in real-time ray tracing, advanced shading, and AI-based capabilities and tackle the most demanding design NVIDIA PROFESSIONAL and visualization workflows on the go. With the latest graphics memory technology, enhanced graphics performance, and added compute power, NVIDIA Quadro RTX QUADRO MOBILE QUADRO GRAPHICS SOLUTIONS CARD LINE GPUs give designers and artists the tools they need to work efficiently from anywhere. GPU SPECIFICATIONS PERFORMANCE VIRTUAL REALITY (VR) OPTIONS 4 1 2 5 RT Cores 3 ® Tensor Cores Tensor GPU Memory Memory Bandwidth Memory Type Memory Interface TGP Consumption Max Power DisplayPort NVIDIA CUDA Processing Cores Processing NVIDIA CUDA NVIDIA OpenGL Shader Model DirectX PCIe Generation Floating-Point Precision Single Peak) (TFLOPS, Performance Peak) (TOPS, Performance Tensor VR Ready Multi-Projection Simultaneous NVIDIA FXAA / TXAA Antialiasing Display Management NVIDIA nView Technology Video for GPUDirect Support Vulkan NVIDIA 3D Vision Pro NVIDIA Optimus Quadro for Mobile Workstations Quadro RTX 6000 4,608 72 576 24 GB 672 GBps GDDR6 384-bit 250 W 1.4 4.6 5.1 12.1 3 14.9 119.4 Quadro RTX 5000 3,072 48 384 16 GB 448 GBps GDDR6 256-bit 80 -

Quadro Rtx 8000

THE WORLD’S FIRST RAY TRACING GPU NVIDIA QUADRO RTX 8000 REAL TIME RAY TRACING FEATURES > Four DisplayPort 1.4 FOR PROFESSIONALS Connectors 3 Experience unbeatable performance, power, and memory > VirtualLink Connector ® ™ > DisplayPort with Audio with the NVIDIA Quadro RTX 8000, the world’s most > VGA Support4 powerful graphics card for professional workflows. The > 3D Stereo Support with Quadro RTX 8000 is powered by the NVIDIA Turing™ Stereo Connector4 architecture and NVIDIA RTX™ platform to deliver the > NVIDIA GPUDirect™ Support 5 latest hardware-accelerated ray tracing, deep learning, > Quadro Sync II Compatibility SPECIFICATIONS > NVIDIA nView® Desktop and advanced shading to professionals. Equipped with Management Software GPU Memory 48 GB GDDR6 ® 4608 NVIDIA CUDA cores, 576 Tensor cores, and 72 RT > HDCP 2.2 Support Memory Interface 384-bit 6 Cores, the Quadro RTX 8000 can render complex models > NVIDIA Mosaic Memory Bandwidth 672 GB/s and scenes with physically accurate shadows, reflections, ECC Yes and refractions to empower users with instant insight. NVIDIA CUDA Cores 4,608 Support for NVIDIA NVLink™1 lets applications scale NVIDIA Tensor Cores 576 performance, providing 96 GB of GDDR6 memory with NVIDIA RT Cores 72 multi-GPU configurations2. And with the industry’s first implementation of the new VirtualLink®3 port, the Quadro Single-Precision 16.3 TFLOPS Performance RTX 8000 provides simple connectivity to the next- Tensor Performance 130.5 TFLOPS generation of high-resolution VR head-mounted displays NVIDIA NVLink Connects 2 Quadro to let designers view their work in the most compelling RTX 8000 GPUs1 virtual environments possible. NVIDIA NVLink bandwidth 100 GB/s (bidirectional) Quadro cards are certified with a broad range of System Interface PCI Express 3.0 x 16 sophisticated professional applications, tested by leading Power Consumption Total board power: 295 W workstation manufacturers, and backed by a global Total graphics power: 260 W team of support specialists. -

GPGPU Computing for Next-Generation Mission-Critical Applications

White Paper GPGPU Computing for Next-Generation Mission-Critical Applications Introduction Recent developments in artificial intelligence (AI) and growing demands imposed by both the enterprise and military sectors call for heavy-lift tasks such as: rendering images for video; analysis of high-volumes of data in detecting risks and generating real-time countermeasures demanded by cyber- security; as well as the software-based abstraction of complex hardware systems. While IC-level advancements in compute acceleration are not sufficiently meeting these demands, this discussion proposes heterogeneous computing as an alternative. Among the many benefits that heterogeneous computing offers is the appropriate assignment of workloads to traditional CPUs, task-specific chips such as graphics processing units (GPUs), and the relatively new general purpose GPU (GPGPU). These chipsets, combined with application- specific hardware platforms developed by ADLINK in conjunction with NVIDIA provide solutions offering low power consumption, optimized performance, rugged construction, and small form factors (SFFs) for every industry across the globe. P 1 GPUs for Computing Power Advancement In 2015 former Intel CEO Brian Krzanich announced that the GPUs evolving along different architectural lines than CPUs. doubling time for transistor density had slowed from the two In time, software developers realized that non-graphical years predicted by Moore’s law to two and a half years, and workload types (financial analysis, weather modeling, that their 10nm architecture had fallen still further behind fluid dynamics, signal processing, and so on) could also be that schedule (https://blogs.wsj.com/digits/2015/07/16/intel- processed efficiently on GPUs when dedicated to highly rechisels-the-tablet-on-moores-law/).