Real-Time Operating System

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

A Comprehensive Review for Central Processing Unit Scheduling Algorithm

IJCSI International Journal of Computer Science Issues, Vol. 10, Issue 1, No 2, January 2013, p. 353. ISSN (Print): 1694-0784 | ISSN (Online): 1694-0814. www.IJCSI.org
A Comprehensive Review for Central Processing Unit Scheduling Algorithm. Ryan Richard Guadaña, Maria Rona Perez and Larry Rutaquio Jr., Computer Studies and System Department, University of the East, Caloocan City, 1400, Philippines.
Abstract: This paper describes how the CPU facilitates tasks given by a user through a scheduling algorithm. The CPU carries out each instruction of the program in sequence, then performs the basic arithmetical, logical, and input/output operations of the system, while a scheduling algorithm is used by the CPU to handle every process. The authors also tackle different scheduling disciplines, and examples are provided for each algorithm in order to know which algorithm is appropriate for various CPU goals.
Keywords: Kernel, Process State, Schedulers, Scheduling Algorithm, Utilization.
1. Introduction: The central processing unit (CPU) is a component of a [...] when an attempt is made to execute a program, its admission to the set of currently executing processes is either authorized or delayed by the long-term scheduler. Second is the Mid-term Scheduler that temporarily removes processes from main memory and places them on secondary memory (such as a disk drive) or vice versa. Last is the Short Term Scheduler that decides which of the ready, in-memory processes are to be executed.
2. CPU Utilization: In order for a computer to be able to handle multiple applications simultaneously there must be an effective way of using the CPU. -

A Programmable Microkernel for Real-Time Systems∗

A Programmable Microkernel for Real-Time Systems∗. Christoph M. Kirsch (University of Salzburg), Marco A.A. Sanvido (VMWare Inc.), Thomas A. Henzinger (EPFL and UC Berkeley). [email protected] tah@epfl.ch
ABSTRACT: We present a new software system architecture for the implementation of hard real-time applications. The core of the system is a microkernel whose reactivity (interrupt handling as in synchronous reactive programs) and proactivity (task scheduling as in traditional RTOSs) are fully programmable. The microkernel, which we implemented on a StrongARM processor, consists of two interacting domain-specific virtual machines, a reactive E (Embedded) machine and a proactive S (Scheduling) machine. The microkernel code (or microcode) that runs on the microkernel is partitioned into E and S code. E code manages the interaction of the system with the physical environment: the execution of E code is triggered by environment interrupts, which signal external events such as the arrival of a message or sensor value, and it releases application tasks to the S machine. S code manages the interaction of the system with the processor: the execution of S code is triggered by hardware interrupts, which [...]
Categories and Subject Descriptors: D.4.7 [Operating Systems]: Organization and Design - Real-time systems and embedded systems
General Terms: Languages
Keywords: Real Time, Operating System, Virtual Machine
1. INTRODUCTION: In [9], we advocated the E (Embedded) machine as a portable target for compiling hard real-time code, and introduced, in [11], the S (Scheduling) machine as a universal target for generating schedules according to arbitrary and possibly non-trivial strategies such as nonpreemptive and multiprocessor scheduling. -

The Different Unix Contexts

The different Unix contexts
• User-level
• Kernel "top half": system call, page fault handler, kernel-only process, etc.
• Software interrupt
• Device interrupt
• Timer interrupt (hardclock)
• Context switch code

Transitions between contexts
• User → top half: syscall, page fault
• User/top half → device/timer interrupt: hardware
• Top half → user/context switch: return
• Top half → context switch: sleep
• Context switch → user/top half

Top/bottom half synchronization
• Top half kernel procedures can mask interrupts: int x = splhigh (); /* ... */ splx (x);
• splhigh disables all interrupts, but also splnet, splbio, splsoftnet, ...
• Masking interrupts in hardware can be expensive
  - Optimistic implementation: set mask flag on splhigh, check interrupted flag on splx

Kernel Synchronization
• Need to relinquish CPU when waiting for events
  - Disk read, network packet arrival, pipe write, signal, etc.
• int tsleep(void *ident, int priority, ...);
  - Switches to another process
  - ident is arbitrary pointer, e.g., buffer address
  - priority is priority at which to run when woken up
  - PCATCH, if ORed into priority, means wake up on signal
  - Returns 0 if awakened, or ERESTART/EINTR on signal
• int wakeup(void *ident);
  - Awakens all processes sleeping on ident
  - Restores SPL at the time they went to sleep (so fine to sleep at splhigh)

Process scheduling
• Goal: High throughput
  - Minimize context switches to avoid wasting CPU, TLB misses, cache misses, even page faults
• Goal: Low latency
  - People typing at editors want fast response
  - Network services can be latency-bound, not CPU-bound
• BSD time quantum: 1/10 sec (since ~1980)
  - Empirically longest tolerable latency
  - Computers now faster, but job queues also shorter

Scheduling algorithms
• Round-robin
• Priority scheduling
• Shortest process next (if you can estimate it)
• Fair-Share Schedule (try to be fair at level of users, not processes)

Multilevel feedback queues (BSD)
• Every runnable proc. -
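The tsleep/wakeup pairing in the Kernel Synchronization slides above can be illustrated with a small user-space analogy. This is only a sketch in Python, not BSD kernel code: the class name `SleepQueue`, the `timeout` parameter, and the `-1` timeout return value are assumptions made for illustration. What it keeps from the slides is the idea that sleepers block on an arbitrary `ident` and that a wakeup on that `ident` releases all of them.

```python
import threading
from collections import defaultdict


class SleepQueue:
    """User-space analogy of tsleep()/wakeup(); hypothetical, not kernel code."""

    def __init__(self):
        self._cond = threading.Condition()
        # ident -> number of wakeup() calls seen so far for that ident
        self._generation = defaultdict(int)

    def tsleep(self, ident, timeout=None):
        """Block until wakeup(ident) is called.

        Returns 0 if awakened, or -1 on timeout (the -1 is an assumption
        of this sketch, not a value taken from the slides).
        """
        with self._cond:
            seen = self._generation[ident]
            awakened = self._cond.wait_for(
                lambda: self._generation[ident] != seen, timeout
            )
            return 0 if awakened else -1

    def wakeup(self, ident):
        """Awaken every thread currently sleeping on ident."""
        with self._cond:
            self._generation[ident] += 1
            self._cond.notify_all()
```

A waiter would call `q.tsleep(buffer_id)` and a producer `q.wakeup(buffer_id)` once the event has occurred. Note that a wakeup issued before the sleeper starts waiting is not remembered, which is the lost-wakeup hazard that the kernel avoids by raising the interrupt priority level before sleeping.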

Interrupt Handling in Linux

Department Informatik Technical Reports / ISSN 2191-5008. Valentin Rothberg, Interrupt Handling in Linux, Technical Report CS-2015-07, November 2015. Please cite as: Valentin Rothberg, "Interrupt Handling in Linux," Friedrich-Alexander-Universität Erlangen-Nürnberg, Dept. of Computer Science, Technical Reports, CS-2015-07, November 2015. Friedrich-Alexander-Universität Erlangen-Nürnberg, Department Informatik, Martensstr. 3 · 91058 Erlangen · Germany, www.cs.fau.de. Valentin Rothberg, Distributed Systems and Operating Systems, Dept. of Computer Science, University of Erlangen, Germany, [email protected], November 8, 2015. An interrupt is an event that alters the sequence of instructions executed by a processor and requires immediate attention. When the processor receives an interrupt signal, it may temporarily switch control to an interrupt service routine (ISR), and the suspended process (i.e., the previously running program) will be resumed once the interrupt has been served. The generic term interrupt is oftentimes used synonymously for two terms, interrupts and exceptions [2]. An exception is a synchronous event that occurs when the processor detects an error condition while executing an instruction. Such an error condition may be a division by zero, a page fault, a protection violation, etc. An interrupt, on the other hand, is an asynchronous event that occurs at random times during execution of a program in response to a signal from hardware. A proper and timely handling of interrupts is critical to the performance, but also to the security of a computer system. In general, interrupts can be emitted by hardware as well as by software.
Software interrupts (e.g., via the INT n instruction of the x86 instruction set architecture (ISA) [5]) are means to change the execution context of a program to a more privileged interrupt context in order to enter the kernel and, in contrast to hardware interrupts, occur synchronously to the currently running program. -

Processes Process States

Processes
• A process is a program in execution
• Synonyms include job, task, and unit of work
• Not surprisingly, then, the parts of a process are precisely the parts of a running program:
  - Program code, sometimes called the text section
  - Program counter (where we are in the code) and other registers (data that CPU instructions can touch directly)
  - Stack: for subroutines and their accompanying data
  - Data section: for statically-allocated entities
  - Heap: for dynamically-allocated entities

Process States
• Five states in general, with specific operating systems applying their own terminology and some using a finer level of granularity:
  - New: process is being created
  - Running: CPU is executing the process's instructions
  - Waiting: process is, well, waiting for an event, typically I/O or signal
  - Ready: process is waiting for a processor
  - Terminated: process is done running
• See the text for a general state diagram of how a process moves from one state to another

The Process Control Block (PCB)
• Central data structure for representing a process, a.k.a. task control block
• Consists of any information that varies from process to process: process state, program counter, registers, scheduling information, memory management information, accounting information, I/O status
• The operating system maintains collections of PCBs to track current processes (typically as linked lists)
• System state is saved/loaded to/from PCBs as the CPU goes from process to process; this is called…

The Context Switch
• Context switch is the technical term for the act -
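The five process states and the PCB described in the slides above can be modelled in a few lines. The following is a minimal sketch under stated assumptions: the transition table follows the usual textbook five-state diagram (the slides only refer to "the text" for it), and the names `PCB`, `TRANSITIONS`, `move_to`, and `context_switch`, as well as the dictionary standing in for the CPU, are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    NEW = "new"
    READY = "ready"
    RUNNING = "running"
    WAITING = "waiting"
    TERMINATED = "terminated"


# Allowed moves in the common five-state diagram (an assumption of this sketch).
TRANSITIONS = {
    State.NEW: {State.READY},
    State.READY: {State.RUNNING},
    State.RUNNING: {State.READY, State.WAITING, State.TERMINATED},
    State.WAITING: {State.READY},
    State.TERMINATED: set(),
}


@dataclass
class PCB:
    """Per-process record: only a few of the fields listed in the slides."""
    pid: int
    state: State = State.NEW
    program_counter: int = 0
    registers: dict = field(default_factory=dict)

    def move_to(self, new_state):
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"illegal transition {self.state.name} -> {new_state.name}"
            )
        self.state = new_state


def context_switch(old, new, cpu):
    """Save the CPU state into `old`, then load the state stored in `new`."""
    old.program_counter = cpu["pc"]
    old.registers = dict(cpu["regs"])
    old.move_to(State.READY)
    cpu["pc"] = new.program_counter
    cpu["regs"] = dict(new.registers)
    new.move_to(State.RUNNING)
```

Keeping the legal transitions in a table makes an invalid move, such as waiting directly to running, fail loudly instead of silently corrupting the scheduler's view of the process.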

Chapter 3: Processes

Chapter 3: Processes
Operating System Concepts – 9th Edition, Silberschatz, Galvin and Gagne ©2013

Chapter 3: Processes
• Process Concept
• Process Scheduling
• Operations on Processes
• Interprocess Communication
• Examples of IPC Systems
• Communication in Client-Server Systems

Objectives
• To introduce the notion of a process -- a program in execution, which forms the basis of all computation
• To describe the various features of processes, including scheduling, creation and termination, and communication
• To explore interprocess communication using shared memory and message passing
• To describe communication in client-server systems

Process Concept
• An operating system executes a variety of programs:
  - Batch system – jobs
  - Time-shared systems – user programs or tasks
• Textbook uses the terms job and process almost interchangeably
• Process – a program in execution; process execution must progress in sequential fashion
• Multiple parts
  - The program code, also called text section
  - Current activity including program counter, processor registers
  - Stack containing temporary data: function parameters, return addresses, local variables
  - Data section containing global variables
  - Heap containing memory dynamically allocated during run time

Process Concept (Cont.)
• Program is passive entity stored on disk (executable -

Creating a Custom Embedded Linux Distribution for Any Embedded

Yocto Project Summit: Intro to Yocto Project
Creating a Custom Embedded Linux Distribution for Any Embedded Device Using the Yocto Project
Behan Webster, Tom King, The Linux Foundation, May 25, 2021 (CC BY-SA 4.0)
The URL for this presentation: http://bit.ly/YPS202105Intro

Yocto Project Overview
➢ Collection of tools and methods enabling
  ◆ Rapid evaluation of embedded Linux on many popular off-the-shelf boards
  ◆ Easy customization of distribution characteristics
➢ Supports x86, ARM, MIPS, Power, RISC-V
➢ Based on technology from the OpenEmbedded Project
➢ Layer architecture allows for easy re-use of code (layers shown: other layers, meta-yocto-bsp, meta-poky, meta (oe-core))

What is the Yocto Project?
➢ Umbrella organization under Linux Foundation
➢ Backed by many companies interested in making Embedded Linux easier for the industry
➢ Co-maintains OpenEmbedded Core and other tools (including opkg)

Yocto Project Governance
➢ Organized under the Linux Foundation
➢ Split governance model
➢ Technical Leadership Team
➢ Advisory Board made up of participating organizations

Yocto Project Member Organizations

Yocto Project Overview
➢ YP builds packages - then uses these packages to build bootable images
➢ Supports use of popular package formats including:
  ◆ rpm, deb, ipk
➢ Releases on a 6-month cadence
➢ Latest (stable) kernel, toolchain and packages, documentation
➢ App Development Tools including Eclipse plugin, SDK, toaster -

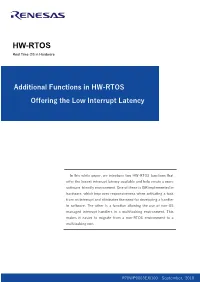

Additional Functions in HW-RTOS Offering the Low Interrupt Latency

HW-RTOS Real Time OS in Hardware. Additional Functions in HW-RTOS Offering the Low Interrupt Latency. In this white paper, we introduce two HW-RTOS functions that offer the lowest interrupt latency available and help create a more software-friendly environment. One of these is ISR implemented in hardware, which improves responsiveness when activating a task from an interrupt and eliminates the need for developing a handler in software. The other is a function allowing the use of non-OS managed interrupt handlers in a multitasking environment. This makes it easier to migrate from a non-RTOS environment to a multitasking one. R70WP0003EJ0100, September, 2018. Multitasking Environment with Lowest Interrupt Latency Offered by HW-RTOS. 1. Executive Summary: In this white paper, we introduce two functions special to HW-RTOS that improve interrupt performance. The first is the HW ISR function. Renesas stylized the ISR (Interrupt Service Routine) process and implemented it in hardware to create their HW ISR. With this function, the task corresponding to the interrupt signal can be activated directly and in real time. And, since the ISR is implemented in the hardware, application software engineers are relieved of the burden of developing a handler. The second is called Direct Interrupt Service. This function is equivalent to allowing a non-OS managed interrupt handler to invoke an API. This function enables synchronization and communication between the non-OS managed interrupt handler and tasks, a benefit not available in conventional software. In other words, it allows the use of non-OS managed interrupt handlers in a multitasking environment. -

Comparing Systems Using Sample Data

Operating System and Process Monitoring Tools Arik Brooks, [email protected] Abstract: Monitoring the performance of operating systems and processes is essential to debug processes and systems, effectively manage system resources, making system decisions, and evaluating and examining systems. These tools are primarily divided into two main categories: real time and log-based. Real time monitoring tools are concerned with measuring the current system state and provide up to date information about the system performance. Log-based monitoring tools record system performance information for post-processing and analysis and to find trends in the system performance. This paper presents a survey of the most commonly used tools for monitoring operating system and process performance in Windows- and Unix-based systems and describes the unique challenges of real time and log-based performance monitoring. See Also: Table of Contents: 1. Introduction 2. Real Time Performance Monitoring Tools 2.1 Windows-Based Tools 2.1.1 Task Manager (taskmgr) 2.1.2 Performance Monitor (perfmon) 2.1.3 Process Monitor (pmon) 2.1.4 Process Explode (pview) 2.1.5 Process Viewer (pviewer) 2.2 Unix-Based Tools 2.2.1 Process Status (ps) 2.2.2 Top 2.2.3 Xosview 2.2.4 Treeps 2.3 Summary of Real Time Monitoring Tools 3. Log-Based Performance Monitoring Tools 3.1 Windows-Based Tools 3.1.1 Event Log Service and Event Viewer 3.1.2 Performance Logs and Alerts 3.1.3 Performance Data Log Service 3.2 Unix-Based Tools 3.2.1 System Activity Reporter (sar) 3.2.2 Cpustat 3.3 Summary of Log-Based Monitoring Tools 4. -

A Simple Chargeback System for SAS® Applications Running on UNIX and Linux Servers

SAS Global Forum 2007 Systems Architecture Paper: 194-2007 A Simple Chargeback System For SAS® Applications Running on UNIX and Linux Servers Michael A. Raithel, Westat, Rockville, MD Abstract Organizations that run SAS on UNIX and Linux servers often have a need to measure overall SAS usage and to charge the projects and users who utilize the servers. This allows an organization to pay for the hardware, software, and labor necessary to maintain and run these types of shared servers. However, performance management and accounting software is normally expensive. Purchasing such software, configuring it, and managing it may be prohibitive in terms of cost and in terms of having staff with the right skill sets to do so. Consequently, many organizations with UNIX or Linux servers do not have a reliable way to measure the overall use of SAS software and to charge individuals and projects for its use. This paper presents a simple methodology for creating a chargeback system on UNIX and Linux servers. It uses basic accounting programs found in the UNIX and Linux operating systems, and exploits them using SAS software. The paper presents an overview of the UNIX/Linux “sa” command and the basic accounting system. Then, it provides a SAS program that utilizes this command to capture and store monthly SAS usage statistics. The paper presents a second SAS program that reads the monthly SAS usage SAS data set and creates both a chargeback report and a general usage report. After reading this paper, you should be able to easily adapt the sample SAS programs to run on servers in your own environment. -

CS 450: Operating Systems Sean Wallace <[email protected]>

Deadlock
CS 450: Operating Systems, Sean Wallace <[email protected]>, Computer Science

deadlock |ˈdedˌläk| noun 1 [ in sing. ] a situation, typically one involving opposing parties, in which no progress can be made: an attempt to break the deadlock. (New Oxford American Dictionary)

Traffic Gridlock

Software Gridlock (two code sequences shown side by side)
  mutex_A.lock()        mutex_B.lock()
  mutex_B.lock()        mutex_A.lock()
  # critical section    # critical section
  mutex_B.unlock()      mutex_B.unlock()
  mutex_A.unlock()      mutex_A.unlock()

Necessary Conditions for Deadlock
That is, what conditions need to be true (of some system) so that deadlock is possible? (Not the same as causing deadlock!)
1. Mutual Exclusion: Resources can be held by process in a mutually exclusive manner
2. Hold & Wait: While holding one resource (in mutex), a process can request another resource
3. No Preemption: One process can not force another to give up a resource; i.e., releasing is voluntary
4. Circular Wait: Resource requests and allocations create a cycle in the resource allocation graph

Resource Allocation Graphs
[Figure legend: Process, Resource, Request, Allocation]
[Figure: graph over R1, R2, R3 and P1, P2, P3] Circular wait is absent = no deadlock
[Figure: graph over R1, R2, R3 and P1, P2, P3] All 4 necessary conditions in place; Deadlock!
In a system with only single-instance resources, necessary conditions ⟺ deadlock
[Figure: graph over P1, P2, P3 and R1, R2] Cycle without Deadlock!
Not practical (or always possible) to detect deadlock using a graph, but convenient to help us reason about things

Approaches to Dealing with Deadlock
1. Ostrich algorithm (Ignore it and hope it never happens)
2. -
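The circular-wait condition in the slides above can be checked mechanically when every resource has a single instance, since in that case a cycle in the resource allocation graph is equivalent to deadlock. The sketch below is one way to do that with a depth-first search; the function name `has_cycle` and the dictionary encoding of the graph (process → requested resources, resource → holding process) are assumptions made for illustration, not something taken from the slides.

```python
def has_cycle(graph):
    """Return True if the directed graph contains a cycle.

    `graph` maps each node to an iterable of successor nodes. For a
    resource allocation graph, a request is an edge process -> resource
    and an allocation is an edge resource -> process.
    """
    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current DFS path, finished
    color = {}

    def visit(node):
        color[node] = GRAY
        for nxt in graph.get(node, ()):
            state = color.get(nxt, WHITE)
            if state == GRAY:
                return True  # back edge: nxt is already on the current path
            if state == WHITE and visit(nxt):
                return True
        color[node] = BLACK
        return False

    return any(
        color.get(node, WHITE) == WHITE and visit(node) for node in list(graph)
    )


# Two processes, each holding one resource and requesting the other.
deadlocked = {"P1": ["R2"], "R2": ["P2"], "P2": ["R1"], "R1": ["P1"]}
# P1 requests R1, which P2 holds; P2 is not waiting for anything.
no_deadlock = {"P1": ["R1"], "R1": ["P2"], "P2": []}
```

As the slides point out, with multi-instance resources a cycle can exist without deadlock, so this check would only be a necessary condition there, not a sufficient one.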

Sched-ITS: an Interactive Tutoring System to Teach CPU Scheduling Concepts in an Operating Systems Course

Wright State University CORE Scholar Browse all Theses and Dissertations Theses and Dissertations 2017 Sched-ITS: An Interactive Tutoring System to Teach CPU Scheduling Concepts in an Operating Systems Course Bharath Kumar Koya Wright State University Follow this and additional works at: https://corescholar.libraries.wright.edu/etd_all Part of the Computer Engineering Commons, and the Computer Sciences Commons Repository Citation Koya, Bharath Kumar, "Sched-ITS: An Interactive Tutoring System to Teach CPU Scheduling Concepts in an Operating Systems Course" (2017). Browse all Theses and Dissertations. 1745. https://corescholar.libraries.wright.edu/etd_all/1745 This Thesis is brought to you for free and open access by the Theses and Dissertations at CORE Scholar. It has been accepted for inclusion in Browse all Theses and Dissertations by an authorized administrator of CORE Scholar. For more information, please contact [email protected]. SCHED – ITS: AN INTERACTIVE TUTORING SYSTEM TO TEACH CPU SCHEDULING CONCEPTS IN AN OPERATING SYSTEMS COURSE A thesis submitted in partial fulfillment of the requirements for the degree of Master of Science By BHARATH KUMAR KOYA B.E, Andhra University, India, 2015 2017 Wright State University WRIGHT STATE UNIVERSITY GRADUATE SCHOOL April 24, 2017 I HEREBY RECOMMEND THAT THE THESIS PREPARED UNDER MY SUPERVISION BY Bharath Kumar Koya ENTITLED SCHED-ITS: An Interactive Tutoring System to Teach CPU Scheduling Concepts in an Operating System Course BE ACCEPTED IN PARTIAL FULFILLMENT OF THE REQIREMENTS FOR THE DEGREE OF Master of Science. _____________________________________ Adam R. Bryant, Ph.D. Thesis Director _____________________________________ Mateen M. Rizki, Ph.D. Chair, Department of Computer Science and Engineering Committee on Final Examination _____________________________________ Adam R.