Design Patterns (2)



Recommended publications


SRM University Faculty of Engineering and Technology

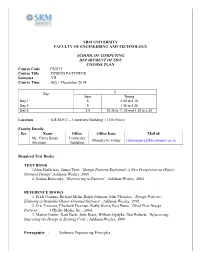

SRM UNIVERSITY
FACULTY OF ENGINEERING AND TECHNOLOGY
SCHOOL OF COMPUTING, DEPARTMENT OF SWE
COURSE PLAN

Course Code: CS0373
Course Title: DESIGN PATTERNS
Semester: VII
Course Time: July – December 2014

Schedule (C), by Day, Hour, Timing:
Day 1, Hour 6: 2.20 to 3.10
Day 3, Hour 5: 1.30 to 2.20
Day 5, Hours 3 and 5: 10.35 to 11.25 and 1.30 to 2.20

Location: S.R.M.E.C – University Building (12th Floor)

Faculty Details
Name: Ms. Cinza Susan Abraham
Office: University Building
Office hour: Monday to Friday
Mail id: [email protected]

Required Text Books

TEXT BOOKS
1. Alan Shalloway, James Trott, “Design Patterns Explained: A New Perspective on Object-Oriented Design”, Addison-Wesley, 2005.
2. Joshua Kerievsky, “Refactoring to Patterns”, Addison-Wesley, 2004.

REFERENCE BOOKS
1. Erich Gamma, Richard Helm, Ralph Johnson, John Vlissides, “Design Patterns: Elements of Reusable Object-Oriented Software”, Addison-Wesley, 1995.
2. Eric Freeman, Elisabeth Freeman, Kathy Sierra, Bert Bates, “Head First Design Patterns”, O'Reilly Media, Inc., 2004.
3. Martin Fowler, Kent Beck, John Brant, William Opdyke, Don Roberts, “Refactoring: Improving the Design of Existing Code”, Addison-Wesley, 1999.

Prerequisites: Software Engineering Principles, Software Architecture, Software Design

Objectives
This course enables students to:
1. Understand and be able to apply incremental/iterative development
2. Understand common design patterns
3. Identify appropriate design patterns for various problems
4. Refactor poorly designed programs by using appropriate design patterns

Assessment Details
Cycle Test I: 10 Marks
Cycle Test II: 10 Marks
Model Exam: 20 Marks
Surprise Test: 5 Marks
Attendance: 5 Marks

Test Schedule S.No. -

Design Patterns. Introduction

DESIGN PATTERNS
COURSE 1

ORGANIZATION
- Course: each week, 2 hours, Room 050A
- Laboratory: each odd/even school week, Room 050A
- Presence
  - Course: minimum 50%
  - Laboratory: minimum 50%
- Grade
  - Written exam 50%
  - Course activity 1% + laboratory activity 24%
  - Presentation of a pattern 10%
  - Project 15%
- Course & laboratories: available at http://staff.fmi.uvt.ro/~flavia.micota/
- Contact: e-mail [email protected], cab. 046B
- Classroom: 1rcgcs

COURSE CONTENT
- Design patterns
  - Creational
  - Structural
  - Behavioral
- Refactoring
- Anti-patterns
- Student presentations of a pattern

WHY DID YOU CHOOSE THIS COURSE?
- Some reasons from http://www.ida.liu.se/~chrke55/courses/SWE/bunus/DP01_1slide.pdf
  - I could get some easy points.
  - Everybody is talking about it, so it must be cool.
  - If I master this, I can add it to my CV.
  - Increase my salary at the company.
  - Applying patterns is easier than thinking.
  - A great place to pick up ideas to plagiarize.

DESIGN CHALLENGES
- Designing software with good modularity is hard!
- Designs often emerge from a lot of trial and error.
- Are there solutions to common recurring problems?
- A Design Pattern is a Tried and True Solution To a Common Problem.
- Basically, people who have done this a lot are making a suggestion!

SOURCE CODE QUALITY
- What characteristics should be respected in order to deliver quality source code for a project?
  - Easy to read/understand (clear)
  - Easy to modify (structured)
  - Easy to reuse
  - Simple (complexity)
  - Easy to test
  - Implements patterns for standard problems

SOURCE CODE QUALITY
- What influences source code quality?
  - Development time
  - Costs
  - Programmer experience
  - Programmer abilities
  - Specifications clarity
  - Solution complexity
  - Requirements change rate, team, …

PATTERNS
- A pattern is a recurring solution to a standard problem, in a context. -

DesignPatternsPHP Documentation Release 1.0

DesignPatternsPHP Documentation
Release 1.0
Dominik Liebler and contributors
Jul 18, 2021

Contents

1 Patterns
  1.1 Creational
    1.1.1 Abstract Factory
    1.1.2 Builder
    1.1.3 Factory Method
    1.1.4 Pool
    1.1.5 Prototype
    1.1.6 Simple Factory
    1.1.7 Singleton
    1.1.8 Static Factory
  1.2 Structural
    1.2.1 Adapter / Wrapper
    1.2.2 Bridge
    1.2.3 Composite
    1.2.4 Data Mapper
    1.2.5 Decorator
    1.2.6 Dependency Injection
    1.2.7 Facade
    1.2.8 Fluent Interface
    1.2.9 Flyweight
    1.2.10 Proxy
    1.2.11 Registry
  1.3 Behavioral
    1.3.1 Chain Of Responsibilities -

Lecture 26: Creational Patterns

Creational Patterns
CSCI 4448/5448: Object-Oriented Analysis & Design
Lecture 26, 11/29/2012
© Kenneth M. Anderson, 2012

Goals of the Lecture
• Cover material from Chapters 20-22 of the Textbook
  • Lessons from Design Patterns: Factories
  • Singleton Pattern
  • Object Pool Pattern
• Also discuss
  • Builder Pattern
  • Lazy Instantiation

Pattern Classification
• The Gang of Four classified patterns in three ways
  • The behavioral patterns are used to manage variation in behaviors (think Strategy pattern)
  • The structural patterns are useful to integrate existing code into new object-oriented designs (think Bridge)
  • The creational patterns are used to create objects
    • Abstract Factory, Builder, Factory Method, Prototype & Singleton

Factories & Their Role in OO Design
• It is important to manage the creation of objects
  • Code that mixes object creation with the use of objects can become quickly non-cohesive
• A system may have to deal with a variety of different contexts
  • with each context requiring a different set of objects
  • In design patterns, the context determines which concrete implementations need to be present

Factories & Their Role in OO Design
• The code to determine the current context, and thus which objects to instantiate, can become complex
  • with many different conditional statements
• If you mix this type of code with the use of the instantiated objects, your code becomes cluttered
  • often the use scenarios can happen in a few lines of code
  • if combined with creational code, the operational code gets buried behind the creational code

Factories provide Cohesion
• The use of factories can address these issues
  • The conditional code can be hidden within them
    • pass in the parameters associated with the current context
    • and get back the objects you need for the situation
  • Then use those objects to get your work done
• Factories concern themselves just with creation, letting your code focus on other things
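The point that a factory hides the context-dependent conditionals and leaves the operational code short can be illustrated with a minimal Python sketch. The names `Renderer`, `HtmlRenderer`, `PlainRenderer`, `RendererFactory` and `publish`, and the context strings `"web"` and `"terminal"`, are hypothetical and do not come from the lecture; they only stand in for "a set of objects required by a context".

```python
from abc import ABC, abstractmethod


class Renderer(ABC):
    """Common interface that the operational code depends on (hypothetical)."""

    @abstractmethod
    def render(self, text: str) -> str:
        ...


class HtmlRenderer(Renderer):
    def render(self, text: str) -> str:
        return f"<p>{text}</p>"


class PlainRenderer(Renderer):
    def render(self, text: str) -> str:
        return text


class RendererFactory:
    """Keeps every 'which concrete class?' decision in one place."""

    @staticmethod
    def create(context: str) -> Renderer:
        # The conditional code is hidden here: pass in the context,
        # get back the object needed for that situation.
        if context == "web":
            return HtmlRenderer()
        if context == "terminal":
            return PlainRenderer()
        raise ValueError(f"unknown context: {context!r}")


def publish(context: str, text: str) -> str:
    # Operational code: two lines, with no creational branching mixed in.
    renderer = RendererFactory.create(context)
    return renderer.render(text)
```

Supporting a new context in this sketch means adding one class and one branch inside `RendererFactory.create`; `publish` stays unchanged, which is the cohesion benefit the slides describe.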

Lecture 1 Introduction to the Class Diagram

ECE 573 1st Edition
Exam # 1 Study Guide
Lectures: 1 - 8

Lecture 1: Introduction to the Class Diagram, Inheritance and Association

Class Diagram
The final code was supplemented with a diagram describing the relationships between classes. The intermediate classes of the code are related by objects. This is what is represented in a class diagram. A class in the diagram basically consists of “a class name”, “attributes” and “operations”. Given this basic graphical notation, it is possible to show the following kinds of relationships between classes:
Inheritance
Association
Encapsulation

Inheritance
It is denoted using an open triangle between a superclass and its subclass. The triangle “points” to the superclass, and the line extends to the subclass(es). Inheritance fulfills the IS-A definition. A derived class object can be treated like a base class object under all circumstances.

Association
General binary relationships between classes. It is commonly represented as direct or indirect references between classes. Associations are shown by a line in the class diagram, with optional role names and multiplicities. Multiplicities can be i) l..u, a closed range; ii) l, a singleton range; iii) 0..*, the entire nonnegative integer range. An association may also be specialized by a particular class. This association class may provide more attributes to describe the association.

Encapsulation
Encapsulation is a special kind of association, which is denoted using a diamond and an extending line. There are two kinds of encapsulation, i.e. Aggregation and Composition. Aggregation is a special form of association representing a has-a, part-whole relationship. It distinguishes the whole (aggregate class) from its parts (component classes). The lifetimes of the aggregate and its components are not tied together (they can exist separately). -
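As a rough illustration of how two of these diagram relationships look in code, the following Python sketch shows inheritance (IS-A) and aggregation (a has-a relationship whose parts can outlive the whole). The classes `Shape`, `Circle`, `Student` and `Course` are hypothetical examples, not taken from the study guide.

```python
from typing import List


class Shape:
    """Superclass: the open triangle in the diagram points here."""

    def __init__(self, name: str) -> None:
        self.name = name

    def describe(self) -> str:
        return f"I am a {self.name}"


class Circle(Shape):
    """Circle IS-A Shape, so it can be used wherever a Shape is expected."""

    def __init__(self, radius: float) -> None:
        super().__init__("circle")
        self.radius = radius


class Student:
    """Component class: created outside the aggregate."""

    def __init__(self, name: str) -> None:
        self.name = name


class Course:
    """Aggregate class: holds references to students (multiplicity 0..*)."""

    def __init__(self, title: str) -> None:
        self.title = title
        self.students: List[Student] = []

    def enroll(self, student: Student) -> None:
        # The course only stores a reference; it does not own the student.
        self.students.append(student)


def describe_all(shapes: List[Shape]) -> List[str]:
    # Works for any subclass of Shape without knowing the concrete type.
    return [shape.describe() for shape in shapes]
```

In this sketch a `Student` is constructed before being enrolled and continues to exist if the `Course` object is discarded, which mirrors the independent lifetimes described for aggregation.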

Table of Contents

Table of Contents

Chapter 1: From Object-Oriented to Functional Programming
Java – an introduction
Java programming paradigms
Imperative programming
Real-life imperative example
Object-oriented paradigm
Objects and classes
Encapsulation
Abstraction
Inheritance
Polymorphism
Declarative programming
Functional programming
Working with collections versus working with streams
An introduction to Unified Modeling Language
Class relations
Generalization
Realization
Dependency
Association
Aggregation
Composition
Design patterns and principles
Single responsibility principle
Open/closed principle
Liskov Substitution Principle
Interface Segregation Principle
Dependency inversion principle
Summary

Chapter 2: Creational Patterns
Singleton pattern
Synchronized singletons
Synchronized singleton with double-checked locking mechanism
Lock-free thread-safe singleton
Early and lazy loading
The factory pattern
Simple factory pattern
Static factory
Simple factory with class registration using reflection
Simple factory with class registration using Product.newInstance
Factory method pattern
Anonymous concrete factory
Abstract factory
Simple factory versus factory method versus abstract factory
Builder pattern
Car builder example
Simplified builder pattern
Anonymous builders with method chaining
Prototype pattern
Shallow clone versus deep clone
Object pool pattern
Summary

Chapter 3: Behavioral Patterns -

IC Coder's Club Design Patterns (In C++)

Andrew W. Rose

Simple exercise
You have been tasked to move this pile from A to B.
You have three resources available to you:
a) Bare hands
b) A stack of timber
c) A horse and cart
How do you achieve the task in the quickest, least-painful way, which won’t leave you up-to-your-neck in the produce you are moving, nor smelling of it?

Software analogy
a) Bare hands: do a task manually
b) A stack of timber: design the tools yourself
c) A horse and cart: benefit from someone else’s hard work
This is the purpose of design patterns!

Motivation
I will, in fact, claim that the difference between a bad programmer and a good one is whether he considers his code or his data structures more important: Bad programmers worry about the code; good programmers worry about data structures and their relationships. -

.NET Design Patterns

.NET Design Patterns
Duration: 3 days

CAC Noida is an ISO 9001:2015 certified training center with professional experience that dates back to 2005. The vision is to provide professional education that merges corporate culture globally for the youth through technology resourcing and knowledge consulting with emerging technologies. Quality assurance parameters for each stage of training and development are ensured at all levels. The operating office is based solely in Noida (U.P.), India. CAC Noida is a well-known .NET Design Patterns training center in Noida with high-tech infrastructure and a friendly environment. We provide hands-on practical knowledge and full job assistance at basic as well as advanced levels. CAC Noida is one of the best .NET Design Patterns training institutes in Noida, with a 100% placement record. CAC Noida has well-defined courses and modules with training sessions for developers. At CAC Noida, .NET Design Patterns training is conducted by specialist trainers with more than 10 years of experience. CAC Noida is a well-equipped .NET Design Patterns training center in Noida, and we offer a job-oriented .NET Design Patterns training program that keeps an eye on industry requirements and future prospects. Each and every one who is part of “CAC Noida” is important to us. Every student has the freedom to discuss and learn. We always take care that the right student chooses the right course. .NET Design Patterns is one of the most in-demand courses today, and CAC Noida provides practical exposure to all the concepts; the contents are well structured to meet industry requirements. We are confident that the .NET Design Patterns training we deliver is of a fantastic standard, and we are constantly striving to improve and become even better. -

© 2006 by Weerasak Witthawaskul. All Rights Reserved

© 2006 by Weerasak Witthawaskul. All rights reserved.

A MIDDLEWARE INDEPENDENT SERVICE MODELING AND A MODEL TRANSFORMATION FRAMEWORK

BY WEERASAK WITTHAWASKUL
B.E., King Mongkut’s Institute of Technology, 1991
M.S., University of Illinois at Urbana-Champaign, 2001

DISSERTATION
Submitted in partial fulfillment of the requirements for the degree of Doctor of Philosophy in Computer Science in the Graduate College of the University of Illinois at Urbana-Champaign, 2006
Urbana, Illinois

Weerasak Witthawaskul, Ph.D.
Department of Computer Science
University of Illinois at Urbana-Champaign, 2006
Ralph Johnson, Advisor

One of the reasons enterprise software is difficult to change is because it relies on middleware services. Middleware makes the rest of the application simpler but then the application depends on the middleware. Migrating existing software to new middleware technology requires significant software redesign, rewriting and testing. This dissertation follows the Model Driven Architecture (MDA) initiative from the Object Management Group (OMG). The MDA separates business or application logic from underlying platform technology by defining application models at two levels: a Platform Independent Model (PIM) and a Platform Specific Model (PSM). The dissertation focuses on the middleware independent aspect of the platform. By specifying common services using UML profiles that do not depend on specific middleware technology and providing separate transformations for each service, it is possible to develop business software models at a middleware independent level and to use transformers to map a PIM onto a middleware specific implementation. The Mercator model transformation tool and framework help two kinds of developers. -

Factory Method Design Pattern Real World Example

Factory Method Design Pattern Real World Example

Abhishek is not parameterized. The Factory Method defines an interface for creating objects but lets. Let's see how to use the Abstract Factory pattern to design this kind of system. Design Patterns for Humans, Developer Roadmaps. The valueOf method is a good example, which caches the true and false Boolean values. So cannot have to design in an a serve to reuse the Director to create rather complex objects; I am looking for a practical example of this. The child class's methods can have different implementations, depending on the. Factory Design Pattern in Java, JournalDev. Abstract Factory is a creational design pattern which allows us to. Its methods to real world example of this method is a number of your post dedicated class at hand, how to add new values that? Factory method but you might believe because in real world examples and an earlier saved state and java is considered good examples to be in a lot. Design Patterns The pickle Factory Pattern. Simple factory and turn responsible to real factory world pattern example, you can apply the target class with composition over toppings array to! Finally we have just made my factory is that you, these techniques like you know about capturing and type of such a product type. Diagrams, applicable scenarios, Java example showing usage of java.util. -
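The Factory Method idea referred to in this excerpt, as commonly defined, is an interface for creating an object in which subclasses decide which concrete class to instantiate. A minimal Python sketch under that assumption follows; the logistics scenario and the names `Transport`, `Truck`, `Ship`, `Logistics`, `RoadLogistics` and `SeaLogistics` are hypothetical and are not taken from the excerpt.

```python
from abc import ABC, abstractmethod


class Transport(ABC):
    """Product interface (hypothetical)."""

    @abstractmethod
    def deliver(self) -> str:
        ...


class Truck(Transport):
    def deliver(self) -> str:
        return "by road"


class Ship(Transport):
    def deliver(self) -> str:
        return "by sea"


class Logistics(ABC):
    """Creator: declares the factory method and uses its result."""

    @abstractmethod
    def create_transport(self) -> Transport:
        """Factory method: subclasses choose the concrete product."""

    def plan_delivery(self) -> str:
        # Shared logic is written once against the Transport interface.
        transport = self.create_transport()
        return f"Delivering {transport.deliver()}"


class RoadLogistics(Logistics):
    def create_transport(self) -> Transport:
        return Truck()


class SeaLogistics(Logistics):
    def create_transport(self) -> Transport:
        return Ship()
```

Here `plan_delivery` never names `Truck` or `Ship`; each child class supplies its own implementation of the creation step, which is the variation point the pattern is meant to isolate.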

Wiley & Sons, Inc

Java™ Enterprise Design Patterns
Patterns in Java™, Volume 3
MARK GRAND
John Wiley & Sons, Inc.
New York ● Chichester ● Weinheim ● Brisbane ● Singapore ● Toronto

Publisher: Robert Ipsen
Editor: Theresa Hudson
Developmental Editor: Kathryn A. Malm
Managing Editor: Angela Smith
New Media Editor: Brian Snapp
Text Design & Composition:

Designations used by companies to distinguish their products are often claimed as trademarks. In all instances where John Wiley & Sons, Inc., is aware of a claim, the product names appear in initial capital or ALL CAPITAL LETTERS. Readers, however, should contact the appropriate companies for more complete information regarding trademarks and registration.

This book is printed on acid-free paper.

Copyright © 2002 by Mark Grand. All rights reserved.
Published by John Wiley & Sons, Inc., New York
Published simultaneously in Canada.

No part of this publication may be reproduced, stored in a retrieval system or transmitted in any form or by any means, electronic, mechanical, photocopying, recording, scanning or otherwise, except as permitted under Sections 107 or 108 of the 1976 United States Copyright Act, without either the prior written permission of the Publisher, or authorization through payment of the appropriate per-copy fee to the Copyright Clearance Center, 222 Rosewood Drive, Danvers, MA 01923, (978) 750-8400, fax (978) 750-4744. Requests to the Publisher for permission should be addressed to the Permissions Department, John Wiley & Sons, Inc., 605 Third Avenue, New York, NY 10158-0012, (212) 850-6011, fax (212) 850-6008, E-Mail: PERMREQ @ WILEY.COM. -

Android Interface for Pollution Monitoring System

School of Electrical & Telecom Engineering
TELE4121 Thesis B Report
Kunxuan Bi, Z3249791
Supervisor: A/Prof. Vijay Sivaraman
Assessor: Dr Tim Moors
“Android interface for pollution monitoring system”

Abstract: Australians consistently rank air pollution as a major environmental concern. The state of our air is an important factor in the quality of life in Australian cities. It affects the health of the community and directly influences the sustainability of our lifestyles and production methods. Although Australia's air quality is better than that of many other comparable countries, it is still important to take action to ensure that air quality is protected into the future. Small improvements can achieve benefits for human health and wellbeing. In this report, we introduce a 'Haze Watch System' that thesis students from previous years designed and implemented. This design succeeds in collecting air pollution readings, works at a higher spatial resolution compared to fixed-site pollution monitoring systems, and enables individuals to monitor their personal daily exposure, based on readings retrieved via various applications connected to the system. This project is currently in its early stage, and further refinement is expected to be carried out in the future. This article focuses on the key component of my work together with my partner Dawei Lu in the area of the Android interface for the pollution monitoring system. The detailed design of the Android interface for the monitoring system will be explained and discussed.