SETL for Internet Data Processing

Total pages: 16

File type: PDF, size: 1020 KB

Recommended publications

Review Thanks!

CS 242, John Mitchell. Review.
Thanks! Teaching Assistants: Mike Cammarano, TJ Giuli, Hendra Tjahayadi. Graders: Andrew Adams, Tait Larson, Kenny Lau, Aman Naimat, Vishal Patel, Justin Pettit, and more …
Final Exam: Wednesday Dec 8, 8:30 – 11:30 AM, Gates B01, B03.
Course Goals: Understand how programming languages work. Appreciate trade-offs in language design. Be familiar with basic concepts so you can understand discussions about: • Language features you haven’t used • Analysis and environment tools • Implementation costs and program efficiency • Language support for program development.
There are many programming languages: Early languages • Fortran, Cobol, APL, ... Algol family • Algol 60, Algol 68, Pascal, …, PL/1, … Clu, Ada, Modula, Cedar/Mesa, ... Functional languages • Lisp, FP, SASL, ML, Miranda, Haskell, Scheme, Setl, ... Object-oriented languages • Smalltalk, Self, Cecil, … • Modula-3, Eiffel, Sather, … • C++, Objective C, …. Java. Concurrent languages • Actors, Occam, ... • Pai-Lisp, … Proprietary and special purpose languages • TCL, Applescript, Telescript, ... • Postscript, Latex, RTF, … • Domain-specific language. Specification languages •
General Themes in this Course: Language provides an abstract view of machine • We don’t see registers, length of instruction, etc. The right language can make a problem easy; wrong language can make a problem hard • Could have said a lot more about this. Language design is full of difficult trade-offs • Expressiveness vs efficiency, ... • Important to decide what the language is for • Every feature -

GNAT for Cross-Platforms

GNAT User’s Guide Supplement for Cross Platforms. GNAT, The GNU Ada Compiler. GNAT GPL Edition, Version 2012. Document revision level 247113. Date: 2012/03/28. AdaCore. Copyright © 1995-2011, Free Software Foundation. Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.1 or any later version published by the Free Software Foundation; with the Invariant Sections being “GNU Free Documentation License”, with the Front-Cover Texts being “GNAT User’s Guide Supplement for Cross Platforms”, and with no Back-Cover Texts. A copy of the license is included in the section entitled “GNU Free Documentation License”.
About This Guide: This guide describes the use of GNAT, a compiler and software development toolset for the full Ada programming language, in a cross compilation environment. It supplements the information presented in the GNAT User’s Guide. It describes the features of the compiler and tools, and details how to use them to build Ada applications that run on a target processor. GNAT implements Ada 95 and Ada 2005, and it may also be invoked in Ada 83 compatibility mode. By default, GNAT assumes Ada 2005, but you can override with a compiler switch to explicitly specify the language version. (Please refer to the section “Compiling Different Versions of Ada”, in GNAT User’s Guide, for details on these switches.) Throughout this manual, references to “Ada” without a year suffix apply to both the Ada 95 and Ada 2005 versions of the language. This guide contains some basic information about using GNAT in any cross environment, but the main body of the document is a set of Appendices on topics specific to the various target platforms. -

Automated Testing of Debian Packages

Automated Testing of Debian Packages. Holger Levsen and Lucas Nussbaum.
Summary: 1 Introduction (Debian’s Quality, Popcon data, Automated Testing) 2 Lintian and Linda 3 Rebuilding packages 4 Piuparts 5 Structuring QA 6 Conclusion.
Debian’s Quality: Ask around: considered quite good compared to other distros. A lot of packages, all supported in the same way: 10316 source packages in etch/main, 18167 binary packages in etch/main.
[Figure: Package installations according to popcon; y-axis: percentage of packages] -

Modern Programming Languages CS508 Virtual University of Pakistan

Modern Programming Languages CS508. Virtual University of Pakistan, Leaders in Education Technology. © Copyright Virtual University of Pakistan.
Table of Contents
Course Objectives, p. 4
Introduction and Historical Background (Lecture 1-8), p. 5
Language Evaluation Criterion, p. 6
Language Evaluation Criterion, p. 15
An Introduction to SNOBOL (Lecture 9-12), p. 32
Ada Programming Language: An Introduction (Lecture 13-17), p. 45
LISP Programming Language: An Introduction (Lecture 18-21), p. 63
PROLOG - Programming in Logic (Lecture 22-26), p. 77
Java Programming Language (Lecture 27-30), p. 92
C# Programming Language (Lecture 31-34), p. 111
PHP – Personal Home Page PHP: Hypertext Preprocessor (Lecture 35-37), p. 129
Modern Programming Languages-JavaScript -

January–June 2018 New GNAT Pro Product Lines GNAT Pro Assurance

Highlights / January–June 2018 (tech corner, newsflash, calendar).
Tech corner: New Product Release. AdaCore’s annual Q1 release cycle brings across-the-board enhancements, many of which stem from customer suggestions. Below is a sampling of new features in the V18 products; details may be found on-line in AdaCore’s “New Features” pages: GNAT Pro base technology: docs.adacore.com/R/relnotes/features-18; GPS and GNATbench IDEs: docs.adacore.com/R/relnotes/features-gps-18.
… new rules, with supporting qualification material available for DO-178C; GNATcoverage’s tool qualification material has been adapted to DO-178C and Ada 2012, and support has been introduced for Lauterbach probes; and the GNATtest unit testing framework has added several new options. The GNAT Programming Studio (GPS) IDE has incorporated performance and user interface improvements, for example in the C/C++ navigation engine, and GNATbench supports Eclipse 4.8 Oxygen as well as Wind …
Newsflash: MHI Aerospace Systems Corporation Selects QGen 2. MHI Aerospace Systems Corporation (MASC), a member of the Mitsubishi Heavy Industries Group, has selected the QGen toolset to develop the software for the Throttle Quadrant Assembly (TQA) system. This avionics research project is being conducted to meet the Level C objectives in the DO-178C safety standard for airborne software and its DO-331 supplement on Model-Based Development and Verification.
Calendar: For up-to-date information on conferences where AdaCore is participating, please visit www.adacore.com/events/. ERTS 2018 (Embedded Real Time Software and Systems), January 31–February 2, 2018, Toulouse, France. AdaCore is exhibiting at this conference, and AdaCore personnel are presenting papers on software safety, avionics certification, drone autopilot software, and lightweight semantic … -

GNAT User's Guide

GNAT User's Guide. GNAT, The GNU Ada Compiler. For gcc version 4.7.4 (GCC). AdaCore. Copyright © 1995-2009 Free Software Foundation, Inc. Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.3 or any later version published by the Free Software Foundation; with no Invariant Sections, with no Front-Cover Texts and with no Back-Cover Texts. A copy of the license is included in the section entitled "GNU Free Documentation License".
About This Guide: This guide describes the use of GNAT, a compiler and software development toolset for the full Ada programming language. It documents the features of the compiler and tools, and explains how to use them to build Ada applications. GNAT implements Ada 95 and Ada 2005, and it may also be invoked in Ada 83 compatibility mode. By default, GNAT assumes Ada 2005, but you can override with a compiler switch (see Section 3.2.9 [Compiling Different Versions of Ada], page 78) to explicitly specify the language version. Throughout this manual, references to "Ada" without a year suffix apply to both the Ada 95 and Ada 2005 versions of the language.
What This Guide Contains: This guide contains the following chapters:
• Chapter 1 [Getting Started with GNAT], page 5, describes how to get started compiling and running Ada programs with the GNAT Ada programming environment.
• Chapter 2 [The GNAT Compilation Model], page 13, describes the compilation model used by GNAT.
• Chapter 3 [Compiling Using gcc], page 41, describes how to compile Ada programs with gcc, the Ada compiler. -

Preconditions/Postconditions Author: Robert Dewar Abstract: Ada Gem

Gem #31: preconditions/postconditions. Author: Robert Dewar.
Let’s get started… The notion of preconditions and postconditions is an old one. A precondition is a condition that must be true before a section of code is executed, and a postcondition is a condition that must be true after the section of code is executed. In the context we are talking about here, the section of code will always be a subprogram. Preconditions are conditions that must be guaranteed by the caller before the call, and postconditions are results guaranteed by the subprogram code itself. It is possible, using pragma Assert (as defined in Ada 2005, and as implemented in all versions of GNAT), to approximate run-time checks corresponding to preconditions and postconditions by placing assertion pragmas in the body of the subprogram, but there are several problems with that approach:
1. The assertions are not visible in the spec, and preconditions and postconditions are logically a part of (in fact, an important part of) the spec.
2. Postconditions have to be repeated at every exit point.
3. Postconditions often refer to the original value of a parameter on entry or the result of a function, and there is no easy way to do that in an assertion.
The latest versions of GNAT implement two pragmas, Precondition and Postcondition, that deal with all three problems in a convenient way. -

Introduction to Python

Introduction to Python. Programming Languages. Adapted from Tutorial by Mark Hammond, Skippi-Net, Melbourne, Australia, http://starship.python.net/crew/mhammond
What Is Python? Created in 1990 by Guido van Rossum while at CWI, Amsterdam. Now hosted by the Corporation for National Research Initiatives, Reston, VA, USA. Free, open source, and with an amazing community. Object-oriented language: “Everything is an object”.
Why Python? Designed to be easy to learn and master: clean, clear syntax; very few keywords. Highly portable: runs almost anywhere, from high-end servers and workstations down to Windows CE; uses machine-independent byte-codes. Extensible: designed to be extensible using C/C++, allowing access to many external libraries.
Python: a modern hybrid. A language for scripting and prototyping. Balance between extensibility and powerful built-in data structures. Genealogy: Setl (NYU, J. Schwartz et al. 1969-1980), ABC (Amsterdam, Meertens et al. 1980-), Python (Van Rossum et al. 1996-). Very active open-source community.
Prototyping: Emphasis on experimental programming: Interactive (like LISP, ML, etc.). Translation to bytecode (like Java). Dynamic typing (like LISP, SETL, APL). Higher-order functions (LISP, ML). Garbage-collected, no pointers (LISP, SNOBOL4). Uniform treatment of indexable structures (like SETL). Built-in associative structures (like SETL, SNOBOL4, Postscript). Light syntax, indentation is significant (from ABC).
Most obvious and notorious features: Clean syntax plus high-level data types leads to fast coding. Uses white-space to delimit blocks: humans generally do, so why not the language? Try it, you will end up liking it. Variables do not need declaration, although it is not a type-less language.
A Digression on Block Structure: There are three ways of dealing with IF structures: sequences of statements with explicit end (Algol-68, Ada, COBOL); single statement (Algol-60, Pascal, C); indentation (ABC, Python).
Sequence of Statements: IF condition THEN stm; stm; . -

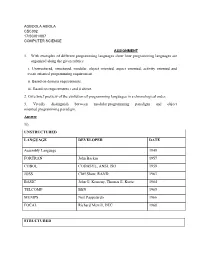

1. with Examples of Different Programming Languages Show How Programming Languages Are Organized Along the Given Rubrics: I

AGBOOLA ABIOLA, CSC302, 17/SCI01/007, COMPUTER SCIENCE ASSIGNMENT
1. With examples of different programming languages show how programming languages are organized along the given rubrics: i. Unstructured, structured, modular, object oriented, aspect oriented, activity oriented and event oriented programming requirement. ii. Based on domain requirements. iii. Based on requirements i and ii above.
2. Give a brief preview of the evolution of programming languages in chronological order.
3. Vividly distinguish between the modular programming paradigm and the object oriented programming paradigm.
Answer 1i).
UNSTRUCTURED
LANGUAGE             DEVELOPER                                      DATE
Assembly Language                                                   1949
FORTRAN              John Backus                                    1957
COBOL                CODASYL, ANSI, ISO                             1959
JOSS                 Cliff Shaw, RAND                               1963
BASIC                John G. Kemeny, Thomas E. Kurtz                1964
TELCOMP              BBN                                            1965
MUMPS                Neil Pappalardo                                1966
FOCAL                Richard Merrill, DEC                           1968
STRUCTURED
LANGUAGE             DEVELOPER                                      DATE
ALGOL 58             Friedrich L. Bauer, and co.                    1958
ALGOL 60             Backus, Bauer and co.                          1960
ABC                  CWI                                            1980
Ada                  United States Department of Defence            1980
Accent R             NIS                                            1980
Action!              Optimized Systems Software                     1983
Alef                 Phil Winterbottom                              1992
DASL                 Sun Microsystems Laboratories                  1999-2003
MODULAR
LANGUAGE             DEVELOPER                                      DATE
ALGOL W              Niklaus Wirth, Tony Hoare                      1966
APL                  Larry Breed, Dick Lathwell and co.             1966
ALGOL 68             A. Van Wijngaarden and co.                     1968
AMOS BASIC           François Lionet and Constantin Sotiropoulos    1990
Alice ML             Saarland University                            2000
Agda                 Ulf Norell; Catarina Coquand (1.0)             2007
Arc                  Paul Graham, Robert Morris and co.             2008
Bosque               Mark Marron                                    2019
OBJECT-ORIENTED
LANGUAGE             DEVELOPER                                      DATE
C*                   Thinking Machines                              1987
Actor                Charles Duff                                   1988
Aldor                Thomas J. Watson Research Center               1990
Amiga E              Wouter van Oortmerssen                         1993
ActionScript         Macromedia                                     1998
BeanShell            JCP                                            1999
AngelScript          Andreas Jönsson                                2003
Boo                  Rodrigo B. -

Understanding Programming Languages

Understanding Programming Languages. M. Ben-Ari, Weizmann Institute of Science. Originally published by John Wiley & Sons, Chichester, 1996. Copyright © 2006 by M. Ben-Ari. You may download, display and print one copy for your personal use in non-commercial academic research and teaching. Instructors in non-commercial academic institutions may make one copy for each student in his/her class. All other rights reserved. In particular, posting this document on web sites is prohibited without the express permission of the author.
Contents
Preface, p. xi
I Introduction to Programming Languages, p. 1
1 What Are Programming Languages?, p. 2
1.1 The wrong question, p. 2
1.2 Imperative languages, p. 4
1.3 Data-oriented languages, p. 7
1.4 Object-oriented languages, p. 11
1.5 Non-imperative languages, p. 12
1.6 Standardization, p. 13
1.7 Computer architecture, p. 13
1.8 * Computability, p. 16
1.9 Exercises, p. 17
2 Elements of Programming Languages, p. 18
2.1 Syntax, p. 18
2.2 * Semantics, p. 20
2.3 Data, p. 21
2.4 The assignment statement, p. 22
2.5 Type checking, p. 23
2.6 Control statements, p. 24
2.7 Subprograms, p. 24
2.8 Modules, p. 25
2.9 Exercises, p. 26
3 Programming Environments, p. 27
3.1 Editor, p. 28
3.2 Compiler, p. 28
3.3 Librarian, p. 30
3.4 Linker, p. 31
3.5 Loader, p. 32
3.6 Debugger, p. 32
3.7 Profiler, p. 33
3.8 Testing tools, p. 33
3.9 Configuration tools, p. 34
3.10 Interpreters, p. 34
3.11 The Java model, p. 35
3.12 Exercises, p. 37
II Essential Concepts, p. 38
4 Elementary Data Types, p. 39
4.1 Integer types . -

CWI Scanprofile/PDF/300

Centrum voor Wiskunde en Informatica / Centre for Mathematics and Computer Science. L.G.L.T. Meertens, S. Pemberton. An implementation of the B programming language. Department of Computer Science, Note CS-N8406, June.
AN IMPLEMENTATION OF THE B PROGRAMMING LANGUAGE. L.G.L.T. MEERTENS, S. PEMBERTON, Centre for Mathematics and Computer Science, Amsterdam.
B is a new programming language designed for personal computing. We describe some of the decisions taken in implementing the language, and the problems involved. Note: B is a working title until the language is finally frozen. Then it will acquire its definitive name. The language is entirely unrelated to the predecessor of C. A version of this paper will appear in the proceedings of the Washington USENIX Conference (January 1984).
1982 CR CATEGORIES: 69D44. KEY WORDS & PHRASES: programming language implementation, programming environments, B.
Note CS-N8406, Centre for Mathematics and Computer Science, P.O. Box 4079, 1009 AB Amsterdam, The Netherlands.
I The programming language B. B is a programming language being designed and implemented at the CWI. It was originally started in 1975 in an attempt to design a language for beginners as a suitable replacement for BASIC. While the emphasis of the project has in the intervening years shifted from "beginners" to "personal computing", the main design objectives have remained the same: • simplicity; • suitability for conversational use; • availability of tools for structured programming. The design of the language has proceeded iteratively, and the language as it now stands is the third iteration of this process. -

Bibliography

[1] P. Agarwal, S. Har-Peled, M. Sharir, and Y. Wang. Hausdorff distance under translation for points, disks, and balls. Manuscript, 2002.
[2] P. Agarwal and M. Sharir. Pipes, cigars, and kreplach: The union of Minkowski sums in three dimensions. Discrete Comput. Geom., 24:645–685, 2000.
[3] P. K. Agarwal, O. Schwarzkopf, and Micha Sharir. The overlay of lower envelopes and its applications. Discrete Comput. Geom., 15:1–13, 1996.
[4] Pankaj K. Agarwal. Efficient techniques in geometric optimization: An overview, 1999. Slides.
[5] Pankaj K. Agarwal and Micha Sharir. Motion planning of a ball amid segments in three dimensions. In Proc. 10th ACM-SIAM Sympos. Discrete Algorithms, pages 21–30, 1999.
[6] Pankaj K. Agarwal and Micha Sharir. Pipes, cigars, and kreplach: The union of Minkowski sums in three dimensions. In Proc. 15th Annu. ACM Sympos. Comput. Geom., pages 143–153, 1999.
[7] Pankaj K. Agarwal, Micha Sharir, and Sivan Toledo. Applications of parametric searching in geometric optimization. Journal of Algorithms, 17:292–318, 1994.
[8] Pankaj Kumar Agarwal and Micha Sharir. Arrangements and their applications. In J.-R. Sack and J. Urrutia, editors, Handbook of Computational Geometry, pages 49–120. Elsevier, 1999.
[9] H. Alt, O. Aichholzer, and Günter Rote. Matching shapes with a reference point. Internat. J. Comput. Geom. Appl., 7:349–363, 1997.
[10] H. Alt and M. Godau. Computing the Fréchet distance between two polygonal curves. Internat. J. Comput. Geom. Appl., 5:75–91, 1995.
[11] H. Alt, K. Mehlhorn, H. Wagener, and Emo Welzl. Congruence, similarity and symmetries of geometric objects.