How to Find Short RC4 Colliding Key Pairs

Recommended publications

Hardness Evaluation of Solving MQ Problems

MQ Challenge: Hardness Evaluation of Solving MQ Problems. Takanori Yasuda (ISIT), Xavier Dahan (ISIT), Yun-Ju Huang (Kyushu Univ.), Tsuyoshi Takagi (Kyushu Univ.), Kouichi Sakurai (Kyushu Univ., ISIT). ETSI Quantum Safe Workshop, 2015/4/3. This work was supported by the “Strategic Information and Communications R&D Promotion Programme (SCOPE), no. 0159-0091”, Ministry of Internal Affairs and Communications, Japan. The first author is supported by a Grant-in-Aid for Young Scientists (B), grant number 24740078.

Fukuoka MQ challenge: the MQ challenge started on April 1st. https://www.mqchallenge.org/

Why do we need the MQ challenge?
• Several public key cryptosystems have held contests to solve their associated basic mathematical problems.
o RSA challenge (RSA Laboratories), ECC challenge (Certicom), Lattice challenge (TU Darmstadt)
• Lattice challenge (http://www.latticechallenge.org/)
o Target: shortest vector problem
o 2008 to now, continuing
• Multivariate public-key cryptosystems (MPKC) also need an evaluation of the current state of the art in practical MQ problem solvers. We planned to hold the MQ challenge.

Multivariate Public Key Cryptosystem (MPKC)
• Advantages
o Candidate for post-quantum cryptography
o Used for both encryption and signature schemes
• Encryption: Simple Matrix scheme (ABC scheme), ZHFE scheme
• Signature: UOV, Rainbow
o Efficient encryption, decryption, signature generation and verification
• Problems
o Exact estimate of the security of MPKC schemes
o Huge length of secret and public keys in comparison with RSA
o New applications and functions

MQ problem: MPKC are public key cryptosystems whose security depends on the difficulty of solving a system of multivariate quadratic polynomials with coefficients in a finite field.

Side Channel Analysis Attacks on Stream Ciphers

Side Channel Analysis Attacks on Stream Ciphers. Daehyun Strobel, 23.03.2009. Master's thesis, Ruhr-Universität Bochum, Chair for Embedded Security, Prof. Dr.-Ing. Christof Paar. Advisor: Dipl.-Ing. Markus Kasper.

Declaration: I affirm that I have written this thesis without outside help and without using sources other than those indicated, and that the thesis has not previously been submitted in the same or a similar form to any other examination board and been accepted by it as part of an examination. All passages taken verbatim or in substance from other sources are marked as such. Bochum, 23 March 2009, Daehyun Strobel.

Abstract: In this thesis, we present results from practical differential power analysis attacks on the stream ciphers Grain and Trivium. While most published works on practical side channel analysis describe attacks on block ciphers, this work is among the first to report practical results of power analysis attacks on stream ciphers. Power analyses of stream ciphers require different methods than the ones used in today's most popular attacks. While for the majority of block ciphers it is sufficient to attack the first or last round only, to analyze a stream cipher the information leakage of many rounds typically has to be considered. Furthermore, the analysis of hardware implementations of stream ciphers based on feedback shift registers inevitably leads to methods combining algebraic attacks with methods from the field of side channel analysis. Instead of a direct recovery of key bits, only terms composed of several key bits and bits from the initialization vector can be recovered. An attacker first has to identify a sufficient set of accessible terms to finally solve for the key bits.

Petawatt and Exawatt Class Lasers Worldwide

Petawatt and exawatt class lasers worldwide. Colin N. Danson, Constantin Haefner, Jake Bromage, Thomas Butcher, Jean-Christophe F. Chanteloup, Enam A. Chowdhury, Almantas Galvanauskas, Leonida Gizzi, Joachim Hein, David Hillier, et al. High Power Laser Science and Engineering, Cambridge University Press, 2019, Vol. 7, e54, 54 pages. doi:10.1017/hpl.2019.36. HAL Id: hal-03037682, https://hal.archives-ouvertes.fr/hal-03037682, submitted on 3 Dec 2020.

HAL is a multi-disciplinary open access archive for the deposit and dissemination of scientific research documents, whether they are published or not. The documents may come from teaching and research institutions in France or abroad, or from public or private research centers.

© The Author(s) 2019. This is an Open Access article, distributed under the terms of the Creative Commons Attribution licence (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted re-use, distribution, and reproduction in any medium, provided the original work is properly cited.

Hardware Implementation of Four Byte Per Clock RC4 Algorithm Rourab Paul, Amlan Chakrabarti, Senior Member, IEEE, and Ranjan Ghosh

Hardware Implementation of Four Byte per Clock RC4 Algorithm. Rourab Paul, Amlan Chakrabarti, Senior Member, IEEE, and Ranjan Ghosh.

Abstract: In the field of cryptography, the best known RC4 hardware design to date processes 2 bytes in 1 clock [1], while 1 byte in 1 clock [2] and 1 byte in 3 clocks [3][4] are the best known implementations. The design algorithm in [2] considers two consecutive bytes together and processes them in 2 clocks. The design in [1] is a pipelined architecture of [2]. The 1-byte-in-3-clocks design is too modular and clock hungry. In this paper, taking the RC4 algorithm as it is, a simpler RC4 hardware design providing higher throughput is proposed, and six different architectures are presented. Design 1 processes 1 byte in 1 clock; Design 2 is a dynamic KSA-PRGA architecture of Design 1. Design 3 can process 2 bytes in a single clock, whereas Design 4 is a dynamic KSA-PRGA architecture of Design 3. Design 5 and Design 6 are parallelized architectures of Design 2 and Design 4 which can compute 4 bytes in a single clock. Maturity in terms of throughput, power consumption and resource usage is achieved progressively from Design 1 to Design 6. The RC4 encryption and decryption designs are respectively embedded on two FPGA boards as co-processor hardware, with the communication between the two boards performed using Ethernet.

Keywords: Computer Society, IEEEtran, journal, LATEX, paper, template.
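For orientation, the KSA and PRGA stages named in the abstract above are the two phases of standard RC4. The following is a minimal software sketch of the textbook algorithm, not of any of the hardware designs described; the function names `rc4_ksa`, `rc4_keystream` and `rc4_crypt` are chosen here for illustration only.

```python
def rc4_ksa(key: bytes) -> list[int]:
    """Key-scheduling algorithm (KSA): derive the initial 256-byte permutation."""
    if not key:
        raise ValueError("key must be non-empty")
    s = list(range(256))
    j = 0
    for i in range(256):
        j = (j + s[i] + key[i % len(key)]) % 256
        s[i], s[j] = s[j], s[i]
    return s


def rc4_keystream(key: bytes, n: int) -> bytes:
    """Pseudo-random generation algorithm (PRGA): emit n keystream bytes."""
    s = rc4_ksa(key)
    i = j = 0
    out = bytearray()
    for _ in range(n):
        i = (i + 1) % 256
        j = (j + s[i]) % 256
        s[i], s[j] = s[j], s[i]
        out.append(s[(s[i] + s[j]) % 256])
    return bytes(out)


def rc4_crypt(key: bytes, data: bytes) -> bytes:
    """Encrypt or decrypt by XORing data with the keystream (same operation)."""
    keystream = rc4_keystream(key, len(data))
    return bytes(d ^ k for d, k in zip(data, keystream))


if __name__ == "__main__":
    # Widely published test vector: key "Key", plaintext "Plaintext".
    print(rc4_crypt(b"Key", b"Plaintext").hex())
```

Because the PRGA starts from the permutation returned by the KSA with both indices reset to zero, two distinct keys for which `rc4_ksa` returns the same permutation would produce identical keystreams; this is the usual sense in which a pair of RC4 keys is said to collide.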

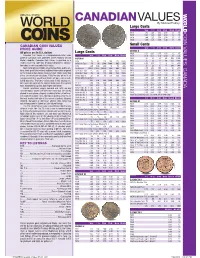

Canadian Coin Values

Canadian Values, by Michael Findlay. Canadian Coin Values is a comprehensive retail value guide of Canadian coins published online regularly at Coin World’s website. Canadian Coin Values is provided as a reader service to collectors desiring independent information about a coin’s potential retail value. Sources for pricing include actual transactions, public auctions, fixed-price lists and any additional information acquired. All prices are in U.S. dollars.

Large Cents, Victoria

| Date | VG-8 | F-12 | VF-20 | EF-40 | MS-60 | MS-63R |
|---|---|---|---|---|---|---|
| 1858 | 70. | 90. | 120. | 200. | 475. | 1800. |
| 1858 Coin Turn | NI | NI | 2500. | 5000. | BNE | BNE |
| 1859 | 4.00 | 5.00 | 6.00 | 10. | 50. | 200. |
| 1859 Brass | 16000. | 22000. | 30000. | BNE | BNE | BNE |
| 1859 Dbl P 9 #1 | 225. | | | | | |

Large Cents

| Date | VG-8 | F-12 | VF-20 | EF-40 | MS-60 | MS-63R |
|---|---|---|---|---|---|---|
| 1917 | 1.00 | 1.25 | 1.50 | 2.50 | 13. | 45. |
| 1918 | 1.00 | 1.25 | 1.50 | 2.50 | 13. | 45. |
| 1919 | 1.00 | 1.25 | 1.50 | 2.50 | 13. | 45. |
| 1920 | 1.00 | 1.25 | 1.50 | 3.00 | 18. | 70. |

Small Cents, George V

| Date | VG-8 | F-12 | VF-20 | EF-40 | MS-60 | MS-63R |
|---|---|---|---|---|---|---|
| 1920 | 0.20 | 0.35 | 0.75 | 1.50 | 12. | 45. |
| 1921 | 0.50 | 0.75 | 1.50 | 4.00 | 30. | 250. |
| 1922 | 20. | 23. | 28. | 40. | 200. | 1200. |
| 1923 | 30. | 33. | 42. | 55. | 250. | 2000. |
| 1924 | 6.00 | 8.00 | 11. | 16. | 120. | 800. |
| 1925 | 25. | 28. | 35. | 45. | 200. | 900. |
| 1926 | 3.50 | 4.50 | 7.00 | 12. | 90. | 650. |

9/11 Report”), July 2, 2004, Pp

THE 9/11 COMMISSION REPORT

CONTENTS
List of Illustrations and Tables
Member List
Staff List
Preface
1. “WE HAVE SOME PLANES”
1.1 Inside the Four Flights
1.2 Improvising a Homeland Defense
1.3 National Crisis Management
2. THE FOUNDATION OF THE NEW TERRORISM
2.1 A Declaration of War
2.2 Bin Ladin’s Appeal in the Islamic World
2.3 The Rise of Bin Ladin and al Qaeda (1988–1992)
2.4 Building an Organization, Declaring War on the United States (1992–1996)
2.5 Al Qaeda’s Renewal in Afghanistan (1996–1998)
3. COUNTERTERRORISM EVOLVES
3.1 From the Old Terrorism to the New: The First World Trade Center Bombing
3.2 Adaptation—and Nonadaptation— ... in the Law Enforcement Community
3.3 ... and in the Federal Aviation Administration
3.4 ... and in the Intelligence Community
3.5 ... and in the State Department and the Defense Department
3.6 ... and in the White House
3.7 ... and in the Congress
4. RESPONSES TO AL QAEDA’S INITIAL ASSAULTS
4.1 Before the Bombings in Kenya and Tanzania
4.2 Crisis: August 1998
4.3 Diplomacy
4.4 Covert Action
4.5 Searching for Fresh Options
5.

Stream Cipher Designs: a Review

SCIENCE CHINA Information Sciences, March 2020, Vol. 63, 131101:1–131101:25. Review. https://doi.org/10.1007/s11432-018-9929-x

Stream cipher designs: a review. Lin JIAO (1), Yonglin HAO (1) & Dengguo FENG (1, 2). (1) State Key Laboratory of Cryptology, Beijing 100878, China; (2) State Key Laboratory of Computer Science, Institute of Software, Chinese Academy of Sciences, Beijing 100190, China. Received 13 August 2018; accepted 30 June 2019; published online 10 February 2020.

Abstract: Stream cipher is an important branch of symmetric cryptosystems, which takes obvious advantages in speed and scale of hardware implementation. It is suitable for using in the cases of massive data transfer or resource constraints, and has always been a hot and central research topic in cryptography. With the rapid development of network and communication technology, cipher algorithms play more and more crucial role in information security. Simultaneously, the application environment of cipher algorithms is increasingly complex, which challenges the existing cipher algorithms and calls for novel suitable designs. To accommodate new strict requirements and provide systematic scientific basis for future designs, this paper reviews the development history of stream ciphers, classifies and summarizes the design principles of typical stream ciphers in groups, briefly discusses the advantages and weakness of various stream ciphers in terms of security and implementation. Finally, it tries to foresee the prospective design directions of stream ciphers.

Keywords: stream cipher, survey, lightweight, authenticated encryption, homomorphic encryption

Citation: Jiao L, Hao Y L, Feng D G. Stream cipher designs: a review. Sci China Inf Sci, 2020, 63(3): 131101, https://doi.org/10.1007/s11432-018-9929-x

1 Introduction. The widely applied e-commerce, e-government, along with the fast developing cloud computing, big data, have triggered high demands in both efficiency and security of information processing.

Copyright Infringement

COPYRIGHT INFRINGEMENT. Mark Miller, Jackson Walker L.L.P., 112 E. Pecan, Suite 2400, San Antonio, Texas 78205, (210) 978-7700. This paper was first published in the State Bar of Texas Corporate Counsel Section Corporate Counsel Review, Vol. 30, No. 1 (2011). The author appreciates the State Bar’s permission to update and re-publish it.

THE AUTHOR: Mark Miller is a shareholder with Jackson Walker L.L.P., a Texas law firm with offices in San Antonio, Dallas, Houston, Austin, Ft. Worth, and San Angelo. He is Chair of the firm’s intellectual property section and concentrates on transactions and litigation concerning intellectual property, franchising, and antitrust. Mr. Miller received his B.A. in Chemistry from Austin College in 1974 and his J.D. from St. Mary’s University in 1978. He was an Associate Editor for St. Mary’s Law Journal; was admitted to practice before the United States Patent and Trademark Office in 1978; served on the Admissions Committee for the U.S. District Court, Western District of Texas; and has been selected as one of the best intellectual property lawyers in San Antonio. He is admitted to practice before the United States Supreme Court, the Federal Circuit Court of Appeals, the United States Court of Appeals for the Fifth and Tenth Circuits, the District Courts for the Western, Southern, and Northern Districts of Texas, and the Texas Supreme Court. He is a member of the American Bar Association’s Forum Committee on Franchising, and the ABA Antitrust Section’s Committee on Franchising. Mr. Miller is a frequent speaker and writer, including “Neglected Copyright Litigation Issues,” State Bar of Texas, Intellectual Property Section, Annual Meeting, 2009, “Unintentional Franchising,” 36 St.

MQ Challenge: Hardness Evaluation of Solving MQ Problems

MQ Challenge: Hardness Evaluation of Solving Multivariate Quadratic Problems. Takanori Yasuda, Xavier Dahan, Yun-Ju Huang, Tsuyoshi Takagi, Kouichi Sakurai.

Abstract: Multivariate polynomial (MP) problem is the basis of security for potentially post-quantum cryptosystems. The hardness of solving MP problem depends on a number of parameters, most importantly the number of variables and the degree of the polynomials, as well as the number of equations, the size of the base field etc. When the degree is two, we investigate the relation among these parameters and the hardness of solving MP problem, in order to construct hard instances of MP problem. These instances are used to create a challenge, which may be helpful in determining appropriate parameters for multivariate public key cryptosystems, and stimulate furthermore the research in solving MP problem.

[...] rity of MPKC is thus based on the one-wayness of the multivariate polynomial maps. In the same vein, QUAD [3] is a stream cipher (symmetric key cryptography) whose security is guaranteed by the one-wayness of the multivariate polynomial maps. The one-wayness of multivariate polynomial maps resides in the difficulty to find solutions of a system of multivariate polynomial equations (MP problem). In particular, if the multivariate polynomials involved in a MP problem consist only of quadratic polynomials, the problem is called MQ problem:

$$
\begin{aligned}
f_1(x_1, \dots, x_n) &= \sum_{1 \le i \le j \le n} a_{ij}^{(1)} x_i x_j + \sum_{1 \le i \le n} b_i^{(1)} x_i + c^{(1)} = d_1, \\
f_2(x_1, \dots, x_n) &= \sum_{1 \le i \le j \le n} a_{ij}^{(2)} x_i x_j + \sum_{1 \le i \le n} b_i^{(2)} x_i + c^{(2)} = d_2, \\
&\;\;\vdots
\end{aligned}
$$
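To make the shape of an MQ instance concrete, here is a minimal sketch that evaluates quadratic polynomials of the form above and solves a toy system by exhaustive search. It assumes the base field is GF(2), so addition is XOR and multiplication is AND; the names `eval_quadratic` and `solve_mq_bruteforce` and the three-variable instance are hypothetical illustrations, not part of the MQ challenge.

```python
from itertools import product


def eval_quadratic(a, b, c, x):
    """Evaluate sum_{i<=j} a[i][j]*x_i*x_j + sum_i b[i]*x_i + c over GF(2)."""
    n = len(x)
    total = c
    for i in range(n):
        total ^= b[i] & x[i]                # linear term
        for j in range(i, n):
            total ^= a[i][j] & x[i] & x[j]  # quadratic term, i <= j
    return total


def solve_mq_bruteforce(polys, targets, n):
    """Return every x in GF(2)^n with f_k(x) == d_k for all k (2^n trials)."""
    return [
        x
        for x in product((0, 1), repeat=n)
        if all(eval_quadratic(a, b, c, x) == d
               for (a, b, c), d in zip(polys, targets))
    ]


if __name__ == "__main__":
    # Toy instance with n = 3 (indices are 0-based in the code):
    #   f1 = x1*x2 + x3       = 1
    #   f2 = x2*x3 + x1 + 1   = 0
    f1 = ([[0, 1, 0], [0, 0, 0], [0, 0, 0]], [0, 0, 1], 0)
    f2 = ([[0, 0, 0], [0, 0, 1], [0, 0, 0]], [1, 0, 0], 1)
    print(solve_mq_bruteforce([f1, f2], [1, 0], 3))
```

Exhaustive search costs 2^n polynomial evaluations per equation, which is only feasible for toy sizes; the hardness question in the abstract concerns how this cost behaves as the number of variables, the number of equations and the field size grow.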

LNCS 4593, Pp

Analysis of QUAD. Bo-Yin Yang (Academia Sinica and Taiwan Information Security Center), Owen Chia-Hsin Chen (National Taiwan University), Daniel J. Bernstein (University of Illinois at Chicago), and Jiun-Ming Chen (Nat’l Taiwan University and Nat’l Cheng Kung University).

Abstract. In a Eurocrypt 2006 article entitled “QUAD: A Practical Stream Cipher with Provable Security,” Berbain, Gilbert, and Patarin introduced QUAD, a parametrized family of stream ciphers. The article stated that “the security of the novel stream cipher is provably reducible to the intractability of the MQ problem”; this reduction deduces the infeasibility of attacks on QUAD from the hypothesized infeasibility (with an extra looseness factor) of attacks on the well-known hard problem of solving systems of multivariate quadratic equations over finite fields. The QUAD talk at Eurocrypt 2006 reported speeds for QUAD instances with 160-bit state and output block over the fields GF(2), GF(16), and GF(256). This paper discusses both theoretical and practical aspects of attacking QUAD and of attacking the underlying hard problem. For example, this paper shows how to use XL-Wiedemann to break the GF(256) instance QUAD(256, 20, 20) in approximately $2^{66}$ Opteron cycles, and to break the underlying hard problem in approximately $2^{45}$ cycles. For each of the QUAD parameters presented at Eurocrypt 2006, this analysis shows the implications and limitations of the security proofs, pointing out which QUAD instances are not secure, and which ones will never be proven secure.

Voice Encryption Using Twin Stream Cipher Algorithm

Voice Encryption Using Twin Stream Cipher Algorithm. Prepared by Omar Mejbel Hammad Aljouani (401320142). Supervisors: Dr. Hebah H. O. Nasereddin, Dr. Abdulkareem O. Ibadi. Master Thesis Submitted in Partial Fulfillment of the Requirements of the Master Degree in Computer Science, Department of Computer Science, Faculty of Information Technology, Middle East University, Amman, Jordan, January 2016.

In the name of Allah, the Most Gracious, the Most Merciful: “Allah will raise those who have believed among you and those who were given knowledge, by degrees.” Allah Almighty has spoken the truth.

Acknowledgment: I utilize this opportunity to thank everyone who helped me reach this stage and everyone who encouraged me while performing this thesis. I want to thank Dr. Hebah H. O. Nasereddin for her guidance and supervision during the writing of this thesis. Extended thanks are also due to my family and friends who encouraged me during the writing of this thesis. I also want to thank everyone who believes that knowledge is a right for everyone. The greatest thanks go to Assistant Prof. Abdulkareem O. Ibadi, the head of the software engineering department at Baghdad College for Economic Sciences.

Dedication: I dedicate the sum of my modest scientific effort to: the apple of my eye, the Prophet Muhammad, upon him be the best of prayers and the most complete peace; my wounded homeland, Iraq; my martyred brother Suhaib, present though absent; my father, my mother and my sister, the companions of my path and journey; everyone who left a mark on this scientific effort of mine; all the martyrs who fell to the bullets of treachery and betrayal; everyone who prayed for me, guided me and wished me well; the University of Baghdad, and in particular the College of Education Ibn al-Haytham; the Central Preparatory School for Boys.

Table of Contents: AUTHORIZATION STATEMENT ...
