Exokernel: an Operating System Architecture for Application-Level Resource Management

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


The Complete FreeBSD

The Complete FreeBSD® If you find errors in this book, please report them to Greg Lehey <grog@FreeBSD.org> for inclusion in the errata list. The Complete FreeBSD® Fourth Edition Tenth anniversary version, 24 February 2006 Greg Lehey The Complete FreeBSD® by Greg Lehey <[email protected]> Copyright © 1996, 1997, 1999, 2002, 2003, 2006 by Greg Lehey. This book is licensed under the Creative Commons “Attribution-NonCommercial-ShareAlike 2.5” license. The full text is located at http://creativecommons.org/licenses/by-nc-sa/2.5/legalcode. You are free: • to copy, distribute, display, and perform the work • to make derivative works under the following conditions: • Attribution. You must attribute the work in the manner specified by the author or licensor. • Noncommercial. You may not use this work for commercial purposes. This clause is modified from the original by the provision: You may use this book for commercial purposes if you pay me the sum of USD 20 per copy printed (whether sold or not). You must also agree to allow inspection of printing records and other material necessary to confirm the royalty sums. The purpose of this clause is to make it attractive to negotiate sensible royalties before printing. • Share Alike. If you alter, transform, or build upon this work, you may distribute the resulting work only under a license identical to this one. • For any reuse or distribution, you must make clear to others the license terms of this work. • Any of these conditions can be waived if you get permission from the copyright holder. Your fair use and other rights are in no way affected by the above. -

Design of the PATHWORKS for ULTRIX File Server by Anthony J

Design of the PATHWORKS for ULTRIX File Server By Anthony J. Rizzolo, Elizabeth A. Brewer, and Martha A. Chandler 1 Abstract The PATHWORKS for ULTRIX product integrates personal computers with the ULTRIX operating system on a local area network. The software supports both the TCP/IP protocol and the DECnet transport stacks. The design and implementation of the PATHWORKS for ULTRIX file server is based on a client-server model. The server provides file, print, mail, and time services to client PCs on the network. Network file service management is accessed through a PC-style menu interface. The file server's performance was optimized to allow parallelism to occur when the client is generating data at the same time the server is writing the data to disk. 2 Introduction The PATHWORKS for ULTRIX file server connects industry-standard personal computers running Microsoft's server message block (SMB) protocol to Digital computers running the ULTRIX operating system. The server provides a network operating system for PC integration among users of the ULTRIX, DOS, and OS/2 operating systems. The PATHWORKS for ULTRIX server provides file, print, mail, and time services to client PCs on the network. The software is layered on VAX systems and on reduced instruction set computer (RISC) hardware. It supports both the transmission control protocol/internet protocol (TCP/IP) and the DECnet transport stacks. The base product also provides centralized server-based management accessed through a PC-style menu interface. In addition, the PATHWORKS for ULTRIX server implements a network basic I/O system (NetBIOS) naming service that allows clients on the network to obtain the DECnet node address of the server in the DECnet environment or the TCP/IP address of the server in the TCP/IP environment. -

OpenAFS: BSD Clients 2009

OpenAFS: BSD Clients 2009 Matt Benjamin <[email protected]>

Who am I?
● OpenAFS developer interested in various new-code development topics
● for the last while, “portmaster” for BSD clients except DARWIN/MacOS X
● involves evolving the ports, interfacing with users and port maintainers in the BSD communities

Former Maintainers
● Tom Maher, MIT
● Jim Rees, University of Michigan (former Gatekeeper and Elder)
● Garret Wollman, MIT

Other Active
● Ben Kaduk (FreeBSD)
● Tony Jago (FreeBSD)
● Jamie Fournier (NetBSD)

Historical Remarks
● AFS originated in a BSD 4.2 environment
● extend UFS with coherence across a group of machines
● Terminology in common with BSD, SunOS, etc, e.g., vnodes
● Followed SunOS and Ultrix to Solaris and Digital Unix in Transarc period
● 386BSD released at 4.3 level in the Transarc period, some client development never publicly released (or independent of Transarc)

BSD Clients Today
● Descendants of 386BSD distribution and successors, not including DARWIN/MacOS X
● DARWIN separately maintained, though of course there are similarities

Today
● FreeBSD
● OpenBSD

Soon
● NetBSD
● OpenBSD

Not yet supported (as a client):
● Dragonfly BSD

BSD Port History I
● First 386BSD port probably that of John Kohl (MIT), for NetBSD
● First to appear in OpenAFS is FreeBSD, by Tom Maher
● Next to appear in OpenAFS is OpenBSD, by Jim Rees
● Significant evolution -

BSD Based Systems

Overview of BSD Systems and Projects. Main BSD branches. FreeBSD. Homepage: http://www.freebsd.org/ FreeBSD is a free Unix-like operating system descended from AT&T UNIX via the Berkeley Software Distribution (BSD) branch, through 386BSD and 4.4BSD. It runs on processors compatible with the Intel x86 family, as well as on DEC Alpha, UltraSPARC processors from Sun Microsystems, Itanium (IA-64), AMD64, and PowerPC processors. It also runs on the PC-98 architecture. Support for the ARM and MIPS architectures is currently in development. In early 1993, Jordan K. Hubbard, Rod Grimes, and Nate Williams started a project whose goal was to solve problems in the development principles of Jolitz's 386BSD. After consulting the system's users at the time, they settled on the principles and came up with the name FreeBSD. Before actual development had even begun, Jordan Hubbard proposed to the company Walnut Creek CDROM (today BSDi) that it prepare to distribute FreeBSD on CD-ROMs. Walnut Creek CDROM accepted the idea and also provided the (then completely unknown) project with a machine to develop on and a fast Internet connection. Without this help, FreeBSD would hardly have developed to this extent and at this speed. The first distribution of FreeBSD on CD-ROMs (and, of course, on the net) was FreeBSD 1.0, released in December 1993. It was based on Berkeley's 4.3BSD-Lite ("Net/2") tape, and naturally also contained components of 386BSD and many programs from the Free Software Foundation. After Novell bought UNIX from AT&T, Berkeley had to concede that the Net/2 tape contained large portions of UNIX code. -

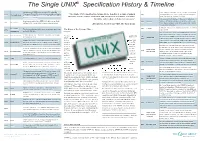

The Single UNIX® Specification History & Timeline

The Single UNIX® Specification History & Timeline

“The Single UNIX Specification brings all the benefits of a single standard operating system, namely application and information portability, scalability, flexibility and freedom of choice for customers” Allen Brown, President and CEO, The Open Group

1969, The Beginning: The history of UNIX starts back in 1969, when Ken Thompson, Dennis Ritchie and others started working on the “little-used PDP-7 in a corner” at Bell Labs and what was to become UNIX.

1971, First Edition: It had an assembler for a PDP-11/20, file system, fork(), roff and ed. It was used for text processing of patent documents.

1972, First UNIX Installations: The first installations had 3 users, no memory protection, and a 500 KB disk.

1973, Fourth Edition: It was rewritten in C. This made it portable and changed the history of OS’s.

1991: UNIX System Laboratories (USL) becomes a company majority-owned by AT&T. Linus Torvalds commences Linux development. Solaris 1.0 debuts.

1992, SVR4.2: USL releases UNIX System V Release 4.2 (Destiny). October - XPG4 Brand launched by X/Open. December 22nd - Novell announces intent to acquire USL. Solaris 2.0 and HP-UX 9.0 ship.

1993, 4.4BSD: 4.4BSD the final release from Berkeley. June 16 - Novell acquires USL.

Late [...]: Novell decides to get out of the UNIX business. Rather than sell the business as a single entity, Novell transfers the rights to the UNIX trademark and the specification [...]

The Story of the License Plate... In 1983 Digital Equipment Corporation [...] the middle of it, [...] -

Tru64 UNIX Compaq C Language Reference Manual

Tru64 UNIX Compaq C Language Reference Manual Order Number: AA–RH9NC–TE August 2000 This document is the language reference manual for Compaq C. Revision Update Information: This revised manual supersedes the DEC C Language Reference Manual (Order No. AA–RH9NB–TE). Software Version: Compaq C for Tru64 UNIX Version 5.1 or higher Compaq C Version 6.2 for OpenVMS Systems Compaq C Version 6.2 for Linux Alpha Compaq Computer Corporation Houston, Texas First Printing, February 1991 Revised, November 1992 Revised, November 1995 Revised, March 1996 Revised, May 1997 Revised, February 1998 Revised, December 1998 Revised, November 1999 Revised, April 2000 Revised, July 2000 Copyright 2000 Compaq Computer Corporation COMPAQ, VAX, and the Compaq logo Registered in U.S. Patent and Trademark Office. Tru64, Alpha, and OpenVMS are trademarks of Compaq Information Technologies Group, L.P. UNIX is a trademark of The Open Group. All other product names mentioned herein may be trademarks or registered trademarks of their respective companies. Confidential computer software. Valid license from Compaq required for possession, use, or copying. Consistent with FAR 12.211 and 12.212, Commercial Computer Software, Computer Software Documentation, and Technical Data for Commercial Items are licensed to the U.S. Government under vendor’s standard commercial license. Compaq shall not be liable for technical or editorial errors or omissions contained herein. The information in this publication is subject to change without notice and is provided "as is" without warranty of any kind. The entire risk arising out of the use of this information remains with recipient. In no event shall Compaq be liable for any direct, consequential, incidental, special, punitive, or other damages whatsoever (including without limitation, damages for loss of business profits, business interruption or loss of business information), even if Compaq has been advised of the possibility of such damages. -

Why Aren't Operating Systems Getting Faster As Fast As Hardware?

OCTOBER 1989 WRL Technical Note TN-11 Why Aren't Operating Systems Getting Faster As Fast As Hardware? John Ousterhout digital Western Research Laboratory 100 Hamilton Avenue Palo Alto, California 94301 USA The Western Research Laboratory (WRL) is a computer systems research group that was founded by Digital Equipment Corporation in 1982. Our focus is computer science research relevant to the design and application of high performance scientific computers. We test our ideas by designing, building, and using real systems. The systems we build are research prototypes; they are not intended to become products. There is a second research laboratory located in Palo Alto, the Systems Research Center (SRC). Other Digital research groups are located in Paris (PRL) and in Cambridge, Massachusetts (CRL). Our research is directed towards mainstream high-performance computer systems. Our prototypes are intended to foreshadow the future computing environments used by many Digital customers. The long-term goal of WRL is to aid and accelerate the development of high-performance uni- and multi-processors. The research projects within WRL will address various aspects of high-performance computing. We believe that significant advances in computer systems do not come from any single technological advance. Technologies, both hardware and software, do not all advance at the same pace. System design is the art of composing systems which use each level of technology in an appropriate balance. A major advance in overall system performance will require reexamination of all aspects of the system. -

Why Is NetBSD So Special? Use Your Favorite Tools and Applications All the Software You Ever Wanted

Why is NetBSD so special? Since NetBSD was founded in 1993, it has always been at the forefront of Open Source operating system development. NetBSD has been the complete foundation or reference for other projects. Many advantages of NetBSD are not found in any other open source operating system: • Support for 55 different hardware platforms today, more will follow: acorn26 acorn32 algor alpha amd64 amiga amigappc arc atari bebox cats cesfic cobalt dreamcast evbarm evbmips evbppc evbsh3 evbsh5 hp300 hp700 hpcarm hpcmips hpcsh i386 luna68k mac68k macppc mipsco mmeye mvme68k mvmeppc netwinder news68k newsmips next68k ofppc pc532 playstation2 pmax pmppc prep sandpoint sbmips sgimips shark sparc sparc64 sun2 sun3 sun68k

Use Your Favorite Tools and Applications. NetBSD contains all the features you would expect in an open source operating system today, including X11, tools for firewalls, and software RAID. With NetBSD’s package tools you can install more than 5400 freely available software packages easily. (See "All the software you ever wanted", below) NetBSD’s binary compatibility feature lets you run applications compiled for other operating systems (for the same CPU architecture). This includes most applications for Linux, Solaris, SCO, FreeBSD, BSD/OS, OSF/1, and Ultrix; and some for Darwin and IRIX. In fact, testing has shown NetBSD runs the Sun JDK/JRE for Linux as well as Linux itself does.

All the software you ever wanted. NetBSD consists of a slim base operating system that can be configured for many uses by adding software from the NetBSD Packages Collection, pkgsrc. The collection includes more than 5400 packages of which we have room here to mention but a few: • Web serving and website development, including Apache (with many modules), PHP, and Jakarta Tomcat -

Alpha, VAX and PDP-11 Virtualization

Alpha, VAX and PDP-11 Virtualization: An overview of CHARON products. OpenVMS Tech. Update, Bad Homburg, Sep 17-18, 2009. Dogan Baser / Sep 2009. Copyright 2001-2009 Stromasys SA 01-20-017-05

Contents
• Stromasys SA
• Legacy DEC systems
• Hardware virtualization
• CHARON products overview
• Benefits

Stromasys SA
• Former European application migration department of Digital Equipment Corporation (DEC)
• Management buy-out in 1998 → Software Resources International SRI
• Renamed to Stromasys SA in 2008
• Headquarters in Geneva, Switzerland
• Focus on preserving software investments via
– Hardware emulators (VAX, PDP-11, Alpha), and
– Application source code migrations
• Sales and support channel worldwide

IT applications are socio-technical systems

DEC computers
Programmable Data Processor PDP (PDP-11: 16 bit)
Virtual Address eXtension VAX (32 bit)
Alpha Extended VAX (64 bit)

PDP-11
• Sold by DEC between 1970 and 1997
• First real time process control computer in the industry sold in large quantities
• Several bus structures: UNIbus, Qbus, Massbus
• Cloned several times in the Eastern Bloc countries
– SM-4, SM-1420, SM-1600, Elektronika BK-0010, DVK, UKNC (Soviet Union)
– SM-4, SM-1420, IZOT-1016 (Bulgaria)
– SM-1420 (East Germany)
– Mera (Poland)
– SM-4 (Hungary)
– I-102 (Romania)
• Several operating systems by DEC and others:
– DOS/BATCH, -

Attachment 1 Version 6.3 of the TCP/IP Daemon Wrapper Package

Attachment 1 Version 6.3 of the TCP/IP daemon wrapper package.

Table of contents
1 - Introduction
2 - Disclaimer
3 - Tutorials
3.1 - How it works
3.2 - Where the logging information goes
4 - Features
4.1 - Access control
4.2 - Host name spoofing
4.3 - Host address spoofing
4.4 - Remote username lookups
4.5 - Language extensions
5 - Other works
5.1 - Related documents
5.2 - Related software
6 - Limitations
6.1 - Known wrapper limitations
6.2 - Known system software bugs
7 - Configuration and installation
7.1 - Easy configuration and installation
7.2 - Advanced configuration and installation
7.3 - Daemons with arbitrary path names
7.4 - Building and testing the access control rules
7.5 - Other applications
8 - Acknowledgements

1 - Introduction

With this package you can monitor and filter incoming requests for the SYSTAT, FINGER, FTP, TELNET, RLOGIN, RSH, EXEC, TFTP, TALK, and other network services. It supports both 4.3BSD-style sockets and System V.4-style TLI. Praise yourself lucky if you don't know what that means. The package provides tiny daemon wrapper programs that can be installed without any changes to existing software or to existing configuration files. The wrappers report the name of the remote host and of the requested service; the wrappers do not exchange information with the remote client process, and impose no overhead on the actual communication between the client and server applications. Optional features are: access control to restrict what systems can connect to your network daemons; remote user name lookups with the RFC 931 protocol; additional protection against hosts that pretend to have someone else's host name; additional protection against hosts that pretend to have someone else's host address. -

New Faces of FreeBSD Sept/Oct 2016

new faces of FreeBSD BY DRU LAVIGNE This column aims to shine a spotlight on contributors who recently received their commit bit and to introduce them to the FreeBSD community. This month, the spotlight is on Eric Badger and Toomas Soome, who became src committers in July and August 2016.

Tell us a bit about yourself, your background, and your interests.

Eric: I'm a software engineer at Dell Compellent, based in Minnesota, USA. I work on the Platform OS team in charge of the FreeBSD operating system running on Dell Storage controllers. I've been doing this for a little more than two years. I've learned a ton about FreeBSD working at Dell, partly from solving problems that arise in the OS and partly from the various smart people that I get to work with. I'm interested in lots of things; nearly anything low level-ish can capture my interest. The things I've actually had time to work on have been mostly oriented towards networking, debugging tools (like gdb or DTrace), and storage devices.

[...] For a fun fact, we actually started with www using the CERN sources for both the server and browser. Later on I was working as pre-sales engineer for a local Sun Microsystems reseller, which meant less compiling but still a lot of integration, architecting, and deep diving into almost every layer of IT infrastructure. For other interests, snowboarding in winter and skydiving in summer. Although, due to time issues, those activities have been somewhat diminished.

How did you first learn about FreeBSD and what about FreeBSD interested you?

Eric: I encountered FreeBSD at my first computer -

Improving NetBSD/mips

Improving NetBSD/mips [email protected]

Historical Overview
• My first MIPS experience was back in 1988 when I helped port ULTRIX to the DECstation 3100 (pmax) for Digital Equipment Corporation.
• NetBSD gained its initial MIPS support 6 years later when it imported the BSD 4.4-lite pmax code.
• Generic MIPS code was split off from pmax in 1998 when newmips was added. Most other mips ports were added between 1999 and 2003.

The MIPS Architecture
• MIPS-1 (R3000/R2000), MIPS-2
‣ 32-bit, no locking (LL/SC) instructions, simple TLB
• MIPS-3 (R4000+, 64-bit), MIPS64
‣ 64-bit, locking (LL/SC) instructions, dual entry TLB
• MIPS32
‣ 32-bit, locking instructions, dual entry TLB

The MIPS Architecture Continued
• 32-bit address space is split into 4 regions (1 user, 3 kernel)
• Addresses are signed (positive addresses are for user and negative addresses are for the kernel)
• KSEG0/KSEG1 map the first 512MB of physical memory
Address map, from top to bottom:
C0000000 to FFFFFFFF: KSEG2 (1GB, TLB Mapped)
A0000000 to BFFFFFFF: KSEG1 (512MB, Uncached Direct Mapped)
80000000 to 9FFFFFFF: KSEG0 (512MB, Cached Direct Mapped)
00000000 to 7FFFFFFF: User (2GB, TLB Mapped)

The MIPS Architecture Continued
• 64-bit address space is split into 4 equal-sized regions (user, supervisor, kernel direct mapped (XKPHYS), kernel TLB mapped (XKSEG))
• Addresses are signed (positive addresses are user and supervisor while negative addresses are for the kernel)
• KSEG0/KSEG1 map first 512MB of physical memory
• XKPHYS direct maps all of physical memory (using one of 8 CCAs)

MIPS ABIs
• O32 came first and is still the only ABI used for 32-bit only CPUs.
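
The 32-bit MIPS address map described in this excerpt can be illustrated with a few lines of address arithmetic. The sketch below is not taken from the slides or from NetBSD; the function and constant names are hypothetical, and it only assumes the segment boundaries quoted above (80000000, A0000000, C0000000) and the 512MB direct-mapped window.

```python
# Illustrative sketch of the 32-bit MIPS address map from the slides.
# All names here are hypothetical; only the segment boundaries come from the text.

KSEG0_BASE = 0x80000000       # 512MB, cached, direct mapped
KSEG1_BASE = 0xA0000000       # 512MB, uncached, direct mapped
KSEG2_BASE = 0xC0000000       # 1GB, TLB mapped
DIRECT_MAP_SIZE = 0x20000000  # 512MB window shared by KSEG0 and KSEG1


def segment(vaddr):
    """Return the name of the region that a 32-bit virtual address falls in."""
    if not 0 <= vaddr <= 0xFFFFFFFF:
        raise ValueError("not a 32-bit address")
    if vaddr >= KSEG2_BASE:
        return "KSEG2"
    if vaddr >= KSEG1_BASE:
        return "KSEG1"
    if vaddr >= KSEG0_BASE:
        return "KSEG0"
    return "USER"


def as_signed(vaddr):
    """Interpret a 32-bit address as signed: kernel addresses come out negative."""
    return vaddr - (1 << 32) if vaddr & 0x80000000 else vaddr


def direct_mapped_to_phys(vaddr):
    """Translate a KSEG0 or KSEG1 address to its physical address (no TLB involved)."""
    if segment(vaddr) not in ("KSEG0", "KSEG1"):
        raise ValueError("address is TLB mapped, not direct mapped")
    return vaddr & (DIRECT_MAP_SIZE - 1)


def phys_to_kseg0(paddr):
    """Cached kernel alias of a physical address in the first 512MB."""
    if not 0 <= paddr < DIRECT_MAP_SIZE:
        raise ValueError("only the first 512MB is reachable through KSEG0")
    return KSEG0_BASE | paddr


def phys_to_kseg1(paddr):
    """Uncached kernel alias of a physical address in the first 512MB."""
    if not 0 <= paddr < DIRECT_MAP_SIZE:
        raise ValueError("only the first 512MB is reachable through KSEG1")
    return KSEG1_BASE | paddr
```

For example, `phys_to_kseg0(0x1000)` and `phys_to_kseg1(0x1000)` give two kernel aliases of the same physical page, one cached and one uncached, and `as_signed` shows why the slides call kernel addresses "negative".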