A Tutorial on Support Vector Machines for Pattern Recognition

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Distributions: The Evolution of a Mathematical Theory

Historia Mathematica 10 (1983) 149-183

DISTRIBUTIONS: THE EVOLUTION OF A MATHEMATICAL THEORY
BY JOHN SYNOWIEC, INDIANA UNIVERSITY NORTHWEST, GARY, INDIANA 46408

SUMMARIES

The theory of distributions, or generalized functions, evolved from various concepts of generalized solutions of partial differential equations and generalized differentiation. Some of the principal steps in this evolution are described in this paper.

La théorie des distributions, ou des fonctions généralisées, s'est développée à partir de divers concepts de solutions généralisées d'équations aux dérivées partielles et de différentiation généralisée. Quelques-unes des principales étapes de cette évolution sont décrites dans cet article.

Die Theorie der Distributionen oder verallgemeinerten Funktionen entwickelte sich aus verschiedenen Begriffen von verallgemeinerten Lösungen der partiellen Differentialgleichungen und der verallgemeinerten Ableitungen. In diesem Artikel werden einige der wesentlichen Schritte dieser Entwicklung beschrieben.

1. INTRODUCTION

The founder of the theory of distributions is rightly considered to be Laurent Schwartz. Not only did he write the first systematic paper on the subject [Schwartz 1945], which, along with another paper [1947-1948], already contained most of the basic ideas, but he also wrote expository papers on distributions for electrical engineers [1948]. Furthermore, he lectured effectively on his work, for example, at the Canadian Mathematical Congress [1949]. He showed how to apply distributions to partial differential equations [1950b] and wrote the first general treatise on the subject [1950a, 1951]. Recognition of his contributions came in 1950 when he was awarded the Fields' Medal at the International Congress of Mathematicians, and in his acceptance address to the congress Schwartz took the opportunity to announce his celebrated "kernel theorem" [1950c].

Proper Base Change for Separated Locally Proper Maps (arXiv:1404.7630v2 [math.AT], 5 Nov 2014)

PROPER BASE CHANGE FOR SEPARATED LOCALLY PROPER MAPS
OLAF M. SCHNÜRER AND WOLFGANG SOERGEL
arXiv:1404.7630v2 [math.AT] 5 Nov 2014

Abstract. We introduce and study the notion of a locally proper map between topological spaces. We show that fundamental constructions of sheaf theory, more precisely proper base change, projection formula, and Verdier duality, can be extended from continuous maps between locally compact Hausdorff spaces to separated locally proper maps between arbitrary topological spaces.

Contents
1. Introduction
2. Locally proper maps
3. Proper direct image
4. Proper base change
5. Derived proper direct image
6. Projection formula
7. Verdier duality
8. The case of unbounded derived categories
9. Reminders from topology
10. Reminders from sheaf theory
11. Representability and adjoints
12. Remarks on derived functors
References

1. Introduction

The proper direct image functor $f_!$ and its right derived functor $Rf_!$ are defined for any continuous map $f : Y \to X$ of locally compact Hausdorff spaces, see [KS94, Spa88, Ver66]. Instead of $f_!$ and $Rf_!$ we will use the notation $f_{(!)}$ and $f_!$ for these functors. They are embedded into a whole collection of formulas known as the six-functor-formalism of Grothendieck. Under the assumption that the proper direct image functor $f_{(!)}$ has finite cohomological dimension, Verdier proved that its derived functor $f_!$ admits a right adjoint $f^!$.

Olaf Schnürer was supported by the SPP 1388 and the SFB/TR 45 of the DFG. Wolfgang Soergel was supported by the SPP 1388 of the DFG.

In this article we introduce in 2.3 the notion of a locally proper map between topological spaces and show that the above results even hold for arbitrary topological spaces if all maps whose proper direct image functors are involved are locally proper and separated.

MATH 418 Assignment #7 1. Let R, S, T Be Positive Real Numbers Such That R

MATH 418 Assignment #7

1. Let $r, s, t$ be positive real numbers such that $r + s + t = 1$. Suppose that $f, g, h$ are nonnegative measurable functions on a measure space $(X, S, \mu)$. Prove that

$$\int_X f^r g^s h^t \, d\mu \le \|f\|_1^r \, \|g\|_1^s \, \|h\|_1^t .$$

Proof. Let $u := g^{s/(s+t)} h^{t/(s+t)}$. Then $f^r g^s h^t = f^r u^{s+t}$. By Hölder's inequality we have

$$\int_X f^r u^{s+t} \, d\mu \le \left( \int_X (f^r)^{1/r} \, d\mu \right)^{r} \left( \int_X (u^{s+t})^{1/(s+t)} \, d\mu \right)^{s+t} = \|f\|_1^r \, \|u\|_1^{s+t} .$$

For the same reason, we have

$$\|u\|_1 = \int_X u \, d\mu = \int_X g^{s/(s+t)} h^{t/(s+t)} \, d\mu \le \|g\|_1^{s/(s+t)} \, \|h\|_1^{t/(s+t)} .$$

Consequently,

$$\int_X f^r g^s h^t \, d\mu \le \|f\|_1^r \, \|u\|_1^{s+t} \le \|f\|_1^r \, \|g\|_1^s \, \|h\|_1^t .$$

2. Let $C_c(\mathbb{R})$ be the linear space of all continuous functions on $\mathbb{R}$ with compact support.

(a) For $1 \le p < \infty$, prove that $C_c(\mathbb{R})$ is dense in $L_p(\mathbb{R})$.

Proof. Let $X$ be the closure of $C_c(\mathbb{R})$ in $L_p(\mathbb{R})$. Then $X$ is a linear space. We wish to show $X = L_p(\mathbb{R})$. First, if $I = (a, b)$ with $-\infty < a < b < \infty$, then $\chi_I \in X$. Indeed, for $n \in \mathbb{N}$, let $g_n$ be the function on $\mathbb{R}$ given by $g_n(x) := 1$ for $x \in [a + 1/n, b - 1/n]$, $g_n(x) := 0$ for $x \in \mathbb{R} \setminus (a, b)$, $g_n(x) := n(x - a)$ for $a \le x \le a + 1/n$ and $g_n(x) := n(b - x)$ for $b - 1/n \le x \le b$.
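The three-function inequality of problem 1 in this excerpt can be sanity-checked numerically. The following is only a sketch under stated assumptions: the measure space is taken to be a finite set with point weights `mu`, so every integral is a weighted sum, and the names `integral` and `holder_sides` as well as the sample data are hypothetical illustrations, not part of the assignment.

```python
def integral(values, mu):
    """Integral over a finite measure space: sum of value times point mass."""
    return sum(v * m for v, m in zip(values, mu))


def holder_sides(f, g, h, mu, r, s, t):
    """Return (lhs, rhs) of  int f^r g^s h^t dmu <= ||f||_1^r ||g||_1^s ||h||_1^t.

    Assumes f, g, h are nonnegative and r, s, t are positive with r + s + t = 1.
    """
    lhs = integral([a ** r * b ** s * c ** t for a, b, c in zip(f, g, h)], mu)
    rhs = integral(f, mu) ** r * integral(g, mu) ** s * integral(h, mu) ** t
    return lhs, rhs


# Hypothetical sample data on a three-point measure space.
mu = [0.5, 1.0, 2.0]
f = [1.0, 4.0, 0.0]
g = [2.0, 1.0, 3.0]
h = [0.5, 2.0, 1.0]

lhs, rhs = holder_sides(f, g, h, mu, 0.2, 0.3, 0.5)
print(lhs <= rhs)

# When f = g = h both sides reduce to the integral of f (up to rounding).
lhs_eq, rhs_eq = holder_sides(f, f, f, mu, 0.2, 0.3, 0.5)
```

A numerical check like this does not replace the proof; it only confirms that the exponents line up as stated.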

(Measure Theory for Dummies) UWEE Technical Report Number UWEETR-2006-0008

A Measure Theory Tutorial (Measure Theory for Dummies)
Maya R. Gupta {gupta}@ee.washington.edu
Dept of EE, University of Washington, Seattle WA, 98195-2500
UWEE Technical Report Number UWEETR-2006-0008, May 2006
Department of Electrical Engineering, University of Washington, Box 352500, Seattle, Washington 98195-2500
PHN: (206) 543-2150 FAX: (206) 543-3842 URL: http://www.ee.washington.edu

Abstract

This tutorial is an informal introduction to measure theory for people who are interested in reading papers that use measure theory. The tutorial assumes one has had at least a year of college-level calculus, some graduate level exposure to random processes, and familiarity with terms like “closed” and “open.” The focus is on the terms and ideas relevant to applied probability and information theory. There are no proofs and no exercises. Measure theory is a bit like grammar, many people communicate clearly without worrying about all the details, but the details do exist and for good reasons. There are a number of great texts that do measure theory justice. This is not one of them. Rather this is a hack way to get the basic ideas down so you can read through research papers and follow what’s going on. Hopefully, you’ll get curious and excited enough about the details to check out some of the references for a deeper understanding.

Mathematics Support Class Can Succeed and Other Projects To

Mathematics Support Class Can Succeed and Other Projects to Assist Success
Georgia Department of Education, Divisions for Special Education Services and Supports, 1870 Twin Towers East, Atlanta, Georgia 30334
Georgia Department of Education, Kathy Cox, State Superintendent of Schools

Overall Content
• Foundations for Success (Math Panel Report)
• Effective Instruction
• Mathematical Support Class
• Resources
• Technology

FOUNDATIONS FOR SUCCESS
National Mathematics Advisory Panel Final Report, March 2008

Math Panel Report
• Two Major Themes
• Curricular Content
• Learning Processes
• Instructional Practices

Two Major Themes

First Things First
• Positive results can be achieved in a reasonable time at accessible cost by addressing clearly important things now.
• A consistent, wise, community-wide effort will be required.

Learning as We Go Along
• In some areas, adequate research does not exist.
• The community will learn more later on the basis of carefully evaluated practice and research.
• We should follow a disciplined model of continuous improvement.

Curricular Content
Streamline the Mathematics Curriculum in Grades PreK-8:
• Focus on the Critical Foundations for Algebra
  – Fluency with Whole Numbers
  – Fluency with Fractions
  – Particular Aspects of Geometry and Measurement
• Follow a Coherent Progression, with Emphasis on Mastery of Key Topics
Avoid Any Approach that Continually Revisits Topics without Closure

Learning Processes
• Scientific Knowledge on Learning and Cognition Needs to be Applied to the Classroom to Improve Student Achievement:
  – To prepare students for Algebra, the curriculum must simultaneously develop conceptual understanding, computational fluency, factual knowledge and problem solving skills.

Stone-Weierstrass Theorems for the Strict Topology

STONE-WEIERSTRASS THEOREMS FOR THE STRICT TOPOLOGY
CHRISTOPHER TODD

1. Let X be a locally compact Hausdorff space, E a (real) locally convex, complete, linear topological space, and (C*(X, E), β) the locally convex linear space of all bounded continuous functions on X to E topologized with the strict topology β. When E is the real numbers we denote C*(X, E) by C*(X) as usual. When E is not the real numbers, C*(X, E) is not in general an algebra, but it is a module under multiplication by functions in C*(X). This paper considers a Stone-Weierstrass theorem for (C*(X), β), a generalization of the Stone-Weierstrass theorem for (C*(X, E), β), and some of the immediate consequences of these theorems. In the second case (when E is arbitrary) we replace the question of when a subalgebra generated by a subset S of C*(X) is strictly dense in C*(X) by the corresponding question for a submodule generated by a subset S of the C*(X)-module C*(X, E). In what follows the symbols $C_0(X, E)$ and $C_{00}(X, E)$ will denote the subspaces of C*(X, E) consisting respectively of the set of all functions on X to E which vanish at infinity, and the set of all functions on X to E with compact support. Recall that the strict topology is defined as follows [2]:

Definition. The strict topology (β) is the locally convex topology defined on C*(X, E) by the seminorms

$$\|f\|_{\varphi, v} = \sup_{x \in X} |\varphi(x) f(x)|_v ,$$

where $v$ ranges over the indexed topology on E and $\varphi$ ranges over $C_0(X)$.

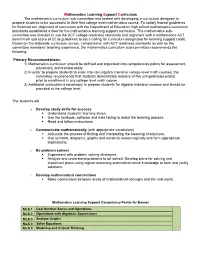

Learning Support Competencies-Math

Mathematics Learning Support Curriculum

The mathematics curriculum sub-committee was tasked with developing a curriculum designed to prepare students to be successful in their first college level mathematics course. To satisfy federal guidelines for financial aid, alignment of curriculum with the Department of Education high school mathematics curriculum standards established a floor for the mathematics learning support curriculum. The mathematics sub-committee was directed to use the ACT college readiness standards and alignment with a mathematics ACT benchmark sub score of 22 as guidelines to set a ceiling for curriculum designated for learning support credit. Based on the statewide curriculum survey, comparisons with ACT readiness standards, and the committee members’ teaching experience, the mathematics curriculum sub-committee recommends the following:

Primary Recommendations:
1) Mathematics curriculum should be defined and organized into competency points for assessment, placement, and transferability.
2) In order to prepare students to enter into non-algebra intensive college-level math courses, the committee recommends that students demonstrate mastery of five competency points prior to enrollment in any college level math course.
3) Additional curriculum is necessary to prepare students for algebra intensive courses and should be provided at the college level.

The students will
• Develop study skills for success
  – Understand students’ learning styles.
  – Use the textbook, software and note taking to assist the learning process.
  – Read and follow instructions.
• Communicate mathematically (with appropriate vocabulary)
  – Articulate the process of finding and interpreting the meaning of solutions.
  – Use symbols, diagrams, graphs and words to reason logically and form appropriate implications.
• Be problem solvers
  – Experiment with problem solving strategies.

Unify Manifold System Hot Runner Installation Manual

Unify Manifold System Hot Runner Installation Manual
Original Instructions
Issue: v 2.2, March 2021
Document No.: 7593574

This product manual is intended to provide information for safe operation and/or maintenance. Husky reserves the right to make changes to products in an effort to continually improve the product features and/or performance. These changes may result in different and/or additional safety measures that are communicated to customers through bulletins as changes occur.

This document contains information which is the exclusive property of Husky Injection Molding Systems Limited. Except for any rights expressly granted by contract, no further publication or commercial use may be made of this document, in whole or in part, without the prior written permission of Husky Injection Molding Systems Limited. Notwithstanding the foregoing, Husky Injection Molding Systems Limited grants permission to its customers to reproduce this document for limited internal use only.

Husky® product or service names or logos referenced in these materials are trademarks of Husky Injection Molding Systems Ltd. and may be used by certain of its affiliated companies under license. All third-party trademarks are the property of the respective third-party and may be protected by applicable copyright, trademark or other intellectual property laws and treaties. Each such third-party expressly reserves all rights into such intellectual property.

©2016 – 2021 Husky Injection Molding Systems Ltd. All rights reserved.

General Information

Telephone Support Numbers
North America: Toll free 1-800-465-HUSKY (4875)
Europe: EC (most countries) 008000 800 4300; Direct and Non-EC + (352) 52115-4300
Asia: Toll free 800-820-1667; Direct +86-21-3849-4520
Latin America: Brazil +55-11-4589-7200; Mexico +52-5550891160 option 5

For on-site service, contact your nearest Husky Regional Service and Sales office.

Tempered Distributions and the Fourier Transform

CHAPTER 1
Tempered distributions and the Fourier transform

Microlocal analysis is a geometric theory of distributions, or a theory of geometric distributions. Rather than study general distributions - which are like general continuous functions but worse - we consider more specific types of distributions which actually arise in the study of differential and integral equations. Distributions are usually defined by duality, starting from very "good" test functions; correspondingly a general distribution is everywhere "bad". The conormal distributions we shall study implicitly for a long time, and eventually explicitly, are usually good, but like (other) people have a few interesting faults, i.e. singularities. These singularities are our principal target of study. Nevertheless we need the general framework of distribution theory to work in, so I will start with a brief introduction. This is designed either to remind you of what you already know or else to send you off to work it out. (As noted above, I suggest Friedlander's little book [4] - there is also a newer edition with Joshi as coauthor - as a good introduction to distributions.) Volume 1 of Hörmander's treatise [8] has all that you would need; it is a good general reference. Proofs of some of the main theorems are outlined in the problems at the end of the chapter.

1.1. Schwartz test functions

To fix matters at the beginning we shall work in the space of tempered distributions. These are defined by duality from the space of Schwartz functions, also called the space of test functions of rapid decrease.

Distributions: How to Think About the Dirac Delta Function

1 Distributions on $\mathbb{R}$

Definition 1. Let $X$ be a topological space, let $f : X \to \mathbb{C}$, then define the support of $f$ to be the set $\operatorname{supp}(f) = \{x \in X : f(x) \neq 0\}$.

Definition 2. Let $\Omega \subset \mathbb{R}^n$, then define $D(\Omega) \subset C^\infty(\Omega)$ to be the space of infinitely differentiable (partials of all orders) functions $f : \mathbb{R}^n \to \mathbb{R}$ which have compact support. We can see that $D(\Omega)$ is a real vector space, an algebra under pointwise multiplication, and an ideal in $C^\infty(\Omega)$. We can always think of $D(\Omega) \subset D(\mathbb{R}^n)$, and for brevity I will call $D(\mathbb{R}) = D$.

Definition 3 (Convergence in $D(\mathbb{R}^n)$). Let $\{\varphi_i\}$ be a sequence in $D(\mathbb{R}^n)$ and let $\varphi \in D(\mathbb{R}^n)$, then we say that $\{\varphi_i\} \to \varphi$ if
1. $\operatorname{supp}(\varphi_i) \subset K$ for some compact $K \subset \mathbb{R}^n$ for all $i \in \mathbb{N}$.
2. $\{D^p \varphi_i\} \to D^p \varphi$ uniformly for each $p \in \mathbb{N}^n$.
Is this topology defined by some semi-norm?

Theorem 4. The space $D(\mathbb{R}^n)$ is dense in $C_0(\mathbb{R}^n)$, the space of continuous real functions of compact support with topology given by uniform convergence, i.e. for any $f \in C_0(\mathbb{R}^n)$ with $\operatorname{supp}(f) \subset U$ for some open $U \subset \mathbb{R}^n$ and for any $\varepsilon > 0$, there exists a $\varphi \in D(\mathbb{R}^n)$ with $\operatorname{supp}(\varphi) \subset U$, and such that for all $x \in \mathbb{R}^n$, $|f(x) - \varphi(x)| < \varepsilon$.

Definition 5. A distribution $T$ is a continuous linear map $T : D(\mathbb{R}^n) \to \mathbb{C}$, i.e.
1. $T(\alpha\varphi + \beta\psi) = \alpha T(\varphi) + \beta T(\psi)$ for all $\alpha, \beta \in \mathbb{C}$, $\varphi, \psi \in D$.

Understanding Academic Language in edTPA

Academic Language Handout: Secondary Mathematics Version 01 Candidate Support Resource Understanding Academic Language in edTPA: Supporting Learning and Language Development Academic language (AL) is the oral and written language used for academic purposes. AL is the "language of the discipline" used to engage students in learning and includes the means by which students develop and express content understandings. When completing their edTPA, candidates must consider the AL (i.e., language demands) present throughout the learning segment in order to support student learning and language development. The language demands in Secondary Mathematics include function, vocabulary, discourse, syntax, and mathematical precision. As stated in the edTPA handbook: Candidates identify a key language function and one essential learning task within their learning segment lesson plans that allows students to practice the function (Planning Task 1, Prompts 4a/b). Candidates are then asked to identify vocabulary and one additional language demand related to the language function and learning task (Planning Task 1, Prompt 4c). Finally, candidates must identify and describe the instructional and/or language supports they have planned to address the language demands (Planning Task 1, Prompt 4d). Language supports are scaffolds, representations, and instructional strategies that teachers intentionally provide to help learners understand and use the language they need to learn within disciplines. It is important to realize that not all learning tasks focus on both discourse and syntax. As candidates decide which additional language demands (i.e., syntax and/or discourse) are relevant to their identified function, they should examine the language understandings and use that are most relevant to the learning task they have chosen. -

1 Support Vector Machine (Continued)

CS369M: Algorithms for Modern Massive Data Set Analysis
Lecture 7 - 10/14/2009
Reproducing Kernel Hilbert Spaces and Kernel-based Learning Methods (2 of 2)
Lecturer: Michael Mahoney. Scribes: Mark Wagner and Weidong Shao
*Unedited Notes

1 Support Vector Machine (continued)

Given $(\vec{x}_i, y_i) \in \mathbb{R}^n \times \{-1, +1\}$, we want to find a good classification hyperplane.

Assumptions: data are linearly separable, i.e., there exists a hyperplane such that

$$\langle w, x \rangle + b \ge 1 \ \text{ if } y = 1, \qquad \langle w, x \rangle + b \le -1 \ \text{ if } y = -1,$$

or, equivalently, $y_i (\langle w, x_i \rangle + b) \ge 1$.

To decide on a hyperplane, we want to maximize the margin.

1.1 Problem statement (Primal)

$$\min_{w, b} \ \frac{1}{2} \|w\|_2^2 \quad \text{s.t.} \quad y_i (\langle w, x_i \rangle + b) \ge 1 .$$

The Lagrangian for the above problem is

$$L(w, b, \alpha) = \frac{1}{2} \|w\|^2 - \sum_{i=1}^{n} \alpha_i \big( y_i (\langle w, x_i \rangle + b) - 1 \big) .$$

We can view this as a 2-player game, i.e.

$$\min_{w, b} \ \max_{\alpha \ge 0} \ L(w, b, \alpha),$$

where player A chooses $w$ and $b$ while player B chooses $\alpha$. In particular, if A chooses an infeasible point (i.e. a constraint is violated), B can make the expression as large as possible. If A chooses a feasible point, then for all $i$ such that $y_i (\langle w, x_i \rangle + b) - 1 > 0$ we must have $\alpha_i = 0$. So if the point is feasible, A is optimizing $\frac{1}{2} \|w\|^2$.

Alternatively, consider the “dual game”

$$\max_{\alpha \ge 0} \ \min_{w, b} \ L(w, b, \alpha) \ \le \ \min_{w, b} \ \max_{\alpha \ge 0} \ L(w, b, \alpha),$$

which is weak duality. But for a wide class of objectives the equality holds (i.e., there is no duality gap; minimax).
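The two-player reading of the Lagrangian in this excerpt can be illustrated with a small numerical sketch. Everything below is an assumption made for illustration rather than part of the lecture notes: the two-point dataset, the helper names `dot`, `margins`, `lagrangian` and `inner_max`, and the particular choices of $w$, $b$ and $\alpha$.

```python
import math


def dot(u, v):
    """Euclidean inner product <u, v>."""
    return sum(a * b for a, b in zip(u, v))


def margins(w, b, X, y):
    """Constraint slacks y_i (<w, x_i> + b) - 1, one per sample."""
    return [yi * (dot(w, xi) + b) - 1.0 for xi, yi in zip(X, y)]


def lagrangian(w, b, alpha, X, y):
    """L(w, b, alpha) = 0.5 ||w||^2 - sum_i alpha_i (y_i (<w, x_i> + b) - 1)."""
    penalty = sum(a * m for a, m in zip(alpha, margins(w, b, X, y)))
    return 0.5 * dot(w, w) - penalty


def inner_max(w, b, X, y):
    """Player B's best response: max over alpha >= 0 of L(w, b, alpha).

    Feasible (w, b): B can do no better than alpha = 0, giving 0.5 ||w||^2.
    Infeasible (w, b): B can push the value to +infinity.
    """
    if all(m >= 0 for m in margins(w, b, X, y)):
        return 0.5 * dot(w, w)
    return math.inf


# Hypothetical linearly separable toy data: one point per class.
X = [(1.0, 0.0), (-1.0, 0.0)]
y = [1, -1]

# Feasible choice by player A: any alpha >= 0 can only lower the value.
feasible_value = lagrangian((2.0, 0.0), 0.0, [0.5, 0.5], X, y)
feasible_best = inner_max((2.0, 0.0), 0.0, X, y)

# Infeasible choice by player A: the value grows without bound in alpha.
infeasible_small = lagrangian((0.5, 0.0), 0.0, [10.0, 10.0], X, y)
infeasible_large = lagrangian((0.5, 0.0), 0.0, [1000.0, 1000.0], X, y)
infeasible_best = inner_max((0.5, 0.0), 0.0, X, y)
```

In this sketch the inner maximum equals the primal objective exactly on the feasible set and is infinite elsewhere, which is why minimizing it over $w$ and $b$ recovers the primal problem.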