Make Big Data Agile: Improve Reliability and Trustworthiness of Big Data by Aligning Process and Data

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Table of Contents

The Kimball Group Reader: Relentlessly Practical Tools for Data Warehousing and Business Intelligence, Remastered Collection. Ralph Kimball and Margy Ross with Bob Becker, Joy Mundy, and Warren Thornthwaite.

Contents

- Introduction
- 1 The Reader at a Glance
  - Setting Up for Success
    - 1.1 Resist the Urge to Start Coding
    - 1.2 Set Your Boundaries
  - Tackling DW/BI Design and Development
    - 1.3 Data Wrangling
    - 1.4 Myth Busters
    - 1.5 Dividing the World
    - 1.6 Essential Steps for the Integrated Enterprise Data Warehouse
    - 1.7 Drill Down to Ask Why
    - 1.8 Slowly Changing Dimensions
    - 1.9 Judge Your BI Tool through Your Dimensions
    - 1.10 Fact Tables
    - 1.11 Exploit Your Fact Tables
- 2 Before You Dive In
  - Before Data Warehousing
    - 2.1 History Lesson on Ralph Kimball and Xerox PARC
  - Historical Perspective
    - 2.2 The Database Market Splits
    - 2.3 Bringing Up Supermarts
  - Dealing with Demanding Realities
    - 2.4 Brave New Requirements for Data Warehousing
    - 2.5 Coping with the Brave New Requirements
    - 2.6 Stirring Things Up
    - 2.7 Design Constraints and Unavoidable Realities
    - 2.8 Two Powerful Ideas
    - 2.9 Data Warehouse Dining Experience
    - 2.10 Easier Approaches for Harder Problems
    - 2.11 Expanding Boundaries of the Data Warehouse
- 3 Project/Program Planning
  - Professional Responsibilities
    - 3.1 Professional Boundaries
    - 3.2 An Engineer’s View
    - 3.3 Beware the Objection Removers

Basically Speaking, Inmon Professes the Snowflake Schema While Kimball Relies on the Star Schema

What is the main difference between Inmon and Kimball? Basically speaking, Inmon professes the Snowflake Schema while Kimball relies on the Star Schema.

According to Ralph Kimball: Kimball views data warehousing as a constituency of data marts. Data marts are focused on delivering business objectives for departments in the organization, and the data warehouse is a conformed dimension of the data marts. Hence a unified view of the enterprise can be obtained from dimensional modeling at a local departmental level. He follows a bottom-up approach, i.e. he first creates individual data marts from the existing sources and then creates the data warehouse. KIMBALL: first data marts, combined, then the data warehouse.

According to Bill Inmon: Inmon believes in creating a data warehouse on a subject-by-subject area basis. Hence the development of the data warehouse can start with data from their needs arise. Point-of-sale (POS) data can be added later if management decides it is necessary. He follows a top-down approach, i.e. he first creates the data warehouse from the existing sources and then creates individual data marts. INMON: first the data warehouse, later the data marts.

The main difference is:

- Kimball follows dimensional modeling; Inmon follows ER modeling.
- Kimball creates data marts first and then combines them to form a data warehouse; Inmon creates the data warehouse first and then the data marts.

What is the difference between Views and Materialized Views?

Views:

- A view stores the SQL statement in the database and lets you use it as a table. Every time you access the view, the SQL statement executes.
- A view is a pseudo table that is not stored in the database; it is just a query.
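The description of views above can be illustrated with a small sketch. It uses Python's built-in `sqlite3` module; the table and column names (`sales`, `v_total`, `mv_total`) and the helper `refresh_snapshot` are hypothetical, chosen only for illustration. SQLite has no materialized views, so a plain snapshot table stands in for one here, on the assumption that a materialized view stores its query result physically and must be refreshed to pick up changes.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)", [("north", 10), ("south", 20)]
)

# A view stores only the query text; the query runs again on every access.
conn.execute("CREATE VIEW v_total AS SELECT SUM(amount) AS total FROM sales")

# Stand-in for a materialized view (an assumption: SQLite lacks them):
# the query result is copied into a real table at creation time.
conn.execute("CREATE TABLE mv_total AS SELECT SUM(amount) AS total FROM sales")

# New data arrives after both objects were created.
conn.execute("INSERT INTO sales VALUES ('east', 5)")

view_total = conn.execute("SELECT total FROM v_total").fetchone()[0]
snapshot_total = conn.execute("SELECT total FROM mv_total").fetchone()[0]
print(view_total)      # 35: the view re-executed its query
print(snapshot_total)  # 30: the stored result is stale until refreshed


def refresh_snapshot(connection: sqlite3.Connection) -> None:
    """Recompute the stored result, mimicking a materialized-view refresh."""
    connection.execute("DELETE FROM mv_total")
    connection.execute("INSERT INTO mv_total SELECT SUM(amount) FROM sales")
```

Calling `refresh_snapshot(conn)` brings the stored total back in line with the base table. The trade-off in this sketch is that the view is always current but pays the query cost on every read, while the snapshot is cheap to read but can be stale.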

MASTER's THESIS Role of Metadata in the Datawarehousing Environment

2006:24 MASTER'S THESIS. Role of Metadata in the Datawarehousing Environment. Kranthi Kumar Parankusham, Ravinder Reddy Madupu. Luleå University of Technology, Master Thesis, Continuation Courses, Computer and Systems Science, Department of Business Administration and Social Sciences, Division of Information Systems Sciences. 2006:24 - ISSN: 1653-0187 - ISRN: LTU-PB-EX--06/24--SE

Preface

This study was performed as part of the master’s programme in computer and system sciences during 2005-06. It has been a very useful and valuable experience, and we have learned a lot during the study, not only about the topic at hand but also about managing the work in the specified time. However, this workload would not have been manageable if we had not received help and support from a number of people whom we would like to mention. First of all, we would like to thank our professor Svante Edzen for his help and supervision during the writing of the thesis. We would also like to express our gratitude to all the employees who allocated their valuable time to share their professional experience. On a personal level, Kranthi would like to thank all his family for their help, and especially his friends Kiran, Chenna Reddy, and Deepak Kumar. Ravi would like to give the greatest of thanks to his family for always being there when needed and for constantly taking such good care of him, and also thanks all his friends for being close to him.

Luleå University of Technology, 31 January 2006. Kranthi Kumar Parankusham, Ravinder Reddy Madupu

Abstract

In order for a well functioning data warehouse to succeed many components must work together.

Kimball Vs. Inmon

© 2011 - Andy Hogg

Kimball vs. Inmon

"Neither are any wars so furious and bloody, or of so long continuance as those occasioned by difference in opinion, especially if it be in things indifferent." (Swift, 1726)

In the world of the data warehouse (DW) there are two dominant and opposing dogmas. Zealots of both extol the virtues of their chosen doctrine with religious fervour, whilst decrying the beliefs of the other. These doctrines have existed for years, and in that time innumerable DWs have been built upon the principles of William Inmon. Likewise, an incalculable number have been built upon the ideas of Ralph Kimball.

Inmon’s Corporate Information Factory (CIF) is a top-down approach. Since the whole DW is built in advance of usage, it requires significant time to deliver value. It therefore requires unwavering sponsorship from a senior figure within the organisation, possessing long-term vision of the DW’s value.

Commentators contrast Kimball’s Bus Architecture (BA) as a bottom-up approach, where the data marts (DMs) are built first and unified into a DW at the end of the process. Inmon (n.d.a) ridicules this: “…in bottom up data warehouse development first one data mart is developed, then another data mart is developed, then one day - presto - you magically and effortlessly wake up and have a data warehouse”. Kimball (2003) dislikes the bottom-up description: “Bottom-up is typically viewed as quick and dirty – focused on the needs of a single department rather than the enterprise”. He maintains the BA is a holistic view of the enterprise, with a final overall structure planned from the outset.

Educational Open Government Data: from Requirements to End Users

Educational Open Government Data: from requirements to end users

Rudolf Eckelberg, Vytor Bezerra Calixto, Marina Hoshiba Pimentel, Marcos Didonet Del Fabro, Marcos Sunyé, Leticia M. Peres, Eduardo Todt, Thiago Alves, Adriana Dragone, and Gabriela Schneider. C3SL and NuPE Labs, Federal University of Paraná, Curitiba, Brazil. {rce16,vsbc14,marina,marcos.ddf,sunye,lmperes,todt}@inf.ufpr.br, [email protected],[email protected],[email protected]

Abstract. The large availability of open government data raises enormous opportunities for open big data analytics. However, providing an end-to-end framework able to handle tasks from data extraction and processing to a web interface involves many challenges. One critical factor is the existence of many players with different knowledge who need to interact, such as application domain experts, database designers, and web developers. This represents a knowledge gap that is difficult to overcome. In this paper, we present a case study for big data analytics over Brazilian educational data, with more than 1 billion records. We show how we organized the data analytics phase, starting from the analytics requirements, data evolution, development and deployment in a public interface.

Keywords: Open Government Data; Analytics API; data evolution

1 Introduction

The large availability of Open Governmental Data raises enormous opportunities for open big data analytics. Opportunities are always followed by challenges, and when one handles Big Data, difficulties lie in data capture, storage, searching, sharing, analysis, and visualization [2]. Providing useful Open Data would need a complete team, from the domain expert up to the web developer, so often it cannot be handled only by a data scientist, for example.

Lecture @Dhbw: Data Warehouse Part I: Introduction to Dwh and Dwh Architecture Andreas Buckenhofer, Daimler Tss About Me

A company of Daimler AG LECTURE @DHBW: DATA WAREHOUSE PART I: INTRODUCTION TO DWH AND DWH ARCHITECTURE ANDREAS BUCKENHOFER, DAIMLER TSS ABOUT ME Andreas Buckenhofer https://de.linkedin.com/in/buckenhofer Senior DB Professional [email protected] https://twitter.com/ABuckenhofer https://www.doag.org/de/themen/datenbank/in-memory/ Since 2009 at Daimler TSS Department: Big Data http://wwwlehre.dhbw-stuttgart.de/~buckenhofer/ Business Unit: Analytics https://www.xing.com/profile/Andreas_Buckenhofer2 NOT JUST AVERAGE: OUTSTANDING. As a 100% Daimler subsidiary, we give 100 percent, always and never less. We love IT and pull out all the stops to aid Daimler's development with our expertise on its journey into the future. Our objective: We make Daimler the most innovative and digital mobility company. Daimler TSS INTERNAL IT PARTNER FOR DAIMLER + Holistic solutions according to the Daimler guidelines + IT strategy + Security + Architecture + Developing and securing know-how + TSS is a partner who can be trusted with sensitive data As subsidiary: maximum added value for Daimler + Market closeness + Independence + Flexibility (short decision making process, ability to react quickly) Daimler TSS 4 LOCATIONS Daimler TSS Germany 7 locations 1000 employees* Ulm (Headquarters) Daimler TSS China Stuttgart Hub Beijing 10 employees Berlin Karlsruhe Daimler TSS Malaysia Hub Kuala Lumpur 42 employees Daimler TSS India * as of August 2017 Hub Bangalore 22 employees Daimler TSS Data Warehouse / DHBW 5 DWH, BIG DATA, DATA MINING This lecture is -

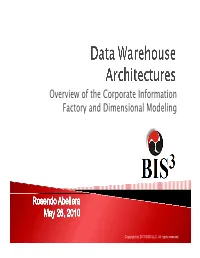

Overview of the Corporate Information Factory and Dimensional Modeling

Overview of the Corporate Information Factory and Dimensional Modeling. Copyright (c) 2010 BIS3 LLC. All rights reserved.

Data Warehouse Architect: Rosendo Abellera

- President, BIS3
  - Nearly 2 decades software and system development
  - 12 years in DW and BI space
  - 25+ years of data and intelligence/analytics

Other Notable Data Projects:

- Accenture
- Toshiba
- National Security Agency (NSA)
- US Air Force

A data warehouse is a repository of an organization's electronically stored data designed to facilitate reporting and analysis.

- Subject-oriented
- Non-volatile
- Integrated
- Time-variant

Reference: Wikipedia

Terms: 3rd Normal Form, Bill Inmon, Data Mart, Corporate Information Factory, Enterprise Data Warehouse, Dimensional Modeling, Hub and Spoke, Operational Data Store, Ralph Kimball, Slowly Changing Dimensions, Snowflake, Star Schema

Corporate Information Factory:

1. Top down
2. Data normalized to 3rd Normal Form
3. Enterprise data warehouse spawns data marts

Dimensional Modeling:

1. Bottom up
2. Data denormalized to form star schema
3. Data marts conform to develop the enterprise data warehouse

Focus

- Single repository of enterprise data
- Framework for Decision Support Systems (DSS)

Specifics

- Create specific structures for distinct purpose
- Model data in 3rd Normal Form
- As a Hub and Spoke Approach, create data marts as subsets of data warehouse as needed

The Data Warehouse ETL Toolkit

P1: FCH/SPH P2: FCH/SPH QC: FCH/SPH T1: FCH WY046-FM WY046-Kimball-v4.cls August 18, 2004 13:42

The Data Warehouse ETL Toolkit: Practical Techniques for Extracting, Cleaning, Conforming, and Delivering Data. Ralph Kimball, Joe Caserta. Wiley Publishing, Inc.

Published by Wiley Publishing, Inc., 10475 Crosspoint Boulevard, Indianapolis, IN 46256, www.wiley.com. Copyright © 2004 by Wiley Publishing, Inc. All rights reserved. Published simultaneously in Canada. eISBN: 0-764-57923-1. Printed in the United States of America. 10 9 8 7 6 5 4 3 2 1

No part of this publication may be reproduced, stored in a retrieval system, or transmitted in any form or by any means, electronic, mechanical, photocopying, recording, scanning or otherwise, except as permitted under Sections 107 or 108 of the 1976 United States Copyright Act, without either the prior written permission of the Publisher, or authorization through payment of the appropriate per-copy fee to the Copyright Clearance Center, 222 Rosewood Drive, Danvers, MA 01923, (978) 750-8400, fax (978) 646-8600. Requests to the Publisher for permission should be addressed to the Legal Department, Wiley Publishing, Inc., 10475 Crosspoint Blvd., Indianapolis, IN 46256, (317) 572-3447, fax (317) 572-4355, e-mail: [email protected].

Raquel Gouveia Ribeiro Sistemas De Bases De Dados Orientados Por Colunas

School of Engineering, Department of Informatics. Raquel Gouveia Ribeiro. Sistemas de Bases de Dados Orientados por Colunas (Column-Oriented Database Systems). Master's dissertation, Master's in Informatics Engineering. Work carried out under the supervision of Professor Orlando Manuel de Oliveira Belo. October 2013.

To my family.

Acknowledgements

First of all, I thank my supervisor, Professor Orlando Belo, for all the collaboration and dedication he offered me in carrying out this dissertation. He was the person who always taught me to take the next step and who made me grow. Thank you very much for the patience shown on various matters and for his advice, to which I often turned, given his vast experience and my respect for his work and person. I would also like to thank my family for all their dedication and support, and for all the moments in which they made me see reality, whether it was harsh or not; for all the times they provided me with the necessary working conditions, and for all the encouragement and the words that made me believe. It is above all thanks to them that I faced this phase of my life always confident that I was capable, and with the motto that one should look ahead and never back. Thank you very much!

Abstract

Column-Oriented Database Systems. In recent times, column-oriented database systems have attracted a lot of attention, both from database researchers and modelers and from professionals in the field, with particular interest in aspects related to architectures, system performance and scalability, or in decision support applications, namely in Data Warehousing and Business Intelligence.

Dimensional Modeling: Kimball Fundamentals & Advanced

Dimensional Modeling: Kimball Fundamentals & Advanced Techniques

Why Attend

Excellence in dimensional modeling remains the keystone of a well-designed data warehouse/business intelligence system, regardless of whether you’ve adopted a Kimball, Corporate Information Factory, or hybrid architecture. The Data Warehouse Toolkit established an extensive portfolio of dimensional techniques and vocabulary, including conformed dimensions, slowly changing dimensions, junk dimensions, mini-dimensions, bridge tables, periodic and accumulating snapshot fact tables, and the list goes on. In this course, you will learn practical dimensional modeling techniques covering fundamental to advanced patterns and best practices. Concepts are illustrated through real-world industry scenarios, conveyed via a combination of lectures, class exercises, small group workshops, and individual problem solving. Bringing DecisionWorks onsite enables everyone on the team to get on the same page with a common vocabulary and understanding of core techniques. The result is more effective and efficient education with lower travel cost and lost productivity, plus less downstream “tire spinning” within the team.

Who Should Attend

This course is primarily intended for DW/BI team members who’ve had prior exposure to dimensional modeling. The first day is appropriate for anyone on the team, including project managers, data warehouse architects, data modelers, database administrators, business analysts, and ETL or BI application developers and

Rethinking EDW in the Era of Expansive Information Management

3/14/2011. Rethinking EDW In The Era Of Expansive Information Management. Ralph Kimball, Informatica, March 2011

Trends Driving the Expansion Of The EDW in 2011

- The big business trends, big data, big expectations
- Management appreciates role of information assets: drive revenue growth, conversion rate, cost reduction, asset efficiency (doing more with less)
- Competitors are monetizing opportunities, management is noticing!
- Social media analysis, especially sentiment analysis, becoming mainstream
- The EDW has become operational: real time data drives intervention, feature development, strategic planning
- Irresistible push to zero latency data delivery
- Delivery modes have exploded: BI everywhere (smart phones, tablets, PCs at work, on the airplane, at home)
- Delivery to operational/control user interfaces, portals, alerts, tweets

The EDW Must Change

- Importance and critical scrutiny of the EDW is growing as organizations monetize the decisions made with their data
- The size and types of the data being fed to the EDW is exploding; much new “big data” is not suited for relational processing
- EDW must adapt to different architectural roles: source or target, centralized or distributed, batch or real-time
- Many new unfamiliar technologies are required to exploit the data and stay competitive; need to shift the mindset to design for the unknown
- IT management is faced with an unprecedented array of choices and not much time to evaluate

Increased Scope of the EDW

- No longer a library for simple

The Technology of Using a Data Warehouse to Support Decision -Making in Health Care

International Journal of Database Management Systems (IJDMS) Vol.5, No.3, June 2013

THE TECHNOLOGY OF USING A DATA WAREHOUSE TO SUPPORT DECISION-MAKING IN HEALTH CARE

Dr. Osama E. Sheta and Ahmed Nour Eldeen, Department of Mathematics (Computer Science), Faculty of Science, Zagazig University, Zagazig, Elsharkia, Egypt. oesheta75@gmail.com, [email protected]

ABSTRACT: This paper describes data warehouse technology for healthcare decision-making and the tools that support it, as applied to cancer diseases. Healthcare executive managers and doctors need information about, and insight into, the existing health data so that they can make decisions more efficiently without interrupting the daily work of an On-Line Transaction Processing (OLTP) system. This is a complex problem in the healthcare decision-making process. To solve this problem, building a healthcare data warehouse seems to be efficient. First, we explain the concepts of the data warehouse and On-Line Analysis Processing (OLAP). We then show how the data in the data warehouse is changed into a multidimensional data cube. Finally, an application example is given to illustrate the use of the healthcare data warehouse specific to cancer diseases developed in this study. The executive managers and doctors can view data from more than one perspective with reduced query time, thus making decisions faster and more comprehensively.

KEYWORDS: Healthcare data warehouse, Extract-Transformation-Load (ETL), Cancer data warehouse, On-Line Analysis Processing (OLAP), Multidimensional Expression (MDX) and Health Insurance Organization (HIO).

1. INTRODUCTION

Managing data in healthcare organizations has become a challenge as a result of healthcare managers having considerable differences in objectives, concerns, priorities and constraints.