Mining Development Knowledge to Understand and Sup- Port Software Logging Practices

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Tracking Known Security Vulnerabilities in Third-Party Components

Tracking known security vulnerabilities in third-party components Master’s Thesis Mircea Cadariu Tracking known security vulnerabilities in third-party components THESIS submitted in partial fulfillment of the requirements for the degree of MASTER OF SCIENCE in COMPUTER SCIENCE by Mircea Cadariu born in Brasov, Romania Software Engineering Research Group Software Improvement Group Department of Software Technology Rembrandt Tower, 15th floor Faculty EEMCS, Delft University of Technology Amstelplein 1 - 1096HA Delft, the Netherlands Amsterdam, the Netherlands www.ewi.tudelft.nl www.sig.eu c 2014 Mircea Cadariu. All rights reserved. Tracking known security vulnerabilities in third-party components Author: Mircea Cadariu Student id: 4252373 Email: [email protected] Abstract Known security vulnerabilities are introduced in software systems as a result of de- pending on third-party components. These documented software weaknesses are hiding in plain sight and represent the lowest hanging fruit for attackers. Despite the risk they introduce for software systems, it has been shown that developers consistently download vulnerable components from public repositories. We show that these downloads indeed find their way in many industrial and open-source software systems. In order to improve the status quo, we introduce the Vulnerability Alert Service, a tool-based process to track known vulnerabilities in software projects throughout the development process. Its usefulness has been empirically validated in the context of the external software product quality monitoring service offered by the Software Improvement Group, a software consultancy company based in Amsterdam, the Netherlands. Thesis Committee: Chair: Prof. Dr. A. van Deursen, Faculty EEMCS, TU Delft University supervisor: Prof. Dr. A. -

Vimal Daga Chief Technical Officer (CTO) – Linuxworld Informatics Pvt Ltd Professional Experience & Certifications

Vimal Daga Chief Technical Officer (CTO) – LinuxWorld Informatics Pvt Ltd Professional Experience & Certifications: I Professional Experience During this period, has been engaged with various corporate clients on different domains and has been involved in imparting corporate Training programs and Consultancy for various technologies that covers the following: A. Sr. Machine Learning / Deep Learning / Data Scientist / NLP Consultant and Researcher Expertise in the field of Artificial Intelligence, Deep Learning, and Computer Vision and having ability to solve problems such as Face Detection, Face Recognition and Object Detection using Deep Neural Network (CNN, DNN, RNN, Convolution Networks etc.) and Optical Character Detection and Recognition (OCD & OCR) Worked in tools such as Tensorflow, Caffe/Caffe2, Keras, Theano, PyTorch etc. Build prototypes related to deep learning problems in the field of computer vision. Publications at top international conferences/ journals in fields related to computer vision/deep learning/machine learning / AI Experience on tools, frameworks like Microsoft Azure ML, Chat Bot Framework/LUIS . IBM Watson / ConversationService, Google TensorFlow / Python for Machine Learning (e.g. scikit-learn),Open source ML libraries and tools like Apache Spark Highly Worked on Data Science, Big Data,datastructures, statistics , algorithms like Regression, Classification etc. Working knowlegde of Supervised / Unsuperivsed learning (Decision Trees, Logistic Regression, SVMs,GBM, etc) Expertise in Sentiment Analysis, Entity Extraction, Natural Language Understanding (NLU), Intent recognition Strong understanding of text pre-processing and normalization techniques, such as tokenization, POS tagging, and parsing, and how they work at a basic level and NLP toolkits as NLTK, Gensim,, Apac SpaCyhe UIMA etc. I have Hands on experience related to Datasets such as or including text, images and other logs or clickstreams. -

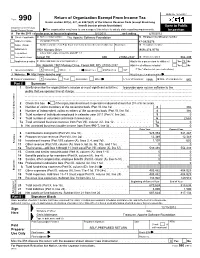

Return of Organization Exempt from Income

OMB No. 1545-0047 Return of Organization Exempt From Income Tax Form 990 Under section 501(c), 527, or 4947(a)(1) of the Internal Revenue Code (except black lung benefit trust or private foundation) Open to Public Department of the Treasury Internal Revenue Service The organization may have to use a copy of this return to satisfy state reporting requirements. Inspection A For the 2011 calendar year, or tax year beginning 5/1/2011 , and ending 4/30/2012 B Check if applicable: C Name of organization The Apache Software Foundation D Employer identification number Address change Doing Business As 47-0825376 Name change Number and street (or P.O. box if mail is not delivered to street address) Room/suite E Telephone number Initial return 1901 Munsey Drive (909) 374-9776 Terminated City or town, state or country, and ZIP + 4 Amended return Forest Hill MD 21050-2747 G Gross receipts $ 554,439 Application pending F Name and address of principal officer: H(a) Is this a group return for affiliates? Yes X No Jim Jagielski 1901 Munsey Drive, Forest Hill, MD 21050-2747 H(b) Are all affiliates included? Yes No I Tax-exempt status: X 501(c)(3) 501(c) ( ) (insert no.) 4947(a)(1) or 527 If "No," attach a list. (see instructions) J Website: http://www.apache.org/ H(c) Group exemption number K Form of organization: X Corporation Trust Association Other L Year of formation: 1999 M State of legal domicile: MD Part I Summary 1 Briefly describe the organization's mission or most significant activities: to provide open source software to the public that we sponsor free of charge 2 Check this box if the organization discontinued its operations or disposed of more than 25% of its net assets. -

PROJECT REPORT IT2901 - Informatics Project II

PROJECT REPORT IT2901 - Informatics Project II Group 02 - FFI Publish/Subscribe Written by: Fredrik Christoffer Berg Kristoffer Andreas Breiland Dalby Hakon˚ Ødegard˚ Løvdal Aleksander Skraastad Fredrik Borgen Tørnvall Trond Walleraunet Spring 2015 Norwegian University of Science and Technology Page intentionally left blank. We would like to extend our gratitude to Frank Trethan Johnsen and Trude Hafsøe Bloebaum from the Norwegian Defence Research Establishment for their excellent collaboration throughout the lifetime of this project. We would also like to thank our supervisor Alfredo Perez Fernandez, for his excellent feedback and help on the process and report parts of the project. At last we would like to thank Marianne Valstad for proofreading and giving feedback on the report. Abstract This report describes the work done during the course IT2901 - Informatics Project II. Our customer was the Norwegian Defence Research Establishment, which is a governmental organization responsible for research and development for the Norwegian Armed Forces. The assignment was to make an application that translates between different publish/sub- scribe protocols used in the Norwegian Armed Forces and the North Atlantic Treaty Or- ganisation. The relevant protocols were the Web Services Notification protocol, Advanced Messaging Queuing Protocol, Message Queue Telemetry Transport and ZeroMQ. FFI and The Norwegian Armed Forces needed such a broker in order to participate in federated mission networking. This report mainly focuses on the development process. -

BUILDING RESILIENT MICROSERVICES with APACHE QPID PROTON

BUILDING RESILIENT MICROSERVICES with APACHE QPID PROTON Richard Li Rafael Schloming datawire.io • MICROSERVICES • DESIGNING MICROSERVICES • DEMO • WRAP UP • Release any time • You’re responsible for reliability, availability, scalability, security • You’re also responsible for monitoring, billing, user admin, … Idiot proof deploy Homogenous tech stack Minimize upgrade Synchronized release frequency ACID; 1 simultaneous Easy for vendor to debug release Ship as fast as possible Continuous delivery Lots of functional Design/build in parts breadth Reliability, availability, Resilient system design security, scale Continuous delivery Design/build in parts Microservices. Resilient system design Componentization via Services Organized around Business Capabilities Products not Projects Smart endpoints and dumb pipes Decentralized Governance Decentralized Data Management Infrastructure Automation Design for failure Evolutionary Design http://martinfowler.com/articles/microservices.html DESIGNING MICROSERVICES Monolith 1. Send a tweet. 2. Get followers. 3. Publish tweet. App server Three App Servers 1. Send a tweet. 2. Get followers. 3. Publish tweet. App server App Servers + Asynchronous Queue 1. Send a tweet. 4. Get followers. 3. Process new tweets. 2. Queue tweet for sending. 5. Publish tweet. Not a typical app server App Servers + Asynchronous Queue 4. Get followers. Recommend followers 1. Send a tweet. 3. Process new tweets. 2. Queue tweet for sending. 5. Publish tweet. Not a typical app server Fully Asynchronous Publish changes to followers -

Full-Graph-Limited-Mvn-Deps.Pdf

org.jboss.cl.jboss-cl-2.0.9.GA org.jboss.cl.jboss-cl-parent-2.2.1.GA org.jboss.cl.jboss-classloader-N/A org.jboss.cl.jboss-classloading-vfs-N/A org.jboss.cl.jboss-classloading-N/A org.primefaces.extensions.master-pom-1.0.0 org.sonatype.mercury.mercury-mp3-1.0-alpha-1 org.primefaces.themes.overcast-${primefaces.theme.version} org.primefaces.themes.dark-hive-${primefaces.theme.version}org.primefaces.themes.humanity-${primefaces.theme.version}org.primefaces.themes.le-frog-${primefaces.theme.version} org.primefaces.themes.south-street-${primefaces.theme.version}org.primefaces.themes.sunny-${primefaces.theme.version}org.primefaces.themes.hot-sneaks-${primefaces.theme.version}org.primefaces.themes.cupertino-${primefaces.theme.version} org.primefaces.themes.trontastic-${primefaces.theme.version}org.primefaces.themes.excite-bike-${primefaces.theme.version} org.apache.maven.mercury.mercury-external-N/A org.primefaces.themes.redmond-${primefaces.theme.version}org.primefaces.themes.afterwork-${primefaces.theme.version}org.primefaces.themes.glass-x-${primefaces.theme.version}org.primefaces.themes.home-${primefaces.theme.version} org.primefaces.themes.black-tie-${primefaces.theme.version}org.primefaces.themes.eggplant-${primefaces.theme.version} org.apache.maven.mercury.mercury-repo-remote-m2-N/Aorg.apache.maven.mercury.mercury-md-sat-N/A org.primefaces.themes.ui-lightness-${primefaces.theme.version}org.primefaces.themes.midnight-${primefaces.theme.version}org.primefaces.themes.mint-choc-${primefaces.theme.version}org.primefaces.themes.afternoon-${primefaces.theme.version}org.primefaces.themes.dot-luv-${primefaces.theme.version}org.primefaces.themes.smoothness-${primefaces.theme.version}org.primefaces.themes.swanky-purse-${primefaces.theme.version} -

Information Extraction from Semi-Structured Documents Msci

Kieran Brahney Information Extraction from Semi-Structured Documents MSci. Computer Science with Industrial Experience 05/06/2015 SCC421: Information Extraction from Semi-Structured Documents I certify that the material contained in this dissertation is my own work and does not contain unreferenced or unacknowledged material. I also warrant that the above statement applies to the implementation of the project and all associated documentation. Regarding the electronically submitted version of this submitted work, I consent to this being stored electronically and copied for assessment purposes, including the Department’s use of plagiarism detection systems in order to check the integrity of assessed work. I agree to my dissertation being placed in the public domain, with my name explicitly included as the author of the work. Date: _______________ Signed: _______________ Kieran Brahney (32857004) Page 2 of 55 SCC421: Information Extraction from Semi-Structured Documents ABSTRACT. Every hazardous chemical material is required to be accompanied by Safety Data Sheets (SDS). Employing a semi-structured format, they offer information and advice with regard to the safe handling and use of the product. In an effort to separate out specific data items, a variety of information extraction techniques were studied to utilise their best features and apply them to the SDS. An extensible and scalable Java-based system was designed, implemented and tested. The system supports information extraction using a user-provided lexical specification containing a series of regular expressions. The accuracy of the system was evaluated for each XML element on 40 ‘unseen’ safety data sheets. On structured textual elements, for example phrase codes, the system was able to reach accuracies between 85 – 100%. -

A Survey of Distributed Message Broker Queues

A Survey of Distributed Message Broker Queues Vineet John Xia Liu University of Waterloo University of Waterloo [email protected] [email protected] ABSTRACT This paper surveys the message brokers that are in vogue today for distributed communication. Their primary goal is to facilitate the construction of decentralized topolo- gies without single points of failure, enabling fault tol- erance and high availability. These characteristics make them optimal for usage within distributed architectures. However, there are multiple protocols built to achieve this, and it would be beneficial to have a empirical comparison between their features and performance to determine their real-world applicability. Figure 1: Kafka Architecture This paper focuses on two popular protocols (Kafka and AMQP) and explores the divergence in their fea- RQ0 What are the message broker implementations tures as well as their performance under varied testing commonly in use today? workloads. RQ1 What are the common requirements for the im- plementation of message queues? KEYWORDS RQ2 What are the divergent functionalities in the distributed message broker, message queue, kafka, amqp, current message queue offerings? rabbitmq RQ3 How do each of the implementations offer relia- bility, partitioning and fault tolerance? 1 INTRODUCTION 3 KAFKA Kafka was developed at LinkedIn and primarily used Distributed Message Brokers are typically used to decou- for log processing. This worked well for Kafka’s user en- ple separate stages of a software architecture. They per- gagement metrics collection use-case. The fundamental mit communication between these stages asynchronously, features behind Kafka are performance over reliability by using the publish-subscribe paradigm.[1]. These mes- and it offers high throughput, low latency message queu- sage brokers are also finding new applications in the ing. -

How We Use Open Source at Salesforce.Com – Part 1

How We Use Open Source at Salesforce.com – Part 1 By Ian Varley | Published: July 18, 2014 (Series complete July 29, 2014) While many may not realize the importance of open source at salesforce.com, it plays a critical role here. These days, developers at salesforce.com spend all day working in open-source software, but this wasn’t always the case. In the early years of the company, the standard-issue workstation ran Windows. But a few developers noticed that building the Salesforce application went much faster on Ubuntu Linux, using the same exact hardware — cutting the build time from hours to minutes. They started passing around a CD, and before anyone knew what had happened, the change went viral and nearly everyone in engineering had switched to Linux. (Thankfully, our awesome corporate IT team soon followed suit and started issuing new machines with a supported version of Linux for new hires.) On top of the Linux OS, the entire development stack also runs on open source. The Salesforce platform is primarily written in Java and runs on Sun’s JDK (which is open source). Most developers at salesforce.com write, compile, debug, and test the application in Eclipse, an open-source programming editor (maintained by the aptly named Eclipse Foundation). Many projects use Git as their source version control, although the central repository is Perforce because of its size. Like most companies, we use many of the “usual suspect” Java open-source libraries during development: Guava, Gin, and Guice (open sourced by Google); Apache Commons (from the Apache Software Foundation); Jackson (killer JSON parsing library written and maintained by Salesforce engineer Tatu Saloranta); among hundreds of others. -

Message-Oriented Middleware As a Queue Management Solution to Improve Job Handling Within an E- Commerce System

DEGREE PROJECT IN INFORMATION AND COMMUNICATION TECHNOLOGY, SECOND CYCLE, 30 CREDITS STOCKHOLM, SWEDEN 2018 Message-Oriented Middleware as a Queue Management Solution to Improve Job Handling within an E- Commerce System TOBIAS JOHANSSON KTH ROYAL INSTITUTE OF TECHNOLOGY SCHOOL OF ELECTRICAL ENGINEERING AND COMPUTER SCIENCE CONTENTS 1 Abstract Today’s applications are required to continuously adapt and adjust, to be able to meet a constant change in demand. As result of an in- creasing amount of data, choosing the right communication method becomes a vital step. A solution that have been functional for a long time, may at any point in time be unable to reach the level it requires and instead turns into bottlenecks and inefficient solutions. Using a database as a communication method between system enti- ties, does not have to be a bad solution. A database has it perks with being a simple solution and efficient query operations. However, us- ing it as a queue management system, requires entities to continuously poll new table entries. This solution may not be the most suitable nor best available option. There exists communication system developed for the specific purpose of efficiently distributing messages to avail- able parties. Implementing a message-oriented middleware enables for asynchronous communication which promotes applications to be more loosely cou- pled. As a result, available resources could be better utilised and im- prove the system performance. This degree project investigates the development and integration of two message-oriented middlewares, RabbitMQ and AcviteMQ, within an e-commerce system. The pur- pose is to explore the potentials of changing queue management sys- tem from a database to a message broker. -

Code Smell Prediction Employing Machine Learning Meets Emerging Java Language Constructs"

Appendix to the paper "Code smell prediction employing machine learning meets emerging Java language constructs" Hanna Grodzicka, Michał Kawa, Zofia Łakomiak, Arkadiusz Ziobrowski, Lech Madeyski (B) The Appendix includes two tables containing the dataset used in the paper "Code smell prediction employing machine learning meets emerging Java lan- guage constructs". The first table contains information about 792 projects selected for R package reproducer [Madeyski and Kitchenham(2019)]. Projects were the base dataset for cre- ating the dataset used in the study (Table I). The second table contains information about 281 projects filtered by Java version from build tool Maven (Table II) which were directly used in the paper. TABLE I: Base projects used to create the new dataset # Orgasation Project name GitHub link Commit hash Build tool Java version 1 adobe aem-core-wcm- www.github.com/adobe/ 1d1f1d70844c9e07cd694f028e87f85d926aba94 other or lack of unknown components aem-core-wcm-components 2 adobe S3Mock www.github.com/adobe/ 5aa299c2b6d0f0fd00f8d03fda560502270afb82 MAVEN 8 S3Mock 3 alexa alexa-skills- www.github.com/alexa/ bf1e9ccc50d1f3f8408f887f70197ee288fd4bd9 MAVEN 8 kit-sdk-for- alexa-skills-kit-sdk- java for-java 4 alibaba ARouter www.github.com/alibaba/ 93b328569bbdbf75e4aa87f0ecf48c69600591b2 GRADLE unknown ARouter 5 alibaba atlas www.github.com/alibaba/ e8c7b3f1ff14b2a1df64321c6992b796cae7d732 GRADLE unknown atlas 6 alibaba canal www.github.com/alibaba/ 08167c95c767fd3c9879584c0230820a8476a7a7 MAVEN 7 canal 7 alibaba cobar www.github.com/alibaba/ -

Universal Messaging Concepts

Universal Messaging Concepts Version 10.5 October 2019 This document applies to Software AG Universal Messaging 10.5 and to all subsequent releases. Specifications contained herein are subject to change and these changes will be reported in subsequent release notes or new editions. Copyright © 2013-2021 Software AG, Darmstadt, Germany and/or Software AG USA, Inc., Reston, VA, USA, and/or its subsidiaries and/or its affiliates and/or their licensors. The name Software AG and all Software AG product names are either trademarks or registered trademarks of Software AG and/or Software AG USA Inc. and/or its subsidiaries and/or its affiliates and/or their licensors. Other company and product names mentioned herein may be trademarks of their respective owners. Detailed information on trademarks and patents owned by Software AG and/or its subsidiaries is located at https://softwareag.com/licenses/. Use of this software is subject to adherence to Software AG's licensing conditions and terms. These terms are part of the product documentation, located at https://softwareag.com/licenses/ and/or in the root installation directory of the licensed product(s). This software may include portions of third-party products. For third-party copyright notices, license terms, additional rights or restrictions, please refer to "License Texts, Copyright Notices and Disclaimers of Third Party Products". For certain specific third-party license restrictions, please refer to section E of the Legal Notices available under "License Terms and Conditions for Use of Software AG Products / Copyright and Trademark Notices of Software AG Products". These documents are part of the product documentation, located at https://softwareag.com/licenses/ and/or in the root installation directory of the licensed product(s).