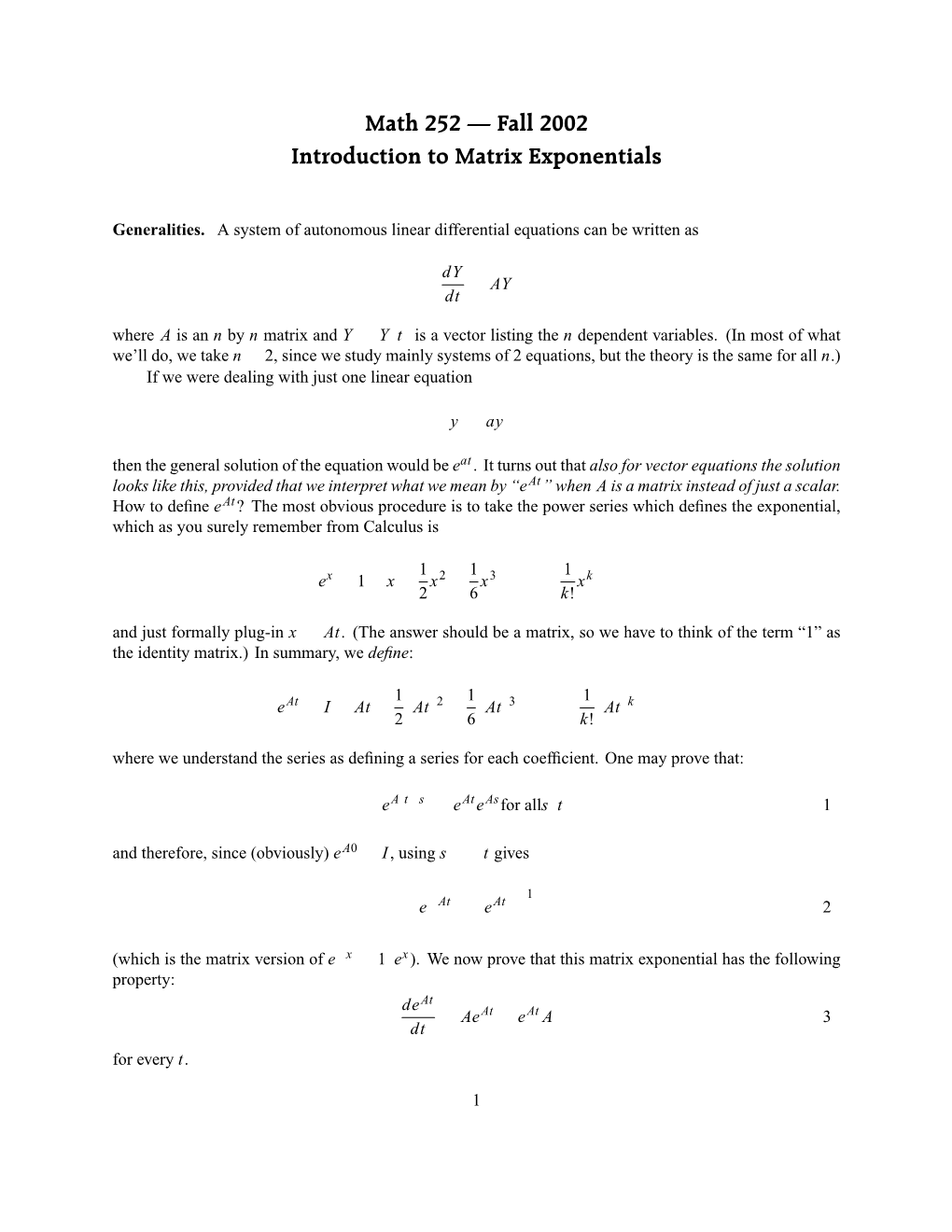

Introduction to Matrix Exponentials

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Quantum Information

Quantum Information, J. A. Jones, Michaelmas Term 2010. Contents: 1 Dirac Notation: 1.1 Hilbert Space; 1.2 Dirac notation; 1.3 Operators; 1.4 Vectors and matrices; 1.5 Eigenvalues and eigenvectors; 1.6 Hermitian operators; 1.7 Commutators; 1.8 Unitary operators; 1.9 Operator exponentials; 1.10 Physical systems; 1.11 Time-dependent Hamiltonians; 1.12 Global phases. 2 Quantum bits and quantum gates: 2.1 The Bloch sphere; 2.2 Density matrices; 2.3 Propagators and Pauli matrices; 2.4 Quantum logic gates; 2.5 Gate notation; 2.6 Quantum networks; 2.7 Initialization and measurement; 2.8 Experimental methods. 3 An atom in a laser field: 3.1 Time-dependent systems; 3.2 Sudden jumps; 3.3 Oscillating fields; 3.4 Time-dependent perturbation theory; 3.5 Rabi flopping and Fermi's Golden Rule; 3.6 Raman transitions; 3.7 Rabi flopping as a quantum gate; 3.8 Ramsey fringes; 3.9 Measurement and initialisation. 4 Spins in magnetic fields: 4.1 The nuclear spin Hamiltonian; 4.2 The rotating frame; 4.3 On-resonance excitation; 4.4 Excitation phases; 4.5 Off-resonance excitation; 4.6 Practicalities; 4.7 The vector model; 4.8 Spin echoes; 4.9 Measurement and initialisation. 5 Photon techniques: 5.1 Spatial encoding

The Inverse Along a Lower Triangular Matrix∗

View metadata, citation and similar papers at core.ac.uk brought to you by CORE provided by Universidade do Minho: RepositoriUM. The inverse along a lower triangular matrix∗ Xavier Mary (a), Pedro Patrício (b). (a) Université Paris-Ouest Nanterre – La Défense, Laboratoire Modal'X, 200 avenue de la République, 92000 Nanterre, France. email: [email protected] (b) Departamento de Matemática e Aplicações, Universidade do Minho, 4710-057 Braga, Portugal. email: [email protected] Abstract In this paper, we investigate the recently defined notion of inverse along an element in the context of matrices over a ring. Precisely, we study the inverse of a matrix along a lower triangular matrix, under some conditions. Keywords: Generalized inverse, inverse along an element, Dedekind-finite ring, Green's relations, rings. AMS classification: 15A09, 16E50. 1 Introduction In this paper, R is a ring with identity. We say a is (von Neumann) regular in R if $a \in aRa$. A particular solution to $axa = a$ is denoted by $a^-$, and the set of all such solutions is denoted by $a\{1\}$. Given $a^-, a^= \in a\{1\}$, then $x = a^= a a^-$ satisfies $axa = a$ and $xax = x$ simultaneously. Such a solution is called a reflexive inverse, and is denoted by $a^+$. The set of all reflexive inverses of a is denoted by $a\{1, 2\}$. Finally, a is group invertible if there is $a^\# \in a\{1, 2\}$ that commutes with a, and a is Drazin invertible if $a^k$ is group invertible, for some non-negative integer k. This is equivalent to the existence of $a^D \in R$ such that $a^{k+1} a^D = a^k$, $a^D a a^D = a^D$, $a a^D = a^D a$.

MATH 237 Differential Equations and Computer Methods

Queen's University, Mathematics and Engineering and Mathematics and Statistics. MATH 237 Differential Equations and Computer Methods, Supplemental Course Notes. Serdar Yüksel, November 19, 2010. This document is a collection of supplemental lecture notes used for Math 237: Differential Equations and Computer Methods. Contents: 1 Introduction to Differential Equations: 1.1 Introduction; 1.2 Classification of Differential Equations; 1.2.1 Ordinary Differential Equations; 1.2.2 Partial Differential Equations; 1.2.3 Homogeneous Differential Equations; 1.2.4 N-th order Differential Equations; 1.2.5 Linear Differential Equations; 1.3 Solutions of Differential equations; 1.4 Direction Fields; 1.5 Fundamental Questions on First-Order Differential Equations. 2 First-Order Ordinary Differential Equations: 2.1 Exact Differential Equations; 2.2 Method of Integrating Factors; 2.3 Separable Differential Equations; 2.4 Differential Equations with Homogeneous Coefficients; 2.5 First-Order Linear Differential Equations; 2.6 Applications. 3 Higher-Order Ordinary Linear Differential Equations: 3.1 Higher-Order Differential Equations; 3.1.1 Linear Independence; 3.1.2 Wronskian of a set of Solutions; 3.1.3 Non-Homogeneous Problem

LU Decompositions

LU Decompositions We seek a factorization of a square matrix A into the product of two matrices which yields an efficient method for solving the system $Ax = b$, where A is the coefficient matrix, x is our variable vector and b is a constant vector. The factorization of A into the product of two matrices is closely related to Gaussian elimination. Definition 1. A square matrix $A = [a_{ij}]$ is said to be lower triangular if $a_{ij} = 0$ for all $i < j$. 2. A square matrix is said to be unit lower triangular if it is lower triangular and each $a_{ii} = 1$. 3. A square matrix is said to be upper triangular if $a_{ij} = 0$ for all $i > j$. Examples 1. The following are all lower triangular matrices: […] 2. The following are all unit lower triangular matrices: […] 3. The following are all upper triangular matrices: […] We note that the identity matrix is the only square matrix that is both unit lower triangular and upper triangular. Example Let […]. For elementary matrices (see solv_lin_equ2.pdf) […], […], and […] we find that […]. Now, if […], then direct computation yields […] and […]. It follows that […] and, hence, that […] where L is unit lower triangular and U is upper triangular. That is, $A = LU$. Observe the key fact that the unit lower triangular matrix L "contains" the essential data of the three elementary matrices […], […], and […]. Definition We say that the matrix A has an LU decomposition if $A = LU$ where L is unit lower triangular and U is upper triangular. We also call the LU decomposition an LU factorization. Example 1. […] and so […] has an LU decomposition. 2. The matrix […] has more than one LU decomposition. Two such LU factorizations are […] and […].
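The elimination described in this excerpt can be sketched in a few lines, assuming that no row interchanges are needed (every pivot is non-zero). The names `lu_no_pivot` and `mat_mul` and the sample matrix `A` are illustrative choices and do not come from the source.

```python
def lu_no_pivot(a):
    """Return (L, U) with L unit lower triangular and U upper triangular, A = L U."""
    n = len(a)
    upper = [[float(x) for x in row] for row in a]  # working copy, becomes U
    lower = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for k in range(n):
        if upper[k][k] == 0.0:
            # Assumption of this sketch: no pivoting, so a zero pivot is an error.
            raise ZeroDivisionError("zero pivot: a row interchange would be needed")
        for i in range(k + 1, n):
            m = upper[i][k] / upper[k][k]  # multiplier of the elementary row operation
            lower[i][k] = m                # L stores the multipliers
            for j in range(k, n):
                upper[i][j] -= m * upper[k][j]
    return lower, upper


def mat_mul(a, b):
    """Plain matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


A = [[2, 1, 1], [4, 3, 3], [8, 7, 9]]
L, U = lu_no_pivot(A)
```

Multiplying the factors back with `mat_mul(L, U)` reproduces `A`, which is a quick way to check a factorization by hand.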

The Exponential of a Matrix

5-28-2012 The Exponential of a Matrix The solution to the exponential growth equation $\frac{dx}{dt} = kx$ is given by $x = c_0 e^{kt}$. It is natural to ask whether you can solve a constant coefficient linear system $\vec{x}\,' = A\vec{x}$ in a similar way. If a solution to the system is to have the same form as the growth equation solution, it should look like $\vec{x} = e^{At}\vec{x}_0$. The first thing I need to do is to make sense of the matrix exponential $e^{At}$. The Taylor series for $e^z$ is $$e^z = \sum_{n=0}^{\infty} \frac{z^n}{n!}.$$ It converges absolutely for all z. If A is an n × n matrix with real entries, define $$e^{At} = \sum_{n=0}^{\infty} \frac{t^n A^n}{n!}.$$ The powers $A^n$ make sense, since A is a square matrix. It is possible to show that this series converges for all t and every matrix A. Differentiating the series term-by-term, $$\frac{d}{dt} e^{At} = \sum_{n=0}^{\infty} n\,\frac{t^{n-1} A^n}{n!} = \sum_{n=1}^{\infty} \frac{t^{n-1} A^n}{(n-1)!} = A \sum_{n=1}^{\infty} \frac{t^{n-1} A^{n-1}}{(n-1)!} = A \sum_{m=0}^{\infty} \frac{t^m A^m}{m!} = A e^{At}.$$ This shows that $e^{At}$ solves the differential equation $\vec{x}\,' = A\vec{x}$. The initial condition vector $\vec{x}(0) = \vec{x}_0$ yields the particular solution $\vec{x} = e^{At}\vec{x}_0$. This works, because $e^{0 \cdot A} = I$ (by setting t = 0 in the power series). Another familiar property of ordinary exponentials holds for the matrix exponential: If A and B commute (that is, AB = BA), then $e^A e^B = e^{A+B}$.
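The series definition in this excerpt can be tried numerically. The following is a minimal sketch that sums the first 30 terms of the power series; the function name `expm_series`, the truncation length, and the test matrices are assumptions made for illustration. Truncating the series is fine for small matrices of modest norm, but it is not how production libraries compute matrix exponentials.

```python
import math


def mat_mul(a, b):
    """Plain matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def expm_series(a, t=1.0, terms=30):
    """Approximate e^{At} by the partial sum of t^n A^n / n! for n < terms."""
    n = len(a)
    result = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]  # holds t^k A^k / k!, starting at k = 0
    for k in range(1, terms):
        term = [[x * t / k for x in row] for row in mat_mul(term, a)]
        result = [[r + s for r, s in zip(rr, tr)] for rr, tr in zip(result, term)]
    return result


# J squares to -I, so e^{Jt} = cos(t) I + sin(t) J, a rotation matrix.
J = [[0.0, 1.0], [-1.0, 0.0]]
E = expm_series(J, t=1.0)

# Diagonal matrices commute, so e^{D1} e^{D2} should match e^{D1 + D2}.
D1 = [[1.0, 0.0], [0.0, 2.0]]
D2 = [[3.0, 0.0], [0.0, -1.0]]
lhs = mat_mul(expm_series(D1), expm_series(D2))
rhs = expm_series([[4.0, 0.0], [0.0, 1.0]])
```

Here `E` should agree with the rotation matrix with entries cos 1 and ±sin 1, and `lhs` should agree with `rhs` up to rounding, illustrating the commuting-matrices property.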

Handout 9 More Matrix Properties; the Transpose

Handout 9 More matrix properties; the transpose. Square matrix properties: These properties only apply to a square matrix, i.e. n × n. • The leading diagonal is the diagonal line consisting of the entries $a_{11}, a_{22}, a_{33}, \ldots, a_{nn}$. • A diagonal matrix has zeros everywhere except the leading diagonal. • The identity matrix I has zeros off the leading diagonal, and 1 for each entry on the diagonal. It is a special case of a diagonal matrix, and AI = IA = A for any n × n matrix A. • An upper triangular matrix has all its non-zero entries on or above the leading diagonal. • A lower triangular matrix has all its non-zero entries on or below the leading diagonal. • A symmetric matrix has the same entries below and above the diagonal: $a_{ij} = a_{ji}$ for any values of i and j between 1 and n. • An antisymmetric or skew-symmetric matrix has the opposite entries below and above the diagonal: $a_{ij} = -a_{ji}$ for any values of i and j between 1 and n. This automatically means the diagonal entries must all be zero. Transpose: To transpose a matrix, we reflect it across the line given by the leading diagonal $a_{11}$, $a_{22}$ etc. In general the result is a different shape to the original matrix: $$A = \begin{pmatrix} a_{11} & a_{12} & a_{13} \\ a_{21} & a_{22} & a_{23} \end{pmatrix}, \qquad A^{\top} = \begin{pmatrix} a_{11} & a_{21} \\ a_{12} & a_{22} \\ a_{13} & a_{23} \end{pmatrix}, \qquad [A^{\top}]_{ij} = A_{ji}.$$ • If A is m × n then $A^{\top}$ is n × m. • The transpose of a symmetric matrix is itself: $A^{\top} = A$ (recalling that only square matrices can be symmetric).

Triangular Factorization

Chapter 1 Triangular Factorization This chapter deals with the factorization of arbitrary matrices into products of triangular matrices. Since the solution of a linear n × n system can be easily obtained once the matrix is factored into the product of triangular matrices, we will concentrate on the factorization of square matrices. Specifically, we will show that an arbitrary n × n matrix A has the factorization $PA = LU$ where P is an n × n permutation matrix, L is an n × n unit lower triangular matrix, and U is an n × n upper triangular matrix. In connection with this factorization we will discuss pivoting, i.e., row interchange, strategies. We will also explore circumstances for which A may be factored in the forms $A = LU$ or $A = LL^T$. Our results for a square system will be given for a matrix with real elements but can easily be generalized for complex matrices. The corresponding results for a general m × n matrix will be accumulated in Section 1.4. In the general case an arbitrary m × n matrix A has the factorization $PA = LU$ where P is an m × m permutation matrix, L is an m × m unit lower triangular matrix, and U is an m × n matrix having row echelon structure. 1.1 Permutation matrices and Gauss transformations We begin by defining permutation matrices and examining the effect of premultiplying or postmultiplying a given matrix by such matrices. We then define Gauss transformations and show how they can be used to introduce zeros into a vector. Definition 1.1 An m × m permutation matrix is a matrix whose columns consist of a rearrangement of the m unit vectors $e^{(j)}$, j = 1, ..., m, in $\mathbb{R}^m$, i.e., a rearrangement of the columns (or rows) of the m × m identity matrix.

The Exponential Function for Matrices

The exponential function for matrices Matrix exponentials provide a concise way of describing the solutions to systems of homogeneous linear differential equations that parallels the use of ordinary exponentials to solve simple differential equations of the form $y' = \lambda y$. For square matrices the exponential function can be defined by the same sort of infinite series used in calculus courses, but some work is needed in order to justify the construction of such an infinite sum. Therefore we begin with some material needed to prove that certain infinite sums of matrices can be defined in a mathematically sound manner and have reasonable properties. Limits and infinite series of matrices Limits of vector valued sequences in $\mathbb{R}^n$ can be defined and manipulated much like limits of scalar valued sequences, the key adjustment being that distances between real numbers that are expressed in the form $|s - t|$ are replaced by distances between vectors expressed in the form $|x - y|$. Similarly, one can talk about convergence of a vector valued infinite series $\sum_{n=0}^{\infty} v_n$ in terms of the convergence of the sequence of partial sums $s_n = \sum_{k=0}^{n} v_k$. As in the case of ordinary infinite series, the best form of convergence is absolute convergence, which corresponds to the convergence of the real valued infinite series $\sum_{n=0}^{\infty} |v_n|$ with nonnegative terms. A fundamental theorem states that a vector valued infinite series converges if the auxiliary series $\sum_{n=0}^{\infty} |v_n|$ does, and there is a generalization of the standard M-test: If $|v_n| \le M_n$ for all n where $\sum_n M_n$ converges, then $\sum_n v_n$ also converges.

Approximating the Exponential from a Lie Algebra to a Lie Group

MATHEMATICS OF COMPUTATION, Volume 69, Number 232, Pages 1457–1480, S 0025-5718(00)01223-0. Article electronically published on March 15, 2000. APPROXIMATING THE EXPONENTIAL FROM A LIE ALGEBRA TO A LIE GROUP. ELENA CELLEDONI AND ARIEH ISERLES. Abstract. Consider a differential equation $y' = A(t, y)y$, $y(0) = y_0$ with $y_0 \in G$ and $A : \mathbb{R}^+ \times G \to \mathfrak{g}$, where $\mathfrak{g}$ is a Lie algebra of the matricial Lie group G. Every $B \in \mathfrak{g}$ can be mapped to G by the matrix exponential map $\exp(tB)$ with $t \in \mathbb{R}$. Most numerical methods for solving ordinary differential equations (ODEs) on Lie groups are based on the idea of representing the approximation $y_n$ of the exact solution $y(t_n)$, $t_n \in \mathbb{R}^+$, by means of exact exponentials of suitable elements of the Lie algebra, applied to the initial value $y_0$. This ensures that $y_n \in G$. When the exponential is difficult to compute exactly, as is the case when the dimension is large, an approximation of $\exp(tB)$ plays an important role in the numerical solution of ODEs on Lie groups. In some cases rational or polynomial approximants are unsuitable and we consider alternative techniques, whereby $\exp(tB)$ is approximated by a product of simpler exponentials. In this paper we present some ideas based on the use of the Strang splitting for the approximation of matrix exponentials. Several cases of $\mathfrak{g}$ and G are considered, in tandem with general theory. Order conditions are discussed, and a number of numerical experiments conclude the paper. 1. Introduction Consider the differential equation (1.1) $y' = A(t, y)y$, $y(0) \in G$, with $A : \mathbb{R}^+ \times G \to \mathfrak{g}$, where G is a matricial Lie group and $\mathfrak{g}$ is the underlying Lie algebra.
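To make "a product of simpler exponentials" concrete, here is a small sketch of the textbook Strang splitting, $\exp(t(A+B)) \approx \exp(tA/2)\exp(tB)\exp(tA/2)$, whose error is of order $t^3$ for a single step. This is the generic splitting, not necessarily the specific schemes developed in the paper; the helper names, the truncated-series exponential, and the two non-commuting test matrices are assumptions chosen for illustration.

```python
def mat_mul(a, b):
    """Plain matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def scale(a, s):
    """Multiply every entry of a by the scalar s."""
    return [[s * x for x in row] for row in a]


def expm_series(a, terms=30):
    """Approximate e^A by a truncated power series (adequate for small norms)."""
    n = len(a)
    result = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]
    for k in range(1, terms):
        term = [[x / k for x in row] for row in mat_mul(term, a)]
        result = [[r + s for r, s in zip(rr, tr)] for rr, tr in zip(result, term)]
    return result


def strang_step(a, b, t):
    """exp(tA/2) exp(tB) exp(tA/2), the Strang approximation to exp(t(A+B))."""
    half = expm_series(scale(a, t / 2.0))
    return mat_mul(mat_mul(half, expm_series(scale(b, t))), half)


A = [[0.0, 1.0], [0.0, 0.0]]
B = [[0.0, 0.0], [1.0, 0.0]]  # A and B do not commute


def splitting_error(t):
    """Largest entrywise gap between the Strang product and exp(t(A+B))."""
    exact = expm_series([[0.0, t], [t, 0.0]])  # t * (A + B)
    approx = strang_step(A, B, t)
    return max(abs(approx[i][j] - exact[i][j]) for i in range(2) for j in range(2))


err_coarse = splitting_error(0.1)
err_fine = splitting_error(0.05)
```

Halving `t` should shrink the error by a factor close to 8, which is what a third-order local error predicts.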

New Foundations for Geometric Algebra1

Text published in the electronic journal Clifford Analysis, Clifford Algebras and their Applications vol. 2, No. 3 (2013) pp. 193-211 New foundations for geometric algebra1 Ramon González Calvet Institut Pere Calders, Campus Universitat Autònoma de Barcelona, 08193 Cerdanyola del Vallès, Spain E-mail : [email protected] Abstract. New foundations for geometric algebra are proposed based upon the existing isomorphisms between geometric and matrix algebras. Each geometric algebra always has a faithful real matrix representation with a periodicity of 8. On the other hand, each matrix algebra is always embedded in a geometric algebra of a convenient dimension. The geometric product is also isomorphic to the matrix product, and many vector transformations such as rotations, axial symmetries and Lorentz transformations can be written in a form isomorphic to a similarity transformation of matrices. We collect the idea Dirac applied to develop the relativistic electron equation when he took a basis of matrices for the geometric algebra instead of a basis of geometric vectors. Of course, this way of understanding the geometric algebra requires new definitions: the geometric vector space is defined as the algebraic subspace that generates the rest of the matrix algebra by addition and multiplication; isometries are simply defined as the similarity transformations of matrices as shown above, and finally the norm of any element of the geometric algebra is defined as the nth root of the determinant of its representative matrix of order n. The main idea of this proposal is an arithmetic point of view consisting of reversing the roles of matrix and geometric algebras in the sense that geometric algebra is a way of accessing, working and understanding the most fundamental conception of matrix algebra as the algebra of transformations of multiple quantities. -

8.3 Positive Definite Matrices

Exercise 8.2.25 Show that every 2 × 2 orthogonal matrix has the form $\begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}$ or $\begin{bmatrix} \cos\theta & \sin\theta \\ \sin\theta & -\cos\theta \end{bmatrix}$ for some angle θ. [Hint: If $a^2 + b^2 = 1$, then $a = \cos\theta$ and $b = \sin\theta$ for some angle θ.] Exercise 8.2.26 Use Theorem 8.2.5 to show that every symmetric matrix is orthogonally diagonalizable. 8.3 Positive Definite Matrices All the eigenvalues of any symmetric matrix are real; this section is about the case in which the eigenvalues are positive. These matrices, which arise whenever optimization (maximum and minimum) problems are encountered, have countless applications throughout science and engineering. They also arise in statistics (for example, in factor analysis used in the social sciences) and in geometry (see Section 8.9). We will encounter them again in Chapter 10 when describing all inner products in $\mathbb{R}^n$. Definition 8.5 Positive Definite Matrices A square matrix is called positive definite if it is symmetric and all its eigenvalues λ are positive, that is λ > 0. Because these matrices are symmetric, the principal axes theorem plays a central role in the theory. Theorem 8.3.1 If A is positive definite, then it is invertible and det A > 0. Proof. If A is n × n and the eigenvalues are $\lambda_1, \lambda_2, \ldots, \lambda_n$, then $\det A = \lambda_1 \lambda_2 \cdots \lambda_n > 0$ by the principal axes theorem (or the corollary to Theorem 8.2.5). If x is a column in $\mathbb{R}^n$ and A is any real n × n matrix, we view the 1 × 1 matrix $x^T A x$ as a real number.
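Definition 8.5 and Theorem 8.3.1 can be checked by hand in the 2 × 2 case, where the eigenvalues of a symmetric matrix have a closed form. The sketch below assumes a matrix of the form [[a, b], [b, d]]; the function names and sample entries are hypothetical and chosen only for illustration.

```python
import math


def symmetric_2x2_eigenvalues(a, b, d):
    """Eigenvalues of the symmetric matrix [[a, b], [b, d]], smallest first."""
    mean = (a + d) / 2.0
    radius = math.hypot((a - d) / 2.0, b)  # always real, so both eigenvalues are real
    return mean - radius, mean + radius


def is_positive_definite_2x2(a, b, d):
    """Positive definite means symmetric with all eigenvalues > 0."""
    smallest, _ = symmetric_2x2_eigenvalues(a, b, d)
    return smallest > 0.0


# [[2, 1], [1, 2]] has eigenvalues 1 and 3, and det = 2*2 - 1*1 = 3 = 1 * 3 > 0.
lam1, lam2 = symmetric_2x2_eigenvalues(2.0, 1.0, 2.0)
det = 2.0 * 2.0 - 1.0 * 1.0
```

For contrast, [[1, 2], [2, 1]] has eigenvalues −1 and 3, so `is_positive_definite_2x2(1.0, 2.0, 1.0)` is false even though the matrix is symmetric.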

Wronskian Solutions to the KdV Equation via Bäcklund Transformation

arXiv:0706.3487v1 [nlin.SI] 24 Jun 2007. Wronskian solutions to the KdV equation via Bäcklund transformation. Qi-fei Xuan∗, Mei-ying Ou, Da-jun Zhang†, Department of Mathematics, Shanghai University, Shanghai 200444, P.R. China. October 27, 2018. Abstract In the paper we discuss the Bäcklund transformation of the KdV equation between solitons and solitons, between negatons and negatons, between positons and positons, between rational solution and rational solution, and between complexitons and complexitons. We investigate the conditions that Wronskian entries satisfy for the bilinear Bäcklund transformation of the KdV equation. By choosing suitable Wronskian entries and the parameter in the bilinear Bäcklund transformation, we obtain transformations between many kinds of solutions. Keywords: the KdV equation, Wronskian solution, bilinear form, Bäcklund transformation. 1 Introduction The Wronskian can be considered as a bridge connecting many classical methods in soliton theory. This is not only because soliton solutions in Wronskian form can be obtained from the Darboux transformation [1], Sato theory [2, 3] and the Wronskian technique [4]-[10], but also because the exponential polynomial for N-solitons derived from the Hirota method [11, 12] and the matrix form given by the Inverse Scattering Transform [13, 14] can be transformed into a Wronskian by extracting some exponential factors. The special structure of a Wronskian contributes simple forms of its derivatives, and this admits solution verification by directly substituting Wronskians into a bilinear soliton equation or a bilinear Bäcklund transformation (BT). This approach is referred to as the Wronskian technique [4]. In the approach a bilinear soliton equation is some algebraic identity provided that the Wronskian entry vector satisfies a set of differential equations, which we call the Wronskian condition.