Big Data Fundamentals

Total pages: 16

File type: PDF, Size: 1020 KB

Recommended publications

Getting Started with Derby Version 10.14

Getting Started with Derby, Version 10.14. Derby Document build: April 6, 2018, 6:13:12 PM (PDT).

Contents: Copyright; License; Introduction to Derby; Deployment options; System requirements; Product documentation for Derby; Installing and configuring Derby; Installing Derby; Setting up your environment; Choosing a method to run the Derby tools and startup utilities; Setting the environment variables; Syntax for the derbyrun.jar file

Operational Database Offload

Operational Database Offload Partner Brief

Facing increased data growth and cost pressures, scale-out technology has become very popular as more businesses become frustrated with their costly scale-up RDBMSs. With Hadoop emerging as the de facto scale-out file system, a Hadoop RDBMS is a natural choice to replace traditional relational databases like Oracle and IBM DB2, which struggle with cost or scaling issues.

Designed to meet the needs of real-time, data-driven businesses, Splice Machine is the only Hadoop RDBMS. Splice Machine offers an ANSI-SQL database with support for ACID transactions on the distributed computing infrastructure of Hadoop. Like Oracle and MySQL, it is an operational database that can handle operational (OLTP) or analytical (OLAP) workloads, while scaling out cost-effectively from terabytes to petabytes on inexpensive commodity servers.

Splice Machine, a technology partner with Hortonworks, chose HBase and Hadoop as its scale-out architecture because of their proven auto-sharding, replication, and failover technology. This partnership now allows businesses the best of all worlds: a standard SQL database, the proven scale-out of Hadoop, and the ability to leverage current staff, operations, and applications without specialized hardware or significant application modifications.

“Our partnership with Hortonworks is able to deliver to our clients 5-10x faster performance and over 75% reduction in TCO over traditional scale-up databases. With Splice Machine’s SQL-based transactional processing engine, our clients are able to migrate their legacy database applications without application rewrites.” (Monte Zweben, Chief Executive Officer, Splice Machine)

What Business Challenges are Solved?

Leverage Existing SQL Tools: Leveraging the proven SQL processing of Apache Derby, Splice Machine is a true ANSI SQL database on Hadoop.

Cost Effective Scaling: Splice Machine leverages the proven auto-sharding of HBase to scale with [...]

Real-Time Updates: Splice Machine provides full ACID transactions across rows and tables by using [...]

Tuning Derby Version 10.14

Tuning Derby, Version 10.14. Derby Document build: April 6, 2018, 6:14:42 PM (PDT).

Contents: Copyright; License; About this guide; Purpose of this guide; Audience; How this guide is organized; Performance tips and tricks; Use prepared statements with substitution parameters; Create indexes, and make sure they are being used; Ensure that table statistics are accurate; Increase the size of the data page cache; Tune the size of database pages; Performance trade-offs of large pages

Unravel Data Systems Version 4.5

UNRAVEL DATA SYSTEMS VERSION 4.5

| Component name | Component version | License |
| --- | --- | --- |
| jQuery | 1.8.2 | MIT License |
| Apache Tomcat | 5.5.23 | Apache License 2.0 |
| Tachyon Project POM | 0.8.2 | Apache License 2.0 |
| Apache Directory LDAP API Model | 1.0.0-M20 | Apache License 2.0 |
| apache/incubator-heron | 0.16.5.1 | Apache License 2.0 |
| Maven Plugin API | 3.0.4 | Apache License 2.0 |
| ApacheDS Authentication Interceptor | 2.0.0-M15 | Apache License 2.0 |
| Apache Directory LDAP API Extras ACI | 1.0.0-M20 | Apache License 2.0 |
| Apache HttpComponents Core | 4.3.3 | Apache License 2.0 |
| Spark Project Tags | 2.0.0-preview | Apache License 2.0 |
| Curator Testing | 3.3.0 | Apache License 2.0 |
| Apache HttpComponents Core | 4.4.5 | Apache License 2.0 |
| Apache Commons Daemon | 1.0.15 | Apache License 2.0 |
| classworlds | 2.4 | Apache License 2.0 |
| abego TreeLayout Core | 1.0.1 | BSD 3-clause "New" or "Revised" License |
| jackson-core | 2.8.6 | Apache License 2.0 |
| Lucene Join | 6.6.1 | Apache License 2.0 |
| Apache Commons CLI | 1.3-cloudera-pre-r1439998 | Apache License 2.0 |
| hive-apache | 0.5 | Apache License 2.0 |
| scala-parser-combinators | 1.0.4 | BSD 3-clause "New" or "Revised" License |
| com.springsource.javax.xml.bind | 2.1.7 | Common Development and Distribution License 1.0 |
| SnakeYAML | 1.15 | Apache License 2.0 |
| JUnit | 4.12 | Common Public License 1.0 |
| ApacheDS Protocol Kerberos | 2.0.0-M12 | Apache License 2.0 |
| Apache Groovy | 2.4.6 | Apache License 2.0 |
| JGraphT - Core | 1.2.0 | (GNU Lesser General Public License v2.1 or later AND Eclipse Public License 1.0) |
| chill-java | 0.5.0 | Apache License 2.0 |
| Apache Commons Logging | 1.2 | Apache License 2.0 |
| OpenCensus | 0.12.3 | Apache License 2.0 |
| ApacheDS Protocol [...] | | |

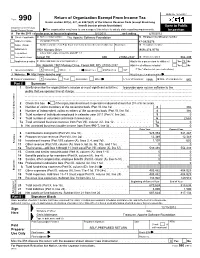

Return of Organization Exempt from Income

Form 990: Return of Organization Exempt From Income Tax (OMB No. 1545-0047). Under section 501(c), 527, or 4947(a)(1) of the Internal Revenue Code (except black lung benefit trust or private foundation). Open to Public Inspection. Department of the Treasury, Internal Revenue Service. The organization may have to use a copy of this return to satisfy state reporting requirements.

A. For the 2011 calendar year, or tax year beginning 5/1/2011 and ending 4/30/2012
B. Check if applicable: Address change; Name change; Initial return; Terminated; Amended return; Application pending
C. Name of organization: The Apache Software Foundation. Doing Business As: (no entry). Address: 1901 Munsey Drive, Forest Hill, MD 21050-2747
D. Employer identification number: 47-0825376
E. Telephone number: (909) 374-9776
F. Name and address of principal officer: Jim Jagielski, 1901 Munsey Drive, Forest Hill, MD 21050-2747
G. Gross receipts: $554,439
H(a). Is this a group return for affiliates? No
H(b). Are all affiliates included? If "No," attach a list (see instructions).
H(c). Group exemption number: (no entry)
I. Tax-exempt status: 501(c)(3)
J. Website: http://www.apache.org/
K. Form of organization: Corporation
L. Year of formation: 1999
M. State of legal domicile: MD

Part I Summary
1. Briefly describe the organization's mission or most significant activities: to provide open source software to the public that we sponsor free of charge
2. Check this box if the organization discontinued its operations or disposed of more than 25% of its net assets.

The Network Data Storage Model Based on the HTML

Proceedings of the 2nd International Conference on Computer Science and Electronics Engineering (ICCSEE 2013)

The network data storage model based on the HTML

Lei Li (1), Jianping Zhou (2), Runhua Wang (3), Yi Tang (4)
(1, 2) Network Information Center; (3) Teaching Quality and Assessment Office; (4) School of Electronic and Information Engineering; all at Chongqing University of Science and Technology, Chongqing, China

Abstract: In this paper, mass oriented HTML data refers to HTML data so large that it can no longer be handled with traditional processing methods. In the past, the creators of Web search engines were the first to face this problem. Today, social networks, mobile applications, sensors, and scientific fields create petabytes of HTML data every day. To deal with the challenge of processing such massive HTML data, Google created MapReduce. The work of Google and Yahoo on MapReduce and Hadoop then hatched a complete ecosystem of mass oriented HTML data processing tools.

[...] popular NoSQL for HTML database. This article describes the SMAQ stack model and the mass oriented HTML data processing tools that can be included in the model today.

II. MAPREDUCE

MapReduce was created at Google for building web page indexes. The MapReduce framework has become the basis of most mass oriented HTML data processing today. The key to MapReduce is that it takes a query over an HTML data collection, divides it, and then executes it on multiple nodes in parallel.
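The divide-then-run-in-parallel idea described in this excerpt can be illustrated with a small sketch. This is not code from the paper or from Hadoop. It is a minimal single-machine word count, written in Python, in which threads stand in for cluster nodes. The function names (`split_into_chunks`, `map_chunk`, `merge_counts`, `word_count`) and the `n_workers` parameter are hypothetical and chosen only for illustration.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from functools import reduce


def split_into_chunks(records, n_chunks):
    """Divide the input so that each simulated node receives one slice."""
    size = max(1, -(-len(records) // n_chunks))  # ceiling division
    return [records[i:i + size] for i in range(0, len(records), size)]


def map_chunk(chunk):
    """Map step: count the words found in a single chunk."""
    counts = Counter()
    for line in chunk:
        counts.update(line.lower().split())
    return counts


def merge_counts(left, right):
    """Reduce step: combine two partial results into one."""
    return left + right


def word_count(records, n_workers=4):
    """Run the map step on every chunk concurrently, then reduce."""
    chunks = split_into_chunks(records, n_workers)
    # Assumption: threads stand in for cluster nodes. A real framework
    # would ship each chunk to a different machine.
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(map_chunk, chunks))
    return reduce(merge_counts, partials, Counter())
```

Calling `word_count(["big data", "big index"])` returns a `Counter` in which `big` has count 2 and `data` and `index` each have count 1. Because the map step touches only its own chunk and the reduce step only merges partial results, the same structure could in principle be spread across many machines.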

Projects – Other Than Hadoop!

Created by: Samarjit Mahapatra

Mostly compatible with Hadoop/HDFS

• Apache Drill: provides low-latency ad hoc queries to many different data sources, including nested data. Inspired by Google's Dremel, Drill is designed to scale to 10,000 servers and query petabytes of data in seconds.
• Apache Hama: a pure BSP (Bulk Synchronous Parallel) computing framework on top of HDFS for massive scientific computations such as matrix, graph, and network algorithms.
• Akka: a toolkit and runtime for building highly concurrent, distributed, and fault-tolerant event-driven applications on the JVM.
• ML-Hadoop: a Hadoop implementation of machine learning algorithms.
• Shark: a large-scale data warehouse system for Spark designed to be compatible with Apache Hive. It can execute Hive QL queries up to 100 times faster than Hive without any modification to the existing data or queries. Shark supports Hive's query language, metastore, serialization formats, and user-defined functions, providing seamless integration with existing Hive deployments and a familiar, more powerful option for new ones.
• Apache Crunch: a Java library that provides a framework for writing, testing, and running MapReduce pipelines. Its goal is to make pipelines that are composed of many user-defined functions simple to write, easy to test, and efficient to run.
• Azkaban: a batch workflow job scheduler created at LinkedIn to run its Hadoop jobs.
• Apache Mesos: a cluster manager that provides efficient resource isolation and sharing across distributed applications, or frameworks. It can run Hadoop, MPI, Hypertable, Spark, and other applications on a dynamically shared pool of nodes.

JavaEdge Setup and Installation

APPENDIX A: JavaEdge Setup and Installation

Throughout the book, we have used the example application, JavaEdge, to provide a practical demonstration of all the features discussed. In this appendix, we will walk you through setting up the tools and applications required to build and run JavaEdge, as well as take you through the steps needed to get the JavaEdge application running on your platform.

Environment Setup

Before you can get started with the JavaEdge application, you need to configure your platform to be able to build and run JavaEdge. Specifically, you need to configure Apache Ant in order to build the JavaEdge application and package it up for deployment. In addition, the JavaEdge application is designed to run on a J2EE application server and to use MySQL as the back-end database. You also need to have a current JDK installed; the JavaEdge application relies on JVM version 1.5 or higher, so make sure your JDK is compatible. We haven’t included instructions for this here, since we are certain that you will already have a JDK installed if you are reading this book. However, if you do need to download one, you can find it at http://java.sun.com/j2se/1.5.0/download.jsp.

Installing MySQL

The JavaEdge application uses MySQL as the data store for all user, story, and comment data. If you don’t already have the MySQL database server, then you need to obtain the version applicable to your platform. You can obtain the latest production binary release of MySQL for your platform at http://www.mysql.com.

Database Software Market: The Long-Awaited Shake-up

Equity Research | Technology, Media, & Communications | Enterprise and Cloud Infrastructure
March 22, 2019
Industry Report

Jason Ader, +1 617 235 7519; Billy Fitzsimmons, +1 312 364 5112; Naji, +1 212 245 6508

Please refer to important disclosures on pages 70 and 71. Analyst certification is on page 70. William Blair or an affiliate does and seeks to do business with companies covered in its research reports. As a result, investors should be aware that the firm may have a conflict of interest that could affect the objectivity of this report. This report is not intended to provide personal investment advice. The opinions and recommendations herein do not take into account individual client circumstances, objectives, or needs and are not intended as recommendations of particular securities, financial instruments, or strategies to particular clients. The recipient of this report must make its own independent decisions regarding any securities or financial instruments mentioned herein.

William Blair

Contents: Key Findings; Introduction; Database Market History; Market Definitions

Developing Applications Using the Derby Plug-Ins Lab Instructions

Developing Applications using the Derby Plug-ins: Lab Instructions

Goal: Use the Derby plug-ins to write a simple embedded and network server application. In this lab you will use the tools provided with the Derby plug-ins to create a simple database schema in the Java perspective and a stand-alone application which accesses a Derby database via the embedded driver and/or the network server. Additionally, you will create and use three stored procedures that access the Derby database.

Intended Audience: This lab is intended for both experienced and new users of Eclipse and those new to the Derby plug-ins. Some of the Eclipse basics are described, but may be skipped if the student is primarily focused on learning how to use the Derby plug-ins.

High Level Tasks Accomplished in this Lab
• Create a database and two tables using the jay_tables.sql file. Data is also inserted into the tables.
• Create a public Java class that contains two static methods, which will be called as SQL stored procedures on the two tables.
• Write and execute the SQL to create the stored procedures that call the static methods in the Java class.
• Test the stored procedures by using the SQL 'call' command.
• Write a stand-alone application that uses the stored procedures. The stand-alone application should accept command line or console input.

Detailed Instructions

These instructions are detailed, but do not necessarily provide each step of a task. Part of the goal of the lab is to become familiar with the Eclipse Help document, the Derby Plug-ins User Guide.

Big Data: a Survey the New Paradigms, Methodologies and Tools

Big Data: A Survey. The New Paradigms, Methodologies and Tools

Enrico Giacinto Caldarola (1, 2) and Antonio Maria Rinaldi (1, 3)
(1) Department of Electrical Engineering and Information Technologies, University of Naples Federico II, Napoli, Italy
(2) Institute of Industrial Technologies and Automation, National Research Council, Bari, Italy
(3) IKNOS-LAB Intelligent and Knowledge Systems, University of Naples Federico II, LUPT 80134, via Toledo, 402-Napoli, Italy

Keywords: Big Data, NoSQL, NewSQL, Big Data Analytics, Data Management, Map-reduce.

Abstract: For several years we have been living in the era of information. Since any human activity is carried out by means of information technologies and tends to be digitized, it produces a humongous stack of data that becomes more and more attractive to different stakeholders such as data scientists, entrepreneurs, or private individuals. All of them are interested in the possibility of gaining a deep understanding of people and things by accurately and wisely analyzing the gold mine of data they produce. The reason for such interest derives from the competitive advantage and the increase in revenues expected from this deep understanding. In order to help analysts reveal the insights hidden behind data, new paradigms, methodologies, and tools have emerged in recent years. There has been a great explosion of technological solutions, which gives rise to the need for a review of the current state of the art in the Big Data technologies scenario. Thus, after a characterization of the new paradigm under study, this work aims at surveying the most widespread technologies under the Big Data umbrella, through a qualitative analysis of their characterizing features.

Java DB Based on Apache Derby

JAVA™ DB BASED ON APACHE DERBY

What is Java™ DB?

Java DB is Sun’s supported distribution of the open source Apache Derby database. Java DB is written in Java, providing “write once, run anywhere” portability. Its ease of use, standards compliance, full feature set, and small footprint make it the ideal database for Java developers. It can be embedded in Java applications, requiring zero administration by the developer or user. It can also be used in client server mode. Java DB is fully transactional and provides a standard SQL interface as well as a JDBC 4.0 compliant driver. The Apache Derby project has a strong and growing community that includes developers from large companies such as Sun Microsystems and IBM as well as individual contributors.

How can I use Java DB?

Java DB is ideal for:
• Departmental Java client-server applications that need up to 24 x 7 support and the sophistication of a transactional SQL database that protects against data corruption without requiring a database administrator.
• Java application development and testing because it’s extremely easy to use, can run on a laptop, is available at no cost under the Apache license, and is also full-featured.
• Embedded applications where there is no need for the developer or the end-user to buy, download, install, administer (or even be aware of) the database separately from the application.
• Multi-platform use due to Java portability. And, because Java DB is fully standards-compliant, it is easy to migrate an application between Java DB and other open standard databases.