A FIRST COURSE in PROBABILITY

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Creating Modern Probability. Its Mathematics, Physics and Philosophy in Historical Perspective

HM 23 REVIEWS 203

The reviewer hopes this book will be widely read and enjoyed, and that it will be followed by other volumes telling even more of the fascinating story of Soviet mathematics. It should also be followed in a few years by an update, so that we can know if this great accumulation of talent will have survived the economic and political crisis that is just now robbing it of many of its most brilliant stars (see the article, "To guard the future of Soviet mathematics," by A. M. Vershik, O. Ya. Viro, and L. A. Bokut' in Vol. 14 (1992) of The Mathematical Intelligencer).

Creating Modern Probability. Its Mathematics, Physics and Philosophy in Historical Perspective. By Jan von Plato. Cambridge/New York/Melbourne (Cambridge Univ. Press). 1994. 323 pp. Reviewed by THOMAS HOCHKIRCHEN*, Fachbereich Mathematik, Bergische Universität Wuppertal, 42097 Wuppertal, Germany.

Aside from the role probabilistic concepts play in modern science, the history of the axiomatic foundation of probability theory is interesting from at least two more points of view. Probability as it is understood nowadays, probability in the sense of Kolmogorov (see [3]), is not easy to grasp, since the definition of probability as a normalized measure on a σ-algebra of "events" is not a very obvious one. Furthermore, the discussion of different concepts of probability might help in understanding the philosophy and role of "applied mathematics." So the exploration of the creation of axiomatic probability should be interesting not only for historians of science but also for people concerned with didactics of mathematics and for those concerned with philosophical questions.

2013--Annual-Report-Accounts.Pdf

Helping people make measurable progress in their lives through learning

ANNUAL REPORT AND ACCOUNTS 2013

OUR TRANSFORMATION: To find out more about how we are transforming our business go to page 09
EFFICACY: To find out more about our focus on efficacy go to page 14
OUR PERFORMANCE: For an in-depth analysis of our performance in 2013 go to page 19

Pearson is the world’s leading learning company, with 40,000 employees in more than 80 countries working to help people of all ages to make measurable progress in their lives through learning. We provide learning materials, technologies, assessments and services to teachers and students in order to help people everywhere aim higher and fulfil their true potential. We put the learner at the centre of everything we do.

READ OUR REPORT ONLINE: Learn more at www.pearson.com/ar2013.html. To stay up to date with Pearson throughout the year, visit our blog at blog.pearson.com and follow us on Twitter – @pearsonplc

1 OVERVIEW: A summary of who we are and what we do, including performance highlights, our business strategy and key areas of investment and focus.
02 Financial highlights
04 Chairman’s introduction
06 Our business models
09 Chief executive’s strategic overview
14 Pearson’s commitment to efficacy

2 OUR PERFORMANCE: An in-depth analysis of how we performed in 2013, the outlook for 2014 and the principal risks and uncertainties affecting our businesses.
19 Our performance
20 Outlook 2014
23 Education: North America, International, Professional
32 Financial Times Group

Tricky Dice a Game Design by Andy Miner Using Square Shooters Poker

Tricky Dice
A game design by Andy Miner using Square Shooters Poker Dice

Players: 4
Playing Time: about an hour

Required Components:
Standard deck of 52 playing cards (no jokers)
One complete set of nine Square Shooters poker dice
Bag/pouch to hold dice
Pen and paper for scoring

Order of Play

1. Before the Bid

One player is chosen to be dealer. The dealer deals eight cards to every player. He then places all dice in the bag and passes it to his left. Each player in turn draws two dice from the bag and rolls them, placing them face up in front of him. After rolling his two dice, the dealer draws the last remaining die from the bag and rolls it. The face-up side of this die will show the trump suit and highest card value for this hand.

Note: If a player rolls a joker on one of his dice, he may turn the die to any other side that he wishes, after looking at his cards. However, it cannot be changed after that.

Note: If the dealer rolls a joker space on the trump die, he may look at his cards and turn the die to one of the other five sides (of his choosing) to determine the trump suit and high card for the hand.

2. The Bid

Starting to the dealer’s left, each player may bid (or pass) on how many tricks they think they can take this hand with the help of a partner. The player on the dealer’s left must start with the minimum bid of four tricks, but may start higher.

Panther Auto Corner Left: the Band Marches on to Victory

PANTHER PRIDE
Volume 11, Issue #2

TABLE OF CONTENTS
Page 1: Band's Victory in Maryville; Middle School Sports; Middle School Football
Page 2: Clubs and Activities; Art Club; Elementary News; Blast Off Into the School Year; Stacking It Up; Books for First Grade; Character Assembly
Page 3: Current Events; Florida Man; Hong Kong Protests; Creative Minds; Photography Basics; "Song of the Sea"; "Bernard's Poem"
Page 4: Panther Auto Corner; Ask Anonymous; Entertainment; Corbin's Destroy...
Page 5: Joker Is a Marvel; New Music Releases
Page 6: New Video Game Releases
Page 7: Horoscopes
Page 8: November Menu

Band's Victory in Maryville

The marching band has had a fantastic season! At the first competition in Carrollton they had a high music score but a low marching score and flipped the scores in Cameron. On October 26th they had a competition in Maryville where they placed second in their class 2A, only four points away from first. The band had the third-highest music score over all the bands that performed. The band was very excited to beat some very good bands for their last marching competition of the year and are getting ready for concert season.

Drumline this year had a good season. They only had two competitions compared to the three that the whole band had. They played Blazed Blues and Lobster Walk for their performance. The drumline placed fourth in Carrollton and didn't place in Cameron.

Photo captions: Kara Claypole, Mr. Dunker, and Ysee Chorot celebrate their second place trophy as they marched at Maryville for the Northwest Homecoming Parade. Left: The band marches on to victory.

Polo Middle School Sports

Middle School Football Stats Board - By: Mr.

40Ppfinal (0708)

Washington Bridge League B♣U♥L♠L♦E♥T♣I♠N
Sept./Oct. 2002
www.WashingtonBridgeLeague.org

Thursday, October 10
♣ Stratified Open Pairs: 10:30am
Washington Bridge Center, 1620 Elton Road, Silver Spring MD
♦ StrataFlighted Open Pairs (both sites) + Stratified 199er Pairs (Christ the King Church only) or Stratified 99er Pairs (Beth El only)
Beth El Congregation, 3830 Seminary Rd, Alexandria: 7:00pm
Christ the King Church, 2301 Colston Drive, Silver Spring: 7:30pm
Capital Beltway to Connecticut Ave. South. Left on East-West Hwy. Right on Grubb Rd. 1st left on Colston. The church is one block on the left.

* * * * Remainder of Tournament held at White Oak Armory Only * * * *
12200 Cherry Hill Road, Silver Spring MD
Capital Beltway East to US 29 North (Exit 30A - toward Columbia) or Capital Beltway West to MD 193 West (Exit 29 - toward Wheaton); go ½ mile and turn right on US 29 North. Go north 4 miles, then right on Cherry Hill Rd. Right on Robert L. Finn Dr. (immediately after Toyota dealer) and left into parking lot.

Friday, October 11
♥ Stratified Open Pairs (single sessions): 10:00am, 2:00pm & 8:00pm
♠ Stratified Senior Pairs (single sessions): 10:00am & 2:00pm
♣ Intermediate/Novice Pairs (single sessions): 10:00am, 2:00pm & 8:00pm
♦ Stratified Triple Nickel Swiss Teams, VPs: 8:00pm

Saturday, October 12
♥ Stratified Senior Pairs (single sessions): 9:30am & 1:30pm
♠ Newcomer Pairs (0-5 masterpoints): 1:30pm
♣ 50/20/10/5 Special 49er Stratified Trophy Pairs: 1:30pm
♦ StrataFlighted Open Pairs (single sessions): 1:30pm & 7:00pm
♥ StrataFlighted Open Pairs (single sessions) ...

There Is No Pure Empirical Reasoning

There Is No Pure Empirical Reasoning

1. Empiricism and the Question of Empirical Reasons

Empiricism may be defined as the view that there is no a priori justification for any synthetic claim. Critics object that empiricism cannot account for all the kinds of knowledge we seem to possess, such as moral knowledge, metaphysical knowledge, mathematical knowledge, and modal knowledge.1 In some cases, empiricists try to account for these types of knowledge; in other cases, they shrug off the objections, happily concluding, for example, that there is no moral knowledge, or that there is no metaphysical knowledge.2 But empiricism cannot shrug off just any type of knowledge; to be minimally plausible, empiricism must, for example, at least be able to account for paradigm instances of empirical knowledge, including especially scientific knowledge.

Empirical knowledge can be divided into three categories: (a) knowledge by direct observation; (b) knowledge that is deductively inferred from observations; and (c) knowledge that is non-deductively inferred from observations, including knowledge arrived at by induction and inference to the best explanation. Category (c) includes all scientific knowledge. This category is of particular import to empiricists, many of whom take scientific knowledge as a sort of paradigm for knowledge in general; indeed, this forms a central source of motivation for empiricism.3 Thus, if there is any kind of knowledge that empiricists need to be able to account for, it is knowledge of type (c).

I use the term “empirical reasoning” to refer to the reasoning involved in acquiring this type of knowledge – that is, to any instance of reasoning in which (i) the premises are justified directly by observation, (ii) the reasoning is non-deductive, and (iii) the reasoning provides adequate justification for the conclusion.

Version 7.8-Systemd

Linux From Scratch
Version 7.8-systemd
Created by Gerard Beekmans
Edited by Douglas R. Reno

Copyright © 1999-2015 Gerard Beekmans. All rights reserved. This book is licensed under a Creative Commons License. Computer instructions may be extracted from the book under the MIT License. Linux® is a registered trademark of Linus Torvalds.

Table of Contents
Preface, vii
i. Foreword, vii
ii. Audience, vii
iii. LFS Target Architectures, viii
iv. LFS and Standards, ix
v. Rationale for Packages in the Book, x
vi. Prerequisites

To Read Raidernet Daily

RaiderNet Daily
G. Ray Bodley High School, Fulton, NY
Volume 2, Number 24, Monday, October 25, 2010

Raiders set for sectional opener
By Colin Shannon

The Fulton varsity boys soccer team earned a playoff berth as the tenth seed after finishing the season with a record of 8-7-1. Unfortunately, having such a low rank forces the boys to play a road game at Cortland to advance to the next round. The Cortland Tigers possess a record of 8-6-2, with one additional tie being the difference between the tenth and the sev- [...]

[...] goals. This gives the keeper an 81.3 save percentage on the season, a good mark to be at. The Cortland Tigers are led by a select few on their roster. The two main offensive threats are Colby Reagan, with 5 goals and 3 assists, and Patrick Mahar with 5 goals and two assists. Once one looks past these two, the third scorer is Gregory Masler with 3 goals and a lone assist. Standing between the pipes is keeper Chad [...]

[...] tough battle. The second round playoff game will take place Thursday night at 6 P.M.

The Raider girls varsity will also open sectional play on Tuesday, travelling to Indian River for a 6 p.m. clash. Fulton, at 7-9, is the #9 seed while the 7-7-1 Warriors are seeded just ahead of them at #8. Tuesday’s winner will have a formidable task ahead as they must face #1 seeded Jamesville-Dewitt at a time and place to be determined.

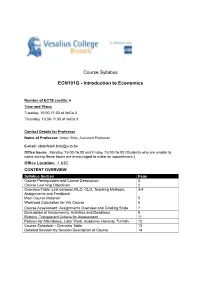

Course Syllabus ECN101G

Course Syllabus ECN101G - Introduction to Economics

Number of ECTS credits: 6
Time and Place: Tuesday, 10:00-11:30 at VeCo 3; Thursday, 10:00-11:30 at VeCo 3

Contact Details for Professor
Name of Professor: Abdel. Bitat, Assistant Professor
E-mail: [email protected]
Office hours: Monday, 15:00-16:00 and Friday, 15:00-16:00 (Students who are unable to come during these hours are encouraged to make an appointment.)
Office Location: -1.63C

CONTENT OVERVIEW

| Syllabus Section | Page |
| --- | --- |
| Course Prerequisites and Course Description | 2 |
| Course Learning Objectives | 2 |
| Overview Table: Link between MLO, CLO, Teaching Methods, Assignments and Feedback | 3-4 |
| Main Course Material | 5 |
| Workload Calculation for this Course | 6 |
| Course Assessment: Assignments Overview and Grading Scale | 7 |
| Description of Assignments, Activities and Deadlines | 8 |
| Rubrics: Transparent Criteria for Assessment | 11 |
| Policies for Attendance, Later Work, Academic Honesty, Turnitin | 12 |
| Course Schedule – Overview Table | 13 |
| Detailed Session-by-Session Description of Course | 14 |

Course Prerequisites (if any)

There are no prerequisites for the course. However, since economics is mathematically intensive, it is worth reviewing secondary school mathematics in order to master the course. A great source which starts with the basics and is available at the VUB library is Simon, C., & Blume, L. (1994). Mathematics for economists. New York: Norton.

Course Description

The course illustrates the way in which economists view the world. You will learn about basic tools of micro- and macroeconomic analysis and, by applying them, you will understand the behavior of households, firms and government. Problems include: trade and specialization; the operation of markets; industrial structure and economic welfare; the determination of aggregate output and price level; fiscal and monetary policy and foreign exchange rates.

Probability and Counting Rules

Chapter 4: Probability and Counting Rules

Objectives

After completing this chapter, you should be able to
1. Determine sample spaces and find the probability of an event, using classical probability or empirical probability.
2. Find the probability of compound events, using the addition rules.
3. Find the probability of compound events, using the multiplication rules.
4. Find the conditional probability of an event.
5. Find the total number of outcomes in a sequence of events, using the fundamental counting rule.
6. Find the number of ways that r objects can be selected from n objects, using the permutation rule.
7. Find the number of ways that r objects can be selected from n objects without regard to order, using the combination rule.
8. Find the probability of an event, using the counting rules.

Outline

4–1 Introduction
4–2 Sample Spaces and Probability
4–3 The Addition Rules for Probability
4–4 The Multiplication Rules and Conditional Probability
4–5 Counting Rules
4–6 Probability and Counting Rules
4–7 Summary

Statistics Today: Would You Bet Your Life?

Humans not only bet money when they gamble, but also bet their lives by engaging in unhealthy activities such as smoking, drinking, using drugs, and exceeding the speed limit when driving. Many people don’t care about the risks involved in these activities since they do not understand the concepts of probability.
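The counting rules named in the objectives above can be illustrated with a short Python sketch. It is not taken from the textbook: the function names and the card example are assumptions chosen for illustration, and it relies only on the standard `math` and `fractions` modules (Python 3.8 or later for `math.perm` and `math.comb`).

```python
import math
from fractions import Fraction

def fundamental_count(choices_per_step):
    """Fundamental counting rule: multiply the number of options at each step."""
    return math.prod(choices_per_step)

def permutation_rule(n, r):
    """Ordered selections of r objects from n: n! / (n - r)!."""
    return math.perm(n, r)

def combination_rule(n, r):
    """Unordered selections of r objects from n: n! / (r! (n - r)!)."""
    return math.comb(n, r)

def classical_probability(favorable, total):
    """Classical probability: favorable outcomes over equally likely outcomes."""
    return Fraction(favorable, total)

# Hypothetical example: a 5-card hand containing all four aces.
# Choose the 4 aces (1 way) and 1 of the remaining 48 cards.
four_aces = classical_probability(
    combination_rule(4, 4) * combination_rule(48, 1),
    combination_rule(52, 5),
)

print(fundamental_count([2, 6]))  # a coin toss followed by a die roll
print(permutation_rule(5, 3))
print(combination_rule(5, 3))
print(four_aces)
```

Using `Fraction` keeps the probability exact, which makes it easier to compare against a hand calculation than a rounded decimal would be.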

When Algebraic Entropy Vanishes Jim Propp (U. Wisconsin) Tufts

When algebraic entropy vanishes
Jim Propp (U. Wisconsin)
Tufts University, October 31, 2003

I. Overview

Probabilistic DYNAMICS ↑ COMBINATORICS ↑ Algebraic DYNAMICS

II. Rational maps

Projective space: $\mathbb{C}P^n = (\mathbb{C}^{n+1} \setminus (0, 0, \dots, 0)) / \sim$, where $u \sim v$ iff $v = cu$ for some $c \neq 0$.

We write the equivalence class of $(x_1, x_2, \dots, x_{n+1})$ in $\mathbb{C}P^n$ as $(x_1 : x_2 : \dots : x_{n+1})$.

The standard imbedding of affine n-space into projective n-space is $(x_1, x_2, \dots, x_n) \mapsto (x_1 : x_2 : \dots : x_n : 1)$.

The “inverse map” is $(x_1 : \dots : x_n : x_{n+1}) \mapsto \left( \frac{x_1}{x_{n+1}}, \dots, \frac{x_n}{x_{n+1}} \right)$.

Geometrical version: $\mathbb{C}P^n$ is the set of lines through the origin in (n + 1)-space.

The point $(a_1 : a_2 : \dots : a_{n+1})$ in $\mathbb{C}P^n$ corresponds to the line $a_1 x_1 = a_2 x_2 = \dots = a_{n+1} x_{n+1}$ in $\mathbb{C}^{n+1}$.

The intersection of this line with the hyperplane $x_{n+1} = 1$ is the point $\left( \frac{a_1}{a_{n+1}}, \frac{a_2}{a_{n+1}}, \dots, \frac{a_n}{a_{n+1}}, 1 \right)$ (as long as $a_{n+1} \neq 0$).

We identify affine n-space with the hyperplane $x_{n+1} = 1$.

Affine n-space is a Zariski-dense subset of projective n-space.

A rational map is a function from (a Zariski-dense subset of) $\mathbb{C}P^n$ to $\mathbb{C}P^m$ given by m rational functions of the affine coordinate variables, or, the associated function from a Zariski-dense subset of $\mathbb{C}^n$ to $\mathbb{C}P^m$ (e.g., the “function” $x \mapsto 1/x$ on affine 1-space, associated with the function $(x : y) \mapsto (y : x)$ on projective 1-space).
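The imbedding, its partial inverse, and the 1/x example from the slides above can be sketched in Python. This is an illustrative sketch rather than anything from the talk: the function names are assumptions, and it uses rational coordinates (`fractions.Fraction`) in place of complex numbers so that equality checks stay exact.

```python
from fractions import Fraction

def embed(affine_point):
    """Standard imbedding: (x1, ..., xn) -> (x1 : ... : xn : 1)."""
    return tuple(affine_point) + (1,)

def to_affine(homogeneous_point):
    """Partial inverse: divide by the last coordinate when it is nonzero."""
    last = homogeneous_point[-1]
    if last == 0:
        raise ValueError("point lies at infinity; no affine representative")
    return tuple(Fraction(x) / last for x in homogeneous_point[:-1])

def same_projective_point(u, v):
    """u ~ v iff v = c*u for some nonzero c, tested with 2x2 minors."""
    if len(u) != len(v) or not any(u) or not any(v):
        return False
    n = len(u)
    return all(u[i] * v[j] == u[j] * v[i]
               for i in range(n) for j in range(i + 1, n))

def invert(point):
    """The map (x : y) -> (y : x) on projective 1-space, i.e. x -> 1/x."""
    x, y = point
    return (y, x)

print(to_affine((2, 4, 2)))
print(same_projective_point((1, 2, 3), (2, 4, 6)))
print(to_affine(invert(embed((3,)))))
```

Note that `invert` is defined everywhere on projective 1-space, while the affine map x -> 1/x fails at 0: `invert(embed((0,)))` gives `(1, 0)`, a point at infinity for which `to_affine` raises `ValueError`.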

From the Editor

Department of Mathematics, University of Wisconsin

From the Editor.

This year’s biggest news is the awarding of the National Medal of Science to Carl de Boor, professor emeritus of mathematics and computer science. Accompanied by his family, Professor de Boor received the medal at a White House ceremony on March 14, 2005. Carl was one of 14 new National Medal of Science Laureates, the only one in the category of mathematics. The award, administered by the National Science Foundation, originated with the 1959 Congress. It honors individuals for pioneering research that has led to a better understanding of the world, as well as to innovations and technologies that give the USA a global economic edge. Carl is an authority on the theory and application of splines, which play a central role in, among others, computer-aided design and manufacturing, applications of computer graphics, and signal and image processing.

The new dean of the College of Letters and Science, Gary Sandefur, said “Carl de Boor’s selection for the nation’s highest scientific award reflects the significance of his work and the tradition of excellence among our mathematics and computer science faculty.” We in the Department of Mathematics are extremely proud of Carl de Boor.

Carl retired from the University in 2003 as Steenbock Professor of Mathematical Sciences and now lives in Washington State, although he keeps a small condominium in Madison. Last year’s newsletter contains information about Carl’s distinguished career and a 65th birthday conference held in his honor in Germany.