Why Intermediate Languages?

Recommended publications

Ironpython in Action

IronPython in Action. Michael J. Foord and Christian Muirhead. Foreword by Jim Hugunin. Manning, Greenwich (74° w. long.). For online information and ordering of this and other Manning books, please visit www.manning.com. The publisher offers discounts on this book when ordered in quantity. For more information, please contact Special Sales Department, Manning Publications Co., Sound View Court 3B, Greenwich, CT 06830; fax: (609) 877-8256. ©2009 by Manning Publications Co. All rights reserved. No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by means electronic, mechanical, photocopying, or otherwise, without prior written permission of the publisher. Many of the designations used by manufacturers and sellers to distinguish their products are claimed as trademarks. Where those designations appear in the book, and Manning Publications was aware of a trademark claim, the designations have been printed in initial caps or all caps. Recognizing the importance of preserving what has been written, it is Manning’s policy to have the books we publish printed on acid-free paper, and we exert our best efforts to that end. Recognizing also our responsibility to conserve the resources of our planet, Manning books are printed on paper that is at least 15% recycled and processed without the use of elemental chlorine. -

.NET Framework

Advanced Windows Programming: .NET Framework. Krzysztof Mossakowski, Faculty of Mathematics and Information Science, http://www.mini.pw.edu.pl/~mossakow. Based on: A. Troelsen, Pro C# 2005 and .NET 2.0 Platform, 3rd Ed., 2005, Apress; J. Richter, Applied .NET Frameworks Programming, 2002, MS Press; D. Watkins et al., Programming in the .NET Environment, 2002, Addison Wesley; T. Thai, H. Lam, .NET Framework Essentials, 2001, O’Reilly; D. Beyer, C# COM+ Programming, M&T Books, 2001, chapter 1. Contents: The most important features of .NET; Assemblies; Metadata; Common Type System; Common Intermediate Language; Common Language Runtime; Deploying .NET Runtime; Garbage Collection; Serialization. .NET Benefits, in comparison with Microsoft’s previous technologies: Consistent programming model (common OO programming model); Simplified programming model (no error codes, GUIDs, IUnknown, etc.); Run once, run always (no "DLL hell"); Simplified deployment (easy-to-use installation projects); Wide platform reach; Programming language integration; Simplified code reuse; Automatic memory management (garbage collection); Type-safe verification; Rich debugging support (CLR debugging, language independent); Consistent method failure paradigm (exceptions); Security (code access security); Interoperability (using existing COM components, calling Win32 functions) -

Understanding CIL

Understanding CIL. James Crowley, Developer Fusion, http://www.developerfusion.co.uk/. Overview: Generating and understanding CIL; De-compiling CIL; Protecting against de-compilation; Merging assemblies. Common Language Runtime (CLR): Core component of the .NET Framework on which everything else is built. A runtime environment which provides a unified type system, metadata, and an execution engine that deals with programs written in a Common Intermediate Language (CIL). Common Intermediate Language: All compilers targeting the CLR translate their source code into CIL. A kind of assembly language for an abstract stack-based machine, but not specific to any hardware architecture. Includes instructions specifically designed to support object-oriented concepts. Platform Independence: The intermediate language is not interpreted, but is not platform specific. The CLR uses JIT (just-in-time) compilation to translate the CIL into native code. Applications compiled in .NET can be moved to any machine, provided there is a CLR implementation for it (Mono, SSCLI, etc.). Demo: Generating IL using the C# compiler. .method private hidebysig static void Main(string[] args) cil managed { .entrypoint // Code size 31 (0x1f) .maxstack 2 .locals init (int32 V_0, int32 V_1, int32 V_2) IL_0000: ldc.i4.s 50 IL_0002: stloc.0 IL_0003: ldc.i4.s ... Some familiar keywords with some additions: .method – this is a method; hidebysig – the method hides other methods with the same name and signature; cil managed – written in CIL and should be executed by the execution engine (C++ allows -
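The "abstract stack-based machine" in the excerpt above can be illustrated with a toy interpreter. This is a minimal sketch in Python, not CIL and not the CLR: the opcode names `ldc`, `stloc`, `ldloc`, and `add` only loosely mirror the instructions in the listing, and the second constant (25) and the final addition are assumptions made up for illustration, because the original listing is cut off after the second `ldc.i4.s`.

```python
def run(program, n_locals=3):
    """Execute a list of (opcode, *operands) tuples on a toy stack machine."""
    stack = []                      # evaluation stack
    local_vars = [0] * n_locals     # local variable slots, like V_0..V_2
    for op, *args in program:
        if op == "ldc":             # push a constant onto the stack
            stack.append(args[0])
        elif op == "stloc":         # pop the top of the stack into a local slot
            local_vars[args[0]] = stack.pop()
        elif op == "ldloc":         # push the value of a local slot
            stack.append(local_vars[args[0]])
        elif op == "add":           # pop two values, push their sum
            right = stack.pop()
            left = stack.pop()
            stack.append(left + right)
        else:
            raise ValueError(f"unknown opcode: {op}")
    return local_vars


# Hypothetical program: the first two steps follow the excerpt (load 50, store
# in slot 0); everything after that is invented for illustration.
program = [
    ("ldc", 50), ("stloc", 0),
    ("ldc", 25), ("stloc", 1),
    ("ldloc", 0), ("ldloc", 1), ("add",), ("stloc", 2),
]
result = run(program)
```

Every instruction either pushes operands onto the stack or pops them off, which is why such code needs no register names and stays independent of any hardware architecture.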

Working with Ironpython and WPF

Working with IronPython and WPF Douglas Blank Bryn Mawr College Programming Paradigms Spring 2010 With thanks to: http://www.ironpython.info/ http://devhawk.net/ IronPython Demo with WPF >>> import clr >>> clr.AddReference("PresentationFramework") >>> from System.Windows import * >>> window = Window() >>> window.Title = "Hello" >>> window.Show() >>> button = Controls.Button() >>> button.Content = "Push Me" >>> panel = Controls.StackPanel() >>> window.Content = panel >>> panel.Children.Add(button) 0 >>> app = System.Windows.Application() >>> app.Run(window) XAML Example: Main.xaml <Window xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation" xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml" Title="TestApp" Width="640" Height="480"> <StackPanel> <Label>Iron Python and WPF</Label> <ListBox Grid.Column="0" x:Name="listbox1" > <ListBox.ItemTemplate> <DataTemplate> <TextBlock Text="{Binding Path=title}" /> </DataTemplate> </ListBox.ItemTemplate> </ListBox> </StackPanel> </Window> IronPython + XAML import sys if 'win' in sys.platform: import pythoncom pythoncom.CoInitialize() import clr clr.AddReference("System.Xml") clr.AddReference("PresentationFramework") clr.AddReference("PresentationCore") from System.IO import StringReader from System.Xml import XmlReader from System.Windows.Markup import XamlReader, XamlWriter from System.Windows import Window, Application xaml = """<Window xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation" Title="XamlReader Example" Width="300" Height="200"> <StackPanel Margin="5"> <Button -

The Zonnon Project: a .NET Language and Compiler Experiment

The Zonnon Project: A .NET Language and Compiler Experiment. Jürg Gutknecht, Swiss Fed Inst of Technology (ETH), Zürich, Switzerland; Vladimir Romanov, Moscow State University, Computer Science Department, Moscow, Russia; Eugene Zueff, Swiss Fed Inst of Technology (ETH), Zürich, Switzerland. ABSTRACT Zonnon is a new programming language that combines the style and the virtues of the Pascal family with a number of novel programming concepts and constructs. It covers a wide range of programming models from algorithms and data structures to interoperating active objects in a distributed system. In contrast to popular object-oriented languages, Zonnon propagates a symmetric compositional inheritance model. In this paper, we first give a brief overview of the language and then focus on the implementation of the compiler and builder on top of .NET, with a particular emphasis on the use of the MS Common Compiler Infrastructure (CCI). The Zonnon compiler is an interesting showcase for the .NET interoperability platform because it implements a non-trivial but still “natural” mapping from the language’s intrinsic object model to the underlying CLR. Keywords: Oberon, Zonnon, Compiler, Common Compiler Infrastructure (CCI), Integration. 1. INTRODUCTION: THE BRIEF HISTORY OF THE PROJECT This is a technical paper presenting and describing the current state of the Zonnon project. Zonnon is an evolution of the Pascal, Modula, Oberon language line [Wir88]. ... CCI and b) to experiment with evolutionary language concepts. The notion of active object was taken from the Active Oberon language [Gut01]. In addition, two new concurrency mechanisms have been added: an accompanying communication mechanism based on syntax-oriented protocols, borrowed from the Active -

Languages and Compilers (Sprog Og Oversættere)

Languages and Compilers (SProg og Oversættere). Bent Thomsen, Department of Computer Science, Aalborg University. With acknowledgement to Microsoft, especially Nick Benton, whose slides this lecture is based on. The common intermediate format nirvana • If we have n languages and need to run them on m machines, we need m*n compilers! • If we have one common intermediate format, we only need n front-ends and m back-ends, i.e. m+n • Why haven’t you taught us about the common intermediate language? Strong et al., “The Problem of Programming Communication with Changing Machines: A Proposed Solution”, C.ACM, 1958. Quote: This concept is not particularly new or original. It has been discussed by many independent persons as long ago as 1954. It might not be difficult to prove that “this was well-known to Babbage,” so no effort has been made to give credit to the originator, if indeed there was a unique originator. “Everybody knows that UNCOL was a failure” • Subsequent attempts: – Janus (1978) • Pascal, Algol68 – Amsterdam Compiler Kit (1983) • Modula-2, C, Fortran, Pascal, Basic, Occam – Pcode -> Ucode -> HPcode (1977-?) • FORTRAN, Ada, Pascal, COBOL, C++ – Ten15 -> TenDRA -> ANDF (1987-1996) • Ada, C, C++, Fortran – .... Sharing parts of compiler pipelines is common • Compiling to textual assembly language • Retargetable code-generation libraries – VPO, MLRISC • Compiling via C – Cedar, Fortran, Modula 2, Ada, Scheme, Standard ML, Haskell, Prolog, Mercury,... • x86 is a pretty convincing UNCOL – pure software translation -
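The m*n versus m+n argument in the excerpt above can be checked with a few lines of arithmetic. The sketch below is illustrative only; the function name `translators_needed` and the sample counts are assumptions, not part of the lecture.

```python
def translators_needed(n_languages: int, m_machines: int) -> tuple[int, int]:
    """Return (direct, via_intermediate) translator counts.

    direct: one compiler per (language, machine) pair, i.e. m*n.
    via_intermediate: n front-ends into a shared intermediate format
    plus m back-ends out of it, i.e. m+n.
    """
    direct = n_languages * m_machines
    via_intermediate = n_languages + m_machines
    return direct, via_intermediate


# Hypothetical example: 4 source languages targeting 3 machines.
direct, via_intermediate = translators_needed(4, 3)
print(f"direct compilers: {direct}, with a common format: {via_intermediate}")
```

The gap widens quickly: adding one more language costs m new compilers in the direct scheme, but only one new front-end when a common intermediate format exists.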

Programming with Windows Forms

Appendix A: Programming with Windows Forms. Since the release of the .NET platform (circa 2001), the base class libraries have included a particular API named Windows Forms, represented primarily by the System.Windows.Forms.dll assembly. The Windows Forms toolkit provides the types necessary to build desktop graphical user interfaces (GUIs), create custom controls, manage resources (e.g., string tables and icons), and perform other desktop-centric programming tasks. In addition, a separate API named GDI+ (represented by the System.Drawing.dll assembly) provides additional types that allow programmers to generate 2D graphics, interact with networked printers, and manipulate image data. The Windows Forms (and GDI+) APIs remain alive and well within the .NET 4.0 platform, and they will exist within the base class library for quite some time (arguably forever). However, Microsoft has shipped a brand new GUI toolkit called Windows Presentation Foundation (WPF) since the release of .NET 3.0. As you saw in Chapters 27-31, WPF provides a massive amount of horsepower that you can use to build bleeding-edge user interfaces, and it has become the preferred desktop API for today’s .NET graphical user interfaces. The point of this appendix, however, is to provide a tour of the traditional Windows Forms API. One reason it is helpful to understand the original programming model: you can find many existing Windows Forms applications out there that will need to be maintained for some time to come. Also, many desktop GUIs simply might not require the horsepower offered by WPF. -

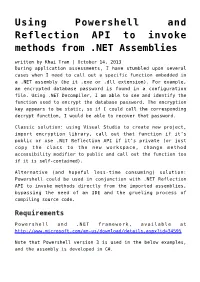

Using Powershell and Reflection API to Invoke Methods from .NET

Using Powershell and Reflection API to invoke methods from .NET Assemblies, written by Khai Tran | October 14, 2013. During application assessments, I have stumbled upon several cases where I needed to call a specific function embedded in a .NET assembly (be it an .exe or a .dll). For example, an encrypted database password is found in a configuration file. Using a .NET decompiler, I am able to see and identify the function used to encrypt the database password. The encryption key appears to be static, so if I could call the corresponding decrypt function, I would be able to recover that password. Classic solution: use Visual Studio to create a new project, import the encryption library, and call the function directly if it’s public, or through the .NET Reflection API if it’s private (or just copy the class to the new workspace, change the method’s accessibility modifier to public, and call the function, provided it is self-contained). Alternative (and hopefully less time-consuming) solution: Powershell can be used in conjunction with the .NET Reflection API to invoke methods directly from the imported assemblies, bypassing the need for an IDE and the grueling process of compiling source code. Requirements: Powershell and .NET framework, available at http://www.microsoft.com/en-us/download/details.aspx?id=34595. Note that Powershell version 3 is used in the examples below, and the assembly is developed in C#. Walkthrough: First, identify the fully qualified class name (typically in the form of Namespace.Classname), method name, accessibility level, member modifier and method arguments. This can easily be done with any available .NET decompiler (dotPeek, JustDecompile, Reflector). Scenario 1: Public static class – Call public static method namespace AesSample { public class AesLibStatic { .. -

.NET Reverse Engineering

.NET Reverse Engineering. Erez Metula, CISSP, Application Security Department Manager, Security Software Engineer, 2B Secure, ErezMetula@2bsecure.co.il. Agenda • The problem of reversing & decompilation • Server DLL hijacking • Introduction to MSIL & the CLR • Advanced techniques • Debugging • Patching • Unpacking • Reversing the framework • Exposing .NET CLR vulnerabilities • Revealing hidden functionality • Tools! The problem of reversing & decompilation • Code exposure • Business logic • Secrets in code – passwords – connection strings – encryption keys • Intellectual property (IP) & software piracy • Code modification • Add backdoors to original code • Change the application logic • Enable functionality (example: “only for registered user”) • Disable functionality (example: security checks) Example – simple reversing • Let’s peek into the code with Reflector Example – reversing server DLL • Intro • Problem description (code) • Topology • The target application • What we’ll see Steps – tweaking with the logic • Exploiting ANY server / application vulnerability to execute commands • Information gathering • Download an assembly • Reverse engineer the assembly • Change the assembly internal logic • Upload the modified assembly, overwrite the old one. • Wait for some new action • Collect the data … Exploiting ANY server / application vulnerability to execute commands • Example application has a vulnerability that lets us access the file system • SQL injection • Configuration problem (open share, IIS permissions, -

Diploma Thesis

Faculty of Computer Science, Chair for Real Time Systems. Diploma Thesis: Porting DotGNU to Embedded Linux. Author: Alexander Stein. Supervisors: Jun.-Prof. Dr.-Ing. Robert Baumgartl, Dipl.-Ing. Ronald Sieber. Date of Submission: May 15, 2008. Alexander Stein, Porting DotGNU to Embedded Linux, Diploma Thesis, Chemnitz University of Technology, 2008. Abstract: Programming PLC systems is limited by the provided libraries. In contrast, hardware-near programming needs greater effort, e.g. in initializing the hardware. This work offers a foundation to combine the advantages of both development sides. Therefore, Portable.NET from the DotGNU project has been used, which is an implementation of the CLI, better known as .NET. The target system is the PLCcore-5484 microcontroller board, developed by SYS TEC electronic GmbH. Built upon the porting, two variants for using interrupt routines within the Portable.NET runtime environment have been analyzed. Finally, the reaction times to occurring interrupt events have been examined and compared. Acknowledgements: I would like to thank some persons who had influence and supported me in my work. -

Mono on F&S Boards

Mono on F&S Boards, Version 0.2 (2020-06-16). © F&S Elektronik Systeme GmbH, Untere Waldplätze 23, D-70569 Stuttgart, Germany. Phone: +49(0)711-123722-0, Fax: +49(0)711-123722-99. About This Document: This document describes how to run .NET framework applications via Mono on F&S Boards. Remark: The version number on the title page of this document is the version of the document. It is not related to the version number of any software release! The latest version of this document can always be found at http://www.fs-net.de. How To Print This Document: This document is designed to be printed double-sided (front and back) on A4 paper. If you want to read it with a PDF reader program, you should use a two-page layout where the title page is an extra single page. The settings are correct if the page numbers are at the outside of the pages, even pages on the left and odd pages on the right side. If it is reversed, then the title page is handled wrongly and is part of the first double-page instead of a single page. Typographical Conventions: We use different fonts and highlighting to emphasize the context of special terms: File names; Menu entries; Board input/output; Program code; PC input/output; Listings; Generic input/output; Variables. History (columns: Date, V, Platform, A/M/R, Chapter, Description, Au): 2020-05-18, 0.1, ALL, A, ALL, Initial version, PG; 2020-06-09, 0.2, ALL, M, ALL, Correct typos and footer, PG; 2020-06-09, 0.2, ALL, A, 2, Add System requirements chapter, PG; 2020-06-09, 0.2, ALL, M, 8, Add GTK# tutorial directly into this document, PG. Legend: V = Version; A,M,R = Added, Modified, Removed -

.NET Hacking & In-Memory Malware

.NET Hacking & In-Memory Malware. Shawn Edwards, Cyber Adversarial Engineer, The MITRE Corporation. Hacker: Take stuff apart. Change it. Put it back together. Red teamer. Adversary emulator. Maker: Motivated by an incessant desire to create and craft. Numerous personal and professional projects. Learner: Devoted to a continuous effort of learning and sharing knowledge. B.S. in Computer Science. Adversary Emulation @ MITRE • Red teaming, but specific threat actors • Use open-source knowledge of their TTPs to emulate their behavior and operations • Ensures techniques are accurate to real world • ATT&CK (Adversarial Tactics Techniques and Common Knowledge) • Public wiki of real-world adversary TTPs, software, and groups • Lets blue team and red team speak in the same language • CALDERA • Modular Automated Adversary Emulation framework • Adversary Mode: • AI-driven “red team in a box” • Atomic Mode: • Define Adversaries, give them abilities, run operations. Customize everything at will. In-Memory Malware • Is not new • Process Injection has been around for a long time • Typically thought of as advanced tradecraft; not really • Surged in popularity recently • Made easier by open-source or commercial red team tools • For this talk, only discuss Windows malware • When relevant, will include the ATT&CK Technique ID In-Memory